url

stringlengths 58

61

| repository_url

stringclasses 1

value | labels_url

stringlengths 72

75

| comments_url

stringlengths 67

70

| events_url

stringlengths 65

68

| html_url

stringlengths 46

51

| id

int64 599M

1.32B

| node_id

stringlengths 18

32

| number

int64 1

4.75k

| title

stringlengths 1

276

| user

dict | labels

list | state

stringclasses 2

values | locked

bool 1

class | assignee

null | assignees

sequence | milestone

null | comments

sequence | created_at

int64 1,587B

1,659B

| updated_at

int64 1,587B

1,659B

| closed_at

int64 1,587B

1,659B

⌀ | author_association

stringclasses 3

values | active_lock_reason

null | body

stringlengths 0

228k

⌀ | reactions

dict | timeline_url

stringlengths 67

70

| performed_via_github_app

null | state_reason

nullclasses 3

values | draft

bool 2

classes | pull_request

dict | is_pull_request

bool 2

classes |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/4745 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4745/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4745/comments | https://api.github.com/repos/huggingface/datasets/issues/4745/events | https://github.com/huggingface/datasets/issues/4745 | 1,318,016,655 | I_kwDODunzps5Oj1aP | 4,745 | Allow `list_datasets` to include private datasets | {

"login": "ola13",

"id": 1528523,

"node_id": "MDQ6VXNlcjE1Mjg1MjM=",

"avatar_url": "https://avatars.githubusercontent.com/u/1528523?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/ola13",

"html_url": "https://github.com/ola13",

"followers_url": "https://api.github.com/users/ola13/followers",

"following_url": "https://api.github.com/users/ola13/following{/other_user}",

"gists_url": "https://api.github.com/users/ola13/gists{/gist_id}",

"starred_url": "https://api.github.com/users/ola13/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/ola13/subscriptions",

"organizations_url": "https://api.github.com/users/ola13/orgs",

"repos_url": "https://api.github.com/users/ola13/repos",

"events_url": "https://api.github.com/users/ola13/events{/privacy}",

"received_events_url": "https://api.github.com/users/ola13/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] | open | false | null | [] | null | [

"Thanks for opening this issue :)\r\n\r\nIf it can help, I think you can already use `huggingface_hub` to achieve this:\r\n```python\r\n>>> from huggingface_hub import HfApi\r\n>>> [ds_info.id for ds_info in HfApi().list_datasets(use_auth_token=token) if ds_info.private]\r\n['bigscience/xxxx', 'bigscience-catalogue-data/xxxxxxx', ... ]\r\n```\r\n\r\n---------\r\n\r\nThough the latest versions of `huggingface_hub` that contain this feature are not available on python 3.6, so maybe we should first drop support for python 3.6 (see #4460) to update `list_datasets` in `datasets` as well (or we would have to copy/paste some `huggingface_hub` code)",

"Great, thanks @lhoestq the workaround works! I think it would be intuitive to have the support directly in `datasets` but it makes sense to wait given that the workaround exists :)"

] | 1,658,830,568,000 | 1,658,832,354,000 | null | NONE | null | I am working with a large collection of private datasets, it would be convenient for me to be able to list them.

I would envision extending the convention of using `use_auth_token` keyword argument to `list_datasets` function, then calling:

```

list_datasets(use_auth_token="my_token")

```

would return the list of all datasets I have permissions to view, including private ones. The only current alternative I see is to use the hub website to manually obtain the list of dataset names - this is in the context of BigScience where respective private spaces contain hundreds of datasets, so not very convenient to list manually. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4745/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4745/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4744 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4744/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4744/comments | https://api.github.com/repos/huggingface/datasets/issues/4744/events | https://github.com/huggingface/datasets/issues/4744 | 1,317,822,345 | I_kwDODunzps5OjF-J | 4,744 | Remove instructions to generate dummy data from our docs | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892861,

"node_id": "MDU6TGFiZWwxOTM1ODkyODYx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/documentation",

"name": "documentation",

"color": "0075ca",

"default": true,

"description": "Improvements or additions to documentation"

}

] | open | false | null | [] | null | [

"Note that for me personally, conceptually all the dummy data (even for \"canonical\" datasets) should be superseded by `datasets-server`, which performs some kind of CI/CD of datasets (including the canonical ones)",

"I totally agree: next step should be rethinking if dummy data makes sense for canonical datasets (once we have datasets-server) and eventually remove it.\r\n\r\nBut for now, we could at least start by removing the indication to generate dummy data from our docs."

] | 1,658,820,778,000 | 1,658,823,453,000 | null | MEMBER | null | In our docs, we indicate to generate the dummy data: https://huggingface.co/docs/datasets/dataset_script#testing-data-and-checksum-metadata

However:

- dummy data makes sense only for datasets in our GitHub repo: so that we can test their loading with our CI

- for datasets on the Hub:

- they do not pass any CI test requiring dummy data

- there are no instructions on how they can test their dataset locally using the dummy data

- the generation of the dummy data assumes our GitHub directory structure:

- the dummy data will be generated under `./datasets/<dataset_name>/dummy` even if locally there is no `./datasets` directory (which is the usual case). See issue:

- #4742

CC: @stevhliu | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4744/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4744/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4743 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4743/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4743/comments | https://api.github.com/repos/huggingface/datasets/issues/4743/events | https://github.com/huggingface/datasets/pull/4743 | 1,317,362,561 | PR_kwDODunzps48EUFs | 4,743 | Update map docs | {

"login": "stevhliu",

"id": 59462357,

"node_id": "MDQ6VXNlcjU5NDYyMzU3",

"avatar_url": "https://avatars.githubusercontent.com/u/59462357?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/stevhliu",

"html_url": "https://github.com/stevhliu",

"followers_url": "https://api.github.com/users/stevhliu/followers",

"following_url": "https://api.github.com/users/stevhliu/following{/other_user}",

"gists_url": "https://api.github.com/users/stevhliu/gists{/gist_id}",

"starred_url": "https://api.github.com/users/stevhliu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/stevhliu/subscriptions",

"organizations_url": "https://api.github.com/users/stevhliu/orgs",

"repos_url": "https://api.github.com/users/stevhliu/repos",

"events_url": "https://api.github.com/users/stevhliu/events{/privacy}",

"received_events_url": "https://api.github.com/users/stevhliu/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892861,

"node_id": "MDU6TGFiZWwxOTM1ODkyODYx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/documentation",

"name": "documentation",

"color": "0075ca",

"default": true,

"description": "Improvements or additions to documentation"

}

] | open | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_4743). All of your documentation changes will be reflected on that endpoint."

] | 1,658,782,775,000 | 1,658,783,163,000 | null | MEMBER | null | This PR updates the `map` docs for processing text to include `return_tensors="np"` to make it run faster (see #4676). | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4743/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4743/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4743",

"html_url": "https://github.com/huggingface/datasets/pull/4743",

"diff_url": "https://github.com/huggingface/datasets/pull/4743.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4743.patch",

"merged_at": null

} | true |

https://api.github.com/repos/huggingface/datasets/issues/4742 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4742/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4742/comments | https://api.github.com/repos/huggingface/datasets/issues/4742/events | https://github.com/huggingface/datasets/issues/4742 | 1,317,260,663 | I_kwDODunzps5Og813 | 4,742 | Dummy data nowhere to be found | {

"login": "BramVanroy",

"id": 2779410,

"node_id": "MDQ6VXNlcjI3Nzk0MTA=",

"avatar_url": "https://avatars.githubusercontent.com/u/2779410?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/BramVanroy",

"html_url": "https://github.com/BramVanroy",

"followers_url": "https://api.github.com/users/BramVanroy/followers",

"following_url": "https://api.github.com/users/BramVanroy/following{/other_user}",

"gists_url": "https://api.github.com/users/BramVanroy/gists{/gist_id}",

"starred_url": "https://api.github.com/users/BramVanroy/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/BramVanroy/subscriptions",

"organizations_url": "https://api.github.com/users/BramVanroy/orgs",

"repos_url": "https://api.github.com/users/BramVanroy/repos",

"events_url": "https://api.github.com/users/BramVanroy/events{/privacy}",

"received_events_url": "https://api.github.com/users/BramVanroy/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | open | false | null | [] | null | [

"Hi @BramVanroy, thanks for reporting.\r\n\r\nFirst of all, please note that you do not need the dummy data: this was the case when we were adding datasets to the `datasets` library (on this GitHub repo), so that we could test the correct loading of all datasets with our CI. However, this is no longer the case for datasets on the Hub.\r\n- We should definitely update our docs.\r\n\r\nSecond, the dummy data is generated locally:\r\n- in your case, the dummy data will be generated inside the directory: `./datasets/hebban-reviews/dummy`\r\n- please note the preceding `./datasets` directory: the reason for this is that the command to generate the dummy data was specifically created for our `datasets` library, and therefore assumes our directory structure: commands are run from the root directory of our GitHub repo, and datasets scripts are under `./datasets` \r\n\r\n\r\n ",

"I have opened an Issue to update the instructions on dummy data generation:\r\n- #4744"

] | 1,658,776,722,000 | 1,658,820,827,000 | null | CONTRIBUTOR | null | ## Describe the bug

To finalize my dataset, I wanted to create dummy data as per the guide and I ran

```shell

datasets-cli dummy_data datasets/hebban-reviews --auto_generate

```

where hebban-reviews is [this repo](https://huggingface.co/datasets/BramVanroy/hebban-reviews). And even though the scripts runs and shows a message at the end that it succeeded, I cannot find the dummy data anywhere. Where is it?

## Expected results

To see the dummy data in the datasets' folder or in the folder where I ran the command.

## Actual results

I see the following message but I cannot find the dummy data anywhere.

```

Dummy data generation done and dummy data test succeeded for config 'filtered''.

Automatic dummy data generation succeeded for all configs of '.\datasets\hebban-reviews\'

```

## Environment info

- `datasets` version: 2.4.1.dev0

- Platform: Windows-10-10.0.19041-SP0

- Python version: 3.8.8

- PyArrow version: 8.0.0

- Pandas version: 1.4.3

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4742/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4742/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4741 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4741/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4741/comments | https://api.github.com/repos/huggingface/datasets/issues/4741/events | https://github.com/huggingface/datasets/pull/4741 | 1,316,621,272 | PR_kwDODunzps48B2fl | 4,741 | Fix to dict conversion of `DatasetInfo`/`Features` | {

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | 1,658,745,687,000 | 1,658,753,436,000 | 1,658,752,673,000 | CONTRIBUTOR | null | Fix #4681 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4741/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4741/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4741",

"html_url": "https://github.com/huggingface/datasets/pull/4741",

"diff_url": "https://github.com/huggingface/datasets/pull/4741.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4741.patch",

"merged_at": 1658752673000

} | true |

https://api.github.com/repos/huggingface/datasets/issues/4740 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4740/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4740/comments | https://api.github.com/repos/huggingface/datasets/issues/4740/events | https://github.com/huggingface/datasets/pull/4740 | 1,316,478,007 | PR_kwDODunzps48BX5l | 4,740 | Fix multiprocessing in map_nested | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_4740). All of your documentation changes will be reflected on that endpoint."

] | 1,658,738,659,000 | 1,658,829,903,000 | null | MEMBER | null | As previously discussed:

Before, multiprocessing was not used in `map_nested` if `num_proc` was greater than or equal to `len(iterable)`.

- Multiprocessing was not used e.g. when passing `num_proc=20` but having 19 files to download

- As by default, `DownloadManager` sets `num_proc=16`, before multiprocessing was only used when `len(iterable)>16` by default

Now, if `num_proc` is greater than or equal to ``len(iterable)``, `num_proc` is set to ``len(iterable)`` and multiprocessing is used.

- As by default, `DownloadManager` sets `num_proc=16`, now multiprocessing is used when `len(iterable)>1` by default

After having had to fix some tests (87602ac), I am wondering:

- do we want to have multiprocessing by default?

- please note that `DownloadManager.download` sets `num_proc=16` by default

- or would it be better to ask the user to set it explicitly if they want multiprocessing (and default to `num_proc=1`)?

Fix #4636.

CC: @nateraw | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4740/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4740/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4740",

"html_url": "https://github.com/huggingface/datasets/pull/4740",

"diff_url": "https://github.com/huggingface/datasets/pull/4740.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4740.patch",

"merged_at": null

} | true |

https://api.github.com/repos/huggingface/datasets/issues/4739 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4739/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4739/comments | https://api.github.com/repos/huggingface/datasets/issues/4739/events | https://github.com/huggingface/datasets/pull/4739 | 1,316,400,915 | PR_kwDODunzps48BHdE | 4,739 | Deprecate metrics | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_4739). All of your documentation changes will be reflected on that endpoint.",

"I mark this as Draft because the deprecated version number needs being updated after the latest release."

] | 1,658,734,555,000 | 1,658,764,702,000 | null | MEMBER | null | Deprecate metrics:

- deprecate public functions: `load_metric`, `list_metrics` and `inspect_metric`: docstring and warning

- test deprecation warnings are issues

- deprecate metrics in all docs

- remove mentions to metrics in docs and README

- deprecate internal functions/classes

Maybe we should also stop testing metrics? | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4739/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4739/timeline | null | null | true | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4739",

"html_url": "https://github.com/huggingface/datasets/pull/4739",

"diff_url": "https://github.com/huggingface/datasets/pull/4739.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4739.patch",

"merged_at": null

} | true |

https://api.github.com/repos/huggingface/datasets/issues/4738 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4738/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4738/comments | https://api.github.com/repos/huggingface/datasets/issues/4738/events | https://github.com/huggingface/datasets/pull/4738 | 1,315,222,166 | PR_kwDODunzps479hq4 | 4,738 | Use CI unit/integration tests | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_4738). All of your documentation changes will be reflected on that endpoint."

] | 1,658,508,480,000 | 1,658,746,554,000 | null | MEMBER | null | This PR:

- Implements separate unit/integration tests

- The fail fast policy is only applied to unit tests: a fail in integration tests does not cancel the rest of the jobs

- We should implement more robust integration tests: work in progress in a subsequent PR | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4738/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4738/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4738",

"html_url": "https://github.com/huggingface/datasets/pull/4738",

"diff_url": "https://github.com/huggingface/datasets/pull/4738.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4738.patch",

"merged_at": null

} | true |

https://api.github.com/repos/huggingface/datasets/issues/4737 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4737/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4737/comments | https://api.github.com/repos/huggingface/datasets/issues/4737/events | https://github.com/huggingface/datasets/issues/4737 | 1,315,011,004 | I_kwDODunzps5OYXm8 | 4,737 | Download error on scene_parse_150 | {

"login": "juliensimon",

"id": 3436143,

"node_id": "MDQ6VXNlcjM0MzYxNDM=",

"avatar_url": "https://avatars.githubusercontent.com/u/3436143?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/juliensimon",

"html_url": "https://github.com/juliensimon",

"followers_url": "https://api.github.com/users/juliensimon/followers",

"following_url": "https://api.github.com/users/juliensimon/following{/other_user}",

"gists_url": "https://api.github.com/users/juliensimon/gists{/gist_id}",

"starred_url": "https://api.github.com/users/juliensimon/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/juliensimon/subscriptions",

"organizations_url": "https://api.github.com/users/juliensimon/orgs",

"repos_url": "https://api.github.com/users/juliensimon/repos",

"events_url": "https://api.github.com/users/juliensimon/events{/privacy}",

"received_events_url": "https://api.github.com/users/juliensimon/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | open | false | null | [] | null | [

"Hi! The server with the data seems to be down. I've reported this issue (https://github.com/CSAILVision/sceneparsing/issues/34) in the dataset repo. "

] | 1,658,496,508,000 | 1,658,500,151,000 | null | NONE | null | ```

from datasets import load_dataset

dataset = load_dataset("scene_parse_150", "scene_parsing")

FileNotFoundError: Couldn't find file at http://data.csail.mit.edu/places/ADEchallenge/ADEChallengeData2016.zip

```

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4737/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4737/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4736 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4736/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4736/comments | https://api.github.com/repos/huggingface/datasets/issues/4736/events | https://github.com/huggingface/datasets/issues/4736 | 1,314,931,996 | I_kwDODunzps5OYEUc | 4,736 | Dataset Viewer issue for deepklarity/huggingface-spaces-dataset | {

"login": "dk-crazydiv",

"id": 47515542,

"node_id": "MDQ6VXNlcjQ3NTE1NTQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47515542?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/dk-crazydiv",

"html_url": "https://github.com/dk-crazydiv",

"followers_url": "https://api.github.com/users/dk-crazydiv/followers",

"following_url": "https://api.github.com/users/dk-crazydiv/following{/other_user}",

"gists_url": "https://api.github.com/users/dk-crazydiv/gists{/gist_id}",

"starred_url": "https://api.github.com/users/dk-crazydiv/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/dk-crazydiv/subscriptions",

"organizations_url": "https://api.github.com/users/dk-crazydiv/orgs",

"repos_url": "https://api.github.com/users/dk-crazydiv/repos",

"events_url": "https://api.github.com/users/dk-crazydiv/events{/privacy}",

"received_events_url": "https://api.github.com/users/dk-crazydiv/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 3470211881,

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer",

"name": "dataset-viewer",

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co"

}

] | closed | false | null | [

{

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"Thanks for reporting. You're right, workers were under-provisioned due to a manual error, and the job queue was full. It's fixed now."

] | 1,658,492,058,000 | 1,658,497,598,000 | 1,658,497,598,000 | NONE | null | ### Link

https://huggingface.co/datasets/deepklarity/huggingface-spaces-dataset/viewer/deepklarity--huggingface-spaces-dataset/train

### Description

Hi Team,

I'm getting the following error on a uploaded dataset. I'm getting the same status for a couple of hours now. The dataset size is `<1MB` and the format is csv, so I'm not sure if it's supposed to take this much time or not.

```

Status code: 400

Exception: Status400Error

Message: The split is being processed. Retry later.

```

Is there any explicit step to be taken to get the viewer to work?

### Owner

Yes | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4736/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4736/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4735 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4735/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4735/comments | https://api.github.com/repos/huggingface/datasets/issues/4735/events | https://github.com/huggingface/datasets/pull/4735 | 1,314,501,641 | PR_kwDODunzps477CuP | 4,735 | Pin rouge_score test dependency | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | 1,658,474,301,000 | 1,658,476,694,000 | 1,658,475,918,000 | MEMBER | null | Temporarily pin `rouge_score` (to avoid latest version 0.7.0) until the issue is fixed.

Fix #4734 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4735/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4735/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4735",

"html_url": "https://github.com/huggingface/datasets/pull/4735",

"diff_url": "https://github.com/huggingface/datasets/pull/4735.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4735.patch",

"merged_at": 1658475918000

} | true |

https://api.github.com/repos/huggingface/datasets/issues/4734 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4734/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4734/comments | https://api.github.com/repos/huggingface/datasets/issues/4734/events | https://github.com/huggingface/datasets/issues/4734 | 1,314,495,382 | I_kwDODunzps5OWZuW | 4,734 | Package rouge-score cannot be imported | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | null | [

{

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"We have added a comment on an existing issue opened in their repo: https://github.com/google-research/google-research/issues/1212#issuecomment-1192267130\r\n- https://github.com/google-research/google-research/issues/1212"

] | 1,658,474,105,000 | 1,658,475,919,000 | 1,658,475,918,000 | MEMBER | null | ## Describe the bug

After the today release of `rouge_score-0.0.7` it seems no longer importable. Our CI fails: https://github.com/huggingface/datasets/runs/7463218591?check_suite_focus=true

```

FAILED tests/test_dataset_common.py::LocalDatasetTest::test_builder_class_bigbench

FAILED tests/test_dataset_common.py::LocalDatasetTest::test_builder_configs_bigbench

FAILED tests/test_dataset_common.py::LocalDatasetTest::test_load_dataset_bigbench

FAILED tests/test_metric_common.py::LocalMetricTest::test_load_metric_rouge

```

with errors:

```

> from rouge_score import rouge_scorer

E ModuleNotFoundError: No module named 'rouge_score'

```

```

E ImportError: To be able to use rouge, you need to install the following dependency: rouge_score.

E Please install it using 'pip install rouge_score' for instance'

```

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4734/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4734/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4733 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4733/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4733/comments | https://api.github.com/repos/huggingface/datasets/issues/4733/events | https://github.com/huggingface/datasets/issues/4733 | 1,314,479,616 | I_kwDODunzps5OWV4A | 4,733 | rouge metric | {

"login": "asking28",

"id": 29248466,

"node_id": "MDQ6VXNlcjI5MjQ4NDY2",

"avatar_url": "https://avatars.githubusercontent.com/u/29248466?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/asking28",

"html_url": "https://github.com/asking28",

"followers_url": "https://api.github.com/users/asking28/followers",

"following_url": "https://api.github.com/users/asking28/following{/other_user}",

"gists_url": "https://api.github.com/users/asking28/gists{/gist_id}",

"starred_url": "https://api.github.com/users/asking28/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/asking28/subscriptions",

"organizations_url": "https://api.github.com/users/asking28/orgs",

"repos_url": "https://api.github.com/users/asking28/repos",

"events_url": "https://api.github.com/users/asking28/events{/privacy}",

"received_events_url": "https://api.github.com/users/asking28/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | null | [

{

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"Fixed by:\r\n- #4735"

] | 1,658,473,611,000 | 1,658,480,882,000 | 1,658,480,735,000 | NONE | null | ## Describe the bug

A clear and concise description of what the bug is.

Loading Rouge metric gives error after latest rouge-score==0.0.7 release.

Downgrading rougemetric==0.0.4 works fine.

## Steps to reproduce the bug

```python

# Sample code to reproduce the bug

```

## Expected results

A clear and concise description of the expected results.

from rouge_score import rouge_scorer, scoring

should run

## Actual results

Specify the actual results or traceback.

File "/root/.cache/huggingface/modules/datasets_modules/metrics/rouge/0ffdb60f436bdb8884d5e4d608d53dbe108e82dac4f494a66f80ef3f647c104f/rouge.py", line 21, in <module>

from rouge_score import rouge_scorer, scoring

ImportError: cannot import name 'rouge_scorer' from 'rouge_score' (unknown location)

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version:

- Platform: Linux

- Python version:3.9

- PyArrow version:

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4733/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4733/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4732 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4732/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4732/comments | https://api.github.com/repos/huggingface/datasets/issues/4732/events | https://github.com/huggingface/datasets/issues/4732 | 1,314,371,566 | I_kwDODunzps5OV7fu | 4,732 | Document better that loading a dataset passing its name does not use the local script | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892861,

"node_id": "MDU6TGFiZWwxOTM1ODkyODYx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/documentation",

"name": "documentation",

"color": "0075ca",

"default": true,

"description": "Improvements or additions to documentation"

}

] | open | false | null | [] | null | [

"Thanks for the feedback!\r\n\r\nI think since this issue is closely related to loading, I can add a clearer explanation under [Load > local loading script](https://huggingface.co/docs/datasets/main/en/loading#local-loading-script).",

"That makes sense but I think having a line about it under https://huggingface.co/docs/datasets/installation#source the \"source\" header here would be useful. My mental model of `pip install -e .` does not include the fact that the source files aren't actually being used. "

] | 1,658,470,051,000 | 1,658,777,132,000 | null | MEMBER | null | As reported by @TrentBrick here https://github.com/huggingface/datasets/issues/4725#issuecomment-1191858596, it could be more clear that loading a dataset by passing its name does not use the (modified) local script of it.

What he did:

- he installed `datasets` from source

- he modified locally `datasets/the_pile/the_pile.py` loading script

- he tried to load it but using `load_dataset("the_pile")` instead of `load_dataset("datasets/the_pile")`

- as explained here https://github.com/huggingface/datasets/issues/4725#issuecomment-1191040245:

- the former does not use the local script, but instead it downloads a copy of `the_pile.py` from our GitHub, caches it locally (inside `~/.cache/huggingface/modules`) and uses that.

He suggests adding a more clear explanation about this. He suggests adding it maybe in [Installation > source](https://huggingface.co/docs/datasets/installation))

CC: @stevhliu | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4732/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4732/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4731 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4731/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4731/comments | https://api.github.com/repos/huggingface/datasets/issues/4731/events | https://github.com/huggingface/datasets/pull/4731 | 1,313,773,348 | PR_kwDODunzps474dlZ | 4,731 | docs: ✏️ fix TranslationVariableLanguages example | {

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | 1,658,435,741,000 | 1,658,473,260,000 | 1,658,472,522,000 | CONTRIBUTOR | null | null | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4731/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4731/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4731",

"html_url": "https://github.com/huggingface/datasets/pull/4731",

"diff_url": "https://github.com/huggingface/datasets/pull/4731.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4731.patch",

"merged_at": 1658472522000

} | true |

https://api.github.com/repos/huggingface/datasets/issues/4730 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4730/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4730/comments | https://api.github.com/repos/huggingface/datasets/issues/4730/events | https://github.com/huggingface/datasets/issues/4730 | 1,313,421,263 | I_kwDODunzps5OSTfP | 4,730 | Loading imagenet-1k validation split takes much more RAM than expected | {

"login": "fxmarty",

"id": 9808326,

"node_id": "MDQ6VXNlcjk4MDgzMjY=",

"avatar_url": "https://avatars.githubusercontent.com/u/9808326?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/fxmarty",

"html_url": "https://github.com/fxmarty",

"followers_url": "https://api.github.com/users/fxmarty/followers",

"following_url": "https://api.github.com/users/fxmarty/following{/other_user}",

"gists_url": "https://api.github.com/users/fxmarty/gists{/gist_id}",

"starred_url": "https://api.github.com/users/fxmarty/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/fxmarty/subscriptions",

"organizations_url": "https://api.github.com/users/fxmarty/orgs",

"repos_url": "https://api.github.com/users/fxmarty/repos",

"events_url": "https://api.github.com/users/fxmarty/events{/privacy}",

"received_events_url": "https://api.github.com/users/fxmarty/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | null | [] | null | [

"My bad, `482 * 418 * 50000 * 3 / 1000000 = 30221 MB` ( https://stackoverflow.com/a/42979315 ).\r\n\r\nMeanwhile `256 * 256 * 50000 * 3 / 1000000 = 9830 MB`. We are loading the non-cropped images and that is why we take so much RAM."

] | 1,658,416,446,000 | 1,658,421,664,000 | 1,658,421,664,000 | CONTRIBUTOR | null | ## Describe the bug

Loading into memory the validation split of imagenet-1k takes much more RAM than expected. Assuming ImageNet-1k is 150 GB, split is 50000 validation images and 1,281,167 train images, I would expect only about 6 GB loaded in RAM.

## Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = load_dataset("imagenet-1k", split="validation")

print(dataset)

"""prints

Dataset({

features: ['image', 'label'],

num_rows: 50000

})

"""

pipe_inputs = dataset["image"]

# and wait :-)

```

## Expected results

Use only < 10 GB RAM when loading the images.

## Actual results

```

Using custom data configuration default

Reusing dataset imagenet-1k (/home/fxmarty/.cache/huggingface/datasets/imagenet-1k/default/1.0.0/a1e9bfc56c3a7350165007d1176b15e9128fcaf9ab972147840529aed3ae52bc)

Killed

```

## Environment info

- `datasets` version: 2.3.3.dev0

- Platform: Linux-5.15.0-41-generic-x86_64-with-glibc2.35

- Python version: 3.9.12

- PyArrow version: 7.0.0

- Pandas version: 1.3.5

- datasets commit: 4e4222f1b6362c2788aec0dd2cd8cede6dd17b80

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4730/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4730/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4729 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4729/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4729/comments | https://api.github.com/repos/huggingface/datasets/issues/4729/events | https://github.com/huggingface/datasets/pull/4729 | 1,313,374,015 | PR_kwDODunzps473GmR | 4,729 | Refactor Hub tests | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | 1,658,414,593,000 | 1,658,502,589,000 | 1,658,501,789,000 | MEMBER | null | This PR refactors `test_upstream_hub` by removing unittests and using the following pytest Hub fixtures:

- `ci_hub_config`

- `set_ci_hub_access_token`: to replace setUp/tearDown

- `temporary_repo` context manager: to replace `try... finally`

- `cleanup_repo`: to delete repo accidentally created if one of the tests fails

This is a preliminary work done to manage unit/integration tests separately. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4729/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4729/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4729",

"html_url": "https://github.com/huggingface/datasets/pull/4729",

"diff_url": "https://github.com/huggingface/datasets/pull/4729.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4729.patch",

"merged_at": 1658501789000

} | true |

https://api.github.com/repos/huggingface/datasets/issues/4728 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4728/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4728/comments | https://api.github.com/repos/huggingface/datasets/issues/4728/events | https://github.com/huggingface/datasets/issues/4728 | 1,312,897,454 | I_kwDODunzps5OQTmu | 4,728 | load_dataset gives "403" error when using Financial Phrasebank | {

"login": "rohitvincent",

"id": 2209134,

"node_id": "MDQ6VXNlcjIyMDkxMzQ=",

"avatar_url": "https://avatars.githubusercontent.com/u/2209134?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/rohitvincent",

"html_url": "https://github.com/rohitvincent",

"followers_url": "https://api.github.com/users/rohitvincent/followers",

"following_url": "https://api.github.com/users/rohitvincent/following{/other_user}",

"gists_url": "https://api.github.com/users/rohitvincent/gists{/gist_id}",

"starred_url": "https://api.github.com/users/rohitvincent/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/rohitvincent/subscriptions",

"organizations_url": "https://api.github.com/users/rohitvincent/orgs",

"repos_url": "https://api.github.com/users/rohitvincent/repos",

"events_url": "https://api.github.com/users/rohitvincent/events{/privacy}",

"received_events_url": "https://api.github.com/users/rohitvincent/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"Hi @rohitvincent, thanks for reporting.\r\n\r\nUnfortunately I'm not able to reproduce your issue:\r\n```python\r\nIn [2]: from datasets import load_dataset, DownloadMode\r\n ...: load_dataset(path='financial_phrasebank',name='sentences_allagree', download_mode=\"force_redownload\")\r\nDownloading builder script: 6.04kB [00:00, 2.87MB/s] \r\nDownloading metadata: 13.7kB [00:00, 7.24MB/s] \r\nDownloading and preparing dataset financial_phrasebank/sentences_allagree (download: 665.91 KiB, generated: 296.26 KiB, post-processed: Unknown size, total: 962.17 KiB) to .../.cache/huggingface/datasets/financial_phrasebank/sentences_allagree/1.0.0/550bde12e6c30e2674da973a55f57edde5181d53f5a5a34c1531c53f93b7e141...\r\nDownloading data: 100%|█████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 682k/682k [00:00<00:00, 7.66MB/s]\r\nDataset financial_phrasebank downloaded and prepared to .../.cache/huggingface/datasets/financial_phrasebank/sentences_allagree/1.0.0/550bde12e6c30e2674da973a55f57edde5181d53f5a5a34c1531c53f93b7e141. Subsequent calls will reuse this data.\r\n100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 1/1 [00:00<00:00, 918.80it/s]\r\nOut[2]: \r\nDatasetDict({\r\n train: Dataset({\r\n features: ['sentence', 'label'],\r\n num_rows: 2264\r\n })\r\n})\r\n```\r\n\r\nAre you able to access the link? https://www.researchgate.net/profile/Pekka-Malo/publication/251231364_FinancialPhraseBank-v10/data/0c96051eee4fb1d56e000000/FinancialPhraseBank-v10.zip",

"Yes was able to download from the link manually. But still, get the same error when I use load_dataset."

] | 1,658,393,012,000 | 1,658,478,333,000 | null | NONE | null | I tried both codes below to download the financial phrasebank dataset (https://huggingface.co/datasets/financial_phrasebank) with the sentences_allagree subset. However, the code gives a 403 error when executed from multiple machines locally or on the cloud.

```

from datasets import load_dataset, DownloadMode

load_dataset(path='financial_phrasebank',name='sentences_allagree',download_mode=DownloadMode.FORCE_REDOWNLOAD)

```

```

from datasets import load_dataset, DownloadMode

load_dataset(path='financial_phrasebank',name='sentences_allagree')

```

**Error**

ConnectionError: Couldn't reach https://www.researchgate.net/profile/Pekka_Malo/publication/251231364_FinancialPhraseBank-v10/data/0c96051eee4fb1d56e000000/FinancialPhraseBank-v10.zip (error 403)

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4728/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4728/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4727 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4727/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4727/comments | https://api.github.com/repos/huggingface/datasets/issues/4727/events | https://github.com/huggingface/datasets/issues/4727 | 1,312,645,391 | I_kwDODunzps5OPWEP | 4,727 | Dataset Viewer issue for TheNoob3131/mosquito-data | {

"login": "thenerd31",

"id": 53668030,

"node_id": "MDQ6VXNlcjUzNjY4MDMw",

"avatar_url": "https://avatars.githubusercontent.com/u/53668030?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/thenerd31",

"html_url": "https://github.com/thenerd31",

"followers_url": "https://api.github.com/users/thenerd31/followers",

"following_url": "https://api.github.com/users/thenerd31/following{/other_user}",

"gists_url": "https://api.github.com/users/thenerd31/gists{/gist_id}",

"starred_url": "https://api.github.com/users/thenerd31/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/thenerd31/subscriptions",

"organizations_url": "https://api.github.com/users/thenerd31/orgs",

"repos_url": "https://api.github.com/users/thenerd31/repos",

"events_url": "https://api.github.com/users/thenerd31/events{/privacy}",

"received_events_url": "https://api.github.com/users/thenerd31/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 3470211881,

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer",

"name": "dataset-viewer",

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co"

}

] | closed | false | null | [] | null | [

"The preview is working OK:\r\n\r\n\r\n\r\n"

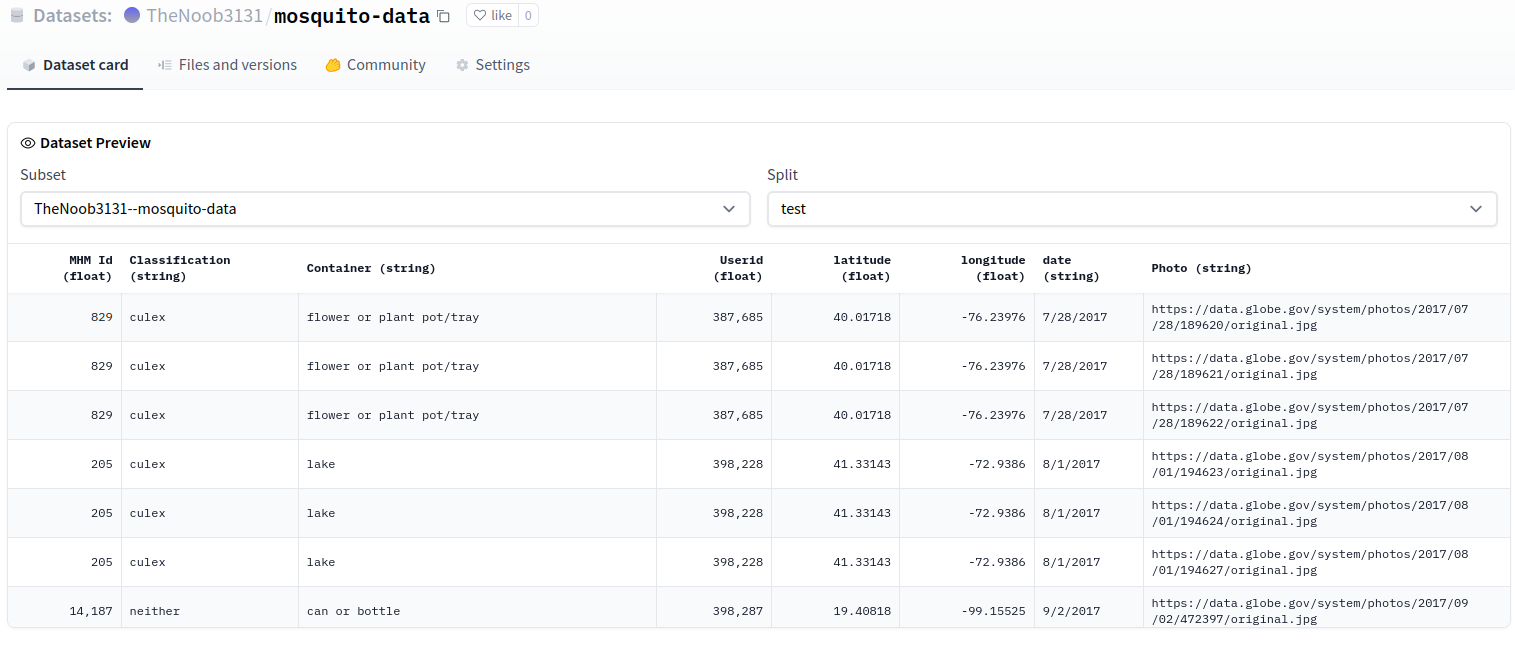

] | 1,658,381,088,000 | 1,658,389,916,000 | 1,658,389,501,000 | NONE | null | ### Link

https://huggingface.co/datasets/TheNoob3131/mosquito-data/viewer/TheNoob3131--mosquito-data/test

### Description

Dataset preview not showing with large files. Says 'split cache is empty' even though there are train and test splits.

### Owner

_No response_ | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4727/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4727/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4726 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4726/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4726/comments | https://api.github.com/repos/huggingface/datasets/issues/4726/events | https://github.com/huggingface/datasets/pull/4726 | 1,312,082,175 | PR_kwDODunzps47ykPI | 4,726 | Fix broken link to the Hub | {

"login": "stevhliu",

"id": 59462357,

"node_id": "MDQ6VXNlcjU5NDYyMzU3",

"avatar_url": "https://avatars.githubusercontent.com/u/59462357?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/stevhliu",

"html_url": "https://github.com/stevhliu",

"followers_url": "https://api.github.com/users/stevhliu/followers",

"following_url": "https://api.github.com/users/stevhliu/following{/other_user}",

"gists_url": "https://api.github.com/users/stevhliu/gists{/gist_id}",

"starred_url": "https://api.github.com/users/stevhliu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/stevhliu/subscriptions",

"organizations_url": "https://api.github.com/users/stevhliu/orgs",

"repos_url": "https://api.github.com/users/stevhliu/repos",

"events_url": "https://api.github.com/users/stevhliu/events{/privacy}",

"received_events_url": "https://api.github.com/users/stevhliu/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | 1,658,357,847,000 | 1,658,413,998,000 | 1,658,390,454,000 | MEMBER | null | The Markdown link fails to render if it is in the same line as the `<span>`. This PR implements @mishig25's fix by using `<a href=" ">` instead.

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4726/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4726/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4726",

"html_url": "https://github.com/huggingface/datasets/pull/4726",

"diff_url": "https://github.com/huggingface/datasets/pull/4726.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4726.patch",

"merged_at": 1658390454000

} | true |

https://api.github.com/repos/huggingface/datasets/issues/4725 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4725/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4725/comments | https://api.github.com/repos/huggingface/datasets/issues/4725/events | https://github.com/huggingface/datasets/issues/4725 | 1,311,907,096 | I_kwDODunzps5OMh0Y | 4,725 | the_pile datasets URL broken. | {

"login": "TrentBrick",

"id": 12433427,

"node_id": "MDQ6VXNlcjEyNDMzNDI3",

"avatar_url": "https://avatars.githubusercontent.com/u/12433427?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/TrentBrick",

"html_url": "https://github.com/TrentBrick",

"followers_url": "https://api.github.com/users/TrentBrick/followers",

"following_url": "https://api.github.com/users/TrentBrick/following{/other_user}",

"gists_url": "https://api.github.com/users/TrentBrick/gists{/gist_id}",

"starred_url": "https://api.github.com/users/TrentBrick/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/TrentBrick/subscriptions",

"organizations_url": "https://api.github.com/users/TrentBrick/orgs",

"repos_url": "https://api.github.com/users/TrentBrick/repos",

"events_url": "https://api.github.com/users/TrentBrick/events{/privacy}",

"received_events_url": "https://api.github.com/users/TrentBrick/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | null | [

{

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"Thanks for reporting, @TrentBrick. We are addressing the change with their data host server.\r\n\r\nOn the meantime, if you would like to work with your fixed local copy of the_pile script, you should use:\r\n```python\r\nload_dataset(\"path/to/your/local/the_pile/the_pile.py\",...\r\n```\r\ninstead of just `load_dataset(\"the_pile\",...`.\r\n\r\nThe latter downloads a copy of `the_pile.py` from our GitHub, caches it locally (inside `~/.cache/huggingface/modules`) and uses that.",

"@TrentBrick, I have checked the URLs and both hosts work, the original (https://the-eye.eu/) and the mirror (https://mystic.the-eye.eu/). See e.g.:\r\n- https://mystic.the-eye.eu/public/AI/pile/\r\n- https://mystic.the-eye.eu/public/AI/pile_preliminary_components/\r\n\r\nPlease, let me know if you still find any issue loading this dataset by using current server URLs.",

"Great this is working now. Re the download from GitHub... I'm sure thought went into doing this but could it be made more clear maybe here? https://huggingface.co/docs/datasets/installation for example under installing from source? I spent over an hour questioning my sanity as I kept trying to edit this file, uninstall and reinstall the repo, git reset to previous versions of the file etc.",

"Thanks for the quick reply and help too\r\n",

"Thanks @TrentBrick for the suggestion about improving our docs: we should definitely do this if you find they are not clear enough.\r\n\r\nCurrently, our docs explain how to load a dataset from a local loading script here: [Load > Local loading script](https://huggingface.co/docs/datasets/loading#local-loading-script)\r\n\r\nI've opened an issue here:\r\n- #4732\r\n\r\nFeel free to comment on it any additional explanation/suggestion/requirement related to this problem."

] | 1,658,350,650,000 | 1,658,470,186,000 | 1,658,389,099,000 | NONE | null | https://github.com/huggingface/datasets/pull/3627 changed the Eleuther AI Pile dataset URL from https://the-eye.eu/ to https://mystic.the-eye.eu/ but the latter is now broken and the former works again.

Note that when I git clone the repo and use `pip install -e .` and then edit the URL back the codebase doesn't seem to use this edit so the mystic URL is also cached somewhere else that I can't find? | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4725/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4725/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/4724 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4724/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4724/comments | https://api.github.com/repos/huggingface/datasets/issues/4724/events | https://github.com/huggingface/datasets/pull/4724 | 1,311,127,404 | PR_kwDODunzps47vLrP | 4,724 | Download and prepare as Parquet for cloud storage | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_4724). All of your documentation changes will be reflected on that endpoint."

] | 1,658,324,342,000 | 1,658,769,419,000 | null | MEMBER | null | Download a dataset as Parquet in a cloud storage can be useful for streaming mode and to use with spark/dask/ray.

This PR adds support for `fsspec` URIs like `s3://...`, `gcs://...` etc. and ads the `file_format` to save as parquet instead of arrow:

```python

from datasets import *

cache_dir = "s3://..."

builder = load_dataset_builder("crime_and_punish", cache_dir=cache_dir)

builder.download_and_prepare(file_format="parquet")

```

credentials to cloud storage can be passed using the `storage_options` argument in `load_dataset_builder`

For consistency with the BeamBasedBuilder, I name the parquet files `{builder.name}-{split}-xxxxx-of-xxxxx.parquet`. I think this is fine since we'll need to implement parquet sharding after this PR, so that a dataset can be used efficiently with dask for example.

TODO:

- [x] docs

- [x] tests | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/4724/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/4724/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4724",

"html_url": "https://github.com/huggingface/datasets/pull/4724",

"diff_url": "https://github.com/huggingface/datasets/pull/4724.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4724.patch",

"merged_at": null

} | true |