
| markdown (string, lengths 0 to 1.02M) | code (string, lengths 0 to 832k) | output (string, lengths 0 to 1.02M) | license (string, lengths 3 to 36) | path (string, lengths 6 to 265) | repo_name (string, lengths 6 to 127) |
|---|---|---|---|---|---|

09 Strain Gage

This is one of the most commonly used sensors. It is used in many transducers. Its fundamental operating principle is fairly easy to understand, and explaining it is the purpose of this lecture.

A strain gage is essentially a thin wire wrapped on a plastic film. The strain gage is then mounted (glued) on the part for which the strain must be measured.

Stress, Strain

When a beam is under axial load, the axial stress, $\sigma_a$, is defined as:
\begin{align*}\sigma_a = \frac{F}{A}\end{align*}
with $F$ the axial load, and $A$ the cross-sectional area of the beam under axial load.

Under the load, the beam of length $L$ will extend by $dL$, giving rise to the definition of strain, $\epsilon_a$:
\begin{align*}\epsilon_a = \frac{dL}{L}\end{align*}
The beam will also contract laterally: the cross-sectional area is reduced by $dA$. This results in a transverse strain $\epsilon_t$. The transverse and axial strains are related by Poisson's ratio:
\begin{align*}\nu = - \frac{\epsilon_t }{\epsilon_a}\end{align*}
For a metal, Poisson's ratio is typically $\nu = 0.3$; for an incompressible material, such as rubber (or water), $\nu = 0.5$.

Within the elastic limit, the axial stress and axial strain are related through Hooke's law by Young's modulus, $E$:
\begin{align*}\sigma_a = E \epsilon_a\end{align*}

Resistance of a wire

The electrical resistance of a wire, $R$, is related to its physical properties (the electrical resistivity, $\rho$ in $\Omega\cdot$m) and its geometry: length $L$ and cross-sectional area $A$.
\begin{align*}R = \frac{\rho L}{A}\end{align*}
Mathematically, a change in the wire dimensions will result in a change in its electrical resistance. This can be derived from first principles by taking the logarithm of $R = \rho L / A$ and differentiating:
\begin{align}\frac{dR}{R} = \frac{d\rho}{\rho} + \frac{dL}{L} - \frac{dA}{A}\end{align}
If the wire has a square cross section of side $L'$, then:
\begin{align*}A & = L'^2 \\\frac{dA}{A} & = \frac{d(L'^2)}{L'^2} = \frac{2L'dL'}{L'^2} = 2 \frac{dL'}{L'}\end{align*}
This relates the change in cross-sectional area to the transverse strain:
\begin{align*}\epsilon_t = \frac{dL'}{L'}\end{align*}
Using Poisson's ratio, we can then relate the change in cross-sectional area ($dA/A$) to the axial strain $\epsilon_a = dL/L$:
\begin{align*}\epsilon_t &= - \nu \epsilon_a \\\frac{dL'}{L'} &= - \nu \frac{dL}{L} \; \text{or}\\\frac{dA}{A} & = 2\frac{dL'}{L'} = -2 \nu \frac{dL}{L}\end{align*}
Finally, we can substitute this expression for $dA/A$ into the equation for $dR/R$ and relate the change in resistance to the change in wire geometry, remembering that for a metal $\nu =0.3$, so that $1 + 2\nu = 1.6$:
\begin{align}\frac{dR}{R} & = \frac{d\rho}{\rho} + \frac{dL}{L} - \frac{dA}{A} \\& = \frac{d\rho}{\rho} + \frac{dL}{L} - (-2\nu \frac{dL}{L}) \\& = \frac{d\rho}{\rho} + 1.6 \frac{dL}{L} = \frac{d\rho}{\rho} + 1.6 \epsilon_a\end{align}
It also happens that for most metals, the resistivity increases with axial strain. In general, one can then relate the change in resistance to the axial strain by defining the strain gage factor:
\begin{align}S = 1.6 + \frac{d\rho}{\rho}\cdot \frac{1}{\epsilon_a}\end{align}
and finally, we have:
\begin{align*}\frac{dR}{R} = S \epsilon_a\end{align*}
$S$ is material dependent and is typically equal to 2.0 for most commercially available strain gages. It is dimensionless.

Strain gages are made of thin wire that is wrapped in several loops, effectively increasing the length of the wire and therefore the sensitivity of the sensor.

_Question: Explain why a longer wire is necessary to increase the sensitivity of the sensor._

Most commercially available strain gages have a nominal resistance (resistance under no load, $R_{ini}$) of 120 or 350 $\Omega$.

Within the elastic regime, strain is typically within the range $10^{-6} - 10^{-3}$; in fact, strain is expressed in units of microstrain, with 1 microstrain = $10^{-6}$. Therefore, relative changes in resistance will be of the same order. If one were to measure the resistance directly, one would need a dynamic range of 120 dB, which is typically very expensive. Instead, one uses the Wheatstone bridge to transform the change in resistance into a voltage, which is easier to measure and does not require such a large dynamic range.

Wheatstone bridge:

The output voltage is related to the difference in resistances in the bridge:
\begin{align*}\frac{V_o}{V_s} = \frac{R_1R_3-R_2R_4}{(R_1+R_4)(R_2+R_3)}\end{align*}
If the bridge is balanced, then $V_o = 0$, which implies $R_1/R_2 = R_4/R_3$.

In practice, finding a set of resistors that balances the bridge is challenging, and a potentiometer is used as one of the resistances to make minor adjustments to balance the bridge. If one did not make the adjustment (i.e. if we did not zero the bridge), then all the measurements would have an offset or bias that could be removed in a post-processing phase, as long as the bias stayed constant.

Suppose each resistance $R_i$ is made to vary slightly around its initial value, i.e. $R_i = R_{i,ini} + dR_i$. For simplicity, we will assume that the initial values of the four resistances are equal, i.e. $R_{1,ini} = R_{2,ini} = R_{3,ini} = R_{4,ini} = R_{ini}$. This implies that the bridge was initially balanced, and the output voltage is then:
\begin{align*}\frac{V_o}{V_s} = \frac{1}{4} \left( \frac{dR_1}{R_{ini}} - \frac{dR_2}{R_{ini}} + \frac{dR_3}{R_{ini}} - \frac{dR_4}{R_{ini}} \right)\end{align*}
Note here that the changes in $R_1$ and $R_3$ have a positive effect on $V_o$, while the changes in $R_2$ and $R_4$ have a negative effect on $V_o$. In practice, this means that if a beam is in tension, then a strain gage mounted on branch 1 or 3 of the Wheatstone bridge will produce a positive voltage, while a strain gage mounted on branch 2 or 4 will produce a negative voltage. One takes advantage of this to increase the sensitivity of the strain measurement.

Quarter bridge

One uses only one quarter of the bridge, i.e. a strain gage is mounted on only one branch of the bridge.
\begin{align*}\frac{V_o}{V_s} = \pm \frac{1}{4} \epsilon_a S\end{align*}
Sensitivity, $G$:
\begin{align*}G = \frac{V_o}{\epsilon_a} = \pm \frac{1}{4}S V_s\end{align*}

Half bridge

One uses half of the bridge, i.e. strain gages are mounted on two branches of the bridge.
\begin{align*}\frac{V_o}{V_s} = \pm \frac{1}{2} \epsilon_a S\end{align*}

Full bridge

One uses all of the branches of the bridge, i.e. strain gages are mounted on each branch.
\begin{align*}\frac{V_o}{V_s} = \pm \epsilon_a S\end{align*}
Therefore, as we increase the order of the bridge, the sensitivity of the instrument increases. However, one should be careful about how the strain gages are mounted so that their contributions do not cancel out.

_Exercise_

1- Wheatstone bridge

> How important is it to know \& match the resistances of the resistors you employ to create your bridge?

> How would you do that practically?

> Assume $R_1=120\,\Omega$, $R_2=120\,\Omega$, $R_3=120\,\Omega$, $R_4=110\,\Omega$, $V_s=5.00\,\text{V}$. What is $V_\circ$?
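The bridge equation and the quarter- and half-bridge configurations from this lecture can be checked numerically. The following is a minimal sketch: the helper names `bridge_output` and `gage_resistance` are illustrative and not part of the lecture, and the values ($R_{ini} = 120\,\Omega$, $S = 2$, $\epsilon_a = 1000$ microstrain, $V_s = 5$ V) are the typical ones quoted in the text.

```python
def bridge_output(r1, r2, r3, r4, vs):
    """Vo from Vo/Vs = (R1*R3 - R2*R4) / ((R1 + R4)*(R2 + R3))."""
    return vs * (r1 * r3 - r2 * r4) / ((r1 + r4) * (r2 + r3))


def gage_resistance(r_ini, gage_factor, strain):
    """Resistance of a strained gage, from dR/R = S * eps_a."""
    return r_ini * (1.0 + gage_factor * strain)


vs = 5.0        # supply voltage, V
r_ini = 120.0   # nominal resistance, Ohm
S = 2.0         # strain gage factor (typical value)
eps = 1000e-6   # axial strain (1000 microstrain)

r_tension = gage_resistance(r_ini, S, eps)        # gage stretched
r_compression = gage_resistance(r_ini, S, -eps)   # gage compressed

# Quarter bridge: one gage on branch 1 (positive branch)
vo_quarter = bridge_output(r_tension, r_ini, r_ini, r_ini, vs)
# Half bridge: tension gage on branch 1, compression gage on branch 2
vo_half = bridge_output(r_tension, r_compression, r_ini, r_ini, vs)
# Small-strain (linearized) predictions from the lecture
vo_quarter_linear = 0.25 * S * eps * vs
vo_half_linear = 0.5 * S * eps * vs

print('quarter bridge:', vo_quarter, 'V, linearized:', vo_quarter_linear, 'V')
print('half bridge:   ', vo_half, 'V, linearized:', vo_half_linear, 'V')
```

The exact and linearized values agree closely because $dR/R_{ini}$ is small, which is the assumption behind the $\frac{1}{4}$, $\frac{1}{2}$ and $1$ factors.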

|

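Vs = 5.00  # supply voltage, V
# Vo/Vs = (R1*R3 - R2*R4) / ((R1 + R4)*(R2 + R3))
# with R1 = R2 = R3 = 120 Ohm and R4 = 110 Ohm
Vo = (120**2-120*110)/(230*240) * Vs
print('Vo = ',Vo, ' V')
# typical range of strain a strain gage can measure:
# 1 - 1000 microstrain
AxialStrain = 1000*10**(-6) # axial strain
StrainGageFactor = 2
R_ini = 120 # Ohm
# resistance of the strained gage: R = R_ini * (1 + S * eps_a)
R_1 = R_ini+R_ini*StrainGageFactor*AxialStrain
print(R_1)
# Note: despite its name, R_1 enters the formula below in the position of R4
# (a negative branch of the bridge), so a tensile strain gives a negative Vo.
Vo = (120**2-120*(R_1))/((120+R_1)*240) * Vs
print('Vo = ', Vo, ' V')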

|

120.24

Vo = -0.002497502497502434 V

|

BSD-3-Clause

|

Lectures/09_StrainGage.ipynb

|

eiriniflorou/GWU-MAE3120_2022

|

> How important is it to know \& match the resistances of the resistors you employ to create your bridge?> How would you do that practically?> Assume $R_1= R_2 =R_3=120\,\Omega$, $R_4=120.01\,\Omega$, $V_s=5.00\,\text{V}$. What is $V_\circ$?
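To put the resistor mismatch in perspective, the offset voltage can be converted into the strain that a quarter bridge would report for the same output. This is a minimal sketch: it assumes the typical gage factor $S = 2$ quoted in the lecture, and the variable names are illustrative.

```python
vs = 5.0   # supply voltage, V
S = 2.0    # assumed strain gage factor (typical value from the lecture)

# Offset caused by R4 = 120.01 Ohm while R1 = R2 = R3 = 120 Ohm
r1 = r2 = r3 = 120.0
r4 = 120.01
vo_offset = vs * (r1 * r3 - r2 * r4) / ((r1 + r4) * (r2 + r3))

# Quarter-bridge sensitivity: |Vo| = (1/4) * S * Vs * eps_a
volts_per_unit_strain = 0.25 * S * vs
equivalent_microstrain = abs(vo_offset) / volts_per_unit_strain * 1e6

print('offset voltage:', vo_offset, 'V')
print('equivalent strain:', equivalent_microstrain, 'microstrain')
```

A mismatch of only $0.01\,\Omega$ therefore looks like a strain of a few tens of microstrain, which is why the bridge has to be balanced or the zero-load offset subtracted.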

|

Vs = 5.00

Vo = (120**2-120*120.01)/(240.01*240) * Vs

print(Vo)

|

-0.00010416232656978944

|

BSD-3-Clause

|

Lectures/09_StrainGage.ipynb

|

eiriniflorou/GWU-MAE3120_2022

|

2- Strain gage 1:

One measures the strain on a steel bridge beam. The modulus of elasticity is $E=190$ GPa. Only one strain gage is mounted on the bottom of the beam; the strain gage factor is $S=2.02$.

> a) What kind of electronic circuit will you use? Draw a sketch of it.

> b) Assume all your resistors, including the unloaded strain gage, are balanced and measure $120\,\Omega$, and that the strain gage is at location $R_2$. The supply voltage is $5.00\,\text{VDC}$. Will $V_\circ$ be positive or negative when a downward load is added?

In practice, we cannot have all resistances exactly equal to 120 $\Omega$: at zero load, the bridge will be unbalanced ($V_o \neq 0$). How could we balance our bridge?

Use a potentiometer to balance the bridge; for a load cell, we "zero" the instrument.

Is there another option to zero out the instrument? Take data at zero load, record the voltage $V_{o,noload}$, and subtract $V_{o,noload}$ from the data.

> c) For a loading in which $V_\circ = -1.25\,\text{mV}$, calculate the strain $\epsilon_a$ in units of microstrain.

\begin{align*}\frac{V_o}{V_s} & = - \frac{1}{4} \epsilon_a S\\\epsilon_a & = -\frac{4}{S} \frac{V_o}{V_s}\end{align*}
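The relation above can be wrapped in a small helper that also reports the result in microstrain and, through Hooke's law $\sigma_a = E \epsilon_a$, the corresponding axial stress. This is a sketch only: the function name `quarter_bridge_strain` and its `sign` parameter are illustrative, not part of the exercise.

```python
def quarter_bridge_strain(vo, vs, gage_factor, sign=-1.0):
    """Axial strain from a quarter-bridge output voltage.

    sign = -1 for a gage on branch 2 or 4 (as in this exercise),
    sign = +1 for a gage on branch 1 or 3.
    """
    return sign * (4.0 / gage_factor) * (vo / vs)


E = 190e9   # modulus of elasticity, Pa
eps_a = quarter_bridge_strain(vo=-1.25e-3, vs=5.0, gage_factor=2.02)
microstrain = eps_a * 1e6
stress_mpa = E * eps_a / 1e6   # Hooke's law, converted to MPa

print('strain:', microstrain, 'microstrain')
print('stress:', stress_mpa, 'MPa')
```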

|

S = 2.02

Vo = -0.00125

Vs = 5

eps_a = -1*(4/S)*(Vo/Vs)

print(eps_a)

|

0.0004950495049504951

|

BSD-3-Clause

|

Lectures/09_StrainGage.ipynb

|

eiriniflorou/GWU-MAE3120_2022

|

Tabular learner

> The function to immediately get a `Learner` ready to train for tabular data

The main function you probably want to use in this module is `tabular_learner`. It will automatically create a `TabularModel` suitable for your data and infer the right loss function. See the [tabular tutorial](http://docs.fast.ai/tutorial.tabular) for an example of use in context.

Main functions

|

#export
@log_args(but_as=Learner.__init__)
class TabularLearner(Learner):
    "`Learner` for tabular data"
    def predict(self, row):
        tst_to = self.dls.valid_ds.new(pd.DataFrame(row).T)
        tst_to.process()
        tst_to.conts = tst_to.conts.astype(np.float32)
        dl = self.dls.valid.new(tst_to)
        inp,preds,_,dec_preds = self.get_preds(dl=dl, with_input=True, with_decoded=True)
        i = getattr(self.dls, 'n_inp', -1)
        b = (*tuplify(inp),*tuplify(dec_preds))
        full_dec = self.dls.decode((*tuplify(inp),*tuplify(dec_preds)))
        return full_dec,dec_preds[0],preds[0]

show_doc(TabularLearner, title_level=3)

|

_____no_output_____

|

Apache-2.0

|

nbs/43_tabular.learner.ipynb

|

NickVlasov/fastai

|

It works exactly as a normal `Learner`, the only difference is that it implements a `predict` method specific to work on a row of data.

|

#export
@log_args(to_return=True, but_as=Learner.__init__)
@delegates(Learner.__init__)
def tabular_learner(dls, layers=None, emb_szs=None, config=None, n_out=None, y_range=None, **kwargs):
    "Get a `Learner` using `dls`, with `metrics`, including a `TabularModel` created using the remaining params."
    if config is None: config = tabular_config()
    if layers is None: layers = [200,100]
    to = dls.train_ds
    emb_szs = get_emb_sz(dls.train_ds, {} if emb_szs is None else emb_szs)
    if n_out is None: n_out = get_c(dls)
    assert n_out, "`n_out` is not defined, and could not be inferred from data, set `dls.c` or pass `n_out`"
    if y_range is None and 'y_range' in config: y_range = config.pop('y_range')
    model = TabularModel(emb_szs, len(dls.cont_names), n_out, layers, y_range=y_range, **config)
    return TabularLearner(dls, model, **kwargs)

|

_____no_output_____

|

Apache-2.0

|

nbs/43_tabular.learner.ipynb

|

NickVlasov/fastai

|

If your data was built with fastai, you probably won't need to pass anything to `emb_szs` unless you want to change the default of the library (produced by `get_emb_sz`); the same goes for `n_out`, which should be automatically inferred. `layers` will default to `[200,100]` and is passed to `TabularModel` along with the `config`.

Use `tabular_config` to create a `config` and customize the model used. `y_range` gets its own argument because it is often used.

All the other arguments are passed to `Learner`.
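To make the `emb_szs` default more concrete, here is a standalone sketch of how embedding sizes can be derived from the number of categories, with a per-column override. The rule of thumb below is believed to match the one fastai uses inside `get_emb_sz`, but that is an assumption to verify against your installed version; `pick_emb_sizes` and the column cardinalities are purely illustrative.

```python
def emb_sz_rule(n_cat):
    """Rule-of-thumb embedding width for a categorical variable with n_cat levels.

    Assumed to mirror fastai's default; check the library source to confirm.
    """
    return min(600, round(1.6 * n_cat ** 0.56))


def pick_emb_sizes(cardinalities, overrides=None):
    """Return (n_cat, emb_size) pairs, letting `overrides` replace the default.

    Hypothetical helper: it imitates the role of the `emb_szs` dict, where a
    column name maps to the embedding size to use instead of the default.
    """
    overrides = {} if overrides is None else overrides
    return [(n_cat, overrides.get(name, emb_sz_rule(n_cat)))
            for name, n_cat in cardinalities.items()]


# Illustrative cardinalities, not taken from the ADULT_SAMPLE dataset
sizes = pick_emb_sizes({'workclass': 10, 'education': 17},
                       overrides={'education': 4})
print(sizes)
```

In fastai itself the override is simply passed as `emb_szs={'education': 4}` to `tabular_learner`, as the signature above shows.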

|

path = untar_data(URLs.ADULT_SAMPLE)

df = pd.read_csv(path/'adult.csv')

cat_names = ['workclass', 'education', 'marital-status', 'occupation', 'relationship', 'race']

cont_names = ['age', 'fnlwgt', 'education-num']

procs = [Categorify, FillMissing, Normalize]

dls = TabularDataLoaders.from_df(df, path, procs=procs, cat_names=cat_names, cont_names=cont_names,

y_names="salary", valid_idx=list(range(800,1000)), bs=64)

learn = tabular_learner(dls)

#hide

tst = learn.predict(df.iloc[0])

#hide

#test y_range is passed

learn = tabular_learner(dls, y_range=(0,32))

assert isinstance(learn.model.layers[-1], SigmoidRange)

test_eq(learn.model.layers[-1].low, 0)

test_eq(learn.model.layers[-1].high, 32)

learn = tabular_learner(dls, config = tabular_config(y_range=(0,32)))

assert isinstance(learn.model.layers[-1], SigmoidRange)

test_eq(learn.model.layers[-1].low, 0)

test_eq(learn.model.layers[-1].high, 32)

#export
@typedispatch
def show_results(x:Tabular, y:Tabular, samples, outs, ctxs=None, max_n=10, **kwargs):
    df = x.all_cols[:max_n]
    for n in x.y_names: df[n+'_pred'] = y[n][:max_n].values
    display_df(df)

|

_____no_output_____

|

Apache-2.0

|

nbs/43_tabular.learner.ipynb

|

NickVlasov/fastai

|

Export -

|

#hide

from nbdev.export import notebook2script

notebook2script()

|

Converted 00_torch_core.ipynb.

Converted 01_layers.ipynb.

Converted 02_data.load.ipynb.

Converted 03_data.core.ipynb.

Converted 04_data.external.ipynb.

Converted 05_data.transforms.ipynb.

Converted 06_data.block.ipynb.

Converted 07_vision.core.ipynb.

Converted 08_vision.data.ipynb.

Converted 09_vision.augment.ipynb.

Converted 09b_vision.utils.ipynb.

Converted 09c_vision.widgets.ipynb.

Converted 10_tutorial.pets.ipynb.

Converted 11_vision.models.xresnet.ipynb.

Converted 12_optimizer.ipynb.

Converted 13_callback.core.ipynb.

Converted 13a_learner.ipynb.

Converted 13b_metrics.ipynb.

Converted 14_callback.schedule.ipynb.

Converted 14a_callback.data.ipynb.

Converted 15_callback.hook.ipynb.

Converted 15a_vision.models.unet.ipynb.

Converted 16_callback.progress.ipynb.

Converted 17_callback.tracker.ipynb.

Converted 18_callback.fp16.ipynb.

Converted 18a_callback.training.ipynb.

Converted 19_callback.mixup.ipynb.

Converted 20_interpret.ipynb.

Converted 20a_distributed.ipynb.

Converted 21_vision.learner.ipynb.

Converted 22_tutorial.imagenette.ipynb.

Converted 23_tutorial.vision.ipynb.

Converted 24_tutorial.siamese.ipynb.

Converted 24_vision.gan.ipynb.

Converted 30_text.core.ipynb.

Converted 31_text.data.ipynb.

Converted 32_text.models.awdlstm.ipynb.

Converted 33_text.models.core.ipynb.

Converted 34_callback.rnn.ipynb.

Converted 35_tutorial.wikitext.ipynb.

Converted 36_text.models.qrnn.ipynb.

Converted 37_text.learner.ipynb.

Converted 38_tutorial.text.ipynb.

Converted 40_tabular.core.ipynb.

Converted 41_tabular.data.ipynb.

Converted 42_tabular.model.ipynb.

Converted 43_tabular.learner.ipynb.

Converted 44_tutorial.tabular.ipynb.

Converted 45_collab.ipynb.

Converted 46_tutorial.collab.ipynb.

Converted 50_tutorial.datablock.ipynb.

Converted 60_medical.imaging.ipynb.

Converted 61_tutorial.medical_imaging.ipynb.

Converted 65_medical.text.ipynb.

Converted 70_callback.wandb.ipynb.

Converted 71_callback.tensorboard.ipynb.

Converted 72_callback.neptune.ipynb.

Converted 73_callback.captum.ipynb.

Converted 74_callback.cutmix.ipynb.

Converted 97_test_utils.ipynb.

Converted 99_pytorch_doc.ipynb.

Converted index.ipynb.

Converted tutorial.ipynb.

|

Apache-2.0

|

nbs/43_tabular.learner.ipynb

|

NickVlasov/fastai

|

Aerospike Connect for Spark - SparkML Prediction Model Tutorial

Tested with Java 8, Spark 3.0.0, Python 3.7, and Aerospike Spark Connector 3.0.0

Summary

Build a linear regression model to predict birth weight using Aerospike Database and Spark. Here are the features used:
- gestation weeks
- mother’s age
- father’s age
- mother’s weight gain during pregnancy
- [Apgar score](https://en.wikipedia.org/wiki/Apgar_score)

Aerospike is used to store the Natality dataset that is published by the CDC. The table is accessed in Apache Spark using the Aerospike Spark Connector, and Spark ML is used to build and evaluate the model. The model can later be converted to PMML and deployed on your inference server for predictions.

Prerequisites

1. Load Aerospike server if not already available - docker run -d --name aerospike -p 3000:3000 -p 3001:3001 -p 3002:3002 -p 3003:3003 aerospike
2. Feature key needs to be located in AS_FEATURE_KEY_PATH
3. [Download the connector](https://www.aerospike.com/enterprise/download/connectors/aerospike-spark/3.0.0/)

|

#IP Address or DNS name for one host in your Aerospike cluster.

#A seed address for the Aerospike database cluster is required

AS_HOST ="127.0.0.1"

# Name of one of your namespaces. Type 'show namespaces' at the aql prompt if you are not sure

AS_NAMESPACE = "test"

AS_FEATURE_KEY_PATH = "/etc/aerospike/features.conf"

AEROSPIKE_SPARK_JAR_VERSION="3.0.0"

AS_PORT = 3000 # Usually 3000, but change here if not

AS_CONNECTION_STRING = AS_HOST + ":"+ str(AS_PORT)

#Locate the Spark installation - this'll use the SPARK_HOME environment variable

import findspark

findspark.init()

# Below will help you download the Spark Connector Jar if you haven't done so already.

import urllib.request
import os

def aerospike_spark_jar_download_url(version=AEROSPIKE_SPARK_JAR_VERSION):
    DOWNLOAD_PREFIX="https://www.aerospike.com/enterprise/download/connectors/aerospike-spark/"
    DOWNLOAD_SUFFIX="/artifact/jar"
    # Build the URL from the requested version rather than the module-level default
    AEROSPIKE_SPARK_JAR_DOWNLOAD_URL = DOWNLOAD_PREFIX+version+DOWNLOAD_SUFFIX
    return AEROSPIKE_SPARK_JAR_DOWNLOAD_URL

def download_aerospike_spark_jar(version=AEROSPIKE_SPARK_JAR_VERSION):
    JAR_NAME="aerospike-spark-assembly-"+version+".jar"
    # Download the jar only if it is not already in the working directory
    if(not(os.path.exists(JAR_NAME))) :
        urllib.request.urlretrieve(aerospike_spark_jar_download_url(version),JAR_NAME)
    else :
        print(JAR_NAME+" already downloaded")
    return os.path.join(os.getcwd(),JAR_NAME)

AEROSPIKE_JAR_PATH=download_aerospike_spark_jar()

os.environ["PYSPARK_SUBMIT_ARGS"] = '--jars ' + AEROSPIKE_JAR_PATH + ' pyspark-shell'

import pyspark

from pyspark.context import SparkContext

from pyspark.sql.context import SQLContext

from pyspark.sql.session import SparkSession

from pyspark.ml.linalg import Vectors

from pyspark.ml.regression import LinearRegression

from pyspark.sql.types import StringType, StructField, StructType, ArrayType, IntegerType, MapType, LongType, DoubleType

#Get a spark session object and set required Aerospike configuration properties

sc = SparkContext.getOrCreate()

print("Spark Verison:", sc.version)

spark = SparkSession(sc)

sqlContext = SQLContext(sc)

spark.conf.set("aerospike.namespace",AS_NAMESPACE)

spark.conf.set("aerospike.seedhost",AS_CONNECTION_STRING)

spark.conf.set("aerospike.keyPath",AS_FEATURE_KEY_PATH )

|

Spark Verison: 3.0.0

|

MIT

|

notebooks/spark/other_notebooks/AerospikeSparkMLLinearRegression.ipynb

|

artanderson/interactive-notebooks

|

Step 1: Load Data into a DataFrame

|

as_data=spark \

.read \

.format("aerospike") \

.option("aerospike.set", "natality").load()

as_data.show(5)

print("Inferred Schema along with Metadata.")

as_data.printSchema()

|

+-----+--------------------+---------+------------+-------+-------------+---------------+-------------+----------+----------+----------+

|__key| __digest| __expiry|__generation| __ttl| weight_pnd|weight_gain_pnd|gstation_week|apgar_5min|mother_age|father_age|

+-----+--------------------+---------+------------+-------+-------------+---------------+-------------+----------+----------+----------+

| null|[00 E0 68 A0 09 5...|354071840| 1|2367835| 6.9996768185| 99| 36| 99| 13| 15|

| null|[01 B0 1F 4D D6 9...|354071839| 1|2367834| 5.291094288| 18| 40| 9| 14| 99|

| null|[02 C0 93 23 F1 1...|354071837| 1|2367832| 6.8122838958| 24| 39| 9| 42| 36|

| null|[02 B0 C4 C7 3B F...|354071838| 1|2367833|7.67649596284| 99| 39| 99| 14| 99|

| null|[02 70 2A 45 E4 2...|354071843| 1|2367838| 7.8594796403| 40| 39| 8| 13| 99|

+-----+--------------------+---------+------------+-------+-------------+---------------+-------------+----------+----------+----------+

only showing top 5 rows

Inferred Schema along with Metadata.

root

|-- __key: string (nullable = true)

|-- __digest: binary (nullable = false)

|-- __expiry: integer (nullable = false)

|-- __generation: integer (nullable = false)

|-- __ttl: integer (nullable = false)

|-- weight_pnd: double (nullable = true)

|-- weight_gain_pnd: long (nullable = true)

|-- gstation_week: long (nullable = true)

|-- apgar_5min: long (nullable = true)

|-- mother_age: long (nullable = true)

|-- father_age: long (nullable = true)

|

MIT

|

notebooks/spark/other_notebooks/AerospikeSparkMLLinearRegression.ipynb

|

artanderson/interactive-notebooks

|

To speed up the load process at scale, use the [knobs](https://www.aerospike.com/docs/connect/processing/spark/performance.html) available in the Aerospike Spark Connector. For example, **spark.conf.set("aerospike.partition.factor", 15 )** will map 4096 Aerospike partitions to 32K Spark partitions. (Note: Please configure this carefully based on the available resources (CPU threads) in your system.)

Step 2 - Prep data

|

# This Spark3.0 setting, if true, will turn on Adaptive Query Execution (AQE), which will make use of the

# runtime statistics to choose the most efficient query execution plan. It will speed up any joins that you

# plan to use for data prep step.

spark.conf.set("spark.sql.adaptive.enabled", 'true')

# Run a query in Spark SQL to ensure no NULL values exist.

as_data.createOrReplaceTempView("natality")

sql_query = """

SELECT *

from natality

where weight_pnd is not null

and mother_age is not null

and father_age is not null

and father_age < 80

and gstation_week is not null

and weight_gain_pnd < 90

and apgar_5min != "99"

and apgar_5min != "88"

"""

clean_data = spark.sql(sql_query)

#Drop the Aerospike metadata from the dataset because it's not required.

#The metadata is added because we are inferring the schema as opposed to providing a strict schema

columns_to_drop = ['__key','__digest','__expiry','__generation','__ttl' ]

clean_data = clean_data.drop(*columns_to_drop)

# dropping null values

clean_data = clean_data.dropna()

clean_data.cache()

clean_data.show(5)

#Descriptive Analysis of the data

clean_data.describe().toPandas().transpose()

|

+------------------+---------------+-------------+----------+----------+----------+

| weight_pnd|weight_gain_pnd|gstation_week|apgar_5min|mother_age|father_age|

+------------------+---------------+-------------+----------+----------+----------+

| 7.5398093604| 38| 39| 9| 42| 41|

| 7.3634395508| 25| 37| 9| 14| 18|

| 7.06361087448| 26| 39| 9| 42| 28|

|6.1244416383599996| 20| 37| 9| 44| 41|

| 7.06361087448| 49| 38| 9| 14| 18|

+------------------+---------------+-------------+----------+----------+----------+

only showing top 5 rows

|

MIT

|

notebooks/spark/other_notebooks/AerospikeSparkMLLinearRegression.ipynb

|

artanderson/interactive-notebooks

|

Step 3 Visualize Data

|

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

import math

pdf = clean_data.toPandas()

#Histogram - Father Age

pdf[['father_age']].plot(kind='hist',bins=10,rwidth=0.8)

plt.xlabel('Fathers Age (years)',fontsize=12)

plt.legend(loc=None)

plt.style.use('seaborn-whitegrid')

plt.show()

'''

pdf[['mother_age']].plot(kind='hist',bins=10,rwidth=0.8)

plt.xlabel('Mothers Age (years)',fontsize=12)

plt.legend(loc=None)

plt.style.use('seaborn-whitegrid')

plt.show()

'''

pdf[['weight_pnd']].plot(kind='hist',bins=10,rwidth=0.8)

plt.xlabel('Babys Weight (Pounds)',fontsize=12)

plt.legend(loc=None)

plt.style.use('seaborn-whitegrid')

plt.show()

pdf[['gstation_week']].plot(kind='hist',bins=10,rwidth=0.8)

plt.xlabel('Gestation (Weeks)',fontsize=12)

plt.legend(loc=None)

plt.style.use('seaborn-whitegrid')

plt.show()

pdf[['weight_gain_pnd']].plot(kind='hist',bins=10,rwidth=0.8)

plt.xlabel('mother’s weight gain during pregnancy',fontsize=12)

plt.legend(loc=None)

plt.style.use('seaborn-whitegrid')

plt.show()

#Histogram - Apgar Score

print("Apgar Score: Scores of 7 and above are generally normal; 4 to 6, fairly low; and 3 and below are generally \

regarded as critically low and cause for immediate resuscitative efforts.")

pdf[['apgar_5min']].plot(kind='hist',bins=10,rwidth=0.8)

plt.xlabel('Apgar score',fontsize=12)

plt.legend(loc=None)

plt.style.use('seaborn-whitegrid')

plt.show()

|

_____no_output_____

|

MIT

|

notebooks/spark/other_notebooks/AerospikeSparkMLLinearRegression.ipynb

|

artanderson/interactive-notebooks

|

Step 4 - Create Model

**Steps used for model creation:**
1. Split cleaned data into Training and Test sets
2. Vectorize features on which the model will be trained
3. Create a linear regression model (Choose any ML algorithm that provides the best fit for the given dataset)
4. Train model (Although not shown here, you could use K-fold cross-validation and Grid Search to choose the best hyper-parameters for the model)
5. Evaluate model

|

# Define a function that collects the features of interest
# (mother_age, father_age, gstation_week, weight_gain_pnd, and apgar_5min) into a vector.
# Package the vector in a tuple containing the label (`weight_pnd`) for that
# row.
def vector_from_inputs(r):
    return (r["weight_pnd"], Vectors.dense(float(r["mother_age"]),
                                           float(r["father_age"]),
                                           float(r["gstation_week"]),
                                           float(r["weight_gain_pnd"]),
                                           float(r["apgar_5min"])))

#Split that data 70% training and 30% Evaluation data

train, test = clean_data.randomSplit([0.7, 0.3])

#Check the shape of the data

train.show()

print((train.count(), len(train.columns)))

test.show()

print((test.count(), len(test.columns)))

# Create an input DataFrame for Spark ML using the above function.

training_data = train.rdd.map(vector_from_inputs).toDF(["label",

"features"])

# Construct a new LinearRegression object and fit the training data.

lr = LinearRegression(maxIter=5, regParam=0.2, solver="normal")

#Voila! your first model using Spark ML is trained

model = lr.fit(training_data)

# Print the model summary.

print("Coefficients:" + str(model.coefficients))

print("Intercept:" + str(model.intercept))

print("R^2:" + str(model.summary.r2))

model.summary.residuals.show()

|

Coefficients:[0.00858931617782676,0.0008477851947958541,0.27948866120791893,0.009329081045860402,0.18817058385589935]

Intercept:-5.893364345930709

R^2:0.3970187134779115

+--------------------+

| residuals|

+--------------------+

| -1.845934264937739|

| -2.2396120149639067|

| -0.7717836944756593|

| -0.6160804608336026|

| -0.6986641251138215|

| -0.672589930891391|

| -0.8699157049741881|

|-0.13870265354963962|

|-0.26366319351660383|

| -0.5260646593713352|

| 0.3191520988648042|

| 0.08956511232072462|

| 0.28423773834709554|

| 0.5367216316177004|

|-0.34304851596998454|

| 0.613435294490146|

| 1.3680838827256254|

| -1.887922569557201|

| -1.4788456210255978|

| -1.5035698497034602|

+--------------------+

only showing top 20 rows

|

MIT

|

notebooks/spark/other_notebooks/AerospikeSparkMLLinearRegression.ipynb

|

artanderson/interactive-notebooks

|

Evaluate Model
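The three figures reported in this step (MAE, RMSE and R-squared) follow standard textbook definitions, which can be reproduced without Spark. The sketch below uses those definitions on toy numbers: `regression_metrics` is an illustrative helper, not part of Spark ML, and the data are made up for the example rather than taken from the Natality set.

```python
import math


def regression_metrics(labels, predictions):
    """Return (MAE, RMSE, R^2) for paired labels and predictions."""
    n = len(labels)
    errors = [y - p for y, p in zip(labels, predictions)]
    mae = sum(abs(e) for e in errors) / n
    ss_res = sum(e * e for e in errors)                   # residual sum of squares
    rmse = math.sqrt(ss_res / n)
    mean_label = sum(labels) / n
    ss_tot = sum((y - mean_label) ** 2 for y in labels)   # total sum of squares
    r2 = 1.0 - ss_res / ss_tot
    return mae, rmse, r2


# Toy data: two perfect predictions and one that is off by 1
mae, rmse, r2 = regression_metrics([1.0, 2.0, 3.0], [1.0, 2.0, 4.0])
print('MAE:', mae)
print('RMSE:', rmse)
print('R-squared value:', r2)
```

RMSE penalizes large errors more heavily than MAE, so a gap between the two hints at a few badly predicted records.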

|

eval_data = test.rdd.map(vector_from_inputs).toDF(["label",

"features"])

eval_data.show()

evaluation_summary = model.evaluate(eval_data)

print("MAE:", evaluation_summary.meanAbsoluteError)

print("RMSE:", evaluation_summary.rootMeanSquaredError)

print("R-squared value:", evaluation_summary.r2)

|

+------------------+--------------------+

| label| features|

+------------------+--------------------+

| 3.62439958728|[42.0,37.0,35.0,5...|

| 5.3351867404|[43.0,48.0,38.0,6...|

| 6.8122838958|[42.0,36.0,39.0,2...|

| 6.9776305923|[46.0,42.0,39.0,2...|

| 7.06361087448|[14.0,18.0,38.0,4...|

| 7.3634395508|[14.0,18.0,37.0,2...|

| 7.4075320032|[45.0,45.0,38.0,1...|

| 7.68751907594|[42.0,49.0,38.0,2...|

| 3.09088091324|[43.0,46.0,32.0,4...|

| 5.62619692624|[44.0,50.0,39.0,2...|

|6.4992274837599995|[42.0,47.0,39.0,2...|

|6.5918216337999995|[42.0,38.0,35.0,6...|

| 6.686620406459999|[14.0,17.0,38.0,3...|

| 6.6910296517|[42.0,42.0,40.0,3...|

| 6.8122838958|[14.0,15.0,35.0,1...|

| 7.1870697412|[14.0,15.0,36.0,4...|

| 7.4075320032|[43.0,45.0,40.0,1...|

| 7.4736706818|[43.0,53.0,37.0,4...|

| 7.62578964258|[43.0,46.0,38.0,3...|

| 7.62578964258|[42.0,37.0,39.0,3...|

+------------------+--------------------+

only showing top 20 rows

MAE: 0.9094828902906563

RMSE: 1.1665322992147173

R-squared value: 0.378390902740944

|

MIT

|

notebooks/spark/other_notebooks/AerospikeSparkMLLinearRegression.ipynb

|

artanderson/interactive-notebooks

|

Step 5 - Batch Prediction

|

#eval_data contains the records (ideally production) that you'd like to use for the prediction

predictions = model.transform(eval_data)

predictions.show()

|

+------------------+--------------------+-----------------+

| label| features| prediction|

+------------------+--------------------+-----------------+

| 3.62439958728|[42.0,37.0,35.0,5...|6.440847435018738|

| 5.3351867404|[43.0,48.0,38.0,6...| 6.88674880594522|

| 6.8122838958|[42.0,36.0,39.0,2...|7.315398187463249|

| 6.9776305923|[46.0,42.0,39.0,2...|7.382829406480911|

| 7.06361087448|[14.0,18.0,38.0,4...|7.013375565916365|

| 7.3634395508|[14.0,18.0,37.0,2...|6.509988959607797|

| 7.4075320032|[45.0,45.0,38.0,1...|7.013333055266812|

| 7.68751907594|[42.0,49.0,38.0,2...|7.244430398689434|

| 3.09088091324|[43.0,46.0,32.0,4...|5.543968185959089|

| 5.62619692624|[44.0,50.0,39.0,2...|7.344445812546044|

|6.4992274837599995|[42.0,47.0,39.0,2...|7.287407500422561|

|6.5918216337999995|[42.0,38.0,35.0,6...| 6.56297327380972|

| 6.686620406459999|[14.0,17.0,38.0,3...|7.079420310981281|

| 6.6910296517|[42.0,42.0,40.0,3...|7.721251613436126|

| 6.8122838958|[14.0,15.0,35.0,1...|5.836519309057246|

| 7.1870697412|[14.0,15.0,36.0,4...|6.179722574647495|

| 7.4075320032|[43.0,45.0,40.0,1...|7.564460826372854|

| 7.4736706818|[43.0,53.0,37.0,4...|6.938016907316393|

| 7.62578964258|[43.0,46.0,38.0,3...| 6.96742600202968|

| 7.62578964258|[42.0,37.0,39.0,3...|7.456182188345951|

+------------------+--------------------+-----------------+

only showing top 20 rows

|

MIT

|

notebooks/spark/other_notebooks/AerospikeSparkMLLinearRegression.ipynb

|

artanderson/interactive-notebooks

|

Compare the labels and the predictions; for an accurate model they should ideally match. The label is the actual weight of the baby and the prediction is the predicted weight.

Saving the Predictions to Aerospike for ML Application's consumption

|

# Aerospike is a key/value database, so a key is needed to store the predictions in the database.
# We therefore add an _id column to the predictions using SparkSQL

predictions.createOrReplaceTempView("predict_view")

sql_query = """

SELECT *, monotonically_increasing_id() as _id

from predict_view

"""

predict_df = spark.sql(sql_query)

predict_df.show()

print("#records:", predict_df.count())

# Now we are good to write the Predictions to Aerospike

predict_df \

.write \

.mode('overwrite') \

.format("aerospike") \

.option("aerospike.writeset", "predictions")\

.option("aerospike.updateByKey", "_id") \

.save()

|

_____no_output_____

|

MIT

|

notebooks/spark/other_notebooks/AerospikeSparkMLLinearRegression.ipynb

|

artanderson/interactive-notebooks

|

Concurrency with asyncio Thread vs. coroutine
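A key difference between the two approaches is how a background activity gets stopped: a thread has to poll a shared flag, whereas a coroutine wrapped in a task can be cancelled at its next suspension point. The sketch below illustrates the coroutine side with `async def`/`await` syntax. The names `ticker` and `supervisor` and the short delays are illustrative choices to keep the example fast, not taken from the scripts in this section.

```python
import asyncio


async def ticker(log):
    """Record a tick every 10 ms until the task is cancelled."""
    try:
        while True:
            log.append('tick')
            await asyncio.sleep(0.01)   # suspension point: cancellation lands here
    except asyncio.CancelledError:
        log.append('cancelled')
        raise                            # let the task finish as cancelled


async def slow_function():
    """Stand-in for slow I/O; sleeping hands control back to the event loop."""
    await asyncio.sleep(0.05)
    return 42


async def supervisor():
    log = []
    task = asyncio.create_task(ticker(log))   # schedule ticker concurrently
    result = await slow_function()            # ticker runs while this waits
    task.cancel()                             # request cancellation
    try:
        await task                            # wait until ticker has stopped
    except asyncio.CancelledError:
        pass
    return result, log


result, log = asyncio.run(supervisor())
print('Answer:', result)
print('ticks recorded:', log.count('tick'))
```

No lock or shared flag is needed because only one coroutine runs at a time and control changes hands only at `await`.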

|

# spinner_thread.py
import threading
import itertools
import time
import sys

class Signal:
    go = True

def spin(msg, signal):
    write, flush = sys.stdout.write, sys.stdout.flush
    for char in itertools.cycle('|/-\\'):
        status = char + ' ' + msg
        write(status)
        flush()
        write('\x08' * len(status))
        time.sleep(.1)
        if not signal.go:
            break
    write(' ' * len(status) + '\x08' * len(status))

def slow_function():
    time.sleep(3)
    return 42

def supervisor():
    signal = Signal()
    spinner = threading.Thread(target=spin, args=('thinking!', signal))
    print('spinner object:', spinner)
    spinner.start()
    result = slow_function()
    signal.go = False
    spinner.join()
    return result

def main():
    result = supervisor()
    print('Answer:', result)

if __name__ == '__main__':
    main()

# spinner_asyncio.py

import asyncio

import itertools

import sys

@asyncio.coroutine

def spin(msg):

write, flush = sys.stdout.write, sys.stdout.flush

for char in itertools.cycle('|/-\\'):

status = char + ' ' + msg

write(status)

flush()

write('\x08' * len(status))

try:

yield from asyncio.sleep(.1)

except asyncio.CancelledError:

break

write(' ' * len(status) + '\x08' * len(status))

@asyncio.coroutine

def slow_function():

yield from asyncio.sleep(3)

return 42

@asyncio.coroutine

def supervisor():

#Schedule the execution of a coroutine object:

#wrap it in a future. Return a Task object.

spinner = asyncio.ensure_future(spin('thinking!'))

print('spinner object:', spinner)

result = yield from slow_function()

spinner.cancel()

return result

def main():

loop = asyncio.get_event_loop()

result = loop.run_until_complete(supervisor())

loop.close()

print('Answer:', result)

if __name__ == '__main__':

main()

# flags_asyncio.py

import asyncio

import aiohttp

from flags import BASE_URL, save_flag, show, main

@asyncio.coroutine

def get_flag(cc):

url = '{}/{cc}/{cc}.gif'.format(BASE_URL, cc=cc.lower())

resp = yield from aiohttp.request('GET', url)

image = yield from resp.read()

return image

@asyncio.coroutine

def download_one(cc):

image = yield from get_flag(cc)

show(cc)

save_flag(image, cc.lower() + '.gif')

return cc

def download_many(cc_list):

loop = asyncio.get_event_loop()

to_do = [download_one(cc) for cc in sorted(cc_list)]

wait_coro = asyncio.wait(to_do)

res, _ = loop.run_until_complete(wait_coro)

loop.close()

return len(res)

if __name__ == '__main__':

main(download_many)

# flags2_asyncio.py

import asyncio

import collections

import aiohttp

from aiohttp import web

import tqdm

from flags2_common import HTTPStatus, save_flag, Result, main

DEFAULT_CONCUR_REQ = 5

MAX_CONCUR_REQ = 1000

class FetchError(Exception):

def __init__(self, country_code):

self.country_code = country_code

@asyncio.coroutine

def get_flag(base_url, cc):

url = '{}/{cc}/{cc}.gif'.format(base_url, cc=cc.lower())

resp = yield from aiohttp.ClientSession().get(url)

if resp.status == 200:

image = yield from resp.read()

return image

elif resp.status == 404:

raise web.HTTPNotFound()

else:

raise aiohttp.HttpProcessingError(

code=resp.status, message=resp.reason, headers=resp.headers)

@asyncio.coroutine

def download_one(cc, base_url, semaphore, verbose):

try:

with (yield from semaphore):

image = yield from get_flag(base_url, cc)

except web.HTTPNotFound:

status = HTTPStatus.not_found

msg = 'not found'

except Exception as exc:

raise FetchError(cc) from exc

else:

save_flag(image, cc.lower() + '.gif')

status = HTTPStatus.ok

msg = 'OK'

if verbose and msg:

print(cc, msg)

return Result(status, cc)

@asyncio.coroutine

def downloader_coro(cc_list, base_url, verbose, concur_req):

counter = collections.Counter()

semaphore = asyncio.Semaphore(concur_req)

to_do = [download_one(cc, base_url, semaphore, verbose)

for cc in sorted(cc_list)]

to_do_iter = asyncio.as_completed(to_do)

if not verbose:

to_do_iter = tqdm.tqdm(to_do_iter, total=len(cc_list))

for future in to_do_iter:

try:

res = yield from future

except FetchError as exc:

country_code = exc.country_code

try:

error_msg = exc.__cause__.args[0]

except IndexError:

error_msg = exc.__cause__.__class__.__name__

if verbose and error_msg:

msg = '*** Error for {}: {}'

print(msg.format(country_code, error_msg))

status = HTTPStatus.error

else:

status = res.status

counter[status] += 1

return counter

def download_many(cc_list, base_url, verbose, concur_req):

loop = asyncio.get_event_loop()

coro = downloader_coro(cc_list, base_url, verbose, concur_req)

counts = loop.run_until_complete(coro)

loop.close()

return counts

if __name__ == '__main__':

main(download_many, DEFAULT_CONCUR_REQ, MAX_CONCUR_REQ)

# run_in_executor

@asyncio.coroutine

def download_one(cc, base_url, semaphore, verbose):

try:

with (yield from semaphore):

image = yield from get_flag(base_url, cc)

except web.HTTPNotFound:

status = HTTPStatus.not_found

msg = 'not found'

except Exception as exc:

raise FetchError(cc) from exc

else:

# save_flag is also a blocking operation, so call it through run_in_executor

# to run it asynchronously

loop = asyncio.get_event_loop()

loop.run_in_executor(None, save_flag, image, cc.lower() + '.gif')

status = HTTPStatus.ok

msg = 'OK'

if verbose and msg:

print(cc, msg)

return Result(status, cc)

## Doing multiple requests for each download

# flags3_asyncio.py

@asyncio.coroutine

def http_get(url):

res = yield from aiohttp.request('GET', url)

if res.status == 200:

ctype = res.headers.get('Content-type', '').lower()

if 'json' in ctype or url.endswith('json'):

data = yield from res.json()

else:

data = yield from res.read()

return data

elif res.status == 404:

raise web.HTTPNotFound()

else:

raise aiohttp.errors.HttpProcessingError(

code=res.status, message=res.reason,

headers=res.headers)

@asyncio.coroutine

def get_country(base_url, cc):

url = '{}/{cc}/metadata.json'.format(base_url, cc=cc.lower())

metadata = yield from http_get(url)

return metadata['country']

@asyncio.coroutine

def get_flag(base_url, cc):

url = '{}/{cc}/{cc}.gif'.format(base_url, cc=cc.lower())

return (yield from http_get(url))

@asyncio.coroutine

def download_one(cc, base_url, semaphore, verbose):

try:

with (yield from semaphore):

image = yield from get_flag(base_url, cc)

with (yield from semaphore):

country = yield from get_country(base_url, cc)

except web.HTTPNotFound:

status = HTTPStatus.not_found

msg = 'not found'

except Exception as exc:

raise FetchError(cc) from exc

else:

country = country.replace(' ', '_')

filename = '{}-{}.gif'.format(country, cc)

loop = asyncio.get_event_loop()

loop.run_in_executor(None, save_flag, image, filename)

status = HTTPStatus.ok

msg = 'OK'

if verbose and msg:

print(cc, msg)

return Result(status, cc)

|

_____no_output_____

|

Apache-2.0

|

notebook/fluent_ch18.ipynb

|

Lin0818/py-study-notebook
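The examples above use generator-based coroutines (`@asyncio.coroutine` with `yield from`), a style that was deprecated in Python 3.8 and removed in Python 3.11. As a minimal sketch, the spinner supervisor could be written with `async`/`await` as follows. The `frames` list and the shortened delay are assumptions added so the example finishes quickly and its behavior can be inspected; they are not part of the original script.

```python
import asyncio
import itertools


async def spin(msg, frames):
    # Record each spinner frame instead of writing to stdout (assumption).
    for char in itertools.cycle('|/-\\'):
        frames.append(char + ' ' + msg)
        try:
            await asyncio.sleep(0.05)
        except asyncio.CancelledError:
            break  # cancellation is the signal to stop spinning


async def slow_function(delay=0.3):
    # Stand-in for slow I/O; awaiting sleep lets the spinner run meanwhile.
    await asyncio.sleep(delay)
    return 42


async def supervisor():
    frames = []
    spinner = asyncio.create_task(spin('thinking!', frames))
    result = await slow_function()
    spinner.cancel()
    # Wait for the spinner task to finish handling the cancellation.
    await asyncio.gather(spinner, return_exceptions=True)
    return result, frames


result, frames = asyncio.run(supervisor())
print('Answer:', result)
```

`asyncio.run()` creates and closes the event loop itself, so the explicit `get_event_loop()` / `run_until_complete()` / `close()` sequence is not needed when running this as a script.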

|

Writing asyncio servers

|

# tcp_charfinder.py

import sys

import asyncio

from charfinder import UnicodeNameIndex

CRLF = b'\r\n'

PROMPT = b'?>'

index = UnicodeNameIndex()

@asyncio.coroutine

def handle_queries(reader, writer):

while True:

writer.write(PROMPT)

yield from writer.drain()

data = yield from reader.readline()

try:

query = data.decode().strip()

except UnicodeDecodeError:

query = '\x00'

client = writer.get_extra_info('peername')

print('Received from {}: {!r}'.format(client, query))

if query:

if ord(query[:1]) < 32:

break

lines = list(index.find_description_strs(query))

if lines:

writer.writelines(line.encode() + CRLF for line in lines)

writer.write(index.status(query, len(lines)).encode() + CRLF)

yield from writer.drain()

print('Sent {} results'.format(len(lines)))

print('Close the client socket')

writer.close()

def main(address='127.0.0.1', port=2323):

port = int(port)

loop = asyncio.get_event_loop()

server_coro = asyncio.start_server(handle_queries, address, port, loop=loop)

server = loop.run_until_complete(server_coro)

host = server.sockets[0].getsockname()

print('Serving on {}. Hit CTRL-C to stop.'.format(host))

try:

loop.run_forever()

except KeyboardInterrupt:

pass

print('Server shutting down.')

server.close()

loop.run_until_complete(server.wait_closed())

loop.close()

if __name__ == '__main__':

main()

# http_charfinder.py

@asyncio.coroutine

def init(loop, address, port):

app = web.Application(loop=loop)

app.router.add_route('GET', '/', home)

handler = app.make_handler()

server = yield from loop.create_server(handler, address, port)

return server.sockets[0].getsockname()

def home(request):

query = request.GET.get('query', '').strip()

print('Query: {!r}'.format(query))

if query:

descriptions = list(index.find_descriptions(query))

res = '\n'.join(ROW_TPL.format(**vars(descr))

for descr in descriptions)

msg = index.status(query, len(descriptions))

else:

descriptions = []

res = ''

msg = 'Enter words describing characters.'

html = template.format(query=query, result=res, message=msg)

print('Sending {} results'.format(len(descriptions)))

return web.Response(content_type=CONTENT_TYPE, text=html)

def main(address='127.0.0.1', port=8888):

port = int(port)

loop = asyncio.get_event_loop()

host = loop.run_until_complete(init(loop, address, port))

print('Serving on {}. Hit CTRL-C to stop.'.format(host))

try:

loop.run_forever()

except KeyboardInterrupt: # CTRL+C pressed

pass

print('Server shutting down.')

loop.close()

if __name__ == '__main__':

main(*sys.argv[1:])

|

_____no_output_____

|

Apache-2.0

|

notebook/fluent_ch18.ipynb

|

Lin0818/py-study-notebook
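The TCP server above passes `loop=loop` to `asyncio.start_server`, a parameter that was removed in Python 3.10. Below is a minimal `async`/`await` sketch of the same request/response loop, together with an in-process client. `TOY_INDEX` is a hypothetical stand-in for `UnicodeNameIndex`, and the `.` end-of-results marker is an assumption added so the client knows when to stop reading; neither comes from the original script.

```python
import asyncio

CRLF = b'\r\n'
END = b'.'  # hypothetical end-of-results marker

# Hypothetical stand-in for the UnicodeNameIndex lookup.
TOY_INDEX = {
    'sun': ['U+2600 BLACK SUN WITH RAYS', 'U+2609 SUN'],
}


async def handle_queries(reader, writer):
    while True:
        data = await reader.readline()
        query = data.decode(errors='replace').strip().lower()
        if not query:  # an empty line or EOF ends the session
            break
        for line in TOY_INDEX.get(query, []):
            writer.write(line.encode() + CRLF)
        writer.write(END + CRLF)
        await writer.drain()
    writer.close()


async def demo():
    # Port 0 asks the operating system for any free port.
    server = await asyncio.start_server(handle_queries, '127.0.0.1', 0)
    host, port = server.sockets[0].getsockname()[:2]
    reader, writer = await asyncio.open_connection(host, port)
    writer.write(b'sun' + CRLF)
    await writer.drain()
    results = []
    while True:
        raw = await reader.readline()
        if not raw or raw.rstrip(CRLF) == END:
            break
        results.append(raw.rstrip(CRLF).decode())
    writer.close()
    await writer.wait_closed()
    server.close()
    await server.wait_closed()
    return results


results = asyncio.run(demo())
print(results)
```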

|

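Raw data preprocessing

This program writes the raw txt-format data into a csv file with utf-8 encoding so that it can be used in later steps. Before running it, make sure the folder holding the raw data contains no unrelated files, and rename each category folder to a number from 1 to 9. One workable mapping is:

1 economy (财经)
2 realestate (房产)
3 health (健康)
4 education (教育)
5 military (军事)
6 technology (科技)
7 sports (体育)
8 entertainment (娱乐)
9 stock (证券)

First, import some libraries.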

|

import os  # file operations

import pandas as pd  # reading and writing data

|

_____no_output_____

|

MIT

|

filePreprocessing.ipynb

|

zinccat/WeiboTextClassification

|

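The data-processing function. It uses each folder name as the category of the data and writes the data to a csv file as text (text) and category (category). Parameters: corpus_path: root directory of the raw corpus; out_path: output directory for the processed file.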

|

def processing(corpus_path, out_path):
    if not os.path.exists(out_path):  # create the output directory if it does not exist
        os.makedirs(out_path)
    # List the category folders under the raw-data root. Sorting ensures folder '1'
    # is handled first, so the header is written before anything is appended.
    clist = sorted(os.listdir(corpus_path))
    for classid in clist:  # process each folder separately
        data = {'text': [], 'category': []}
        class_path = corpus_path + classid + "/"
        filelist = os.listdir(class_path)
        for fileN in filelist:  # process a single file
            file_path = class_path + fileN
            with open(file_path, encoding='utf-8', errors='ignore') as f:
                content = f.read()
            data['text'].append(content)  # add the text to the dictionary
            data['category'].append(classid)  # add the category to the dictionary
        pf = pd.DataFrame(data, columns=["text", "category"])
        if classid == '1':  # the first category creates a new file and writes the header
            pf.to_csv(out_path+'dataUTF8.csv', mode='w',
                      header=True, encoding='utf-8', index=False)
        else:  # the remaining categories are appended to the existing file
            pf.to_csv(out_path+'dataUTF8.csv', mode='a',
                      header=False, encoding='utf-8', index=False)

|

_____no_output_____

|

MIT

|

filePreprocessing.ipynb

|

zinccat/WeiboTextClassification
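The function above writes the csv header only when it handles folder `1`, so the result depends on that folder existing and being processed first. A minimal sketch of an order-independent variant is shown below, using only the standard library `csv` module instead of pandas. The helper names `collect_rows` and `write_csv` and the temporary demo corpus are assumptions for illustration, not part of the original notebook.

```python
import csv
import os
import tempfile


def collect_rows(corpus_path):
    """Return (text, category) pairs, one per file, using folder names as categories."""
    rows = []
    for class_id in sorted(os.listdir(corpus_path)):
        class_path = os.path.join(corpus_path, class_id)
        if not os.path.isdir(class_path):
            continue  # skip stray files in the corpus root
        for name in sorted(os.listdir(class_path)):
            file_path = os.path.join(class_path, name)
            with open(file_path, encoding='utf-8', errors='ignore') as f:
                rows.append((f.read(), class_id))
    return rows


def write_csv(rows, out_file):
    """Write the header once, then all rows, in a single pass."""
    with open(out_file, 'w', encoding='utf-8', newline='') as f:
        writer = csv.writer(f)
        writer.writerow(['text', 'category'])
        writer.writerows(rows)


# Demo on a tiny throwaway corpus (made-up texts).
with tempfile.TemporaryDirectory() as root:
    for class_id, text in [('2', 'house prices'), ('1', 'stock market')]:
        os.makedirs(os.path.join(root, 'data', class_id))
        with open(os.path.join(root, 'data', class_id, 'a.txt'), 'w', encoding='utf-8') as f:
            f.write(text)
    rows = collect_rows(os.path.join(root, 'data'))
    out_file = os.path.join(root, 'dataUTF8.csv')
    write_csv(rows, out_file)
    with open(out_file, encoding='utf-8', newline='') as f:
        written = list(csv.reader(f))

print(written)
```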

|

Process the files

|

processing("./data/", "./dataset/")

|

_____no_output_____

|

MIT

|

filePreprocessing.ipynb

|

zinccat/WeiboTextClassification

|

Logistic Regression

Table of Contents

In this lab, we will cover logistic regression using PyTorch.

Logistic Function
Build a Logistic Regression Using nn.Sequential
Build Custom Modules

Estimated Time Needed: 15 min

Preparation

We'll need the following libraries:

|

# Import the libraries we need for this lab

import torch.nn as nn

import torch

import matplotlib.pyplot as plt

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Set the random seed:

|

# Set the random seed

torch.manual_seed(2)

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Logistic Function

Create a tensor ranging from -100 to 100:

|

z = torch.arange(-100, 100, 0.1).view(-1, 1)

print("The tensor: ", z)

|

The tensor: tensor([[-100.0000],

[ -99.9000],

[ -99.8000],

...,

[ 99.7000],

[ 99.8000],

[ 99.9000]])

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Create a sigmoid object:

|

# Create sigmoid object

sig = nn.Sigmoid()

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Apply the element-wise function Sigmoid with the object:

|

# Use the sigmoid object to calculate yhat

yhat = sig(z)

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks
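For reference, the element-wise function applied by `nn.Sigmoid` is the logistic function, sigmoid(z) = 1 / (1 + exp(-z)). The following pure-Python sketch evaluates it in a numerically stable way and shows how it saturates toward 0 and 1 at the ends of the range used above. The function name `sigmoid` and the choice of sample points are illustrative assumptions; this is not how PyTorch implements it internally.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic function 1 / (1 + exp(-z)), written to avoid overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)  # z < 0 here, so exp(z) cannot overflow
    return ez / (1.0 + ez)


for z in (-100.0, -1.0, 0.0, 1.0, 100.0):
    print(z, sigmoid(z))
```

The symmetry sigmoid(-z) = 1 - sigmoid(z) is why the plotted curve passes through 0.5 at z = 0.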

|

Plot the results:

|

plt.plot(z.numpy(), yhat.numpy())

plt.xlabel('z')

plt.ylabel('yhat')

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Apply the element-wise sigmoid with the function `torch.sigmoid` and plot the results:

|

yhat = torch.sigmoid(z)

plt.plot(z.numpy(), yhat.numpy())

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Build a Logistic Regression with nn.Sequential

Create a 1x1 tensor x that represents one data sample with one dimension, and a 2x1 tensor X that represents two data samples with one dimension:

|

# Create x and X tensor

x = torch.tensor([[1.0]])

X = torch.tensor([[1.0], [100]])

print('x = ', x)

print('X = ', X)

|

x = tensor([[1.]])

X = tensor([[ 1.],

[100.]])

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Create a logistic regression object with the nn.Sequential model with a one-dimensional input:

|

# Use sequential function to create model

model = nn.Sequential(nn.Linear(1, 1), nn.Sigmoid())

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

The object is represented in the following diagram: In this case, the parameters are randomly initialized. You can view them the following ways:

|

# Print the parameters

print("list(model.parameters()):\n ", list(model.parameters()))

print("\nmodel.state_dict():\n ", model.state_dict())

|

list(model.parameters()):

[Parameter containing:

tensor([[0.2294]], requires_grad=True), Parameter containing:

tensor([-0.2380], requires_grad=True)]

model.state_dict():

OrderedDict([('0.weight', tensor([[0.2294]])), ('0.bias', tensor([-0.2380]))])

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Make a prediction with one sample:

|

# The prediction for x

yhat = model(x)

print("The prediction: ", yhat)

|

The prediction: tensor([[0.4979]], grad_fn=<SigmoidBackward>)

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks
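The printed prediction can be checked by hand: the model computes sigmoid(w * x + b). The sketch below plugs in the rounded parameter values shown in the `state_dict()` output above; because those printed values are rounded to four decimals, the result should agree only approximately.

```python
import math

# Rounded values copied from the model.state_dict() output above.
weight, bias = 0.2294, -0.2380
x = 1.0

z = weight * x + bias               # what nn.Linear(1, 1) computes
yhat = 1.0 / (1.0 + math.exp(-z))   # what nn.Sigmoid() computes
print(round(yhat, 4))
```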

|

Calling the object with tensor X performed the following operation (the values in the code may differ from those in the diagram, depending on the PyTorch version):

Make a prediction with multiple samples:

|

# The prediction for X

yhat = model(X)

yhat

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Calling the object performed the following operation:

Create a 1x2 tensor x that represents one data sample with two dimensions, and a 3x2 tensor X that represents three data samples with two dimensions:

|

# Create and print samples

x = torch.tensor([[1.0, 1.0]])

X = torch.tensor([[1.0, 1.0], [1.0, 2.0], [1.0, 3.0]])

print('x = ', x)

print('X = ', X)

|

x = tensor([[1., 1.]])

X = tensor([[1., 1.],

[1., 2.],

[1., 3.]])

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Create a logistic regression object with the nn.Sequential model with a two-dimensional input:

|

# Create new model using nn.sequential()

model = nn.Sequential(nn.Linear(2, 1), nn.Sigmoid())

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

The object will apply the Sigmoid function to the output of the linear function as shown in the following diagram: In this case, the parameters are randomly initialized. You can view them the following ways:

|

# Print the parameters

print("list(model.parameters()):\n ", list(model.parameters()))

print("\nmodel.state_dict():\n ", model.state_dict())

|

list(model.parameters()):

[Parameter containing:

tensor([[ 0.1939, -0.0361]], requires_grad=True), Parameter containing:

tensor([0.3021], requires_grad=True)]

model.state_dict():

OrderedDict([('0.weight', tensor([[ 0.1939, -0.0361]])), ('0.bias', tensor([0.3021]))])

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Make a prediction with one sample:

|

# Make the prediction of x

yhat = model(x)

print("The prediction: ", yhat)

|

The prediction: tensor([[0.6130]], grad_fn=<SigmoidBackward>)

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

The operation is represented in the following diagram: Make a prediction with multiple samples:

|

# The prediction of X

yhat = model(X)

print("The prediction: ", yhat)

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks
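With several samples, the model applies the same weights to every row of X. As a rough sketch, the batch prediction can be reproduced in plain Python from the rounded parameters printed earlier for the two-input model; the helper `predict` is a hypothetical name, and the results are approximate because the printed parameters are rounded.

```python
import math

# Rounded values copied from the model.state_dict() output of the two-input model.
weight = [0.1939, -0.0361]
bias = 0.3021
X = [[1.0, 1.0], [1.0, 2.0], [1.0, 3.0]]


def predict(rows):
    """Apply sigmoid(w . x + b) to each row, one prediction per sample."""
    out = []
    for row in rows:
        z = sum(w * v for w, v in zip(weight, row)) + bias
        out.append(1.0 / (1.0 + math.exp(-z)))
    return out


yhat = predict(X)
print(yhat)
```

The first row is the same sample as `x`, so its value should be close to the single-sample prediction printed above.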

|

The operation is represented in the following diagram:

Build Custom Modules

In this section, you will build a custom Module or class. The model or object function is identical to using nn.Sequential.

Create a logistic regression custom module:

|

# Create logistic_regression custom class

class logistic_regression(nn.Module):

# Constructor

def __init__(self, n_inputs):

super(logistic_regression, self).__init__()

self.linear = nn.Linear(n_inputs, 1)

# Prediction

def forward(self, x):

yhat = torch.sigmoid(self.linear(x))

return yhat

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Create a 1x1 tensor x that represents one data sample with one dimension, and a 3x1 tensor $X$ that represents three data samples with one dimension:

|

# Create x and X tensor

x = torch.tensor([[1.0]])

X = torch.tensor([[-100], [0], [100.0]])

print('x = ', x)

print('X = ', X)

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Create a model to predict one dimension:

|

# Create logistic regression model

model = logistic_regression(1)

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

In this case, the parameters are randomly initialized. You can view them the following ways:

|

# Print parameters

print("list(model.parameters()):\n ", list(model.parameters()))

print("\nmodel.state_dict():\n ", model.state_dict())

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Make a prediction with one sample:

|

# Make the prediction of x

yhat = model(x)

print("The prediction result: \n", yhat)

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Make a prediction with multiple samples:

|

# Make the prediction of X

yhat = model(X)

print("The prediction result: \n", yhat)

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Create a logistic regression object with two inputs:

|

# Create logistic regression model

model = logistic_regression(2)

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Create a 1x2 tensor x that represents one data sample with two dimensions, and a 3x2 tensor X that represents three data samples with two dimensions:

|

# Create x and X tensor

x = torch.tensor([[1.0, 2.0]])

X = torch.tensor([[100, -100], [0.0, 0.0], [-100, 100]])

print('x = ', x)

print('X = ', X)

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Make a prediction with one sample:

|

# Make the prediction of x

yhat = model(x)

print("The prediction result: \n", yhat)

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Make a prediction with multiple samples:

|

# Make the prediction of X

yhat = model(X)

print("The prediction result: \n", yhat)

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Practice

Build your own model my_model that applies a linear layer followed by the sigmoid function, using nn.Sequential(). Print out your prediction.

|

# Practice: Make your model and make the prediction

X = torch.tensor([-10.0])

|

_____no_output_____

|

MIT

|

IBM_AI/4_Pytorch/5.1logistic_regression_prediction_v2.ipynb

|

merula89/cousera_notebooks

|

Classification on Iris dataset with sklearn and DJL

In this notebook, you will use a pre-trained sklearn model to run on DJL for a general classification task. The model was trained with the [Iris flower dataset](https://en.wikipedia.org/wiki/Iris_flower_data_set).

Background

Iris Dataset

The dataset contains a set of 150 records under five attributes - sepal length, sepal width, petal length, petal width and species.

Iris setosa | Iris versicolor | Iris virginica
:-------------------------:|:-------------------------:|:-------------------------:

The chart above shows three different kinds of the Iris flowers. We will use sepal length, sepal width, petal length and petal width as the features and species as the label to train the model.

Sklearn Model

You can find more information [here](http://onnx.ai/sklearn-onnx/). You can use the sklearn built-in iris dataset to load the data. Then we define a [RandomForestClassifier](https://scikit-learn.org/stable/modules/generated/sklearn.ensemble.RandomForestClassifier.html) to train the model. After that, we convert the model to ONNX format for DJL to run inference. The following code is a sample classification setup using sklearn:

```python
# Train a model.
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.ensemble import RandomForestClassifier
iris = load_iris()
X, y = iris.data, iris.target
X_train, X_test, y_train, y_test = train_test_split(X, y)
clr = RandomForestClassifier()
clr.fit(X_train, y_train)
```

Preparation

This tutorial requires the installation of the Java Kernel. To install the Java Kernel, see the [README](https://github.com/awslabs/djl/blob/master/jupyter/README.md).

These are the dependencies we will use. To enhance the NDArray operation capability, we are importing ONNX Runtime and PyTorch Engine at the same time. Please find more information [here](https://github.com/awslabs/djl/blob/master/docs/onnxruntime/hybrid_engine.md#hybrid-engine-for-onnx-runtime).

|

// %mavenRepo snapshots https://oss.sonatype.org/content/repositories/snapshots/

%maven ai.djl:api:0.8.0

%maven ai.djl.onnxruntime:onnxruntime-engine:0.8.0

%maven ai.djl.pytorch:pytorch-engine:0.8.0

%maven org.slf4j:slf4j-api:1.7.26

%maven org.slf4j:slf4j-simple:1.7.26

%maven com.microsoft.onnxruntime:onnxruntime:1.4.0

%maven ai.djl.pytorch:pytorch-native-auto:1.6.0

import ai.djl.inference.*;

import ai.djl.modality.*;

import ai.djl.ndarray.*;

import ai.djl.ndarray.types.*;

import ai.djl.repository.zoo.*;

import ai.djl.translate.*;

import java.util.*;

|

_____no_output_____

|

Apache-2.0

|

jupyter/onnxruntime/machine_learning_with_ONNXRuntime.ipynb

|

raghav-deepsource/djl

|

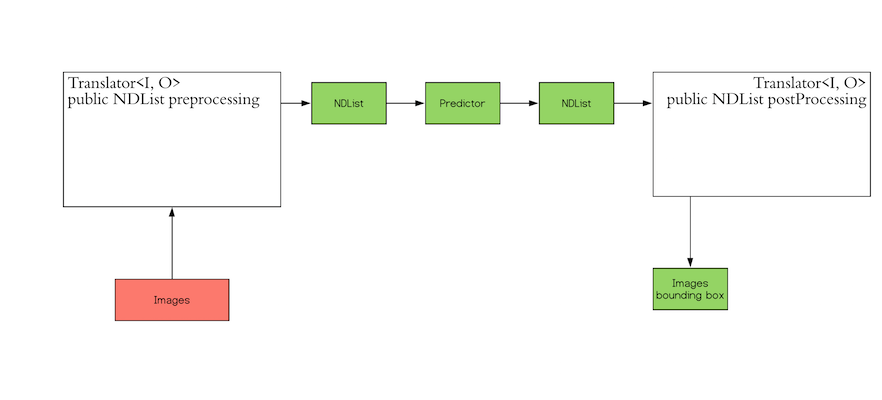

Step 1 create a Translator

Inference in machine learning is the process of predicting the output for a given input based on a pre-defined model. DJL abstracts away the whole process for ease of use. It can load the model, perform inference on the input, and provide output. DJL also allows you to provide user-defined inputs. The workflow looks like the following:

The `Translator` interface encompasses the two white blocks: Pre-processing and Post-processing. The pre-processing component converts the user-defined input objects into an NDList, so that the `Predictor` in DJL can understand the input and make its prediction. Similarly, the post-processing block receives an NDList as the output from the `Predictor`. The post-processing block allows you to convert the output from the `Predictor` to the desired output format.

In our use case, we use a class named `IrisFlower` as our input class type. We will use [`Classifications`](https://javadoc.io/doc/ai.djl/api/latest/ai/djl/modality/Classifications.html) as our output class type.

|

public static class IrisFlower {

public float sepalLength;

public float sepalWidth;

public float petalLength;

public float petalWidth;

public IrisFlower(float sepalLength, float sepalWidth, float petalLength, float petalWidth) {

this.sepalLength = sepalLength;

this.sepalWidth = sepalWidth;

this.petalLength = petalLength;

this.petalWidth = petalWidth;

}

}

|

_____no_output_____

|

Apache-2.0

|

jupyter/onnxruntime/machine_learning_with_ONNXRuntime.ipynb

|

raghav-deepsource/djl

|

Let's create a translator

|

public static class MyTranslator implements Translator<IrisFlower, Classifications> {

private final List<String> synset;

public MyTranslator() {

// species name

synset = Arrays.asList("setosa", "versicolor", "virginica");

}

@Override

public NDList processInput(TranslatorContext ctx, IrisFlower input) {

float[] data = {input.sepalLength, input.sepalWidth, input.petalLength, input.petalWidth};

NDArray array = ctx.getNDManager().create(data, new Shape(1, 4));

return new NDList(array);

}

@Override

public Classifications processOutput(TranslatorContext ctx, NDList list) {

return new Classifications(synset, list.get(1));

}

@Override

public Batchifier getBatchifier() {

return null;

}

}

|

_____no_output_____

|

Apache-2.0

|

jupyter/onnxruntime/machine_learning_with_ONNXRuntime.ipynb

|

raghav-deepsource/djl
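The two halves of a translator can be pictured independently of DJL. The following is a language-neutral sketch in Python, not DJL code: `process_input` packs the four measurements into a 1 x 4 batch, and `process_output` pairs class names with probabilities and ranks them. The function names, the dictionary-based flower record, and the probability values are all made-up assumptions for illustration.

```python
# Class names in the same order the model is assumed to emit probabilities.
SYNSET = ["setosa", "versicolor", "virginica"]


def process_input(flower):
    """Pre-processing: pack the four measurements into a 1 x 4 batch."""
    return [[flower["sepal_length"], flower["sepal_width"],
             flower["petal_length"], flower["petal_width"]]]


def process_output(probabilities):
    """Post-processing: pair class names with probabilities, best first."""
    return sorted(zip(SYNSET, probabilities), key=lambda pair: pair[1], reverse=True)


flower = {"sepal_length": 1.0, "sepal_width": 2.0,
          "petal_length": 3.0, "petal_width": 4.0}
batch = process_input(flower)

fake_probabilities = [0.1, 0.2, 0.7]  # made-up numbers, not real model output
ranked = process_output(fake_probabilities)
print(batch, ranked)
```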

|

Step 2 Prepare your model

We will load a pretrained sklearn model into DJL. DJL defines a [`ModelZoo`](https://javadoc.io/doc/ai.djl/api/latest/ai/djl/repository/zoo/ModelZoo.html) concept that lets users load models from a variety of locations, such as a remote URL, local files or the DJL pretrained model zoo. We need to define a `Criteria` object to help the model zoo locate the model and attach the translator. In this example, we download a compressed ONNX model from S3.

|

String modelUrl = "https://mlrepo.djl.ai/model/tabular/random_forest/ai/djl/onnxruntime/iris_flowers/0.0.1/iris_flowers.zip";

Criteria<IrisFlower, Classifications> criteria = Criteria.builder()

.setTypes(IrisFlower.class, Classifications.class)

.optModelUrls(modelUrl)

.optTranslator(new MyTranslator())

.optEngine("OnnxRuntime") // use OnnxRuntime engine by default

.build();

ZooModel<IrisFlower, Classifications> model = ModelZoo.loadModel(criteria);

|

_____no_output_____

|

Apache-2.0

|

jupyter/onnxruntime/machine_learning_with_ONNXRuntime.ipynb

|

raghav-deepsource/djl

|

Step 3 Run inference

You just need to create a `Predictor` from the model to run inference.

|

Predictor<IrisFlower, Classifications> predictor = model.newPredictor();

IrisFlower info = new IrisFlower(1.0f, 2.0f, 3.0f, 4.0f);

predictor.predict(info);

|

_____no_output_____

|

Apache-2.0

|

jupyter/onnxruntime/machine_learning_with_ONNXRuntime.ipynb

|

raghav-deepsource/djl

|

Install Earth Engine API and geemap

Install the [Earth Engine Python API](https://developers.google.com/earth-engine/python_install) and [geemap](https://geemap.org). The **geemap** Python package is built upon the [ipyleaflet](https://github.com/jupyter-widgets/ipyleaflet) and [folium](https://github.com/python-visualization/folium) packages and implements several methods for interacting with Earth Engine data layers, such as `Map.addLayer()`, `Map.setCenter()`, and `Map.centerObject()`.

The following script checks if the geemap package has been installed. If not, it will install geemap, which automatically installs its [dependencies](https://github.com/giswqs/geemap#dependencies), including earthengine-api, folium, and ipyleaflet.

|

# Installs geemap package

import subprocess

try:

import geemap

except ImportError:

print('Installing geemap ...')

subprocess.check_call(["python", '-m', 'pip', 'install', 'geemap'])

import ee

import geemap

|

_____no_output_____

|

MIT

|

Algorithms/landsat_radiance.ipynb

|

OIEIEIO/earthengine-py-notebooks

|

Create an interactive map

The default basemap is `Google Maps`. [Additional basemaps](https://github.com/giswqs/geemap/blob/master/geemap/basemaps.py) can be added using the `Map.add_basemap()` function.

|

Map = geemap.Map(center=[40,-100], zoom=4)

Map

|

_____no_output_____

|

MIT

|

Algorithms/landsat_radiance.ipynb

|

OIEIEIO/earthengine-py-notebooks

|

Add Earth Engine Python script

|

# Add Earth Engine dataset

# Load a raw Landsat scene and display it.

raw = ee.Image('LANDSAT/LC08/C01/T1/LC08_044034_20140318')

Map.centerObject(raw, 10)

Map.addLayer(raw, {'bands': ['B4', 'B3', 'B2'], 'min': 6000, 'max': 12000}, 'raw')

# Convert the raw data to radiance.

radiance = ee.Algorithms.Landsat.calibratedRadiance(raw)

Map.addLayer(radiance, {'bands': ['B4', 'B3', 'B2'], 'max': 90}, 'radiance')

# Convert the raw data to top-of-atmosphere reflectance.

toa = ee.Algorithms.Landsat.TOA(raw)

Map.addLayer(toa, {'bands': ['B4', 'B3', 'B2'], 'max': 0.2}, 'toa reflectance')

|

_____no_output_____

|

MIT

|

Algorithms/landsat_radiance.ipynb

|

OIEIEIO/earthengine-py-notebooks

|

Display Earth Engine data layers

|

Map.addLayerControl() # This line is not needed for ipyleaflet-based Map.

Map

|

_____no_output_____

|

MIT

|

Algorithms/landsat_radiance.ipynb

|

OIEIEIO/earthengine-py-notebooks

|

Import Libraries

|

from __future__ import print_function

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

import torchvision

from torchvision import datasets, transforms

%matplotlib inline

import matplotlib.pyplot as plt

|

_____no_output_____

|

MIT

|

MNIST/Session2/3_Global_Average_Pooling.ipynb

|

gmshashank/pytorch_vision

|

Data Transformations

We start by defining our data transformations. We need to think about what our data is and how we can augment it to correctly represent images that the model might not otherwise see.

|

# Train Phase transformations

train_transforms = transforms.Compose([

# transforms.Resize((28, 28)),

# transforms.ColorJitter(brightness=0.10, contrast=0.1, saturation=0.10, hue=0.1),

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,)) # The mean and std have to be sequences (e.g., tuples), therefore you should add a comma after the values.

# Note the difference between (0.1307) and (0.1307,)

])

# Test Phase transformations

test_transforms = transforms.Compose([

# transforms.Resize((28, 28)),

# transforms.ColorJitter(brightness=0.10, contrast=0.1, saturation=0.10, hue=0.1),

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,))

])

|

_____no_output_____

|

MIT

|

MNIST/Session2/3_Global_Average_Pooling.ipynb

|

gmshashank/pytorch_vision
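The comment about `(0.1307)` versus `(0.1307,)` comes down to Python syntax: parentheses alone do not make a tuple, the trailing comma does. The short sketch below shows that difference and the per-pixel arithmetic that a mean/std normalization performs, `(pixel - mean) / std`, on values already scaled to [0, 1]. The helper `normalize` is a hypothetical stand-in written for illustration, not the torchvision implementation.

```python
MEAN, STD = (0.1307,), (0.3081,)  # one entry per channel; MNIST has one channel


def normalize(pixel, mean=MEAN[0], std=STD[0]):
    """Shift and scale a single pixel value: (pixel - mean) / std."""
    return (pixel - mean) / std


# Parentheses alone give a float; the trailing comma makes a one-element tuple.
print(type((0.1307)).__name__, type((0.1307,)).__name__)

black, white = normalize(0.0), normalize(1.0)
print(black, white)
```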

|

Dataset and Creating Train/Test Split

|

train = datasets.MNIST('./data', train=True, download=True, transform=train_transforms)

test = datasets.MNIST('./data', train=False, download=True, transform=test_transforms)

|

Downloading http://yann.lecun.com/exdb/mnist/train-images-idx3-ubyte.gz to ./data/MNIST/raw/train-images-idx3-ubyte.gz

|

MIT

|

MNIST/Session2/3_Global_Average_Pooling.ipynb

|

gmshashank/pytorch_vision

|

Dataloader Arguments & Test/Train Dataloaders

|

SEED = 1

# CUDA?

cuda = torch.cuda.is_available()

print("CUDA Available?", cuda)

# For reproducibility

torch.manual_seed(SEED)

if cuda:

torch.cuda.manual_seed(SEED)

# dataloader arguments - something you'd normally fetch from the command prompt

dataloader_args = dict(shuffle=True, batch_size=128, num_workers=4, pin_memory=True) if cuda else dict(shuffle=True, batch_size=64)

# train dataloader

train_loader = torch.utils.data.DataLoader(train, **dataloader_args)

# test dataloader

test_loader = torch.utils.data.DataLoader(test, **dataloader_args)
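As a quick sanity check on the batch sizes chosen above, the sketch below works out how many batches each loader yields per epoch. It assumes the standard MNIST split of 60,000 training and 10,000 test images and the `DataLoader` default of keeping the final partial batch; the helper `batches_per_epoch` is hypothetical.

```python
import math

# Standard MNIST split sizes (assumed here; not read from the dataset object).
TRAIN_SIZE = 60_000
TEST_SIZE = 10_000


def batches_per_epoch(num_samples: int, batch_size: int) -> int:
    """Number of batches when the last, possibly smaller, batch is kept."""
    return math.ceil(num_samples / batch_size)


# 128 is the CUDA batch size used above, 64 the CPU fallback.
for batch_size in (128, 64):
    train_batches = batches_per_epoch(TRAIN_SIZE, batch_size)
    test_batches = batches_per_epoch(TEST_SIZE, batch_size)
    print(f"batch_size={batch_size}: {train_batches} train batches, {test_batches} test batches")
```

With a real loader, `len(train_loader)` should report the same number.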

|

CUDA Available? True

|

MIT

|

MNIST/Session2/3_Global_Average_Pooling.ipynb

|

gmshashank/pytorch_vision

|

Data Statistics

It is important to know your data very well. Let's check some statistics of our data and see what it actually looks like.

|

# The raw data is already a tensor, so convert it to NumPy before applying the transform.
# `train.data` replaces the deprecated `train.train_data` attribute.
train_data = train.data
train_data = train.transform(train_data.numpy())

print('[Train]')
print(' - Numpy Shape:', train.data.cpu().numpy().shape)
print(' - Tensor Shape:', train.data.size())

print(' - min:', torch.min(train_data))

print(' - max:', torch.max(train_data))

print(' - mean:', torch.mean(train_data))

print(' - std:', torch.std(train_data))

print(' - var:', torch.var(train_data))

dataiter = iter(train_loader)

images, labels = next(dataiter)  # iterators no longer expose a .next() method

print(images.shape)

print(labels.shape)

# Let's visualize some of the images

plt.imshow(images[0].numpy().squeeze(), cmap='gray_r')

|

MIT

|

MNIST/Session2/3_Global_Average_Pooling.ipynb

|

gmshashank/pytorch_vision

|

|

MORE

It is important to view as many images as possible. Doing so gives us some idea of which image augmentations to apply later on.

|

figure = plt.figure()

num_of_images = 60

for index in range(1, num_of_images + 1):
    plt.subplot(6, 10, index)
    plt.axis('off')
    plt.imshow(images[index].numpy().squeeze(), cmap='gray_r')

|

_____no_output_____

|

MIT

|

MNIST/Session2/3_Global_Average_Pooling.ipynb

|

gmshashank/pytorch_vision

|

The model

Let's start with the model we first saw.
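The model in this notebook ends with a global average pooling (GAP) step, implemented as `nn.AvgPool2d(kernel_size=6)` over a 6×6 feature map. As a rough illustration of what that layer computes, here is a plain-Python sketch; the helper `global_average_pool` and the toy feature maps are made up for this example.

```python
# Hypothetical helper that mirrors what average pooling with a kernel
# covering the whole map does: one number per channel.
def global_average_pool(feature_maps):
    """Average each channel's 2-D map down to a single value."""
    pooled = []
    for channel in feature_maps:
        values = [v for row in channel for v in row]
        pooled.append(sum(values) / len(values))
    return pooled


# Two toy 6x6 channels: one constant, one with a single active cell.
constant_map = [[2.0] * 6 for _ in range(6)]
sparse_map = [[0.0] * 6 for _ in range(6)]
sparse_map[3][3] = 36.0

pooled = global_average_pool([constant_map, sparse_map])
print(pooled)
```

Each channel collapses to one value regardless of where in the map the activation occurred, which is why the GAP output has spatial size 1×1.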

|

class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        # Input Block
        self.convblock1 = nn.Sequential(
            nn.Conv2d(in_channels=1, out_channels=16, kernel_size=(3, 3), padding=0, bias=False),
            nn.ReLU(),
        )  # output_size = 26

        # CONVOLUTION BLOCK 1
        self.convblock2 = nn.Sequential(
            nn.Conv2d(in_channels=16, out_channels=16, kernel_size=(3, 3), padding=0, bias=False),
            nn.ReLU(),
        )  # output_size = 24

        # TRANSITION BLOCK 1
        self.convblock3 = nn.Sequential(
            nn.Conv2d(in_channels=16, out_channels=16, kernel_size=(1, 1), padding=0, bias=False),
            nn.ReLU(),
        )  # output_size = 24
        self.pool1 = nn.MaxPool2d(2, 2)  # output_size = 12

        # CONVOLUTION BLOCK 2
        self.convblock4 = nn.Sequential(
            nn.Conv2d(in_channels=16, out_channels=16, kernel_size=(3, 3), padding=0, bias=False),
            nn.ReLU(),
        )  # output_size = 10
        self.convblock5 = nn.Sequential(
            nn.Conv2d(in_channels=16, out_channels=16, kernel_size=(3, 3), padding=0, bias=False),
            nn.ReLU(),
        )  # output_size = 8
        self.convblock6 = nn.Sequential(
            nn.Conv2d(in_channels=16, out_channels=10, kernel_size=(3, 3), padding=0, bias=False),
            nn.ReLU(),
        )  # output_size = 6

        # OUTPUT BLOCK
        self.convblock7 = nn.Sequential(
            nn.Conv2d(in_channels=10, out_channels=10, kernel_size=(3, 3), padding=1, bias=False),
            nn.ReLU(),
        )  # output_size = 6
        self.gap = nn.Sequential(
            nn.AvgPool2d(kernel_size=6)
        )
        self.convblock8 = nn.Sequential(
            nn.Conv2d(in_channels=10, out_channels=10, kernel_size=(1, 1), padding=0, bias=False),
            # nn.BatchNorm2d(10), NEVER
            # nn.ReLU() NEVER!
        )  # output_size = 1

    def forward(self, x):
        x = self.convblock1(x)
        x = self.convblock2(x)
        x = self.convblock3(x)
        x = self.pool1(x)
        x = self.convblock4(x)
        x = self.convblock5(x)
        x = self.convblock6(x)
        x = self.convblock7(x)
        x = self.gap(x)
        x = self.convblock8(x)
        x = x.view(-1, 10)
        return F.log_softmax(x, dim=-1)
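The `output_size` comments in the model above can be checked with the usual formula for convolution and pooling layers, `floor((n + 2p - k) / s) + 1`. The sketch below walks a 28×28 input through the same sequence of kernel sizes, paddings and strides; the `layers` list is transcribed by hand from the model and the helper `out_size` is hypothetical.

```python
def out_size(size: int, kernel: int, padding: int = 0, stride: int = 1) -> int:
    """Spatial output size of a conv or pooling layer along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1


# (name, kernel, padding, stride), transcribed from the Net class above.
# Pooling layers use stride == kernel size, as in MaxPool2d(2, 2) and AvgPool2d(6).
layers = [
    ("convblock1", 3, 0, 1),
    ("convblock2", 3, 0, 1),
    ("convblock3", 1, 0, 1),
    ("pool1", 2, 0, 2),
    ("convblock4", 3, 0, 1),
    ("convblock5", 3, 0, 1),
    ("convblock6", 3, 0, 1),
    ("convblock7", 3, 1, 1),
    ("gap", 6, 0, 6),
    ("convblock8", 1, 0, 1),
]

size = 28  # MNIST images are 28x28
sizes = []
for name, kernel, padding, stride in layers:
    size = out_size(size, kernel, padding, stride)
    sizes.append(size)
    print(f"{name:<11} -> {size}x{size}")
```

The resulting sizes match the comments in the model: the map shrinks to 6×6 before the GAP layer reduces it to 1×1.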

|

_____no_output_____

|

MIT

|

MNIST/Session2/3_Global_Average_Pooling.ipynb

|

gmshashank/pytorch_vision

|

Model Params

We can't emphasize enough how important it is to view the model summary. Unfortunately, PyTorch has no built-in model visualizer, so we rely on an external package.

|

!pip install torchsummary

from torchsummary import summary

use_cuda = torch.cuda.is_available()

device = torch.device("cuda" if use_cuda else "cpu")

print(device)

model = Net().to(device)

summary(model, input_size=(1, 28, 28))

|

Requirement already satisfied: torchsummary in /usr/local/lib/python3.6/dist-packages (1.5.1)

cuda

----------------------------------------------------------------
        Layer (type)               Output Shape         Param #
================================================================
            Conv2d-1           [-1, 16, 26, 26]             144
              ReLU-2           [-1, 16, 26, 26]               0
            Conv2d-3           [-1, 16, 24, 24]           2,304
              ReLU-4           [-1, 16, 24, 24]               0
            Conv2d-5           [-1, 16, 24, 24]             256
              ReLU-6           [-1, 16, 24, 24]               0
         MaxPool2d-7           [-1, 16, 12, 12]               0
            Conv2d-8           [-1, 16, 10, 10]           2,304
              ReLU-9           [-1, 16, 10, 10]               0
           Conv2d-10             [-1, 16, 8, 8]           2,304
             ReLU-11             [-1, 16, 8, 8]               0
           Conv2d-12             [-1, 10, 6, 6]           1,440
             ReLU-13             [-1, 10, 6, 6]               0
           Conv2d-14             [-1, 10, 6, 6]             900
             ReLU-15             [-1, 10, 6, 6]               0
        AvgPool2d-16             [-1, 10, 1, 1]               0
           Conv2d-17             [-1, 10, 1, 1]             100
================================================================
Total params: 9,752
Trainable params: 9,752
Non-trainable params: 0
----------------------------------------------------------------
Input size (MB): 0.00
Forward/backward pass size (MB): 0.52
Params size (MB): 0.04
Estimated Total Size (MB): 0.56
----------------------------------------------------------------
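The parameter counts in the summary above can be reproduced by hand: a convolution without bias has `in_channels * out_channels * kernel_h * kernel_w` weights, while ReLU and pooling layers have none. The sketch below redoes that arithmetic; the `convs` list is transcribed from the model definition and `conv_params` is a hypothetical helper.

```python
def conv_params(in_channels: int, out_channels: int, kernel: int, bias: bool = False) -> int:
    """Weight count of a square-kernel 2-D convolution, plus optional bias terms."""
    weights = in_channels * out_channels * kernel * kernel
    return weights + (out_channels if bias else 0)


# (in_channels, out_channels, kernel size) for convblock1..convblock8, all with bias=False.
convs = [
    (1, 16, 3),
    (16, 16, 3),
    (16, 16, 1),
    (16, 16, 3),
    (16, 16, 3),
    (16, 10, 3),
    (10, 10, 3),
    (10, 10, 1),
]

counts = [conv_params(i, o, k) for i, o, k in convs]
total = sum(counts)
print(counts)
print("Total params:", total)
```

The per-layer counts and the total agree with the `Param #` column and the `Total params` line reported by `torchsummary`.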

|

MIT

|

MNIST/Session2/3_Global_Average_Pooling.ipynb

|

gmshashank/pytorch_vision

|

Training and Testing

Looking at raw logs can be boring, so we'll use the **tqdm** progress bar to get more readable logs. Let's write the train and test functions.

|

from tqdm import tqdm

train_losses = []

test_losses = []

train_acc = []

test_acc = []

def train(model, device, train_loader, optimizer, epoch):

global train_max