---
size_categories:
- 10K<n<100K
paperswithcode_id: spair-71k
tags:
- semantic-correspondence
dataset_info:
- config_name: data
  features:
  - name: img
    dtype: image
  - name: name
    dtype: string
  - name: segmentation
    dtype: image
  - name: filename
    dtype: string
  - name: src_database
    dtype: string
  - name: src_annotation
    dtype: string
  - name: src_image
    dtype: string
  - name: image_width
    dtype: uint32
  - name: image_height
    dtype: uint32
  - name: image_depth
    dtype: uint32
  - name: category
    dtype: string
  - name: category_id
    dtype:
      class_label:
        names:
          '0': cat
          '1': pottedplant
          '2': train
          '3': bicycle
          '4': car
          '5': bus
          '6': aeroplane
          '7': dog
          '8': bird
          '9': chair
          '10': motorbike
          '11': cow
          '12': bottle
          '13': person
          '14': boat
          '15': sheep
          '16': horse
          '17': tvmonitor
  - name: pose
    dtype: string
  - name: truncated
    dtype: uint8
  - name: occluded
    dtype: uint8
  - name: difficult
    dtype: uint8
  - name: bndbox
    sequence:
      dtype: uint32
    length: 4
  - name: kps
    dtype:
      array2_d:
        dtype: int32
        shape:
        - None
        - 2
  - name: azimuth_id
    dtype: uint8
  splits:
  - name: train
    num_bytes: 2016911
    num_examples: 1800
  download_size: 226961117
  dataset_size: 2016911
- config_name: pairs
  features:
  - name: pair_id
    dtype: uint32
  - name: src_img
    dtype: image
  - name: src_segmentation
    dtype: image
  - name: src_data_index
    dtype: uint32
  - name: src_name
    dtype: string
  - name: src_imsize
    sequence:
      dtype: uint32
    length: 3
  - name: src_bndbox
    sequence:
      dtype: uint32
    length: 4
  - name: src_pose
    dtype:
      class_label:
        names:
          '0': Unspecified
          '1': Frontal
          '2': Left
          '3': Rear
          '4': Right
  - name: src_kps
    dtype:
      array2_d:
        dtype: uint32
        shape:
        - None
        - 2
  - name: trg_img
    dtype: image
  - name: trg_segmentation
    dtype: image
  - name: trg_data_index
    dtype: uint32
  - name: trg_name
    dtype: string
  - name: trg_imsize
    sequence:
      dtype: uint32
    length: 3
  - name: trg_bndbox
    sequence:
      dtype: uint32
    length: 4
  - name: trg_pose
    dtype:
      class_label:
        names:
          '0': Unspecified
          '1': Frontal
          '2': Left
          '3': Rear
          '4': Right
  - name: trg_kps
    dtype:
      array2_d:
        dtype: uint32
        shape:
        - None
        - 2
  - name: kps_ids
    sequence:
      dtype: uint32
  - name: category
    dtype: string
  - name: category_id
    dtype:
      class_label:
        names:
          '0': cat
          '1': pottedplant
          '2': train
          '3': bicycle
          '4': car
          '5': bus
          '6': aeroplane
          '7': dog
          '8': bird
          '9': chair
          '10': motorbike
          '11': cow
          '12': bottle
          '13': person
          '14': boat
          '15': sheep
          '16': horse
          '17': tvmonitor
  - name: viewpoint_variation
    dtype: uint8
  - name: scale_variation
    dtype: uint8
  - name: truncation
    dtype: uint8
  - name: occlusion
    dtype: uint8
  splits:
  - name: train
    num_bytes: 55925012
    num_examples: 53340
  - name: validation
    num_bytes: 5700112
    num_examples: 5384
  - name: test
    num_bytes: 12931172
    num_examples: 12234
  download_size: 226961117
  dataset_size: 74556296
---

# SPair-71k

This is an unofficial dataset loading script for the semantic correspondence dataset [SPair-71k](https://cvlab.postech.ac.kr/research/SPair-71k/). It downloads the data from the original source and converts it to the Hugging Face `datasets` format.

## Dataset Description

- **Homepage:** [SPair-71k dataset](https://cvlab.postech.ac.kr/research/SPair-71k/)

- **Paper:** [SPair-71k: A Large-scale Benchmark for Semantic Correspondence](https://arxiv.org/abs/1908.10543)

## Official Description

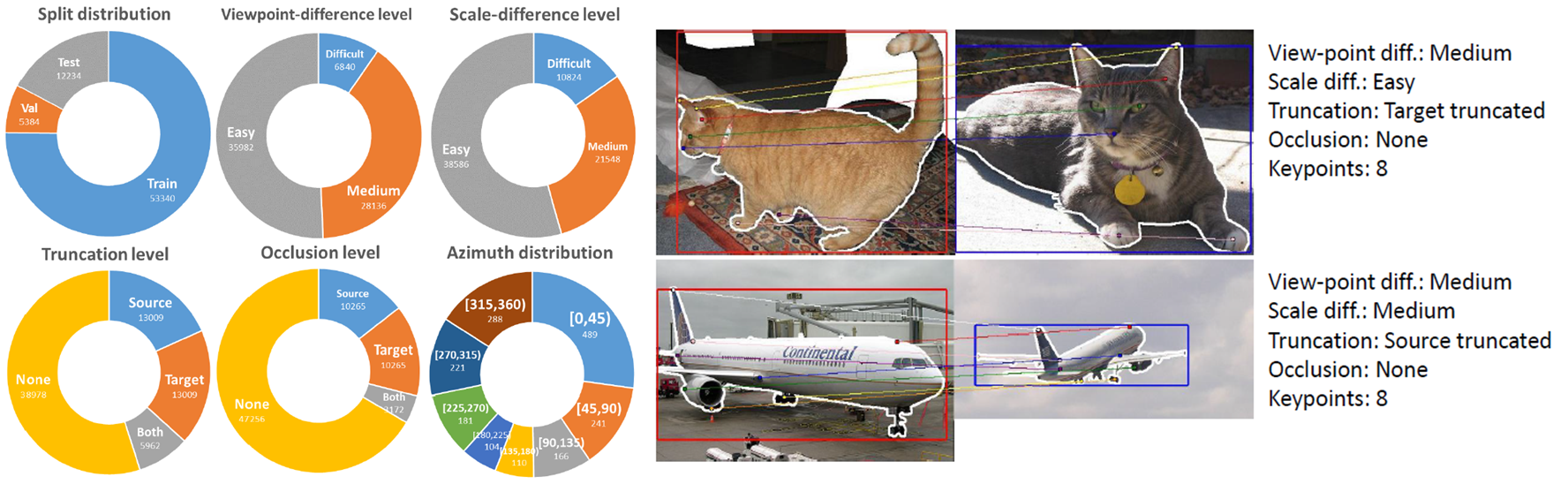

Establishing visual correspondences under large intra-class variations, which is often referred to as semantic correspondence or semantic matching, remains a challenging problem in computer vision. Despite its significance, however, most of the datasets for semantic correspondence are limited to a small amount of image pairs with similar viewpoints and scales. In this paper, we present a new large-scale benchmark dataset of semantically paired images, SPair-71k, which contains 70,958 image pairs with diverse variations in viewpoint and scale. Compared to previous datasets, it is significantly larger in number and contains more accurate and richer annotations. We believe this dataset will provide a reliable testbed to study the problem of semantic correspondence and will help to advance research in this area. We provide the results of recent methods on our new dataset as baselines for further research.

## Usage

```python
from datasets import load_dataset

# load image pairs for the semantic correspondence task
pairs = load_dataset("0jl/SPair-71k", trust_remote_code=True)
print('available splits:', pairs.keys())  # train, validation, test
print('exemplary sample:', pairs['train'][0])

# load only the data, i.e. images, segmentation and annotations
data = load_dataset("0jl/SPair-71k", "data", trust_remote_code=True)
print('available splits:', data.keys())  # only train: the same images are shared by all pair splits
print('exemplary sample:', data['train'][0])
```

Output:

```
available splits: dict_keys(['train', 'validation', 'test'])
exemplary sample: {'pair_id': 35987, 'src_img': <PIL.JpegImagePlugin.JpegImageFile image mode=RGB size=500x333 at ...>, 'src_segmentation': <PIL.PngImagePlugin.PngImageFile image mode=L size=500x333 at ...>, 'src_data_index': 1017, 'src_name': 'horse/2009_003294', 'src_imsize': [500, 333, 3], 'src_bndbox': [207, 38, 393, 310], 'src_pose': 0, 'src_kps': [[239, 42], [212, 45], [206, 100], [228, 51], [316, 265], [297, 259]], 'trg_img': <PIL.JpegImagePlugin.JpegImageFile image mode=RGB size=500x375 at ...>, 'trg_segmentation': <PIL.PngImagePlugin.PngImageFile image mode=L size=500x375 at ...>, 'trg_data_index': 85, 'trg_name': 'horse/2007_006134', 'trg_imsize': [500, 375, 3], 'trg_bndbox': [125, 99, 442, 318], 'trg_pose': 4, 'trg_kps': [[401, 101], [385, 98], [408, 204], [380, 112], [138, 247], [160, 246]], 'kps_ids': [2, 3, 8, 9, 18, 19], 'category': 16, 'viewpoint_variation': 2, 'scale_variation': 0, 'truncation': 0, 'occlusion': 1}
available splits: dict_keys(['train'])
exemplary sample: {'img': <PIL.JpegImagePlugin.JpegImageFile image mode=RGB size=500x332 at ...>, 'segmentation': <PIL.PngImagePlugin.PngImageFile image mode=L size=500x332 at ...>, 'annotation': '{"filename": "2007_000175.jpg", "src_database": "The VOC2007 Database", "src_annotation": "PASCAL VOC2007", "src_image": "flickr", "image_width": 500, "image_height": 332, "image_depth": 3, "category": "sheep", "pose": "Unspecified", "truncated": 0, "occluded": 0, "difficult": 0, "bndbox": [25, 34, 419, 271], "kps": {"0": [211, 100], "1": [347, 87], "2": [132, 99], "3": [423, 72], "4": [245, 91], "5": [329, 88], "6": [282, 137], "7": [304, 138], "8": [295, 162], "9": null, "10": null, "11": null, "12": null, "13": null, "14": null, "15": [173, 247], "16": null, "17": [45, 205], "18": null, "19": null, "20": null, "21": null, "22": null, "23": null, "24": null, "25": null, "26": null, "27": null, "28": null, "29": null}, "azimuth_id": 7}', 'name': 'sheep/2007_000175'}
```
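In the `pairs` sample above, `src_kps`, `trg_kps` and `kps_ids` all have six entries, which suggests they are aligned row by row: the i-th source keypoint matches the i-th target keypoint, and `kps_ids` gives its keypoint index within the category. The minimal sketch below assumes that alignment and works on a plain dict holding the non-image fields of that sample. `keypoint_matches` is a hypothetical helper, not part of the loading script.

```python
from typing import Any

Point = tuple[int, int]


def keypoint_matches(sample: dict[str, Any]) -> list[tuple[int, Point, Point]]:
    """Pair up the source and target keypoints of one `pairs` sample.

    Assumes (not confirmed by the loading script) that `src_kps`, `trg_kps`
    and `kps_ids` are aligned row by row.
    """
    src, trg, ids = sample["src_kps"], sample["trg_kps"], sample["kps_ids"]
    if not (len(src) == len(trg) == len(ids)):
        raise ValueError("src_kps, trg_kps and kps_ids must have the same length")
    return [(kp_id, (s[0], s[1]), (t[0], t[1])) for kp_id, s, t in zip(ids, src, trg)]


# Non-image fields copied from the exemplary `pairs` sample above.
sample = {
    "src_kps": [[239, 42], [212, 45], [206, 100], [228, 51], [316, 265], [297, 259]],
    "trg_kps": [[401, 101], [385, 98], [408, 204], [380, 112], [138, 247], [160, 246]],
    "kps_ids": [2, 3, 8, 9, 18, 19],
}

for kp_id, (xs, ys), (xt, yt) in keypoint_matches(sample):
    print(f"keypoint {kp_id}: ({xs}, {ys}) -> ({xt}, {yt})")
```

With a real sample from `pairs['train']` the same function should apply unchanged, since `Array2D` values are returned as nested lists, as the printed output shows.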

## Features for `pairs` (default)

```python
pairs = load_dataset("0jl/SPair-71k", name='pairs', trust_remote_code=True)
```

splits: `train`, `validation`, `test`

- `pair_id`: `Value(dtype='uint32')`

- `src_img`: `Image(decode=True)`

- `src_segmentation`: `Image(decode=True)`

- `src_data_index`: `Value(dtype='uint32')`

- `src_name`: `Value(dtype='string')`

- `src_imsize`: `Sequence(feature=Value(dtype='uint32'), length=3)`

- `src_bndbox`: `Sequence(feature=Value(dtype='uint32'), length=4)`

- `src_pose`: `ClassLabel(names=['Unspecified', 'Frontal', 'Left', 'Rear', 'Right'])`

- `src_kps`: `Array2D(shape=(None, 2), dtype='uint32')`

- `trg_img`: `Image(decode=True)`

- `trg_segmentation`: `Image(decode=True)`

- `trg_data_index`: `Value(dtype='uint32')`

- `trg_name`: `Value(dtype='string')`

- `trg_imsize`: `Sequence(feature=Value(dtype='uint32'), length=3)`

- `trg_bndbox`: `Sequence(feature=Value(dtype='uint32'), length=4)`

- `trg_pose`: `ClassLabel(names=['Unspecified', 'Frontal', 'Left', 'Rear', 'Right'])`

- `trg_kps`: `Array2D(shape=(None, 2), dtype='uint32')`

- `kps_ids`: `Sequence(feature=Value(dtype='uint32'), length=-1)`

- `category`: `Value(dtype='string')`

- `category_id`: `ClassLabel(names=['cat', 'pottedplant', 'train', 'bicycle', 'car', 'bus', 'aeroplane', 'dog', 'bird', 'chair', 'motorbike', 'cow', 'bottle', 'person', 'boat', 'sheep', 'horse', 'tvmonitor'])`

- `viewpoint_variation`: `Value(dtype='uint8')`

- `scale_variation`: `Value(dtype='uint8')`

- `truncation`: `Value(dtype='uint8')`

- `occlusion`: `Value(dtype='uint8')`
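`src_pose`, `trg_pose` and `category_id` are `ClassLabel` features, so samples store integer ids. With the `datasets` library these can be decoded through the feature itself, e.g. `pairs['train'].features['src_pose'].int2str(4)`. The dependency-free sketch below instead copies the label names from the list above into plain Python lists; `decode_labels` is a hypothetical helper. It also unpacks `src_imsize`, which, judging from the Usage sample (a 500x333 image with `src_imsize` `[500, 333, 3]`), appears to be ordered as width, height, depth; treat that order as an assumption.

```python
POSE_NAMES = ["Unspecified", "Frontal", "Left", "Rear", "Right"]
CATEGORY_NAMES = [
    "cat", "pottedplant", "train", "bicycle", "car", "bus", "aeroplane", "dog", "bird",
    "chair", "motorbike", "cow", "bottle", "person", "boat", "sheep", "horse", "tvmonitor",
]


def decode_labels(sample: dict) -> dict:
    """Turn the ClassLabel ids and `src_imsize` of a `pairs` sample into readable values."""
    width, height, depth = sample["src_imsize"]  # assumed order: width, height, depth
    return {
        "src_pose": POSE_NAMES[sample["src_pose"]],
        "trg_pose": POSE_NAMES[sample["trg_pose"]],
        "category": CATEGORY_NAMES[sample["category_id"]],
        "src_size": {"width": width, "height": height, "depth": depth},
    }


# Ids mirror the Usage sample: poses 0 and 4, category 16 for its horse pair.
print(decode_labels({"src_pose": 0, "trg_pose": 4, "category_id": 16, "src_imsize": [500, 333, 3]}))
```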

## Features for `data`

```python
data = load_dataset("0jl/SPair-71k", name='data', trust_remote_code=True)
```

splits: `train`

- `img`: `Image(decode=True)`

- `name`: `Value(dtype='string')`

- `segmentation`: `Image(decode=True)`

- `filename`: `Value(dtype='string')`

- `src_database`: `Value(dtype='string')`

- `src_annotation`: `Value(dtype='string')`

- `src_image`: `Value(dtype='string')`

- `image_width`: `Value(dtype='uint32')`

- `image_height`: `Value(dtype='uint32')`

- `image_depth`: `Value(dtype='uint32')`

- `category`: `Value(dtype='string')`

- `category_id`: `ClassLabel(names=['cat', 'pottedplant', 'train', 'bicycle', 'car', 'bus', 'aeroplane', 'dog', 'bird', 'chair', 'motorbike', 'cow', 'bottle', 'person', 'boat', 'sheep', 'horse', 'tvmonitor'])`

- `pose`: `Value(dtype='string')`

- `truncated`: `Value(dtype='uint8')`

- `occluded`: `Value(dtype='uint8')`

- `difficult`: `Value(dtype='uint8')`

- `bndbox`: `Sequence(feature=Value(dtype='uint32'), length=4)`

- `kps`: `Array2D(shape=(None, 2), dtype='int32')`

- `azimuth_id`: `Value(dtype='uint8')`
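The exemplary `data` sample in the Usage section stores its annotation as a single JSON string, in which `kps` maps keypoint ids to `[x, y]` or `null`. Assuming `null` marks a keypoint that is not annotated in that image, the minimal sketch below (hypothetical helper `visible_keypoints`, standard library only) parses such a string and keeps only the annotated keypoints. The shortened string reuses values from that sample.

```python
import json


def visible_keypoints(annotation_json: str) -> dict[int, tuple[int, int]]:
    """Return {keypoint_id: (x, y)} for all keypoints whose entry is not null."""
    annotation = json.loads(annotation_json)
    return {
        int(kp_id): (xy[0], xy[1])
        for kp_id, xy in annotation["kps"].items()
        if xy is not None  # null is assumed to mean "not annotated"
    }


# Shortened version of the annotation string from the exemplary `data` sample.
annotation_json = (
    '{"filename": "2007_000175.jpg", "category": "sheep", "bndbox": [25, 34, 419, 271], '
    '"kps": {"0": [211, 100], "1": [347, 87], "9": null, "15": [173, 247], "16": null}}'
)

kps = visible_keypoints(annotation_json)
print(f"{len(kps)} annotated keypoints:", kps)
```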

## Terms of Use

The SPair-71k data includes images and metadata obtained from the [PASCAL-VOC](http://host.robots.ox.ac.uk/pascal/VOC/) and [flickr](https://www.flickr.com/) websites. Use of these images and metadata must respect the corresponding [terms of use](https://www.flickr.com/help/terms).

## Citation of original dataset

```bibtex
@article{min2019spair,
  title={SPair-71k: A Large-scale Benchmark for Semantic Correspondence},
  author={Juhong Min and Jongmin Lee and Jean Ponce and Minsu Cho},
  journal={arXiv preprint arXiv:1908.10543},
  year={2019}
}
```
