---
license: apache-2.0
datasets:
- datajuicer/alpaca-cot-en-refined-by-data-juicer
---

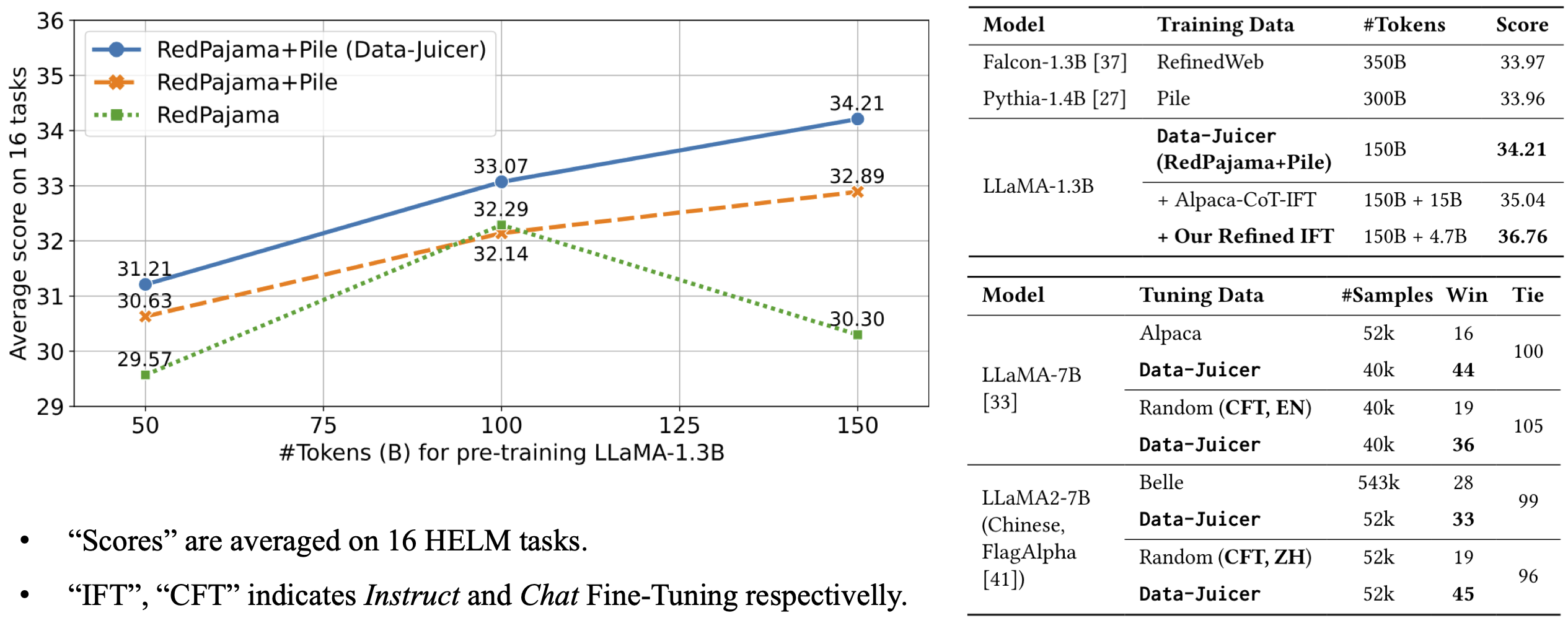

This is a reference LLM from [Data-Juicer](https://github.com/alibaba/data-juicer).

The model architecture is LLaMA-7B, and we built it upon the pre-trained [checkpoint](https://huggingface.co/huggyllama/llama-7b).

The model is fine-tuned on 40k English chat samples from Data-Juicer's refined [alpaca-CoT data](https://github.com/alibaba/data-juicer/blob/main/configs/data_juicer_recipes/alpaca_cot/README.md#refined-alpaca-cot-dataset-meta-info).

In GPT-4 evaluation, it outperforms LLaMA-7B fine-tuned on 52k Alpaca samples.

For more details, please refer to our [paper](https://arxiv.org/abs/2309.02033).