llama-translate

llama-translate is a GGUF conversion of the multilingual translation model llama-translate-amd-npu, made so that the model can run even without a GPU.

It currently supports translation between Japanese, English, French, and Chinese (Mandarin).
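The sample prompts further down all use an instruction of the form `Translate Japanese to Mandarin.` As a minimal sketch, assuming that form applies to every pair of the four listed languages, a small helper can build the instruction and reject unsupported languages. The names `SUPPORTED_LANGUAGES` and `make_instruction` are hypothetical and not part of this model or llama.cpp.

```python
# Hypothetical helper: builds the instruction string used in the sample prompts.
# The language names follow the wording of the samples ("Mandarin", not "Chinese").
SUPPORTED_LANGUAGES = ("Japanese", "English", "French", "Mandarin")


def make_instruction(source: str, target: str) -> str:
    """Return an instruction such as 'Translate Japanese to Mandarin.'"""
    for language in (source, target):
        if language not in SUPPORTED_LANGUAGES:
            raise ValueError(f"Unsupported language: {language}")
    if source == target:
        raise ValueError("Source and target languages must differ")
    return f"Translate {source} to {target}."


print(make_instruction("Japanese", "Mandarin"))
```

Translation quality may differ between pairs, so treat the list as what the model accepts rather than a quality guarantee.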

Unfortunately, the April 2024 version of llama.cpp bundled with RyzenAI-SW 1.2 cannot correctly handle Japanese, Chinese, or French input and output.

To run this model correctly, you need a llama.cpp build released in August 2024 or later.

If you see the following error, your version of llama.cpp is probably too old.

llama_model_load: error loading model: done_getting_tensors: wrong number of tensors; expected 292, got 291
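If you launch llama.cpp from a script and capture its log output, you can check for this message automatically. The following is a minimal sketch: the function name `looks_outdated` is hypothetical, and it assumes the error text matches the line above (the tensor counts may differ for other builds, so only the fixed part of the message is matched).

```python
# Fixed part of the error message shown above; the tensor counts are not matched.
OUTDATED_MARKER = "done_getting_tensors: wrong number of tensors"


def looks_outdated(log_text: str) -> bool:
    """Return True if the captured llama.cpp log contains the tensor-count error."""
    return OUTDATED_MARKER in log_text


sample_log = (
    "llama_model_load: error loading model: done_getting_tensors: "
    "wrong number of tensors; expected 292, got 291"
)

if looks_outdated(sample_log):
    print("llama.cpp is probably too old; try a build from August 2024 or later.")
```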

For installation and setup of llama.cpp, please refer to the official website.

sample prompt

./llama-cli -m ./llama-translate.f16.Q8_0.gguf -e -n 400 -p "<|begin_of_text|><|start_header_id|>system<|end_header_id|>\nYou are a highly skilled professional translator.<|eot_id|><|start_header_id|>user<|end_header_id|>\n\n### Instruction:\nTranslate Japanese to Mandarin.\n\n### Input:\n生成AIは近年、その活用用途に広がりを見せている。企業でも生成AIを取り入れようとする動きが高まっており、人手不足の現状を打破するための生産性向上への活用や、ビジネスチャンス創出が期待されているが、実際に活用している企業はどれほどなのだろうか。帝国データバンクが行った「現在の生成AIの活用状況について調査」の結果を見ると、どうやらまだまだ生成AIは普及していないようだ。\n\n### Response\n<|eot_id|><|start_header_id|>assistant<|end_header_id|>\n"
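Writing that one-line prompt by hand is error-prone, so it can help to generate it. The sketch below mirrors the structure of the sample command above: it builds the prompt with real newlines, then converts them to `\n` sequences for use with the `-e` flag. The function names `build_prompt` and `escape_newlines` are hypothetical. It only handles newlines; text containing backslashes or double quotes would need additional escaping for your shell.

```python
SYSTEM_MESSAGE = "You are a highly skilled professional translator."


def build_prompt(instruction: str, input_text: str, system: str = SYSTEM_MESSAGE) -> str:
    """Assemble the prompt with real newlines, following the sample command."""
    return (
        "<|begin_of_text|><|start_header_id|>system<|end_header_id|>\n"
        f"{system}<|eot_id|><|start_header_id|>user<|end_header_id|>\n\n"
        f"### Instruction:\n{instruction}\n\n"
        f"### Input:\n{input_text}\n\n"
        "### Response\n"
        "<|eot_id|><|start_header_id|>assistant<|end_header_id|>\n"
    )


def escape_newlines(prompt: str) -> str:
    """Turn real newlines into backslash-n sequences for a single-line argument."""
    return prompt.replace("\n", "\\n")


prompt = build_prompt("Translate Japanese to Mandarin.", "こんにちは")
print(escape_newlines(prompt))
```

The printed string can be pasted between the double quotes after `-p` in the command above.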

On Windows, Japanese and Chinese input and output may not work correctly in CMD, so please use the llama-server command instead.

For example:

server start command

.\llama.cpp\build\bin\Release\llama-server -m .\llama-translate.f16.Q8_0.gguf -c 2048
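Loading the model can take a while, so a client may want to wait until the server is ready before sending requests. The following is a minimal sketch that polls the server's `/health` endpoint. It assumes the default address `http://localhost:8080`; the function names `server_is_ready` and `wait_for_server` are hypothetical.

```python
import time
import urllib.error
import urllib.request


def server_is_ready(base_url: str = "http://localhost:8080", timeout: float = 2.0) -> bool:
    """Return True if GET /health answers with HTTP 200."""
    try:
        with urllib.request.urlopen(f"{base_url}/health", timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or a non-2xx status such as 503 while loading.
        return False


def wait_for_server(probe=server_is_ready, attempts: int = 30, delay: float = 1.0) -> bool:
    """Call probe() up to `attempts` times, sleeping `delay` seconds between tries."""
    for _ in range(attempts):
        if probe():
            return True
        time.sleep(delay)
    return False
```

Call `wait_for_server()` once after starting llama-server and send translation requests only if it returns `True`.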

client script example

import json

import requests


def translation(instruction, input_text):
    # System prompt; it ends by opening the user turn.
    system = """<|start_header_id|>system<|end_header_id|>\nYou are a highly skilled professional translator. You are a native speaker of English, Japanese, French and Mandarin. Translate the given text accurately, taking into account the context and specific instructions provided. Steps may include hints enclosed in square brackets [] with the key and value separated by a colon:. If no additional instructions or context are provided, use your expertise to consider what the most appropriate context is and provide a natural translation that aligns with that context. When translating, strive to faithfully reflect the meaning and tone of the original text, pay attention to cultural nuances and differences in language usage, and ensure that the translation is grammatically correct and easy to read. For technical terms and proper nouns, either leave them in the original language or use appropriate translations as necessary. Take a deep breath, calm down, and start translating.<|eot_id|><|start_header_id|>user<|end_header_id|>"""

    # Fill the instruction / input / response template.
    prompt = f"""{system}

### Instruction:
{instruction}

### Input:
{input_text}

### Response:
<|eot_id|><|start_header_id|>assistant<|end_header_id|>
"""

    # Prepare the payload for the POST request
    payload = {
        "prompt": prompt,
        "n_predict": 128,  # Adjust this parameter as needed
    }

    # Define the URL and headers for the POST request
    url = "http://localhost:8080/completion"
    headers = {"Content-Type": "application/json"}

    # Send the POST request and capture the response
    response = requests.post(url, headers=headers, data=json.dumps(payload))

    # Check if the request was successful
    if response.status_code != 200:
        print(f"Error: {response.text}")
        return None

    # Parse the response JSON and extract the 'content' field
    response_data = response.json()
    response_content = response_data.get("content", "").strip()
    return response_content


if __name__ == "__main__":
    translated_line = translation("Translate Japanese to English.", "アメリカ代表が怒涛の逆転劇で五輪5連覇に王手…セルビア下し開催国フランス代表との決勝へ")
    print(translated_line)

    translated_line = translation("Translate Japanese to Mandarin.", "石川佳純さんの『中国語インタビュー』に視聴者驚き…卓球女子の中国選手から笑顔引き出し、最後はハイタッチ「めちゃ仲良し」【パリオリンピック】")
    print(translated_line)

    translated_line = translation("Translate Japanese to French.", "開催国フランス すでに史上最多のメダル数に パリオリンピック")
    print(translated_line)

    translated_line = translation("Translate English to Japanese.", "U.S. Women's Volleyball Will Try For Back-to-Back Golds After Defeating Rival Brazil in Five-Set Thriller")
    print(translated_line)

    translated_line = translation("Translate Mandarin to Japanese.", "2024巴黎奥运中国队一日三金!举重双卫冕,花游历史首金,女曲再创辉煌")
    print(translated_line)

    translated_line = translation("Translate French to Japanese.", "Handball aux JO 2024 : Laura Glauser et Hatadou Sako, l’assurance tous risques de l’équipe de France")
    print(translated_line)

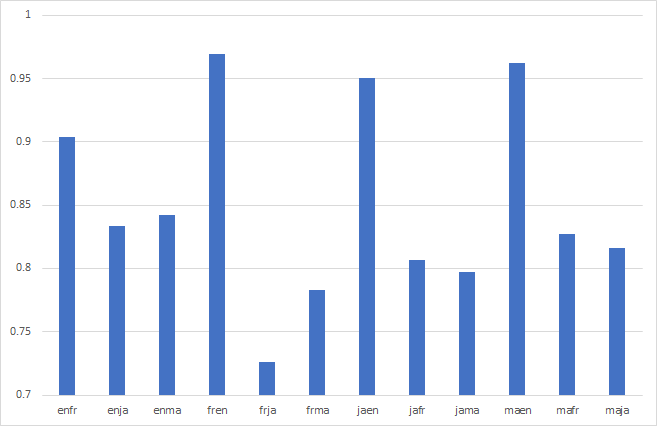

simple benchmark result

The 8-bit model appears to maintain a reasonable level of quality for English-to-French, French-to-English, Japanese-to-English, and Chinese-to-English translation.
