AI Lineage Explorer: A Step Towards AI Integrity.

Demo: AI Lineage Explorer

We're EQTY Lab, and we work on building trust and public accountability in open source AI.

Years of working at the intersection of data integrity and the evolving AI landscape led us to focus on a crucial element: the lineage of AI models. Things move fast when you’re developing AI. You experiment. You collaborate with many people. Keeping track of all the moving parts is tough.

But we need to get it right. There is a vital role for transparent and reproducible open research in democratizing AI technology — and allowing it to stay open.

Today we are introducing on Hugging Face Spaces an early and open version of our first product: The AI Lineage Explorer.

The tool is a practical solution designed to enhance transparency throughout the AI engineering process without getting in your way. It’s free and easily launches right from your model page on Hugging Face.

We worked with our favorite designers to make this happen and brought to the table some really cool new cryptographic methods to make this a reliable tool that we hope anyone will want to use.

We built the AI Lineage Explorer with two simple goals in mind:

Create a tamper-proof manifest that tags along when you ship to bolster trust in your creation.

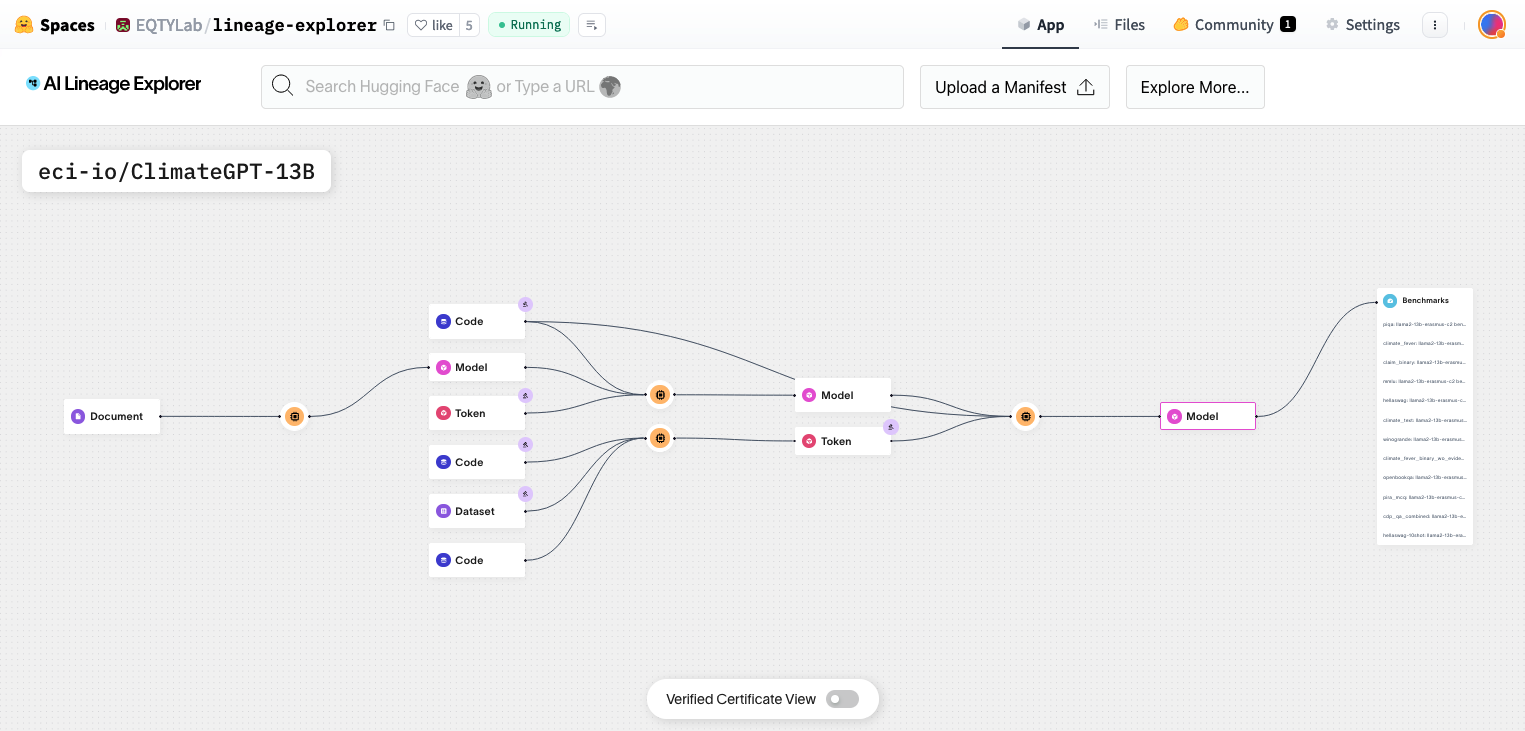

Transform boring attestations into an immersive blueprint that provides a real-time visual of your model’s entire lifecycle, now just one click away on Hugging Face.

With the AI Lineage Explorer, you’re able to:

- Inspect your model’s data sources

- Examine and share its developmental journey

- Determine its governance layers, both machine and human

- Understand its applications or applied performance, such as benchmark results

- Track distributed assets

- And much more…

The backbone of the AI Lineage Explorer is what we call an Integrity Graph, a concept rooted in cryptographic attestations based on digital signatures and verifiable computing. This approach draws inspiration from our collaborations with organizations like the Content Authenticity Initiative and Creative Commons.

It's not just another theoretical framework for Responsible AI; it's a practical foundation for bolstering the accountability of models. From there, you can do all sorts of awesome things, like design compliance, trigger automated reviews, and even pay people their fair share. There’s lots more to come.

But first, we prioritized user experience.

We focused on creating a simple manifest format and an interface that fits typical ML engineering workflows, so that adopting it causes as little disruption as possible. A single line of code is all you should need to kick off a smart tool that quietly works in the background.

We will soon release an open source SDK that will allow anyone to create their own Integrity Manifests and load them into our tools to visualize model lineage.

Hang tight. We’re working hard to get this to you pronto. But we couldn’t wait to share the Explorer with some real implementations.

Curious to see how it works? Select one of the following models in the AI Lineage Explorer. We helped create their manifests by hand using early, private versions of the SDK:

Interested in staying updated on the SDK release? Sign up here.

If you're looking to collaborate or have questions, feel free to reach out to hello@eqtylab.io.

And feel free to read on below if you’d like to go beyond the TL;DR.

A bit more on our approach.

While we’ve been at work on different versions of this tool for over a year, we’ve been inspired by several projects along the way, such as Stanford Ecosystem Graphs, Weights and Biases Artifact Lineage, and Content Credentials and Verify by the C2PA.

The problem is that AI consists of really complex engineering processes. You must handle large amounts of data, compute at scale, and manage challenging governance.

That’s just the beginning. The reality is that most models are not just one model, but an ensemble of models and tasks. Take Stable Diffusion 2’s typical architecture. It consists of at least four core models (an image encoder like a VAE, a CLIP text encoder, the U-Net denoising model, and an image decoder), each with its own intensive training history and multiple data sets, and that is before counting Stable Diffusion’s own training data. The cool thing is that a bunch of people figured out how to make this model work, but there are lingering uncertainties about the model’s actual provenance.

So it’s going to take time and a determined community (like the one we have here) to figure it out.

So how?

Our design philosophy revolves around meeting people where they are and keeping things very practical without disrupting how engineers create AI.

We’re working to integrate a set of new tools into each step of the ML workflow. But we don’t want you to focus on them. Just build AI. The steps should fall into place:

Registration & Documentation: Capture critical statements about data, model, compute, governance, and transformations.

Integrity & Trust: Digitally sign each statement to cryptographically establish attributability and tamper-resistance of all inputs, compute, and outputs.

Verifiability: Use graph data structures to establish chains of lineage for added transparency.

Federated Collaborations: Registration statements can be produced by many parties, and composed together to describe collaborative processes. These statements can also optionally be anchored on public ledgers, fostering a decentralized approach to AI advancements and added dimensions for transparency and trust.

Composability & Integration: Upload a self-contained integrity graph as a single data model to repositories like Hugging Face, enabling easy visualization and verification.
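To make these steps a little more concrete, here is a minimal Python sketch of the general idea. It is not the EQTY Lab SDK or its manifest format: every name in it (`make_statement`, `verify_graph`, `lineage`, the field names, the team names and keys) is hypothetical. It also uses an HMAC from the standard library as a stand-in for a real public-key digital signature, purely so the example runs without extra dependencies.

```python
import hashlib
import hmac
import json


def canonical(obj) -> bytes:
    """Serialize deterministically so equal content always hashes the same."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def make_statement(kind, claims, parents, signer, key):
    """Register a statement, link it to its parents, and sign it."""
    body = {"kind": kind, "claims": claims, "parents": sorted(parents), "signer": signer}
    payload = canonical(body)
    return {
        "id": hashlib.sha256(payload).hexdigest(),  # content address of the statement
        "body": body,
        # Assumption: HMAC stands in for a public-key signature in this sketch.
        "sig": hmac.new(key, payload, hashlib.sha256).hexdigest(),
    }


def verify_graph(statements, keys):
    """Return True only if every statement is intact, signed, and fully linked."""
    by_id = {s["id"]: s for s in statements}
    for s in statements:
        payload = canonical(s["body"])
        if hashlib.sha256(payload).hexdigest() != s["id"]:
            return False  # content no longer matches its id
        key = keys.get(s["body"]["signer"])
        if key is None:
            return False  # unknown signer
        expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, s["sig"]):
            return False  # signature mismatch
        if any(parent not in by_id for parent in s["body"]["parents"]):
            return False  # dangling lineage link
    return True


def lineage(statements, node_id):
    """Collect the ids of all ancestors of node_id by walking parent links."""
    by_id = {s["id"]: s for s in statements}
    seen, stack = set(), list(by_id[node_id]["body"]["parents"])
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(by_id[current]["body"]["parents"])
    return seen


# Two hypothetical parties each contribute one signed statement.
keys = {"data-team": b"demo-key-1", "training-team": b"demo-key-2"}
dataset = make_statement("dataset", {"name": "example-corpus"}, [], "data-team", keys["data-team"])
model = make_statement(
    "model", {"name": "example-model"}, [dataset["id"]], "training-team", keys["training-team"]
)
graph = [dataset, model]

# The whole graph serializes to one self-contained JSON document.
bundle = json.dumps(graph)
```

Because each statement's id is a hash of its content and each child names its parents by id, changing any upstream claim after the fact causes `verify_graph` to fail. A production system would swap the HMAC for asymmetric signatures so that anyone can verify a statement without holding the signer's secret.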

Afterward, show your work and easily bring in others to help make your models even better.

The bottom line: this isn’t an empty promise. While developing the AI Lineage Explorer, we've become even more committed to the broader movement towards Responsible AI. Here’s a sketch of our emerging convictions:

Clarity in AI Creation: By labeling AI-generated content and providing metadata for authentic content, we’re fostering an environment where origin and authenticity are clear, while how much to disclose always remains under the user’s control.

Ethical Foundation in Training Data: By helping teams steer clear of problematic data, our tool encourages transparency and ethical practices in AI training.

Streamlining Verification: In line with emerging standards, our tool simplifies the process of verifying credentials, which is especially crucial as more people abuse AI tooling.

Empowering Content Creators: By automating digital signatures, we make it easier for creators to claim and protect their work without having to go to a centralized registry.

Opening Up AI Access: We believe in democratizing AI, not centralizing it. Our tool is a step towards a future where AI is accessible, secure, and utilized for the greater good.

It’s just the beginning. Stay tuned.