Action2Sound: Ambient-Aware Generation of Action Sounds from Egocentric Videos

Motivation

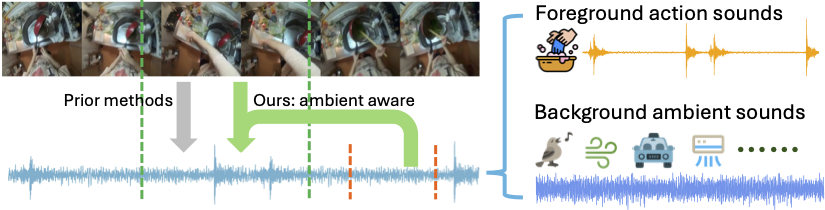

Generating realistic audio for human interactions is important for many applications, such as creating sound effects for films or virtual reality games. Existing approaches implicitly assume total correspondence between the video and audio during training, yet many sounds happen off-screen and have weak to no correspondence with the visuals, which results in uncontrolled ambient sounds or hallucinations at test time. We propose a novel ambient-aware audio generation model, AV-LDM, with an audio-conditioning mechanism that learns to disentangle foreground action sounds from ambient background sounds in in-the-wild training videos. Given a novel silent video, our model uses retrieval-augmented generation to create audio that matches the visual content both semantically and temporally. We train and evaluate our model on two in-the-wild egocentric video datasets, Ego4D and EPIC-KITCHENS. Our model outperforms an array of existing methods, allows controllable generation of the ambient sound, and even shows promise for generalizing to computer graphics game clips. Overall, our work is the first to focus video-to-audio generation faithfully on the observed visual content despite training from uncurated clips with natural background sounds.
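To make the retrieval step mentioned above more concrete, here is a minimal sketch of picking a conditioning audio clip by nearest-neighbor search. It is an illustration only, not the AV-LDM implementation: it assumes that clips are represented by plain embedding vectors and that cosine similarity is the ranking measure, and the names `cosine_similarity`, `retrieve_condition_clip`, and the toy file names are hypothetical.

```python
import math
from typing import Mapping, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve_condition_clip(
    query: Sequence[float], bank: Mapping[str, Sequence[float]]
) -> str:
    """Return the id of the bank entry whose embedding is most similar to the query."""
    if not bank:
        raise ValueError("bank is empty")
    best_id, _ = max(
        bank.items(), key=lambda item: cosine_similarity(query, item[1])
    )
    return best_id


# Hypothetical toy data: clip id -> embedding (assumed, not from the paper).
bank = {
    "kitchen_ambient.wav": [0.9, 0.1, 0.0],
    "street_ambient.wav": [0.0, 0.2, 0.9],
}
query = [0.8, 0.2, 0.1]  # assumed embedding of the silent query video
print(retrieve_condition_clip(query, bank))
```

In a real pipeline the retrieved clip would then be passed to the generator as the audio condition; how embeddings are computed and how the condition is consumed are left out here because this document does not specify them.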

Project page: Action2Sound

Getting Started

Instructions for setting up, running, and using our model are available in our GitHub repository. The pre-trained model weights are stored in this Hugging Face repository; you can download them and use them directly in your projects.

Citation

If you find the code, data, or models useful for your research, please cite the following paper:

@article{chen2024action2sound,
  title = {Action2Sound: Ambient-Aware Generation of Action Sounds from Egocentric Videos},
  author = {Changan Chen and Puyuan Peng and Ami Baid and Sherry Xue and Wei-Ning Hsu and David Harwath and Kristen Grauman},
  year = {2024},
  journal = {arXiv},
}

License

The license terms for this repo are given in the LICENSE file.