---

license: apache-2.0

datasets:

- klue/klue

language:

- ko

metrics:

- f1

- accuracy

- pearsonr

---

# RoBERTa-base Korean

## Model Description

This RoBERTa model was pretrained at the **syllable** level on a variety of Korean text datasets.

It uses a custom-built Korean syllable-level vocabulary.
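
As a rough illustration of what syllable-level tokenization means, the sketch below treats each character as one token (a Hangul syllable block is a single Unicode code point) and maps tokens to ids through a toy vocabulary. The function names, the special tokens, and the choice to discard whitespace are assumptions made for this example; it is not the `SyllableTokenizer` shipped with the repository, whose behavior may differ.

```python
from collections import Counter

# Hypothetical special tokens; the repository's vocab may use different ones.
SPECIAL_TOKENS = ["[PAD]", "[UNK]"]


def split_syllables(text):
    """Split text into one token per character, dropping whitespace (assumption)."""
    return [ch for ch in text if not ch.isspace()]


def build_vocab(corpus, max_size=None):
    """Build a token-to-id map from the most frequent characters in a corpus."""
    counts = Counter(ch for line in corpus for ch in split_syllables(line))
    most_common = [ch for ch, _ in counts.most_common(max_size)]
    return {tok: idx for idx, tok in enumerate(SPECIAL_TOKENS + most_common)}


def encode(text, vocab):
    """Map each syllable to its id, falling back to [UNK] for unseen characters."""
    unk_id = vocab["[UNK]"]
    return [vocab.get(ch, unk_id) for ch in split_syllables(text)]


corpus = ["한국어 텍스트", "한국어 모델"]
vocab = build_vocab(corpus)
ids = encode("한국 모델 음", vocab)  # "음" is not in the toy corpus
```

Because the token inventory is limited to the characters seen in the corpus, a vocabulary built this way stays small compared with a subword vocabulary.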

## Architecture

- **Model type**: RoBERTa

- **Architecture**: RobertaForMaskedLM

- **Model size**: 256 hidden size, 8 hidden layers, 8 attention heads

- **max_position_embeddings**: 514

- **intermediate_size**: 2,048

- **vocab_size**: 1,428
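
To get a feel for how small this configuration is, the following sketch estimates the encoder parameter count from the values listed above. It assumes the standard BERT/RoBERTa layer layout (biases on every linear layer, LayerNorm after the embeddings, the attention block, and the feed-forward block) and a token-type vocabulary of size 1; neither is stated in this card. The pooler and the masked-LM head are left out, and `roberta_encoder_params` is a hypothetical helper, not a library function.

```python
def roberta_encoder_params(vocab_size, hidden, layers, intermediate,
                           max_positions, type_vocab_size=1):
    """Count weights and biases in the embeddings and Transformer layers."""
    layer_norm = 2 * hidden  # scale and bias
    # Word, position, and token-type embeddings followed by one LayerNorm.
    embeddings = (vocab_size + max_positions + type_vocab_size) * hidden + layer_norm
    # Query, key, value, and output projections plus the post-attention LayerNorm.
    attention = 4 * (hidden * hidden + hidden) + layer_norm
    # Two linear layers (hidden -> intermediate -> hidden) plus the output LayerNorm.
    feed_forward = (hidden * intermediate + intermediate
                    + intermediate * hidden + hidden + layer_norm)
    return embeddings + layers * (attention + feed_forward)


total = roberta_encoder_params(
    vocab_size=1428, hidden=256, layers=8,
    intermediate=2048, max_positions=514,
)
print(f"{total:,} parameters (about {total / 1e6:.1f}M)")
```

Under these assumptions the encoder comes to roughly 11M parameters, most of them in the feed-forward blocks. The number of attention heads does not change the count, since the heads share the same projection matrices.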

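## Training Data

The following datasets were used:

- **Modu Corpus (모두의말뭉치)**: chat, message boards, everyday conversation, news, broadcast scripts, books, etc.

- **AIHUB**: SNS, YouTube comments, book sentences

- **Other**: Namuwiki, Korean Wikipedia

The combined data totals **about 11GB** **(4B tokens)**.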

## Training Details

- **BATCH_SIZE**: 112 (per GPU)

- **ACCUMULATE**: 36

- **Total_BATCH_SIZE**: 8,064
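
The total batch size is consistent with multiplying the per-GPU batch by the gradient accumulation steps and the GPU count. The GPU count of 2 is taken from the hardware listed for this model; the variable names are illustrative.

```python
per_gpu_batch = 112   # BATCH_SIZE (per GPU)
accumulation = 36     # ACCUMULATE (gradient accumulation steps)
num_gpus = 2          # from the 2x RTX 8000 hardware entry

# Sequences that contribute to a single optimizer step.
total_batch = per_gpu_batch * accumulation * num_gpus
print(total_batch)  # 8064
```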

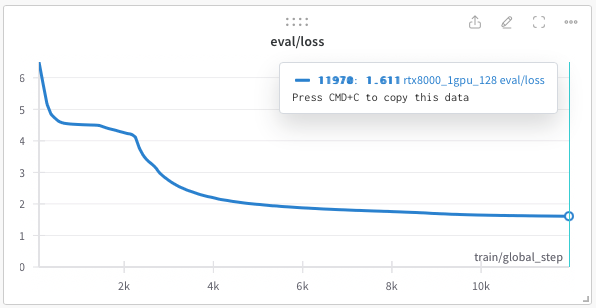

- **MAX_STEPS**: 12,500

- **TRAIN_STEPS * BATCH_SIZE**: **100M**

- **WARMUP_STEPS**: 2,400

- **Optimizer**: AdamW, LR 1e-3, BETA (0.9, 0.98), eps 1e-6

- **Learning rate decay**: linear

- **Hardware**: 2x RTX 8000 GPU
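
The schedule implied by these settings can be sketched as below. The card only states that the decay is linear; the linear shape of the warmup and the decay all the way to zero at `MAX_STEPS` are assumptions, chosen to match the shape produced by `get_linear_schedule_with_warmup` in `transformers`. The same block checks the sample count behind the "100M" figure.

```python
PEAK_LR = 1e-3
WARMUP_STEPS = 2_400
MAX_STEPS = 12_500
TOTAL_BATCH_SIZE = 8_064


def learning_rate(step):
    """Linear warmup to PEAK_LR, then linear decay to zero at MAX_STEPS."""
    if step < WARMUP_STEPS:
        return PEAK_LR * step / WARMUP_STEPS
    remaining = max(0, MAX_STEPS - step)
    return PEAK_LR * remaining / (MAX_STEPS - WARMUP_STEPS)


# Sequences processed over the whole run: 100,800,000, i.e. about 100M.
samples_seen = MAX_STEPS * TOTAL_BATCH_SIZE

for step in (0, 1_200, 2_400, 7_450, 12_500):
    print(step, learning_rate(step))
```

The warmup covers a little under a fifth of the run (2,400 of 12,500 steps), after which the learning rate falls steadily from its peak.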

## Evaluation

- **Performance was evaluated on the KLUE benchmark test.**

- Because the model is much smaller than klue-roberta-base, its scores are lower, but the model with hidden size 512 performed well for its size.

## Usage

### The tokenizer operates on syllables rather than WordPiece, so use SyllableTokenizer instead of AutoTokenizer.

### (Copy the syllabletokenizer.py provided in the repository and import it from there.)

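```python
from transformers import AutoModelForMaskedLM

# syllabletokenizer.py must be copied from the repository into the working directory
from syllabletokenizer import SyllableTokenizer

# Load the model and tokenizer
model = AutoModelForMaskedLM.from_pretrained("Trofish/korean_syllable_roberta")
tokenizer_kwargs = {}  # placeholder: the original does not specify any extra tokenizer options
tokenizer = SyllableTokenizer(vocab_file="vocab.json", **tokenizer_kwargs)

# Tokenize the text and run a forward pass
# (the string below is placeholder input meaning "enter Korean text here")
inputs = tokenizer("여기에 한국어 텍스트 입력", return_tensors="pt")
outputs = model(**inputs)
```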

## Citation

**klue**

```
@misc{park2021klue,
  title={KLUE: Korean Language Understanding Evaluation},
  author={Sungjoon Park and Jihyung Moon and Sungdong Kim and Won Ik Cho and Jiyoon Han and Jangwon Park and Chisung Song and Junseong Kim and Yongsook Song and Taehwan Oh and Joohong Lee and Juhyun Oh and Sungwon Lyu and Younghoon Jeong and Inkwon Lee and Sangwoo Seo and Dongjun Lee and Hyunwoo Kim and Myeonghwa Lee and Seongbo Jang and Seungwon Do and Sunkyoung Kim and Kyungtae Lim and Jongwon Lee and Kyumin Park and Jamin Shin and Seonghyun Kim and Lucy Park and Alice Oh and Jungwoo Ha and Kyunghyun Cho},
  year={2021},
  eprint={2105.09680},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}
```
