TheBloke's LLM work is generously supported by a grant from andreessen horowitz (a16z)

WizardMath 70B V1.0 - GGML

- Model creator: WizardLM

- Original model: WizardMath 70B V1.0

Description

This repo contains GGML format model files for WizardLM's WizardMath 70B V1.0.

Important note regarding GGML files.

The GGML format has now been superseded by GGUF. As of August 21st 2023, llama.cpp no longer supports GGML models. Third-party clients and libraries are expected to still support it for a time, but many may also drop support.

Please use the GGUF models instead.

About GGML

GPU acceleration is now available for Llama 2 70B GGML files, with both CUDA (NVIDIA) and Metal (macOS). The following clients/libraries are known to work with these files, including with GPU acceleration:

- llama.cpp, commit e76d630 and later (until GGML support was dropped on August 21st, 2023).

- text-generation-webui, the most widely used web UI.

- KoboldCpp, version 1.37 and later. A powerful GGML web UI, especially good for story telling.

- LM Studio, a fully featured local GUI with GPU acceleration for both Windows and macOS. Use 0.1.11 or later for macOS GPU acceleration with 70B models.

- llama-cpp-python, version 0.1.77 and later. A Python library with LangChain support and an OpenAI-compatible API server.

- ctransformers, version 0.2.15 and later. A Python library with LangChain support and an OpenAI-compatible API server.

Repositories available

- GPTQ models for GPU inference, with multiple quantisation parameter options.

- 2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference

- 2, 3, 4, 5, 6 and 8-bit GGML models for CPU+GPU inference (deprecated)

- WizardLM's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions

Prompt template: Alpaca-CoT

```
Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:
{prompt}

### Response: Let's think step by step.
```

Compatibility

Works with llama.cpp from commit e76d630 until August 21st, 2023.

Will not work with llama.cpp after commit dadbed99e65252d79f81101a392d0d6497b86caa.

For compatibility with the latest llama.cpp, please use GGUF files instead, or one of the other tools and libraries listed above.

To use in llama.cpp, you must add the -gqa 8 argument.

For other UIs and libraries, please check the docs.

Explanation of the new k-quant methods


The new methods available are:

- GGML_TYPE_Q2_K - "type-1" 2-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Block scales and mins are quantized with 4 bits. This ends up effectively using 2.5625 bits per weight (bpw).

- GGML_TYPE_Q3_K - "type-0" 3-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Scales are quantized with 6 bits. This ends up using 3.4375 bpw.

- GGML_TYPE_Q4_K - "type-1" 4-bit quantization in super-blocks containing 8 blocks, each block having 32 weights. Scales and mins are quantized with 6 bits. This ends up using 4.5 bpw.

- GGML_TYPE_Q5_K - "type-1" 5-bit quantization. Same super-block structure as GGML_TYPE_Q4_K, resulting in 5.5 bpw.

- GGML_TYPE_Q6_K - "type-0" 6-bit quantization. Super-blocks with 16 blocks, each block having 16 weights. Scales are quantized with 8 bits. This ends up using 6.5625 bpw.

- GGML_TYPE_Q8_K - "type-0" 8-bit quantization. Only used for quantizing intermediate results. The difference from the existing Q8_0 is that the block size is 256. All 2-6 bit dot products are implemented for this quantization type.
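The bits-per-weight figures above can be checked with simple arithmetic. The sketch below rests on an assumption not stated in the list: each 256-weight super-block also stores 16-bit (fp16) super-block values, one scale for "type-0" quants and a scale plus a min for "type-1" quants. Under that assumption it reproduces the quoted figures for Q3_K through Q6_K. Q2_K is left out because its quoted 2.5625 bpw depends on exactly how its super-block values are counted. The function and table names are hypothetical.

```python
from fractions import Fraction

# Assumption: each super-block carries fp16 (16-bit) super-block values:
# one scale for "type-0" quants, a scale plus a min for "type-1" quants.
FP16_BITS = 16


def bits_per_weight(quant_bits, n_blocks, block_size, scale_bits, type1):
    """Effective bits per weight for a k-quant super-block layout."""
    n_weights = n_blocks * block_size
    weight_bits = n_weights * quant_bits
    # "type-1" blocks store a quantized min alongside each quantized scale.
    per_block_meta = scale_bits * (2 if type1 else 1)
    block_meta_bits = n_blocks * per_block_meta
    super_meta_bits = FP16_BITS * (2 if type1 else 1)
    total_bits = weight_bits + block_meta_bits + super_meta_bits
    return Fraction(total_bits, n_weights)


# (bits per weight, blocks per super-block, weights per block, scale bits, type-1?)
LAYOUTS = {
    "Q3_K": (3, 16, 16, 6, False),
    "Q4_K": (4, 8, 32, 6, True),
    "Q5_K": (5, 8, 32, 6, True),
    "Q6_K": (6, 16, 16, 8, False),
}

for name, layout in LAYOUTS.items():
    print(name, float(bits_per_weight(*layout)))
```

Exact `Fraction` arithmetic avoids rounding, so the results can be compared directly with the quoted figures (Q3_K, for instance, comes out to 880 bits over 256 weights).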

Refer to the Provided Files table below to see what files use which methods, and how.

Provided files

| Name | Quant method | Bits | Size | Max RAM required | Use case |
|---|---|---|---|---|---|
| wizardmath-70b-v1.0.ggmlv3.q2_K.bin | q2_K | 2 | 28.96 GB | 31.46 GB | New k-quant method. Uses GGML_TYPE_Q4_K for the attention.wv and feed_forward.w2 tensors, GGML_TYPE_Q2_K for the other tensors. |
| wizardmath-70b-v1.0.ggmlv3.q3_K_S.bin | q3_K_S | 3 | 30.09 GB | 32.59 GB | New k-quant method. Uses GGML_TYPE_Q3_K for all tensors |
| wizardmath-70b-v1.0.ggmlv3.q3_K_M.bin | q3_K_M | 3 | 33.39 GB | 35.89 GB | New k-quant method. Uses GGML_TYPE_Q4_K for the attention.wv, attention.wo, and feed_forward.w2 tensors, else GGML_TYPE_Q3_K |
| wizardmath-70b-v1.0.ggmlv3.q3_K_L.bin | q3_K_L | 3 | 36.49 GB | 38.99 GB | New k-quant method. Uses GGML_TYPE_Q5_K for the attention.wv, attention.wo, and feed_forward.w2 tensors, else GGML_TYPE_Q3_K |
| wizardmath-70b-v1.0.ggmlv3.q4_0.bin | q4_0 | 4 | 38.80 GB | 41.30 GB | Original quant method, 4-bit. |
| wizardmath-70b-v1.0.ggmlv3.q4_K_S.bin | q4_K_S | 4 | 39.18 GB | 41.68 GB | New k-quant method. Uses GGML_TYPE_Q4_K for all tensors |
| wizardmath-70b-v1.0.ggmlv3.q4_K_M.bin | q4_K_M | 4 | 41.69 GB | 44.19 GB | New k-quant method. Uses GGML_TYPE_Q6_K for half of the attention.wv and feed_forward.w2 tensors, else GGML_TYPE_Q4_K |
| wizardmath-70b-v1.0.ggmlv3.q4_1.bin | q4_1 | 4 | 43.12 GB | 45.62 GB | Original quant method, 4-bit. Higher accuracy than q4_0 but not as high as q5_0. However, it has quicker inference than q5 models. |
| wizardmath-70b-v1.0.ggmlv3.q5_0.bin | q5_0 | 5 | 47.43 GB | 49.93 GB | Original quant method, 5-bit. Higher accuracy, higher resource usage and slower inference. |
| wizardmath-70b-v1.0.ggmlv3.q5_K_S.bin | q5_K_S | 5 | 47.74 GB | 50.24 GB | New k-quant method. Uses GGML_TYPE_Q5_K for all tensors |
| wizardmath-70b-v1.0.ggmlv3.q5_K_M.bin | q5_K_M | 5 | 49.03 GB | 51.53 GB | New k-quant method. Uses GGML_TYPE_Q6_K for half of the attention.wv and feed_forward.w2 tensors, else GGML_TYPE_Q5_K |

Note: the above RAM figures assume no GPU offloading. If layers are offloaded to the GPU, this will reduce RAM usage and use VRAM instead.
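Every "Max RAM required" figure in the Provided files table is the file size plus 2.50 GB. The sketch below uses that observed offset to pick the largest listed file that fits a given RAM budget with no GPU offloading. The function names and the notion of a "RAM budget" are illustrative assumptions, not part of any tool.

```python
# File sizes in GB, copied from the "Provided files" table.
FILE_SIZES_GB = {
    "q2_K": 28.96,
    "q3_K_S": 30.09,
    "q3_K_M": 33.39,
    "q3_K_L": 36.49,
    "q4_0": 38.80,
    "q4_K_S": 39.18,
    "q4_K_M": 41.69,
    "q4_1": 43.12,
    "q5_0": 47.43,
    "q5_K_S": 47.74,
    "q5_K_M": 49.03,
}

# Observed in the table: every "Max RAM required" value equals size + 2.50 GB.
RAM_OVERHEAD_GB = 2.50


def max_ram_gb(size_gb):
    """Estimated peak RAM with no GPU offloading, rounded like the table."""
    return round(size_gb + RAM_OVERHEAD_GB, 2)


def largest_fitting_quant(ram_budget_gb):
    """Return the quant with the biggest file that fits the budget, or None."""
    fitting = [q for q, size in FILE_SIZES_GB.items()
               if max_ram_gb(size) <= ram_budget_gb]
    return max(fitting, key=FILE_SIZES_GB.get, default=None)


print(max_ram_gb(FILE_SIZES_GB["q4_K_M"]))
print(largest_fitting_quant(48))
print(largest_fitting_quant(32))
```

Offloading layers to the GPU moves part of this requirement from RAM to VRAM, so treat the result as a CPU-only upper bound.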

How to run in llama.cpp

Make sure you are using llama.cpp from commit dadbed99e65252d79f81101a392d0d6497b86caa or earlier.

For compatibility with the latest llama.cpp, please use GGUF files instead.

I use the following command line; adjust for your tastes and needs:

```shell
./main -t 10 -ngl 40 -gqa 8 -m wizardmath-70b-v1.0.ggmlv3.q4_K_M.bin --color -c 4096 --temp 0.7 --repeat_penalty 1.1 -n -1 -p "Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{prompt}\n\n### Response: Let's think step by step."
```

Change -t 10 to the number of physical CPU cores you have. For example, if your system has 8 cores/16 threads, use -t 8. If you are fully offloading the model to GPU, use -t 1.

Change -ngl 40 to the number of GPU layers you have VRAM for. Use -ngl 100 to offload all layers to VRAM if you have a 48 GB card, 2 x 24 GB cards, or similar. Otherwise, you can partially offload as many layers as you have VRAM for, on one or more GPUs.

If you want to have a chat-style conversation, replace the -p <PROMPT> argument with -i -ins.

Remember the -gqa 8 argument, which is required for Llama 2 70B models.
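As a rough way to choose -ngl, the sketch below assumes (an approximation, not something llama.cpp computes) that the file size is split evenly across the 80 transformer layers of Llama 2 70B, and keeps a hypothetical reserve of VRAM free for the context and scratch buffers. It then assembles the same arguments as the ./main command line above into a list suitable for Python's `subprocess.run`. The function names and the 2 GB default reserve are illustrative assumptions.

```python
import math

# Llama 2 70B has 80 transformer layers.
N_LAYERS = 80


def estimate_gpu_layers(file_size_gb, vram_gb, reserve_gb=2.0):
    """Rough -ngl value, assuming every layer takes an equal share of the file.

    reserve_gb is a hypothetical allowance for the KV cache and scratch buffers.
    """
    per_layer_gb = file_size_gb / N_LAYERS
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(N_LAYERS, math.floor(usable_gb / per_layer_gb))


def build_llama_args(model_path, prompt, threads, gpu_layers, ctx=4096):
    """Argument list mirroring the llama.cpp ./main command line."""
    return [
        "./main",
        "-t", str(threads),          # physical CPU cores
        "-ngl", str(gpu_layers),     # layers offloaded to VRAM
        "-gqa", "8",                 # required for Llama 2 70B GGML files
        "-m", model_path,
        "--color",
        "-c", str(ctx),
        "--temp", "0.7",
        "--repeat_penalty", "1.1",
        "-n", "-1",
        "-p", prompt,
    ]


# Example: q4_K_M (41.69 GB) on a single 24 GB card, 8-core CPU.
layers = estimate_gpu_layers(41.69, vram_gb=24)
args = build_llama_args("wizardmath-70b-v1.0.ggmlv3.q4_K_M.bin",
                        "{prompt}", threads=8, gpu_layers=layers)
print(layers)
print(" ".join(args[:7]))
```

Because actual per-layer sizes vary with the quant mix, start from the estimate and lower -ngl if llama.cpp runs out of VRAM.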

Change -c 4096 to the desired sequence length for this model. For models that use RoPE, add --rope-freq-base 10000 --rope-freq-scale 0.5 for doubled context, or --rope-freq-base 10000 --rope-freq-scale 0.25 for 4x context.
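The --rope-freq-scale values above follow from linear RoPE scaling (position interpolation), where the scale is the model's native context divided by the target context. A minimal sketch, assuming Llama 2's native context of 4096 tokens as in the -c 4096 example; the helper names are hypothetical.

```python
from fractions import Fraction

# Llama 2 was trained with a 4096-token context.
NATIVE_CTX = 4096


def rope_freq_scale(target_ctx, native_ctx=NATIVE_CTX):
    """Linear RoPE scale factor for stretching native_ctx to target_ctx."""
    if target_ctx <= 0:
        raise ValueError("target_ctx must be positive")
    return Fraction(native_ctx, target_ctx)


def rope_args(target_ctx):
    """llama.cpp flags for an extended context, with the base left at 10000."""
    scale = rope_freq_scale(target_ctx)
    return ["-c", str(target_ctx),
            "--rope-freq-base", "10000",
            "--rope-freq-scale", str(float(scale))]


print(rope_args(8192))   # doubled context
print(rope_args(16384))  # 4x context
```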

For other parameters and how to use them, please refer to the llama.cpp documentation

How to run in text-generation-webui

Further instructions here: text-generation-webui/docs/llama.cpp-models.md.

Discord

For further support, and discussions on these models and AI in general, join us at:

Thanks, and how to contribute.

Thanks to the chirper.ai team!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine-tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donors will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

- Patreon: https://patreon.com/TheBlokeAI

- Ko-Fi: https://ko-fi.com/TheBlokeAI

Special thanks to: Aemon Algiz.

Patreon special mentions: Russ Johnson, J, alfie_i, Alex, NimbleBox.ai, Chadd, Mandus, Nikolai Manek, Ken Nordquist, ya boyyy, Illia Dulskyi, Viktor Bowallius, vamX, Iucharbius, zynix, Magnesian, Clay Pascal, Pierre Kircher, Enrico Ros, Tony Hughes, Elle, Andrey, knownsqashed, Deep Realms, Jerry Meng, Lone Striker, Derek Yates, Pyrater, Mesiah Bishop, James Bentley, Femi Adebogun, Brandon Frisco, SuperWojo, Alps Aficionado, Michael Dempsey, Vitor Caleffi, Will Dee, Edmond Seymore, usrbinkat, LangChain4j, Kacper Wikieł, Luke Pendergrass, John Detwiler, theTransient, Nathan LeClaire, Tiffany J. Kim, biorpg, Eugene Pentland, Stanislav Ovsiannikov, Fred von Graf, terasurfer, Kalila, Dan Guido, Nitin Borwankar, 阿明, Ai Maven, John Villwock, Gabriel Puliatti, Stephen Murray, Asp the Wyvern, danny, Chris Smitley, ReadyPlayerEmma, S_X, Daniel P. Andersen, Olakabola, Jeffrey Morgan, Imad Khwaja, Caitlyn Gatomon, webtim, Alicia Loh, Trenton Dambrowitz, Swaroop Kallakuri, Erik Bjäreholt, Leonard Tan, Spiking Neurons AB, Luke @flexchar, Ajan Kanaga, Thomas Belote, Deo Leter, RoA, Willem Michiel, transmissions 11, subjectnull, Matthew Berman, Joseph William Delisle, David Ziegler, Michael Davis, Johann-Peter Hartmann, Talal Aujan, senxiiz, Artur Olbinski, Rainer Wilmers, Spencer Kim, Fen Risland, Cap'n Zoog, Rishabh Srivastava, Michael Levine, Geoffrey Montalvo, Sean Connelly, Alexandros Triantafyllidis, Pieter, Gabriel Tamborski, Sam, Subspace Studios, Junyu Yang, Pedro Madruga, Vadim, Cory Kujawski, K, Raven Klaugh, Randy H, Mano Prime, Sebastain Graf, Space Cruiser

Thank you to all my generous patrons and donors!

And thank you again to a16z for their generous grant.

Original model card: WizardLM's WizardMath 70B V1.0

WizardMath: Empowering Mathematical Reasoning for Large Language Models via Reinforced Evol-Instruct (RLEIF)

🤗 HF Repo • 🐱 GitHub Repo • 🐦 Twitter • 📃 [WizardLM] • 📃 [WizardCoder] • 📃 [WizardMath]

👋 Join our Discord

| Model | Checkpoint | Paper | HumanEval | MBPP | Demo | License |
|---|---|---|---|---|---|---|
| WizardCoder-Python-34B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 73.2 | 61.2 | Demo | Llama2 |
| WizardCoder-15B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 59.8 | 50.6 | -- | OpenRAIL-M |
| WizardCoder-Python-13B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 64.0 | 55.6 | -- | Llama2 |
| WizardCoder-Python-7B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 55.5 | 51.6 | Demo | Llama2 |
| WizardCoder-3B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 34.8 | 37.4 | -- | OpenRAIL-M |
| WizardCoder-1B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 23.8 | 28.6 | -- | OpenRAIL-M |

| Model | Checkpoint | Paper | GSM8k | MATH | Online Demo | License |
|---|---|---|---|---|---|---|
| WizardMath-70B-V1.0 | 🤗 HF Link | 📃 [WizardMath] | 81.6 | 22.7 | Demo | Llama 2 |
| WizardMath-13B-V1.0 | 🤗 HF Link | 📃 [WizardMath] | 63.9 | 14.0 | Demo | Llama 2 |
| WizardMath-7B-V1.0 | 🤗 HF Link | 📃 [WizardMath] | 54.9 | 10.7 | Demo | Llama 2 |

| Model | Checkpoint | Paper | MT-Bench | AlpacaEval | GSM8k | HumanEval | License |
|---|---|---|---|---|---|---|---|
| WizardLM-70B-V1.0 | 🤗 HF Link | 📃 Coming Soon | 7.78 | 92.91% | 77.6% | 50.6 pass@1 | Llama 2 License |
| WizardLM-13B-V1.2 | 🤗 HF Link | | 7.06 | 89.17% | 55.3% | 36.6 pass@1 | Llama 2 License |
| WizardLM-13B-V1.1 | 🤗 HF Link | | 6.76 | 86.32% | | 25.0 pass@1 | Non-commercial |
| WizardLM-30B-V1.0 | 🤗 HF Link | | 7.01 | | | 37.8 pass@1 | Non-commercial |
| WizardLM-13B-V1.0 | 🤗 HF Link | | 6.35 | 75.31% | | 24.0 pass@1 | Non-commercial |
| WizardLM-7B-V1.0 | 🤗 HF Link | 📃 [WizardLM] | | | | 19.1 pass@1 | Non-commercial |

Github Repo: https://github.com/nlpxucan/WizardLM/tree/main/WizardMath

Twitter: https://twitter.com/WizardLM_AI/status/1689998428200112128

Discord: https://discord.gg/VZjjHtWrKs

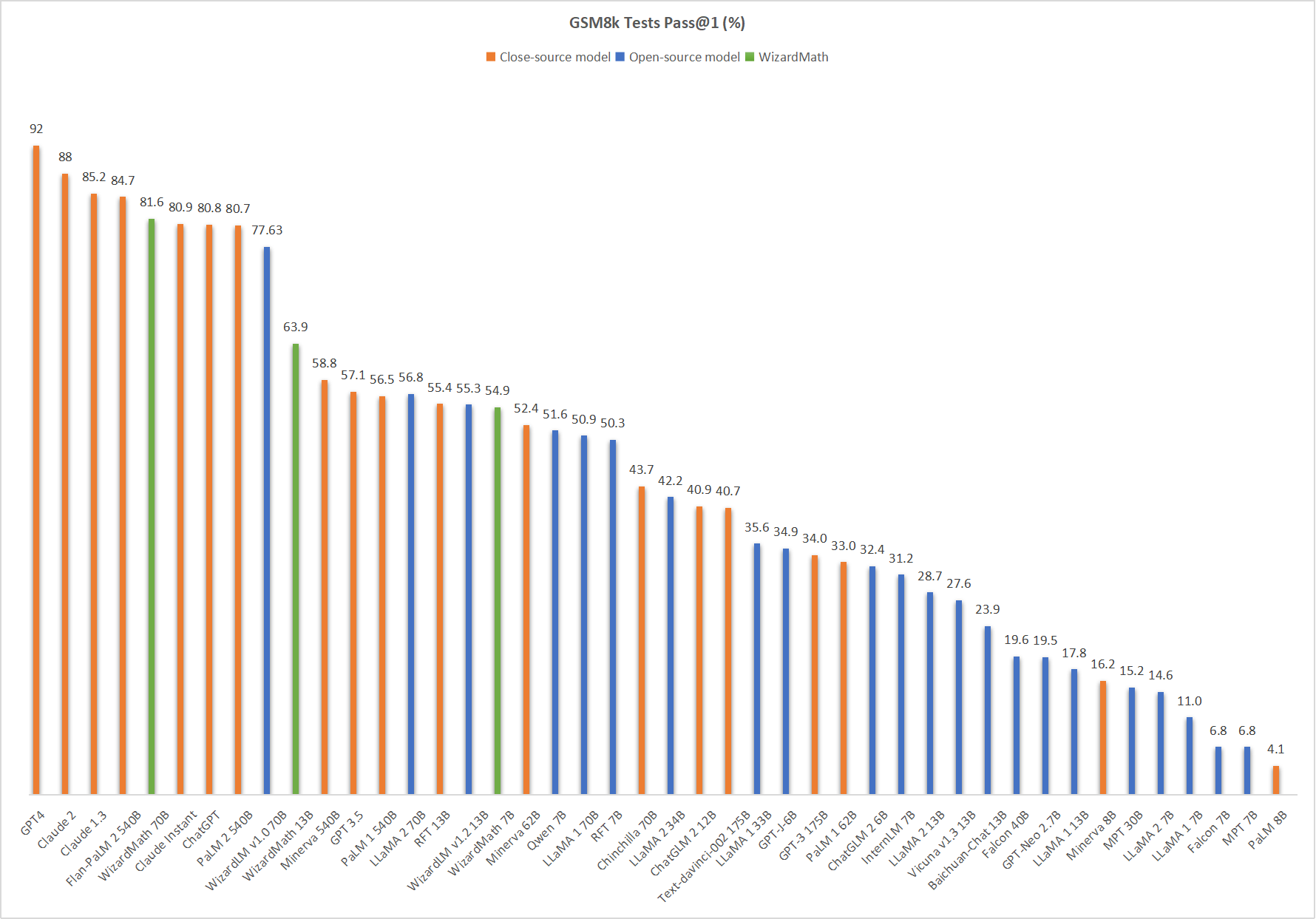

Comparing WizardMath-V1.0 with Other LLMs.

🔥 The following figure shows that our WizardMath-70B-V1.0 attains the fifth position on the GSM8k benchmark, surpassing ChatGPT (81.6 vs. 80.8), Claude Instant (81.6 vs. 80.9), and PaLM 2 540B (81.6 vs. 80.7).

❗Note on system prompt usage:

Please use exactly the same system prompts as we do. We do not guarantee the accuracy of the quantized versions.

Default version:

"Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{instruction}\n\n### Response:"

CoT Version: (❗For simple math questions, we do NOT recommend using the CoT prompt.)

"Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{instruction}\n\n### Response: Let's think step by step."
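A minimal sketch for filling in either prompt exactly as written above; the function name and the `cot` flag are illustrative. It defaults to the plain prompt, in line with the authors' advice against CoT for simple math questions, and appends the CoT trigger only when asked.

```python
DEFAULT_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request."
    "\n\n### Instruction:\n{instruction}\n\n### Response:"
)

# The CoT version differs only by this phrase after "### Response:".
COT_SUFFIX = " Let's think step by step."


def build_prompt(instruction, cot=False):
    """Fill the WizardMath prompt; cot=True selects the CoT version."""
    prompt = DEFAULT_TEMPLATE.format(instruction=instruction)
    return prompt + COT_SUFFIX if cot else prompt


print(build_prompt("What is 12 * 7?"))
print(build_prompt("What is 12 * 7?", cot=True).splitlines()[-1])
```

Because `str.format` does not re-parse the substituted value, instructions that contain braces are inserted verbatim.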

Inference WizardMath Demo Script

We provide the WizardMath inference demo code here.

❗Regarding common concerns about the dataset:

Recently, there have been clear changes in the open-source policy and regulations for our organization's code, data, and models. Despite this, we have worked hard to obtain approval to release the model weights first, but the data requires stricter auditing and is under review by our legal team. Our researchers have no authority to release it publicly without authorization. Thank you for your understanding.

Citation

Please cite the repo if you use the data, method or code in this repo.

```
@article{luo2023wizardmath,
  title={WizardMath: Empowering Mathematical Reasoning for Large Language Models via Reinforced Evol-Instruct},
  author={Luo, Haipeng and Sun, Qingfeng and Xu, Can and Zhao, Pu and Lou, Jianguang and Tao, Chongyang and Geng, Xiubo and Lin, Qingwei and Chen, Shifeng and Zhang, Dongmei},
  journal={arXiv preprint arXiv:2308.09583},
  year={2023}
}
```
