Upload folder using huggingface_hub (#4)

- 74d0a8a161eede15a99bdb56234959185a03875d57e019013306fa87a0f8e1dd (39141bb0a18c4328b1ea18d3c8af431393046baa)

- config.json +1 -1

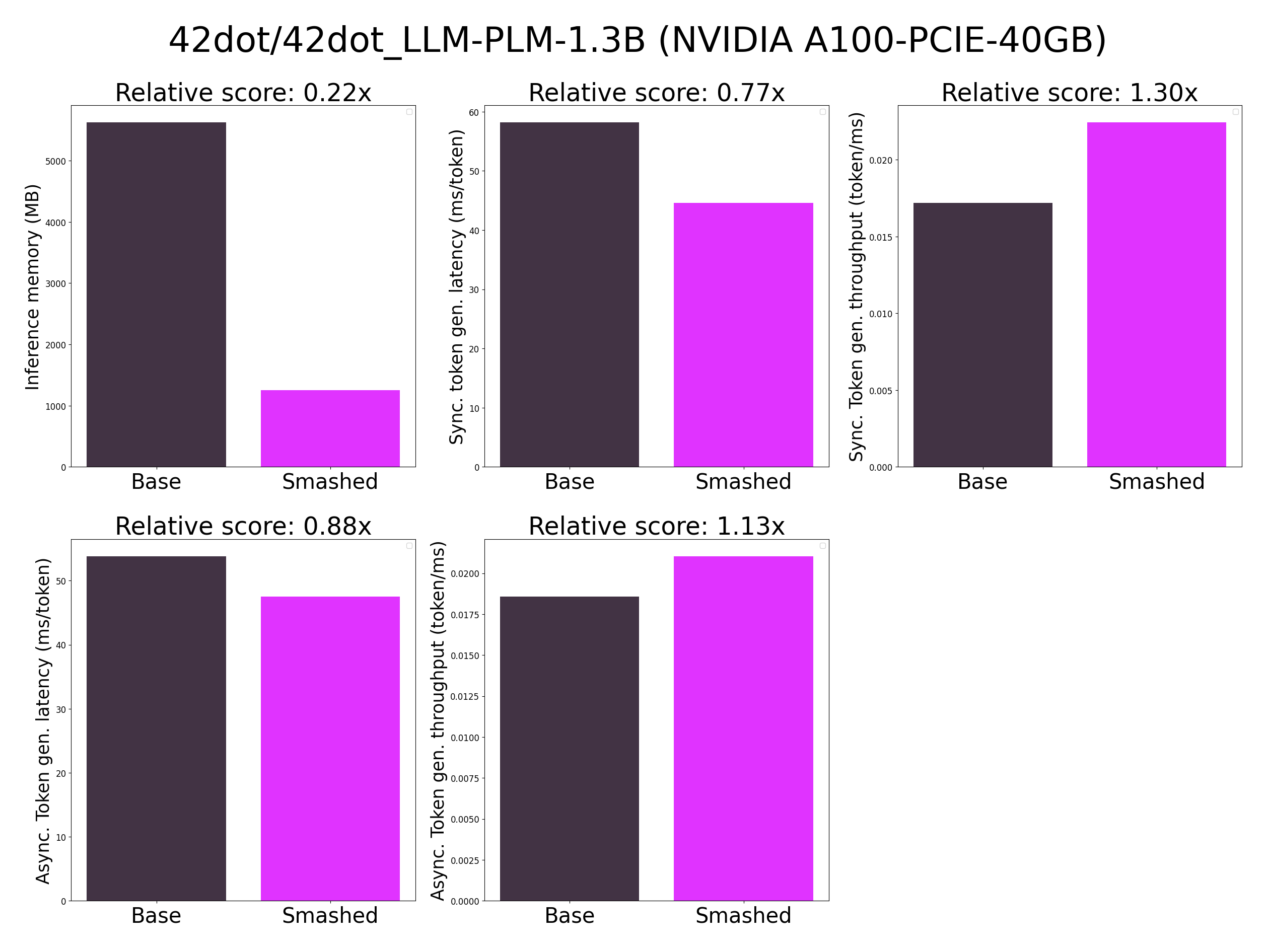

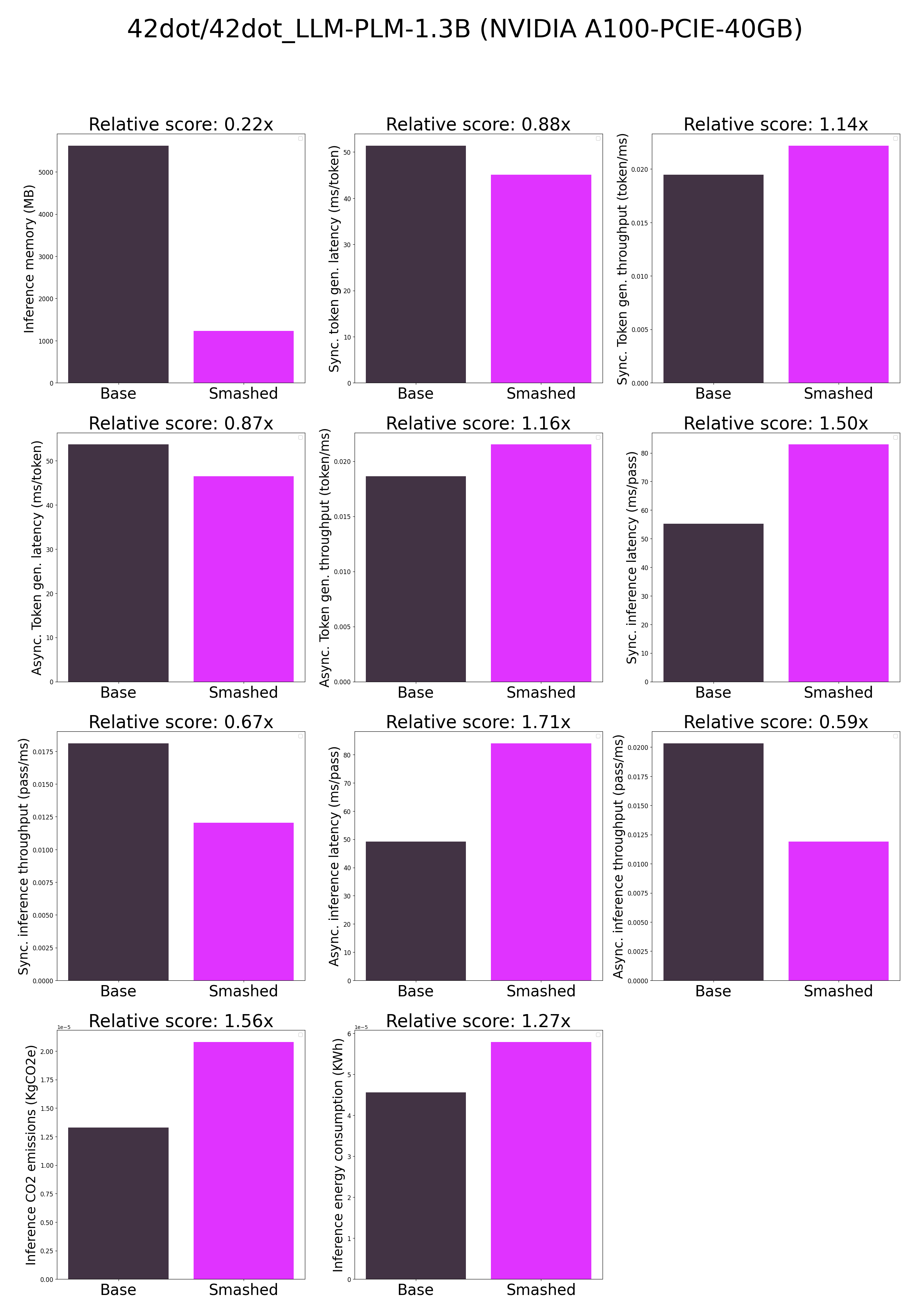

- plots.png +0 -0

- smash_config.json +1 -1

config.json CHANGED

    @@ -1,5 +1,5 @@
     {
    -  "_name_or_path": "/tmp/
    +  "_name_or_path": "/tmp/tmpahl9ahxq",
       "architectures": [
         "LlamaForCausalLM"
       ],

plots.png CHANGED

smash_config.json CHANGED

    @@ -8,7 +8,7 @@
       "compilers": "[]",
       "task": "text_text_generation",
       "device": "cuda",
    -  "cache_dir": "/ceph/hdd/staff/charpent/.cache/
    +  "cache_dir": "/ceph/hdd/staff/charpent/.cache/modelsq_fbgga3",
       "batch_size": 1,
       "model_name": "42dot/42dot_LLM-PLM-1.3B",
       "pruning_ratio": 0.0,
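Both JSON hunks in this commit touch a single path-valued key (`_name_or_path` in config.json, `cache_dir` in smash_config.json). A minimal sketch of how a reviewer might confirm that locally, assuming the two versions of a config are already loaded as dicts. The helper name `changed_keys` and the `<previous cache dir>` placeholder are hypothetical: the removed value is truncated in the diff, so it is not reproduced here.

```python
# Sentinel so that a key missing on one side counts as a difference,
# even when the other side holds None.
_MISSING = object()


def changed_keys(old: dict, new: dict) -> list:
    """Return the sorted top-level keys whose values differ between two configs."""
    keys = set(old) | set(new)
    return sorted(k for k in keys if old.get(k, _MISSING) != new.get(k, _MISSING))


# Keys visible in the smash_config.json hunk. The old cache_dir is a
# placeholder (assumption), not the real previous value.
old_cfg = {
    "compilers": "[]",
    "task": "text_text_generation",
    "device": "cuda",
    "cache_dir": "<previous cache dir>",
    "batch_size": 1,
    "model_name": "42dot/42dot_LLM-PLM-1.3B",
    "pruning_ratio": 0.0,
}
new_cfg = dict(old_cfg, cache_dir="/ceph/hdd/staff/charpent/.cache/modelsq_fbgga3")

print(changed_keys(old_cfg, new_cfg))  # ['cache_dir']
```

The comparison is deliberately shallow (top-level keys only), which matches the flat fields shown in these hunks; nested configs would need a recursive variant.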