---
thumbnail: >-
  https://huggingface.co/OFA-Sys/small-stable-diffusion-v0/resolve/main/sample_images_compressed.jpg
datasets:
- ChristophSchuhmann/improved_aesthetics_6plus
license: openrail
tags:
- stable-diffusion
- stable-diffusion-diffusers
- text-to-image
language:
- en
pipeline_tag: text-to-image
---

# Small Stable Diffusion Model Card

【Update 2023/02/07】 We recently released [a diffusion deployment repo](https://github.com/OFA-Sys/diffusion-deploy) to speed up inference on both GPU (\~4x speedup, based on TensorRT) and CPU (\~12x speedup, based on Intel OpenVINO).

Integrated with this repo, small-stable-diffusion can generate images in just **5 seconds on the CPU**\*.

*\* Tested on an Intel(R) Xeon(R) Platinum 8369B CPU with DPMSolverMultistepScheduler at 10 steps, with channel/height/width fixed when converting to ONNX.*

Similar image generation quality, but is nearly 1/2 smaller!

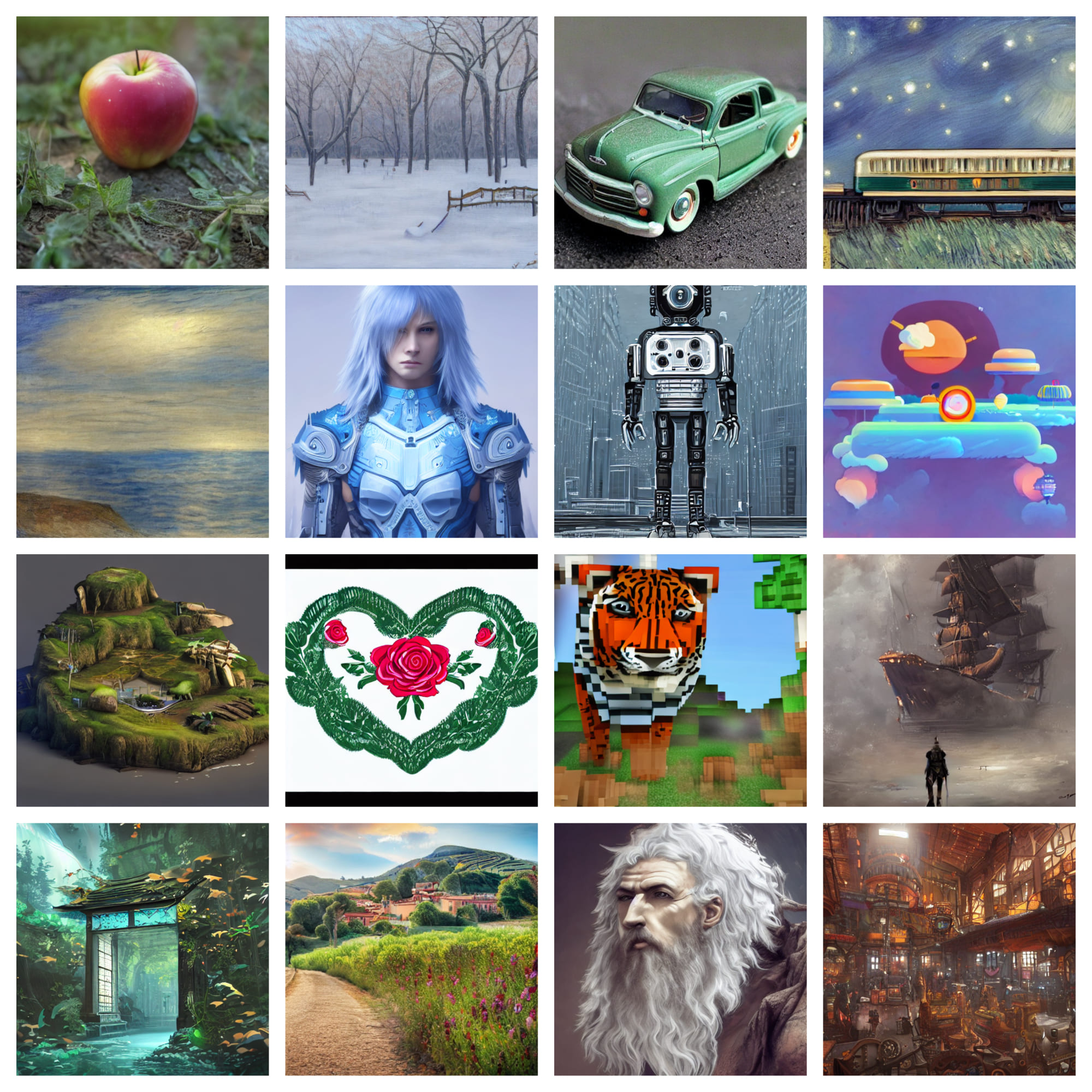

Here are some samples:

# Gradio

We support a [Gradio](https://github.com/gradio-app/gradio) Web UI to run small-stable-diffusion-v0:

[small-stable-diffusion-v0 Space](https://huggingface.co/spaces/akhaliq/small-stable-diffusion-v0)

We also provide a space demo for [`small-stable-diffusion-v0 + diffusion-deploy`](https://huggingface.co/spaces/OFA-Sys/FAST-CPU-small-stable-diffusion-v0).

*Because Hugging Face provides AMD CPUs for the Space demo, it takes about 35 seconds to generate an image with 15 steps. This is much slower than in the Intel CPU environment, since diffusion-deploy is based on Intel's OpenVINO.*

## Example

*Requires `diffusers` >= 0.8.0; lower versions are not supported.*

```python
import torch
from diffusers import StableDiffusionPipeline

# Hub repo id (no trailing slash)
model_id = "OFA-Sys/small-stable-diffusion-v0"

# Load the pipeline in half precision and move it to the GPU
pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)
pipe = pipe.to("cuda")

prompt = "an apple, 4k"
image = pipe(prompt).images[0]
image.save("apple.png")
```
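The CPU timing quoted in the update note was measured with `DPMSolverMultistepScheduler` at 10 steps. As a minimal sketch (not the diffusion-deploy pipeline itself), the scheduler can be swapped in plain `diffusers` roughly as shown here. The helper name `generate`, the constants, and the default `"cpu"` device are illustrative assumptions. Plain PyTorch on CPU will likely be much slower than the OpenVINO-based figure.

```python
MODEL_ID = "OFA-Sys/small-stable-diffusion-v0"
NUM_STEPS = 10  # step count quoted in the CPU benchmark note


def generate(prompt: str, steps: int = NUM_STEPS, device: str = "cpu"):
    """Generate one image with the DPM-Solver multistep scheduler (illustrative helper)."""
    # Imported lazily so this module loads even without the heavy dependencies.
    from diffusers import DPMSolverMultistepScheduler, StableDiffusionPipeline

    pipe = StableDiffusionPipeline.from_pretrained(MODEL_ID)
    # Build the new scheduler from the existing scheduler's config
    # so the noise schedule settings carry over.
    pipe.scheduler = DPMSolverMultistepScheduler.from_config(pipe.scheduler.config)
    pipe = pipe.to(device)
    return pipe(prompt, num_inference_steps=steps).images[0]
```

Calling `generate("an apple, 4k").save("apple_dpm.png")` would download the weights on first use and write the resulting image to disk.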

# Training

### Initialization

This model is initialized from stable-diffusion v1-4. Because its structure differs from that of Stable Diffusion and it has fewer parameters, the Stable Diffusion parameters cannot be used directly. Instead, small stable diffusion sets `layers_per_block=1` and selects the first layer of each block in the original Stable Diffusion to initialize the small model.
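As a rough illustration of this initialization, the sketch below filters a teacher state dict down to the first layer of each down/up block. It assumes `diffusers`-style parameter names such as `down_blocks.0.resnets.1.conv1.weight`. The helper name `select_first_layers` and the toy state dict are hypothetical, and the authors' actual script may differ.

```python
import re

# Matches per-layer parameters inside down/up blocks, e.g.
# "down_blocks.0.resnets.1.conv1.weight" -> layer index 1.
LAYER_KEY = re.compile(r"^(down_blocks|up_blocks)\.\d+\.(resnets|attentions)\.(\d+)\.")


def select_first_layers(teacher_state: dict, layers_per_block: int = 1) -> dict:
    """Keep only the first `layers_per_block` layers of each down/up block.

    Parameters that are not per-layer block parameters are copied unchanged.
    """
    student_state = {}
    for name, value in teacher_state.items():
        match = LAYER_KEY.match(name)
        if match is None or int(match.group(3)) < layers_per_block:
            student_state[name] = value
    return student_state


# Toy stand-in for a real state dict; values would normally be tensors.
teacher = {
    "conv_in.weight": "w0",
    "down_blocks.0.resnets.0.conv1.weight": "w1",
    "down_blocks.0.resnets.1.conv1.weight": "w2",
    "down_blocks.0.attentions.0.proj_in.weight": "w3",
    "down_blocks.0.attentions.1.proj_in.weight": "w4",
    "down_blocks.0.downsamplers.0.conv.weight": "w5",
    "mid_block.resnets.0.conv1.weight": "w6",
}
student = select_first_layers(teacher)
print(sorted(student))
```

In a real model, tensor shapes may still need to be checked before loading, since removing layers can change which tensors line up between teacher and student.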

### Training Procedure

After initialization, the model was trained for 1100k steps on 8 x A100 GPUs. The training process consists of three stages. The first stage is a simple pre-training procedure. In the last two stages, the original Stable Diffusion serves as a teacher model and its knowledge is distilled into the small model. In all stages, only the UNet parameters were trained; all other parameters were frozen.

- **Hardware:** 8 x A100-80GB GPUs
- **Optimizer:** AdamW
- **Stage 1** - Pretrain the UNet part of the model.
  - **Steps:** 500,000
  - **Batch:** batch size=8, GPUs=8, gradient accumulations=2. Total batch size=128
  - **Learning rate:** warmup to 1e-5 for 10,000 steps and then kept constant
- **Stage 2** - Distill the model using stable-diffusion v1-4 as the teacher. Besides the ground truth, training in this stage also uses the soft label (`pred_noise`) generated by the teacher model.
  - **Steps:** 400,000
  - **Batch:** batch size=8, GPUs=8, gradient accumulations=2. Total batch size=128
  - **Learning rate:** warmup to 1e-5 for 5,000 steps and then kept constant
  - **Soft label weight:** 0.5
  - **Hard label weight:** 0.5
- **Stage 3** - Distill the model using stable-diffusion v1-5 as the teacher. This stage uses several techniques from `Knowledge Distillation of Transformer-based Language Models Revisited`, including similarity-based layer matching in addition to the soft label.
  - **Steps:** 200,000
  - **Batch:** batch size=8, GPUs=8, gradient accumulations=2. Total batch size=128
  - **Learning rate:** warmup to 1e-5 for 5,000 steps and then kept constant
  - **Soft label weight:** 0.5
  - **Hard label weight:** 0.5
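The stage settings listed above can be sketched as a small configuration object. This is only an illustration under stated assumptions: the warmup is taken to be linear, the loss is taken to be a mean squared error on predicted noise, and Stage 1 is modeled with a hard-label weight of 1.0 and no teacher term. None of these details are confirmed by the authors, and the names `StageConfig`, `mse`, and `distill_loss` are hypothetical. The similarity-based layer matching of Stage 3 is not modeled.

```python
from dataclasses import dataclass


@dataclass
class StageConfig:
    steps: int
    warmup_steps: int
    peak_lr: float = 1e-5
    per_gpu_batch: int = 8
    gpus: int = 8
    grad_accum: int = 2
    soft_weight: float = 0.0  # weight on the teacher's predicted noise
    hard_weight: float = 1.0  # weight on the ground-truth noise

    @property
    def total_batch(self) -> int:
        return self.per_gpu_batch * self.gpus * self.grad_accum

    def lr_at(self, step: int) -> float:
        """Linear warmup (an assumption) to peak_lr, then constant."""
        if step < self.warmup_steps:
            return self.peak_lr * (step + 1) / self.warmup_steps
        return self.peak_lr


def mse(pred, target):
    """Mean squared error over two equal-length sequences of floats."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def distill_loss(cfg, student_pred, true_noise, teacher_pred):
    """Weighted sum of the hard-label and soft-label terms (MSE is an assumption)."""
    hard = mse(student_pred, true_noise)
    soft = mse(student_pred, teacher_pred)
    return cfg.hard_weight * hard + cfg.soft_weight * soft


STAGES = [
    StageConfig(steps=500_000, warmup_steps=10_000),
    StageConfig(steps=400_000, warmup_steps=5_000, soft_weight=0.5, hard_weight=0.5),
    StageConfig(steps=200_000, warmup_steps=5_000, soft_weight=0.5, hard_weight=0.5),
]

print(sum(stage.steps for stage in STAGES))  # 1100000, the "1100k steps" total
print(STAGES[0].total_batch)                 # 128 = 8 * 8 * 2
```

In real training the predictions would be tensors rather than Python lists; plain floats are used here only to keep the sketch dependency-free.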

### Training Data

The model developers used the following datasets for training the model:

1. [LAION-2B en aesthetic](https://huggingface.co/datasets/laion/laion2B-en-aesthetic)

2. [LAION-Art](https://huggingface.co/datasets/laion/laion-art)

3. [LAION-HD](https://huggingface.co/datasets/laion/laion-high-resolution)

### Citation

```bibtex
@article{Lu2022KnowledgeDO,
  title={Knowledge Distillation of Transformer-based Language Models Revisited},
  author={Chengqiang Lu and Jianwei Zhang and Yunfei Chu and Zhengyu Chen and Jingren Zhou and Fei Wu and Haiqing Chen and Hongxia Yang},
  journal={ArXiv},
  year={2022},
  volume={abs/2206.14366}
}
```

# Uses

_The following section is adapted from the [Stable Diffusion model card](https://huggingface.co/CompVis/stable-diffusion-v1-4)_

## Direct Use

The model is intended for research purposes only. Possible research areas and tasks include:

- Safe deployment of models which have the potential to generate harmful content.

- Probing and understanding the limitations and biases of generative models.

- Generation of artworks and use in design and other artistic processes.

- Applications in educational or creative tools.

- Research on generative models.

Excluded uses are described below.

### Misuse, Malicious Use, and Out-of-Scope Use

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes.

#### Out-of-Scope Use

The model was not trained to be factual or true representations of people or events, and therefore using the model to generate such content is out-of-scope for the abilities of this model.

#### Misuse and Malicious Use

Using the model to generate content that is cruel to individuals is a misuse of this model. This includes, but is not limited to:

- Generating demeaning, dehumanizing, or otherwise harmful representations of people or their environments, cultures, religions, etc.

- Intentionally promoting or propagating discriminatory content or harmful stereotypes.

- Impersonating individuals without their consent.

- Sexual content without consent of the people who might see it.

- Mis- and disinformation

- Representations of egregious violence and gore

- Sharing of copyrighted or licensed material in violation of its terms of use.

- Sharing content that is an alteration of copyrighted or licensed material in violation of its terms of use.

## Limitations and Bias

### Limitations

- The model does not achieve perfect photorealism

- The model cannot render legible text

- The model does not perform well on more difficult tasks which involve compositionality, such as rendering an image corresponding to “A red cube on top of a blue sphere”

- Faces and people in general may not be generated properly.

- The model was trained mainly with English captions and will not work as well in other languages.

- The autoencoding part of the model is lossy

- The model was trained on a large-scale dataset [LAION-5B](https://laion.ai/blog/laion-5b/), which contains adult material and is not fit for product use without additional safety mechanisms and considerations.

- No additional measures were used to deduplicate the dataset. As a result, we observe some degree of memorization for images that are duplicated in the training data.

The training data can be searched at [https://rom1504.github.io/clip-retrieval/](https://rom1504.github.io/clip-retrieval/) to possibly assist in the detection of memorized images.

### Bias

While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases.

Stable Diffusion v1 was trained on subsets of [LAION-2B(en)](https://laion.ai/blog/laion-5b/), which consists of images that are primarily limited to English descriptions. Texts and images from communities and cultures that use other languages are likely to be insufficiently accounted for. This affects the overall output of the model, as white and western cultures are often set as the default. Further, the ability of the model to generate content with non-English prompts is significantly worse than with English-language prompts.

### Safety Module

The intended use of this model is with the [Safety Checker](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/safety_checker.py) in Diffusers.

This checker works by checking model outputs against known hard-coded NSFW concepts.

The concepts are intentionally hidden to reduce the likelihood of reverse-engineering this filter.

Specifically, the checker compares the class probability of harmful concepts in the embedding space of the `CLIPModel` *after generation* of the images.

The concepts are passed into the model with the generated image and compared to a hand-engineered weight for each NSFW concept.
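The comparison described above can be sketched roughly as follows. This is a simplified illustration, not the Diffusers implementation: the embeddings, concept names, and thresholds are made-up placeholder values, and the helper names `cosine_similarity` and `flagged_concepts` are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def flagged_concepts(image_embed, concept_embeds, thresholds):
    """Return the concepts whose similarity to the image exceeds their threshold."""
    return [
        name
        for name, embed in concept_embeds.items()
        if cosine_similarity(image_embed, embed) > thresholds[name]
    ]


# Placeholder 3-d vectors; real CLIP embeddings have far more dimensions.
concepts = {"concept_a": [1.0, 0.0, 0.0], "concept_b": [0.0, 1.0, 0.0]}
thresholds = {"concept_a": 0.8, "concept_b": 0.8}  # stand-ins for hand-engineered weights
image = [0.9, 0.1, 0.0]

print(flagged_concepts(image, concepts, thresholds))  # ['concept_a']
```

An image would be treated as unsafe in this sketch if the returned list is non-empty.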

*This model card was written by: Justin Pinkney and is based on the [Stable Diffusion model card](https://huggingface.co/CompVis/stable-diffusion-v1-4).*