Upload folder using huggingface_hub

#1

by

Kardbord

- opened

- .gitattributes +1 -0

- README.md +41 -0

- feature_extractor/preprocessor_config.json +28 -0

- imgs/batchgrid.jpg +3 -0

- imgs/page1.jpg +0 -0

- imgs/page2.jpg +0 -0

- model_index.json +1 -0

- parameters_for_samples.txt +80 -0

- portrait+1.0.ckpt +3 -0

- portrait+1.0.safetensors +3 -0

- safety_checker/config.json +181 -0

- safety_checker/model.safetensors +3 -0

- safety_checker/pytorch_model.bin +3 -0

- scheduler/scheduler_config.json +14 -0

- text_encoder/config.json +25 -0

- text_encoder/model.safetensors +3 -0

- text_encoder/pytorch_model.bin +3 -0

- tokenizer/merges.txt +0 -0

- tokenizer/special_tokens_map.json +24 -0

- tokenizer/tokenizer_config.json +34 -0

- tokenizer/vocab.json +0 -0

- unet/config.json +41 -0

- unet/diffusion_pytorch_model.bin +3 -0

- unet/diffusion_pytorch_model.safetensors +3 -0

- vae/config.json +30 -0

- vae/diffusion_pytorch_model.bin +3 -0

- vae/diffusion_pytorch_model.safetensors +3 -0

.gitattributes

CHANGED

|

@@ -32,3 +32,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 32 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 33 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

| 32 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 33 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

imgs/batchgrid.jpg filter=lfs diff=lfs merge=lfs -text

|

README.md

ADDED

|

@@ -0,0 +1,41 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

language:

|

| 3 |

+

- en

|

| 4 |

+

license: creativeml-openrail-m

|

| 5 |

+

tags:

|

| 6 |

+

- stable-diffusion

|

| 7 |

+

- stable-diffusion-diffusers

|

| 8 |

+

- text-to-image

|

| 9 |

+

- safetensors

|

| 10 |

+

- diffusers

|

| 11 |

+

thumbnail: https://huggingface.co/wavymulder/portraitplus/resolve/main/imgs/page1.jpg

|

| 12 |

+

inference: true

|

| 13 |

+

---

|

| 14 |

+

# Overview

|

| 15 |

+

|

| 16 |

+

This is simply wavymulder/portraitplus with the safety checker disabled.

|

| 17 |

+

|

| 18 |

+

**DO NOT** attempt to use this model to generate harmful or illegal content.

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

**Portrait+**

|

| 24 |

+

|

| 25 |

+

[*CKPT DOWNLOAD LINK*](https://huggingface.co/wavymulder/portraitplus/resolve/main/portrait%2B1.0.ckpt) - this is a dreambooth model trained on a diverse set of close to medium range portraits of people.

|

| 26 |

+

|

| 27 |

+

Use `portrait+ style` in your prompt (I recommend at the start)

|

| 28 |

+

|

| 29 |

+

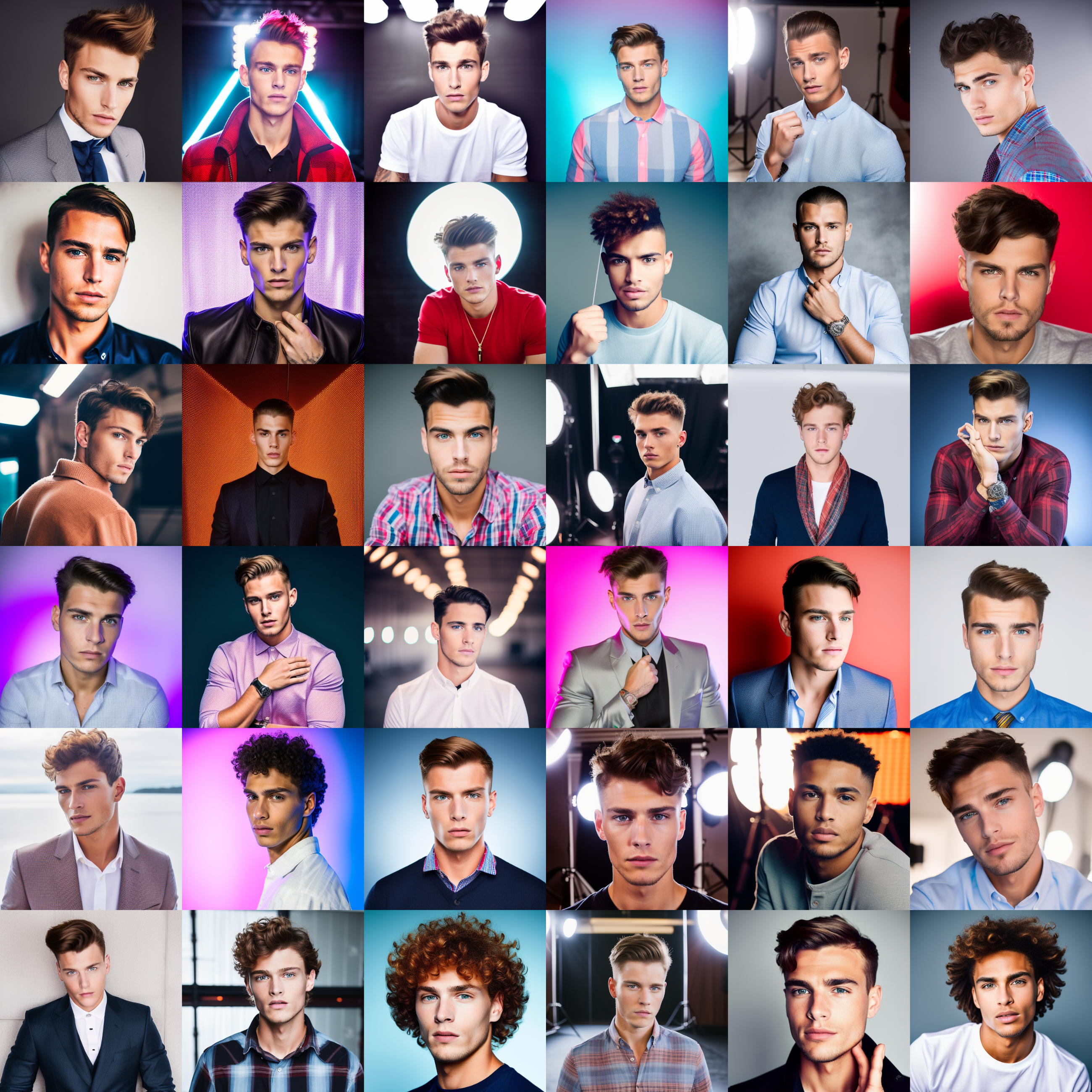

The goal was to create a model with a consistent portrait composition and consistent eyes. See the batch example below for the consistency of the model's eyes. This model can do several styles, so you'll want to guide it along depending on your goals. Note below in the document that prompting celebrities works a bit differently than prompting generic characters, since real people have a more photoreal presence in the base 1.5 model. Also note that fantasy concepts, like cyberpunk people or wizards, will require more rigid prompting for photoreal styles than something common like a person in a park.

|

| 30 |

+

|

| 31 |

+

Portrait+ works best at a 1:1 aspect ratio, though I've had success with tall aspect ratios as well.

|

| 32 |

+

|

| 33 |

+

Please see [this document where I share the parameters (prompt, sampler, seed, etc.) used for all example images above.](https://huggingface.co/wavymulder/portraitplus/resolve/main/parameters_for_samples.txt)

|

| 34 |

+

|

| 35 |

+

We support a [Gradio](https://github.com/gradio-app/gradio) Web UI to run portraitplus:

|

| 36 |

+

[](https://huggingface.co/spaces/wavymulder/portraitplus)

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

|

feature_extractor/preprocessor_config.json

ADDED

|

@@ -0,0 +1,28 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"crop_size": {

|

| 3 |

+

"height": 224,

|

| 4 |

+

"width": 224

|

| 5 |

+

},

|

| 6 |

+

"do_center_crop": true,

|

| 7 |

+

"do_convert_rgb": true,

|

| 8 |

+

"do_normalize": true,

|

| 9 |

+

"do_rescale": true,

|

| 10 |

+

"do_resize": true,

|

| 11 |

+

"feature_extractor_type": "CLIPFeatureExtractor",

|

| 12 |

+

"image_mean": [

|

| 13 |

+

0.48145466,

|

| 14 |

+

0.4578275,

|

| 15 |

+

0.40821073

|

| 16 |

+

],

|

| 17 |

+

"image_processor_type": "CLIPFeatureExtractor",

|

| 18 |

+

"image_std": [

|

| 19 |

+

0.26862954,

|

| 20 |

+

0.26130258,

|

| 21 |

+

0.27577711

|

| 22 |

+

],

|

| 23 |

+

"resample": 3,

|

| 24 |

+

"rescale_factor": 0.00392156862745098,

|

| 25 |

+

"size": {

|

| 26 |

+

"shortest_edge": 224

|

| 27 |

+

}

|

| 28 |

+

}

|

imgs/batchgrid.jpg

ADDED

|

Git LFS Details

|

imgs/page1.jpg

ADDED

|

imgs/page2.jpg

ADDED

|

model_index.json

ADDED

|

@@ -0,0 +1 @@

|

|

|

|

|

|

|

| 1 |

+

{"_class_name": "StableDiffusionPipeline", "_diffusers_version": "0.11.0.dev0", "feature_extractor": ["transformers", "CLIPImageProcessor"], "requires_safety_checker": false, "safety_checker": [null, null], "scheduler": ["diffusers", "PNDMScheduler"], "text_encoder": ["transformers", "CLIPTextModel"], "tokenizer": ["transformers", "CLIPTokenizer"], "unet": ["diffusers", "UNet2DConditionModel"], "vae": ["diffusers", "AutoencoderKL"]}

|

parameters_for_samples.txt

ADDED

|

@@ -0,0 +1,80 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

In order (from left to right, top to bottom):

|

| 2 |

+

|

| 3 |

+

portrait+ style photograph of Selena Gomez

|

| 4 |

+

Negative prompt: blender

|

| 5 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 262145884, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 6 |

+

|

| 7 |

+

portrait+ style photograph of Heath Ledger

|

| 8 |

+

Negative prompt: blender illustration hdr

|

| 9 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 1955757401, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 10 |

+

|

| 11 |

+

portrait+ style photograph of Emma Watson as Hermione Granger

|

| 12 |

+

Negative prompt: blender illustration hdr

|

| 13 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 2989175071, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 14 |

+

|

| 15 |

+

portrait+ style photograph of young Daniel Radcliffe as Harry Potter

|

| 16 |

+

Negative prompt: blender illustration hdr

|

| 17 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 4013224728, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 18 |

+

|

| 19 |

+

portrait+ style girl Princess Zelda

|

| 20 |

+

Negative prompt: blur haze

|

| 21 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3620776300, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 22 |

+

|

| 23 |

+

anime portrait+ style shonen protagonist

|

| 24 |

+

Negative prompt: blur haze

|

| 25 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 2525289016, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 26 |

+

|

| 27 |

+

portrait+ style photograph of a witch cute girl

|

| 28 |

+

Negative prompt: blender illustration painted blur haze cosplay

|

| 29 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 2361115831, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 30 |

+

|

| 31 |

+

portrait+ style photograph of young Carrie Fisher as Princess Leia

|

| 32 |

+

Negative prompt: blender illustration hdr cosplay

|

| 33 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3924291730, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 34 |

+

|

| 35 |

+

portrait+ style girl astronaut flat illustration

|

| 36 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 1978186121, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 37 |

+

|

| 38 |

+

portrait+ style photograph of John Boyega as a stormtrooper

|

| 39 |

+

Negative prompt: blender illustration hdr cosplay helmet

|

| 40 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 4203393211, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 41 |

+

|

| 42 |

+

portrait+ style photograph of a spacepilot girl

|

| 43 |

+

Negative prompt: painted illustration blur haze

|

| 44 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 957133394, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 45 |

+

|

| 46 |

+

anime portrait+ style royal queen

|

| 47 |

+

Negative prompt: blur haze

|

| 48 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 1882413796, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 49 |

+

|

| 50 |

+

portrait+ style photograph of a paladin knight man

|

| 51 |

+

Negative prompt: painted illustration blender

|

| 52 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 2073715162, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 53 |

+

|

| 54 |

+

portrait+ style photograph of young Natalie Portman as Padme Amidala

|

| 55 |

+

Negative prompt: blender illustration hdr cosplay

|

| 56 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 2191434366, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 57 |

+

|

| 58 |

+

portrait+ style a beautiful sorceress

|

| 59 |

+

Negative prompt: blur haze

|

| 60 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 2212513167, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 61 |

+

|

| 62 |

+

portrait+ style photograph of a cyberpunk girl

|

| 63 |

+

Negative prompt: painted illustration blur haze

|

| 64 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3252607763, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 65 |

+

|

| 66 |

+

portrait+ style photograph of a rock star man

|

| 67 |

+

Negative prompt: painted illustration blender

|

| 68 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 1503681581, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 69 |

+

|

| 70 |

+

portrait+ style a beautiful sorceress

|

| 71 |

+

Negative prompt: blur haze

|

| 72 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 1428179101, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 73 |

+

|

| 74 |

+

portrait+ style photograph of Lionel Messi wearing a tuxedo

|

| 75 |

+

Negative prompt: blender illustration hdr painted

|

| 76 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 1293913057, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

| 77 |

+

|

| 78 |

+

portrait+ style photograph of a cyberpunk man, cyborg

|

| 79 |

+

Negative prompt: painted blender illustration blur haze

|

| 80 |

+

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3926729433, Size: 768x768, Model hash: 585879fc, Denoising strength: 0.3, First pass size: 0x0

|

portrait+1.0.ckpt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:345033419b5030bc7207879cfe0b080623c4bce85600cd8d406b989fbe777173

|

| 3 |

+

size 2132856622

|

portrait+1.0.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:90674f4d8629e57da4dfe546e8b7db65a0ee72e2226e02cc595d7897895301a9

|

| 3 |

+

size 2132625462

|

safety_checker/config.json

ADDED

|

@@ -0,0 +1,181 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_commit_hash": "cb41f3a270d63d454d385fc2e4f571c487c253c5",

|

| 3 |

+

"_name_or_path": "CompVis/stable-diffusion-safety-checker",

|

| 4 |

+

"architectures": [

|

| 5 |

+

"StableDiffusionSafetyChecker"

|

| 6 |

+

],

|

| 7 |

+

"initializer_factor": 1.0,

|

| 8 |

+

"logit_scale_init_value": 2.6592,

|

| 9 |

+

"model_type": "clip",

|

| 10 |

+

"projection_dim": 768,

|

| 11 |

+

"text_config": {

|

| 12 |

+

"_name_or_path": "",

|

| 13 |

+

"add_cross_attention": false,

|

| 14 |

+

"architectures": null,

|

| 15 |

+

"attention_dropout": 0.0,

|

| 16 |

+

"bad_words_ids": null,

|

| 17 |

+

"begin_suppress_tokens": null,

|

| 18 |

+

"bos_token_id": 0,

|

| 19 |

+

"chunk_size_feed_forward": 0,

|

| 20 |

+

"cross_attention_hidden_size": null,

|

| 21 |

+

"decoder_start_token_id": null,

|

| 22 |

+

"diversity_penalty": 0.0,

|

| 23 |

+

"do_sample": false,

|

| 24 |

+

"dropout": 0.0,

|

| 25 |

+

"early_stopping": false,

|

| 26 |

+

"encoder_no_repeat_ngram_size": 0,

|

| 27 |

+

"eos_token_id": 2,

|

| 28 |

+

"exponential_decay_length_penalty": null,

|

| 29 |

+

"finetuning_task": null,

|

| 30 |

+

"forced_bos_token_id": null,

|

| 31 |

+

"forced_eos_token_id": null,

|

| 32 |

+

"hidden_act": "quick_gelu",

|

| 33 |

+

"hidden_size": 768,

|

| 34 |

+

"id2label": {

|

| 35 |

+

"0": "LABEL_0",

|

| 36 |

+

"1": "LABEL_1"

|

| 37 |

+

},

|

| 38 |

+

"initializer_factor": 1.0,

|

| 39 |

+

"initializer_range": 0.02,

|

| 40 |

+

"intermediate_size": 3072,

|

| 41 |

+

"is_decoder": false,

|

| 42 |

+

"is_encoder_decoder": false,

|

| 43 |

+

"label2id": {

|

| 44 |

+

"LABEL_0": 0,

|

| 45 |

+

"LABEL_1": 1

|

| 46 |

+

},

|

| 47 |

+

"layer_norm_eps": 1e-05,

|

| 48 |

+

"length_penalty": 1.0,

|

| 49 |

+

"max_length": 20,

|

| 50 |

+

"max_position_embeddings": 77,

|

| 51 |

+

"min_length": 0,

|

| 52 |

+

"model_type": "clip_text_model",

|

| 53 |

+

"no_repeat_ngram_size": 0,

|

| 54 |

+

"num_attention_heads": 12,

|

| 55 |

+

"num_beam_groups": 1,

|

| 56 |

+

"num_beams": 1,

|

| 57 |

+

"num_hidden_layers": 12,

|

| 58 |

+

"num_return_sequences": 1,

|

| 59 |

+

"output_attentions": false,

|

| 60 |

+

"output_hidden_states": false,

|

| 61 |

+

"output_scores": false,

|

| 62 |

+

"pad_token_id": 1,

|

| 63 |

+

"prefix": null,

|

| 64 |

+

"problem_type": null,

|

| 65 |

+

"projection_dim": 512,

|

| 66 |

+

"pruned_heads": {},

|

| 67 |

+

"remove_invalid_values": false,

|

| 68 |

+

"repetition_penalty": 1.0,

|

| 69 |

+

"return_dict": true,

|

| 70 |

+

"return_dict_in_generate": false,

|

| 71 |

+

"sep_token_id": null,

|

| 72 |

+

"suppress_tokens": null,

|

| 73 |

+

"task_specific_params": null,

|

| 74 |

+

"temperature": 1.0,

|

| 75 |

+

"tf_legacy_loss": false,

|

| 76 |

+

"tie_encoder_decoder": false,

|

| 77 |

+

"tie_word_embeddings": true,

|

| 78 |

+

"tokenizer_class": null,

|

| 79 |

+

"top_k": 50,

|

| 80 |

+

"top_p": 1.0,

|

| 81 |

+

"torch_dtype": null,

|

| 82 |

+

"torchscript": false,

|

| 83 |

+

"transformers_version": "4.26.0.dev0",

|

| 84 |

+

"typical_p": 1.0,

|

| 85 |

+

"use_bfloat16": false,

|

| 86 |

+

"vocab_size": 49408

|

| 87 |

+

},

|

| 88 |

+

"text_config_dict": {

|

| 89 |

+

"hidden_size": 768,

|

| 90 |

+

"intermediate_size": 3072,

|

| 91 |

+

"num_attention_heads": 12,

|

| 92 |

+

"num_hidden_layers": 12

|

| 93 |

+

},

|

| 94 |

+

"torch_dtype": "float32",

|

| 95 |

+

"transformers_version": null,

|

| 96 |

+

"vision_config": {

|

| 97 |

+

"_name_or_path": "",

|

| 98 |

+

"add_cross_attention": false,

|

| 99 |

+

"architectures": null,

|

| 100 |

+

"attention_dropout": 0.0,

|

| 101 |

+

"bad_words_ids": null,

|

| 102 |

+

"begin_suppress_tokens": null,

|

| 103 |

+

"bos_token_id": null,

|

| 104 |

+

"chunk_size_feed_forward": 0,

|

| 105 |

+

"cross_attention_hidden_size": null,

|

| 106 |

+

"decoder_start_token_id": null,

|

| 107 |

+

"diversity_penalty": 0.0,

|

| 108 |

+

"do_sample": false,

|

| 109 |

+

"dropout": 0.0,

|

| 110 |

+

"early_stopping": false,

|

| 111 |

+

"encoder_no_repeat_ngram_size": 0,

|

| 112 |

+

"eos_token_id": null,

|

| 113 |

+

"exponential_decay_length_penalty": null,

|

| 114 |

+

"finetuning_task": null,

|

| 115 |

+

"forced_bos_token_id": null,

|

| 116 |

+

"forced_eos_token_id": null,

|

| 117 |

+

"hidden_act": "quick_gelu",

|

| 118 |

+

"hidden_size": 1024,

|

| 119 |

+

"id2label": {

|

| 120 |

+

"0": "LABEL_0",

|

| 121 |

+

"1": "LABEL_1"

|

| 122 |

+

},

|

| 123 |

+

"image_size": 224,

|

| 124 |

+

"initializer_factor": 1.0,

|

| 125 |

+

"initializer_range": 0.02,

|

| 126 |

+

"intermediate_size": 4096,

|

| 127 |

+

"is_decoder": false,

|

| 128 |

+

"is_encoder_decoder": false,

|

| 129 |

+

"label2id": {

|

| 130 |

+

"LABEL_0": 0,

|

| 131 |

+

"LABEL_1": 1

|

| 132 |

+

},

|

| 133 |

+

"layer_norm_eps": 1e-05,

|

| 134 |

+

"length_penalty": 1.0,

|

| 135 |

+

"max_length": 20,

|

| 136 |

+

"min_length": 0,

|

| 137 |

+

"model_type": "clip_vision_model",

|

| 138 |

+

"no_repeat_ngram_size": 0,

|

| 139 |

+

"num_attention_heads": 16,

|

| 140 |

+

"num_beam_groups": 1,

|

| 141 |

+

"num_beams": 1,

|

| 142 |

+

"num_channels": 3,

|

| 143 |

+

"num_hidden_layers": 24,

|

| 144 |

+

"num_return_sequences": 1,

|

| 145 |

+

"output_attentions": false,

|

| 146 |

+

"output_hidden_states": false,

|

| 147 |

+

"output_scores": false,

|

| 148 |

+

"pad_token_id": null,

|

| 149 |

+

"patch_size": 14,

|

| 150 |

+

"prefix": null,

|

| 151 |

+

"problem_type": null,

|

| 152 |

+

"projection_dim": 512,

|

| 153 |

+

"pruned_heads": {},

|

| 154 |

+

"remove_invalid_values": false,

|

| 155 |

+

"repetition_penalty": 1.0,

|

| 156 |

+

"return_dict": true,

|

| 157 |

+

"return_dict_in_generate": false,

|

| 158 |

+

"sep_token_id": null,

|

| 159 |

+

"suppress_tokens": null,

|

| 160 |

+

"task_specific_params": null,

|

| 161 |

+

"temperature": 1.0,

|

| 162 |

+

"tf_legacy_loss": false,

|

| 163 |

+

"tie_encoder_decoder": false,

|

| 164 |

+

"tie_word_embeddings": true,

|

| 165 |

+

"tokenizer_class": null,

|

| 166 |

+

"top_k": 50,

|

| 167 |

+

"top_p": 1.0,

|

| 168 |

+

"torch_dtype": null,

|

| 169 |

+

"torchscript": false,

|

| 170 |

+

"transformers_version": "4.26.0.dev0",

|

| 171 |

+

"typical_p": 1.0,

|

| 172 |

+

"use_bfloat16": false

|

| 173 |

+

},

|

| 174 |

+

"vision_config_dict": {

|

| 175 |

+

"hidden_size": 1024,

|

| 176 |

+

"intermediate_size": 4096,

|

| 177 |

+

"num_attention_heads": 16,

|

| 178 |

+

"num_hidden_layers": 24,

|

| 179 |

+

"patch_size": 14

|

| 180 |

+

}

|

| 181 |

+

}

|

safety_checker/model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9d6a233ff6fd5ccb9f76fd99618d73369c52dd3d8222376384d0e601911089e8

|

| 3 |

+

size 1215981830

|

safety_checker/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:16d28f2b37109f222cdc33620fdd262102ac32112be0352a7f77e9614b35a394

|

| 3 |

+

size 1216064769

|

scheduler/scheduler_config.json

ADDED

|

@@ -0,0 +1,14 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "PNDMScheduler",

|

| 3 |

+

"_diffusers_version": "0.11.0.dev0",

|

| 4 |

+

"beta_end": 0.012,

|

| 5 |

+

"beta_schedule": "scaled_linear",

|

| 6 |

+

"beta_start": 0.00085,

|

| 7 |

+

"clip_sample": false,

|

| 8 |

+

"num_train_timesteps": 1000,

|

| 9 |

+

"prediction_type": "epsilon",

|

| 10 |

+

"set_alpha_to_one": false,

|

| 11 |

+

"skip_prk_steps": true,

|

| 12 |

+

"steps_offset": 1,

|

| 13 |

+

"trained_betas": null

|

| 14 |

+

}

|

text_encoder/config.json

ADDED

|

@@ -0,0 +1,25 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "openai/clip-vit-large-patch14",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"CLIPTextModel"

|

| 5 |

+

],

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"bos_token_id": 0,

|

| 8 |

+

"dropout": 0.0,

|

| 9 |

+

"eos_token_id": 2,

|

| 10 |

+

"hidden_act": "quick_gelu",

|

| 11 |

+

"hidden_size": 768,

|

| 12 |

+

"initializer_factor": 1.0,

|

| 13 |

+

"initializer_range": 0.02,

|

| 14 |

+

"intermediate_size": 3072,

|

| 15 |

+

"layer_norm_eps": 1e-05,

|

| 16 |

+

"max_position_embeddings": 77,

|

| 17 |

+

"model_type": "clip_text_model",

|

| 18 |

+

"num_attention_heads": 12,

|

| 19 |

+

"num_hidden_layers": 12,

|

| 20 |

+

"pad_token_id": 1,

|

| 21 |

+

"projection_dim": 768,

|

| 22 |

+

"torch_dtype": "float32",

|

| 23 |

+

"transformers_version": "4.26.0.dev0",

|

| 24 |

+

"vocab_size": 49408

|

| 25 |

+

}

|

text_encoder/model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:75ad3d32a3e7253a788dfd2d8b1bfa40389d6f4a70822c39eb78410946a42a23

|

| 3 |

+

size 492265874

|

text_encoder/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:09b1edabf58d366bf84a91ad52c453e029022afba40d3a795437e7c6be13704d

|

| 3 |

+

size 492307041

|

tokenizer/merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

tokenizer/special_tokens_map.json

ADDED

|

@@ -0,0 +1,24 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"bos_token": {

|

| 3 |

+

"content": "<|startoftext|>",

|

| 4 |

+

"lstrip": false,

|

| 5 |

+

"normalized": true,

|

| 6 |

+

"rstrip": false,

|

| 7 |

+

"single_word": false

|

| 8 |

+

},

|

| 9 |

+

"eos_token": {

|

| 10 |

+

"content": "<|endoftext|>",

|

| 11 |

+

"lstrip": false,

|

| 12 |

+

"normalized": true,

|

| 13 |

+

"rstrip": false,

|

| 14 |

+

"single_word": false

|

| 15 |

+

},

|

| 16 |

+

"pad_token": "<|endoftext|>",

|

| 17 |

+

"unk_token": {

|

| 18 |

+

"content": "<|endoftext|>",

|

| 19 |

+

"lstrip": false,

|

| 20 |

+

"normalized": true,

|

| 21 |

+

"rstrip": false,

|

| 22 |

+

"single_word": false

|

| 23 |

+

}

|

| 24 |

+

}

|

tokenizer/tokenizer_config.json

ADDED

|

@@ -0,0 +1,34 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"add_prefix_space": false,

|

| 3 |

+

"bos_token": {

|

| 4 |

+

"__type": "AddedToken",

|

| 5 |

+

"content": "<|startoftext|>",

|

| 6 |

+

"lstrip": false,

|

| 7 |

+

"normalized": true,

|

| 8 |

+

"rstrip": false,

|

| 9 |

+

"single_word": false

|

| 10 |

+

},

|

| 11 |

+

"do_lower_case": true,

|

| 12 |

+

"eos_token": {

|

| 13 |

+

"__type": "AddedToken",

|

| 14 |

+

"content": "<|endoftext|>",

|

| 15 |

+

"lstrip": false,

|

| 16 |

+

"normalized": true,

|

| 17 |

+

"rstrip": false,

|

| 18 |

+

"single_word": false

|

| 19 |

+

},

|

| 20 |

+

"errors": "replace",

|

| 21 |

+

"model_max_length": 77,

|

| 22 |

+

"name_or_path": "openai/clip-vit-large-patch14",

|

| 23 |

+

"pad_token": "<|endoftext|>",

|

| 24 |

+

"special_tokens_map_file": "./special_tokens_map.json",

|

| 25 |

+

"tokenizer_class": "CLIPTokenizer",

|

| 26 |

+

"unk_token": {

|

| 27 |

+

"__type": "AddedToken",

|

| 28 |

+

"content": "<|endoftext|>",

|

| 29 |

+

"lstrip": false,

|

| 30 |

+

"normalized": true,

|

| 31 |

+

"rstrip": false,

|

| 32 |

+

"single_word": false

|

| 33 |

+

}

|

| 34 |

+

}

|

tokenizer/vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

unet/config.json

ADDED

|

@@ -0,0 +1,41 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "UNet2DConditionModel",

|

| 3 |

+

"_diffusers_version": "0.11.0.dev0",

|

| 4 |

+

"act_fn": "silu",

|

| 5 |

+

"attention_head_dim": 8,

|

| 6 |

+

"block_out_channels": [

|

| 7 |

+

320,

|

| 8 |

+

640,

|

| 9 |

+

1280,

|

| 10 |

+

1280

|

| 11 |

+

],

|

| 12 |

+

"center_input_sample": false,

|

| 13 |

+

"cross_attention_dim": 768,

|

| 14 |

+

"down_block_types": [

|

| 15 |

+

"CrossAttnDownBlock2D",

|

| 16 |

+

"CrossAttnDownBlock2D",

|

| 17 |

+

"CrossAttnDownBlock2D",

|

| 18 |

+

"DownBlock2D"

|

| 19 |

+

],

|

| 20 |

+

"downsample_padding": 1,

|

| 21 |

+

"dual_cross_attention": false,

|

| 22 |

+

"flip_sin_to_cos": true,

|

| 23 |

+

"freq_shift": 0,

|

| 24 |

+

"in_channels": 4,

|

| 25 |

+

"layers_per_block": 2,

|

| 26 |

+

"mid_block_scale_factor": 1,

|

| 27 |

+

"norm_eps": 1e-05,

|

| 28 |

+

"norm_num_groups": 32,

|

| 29 |

+

"num_class_embeds": null,

|

| 30 |

+

"only_cross_attention": false,

|

| 31 |

+

"out_channels": 4,

|

| 32 |

+

"sample_size": 64,

|

| 33 |

+

"up_block_types": [

|

| 34 |

+

"UpBlock2D",

|

| 35 |

+

"CrossAttnUpBlock2D",

|

| 36 |

+

"CrossAttnUpBlock2D",

|

| 37 |

+

"CrossAttnUpBlock2D"

|

| 38 |

+

],

|

| 39 |

+

"upcast_attention": false,

|

| 40 |

+

"use_linear_projection": false

|

| 41 |

+

}

|

unet/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a25e879620beeb1330dc2710d94be11d20215645849f95e20917c5f67a218bcb

|

| 3 |

+

size 3438366373

|

unet/diffusion_pytorch_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c722739953eeab20c63cbac3523410d0743c6ce73f0bb56f9cd7d4abed272398

|

| 3 |

+

size 3438167540

|

vae/config.json

ADDED

|

@@ -0,0 +1,30 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "AutoencoderKL",

|

| 3 |

+

"_diffusers_version": "0.11.0.dev0",

|

| 4 |

+

"act_fn": "silu",

|

| 5 |

+

"block_out_channels": [

|

| 6 |

+

128,

|

| 7 |

+

256,

|

| 8 |

+

512,

|

| 9 |

+

512

|

| 10 |

+

],

|

| 11 |

+

"down_block_types": [

|

| 12 |

+

"DownEncoderBlock2D",

|

| 13 |

+

"DownEncoderBlock2D",

|

| 14 |

+

"DownEncoderBlock2D",

|

| 15 |

+

"DownEncoderBlock2D"

|

| 16 |

+

],

|

| 17 |

+

"in_channels": 3,

|

| 18 |

+

"latent_channels": 4,

|

| 19 |

+

"layers_per_block": 2,

|

| 20 |

+

"norm_num_groups": 32,

|

| 21 |

+

"out_channels": 3,

|

| 22 |

+

"sample_size": 512,

|

| 23 |

+

"scaling_factor": 0.18215,

|

| 24 |

+

"up_block_types": [

|

| 25 |

+

"UpDecoderBlock2D",

|

| 26 |

+

"UpDecoderBlock2D",

|

| 27 |

+

"UpDecoderBlock2D",

|

| 28 |

+

"UpDecoderBlock2D"

|

| 29 |

+

]

|

| 30 |

+

}

|

vae/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:dcf4507d99b88db73f3916e2a20169fe74ada6b5582e9af56cfa80f5f3141765

|

| 3 |

+

size 334711857

|

vae/diffusion_pytorch_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:55ad9628530430eed28c01dcf3c971a29ffe5d657856cb054728b6c956da3037

|

| 3 |

+

size 334643276

|