SalamandraTA-2B Model Card

SalamandraTA-2B is a machine translation model continually pre-trained from Salamandra 2B on 70 billion tokens of parallel data in 30 languages: Catalan, Italian, Portuguese, German, English, Spanish, Euskera, Galician, French, Bulgarian, Czech, Lithuanian, Croatian, Dutch, Romanian, Danish, Greek, Finnish, Hungarian, Slovak, Slovenian, Estonian, Polish, Latvian, Swedish, Maltese, Irish, Aranese, Aragonese, Asturian. SalamandraTA-2B is the first model in the SalamandraTA series and is trained for sentence-level machine translation.

- Developed by: The Language Technologies Unit from Barcelona Supercomputing Center (BSC).

- Model type: A 2B parameter model continually pre-trained on 70 billion tokens.

- Languages: Catalan, Italian, Portuguese, German, English, Spanish, Euskera, Galician, French, Bulgarian, Czech, Lithuanian, Croatian, Dutch, Romanian, Danish, Greek, Finnish, Hungarian, Slovak, Slovenian, Estonian, Polish, Latvian, Swedish, Maltese, Irish, Aranese, Aragonese, Asturian.

- License: Apache License, Version 2.0

Model Details

Description

This machine translation model is built on Salamandra 2B. By leveraging the knowledge of the base model, it can produce high-quality translations in almost 900 translation directions.
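The figure of almost 900 directions comes from counting ordered language pairs: each of the 30 languages can be translated into any of the other 29. Here is a quick check in Python. The language list is copied from this card, and the variable names are illustrative only.

```python
from itertools import permutations

# The 30 languages listed in this model card
LANGUAGES = [
    "Catalan", "Italian", "Portuguese", "German", "English", "Spanish",
    "Euskera", "Galician", "French", "Bulgarian", "Czech", "Lithuanian",
    "Croatian", "Dutch", "Romanian", "Danish", "Greek", "Finnish",
    "Hungarian", "Slovak", "Slovenian", "Estonian", "Polish", "Latvian",
    "Swedish", "Maltese", "Irish", "Aranese", "Aragonese", "Asturian",
]

# A translation direction is an ordered (source, target) pair with source != target
directions = list(permutations(LANGUAGES, 2))
print(len(LANGUAGES), len(directions))  # 30 870
```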

Key Features:

- Continual Pretraining: The model is trained on 70 billion tokens of parallel data. All data employed is open-source or generated from open-source data using the machine translation models at BSC.

- Large Language Model Foundation: Built on Salamandra 2B, which provides strong language understanding and generation capabilities.

- Multilingual Support: Capable of translating between 30 European languages, including low-resource languages.

- High-Quality Translations: Delivers accurate and fluent translations, thanks to its continual pretraining and large-scale dataset.

- Efficient Inference: With 2 billion parameters, the model strikes a balance between translation quality and hardware requirements that most systems can meet.

Hyperparameters

The full list of hyperparameters for each model can be found here.

Architecture

| Parameter | Value |

|---|---|

| Total Parameters | 2,253,490,176 |

| Embedding Parameters | 524,288,000 |

| Layers | 24 |

| Hidden size | 2,048 |

| Attention heads | 16 |

| Context length | 8,192 |

| Vocabulary size | 256,000 |

| Precision | bfloat16 |

| Embedding type | RoPE |

| Activation Function | SwiGLU |

| Layer normalization | RMS Norm |

| Flash attention | ✅ |

| Grouped Query Attention | ❌ |

| Num. query groups | N/A |
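Several rows of this table can be cross-checked with simple arithmetic. The sketch below assumes a standard multi-head attention layout, which applies because grouped query attention is not used. Under that layout, each layer caches one key vector and one value vector of size "hidden size" per token. It also assumes 2 bytes per value in bfloat16. It estimates weight and KV-cache memory only, not activations or framework overhead.

```python
# Values copied from the architecture table above
total_params = 2_253_490_176
embedding_params = 524_288_000
vocab_size = 256_000
hidden_size = 2_048
num_layers = 24
num_heads = 16
context_length = 8_192
bytes_per_value = 2  # bfloat16

# The embedding matrix holds one hidden-size vector per vocabulary entry
assert vocab_size * hidden_size == embedding_params

head_dim = hidden_size // num_heads  # 128

# Memory needed just to hold the weights in bfloat16
weights_gib = total_params * bytes_per_value / 2**30

# KV cache without GQA: keys + values, for every layer, per token
kv_bytes_per_token = 2 * num_layers * hidden_size * bytes_per_value
kv_cache_gib = kv_bytes_per_token * context_length / 2**30

print(f"head dim: {head_dim}")
print(f"weights: {weights_gib:.2f} GiB")
print(f"KV cache per token: {kv_bytes_per_token} bytes")
print(f"KV cache for one full-context sequence: {kv_cache_gib:.2f} GiB")
```

Beam search keeps one sequence per beam. With `num_beams=5`, as in the examples below, the KV cache therefore grows roughly fivefold for the same sequence length.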

Intended Use

Direct Use

The model is intended for both research and commercial use in any of the languages included in the training data, for general machine translation tasks.

Out-of-scope Use

The model is not intended for malicious activities, such as harming others or violating human rights. Any downstream application must comply with current laws and regulations. Irresponsible usage in production environments without proper risk assessment and mitigation is also discouraged.

Hardware and Software

Training Framework

Continual pre-training was conducted using the LLaMA-Factory framework.

Compute Infrastructure

All models were trained on MareNostrum 5, a pre-exascale EuroHPC supercomputer hosted and operated by Barcelona Supercomputing Center.

The accelerated partition is composed of 1,120 nodes with the following specifications:

- 4x Nvidia Hopper GPUs with 64 GB of HBM2 memory

- 2x Intel Sapphire Rapids 8460Y+ at 2.3 GHz with 32 cores each (64 cores in total)

- 4x NDR200 (bandwidth per node: 800 Gb/s)

- 512 GB of main memory (DDR5)

- 460 GB of NVMe storage

How to use

To translate with SalamandraTA-2B, first build a prompt that specifies the source and target languages in the following format. Here `\n` is a newline character, preceded by a space:

`[source_language] sentence \n[target_language]`

You can translate between these languages by using their names directly:

Catalan, Italian, Portuguese, German, English, Spanish, Euskera, Galician, French, Bulgarian, Czech, Lithuanian, Croatian, Dutch, Romanian, Danish, Greek, Finnish, Hungarian, Slovak, Slovenian, Estonian, Polish, Latvian, Swedish, Maltese, Irish, Aranese, Aragonese, Asturian.
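A small helper can build prompts in this format and catch misspelled language names before the model is called. The function name `build_prompt` and its validation checks are illustrative additions, not part of any official tooling for this model. The format string matches the one used in the inference examples.

```python
# Language names accepted in prompts, as listed in this model card
SUPPORTED_LANGUAGES = {
    "Catalan", "Italian", "Portuguese", "German", "English", "Spanish",
    "Euskera", "Galician", "French", "Bulgarian", "Czech", "Lithuanian",
    "Croatian", "Dutch", "Romanian", "Danish", "Greek", "Finnish",
    "Hungarian", "Slovak", "Slovenian", "Estonian", "Polish", "Latvian",
    "Swedish", "Maltese", "Irish", "Aranese", "Aragonese", "Asturian",
}


def build_prompt(sentence: str, src_lang: str, tgt_lang: str) -> str:
    """Hypothetical helper: return '[src] sentence \\n[tgt]'."""
    for lang in (src_lang, tgt_lang):
        if lang not in SUPPORTED_LANGUAGES:
            raise ValueError(f"Unsupported language name: {lang!r}")
    if src_lang == tgt_lang:
        raise ValueError("Source and target languages must differ")
    # Note the space before the newline, matching the documented format
    return f"[{src_lang}] {sentence} \n[{tgt_lang}]"


print(repr(build_prompt("Ayer se fue.", "Spanish", "Catalan")))
# '[Spanish] Ayer se fue. \n[Catalan]'
```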

Inference

To translate a single sentence from Spanish to Catalan with the Hugging Face Transformers `AutoModelForCausalLM` class, you can use the following code:

```python
import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

model_id = 'BSC-LT/salamandraTA-2b'

# Load tokenizer and model
tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(model_id)

# Move model to GPU if available
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model.to(device)

src_lang_code = 'Spanish'
tgt_lang_code = 'Catalan'
sentence = 'Ayer se fue, tomó sus cosas y se puso a navegar.'

prompt = f'[{src_lang_code}] {sentence} \n[{tgt_lang_code}]'

# Tokenize and move inputs to the same device as the model
input_ids = tokenizer(prompt, return_tensors='pt').input_ids.to(device)
output_ids = model.generate(input_ids, max_length=500, num_beams=5)

# Keep only the newly generated tokens, dropping the prompt
input_length = input_ids.shape[1]
generated_text = tokenizer.decode(output_ids[0, input_length:], skip_special_tokens=True).strip()

print(generated_text)
# Ahir se'n va anar, va agafar les seves coses i es va posar a navegar.
```

To run batch inference with the Hugging Face Transformers `AutoModelForCausalLM` class, you can use the following code:


```python
import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

model_id = 'BSC-LT/salamandraTA-2b'

tokenizer = AutoTokenizer.from_pretrained(model_id)
# Decoder-only models should be left-padded for batched generation,
# so that every prompt ends right where generation starts
tokenizer.padding_side = 'left'

model = AutoModelForCausalLM.from_pretrained(model_id, attn_implementation='eager')

# Move the model to GPU if available
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
model = model.to(device)

# List of sentences to translate
sentences = [
    'Ayer se fue, tomó sus cosas y se puso a navegar.',
    'Se despidió y decidió batirse en duelo con el mar, y recorrer el mundo en su velero',
    'Su corazón buscó una forma diferente de vivir, pero las olas le gritaron: Vete con los demás',
    'Y se durmió y la noche le gritó: Dónde vas, y en sus sueños dibujó gaviotas, y pensó: Hoy debo regresar.'
]

src_lang_code = 'Spanish'
tgt_lang_code = 'Catalan'

# One prompt per sentence, in the "[source] sentence \n[target]" format
prompt = lambda x: f'[{src_lang_code}] {x} \n[{tgt_lang_code}]'
prompts = [prompt(x) for x in sentences]

encodings = tokenizer(prompts, return_tensors='pt', padding=True, add_special_tokens=True)
input_ids = encodings['input_ids'].to(model.device)
attention_mask = encodings['attention_mask'].to(model.device)

with torch.no_grad():
    outputs = model.generate(input_ids=input_ids, attention_mask=attention_mask,
                             num_beams=5, max_length=256, early_stopping=True)

results_detokenized = []
for i, output in enumerate(outputs):
    # All prompts share the same padded length, so this strips the prompt
    input_length = input_ids[i].shape[0]
    generated_text = tokenizer.decode(output[input_length:], skip_special_tokens=True).strip()
    results_detokenized.append(generated_text)

print("Generated Translations:", results_detokenized)
# Generated Translations: ["Ahir se'n va anar, va agafar les seves coses i es va posar a navegar.",
# "Es va acomiadar i va decidir batre's en duel amb el mar, i recórrer el món en el seu veler",
# "El seu cor va buscar una forma diferent de viure, però les onades li van cridar: Vés amb els altres",
# "I es va adormir i la nit li va cridar: On vas, i en els seus somnis va dibuixar gavines, i va pensar: Avui he de tornar."]
```
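The model is trained for sentence-level translation, so longer texts are usually split into sentences and sent in fixed-size batches, as in the batch example. A minimal sketch of that preprocessing step follows. The helper names `split_sentences` and `make_batches` are hypothetical, and the batch size is an arbitrary choice. The regex splitter is a naive stand-in for a proper sentence segmenter and will mis-split abbreviations such as "Sr.".

```python
import re
from typing import Iterator, List


def split_sentences(text: str) -> List[str]:
    """Naively split text after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def make_batches(items: List[str], batch_size: int = 8) -> Iterator[List[str]]:
    """Yield consecutive slices of at most batch_size items."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


text = "Ayer se fue. Tomó sus cosas. Y se puso a navegar."
for batch in make_batches(split_sentences(text), batch_size=2):
    print(batch)
# ['Ayer se fue.', 'Tomó sus cosas.']
# ['Y se puso a navegar.']
```

Each batch can then be turned into prompts and passed through the tokenizer and `model.generate` call shown above.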

Data

Pretraining Data

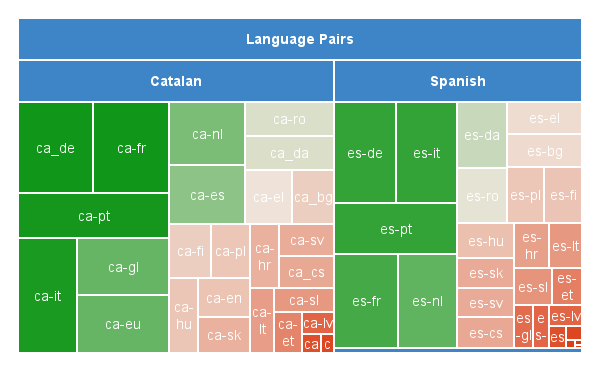

The training corpus consists of 70 billion tokens of Catalan- and Spanish-centric parallel data, covering all official languages of the European Union plus Catalan, Basque, Galician, Asturian, Aragonese and Aranese. It amounts to 3,157,965,012 parallel sentence pairs.
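The stated coverage and corpus size can be sanity-checked. The 24 official EU languages plus the six additional languages give the model's 30 languages. Dividing the rounded 70 billion tokens by the number of sentence pairs gives an average of about 22 tokens per pair. That average assumes the token count covers both sides of each pair. This is back-of-the-envelope arithmetic, not a reported statistic.

```python
# The 24 official languages of the European Union
EU_OFFICIAL = {
    "Bulgarian", "Croatian", "Czech", "Danish", "Dutch", "English",
    "Estonian", "Finnish", "French", "German", "Greek", "Hungarian",
    "Irish", "Italian", "Latvian", "Lithuanian", "Maltese", "Polish",
    "Portuguese", "Romanian", "Slovak", "Slovenian", "Spanish", "Swedish",
}
# Additional languages named in this card
EXTRA = {"Catalan", "Basque", "Galician", "Asturian", "Aragonese", "Aranese"}

languages = EU_OFFICIAL | EXTRA
print(len(EU_OFFICIAL), len(languages))  # 24 30

total_tokens = 70e9  # rounded figure from this card
sentence_pairs = 3_157_965_012
print(f"{total_tokens / sentence_pairs:.1f} tokens per sentence pair")
```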

This highly multilingual corpus is predominantly composed of data sourced from OPUS, with additional data taken from the NTEU project and Projecte Aina’s existing corpora. Where little parallel Catalan <-> xx data could be found, synthetic Catalan data was generated from the Spanish side of the collected Spanish <-> xx corpora using Projecte Aina’s Spanish-Catalan model. The final distribution of languages is shown in the figure above.
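The synthetic Catalan data follows a pivot pattern: the Spanish side of each Spanish <-> xx pair is machine-translated into Catalan and paired with the original xx side. The sketch below shows only that data flow. `translate_es_ca` is a hypothetical stand-in for Projecte Aina's Spanish-Catalan model, and any filtering applied to the real corpus is not shown.

```python
from typing import Callable, Iterable, List, Tuple


def synthesize_ca_pairs(
    es_xx_pairs: Iterable[Tuple[str, str]],
    translate_es_ca: Callable[[List[str]], List[str]],
) -> List[Tuple[str, str]]:
    """Turn (Spanish, xx) pairs into synthetic (Catalan, xx) pairs."""
    pairs = list(es_xx_pairs)
    spanish_side = [es for es, _ in pairs]
    catalan_side = translate_es_ca(spanish_side)  # batch MT, es -> ca
    # Only the Spanish side is replaced; the xx side is kept untouched
    return [(ca, xx) for ca, (_, xx) in zip(catalan_side, pairs)]


# Toy dictionary "translator", for demonstration only
toy_dictionary = {"Hola": "Hola", "Buenos días": "Bon dia"}


def toy_translate(batch: List[str]) -> List[str]:
    return [toy_dictionary.get(s, s) for s in batch]


es_fi = [("Hola", "Hei"), ("Buenos días", "Hyvää huomenta")]
print(synthesize_ca_pairs(es_fi, toy_translate))
# [('Hola', 'Hei'), ('Bon dia', 'Hyvää huomenta')]
```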

The table below lists the corpora included in the training data.

Data Sources

| Dataset | Ca-xx Languages | Es-xx Languages |

|---|---|---|

| CCMatrix | eu | |

| DGT | bg,cs,da,de,el,et,fi,fr,ga,hr,hu,lt,lv,mt,nl,pl,pt,ro,sk,sl,sv | |

| ELRC-EMEA | bg,cs,da,hu,lt,lv,mt,pl,ro,sk,sl | |

| EMEA | bg,cs,da,el,fi,hu,lt,mt,nl,pl,ro,sk,sl,sv | |

| EUBookshop | lt,pl,pt | cs,da,de,el,fi,fr,ga,it,lv,mt,nl,pl,pt,ro,sk,sl,sv |

| Europarl | bg,cs,da,el,fi,fr,hu,lt,lv,nl,pl,pt,ro,sk,sl,sv | |

| Europat | hr | |

| KDE4 | bg,cs,da,de,el,et,eu,fi,fr,ga,gl,hr,it,lt,lv,nl,pl,pt,ro,sk,sl,sv | bg,ga,hr |

| GlobalVoices | bg,de,fr,it,nl,pl,pt | bg,de,fr,pt |

| GNOME | eu,fr,ga,gl,pt | ga |

| JRC-Acquis | cs,da,et,fr,lt,lv,mt,nl,pl,ro,sv | |

| MultiCCAligned | bg,cs,de,el,et,fi,fr,hr,hu,it,lt,lv,nl,pl,ro,sk,sv | bg,fi,fr,hr,it,lv,nl,pt |

| MultiHPLT | et,fi,ga,hr,mt | |

| MultiParaCrawl | bg,da | de,fr,ga,hr,hu,it,mt,pt |

| MultiUN | fr | |

| News-Commentary | fr | |

| NLLB | bg,da,el,et,fi,fr,gl,hu,it,lt,lv,pt,ro,sk,sl | bg,cs,da,de,el,et,fi,fr,hu,it,lt,lv,nl,pl,pt,ro,sk,sl,sv |

| NTEU | bg,cs,da,de,el,et,fi,fr,ga,hr,hu,it,lt,lv,mt,nl,pl,pt,ro,sk,sl,sv | |

| OpenSubtitles | bg,cs,da,de,el,et,eu,fi,gl,hr,hu,lt,lv,nl,pl,pt,ro,sk,sl,sv | da,de,fi,fr,hr,hu,it,lv,nl |

| Tatoeba | de,pt | pt |

| TildeModel | bg | |

| UNPC | fr | |

| WikiMatrix | bg,cs,da,de,el,et,eu,fi,fr,gl,hr,hu,it,lt,nl,pl,pt,ro,sk,sl,sv | bg,fr,hr,it,pt |

| XLENT | eu,ga,gl | ga |

References

- Aulamo, M., Sulubacak, U., Virpioja, S., & Tiedemann, J. (2020). OpusTools and Parallel Corpus Diagnostics. In N. Calzolari, F. Béchet, P. Blache, K. Choukri, C. Cieri, T. Declerck, S. Goggi, H. Isahara, B. Maegaard, J. Mariani, H. Mazo, A. Moreno, J. Odijk, & S. Piperidis (Eds.), Proceedings of the Twelfth Language Resources and Evaluation Conference (pp. 3782–3789). European Language Resources Association. https://aclanthology.org/2020.lrec-1.467

- Chaudhary, V., Tang, Y., Guzmán, F., Schwenk, H., & Koehn, P. (2019). Low-Resource Corpus Filtering Using Multilingual Sentence Embeddings. In O. Bojar, R. Chatterjee, C. Federmann, M. Fishel, Y. Graham, B. Haddow, M. Huck, A. J. Yepes, P. Koehn, A. Martins, C. Monz, M. Negri, A. Névéol, M. Neves, M. Post, M. Turchi, & K. Verspoor (Eds.), Proceedings of the Fourth Conference on Machine Translation (Volume 3: Shared Task Papers, Day 2) (pp. 261–266). Association for Computational Linguistics. https://doi.org/10.18653/v1/W19-5435

- DGT-Translation Memory—European Commission. (n.d.). Retrieved November 4, 2024, from https://joint-research-centre.ec.europa.eu/language-technology-resources/dgt-translation-memory_en

- Eisele, A., & Chen, Y. (2010). MultiUN: A Multilingual Corpus from United Nation Documents. In N. Calzolari, K. Choukri, B. Maegaard, J. Mariani, J. Odijk, S. Piperidis, M. Rosner, & D. Tapias (Eds.), Proceedings of the Seventh International Conference on Language Resources and Evaluation (LREC’10). European Language Resources Association (ELRA). http://www.lrec-conf.org/proceedings/lrec2010/pdf/686_Paper.pdf

- El-Kishky, A., Chaudhary, V., Guzmán, F., & Koehn, P. (2020). CCAligned: A Massive Collection of Cross-Lingual Web-Document Pairs. Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), 5960–5969. https://doi.org/10.18653/v1/2020.emnlp-main.480

- El-Kishky, A., Renduchintala, A., Cross, J., Guzmán, F., & Koehn, P. (2021). XLEnt: Mining a Large Cross-lingual Entity Dataset with Lexical-Semantic-Phonetic Word Alignment. Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, 10424–10430. https://doi.org/10.18653/v1/2021.emnlp-main.814

- Fan, A., Bhosale, S., Schwenk, H., Ma, Z., El-Kishky, A., Goyal, S., Baines, M., Celebi, O., Wenzek, G., Chaudhary, V., Goyal, N., Birch, T., Liptchinsky, V., Edunov, S., Grave, E., Auli, M., & Joulin, A. (2020). Beyond English-Centric Multilingual Machine Translation (No. arXiv:2010.11125). arXiv. https://doi.org/10.48550/arXiv.2010.11125

- García-Martínez, M., Bié, L., Cerdà, A., Estela, A., Herranz, M., Krišlauks, R., Melero, M., O’Dowd, T., O’Gorman, S., Pinnis, M., Stafanovič, A., Superbo, R., & Vasiļevskis, A. (2021). Neural Translation for European Union (NTEU). 316–334. https://aclanthology.org/2021.mtsummit-up.23

- Gibert, O. de, Nail, G., Arefyev, N., Bañón, M., Linde, J. van der, Ji, S., Zaragoza-Bernabeu, J., Aulamo, M., Ramírez-Sánchez, G., Kutuzov, A., Pyysalo, S., Oepen, S., & Tiedemann, J. (2024). A New Massive Multilingual Dataset for High-Performance Language Technologies (No. arXiv:2403.14009). arXiv. http://arxiv.org/abs/2403.14009

- Koehn, P. (2005). Europarl: A Parallel Corpus for Statistical Machine Translation. Proceedings of Machine Translation Summit X: Papers, 79–86. https://aclanthology.org/2005.mtsummit-papers.11

- Kreutzer, J., Caswell, I., Wang, L., Wahab, A., Van Esch, D., Ulzii-Orshikh, N., Tapo, A., Subramani, N., Sokolov, A., Sikasote, C., Setyawan, M., Sarin, S., Samb, S., Sagot, B., Rivera, C., Rios, A., Papadimitriou, I., Osei, S., Suarez, P. O., … Adeyemi, M. (2022). Quality at a Glance: An Audit of Web-Crawled Multilingual Datasets. Transactions of the Association for Computational Linguistics, 10, 50–72. https://doi.org/10.1162/tacl_a_00447

- Rozis, R., & Skadiņš, R. (2017). Tilde MODEL - Multilingual Open Data for EU Languages. https://aclanthology.org/W17-0235

- Schwenk, H., Chaudhary, V., Sun, S., Gong, H., & Guzmán, F. (2019). WikiMatrix: Mining 135M Parallel Sentences in 1620 Language Pairs from Wikipedia (No. arXiv:1907.05791). arXiv. https://doi.org/10.48550/arXiv.1907.05791

- Schwenk, H., Wenzek, G., Edunov, S., Grave, E., & Joulin, A. (2020). CCMatrix: Mining Billions of High-Quality Parallel Sentences on the WEB (No. arXiv:1911.04944). arXiv. https://doi.org/10.48550/arXiv.1911.04944

- Steinberger, R., Pouliquen, B., Widiger, A., Ignat, C., Erjavec, T., Tufiş, D., & Varga, D. (n.d.). The JRC-Acquis: A Multilingual Aligned Parallel Corpus with 20+ Languages. http://www.lrec-conf.org/proceedings/lrec2006/pdf/340_pdf

- Subramani, N., Luccioni, S., Dodge, J., & Mitchell, M. (2023). Detecting Personal Information in Training Corpora: An Analysis. In A. Ovalle, K.-W. Chang, N. Mehrabi, Y. Pruksachatkun, A. Galystan, J. Dhamala, A. Verma, T. Cao, A. Kumar, & R. Gupta (Eds.), Proceedings of the 3rd Workshop on Trustworthy Natural Language Processing (TrustNLP 2023) (pp. 208–220). Association for Computational Linguistics. https://doi.org/10.18653/v1/2023.trustnlp-1.18

- Tiedemann, J. (2012). Parallel Data, Tools and Interfaces in OPUS. In N. Calzolari (Conference Chair), K. Choukri, T. Declerck, M. U. Doğan, B. Maegaard, J. Mariani, A. Moreno, J. Odijk, & S. Piperidis (Eds.), Proceedings of the Eighth International Conference on Language Resources and Evaluation (LREC’12). European Language Resources Association (ELRA). http://www.lrec-conf.org/proceedings/lrec2012/pdf/463_Paper

- Ziemski, M., Junczys-Dowmunt, M., & Pouliquen, B. (n.d.). The United Nations Parallel Corpus v1.0. https://aclanthology.org/L16-1561

Evaluation

Below are the evaluation results on Flores-200 dev and devtest, compared to NLLB-3.3B (Costa-jussà et al., 2022), for CA-XX and XX-CA directions. The metrics exclude Asturian, Aranese and Aragonese, which are reported separately. The evaluation was conducted using MT Lens with the standard setting: beam search with beam size 5, and translation length limited to 250 tokens. We report the following metrics:


- BLEU: Sacrebleu implementation. Signature: nrefs:1|case:mixed|eff:no|tok:13a|smooth:exp|version:2.3.1

- TER: Sacrebleu implementation.

- ChrF: Sacrebleu implementation.

- Comet: Model checkpoint: "Unbabel/wmt22-comet-da".

- Comet-kiwi: Model checkpoint: "Unbabel/wmt22-cometkiwi-da".

- Bleurt: Model checkpoint: "lucadiliello/BLEURT-20".

Flores200-dev

| Model | Bleu ↑ | Ter ↓ | ChrF ↑ | Comet ↑ | Comet-kiwi ↑ | Bleurt ↑ |

|---|---|---|---|---|---|---|

| CA-XX | ||||||

| SalamandraTA-2B | 27.41 | 60.88 | 56.27 | 0.86 | 0.82 | 0.76 |

| nllb 3.3B | 26.84 | 61.75 | 55.7 | 0.86 | 0.82 | 0.76 |

| XX-CA | ||||||

| SalamandraTA-2B | 30.75 | 57.66 | 57.6 | 0.85 | 0.81 | 0.73 |

| nllb 3.3B | 29.76 | 58.25 | 56.75 | 0.85 | 0.82 | 0.73 |

Full table: CA-XX, Flores200-dev

| Model | Source | Target | Bleu ↑ | Ter ↓ | ChrF ↑ | Comet ↑ | Comet-kiwi ↑ | Bleurt ↑ |

|---|---|---|---|---|---|---|---|---|

| nllb 3.3B | ca | sv | 33.05 | 53.98 | 60.09 | 0.88 | 0.83 | 0.79 |

| SalamandraTA-2B | ca | sv | 30.62 | 55.4 | 57.77 | 0.87 | 0.81 | 0.78 |

| SalamandraTA-2B | ca | sl | 25.74 | 63.78 | 54.29 | 0.88 | 0.83 | 0.81 |

| nllb 3.3B | ca | sl | 25.04 | 65.02 | 53.08 | 0.88 | 0.83 | 0.82 |

| SalamandraTA-2B | ca | sk | 26.03 | 62.58 | 53.53 | 0.89 | 0.84 | 0.8 |

| nllb 3.3B | ca | sk | 25.59 | 63.17 | 53.28 | 0.89 | 0.84 | 0.8 |

| SalamandraTA-2B | ca | ro | 33.08 | 54.36 | 59.18 | 0.89 | 0.85 | 0.8 |

| nllb 3.3B | ca | ro | 31.91 | 55.46 | 58.36 | 0.89 | 0.85 | 0.81 |

| SalamandraTA-2B | ca | pt | 37.6 | 48.82 | 62.73 | 0.88 | 0.84 | 0.76 |

| nllb 3.3B | ca | pt | 36.85 | 49.56 | 62.02 | 0.88 | 0.85 | 0.76 |

| nllb 3.3B | ca | pl | 17.97 | 73.06 | 47.94 | 0.88 | 0.84 | 0.78 |

| SalamandraTA-2B | ca | pl | 17.85 | 72.67 | 47.77 | 0.88 | 0.84 | 0.78 |

| SalamandraTA-2B | ca | nl | 23.88 | 64.95 | 54.46 | 0.85 | 0.84 | 0.75 |

| nllb 3.3B | ca | nl | 23.26 | 66.46 | 54.17 | 0.85 | 0.85 | 0.75 |

| SalamandraTA-2B | ca | mt | 25.62 | 59.08 | 60.83 | 0.69 | 0.61 | 0.43 |

| nllb 3.3B | ca | mt | 25.37 | 59.47 | 60.1 | 0.69 | 0.63 | 0.39 |

| SalamandraTA-2B | ca | lv | 21.23 | 71.48 | 49.47 | 0.82 | 0.79 | 0.73 |

| nllb 3.3B | ca | lv | 20.56 | 70.88 | 50.07 | 0.85 | 0.78 | 0.77 |

| SalamandraTA-2B | ca | lt | 19.92 | 71.02 | 50.88 | 0.87 | 0.8 | 0.81 |

| nllb 3.3B | ca | lt | 18.82 | 71.8 | 51.84 | 0.87 | 0.82 | 0.82 |

| SalamandraTA-2B | ca | it | 26.76 | 60.67 | 56.3 | 0.88 | 0.85 | 0.77 |

| nllb 3.3B | ca | it | 26.42 | 61.47 | 55.66 | 0.87 | 0.86 | 0.77 |

| SalamandraTA-2B | ca | hu | 22.8 | 66.41 | 53.41 | 0.86 | 0.82 | 0.85 |

| nllb 3.3B | ca | hu | 21.2 | 68.54 | 51.99 | 0.87 | 0.83 | 0.87 |

| SalamandraTA-2B | ca | hr | 26.24 | 61.83 | 55.87 | 0.89 | 0.84 | 0.81 |

| nllb 3.3B | ca | hr | 24.04 | 64.25 | 53.79 | 0.89 | 0.85 | 0.82 |

| nllb 3.3B | ca | gl | 32.85 | 51.69 | 59.33 | 0.87 | 0.85 | 0.72 |

| SalamandraTA-2B | ca | gl | 31.84 | 52.52 | 59.16 | 0.87 | 0.84 | 0.71 |

| SalamandraTA-2B | ca | ga | 25.24 | 63.36 | 53.24 | 0.78 | 0.64 | 0.62 |

| nllb 3.3B | ca | ga | 23.51 | 66.54 | 51.53 | 0.77 | 0.66 | 0.62 |

| SalamandraTA-2B | ca | fr | 40.14 | 48.34 | 64.24 | 0.86 | 0.84 | 0.73 |

| nllb 3.3B | ca | fr | 39.8 | 48.96 | 63.97 | 0.86 | 0.85 | 0.74 |

| nllb 3.3B | ca | fi | 18.63 | 71.42 | 52.71 | 0.89 | 0.82 | 0.82 |

| SalamandraTA-2B | ca | fi | 18.49 | 71.46 | 52.09 | 0.88 | 0.8 | 0.8 |

| SalamandraTA-2B | ca | eu | 18.75 | 71.09 | 57.05 | 0.87 | 0.81 | 0.8 |

| nllb 3.3B | ca | eu | 13.15 | 77.69 | 50.35 | 0.83 | 0.75 | 0.75 |

| SalamandraTA-2B | ca | et | 22.03 | 67.55 | 54.87 | 0.88 | 0.8 | 0.79 |

| nllb 3.3B | ca | et | 20.07 | 70.66 | 53.19 | 0.88 | 0.81 | 0.8 |

| nllb 3.3B | ca | es | 25.59 | 60.39 | 53.7 | 0.86 | 0.86 | 0.74 |

| SalamandraTA-2B | ca | es | 24.46 | 61.54 | 53.02 | 0.86 | 0.86 | 0.74 |

| nllb 3.3B | ca | en | 49.62 | 37.33 | 71.65 | 0.89 | 0.86 | 0.8 |

| SalamandraTA-2B | ca | en | 46.62 | 40.03 | 70.23 | 0.88 | 0.86 | 0.79 |

| SalamandraTA-2B | ca | el | 23.38 | 63 | 50.03 | 0.87 | 0.84 | 0.74 |

| nllb 3.3B | ca | el | 22.62 | 63.73 | 49.5 | 0.87 | 0.84 | 0.74 |

| SalamandraTA-2B | ca | de | 31.89 | 57.12 | 59.07 | 0.84 | 0.83 | 0.75 |

| nllb 3.3B | ca | de | 31.19 | 57.87 | 58.47 | 0.85 | 0.84 | 0.76 |

| SalamandraTA-2B | ca | da | 34.69 | 53.31 | 61.11 | 0.87 | 0.82 | 0.75 |

| nllb 3.3B | ca | da | 34.32 | 54.2 | 60.2 | 0.88 | 0.83 | 0.77 |

| SalamandraTA-2B | ca | cs | 25.67 | 63.37 | 53.07 | 0.89 | 0.85 | 0.79 |

| nllb 3.3B | ca | cs | 25.02 | 63.59 | 52.43 | 0.89 | 0.85 | 0.79 |

| SalamandraTA-2B | ca | bg | 32.09 | 57.01 | 59.4 | 0.89 | 0.85 | 0.84 |

| nllb 3.3B | ca | bg | 31.24 | 58.41 | 58.81 | 0.89 | 0.86 | 0.85 |
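The CA-XX summary rows appear to be unweighted means over the 26 per-direction scores: 30 languages, minus Catalan and the three separately reported varieties. For example, averaging SalamandraTA-2B's BLEU scores from the table above reproduces the 27.41 in the Flores200-dev summary. This is an inference from the numbers, not a documented procedure. Other cells may differ in the last digit if the averages were taken over unrounded scores.

```python
from statistics import mean

# SalamandraTA-2B BLEU on Flores200-dev, CA-XX, copied from the table above
bleu_ca_xx = {
    "sv": 30.62, "sl": 25.74, "sk": 26.03, "ro": 33.08, "pt": 37.60,
    "pl": 17.85, "nl": 23.88, "mt": 25.62, "lv": 21.23, "lt": 19.92,
    "it": 26.76, "hu": 22.80, "hr": 26.24, "gl": 31.84, "ga": 25.24,
    "fr": 40.14, "fi": 18.49, "eu": 18.75, "et": 22.03, "es": 24.46,
    "en": 46.62, "el": 23.38, "de": 31.89, "da": 34.69, "cs": 25.67,
    "bg": 32.09,
}

# Unweighted (macro) average over translation directions
macro_avg = mean(bleu_ca_xx.values())
print(len(bleu_ca_xx), f"{macro_avg:.2f}")  # 26 27.41
```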

Full table: XX-CA, Flores200-dev

| Model | Source | Target | Bleu ↑ | Ter ↓ | ChrF ↑ | Comet ↑ | Comet-kiwi ↑ | Bleurt ↑ |

|---|---|---|---|---|---|---|---|---|

| SalamandraTA-2B | sv | ca | 34.21 | 53 | 59.52 | 0.86 | 0.83 | 0.74 |

| nllb 3.3B | sv | ca | 33.03 | 53.42 | 59.02 | 0.86 | 0.84 | 0.75 |

| SalamandraTA-2B | sl | ca | 28.98 | 59.95 | 56.24 | 0.85 | 0.82 | 0.72 |

| nllb 3.3B | sl | ca | 27.51 | 61.23 | 54.96 | 0.85 | 0.83 | 0.72 |

| SalamandraTA-2B | sk | ca | 30.61 | 58.1 | 57.53 | 0.86 | 0.81 | 0.73 |

| nllb 3.3B | sk | ca | 29.24 | 58.93 | 56.29 | 0.86 | 0.83 | 0.73 |

| SalamandraTA-2B | ro | ca | 33.73 | 54.23 | 60.11 | 0.87 | 0.83 | 0.75 |

| nllb 3.3B | ro | ca | 32.9 | 54.71 | 59.56 | 0.87 | 0.84 | 0.75 |

| SalamandraTA-2B | pt | ca | 35.99 | 50.64 | 61.52 | 0.87 | 0.84 | 0.76 |

| nllb 3.3B | pt | ca | 34.63 | 51.15 | 60.68 | 0.87 | 0.84 | 0.76 |

| SalamandraTA-2B | pl | ca | 25.77 | 64.99 | 53.46 | 0.84 | 0.82 | 0.71 |

| nllb 3.3B | pl | ca | 24.41 | 65.69 | 52.45 | 0.85 | 0.83 | 0.71 |

| SalamandraTA-2B | nl | ca | 26.04 | 64.09 | 53.64 | 0.84 | 0.84 | 0.71 |

| nllb 3.3B | nl | ca | 25.35 | 64.64 | 53.15 | 0.84 | 0.85 | 0.71 |

| SalamandraTA-2B | mt | ca | 37.51 | 50.18 | 62.42 | 0.79 | 0.69 | 0.75 |

| nllb 3.3B | mt | ca | 36.29 | 51.01 | 61.24 | 0.79 | 0.7 | 0.75 |

| SalamandraTA-2B | lv | ca | 27.14 | 62.61 | 55.6 | 0.84 | 0.78 | 0.7 |

| nllb 3.3B | lv | ca | 27.02 | 61.12 | 54.28 | 0.84 | 0.79 | 0.71 |

| SalamandraTA-2B | lt | ca | 27.76 | 61.3 | 54.52 | 0.84 | 0.76 | 0.71 |

| nllb 3.3B | lt | ca | 26.05 | 62.75 | 53.4 | 0.84 | 0.77 | 0.71 |

| SalamandraTA-2B | it | ca | 28.44 | 61.09 | 57.12 | 0.87 | 0.85 | 0.74 |

| nllb 3.3B | it | ca | 27.79 | 61.42 | 56.62 | 0.87 | 0.86 | 0.74 |

| SalamandraTA-2B | hu | ca | 28.15 | 60.01 | 55.29 | 0.85 | 0.81 | 0.72 |

| nllb 3.3B | hu | ca | 27.06 | 60.44 | 54.38 | 0.85 | 0.83 | 0.72 |

| SalamandraTA-2B | hr | ca | 29.89 | 58.61 | 56.62 | 0.85 | 0.82 | 0.72 |

| nllb 3.3B | hr | ca | 28.23 | 59.55 | 55.37 | 0.86 | 0.84 | 0.73 |

| nllb 3.3B | gl | ca | 34.28 | 52.34 | 60.86 | 0.87 | 0.85 | 0.76 |

| SalamandraTA-2B | gl | ca | 32.14 | 54.03 | 60.3 | 0.87 | 0.84 | 0.75 |

| SalamandraTA-2B | ga | ca | 28.59 | 61.13 | 55.61 | 0.8 | 0.69 | 0.68 |

| nllb 3.3B | ga | ca | 28.09 | 61.12 | 54.55 | 0.8 | 0.7 | 0.68 |

| SalamandraTA-2B | fr | ca | 34.53 | 52.9 | 60.38 | 0.87 | 0.83 | 0.76 |

| nllb 3.3B | fr | ca | 33.61 | 53.57 | 59.73 | 0.87 | 0.84 | 0.76 |

| SalamandraTA-2B | fi | ca | 26.71 | 62.19 | 54.09 | 0.86 | 0.8 | 0.71 |

| nllb 3.3B | fi | ca | 26.31 | 62.6 | 54.06 | 0.86 | 0.82 | 0.71 |

| SalamandraTA-2B | eu | ca | 27.93 | 60.26 | 55.27 | 0.87 | 0.83 | 0.73 |

| nllb 3.3B | eu | ca | 26.43 | 63.76 | 53.75 | 0.86 | 0.82 | 0.72 |

| SalamandraTA-2B | et | ca | 30.03 | 58.25 | 56.88 | 0.86 | 0.79 | 0.72 |

| nllb 3.3B | et | ca | 27.56 | 59.95 | 54.92 | 0.86 | 0.8 | 0.72 |

| nllb 3.3B | es | ca | 25.33 | 64.23 | 55.1 | 0.86 | 0.84 | 0.73 |

| SalamandraTA-2B | es | ca | 22.95 | 67.1 | 53.67 | 0.86 | 0.84 | 0.72 |

| SalamandraTA-2B | en | ca | 43.55 | 42.62 | 67.03 | 0.88 | 0.85 | 0.78 |

| nllb 3.3B | en | ca | 42.21 | 43.63 | 65.95 | 0.88 | 0.85 | 0.78 |

| SalamandraTA-2B | el | ca | 28.52 | 60.34 | 54.99 | 0.85 | 0.83 | 0.71 |

| nllb 3.3B | el | ca | 27.36 | 60.49 | 54.76 | 0.85 | 0.85 | 0.72 |

| SalamandraTA-2B | de | ca | 33.07 | 54.46 | 59.06 | 0.85 | 0.84 | 0.74 |

| nllb 3.3B | de | ca | 31.43 | 56.05 | 57.95 | 0.86 | 0.85 | 0.74 |

| SalamandraTA-2B | da | ca | 34.6 | 53.22 | 60.43 | 0.86 | 0.83 | 0.75 |

| nllb 3.3B | da | ca | 32.71 | 54.2 | 58.9 | 0.86 | 0.84 | 0.75 |

| SalamandraTA-2B | cs | ca | 30.92 | 57.54 | 57.71 | 0.86 | 0.82 | 0.73 |

| nllb 3.3B | cs | ca | 29.02 | 58.78 | 56.44 | 0.86 | 0.83 | 0.73 |

| SalamandraTA-2B | bg | ca | 31.68 | 56.32 | 58.61 | 0.85 | 0.84 | 0.73 |

| nllb 3.3B | bg | ca | 29.87 | 57.75 | 57.26 | 0.85 | 0.85 | 0.73 |

Flores200-devtest

| Model | Bleu ↑ | Ter ↓ | ChrF ↑ | Comet ↑ | Comet-kiwi ↑ | Bleurt ↑ |

|---|---|---|---|---|---|---|

| CA-XX | ||||||

| SalamandraTA-2B | 27.09 | 61.06 | 56.41 | 0.86 | 0.81 | 0.75 |

| nllb 3.3B | 26.7 | 61.74 | 55.85 | 0.86 | 0.82 | 0.76 |

| XX-CA | ||||||

| SalamandraTA-2B | 31 | 57.46 | 57.96 | 0.85 | 0.81 | 0.73 |

| nllb 3.3B | 30.31 | 58.26 | 57.12 | 0.85 | 0.82 | 0.73 |

Full table: CA-XX, Flores200-devtest

| Model | Source | Target | Bleu ↑ | Ter ↓ | ChrF ↑ | Comet ↑ | Comet-kiwi ↑ | Bleurt ↑ |

|---|---|---|---|---|---|---|---|---|

| nllb 3.3B | ca | sv | 32.49 | 55.11 | 59.93 | 0.88 | 0.82 | 0.79 |

| SalamandraTA-2B | ca | sv | 30.53 | 56.24 | 58.05 | 0.87 | 0.8 | 0.77 |

| SalamandraTA-2B | ca | sl | 25.16 | 64.25 | 53.88 | 0.87 | 0.82 | 0.8 |

| nllb 3.3B | ca | sl | 24.64 | 66.02 | 52.71 | 0.88 | 0.82 | 0.81 |

| SalamandraTA-2B | ca | sk | 25.64 | 63.03 | 53.55 | 0.88 | 0.83 | 0.79 |

| nllb 3.3B | ca | sk | 25.44 | 63.29 | 53.37 | 0.89 | 0.84 | 0.79 |

| SalamandraTA-2B | ca | ro | 33.21 | 54.27 | 59.53 | 0.89 | 0.84 | 0.8 |

| nllb 3.3B | ca | ro | 31.29 | 56.44 | 58.16 | 0.89 | 0.85 | 0.8 |

| SalamandraTA-2B | ca | pt | 37.9 | 48.95 | 63.15 | 0.88 | 0.84 | 0.75 |

| nllb 3.3B | ca | pt | 37.31 | 49.31 | 62.7 | 0.88 | 0.85 | 0.75 |

| SalamandraTA-2B | ca | pl | 18.62 | 71.88 | 48.44 | 0.88 | 0.83 | 0.77 |

| nllb 3.3B | ca | pl | 18.01 | 72.23 | 48.26 | 0.88 | 0.83 | 0.77 |

| SalamandraTA-2B | ca | nl | 23.4 | 65.66 | 54.55 | 0.85 | 0.84 | 0.74 |

| nllb 3.3B | ca | nl | 22.99 | 66.68 | 53.95 | 0.85 | 0.84 | 0.75 |

| nllb 3.3B | ca | mt | 24.78 | 59.97 | 59.58 | 0.68 | 0.62 | 0.36 |

| SalamandraTA-2B | ca | mt | 24.35 | 60.1 | 60.51 | 0.69 | 0.6 | 0.4 |

| SalamandraTA-2B | ca | lv | 20.55 | 71.85 | 50.24 | 0.82 | 0.78 | 0.74 |

| nllb 3.3B | ca | lv | 20.16 | 70.37 | 50.3 | 0.85 | 0.78 | 0.78 |

| SalamandraTA-2B | ca | lt | 20.37 | 70.15 | 51.61 | 0.88 | 0.79 | 0.82 |

| nllb 3.3B | ca | lt | 19.95 | 70.47 | 52.49 | 0.88 | 0.81 | 0.81 |

| SalamandraTA-2B | ca | it | 27.18 | 60.37 | 56.65 | 0.88 | 0.85 | 0.77 |

| nllb 3.3B | ca | it | 26.83 | 60.96 | 56.33 | 0.88 | 0.85 | 0.77 |

| SalamandraTA-2B | ca | hu | 21.76 | 66.96 | 53.45 | 0.86 | 0.81 | 0.85 |

| nllb 3.3B | ca | hu | 20.54 | 68.28 | 52.2 | 0.87 | 0.82 | 0.87 |

| SalamandraTA-2B | ca | hr | 25.41 | 62.55 | 55.65 | 0.89 | 0.84 | 0.81 |

| nllb 3.3B | ca | hr | 24.01 | 64.39 | 53.95 | 0.89 | 0.84 | 0.82 |

| nllb 3.3B | ca | gl | 32.33 | 52.64 | 59.3 | 0.87 | 0.85 | 0.71 |

| SalamandraTA-2B | ca | gl | 31.97 | 52.76 | 59.48 | 0.87 | 0.84 | 0.7 |

| SalamandraTA-2B | ca | ga | 23.19 | 66.3 | 51.99 | 0.77 | 0.64 | 0.6 |

| nllb 3.3B | ca | ga | 22.38 | 67.76 | 50.92 | 0.77 | 0.66 | 0.6 |

| nllb 3.3B | ca | fr | 40.82 | 47.72 | 64.82 | 0.86 | 0.85 | 0.74 |

| SalamandraTA-2B | ca | fr | 40.35 | 47.79 | 64.56 | 0.86 | 0.84 | 0.73 |

| nllb 3.3B | ca | fi | 18.93 | 70.8 | 53.03 | 0.89 | 0.81 | 0.82 |

| SalamandraTA-2B | ca | fi | 18.92 | 70.69 | 52.85 | 0.88 | 0.8 | 0.8 |

| SalamandraTA-2B | ca | eu | 18.33 | 72 | 56.65 | 0.86 | 0.81 | 0.79 |

| nllb 3.3B | ca | eu | 12.79 | 78.69 | 50.19 | 0.83 | 0.75 | 0.75 |

| SalamandraTA-2B | ca | et | 21.45 | 67.08 | 55.01 | 0.88 | 0.8 | 0.79 |

| nllb 3.3B | ca | et | 19.84 | 70.08 | 53.48 | 0.88 | 0.8 | 0.79 |

| nllb 3.3B | ca | es | 25.87 | 59.66 | 54.06 | 0.86 | 0.86 | 0.74 |

| SalamandraTA-2B | ca | es | 24.73 | 60.79 | 53.48 | 0.86 | 0.86 | 0.73 |

| nllb 3.3B | ca | en | 48.41 | 38.1 | 71.29 | 0.89 | 0.86 | 0.8 |

| SalamandraTA-2B | ca | en | 45.19 | 41.18 | 69.46 | 0.88 | 0.85 | 0.78 |

| SalamandraTA-2B | ca | el | 22.78 | 63.17 | 49.97 | 0.87 | 0.83 | 0.73 |

| nllb 3.3B | ca | el | 22.59 | 63.8 | 49.33 | 0.87 | 0.83 | 0.73 |

| SalamandraTA-2B | ca | de | 31.31 | 57.16 | 59.42 | 0.85 | 0.83 | 0.75 |

| nllb 3.3B | ca | de | 31.25 | 57.87 | 59.05 | 0.85 | 0.83 | 0.75 |

| SalamandraTA-2B | ca | da | 34.83 | 53.16 | 61.44 | 0.88 | 0.82 | 0.75 |

| nllb 3.3B | ca | da | 34.43 | 53.82 | 60.73 | 0.88 | 0.83 | 0.76 |

| SalamandraTA-2B | ca | cs | 24.98 | 63.45 | 53.11 | 0.89 | 0.84 | 0.77 |

| nllb 3.3B | ca | cs | 24.73 | 63.94 | 52.66 | 0.89 | 0.85 | 0.78 |

| SalamandraTA-2B | ca | bg | 32.25 | 55.76 | 59.85 | 0.89 | 0.85 | 0.84 |

| nllb 3.3B | ca | bg | 31.45 | 56.93 | 59.29 | 0.89 | 0.85 | 0.85 |

Full table: XX-CA, Flores200-devtest

| Model | Source | Target | Bleu ↑ | Ter ↓ | ChrF ↑ | Comet ↑ | Comet-kiwi ↑ | Bleurt ↑ |

|---|---|---|---|---|---|---|---|---|

| SalamandraTA-2B | sv | ca | 34.4 | 52.6 | 59.96 | 0.86 | 0.82 | 0.73 |

| nllb 3.3B | sv | ca | 33.4 | 53.19 | 59.29 | 0.86 | 0.83 | 0.74 |

| SalamandraTA-2B | sl | ca | 29.12 | 59.26 | 56.56 | 0.85 | 0.8 | 0.71 |

| nllb 3.3B | sl | ca | 28.23 | 60.61 | 55.34 | 0.85 | 0.82 | 0.72 |

| SalamandraTA-2B | sk | ca | 30.71 | 57.99 | 57.81 | 0.85 | 0.8 | 0.72 |

| nllb 3.3B | sk | ca | 29.79 | 58.99 | 56.61 | 0.85 | 0.82 | 0.73 |

| SalamandraTA-2B | ro | ca | 34.79 | 53.37 | 61.22 | 0.87 | 0.83 | 0.75 |

| nllb 3.3B | ro | ca | 33.53 | 54.36 | 60.18 | 0.87 | 0.84 | 0.75 |

| SalamandraTA-2B | pt | ca | 36.72 | 50.64 | 62.08 | 0.87 | 0.84 | 0.76 |

| nllb 3.3B | pt | ca | 36.11 | 50.96 | 61.33 | 0.87 | 0.84 | 0.76 |

| SalamandraTA-2B | pl | ca | 25.62 | 64.15 | 53.55 | 0.85 | 0.81 | 0.71 |

| nllb 3.3B | pl | ca | 25.14 | 64.43 | 53.09 | 0.85 | 0.83 | 0.71 |

| SalamandraTA-2B | nl | ca | 26.17 | 63.88 | 54.01 | 0.84 | 0.83 | 0.7 |

| nllb 3.3B | nl | ca | 25.61 | 64.26 | 53.43 | 0.84 | 0.85 | 0.71 |

| SalamandraTA-2B | mt | ca | 36.97 | 50.43 | 62.69 | 0.79 | 0.68 | 0.75 |

| nllb 3.3B | mt | ca | 36.03 | 51.51 | 61.46 | 0.79 | 0.69 | 0.74 |

| SalamandraTA-2B | lv | ca | 27.81 | 61.96 | 56.12 | 0.84 | 0.77 | 0.7 |

| nllb 3.3B | lv | ca | 26.83 | 63.33 | 53.93 | 0.84 | 0.78 | 0.7 |

| SalamandraTA-2B | lt | ca | 27.29 | 61.15 | 54.14 | 0.84 | 0.75 | 0.7 |

| nllb 3.3B | lt | ca | 26.13 | 62.2 | 53.17 | 0.84 | 0.77 | 0.7 |

| SalamandraTA-2B | it | ca | 29.12 | 60.95 | 57.85 | 0.87 | 0.85 | 0.74 |

| nllb 3.3B | it | ca | 28.06 | 61.81 | 57.06 | 0.87 | 0.85 | 0.74 |

| SalamandraTA-2B | hu | ca | 28.21 | 60.54 | 55.38 | 0.85 | 0.81 | 0.71 |

| nllb 3.3B | hu | ca | 27.58 | 60.77 | 54.76 | 0.85 | 0.83 | 0.72 |

| SalamandraTA-2B | hr | ca | 30.13 | 57.59 | 57.25 | 0.86 | 0.81 | 0.72 |

| nllb 3.3B | hr | ca | 29.15 | 62.59 | 56.04 | 0.86 | 0.83 | 0.72 |

| nllb 3.3B | gl | ca | 34.23 | 53.25 | 61.28 | 0.88 | 0.85 | 0.76 |

| SalamandraTA-2B | gl | ca | 32.09 | 54.77 | 60.42 | 0.87 | 0.84 | 0.75 |

| SalamandraTA-2B | ga | ca | 28.11 | 62.93 | 55.28 | 0.8 | 0.68 | 0.67 |

| nllb 3.3B | ga | ca | 27.73 | 62.91 | 53.93 | 0.79 | 0.69 | 0.66 |

| SalamandraTA-2B | fr | ca | 35.87 | 52.28 | 61.2 | 0.87 | 0.83 | 0.75 |

| nllb 3.3B | fr | ca | 34.42 | 53.05 | 60.31 | 0.87 | 0.84 | 0.76 |

| SalamandraTA-2B | fi | ca | 27.35 | 61.33 | 54.95 | 0.86 | 0.8 | 0.7 |

| nllb 3.3B | fi | ca | 27.04 | 62.35 | 54.48 | 0.86 | 0.81 | 0.71 |

| SalamandraTA-2B | eu | ca | 28.02 | 60.45 | 55.44 | 0.87 | 0.82 | 0.73 |

| nllb 3.3B | eu | ca | 26.68 | 62.62 | 54.22 | 0.86 | 0.82 | 0.71 |

| SalamandraTA-2B | et | ca | 29.84 | 58.79 | 56.74 | 0.86 | 0.78 | 0.72 |

| nllb 3.3B | et | ca | 28.43 | 60.01 | 55.48 | 0.86 | 0.79 | 0.72 |

| nllb 3.3B | es | ca | 25.64 | 64.21 | 55.18 | 0.87 | 0.85 | 0.73 |

| SalamandraTA-2B | es | ca | 23.47 | 66.71 | 54.05 | 0.86 | 0.84 | 0.72 |

| SalamandraTA-2B | en | ca | 43.98 | 42.35 | 67.3 | 0.87 | 0.85 | 0.77 |

| nllb 3.3B | en | ca | 43.24 | 43.37 | 66.58 | 0.88 | 0.85 | 0.78 |

| SalamandraTA-2B | el | ca | 28.91 | 59.86 | 55.26 | 0.85 | 0.83 | 0.71 |

| nllb 3.3B | el | ca | 28.46 | 60.28 | 55.13 | 0.85 | 0.84 | 0.72 |

| SalamandraTA-2B | de | ca | 33.71 | 54.06 | 59.79 | 0.86 | 0.83 | 0.74 |

| nllb 3.3B | de | ca | 32.71 | 54.91 | 58.91 | 0.86 | 0.84 | 0.74 |

| SalamandraTA-2B | da | ca | 35.14 | 52.51 | 60.81 | 0.86 | 0.82 | 0.74 |

| nllb 3.3B | da | ca | 34.03 | 53.41 | 59.46 | 0.86 | 0.83 | 0.75 |

| SalamandraTA-2B | cs | ca | 31.12 | 56.71 | 58.22 | 0.86 | 0.81 | 0.73 |

| nllb 3.3B | cs | ca | 29.26 | 58.38 | 56.53 | 0.86 | 0.82 | 0.73 |

| SalamandraTA-2B | bg | ca | 31.33 | 56.72 | 58.75 | 0.85 | 0.84 | 0.73 |

| nllb 3.3B | bg | ca | 30.5 | 57.03 | 57.92 | 0.85 | 0.85 | 0.73 |

Evaluation on Aranese, Aragonese and Asturian

Using MT Lens, we evaluate the Spanish-Asturian (ast), Spanish-Aragonese (an) and Spanish-Aranese (arn) directions with BLEU and ChrF on the Flores+ dev set. We also report BLEU and ChrF scores for the corresponding directions from Catalan.
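The scores below are produced by MT Lens. To make the two metrics concrete, here is a minimal, stdlib-only sketch of what corpus-level BLEU and chrF measure. It is not MT Lens code and not a reference implementation: the function names are hypothetical, tokenization is plain whitespace splitting (an assumption; standard tools use dedicated tokenizers), and BLEU is unsmoothed, so its numbers will not exactly match the tables.

```python
import math
from collections import Counter


def word_ngrams(tokens: list[str], n: int) -> Counter:
    """Count word n-grams of order n in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def char_ngrams(text: str, n: int) -> Counter:
    """Count character n-grams of order n after removing all whitespace."""
    chars = "".join(text.split())
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))


def corpus_bleu(hyps: list[str], refs: list[str], max_n: int = 4) -> float:
    """Unsmoothed corpus BLEU, one reference per segment, whitespace tokens (assumption)."""
    matches = [0] * max_n
    totals = [0] * max_n
    hyp_len = ref_len = 0
    for hyp, ref in zip(hyps, refs):
        h_tok, r_tok = hyp.split(), ref.split()
        hyp_len += len(h_tok)
        ref_len += len(r_tok)
        for n in range(1, max_n + 1):
            h_counts, r_counts = word_ngrams(h_tok, n), word_ngrams(r_tok, n)
            # Clipped matches: a hypothesis n-gram counts at most as often as in the reference.
            matches[n - 1] += sum((h_counts & r_counts).values())
            totals[n - 1] += sum(h_counts.values())
    if hyp_len == 0 or min(matches) == 0:
        return 0.0  # no smoothing: any zero n-gram precision yields BLEU = 0
    # Geometric mean of the clipped n-gram precisions.
    log_precision = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    # Brevity penalty for output that is shorter than the references.
    bp = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return 100 * bp * math.exp(log_precision)


def corpus_chrf(hyps: list[str], refs: list[str], max_n: int = 6, beta: float = 2.0) -> float:
    """Corpus chrF: average char n-gram precision and recall over orders 1..max_n, then F-beta."""
    avg_p = avg_r = 0.0
    for n in range(1, max_n + 1):
        match = hyp_total = ref_total = 0
        for hyp, ref in zip(hyps, refs):
            h_counts, r_counts = char_ngrams(hyp, n), char_ngrams(ref, n)
            match += sum((h_counts & r_counts).values())
            hyp_total += sum(h_counts.values())
            ref_total += sum(r_counts.values())
        avg_p += match / hyp_total if hyp_total else 0.0
        avg_r += match / ref_total if ref_total else 0.0
    avg_p /= max_n
    avg_r /= max_n
    if avg_p + avg_r == 0:
        return 0.0
    # beta = 2 weights recall more heavily than precision.
    b2 = beta ** 2
    return 100 * (1 + b2) * avg_p * avg_r / (b2 * avg_p + avg_r)


if __name__ == "__main__":
    hyps = ["the cat sat on the mat"]
    refs = ["the cat sat on a mat"]
    print(f"BLEU = {corpus_bleu(hyps, refs):.2f}, chrF = {corpus_chrf(hyps, refs):.2f}")
```

Both functions pool n-gram statistics over the whole test set before combining them, so a corpus score is not the average of per-sentence scores.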

Asturian Flores+ dev

Below are the evaluation results, compared against Apertium, Eslema and NLLB (Costa-jussà et al., 2022).

| Model | Source | Target | BLEU | ChrF |
|---|---|---|---|---|
| nllb 3.3B | es | ast | 18.78 | 50.5 |
| Eslema | es | ast | 17.30 | 50.77 |
| nllb 600M | es | ast | 17.23 | 49.72 |
| SalamandraTA-2B | es | ast | 17.11 | 49.49 |
| Apertium | es | ast | 16.66 | 50.57 |
| nllb 3.3B | ca | ast | 25.87 | 54.9 |
| SalamandraTA-2B | ca | ast | 25.17 | 55.17 |

Aragonese Flores+ dev

Below are the evaluation results, compared against Apertium, Softcatalà and Traduze.

| Model | Source | Target | BLEU | ChrF |
|---|---|---|---|---|
| Apertium | es | an | 65.34 | 82.00 |
| Softcatalà | es | an | 50.21 | 73.97 |
| SalamandraTA-2B | es | an | 49.13 | 74.22 |
| Traduze | es | an | 37.43 | 69.51 |
| SalamandraTA-2B | ca | an | 17.06 | 49.12 |

Aranese Flores+ dev

Below are the evaluation results, compared against Apertium and Softcatalà.

| Model | Source | Target | BLEU | ChrF |
|---|---|---|---|---|
| Apertium | es | arn | 48.96 | 72.63 |
| Softcatalà | es | arn | 34.43 | 58.61 |
| SalamandraTA-2B | es | arn | 34.35 | 57.78 |
| SalamandraTA-2B | ca | arn | 21.95 | 48.67 |

Ethical Considerations and Limitations

Detailed information on the work done to examine the presence of unwanted social and cognitive biases in the base model can be found in the Salamandra-2B model card. For the MT models, no specific analysis has yet been carried out to evaluate potential biases or limitations in translation accuracy across languages, dialects, or domains. However, we recognize the importance of identifying and addressing harmful stereotypes, cultural inaccuracies, and systematic performance discrepancies that may arise in machine translation. We therefore plan to carry out further analyses once the necessary metrics and methods have been implemented in our evaluation framework, MT Lens.

Additional information

Author

The Language Technologies Unit from Barcelona Supercomputing Center.

Contact

For further information, please send an email to langtech@bsc.es.

Copyright

Copyright (c) 2024 by Language Technologies Unit, Barcelona Supercomputing Center.

Funding

This work has been promoted and financed by the Government of Catalonia through the Aina Project.

This work is funded by the Ministerio para la Transformación Digital y de la Función Pública and by the EU (NextGenerationEU), within the framework of the ILENIA Project with reference 2022/TL22/00215337.

Disclaimer

Be aware that the model may contain biases or other unintended distortions. When third parties deploy systems or provide services based on this model, or use the model themselves, they bear the responsibility for mitigating any associated risks and ensuring compliance with applicable regulations, including those governing the use of Artificial Intelligence.

The Barcelona Supercomputing Center, as the owner and creator of the model, shall not be held liable for any outcomes resulting from third-party use.

License

Apache License, Version 2.0