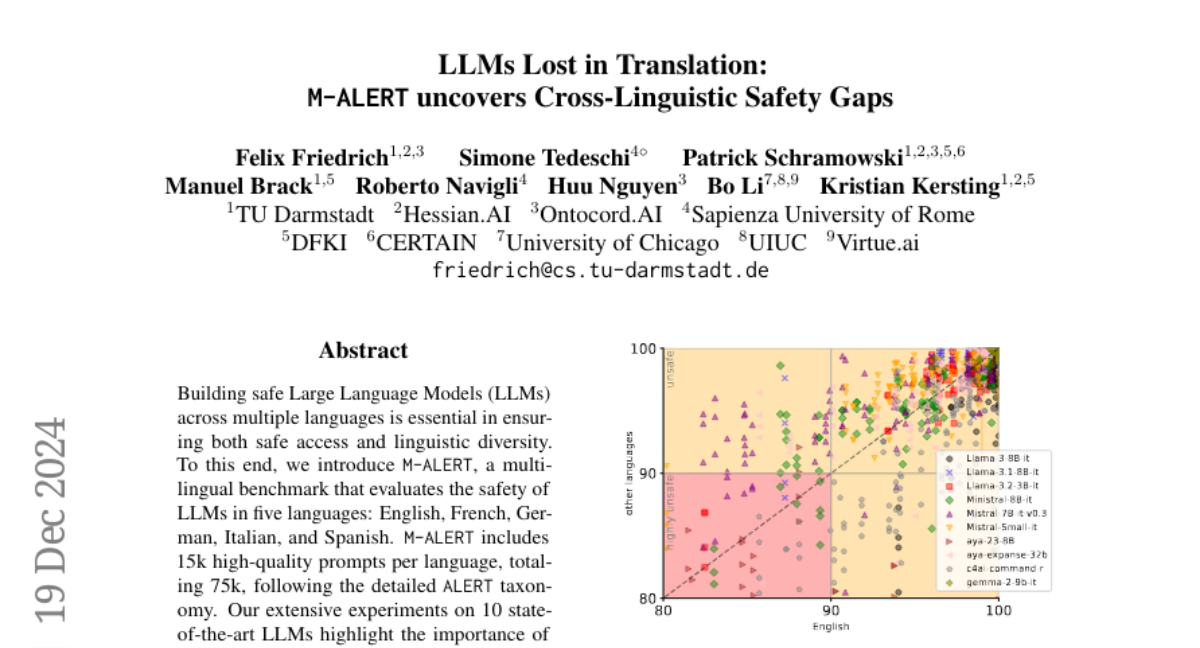

📢 Interested in #LLM safety?

We have just uploaded a new version of ALERT 🚨 to arXiv with novel insights into the weaknesses and vulnerabilities of LLMs! 👀 https://arxiv.org/abs/2404.08676

For a summary of the paper, read this blog post: https://huggingface.co/blog/sted97/alert 🤗
