Spaces:

Runtime error

A Small Chat with Chinese Language Model: ChatLM-Chinese-0.2B

中文 | English

1. 👋Introduction

Today's large language models tend to have large parameters, and consumer-grade computers are slow to do simple inference, let alone train a model from scratch. The goal of this project is to train a generative language models from scratch, including data cleaning, tokenizer training, model pre-training, SFT instruction fine-tuning, RLHF optimization, etc.

ChatLM-mini-Chinese is a small Chinese chat model with only 0.2B (added shared weight is about 210M) parameters. It can be pre-trained on machine with a minimum of 4GB of GPU memory (batch_size=1, fp16 or bf16), float16 loading and inference only require a minimum of 512MB of GPU memory.

- Make public all pre-training, SFT instruction fine-tuning, and DPO preference optimization datasets sources.

- Use the

HuggingfaceNLP framework, includingtransformers,accelerate,trl,peft, etc. - Self-implemented

trainer, supporting pre-training and SFT fine-tuning on a single machine with a single card or with multiple cards on a single machine. It supports stopping at any position during training and continuing training at any position. - Pre-training: Integrated into end-to-end

Text-to-Textpre-training, non-maskmask prediction pre-training.- Open source all data cleaning (such as standardization, document deduplication based on mini_hash, etc.), data set construction, data set loading optimization and other processes;

- tokenizer multi-process word frequency statistics, supports tokenizer training of

sentencepieceandhuggingface tokenizers; - Pre-training supports checkpoint at any step, and training can be continued from the breakpoint;

- Streaming loading of large datasets (GB level), supporting buffer data shuffling, does not use memory or hard disk as cache, effectively reducing memory and disk usage. configuring

batch_size=1, max_len=320, supporting pre-training on a machine with at least 16GB RAM + 4GB GPU memory; - Training log record.

- SFT fine-tuning: open source SFT dataset and data processing process.

- The self-implemented

trainersupports prompt command fine-tuning and supports any breakpoint to continue training; - Support

sequence to sequencefine-tuning ofHuggingface trainer; - Supports traditional low learning rate and only trains fine-tuning of the decoder layer.

- The self-implemented

- RLHF Preference optimization: Use DPO to optimize all preferences.

- Support using

peft lorafor preference optimization; - Supports model merging,

Lora adaptercan be merged into the original model.

- Support using

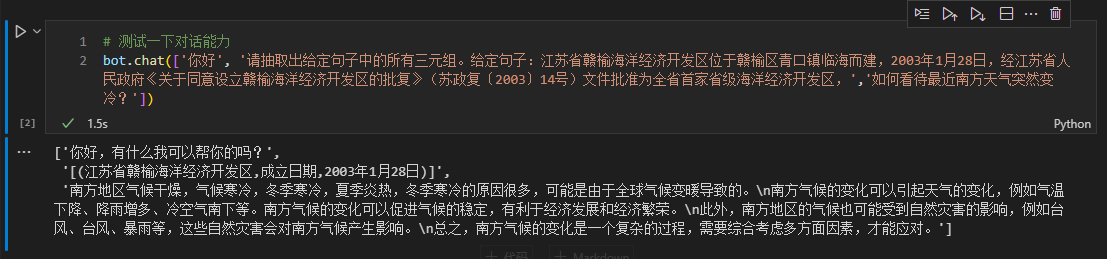

- Support downstream task fine-tuning: finetune_examples gives a fine-tuning example of the Triple Information Extraction Task. The model dialogue capability after fine-tuning is still there.

If you need to do retrieval augmented generation (RAG) based on small models, you can refer to my other project Phi2-mini-Chinese. For the code, see rag_with_langchain.ipynb

🟢Latest Update

2024-01-30

- The model files are updated to Moda modelscope and can be quickly downloaded through `snapshot_download`.2024-01-07

- Add document deduplication based on mini hash during the data cleaning process (in this project, it's to deduplicated the rows of datasets actually). Prevent the model from spitting out training data during inference after encountering multiple repeated data.- Add the `DropDatasetDuplicate` class to implement deduplication of documents from large data sets.

2023-12-29

- Update the model code (weights is NOT changed), you can directly use `AutoModelForSeq2SeqLM.from_pretrained(...)` to load the model for using.- Updated readme documentation.

2023-12-18

- Supplementary use of the `ChatLM-mini-0.2B` model to fine-tune the downstream triplet information extraction task code and display the extraction results.- Updated readme documentation.

2023-12-14

- Updated model weight files after SFT and DPO.- Updated pre-training, SFT and DPO scripts.

- update `tokenizer` to `PreTrainedTokenizerFast`.

- Refactor the `dataset` code to support dynamic maximum length. The maximum length of each batch is determined by the longest text in the batch, saving GPU memory.

- Added `tokenizer` training details.

2023-12-04

- Updated `generate` parameters and model effect display.- Updated readme documentation.

2023-11-28

- Updated dpo training code and model weights.2023-10-19

- The project is open source and the model weights are open for download.2. 🛠️ChatLM-0.2B-Chinese model training process

2.1 Pre-training dataset

All datasets come from the Single Round Conversation dataset published on the Internet. After data cleaning and formatting, they are saved as parquet files. For the data processing process, see utils/raw_data_process.py. Main datasets include:

- Community Q&A json version webtext2019zh-large-scale high-quality dataset, see: nlp_chinese_corpus. A total of 4.1 million, with 2.6 million remaining after cleaning.

- baike_qa2019 encyclopedia Q&A, see: https://aistudio.baidu.com/datasetdetail/107726, a total of 1.4 million, and the remaining 1.3 million after waking up.

- Chinese medical field question and answer dataset, see: Chinese-medical-dialogue-data, with a total of 790,000, and the remaining 790,000 after cleaning.

- ~~Financial industry question and answer data, see: https://zhuanlan.zhihu.com/p/609821974, a total of 770,000, and the remaining 520,000 after cleaning. ~~**The data quality is too poor and not used. **

- Zhihu question and answer data, see: Zhihu-KOL, with a total of 1 million rows, and 970,000 rows remain after cleaning.

- belle open source instruction training data, introduction: BELLE, download: BelleGroup, only select

Belle_open_source_1M,train_2M_CN, andtrain_3.5M_CNcontain some data with short answers, no complex table structure, and translation tasks (no English vocabulary list), totaling 3.7 million rows, and 3.38 million rows remain after cleaning. - Wikipedia entry data, piece together the entries into prompts, the first

Nwords of the encyclopedia are the answers, use the encyclopedia data of202309, and after cleaning, the remaining 1.19 million entry prompts and answers . Wiki download: zhwiki, convert the downloaded bz2 file to wiki.txt reference: WikiExtractor.

The total number of datasets is 10.23 million: Text-to-Text pre-training set: 9.3 million, evaluation set: 25,000 (because the decoding is slow, the evaluation set is not set too large). Test set: 900,000

SFT fine-tuning and DPO optimization datasets are shown below.

2.2 Model

T5 model (Text-to-Text Transfer Transformer), for details, see the paper: Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer.

The model source code comes from huggingface, see: T5ForConditionalGeneration.

For model configuration, see model_config.json. The official T5-base: encoder layer and decoder layer are both 12 layers. In this project, these two parameters are modified to 10 layers.

Model parameters: 0.2B. Word list size: 29298, including only Chinese and a small amount of English.

2.3 Training process

hardware:

# Pre-training phase:

CPU: 28 vCPU Intel(R) Xeon(R) Gold 6330 CPU @ 2.00GHz

Memory: 60 GB

GPU: RTX A5000 (24GB) * 2

# sft and dpo stages:

CPU: Intel(R) i5-13600k @ 5.1GHz

Memory: 32 GB

GPU: NVIDIA GeForce RTX 4060 Ti 16GB * 1

tokenizer training: The existing

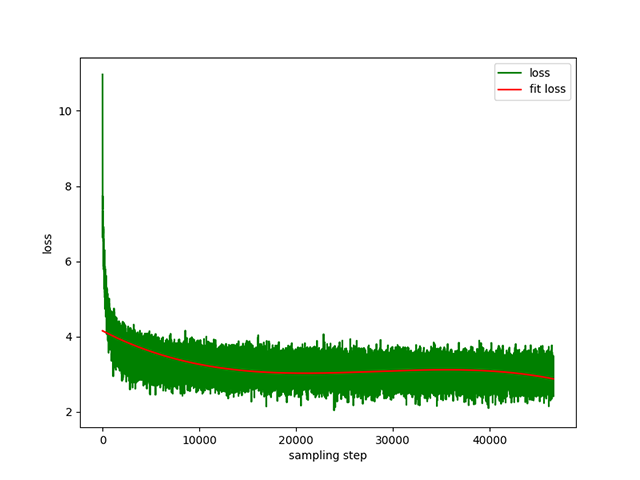

tokenizertraining library has OOM problems when encountering large corpus. Therefore, the full corpus is merged and constructed according to word frequency according to a method similar toBPE, and the operation takes half a day.Text-to-Text pre-training: The learning rate is a dynamic learning rate from

1e-4to5e-3, and the pre-training time is 8 days. Training loss:

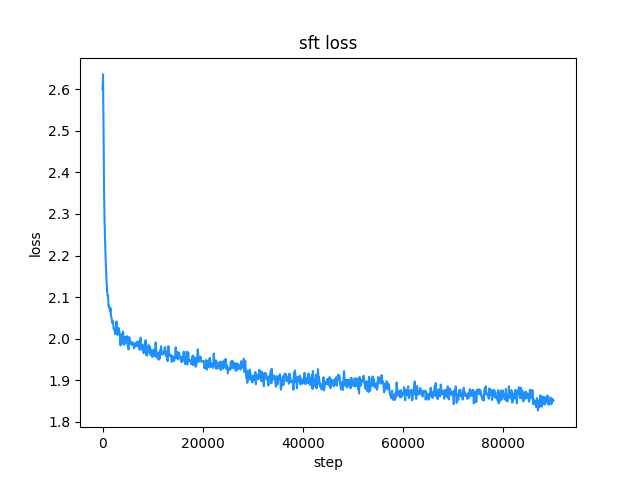

prompt supervised fine-tuning (SFT): Use the

belleinstruction training dataset (both instruction and answer lengths are below 512), with a dynamic learning rate from1e-7to5e-5, the fine-tuning time is 2 days. Fine-tuning loss:

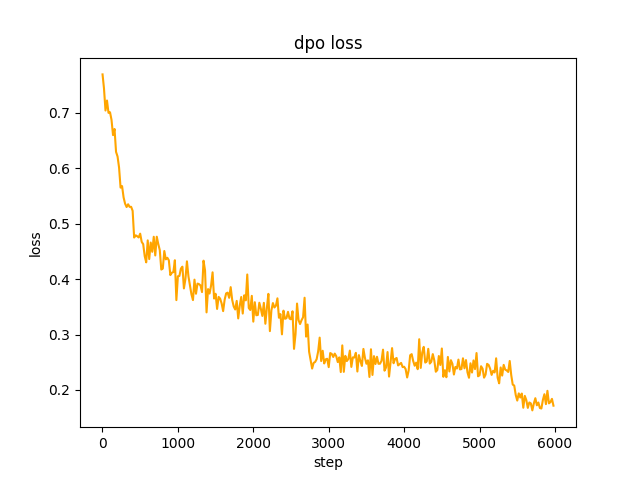

dpo direct preference optimization(RLHF): dataset alpaca-gpt4-data-zh as

chosentext , in step2, the SFT model performs batchgenerateon the prompts in the dataset, and obtains therejectedtext, which takes 1 day, dpo full preference optimization, learning ratele-5, half precisionfp16, total2epoch, taking 3h. dpo loss:

2.4 chat show

2.4.1 stream chat

By default, TextIteratorStreamer of huggingface transformers is used to implement streaming dialogue, and only greedy search is supported. If you need beam sample and other generation methods, please change the stream_chat parameter of cli_demo.py to False .

2.4.2 Dialogue show

There are problems: the pre-training dataset only has more than 9 million, and the model parameters are only 0.2B. It cannot cover all aspects, and there will be situations where the answer is wrong and the generator is nonsense.

3. 📑Instructions for using

3.1 Quick start:

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM

import torch

model_id = 'charent/ChatLM-mini-Chinese'

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

# 如果无法连接huggingface,打开以下两行代码的注释,将从modelscope下载模型文件,模型文件保存到'./model_save'目录

# from modelscope import snapshot_download

# model_id = snapshot_download(model_id, cache_dir='./model_save')

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForSeq2SeqLM.from_pretrained(model_id, trust_remote_code=True).to(device)

txt = '如何评价Apple这家公司?'

encode_ids = tokenizer([txt])

input_ids, attention_mask = torch.LongTensor(encode_ids['input_ids']), torch.LongTensor(encode_ids['attention_mask'])

outs = model.my_generate(

input_ids=input_ids.to(device),

attention_mask=attention_mask.to(device),

max_seq_len=256,

search_type='beam',

)

outs_txt = tokenizer.batch_decode(outs.cpu().numpy(), skip_special_tokens=True, clean_up_tokenization_spaces=True)

print(outs_txt[0])

Apple是一家专注于设计和用户体验的公司,其产品在设计上注重简约、流畅和功能性,而在用户体验方面则注重用户的反馈和使用体验。作为一家领先的科技公司,苹果公司一直致力于为用户提供最优质的产品和服务,不断推陈出新,不断创新和改进,以满足不断变化的市场需求。

在iPhone、iPad和Mac等产品上,苹果公司一直保持着创新的态度,不断推出新的功能和设计,为用户提供更好的使用体验。在iPad上推出的iPad Pro和iPod touch等产品,也一直保持着优秀的用户体验。

此外,苹果公司还致力于开发和销售软件和服务,例如iTunes、iCloud和App Store等,这些产品在市场上也获得了广泛的认可和好评。

总的来说,苹果公司在设计、用户体验和产品创新方面都做得非常出色,为用户带来了许多便利和惊喜。

3.2 from clone code repository start

The model of this project is the

TextToTextmodel. In theprompt,responseand other fields of the pre-training stage, SFT stage, and RLFH stage, please be sure to add the[EOS]end-of-sequence mark.

3.2.1 Clone repository

git clone --depth 1 https://github.com/charent/ChatLM-mini-Chinese.git

cd ChatLM-mini-Chinese

3.2.2 Install dependencies

It is recommended to use python 3.10 for this project. Older python versions may not be compatible with the third-party libraries it depends on.

pip installation:

pip install -r ./requirements.txt

If pip installed the CPU version of pytorch, you can install the CUDA version of pytorch with the following command:

# pip install torch + cu118

pip3 install torch --index-url https://download.pytorch.org/whl/cu118

conda installation:

conda install --yes --file ./requirements.txt

3.2.3 Download the pre-trained model and model configuration file

Download model weights and configuration files from Hugging Face Hub with git command, you need to install [Git LFS](https://docs.github.com/zh/repositories/working-with-files/managing-large-files/installing-git-large -file-storage), then run:

# Use the git command to download the huggingface model. Install [Git LFS] first, otherwise the downloaded model file will not be available.

git clone --depth 1 https://huggingface.co/charent/ChatLM-mini-Chinese

# If unable to connect huggingface, please download from modelscope

git clone --depth 1 https://www.modelscope.cn/charent/ChatLM-mini-Chinese.git

mv ChatLM-mini-Chinese model_save

You can also manually download it directly from the Hugging Face Hub warehouse ChatLM-mini-Chinese and move the downloaded file to the model_save directory. .

3.3 Tokenizer training

- Prepare txt corpus

The corpus requirements should be as complete as possible. It is recommended to add multiple corpora, such as encyclopedias, codes, papers, blogs, conversations, etc.

This project is mainly based on wiki Chinese encyclopedia. How to obtain Chinese wiki corpus: Chinese Wiki download address: zhwiki, download the zhwiki-[archive date]-pages-articles-multistream.xml.bz2 file, About 2.7GB, convert the downloaded bz2 file to wiki.txt reference: WikiExtractor, then use python's OpenCC library to convert to Simplified Chinese, and finally get the Just put wiki.simple.txt in the data directory of the project root directory. Please merge multiple corpora into one txt file yourself.

Since training tokenizer consumes a lot of memory, if your corpus is very large (the merged txt file exceeds 2G), it is recommended to sample the corpus according to categories and proportions to reduce training time and memory consumption. Training a 1.7GB txt file requires about 48GB of memory (estimated, I only have 32GB, triggering swap frequently, computer stuck for a long time T_T), 13600k CPU takes about 1 hour.

- train tokenizer

The difference between char level and byte level is as follows (Please search for information on your own for specific differences in use.). The tokenizer of char level is trained by default. If byte level is required, just set token_type='byte' in train_tokenizer.py.

# original text

txt = '这是一段中英混输的句子, (chinese and English, here are words.)'

tokens = charlevel_tokenizer.tokenize(txt)

print(tokens)

# char level tokens output

# ['▁这是', '一段', '中英', '混', '输', '的', '句子', '▁,', '▁(', '▁ch', 'inese', '▁and', '▁Eng', 'lish', '▁,', '▁h', 'ere', '▁', 'are', '▁w', 'ord', 's', '▁.', '▁)']

tokens = bytelevel_tokenizer.tokenize(txt)

print(tokens)

# byte level tokens output

# ['Ġè¿Ļæĺ¯', 'ä¸Ģ段', 'ä¸Ńèĭ±', 'æ··', 'è¾ĵ', 'çļĦ', 'åı¥åŃIJ', 'Ġ,', 'Ġ(', 'Ġch', 'inese', 'Ġand', 'ĠEng', 'lish', 'Ġ,', 'Ġh', 'ere', 'Ġare', 'Ġw', 'ord', 's', 'Ġ.', 'Ġ)']

Start training:

# Make sure your training corpus `txt` file is in the data directory

python train_tokenizer.py

3.4 Text-to-Text pre-training

- Pre-training dataset example

{

"prompt": "对于花园街,你有什么了解或看法吗?",

"response": "花园街(是香港油尖旺区的一条富有特色的街道,位于九龙旺角东部,北至界限街,南至登打士街,与通菜街及洗衣街等街道平行。现时这条街道是香港著名的购物区之一。位于亚皆老街以南的一段花园街,也就是\"波鞋街\"整条街约150米长,有50多间售卖运动鞋和运动用品的店舖。旺角道至太子道西一段则为排档区,售卖成衣、蔬菜和水果等。花园街一共分成三段。明清时代,花园街是芒角村栽种花卉的地方。此外,根据历史专家郑宝鸿的考证:花园街曾是1910年代东方殷琴拿烟厂的花园。纵火案。自2005年起,花园街一带最少发生5宗纵火案,当中4宗涉及排档起火。2010年。2010年12月6日,花园街222号一个卖鞋的排档于凌晨5时许首先起火,浓烟涌往旁边住宅大厦,消防接报4"

}

jupyter-lab or jupyter notebook:

See the file

train.ipynb. It is recommended to use jupyter-lab to avoid considering the situation where the terminal process is killed after disconnecting from the server.Console:

Console training needs to consider that the process will be killed after the connection is disconnected. It is recommended to use the process daemon tool

Supervisororscreento establish a connection session.First, configure

accelerate, execute the following command, and select according to the prompts. Refer toaccelerate.yaml, Note: DeepSpeed installation in Windows is more troublesome.accelerate configStart training. If you want to use the configuration provided by the project, please add the parameter

--config_file ./accelerate.yamlafter the following commandaccelerate launch. This configuration is based on the single-machine 2xGPU configuration.There are two scripts for pre-training. The trainer implemented in this project corresponds to

train.py, and the trainer implemented by huggingface corresponds topre_train.py. You can use either one and the effect will be the same. The training information display of the trainer implemented in this project is more beautiful, and it is easier to modify the training details (such as loss function, log records, etc.). All support checkpoint to continue training. The trainer implemented in this project supports continuing training after a breakpoint at any position. Pressctrl+cwill save the breakpoint information when exiting the script.Single machine and single card:

# The trainer implemented in this project accelerate launch ./train.py train # Or use huggingface trainer accelerate launch --multi_gpu --num_processes 2 pre_train.pySingle machine with multiple GPUs: '2' is the number of gpus, please modify it according to your actual situation.

# The trainer implemented in this project accelerate launch --multi_gpu --num_processes 2 ./train.py train # Or use huggingface trainer accelerate launch --multi_gpu --num_processes 2 pre_train.pyContinue training from the breakpoint:

# The trainer implemented in this project accelerate launch --multi_gpu --num_processes 2 ./train.py train --is_keep_training=True # Or use huggingface trainer # You need to add `resume_from_checkpoint=True` to the `train` function in `pre_train.py` python pre_train.py

3.5 Supervised Fine-tuning, SFT

The SFT dataset all comes from the contribution of BELLE. Thank you. The SFT datasets are: generated_chat_0.4M, train_0.5M_CN and train_2M_CN, about 1.37 million rows remain after cleaning. Example of fine-tuning dataset with sft command:

{

"prompt": "解释什么是欧洲启示录",

"response": "欧洲启示录(The Book of Revelation)是新约圣经的最后一卷书,也被称为《启示录》、《默示录》或《约翰默示录》。这本书从宗教的角度描述了世界末日的来临,以及上帝对世界的审判和拯救。 书中的主题包括来临的基督的荣耀,上帝对人性的惩罚和拯救,以及魔鬼和邪恶力量的存在。欧洲启示录是一个充满象征和暗示的文本,对于解读和理解有许多不同的方法和观点。"

}

Make your own dataset by referring to the sample parquet file in the data directory. The dataset format is: the parquet file is divided into two columns, one column of prompt text, representing the prompt, and one column of response text, representing the expected model. output.

For fine-tuning details, see the train method under model/trainer.py. When is_finetune is set to True, fine-tuning will be performed. Fine-tuning will freeze the embedding layer and encoder layer by default, and only train the decoder layer. If you need to freeze other parameters, please adjust the code yourself.

Run SFT fine-tuning:

# For the trainer implemented in this project, just add the parameter `--is_finetune=True`. The parameter `--is_keep_training=True` can continue training from any breakpoint.

accelerate launch --multi_gpu --num_processes 2 ./train.py --is_finetune=True

# Or use huggingface trainer

python sft_train.py

3.6 RLHF (Reinforcement Learning Human Feedback Optimization Method)

Here are two common preferred methods: PPO and DPO. Please search papers and blogs for specific implementations.

PPO method (approximate preference optimization, Proximal Policy Optimization)

Step 1: Use the fine-tuning dataset to do supervised fine-tuning (SFT, Supervised Finetuning).

Step 2: Use the preference dataset (a prompt contains at least 2 responses, one wanted response and one unwanted response. Multiple responses can be sorted by score, with the most wanted one having the highest score) to train the reward model (RM, Reward Model). You can use thepeftlibrary to quickly build the Lora reward model.

Step 3: Use RM to perform supervised PPO training on the SFT model so that the model meets preferences.Use DPO (Direct Preference Optimization) fine-tuning (This project uses the DPO fine-tuning method, which saves GPU memory) On the basis of obtaining the SFT model, there is no need to train the reward model, and fine-tuning can be started by obtaining the positive answer (chosen) and the negative answer (rejected). The fine-tuned

chosentext comes from the original dataset alpaca-gpt4-data-zh, and the rejected textrejectedcomes from SFT Model output after fine-tuning 1 epoch, two other datasets: huozi_rlhf_data_json and [rlhf-reward-single-round-trans_chinese](https:// huggingface.co/datasets/beyond/rlhf-reward-single-round-trans_chinese), a total of 80,000 dpo data after the merger.For the dpo dataset processing process, see

utils/dpo_data_process.py.

DPO preference optimization dataset example:

{

"prompt": "为给定的产品创建一个创意标语。,输入:可重复使用的水瓶。",

"chosen": "\"保护地球,从拥有可重复使用的水瓶开始!\"",

"rejected": "\"让你的水瓶成为你的生活伴侣,使用可重复使用的水瓶,让你的水瓶成为你的伙伴\""

}

Run preference optimization:

pythondpo_train.py

3.7 Infering

Make sure there are the following files in the model_save directory, These files can be found in the Hugging Face Hub repository ChatLM-Chinese-0.2B::

ChatLM-mini-Chinese

├─model_save

| ├─config.json

| ├─configuration_chat_model.py

| ├─generation_config.json

| ├─model.safetensors

| ├─modeling_chat_model.py

| ├─special_tokens_map.json

| ├─tokenizer.json

| └─tokenizer_config.json

- Console run:

python cli_demo.py

- API call

python api_demo.py

API call example: API调用示例:

curl --location '127.0.0.1:8812/api/chat' \

--header 'Content-Type: application/json' \

--header 'Authorization: Bearer Bearer' \

--data '{

"input_txt": "感冒了要怎么办"

}'

3.8 Fine-tuning of downstream tasks

Here we take the triplet information in the text as an example to do downstream fine-tuning. Traditional deep learning extraction methods for this task can be found in the repository pytorch_IE_model. Extract all the triples in a piece of text, such as the sentence "Sketching Essays" is a book published by Metallurgical Industry in 2006, the author is Zhang Lailiang, extract the triples (Sketching Essays, author, Zhang Lailiang) and ( Sketching essays, publishing house, metallurgical industry).

The original dataset is: Baidu Triplet Extraction dataset. Example of the processed fine-tuned dataset format:

{

"prompt": "请抽取出给定句子中的所有三元组。给定句子:《家乡的月亮》是宋雪莱演唱的一首歌曲,所属专辑是《久违的哥们》",

"response": "[(家乡的月亮,歌手,宋雪莱),(家乡的月亮,所属专辑,久违的哥们)]"

}

You can directly use the sft_train.py script for fine-tuning. The script finetune_IE_task.ipynb contains the detailed decoding process. The training dataset is about 17000, the learning rate is 5e-5, and the training epoch is 5. The dialogue capabilities of other tasks have not disappeared after fine-tuning.

Fine-tuning effects:

The public dev dataset of Baidu triple extraction dataset is used as a test set to compare with the traditional method pytorch_IE_model.

| Model | F1 score | Precision | Recall |

|---|---|---|---|

| ChatLM-Chinese-0.2B fine-tuning | 0.74 | 0.75 | 0.73 |

| ChatLM-Chinese-0.2B without pre-training | 0.51 | 0.53 | 0.49 |

| Traditional deep learning method | 0.80 | 0.79 | 80.1 |

Note: ChatLM-Chinese-0.2B without pre-training means directly initializing random parameters, starting training, learning rate 1e-4, and other parameters are consistent with fine-tuning.

3.9 C-Eval score

The model itself is not trained with a large dataset and it is no fine-tuning for the instructions for answering multiple-choice questions, and the C-Eval score is basically at the baseline level. If necessary, it can be used as a reference. The C-Eval review code can be found at: 'eval/c_eavl.ipynb'

| category | correct | question_count | accuracy |

|---|---|---|---|

| Humanities | 63 | 257 | 24.51% |

| Other | 89 | 384 | 23.18% |

| STEM | 89 | 430 | 20.70% |

| Social Science | 72 | 275 | 26.18% |

4. 🎓Citation

If you think this project is helpful to you, please site it.

@misc{Charent2023,

author={Charent Chen},

title={A small chinese chat language model with 0.2B parameters base on T5},

year={2023},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/charent/ChatLM-mini-Chinese}},

}

5. 🤔Other matters

This project does not bear any risks and responsibilities arising from data security and public opinion risks caused by open source models and codes, or any model being misled, abused, disseminated, or improperly exploited.