title: amol-rainfallStratosphere

app_file: app.py

sdk: gradio

sdk_version: 3.37.0

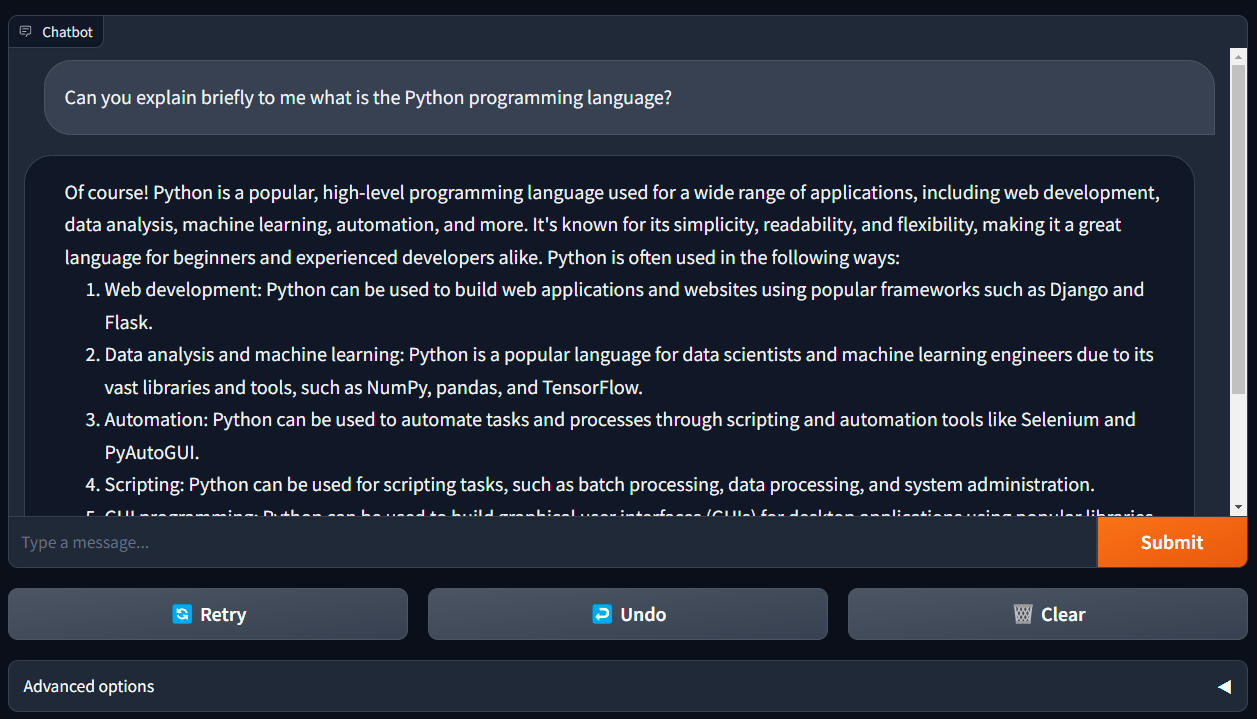

llama2-webui

Running Llama 2 with gradio web UI on GPU or CPU from anywhere (Linux/Windows/Mac).

- Supporting all Llama 2 models (7B, 13B, 70B, GPTQ, GGML) in 8-bit and 4-bit modes.

- Use llama2-wrapper as your local llama2 backend for Generative Agents/Apps; colab example.

Features

- Supporting models: Llama-2-7b/13b/70b, all Llama-2-GPTQ, all Llama-2-GGML ...

- Supporting model backends: transformers, bitsandbytes (8-bit inference), AutoGPTQ (4-bit inference), llama.cpp

- Demos: Run Llama2 on MacBook Air; Run Llama2 on free Colab T4 GPU

- Use llama2-wrapper as your local llama2 backend for Generative Agents/Apps; colab example.

- News, Benchmark, Issue Solutions


Install

Method 1: From PyPI

pip install llama2-wrapper

Method 2: From Source:

git clone https://github.com/liltom-eth/llama2-webui.git

cd llama2-webui

pip install -r requirements.txt

Install Issues:

bitsandbytes >= 0.39 may not work on older NVIDIA GPUs. In that case, to use LOAD_IN_8BIT, you may have to downgrade like this:

pip install bitsandbytes==0.38.1
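To check whether your installed bitsandbytes falls in the range described above, you could use a small version check like the one below. It is a minimal sketch: parse_version and may_need_downgrade are hypothetical helpers (not part of llama2-wrapper), and the parser only handles simple numeric versions such as 0.41.0; the third-party packaging library is more robust if you already have it.

```python
# Hypothetical helper: flag bitsandbytes >= 0.39, which the note above says
# may not work on older NVIDIA GPUs. Uses only the standard library.
from importlib.metadata import PackageNotFoundError, version


def parse_version(v: str) -> tuple[int, ...]:
    """Parse '0.41.0' -> (0, 41, 0); stops at the first non-numeric part."""
    parts = []
    for piece in v.split("."):
        num = ""
        for ch in piece:
            if not ch.isdigit():
                break
            num += ch
        if not num:
            break
        parts.append(int(num))
    return tuple(parts)


def may_need_downgrade(installed: str, threshold: str = "0.39") -> bool:
    """True if the installed version is at or above the threshold."""
    return parse_version(installed) >= parse_version(threshold)


try:
    installed = version("bitsandbytes")
except PackageNotFoundError:
    print("bitsandbytes is not installed")
else:
    if may_need_downgrade(installed):
        print(f"bitsandbytes {installed} >= 0.39; on an older GPU consider 0.38.1")
```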

bitsandbytes also needs a special install on Windows:

pip uninstall bitsandbytes

pip install https://github.com/jllllll/bitsandbytes-windows-webui/releases/download/wheels/bitsandbytes-0.41.0-py3-none-win_amd64.whl

Usage

Start Web UI

Run chatbot simply with web UI:

python app.py

By default, app.py loads the config in .env, which uses llama.cpp as the backend to run the llama-2-7b-chat.ggmlv3.q4_0.bin model for inference. The model llama-2-7b-chat.ggmlv3.q4_0.bin is downloaded automatically:

Running on backend llama.cpp.

Use default model path: ./models/llama-2-7b-chat.ggmlv3.q4_0.bin

Start downloading model to: ./models/llama-2-7b-chat.ggmlv3.q4_0.bin

You can also customize MODEL_PATH, BACKEND_TYPE, and other model configs in the .env file to run different Llama 2 models on different backends (llama.cpp, transformers, gptq).
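A .env file is a list of KEY=VALUE lines. The sketch below parses such a file to show its structure; parse_env is a hypothetical helper and is not how app.py actually loads its config, and the example values are illustrative (only the key names MODEL_PATH, BACKEND_TYPE, and LOAD_IN_8BIT come from this README).

```python
# Minimal sketch of reading KEY=VALUE pairs from a .env-style file.
# Hypothetical helper; app.py may load its config differently.


def parse_env(text: str) -> dict[str, str]:
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    config: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        # Strip whitespace and optional surrounding quotes from the value.
        config[key.strip()] = value.strip().strip('"').strip("'")
    return config


# Illustrative values only.
example = """
# Run the default GGML model on the llama.cpp backend
MODEL_PATH = "./models/llama-2-7b-chat.ggmlv3.q4_0.bin"
BACKEND_TYPE = "llama.cpp"
LOAD_IN_8BIT = False
"""

cfg = parse_env(example)
print(cfg["BACKEND_TYPE"])  # llama.cpp
```

Note that every value comes back as a string, so a flag like LOAD_IN_8BIT still has to be converted to a boolean by whatever code consumes it.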

Env Examples

There are some examples in the ./env_examples/ folder.

| Model Setup | Example .env |
|---|---|
| Llama-2-7b-chat-hf 8-bit (transformers backend) | .env.7b_8bit_example |
| Llama-2-7b-Chat-GPTQ 4-bit (gptq transformers backend) | .env.7b_gptq_example |
| Llama-2-7B-Chat-GGML 4-bit (llama.cpp backend) | .env.7b_ggmlv3_q4_0_example |
| Llama-2-13b-chat-hf (transformers backend) | .env.13b_example |
| ... | ... |

Use llama2-wrapper for Your App

🔥 For developers, we released llama2-wrapper on PyPI as a llama2 backend wrapper.

Use llama2-wrapper as your local llama2 backend to answer questions and more, colab example:

# pip install llama2-wrapper

from llama2_wrapper import LLAMA2_WRAPPER, get_prompt

llama2_wrapper = LLAMA2_WRAPPER()

# Default running on backend llama.cpp.

# Automatically downloading model to: ./models/llama-2-7b-chat.ggmlv3.q4_0.bin

prompt = "Do you know Pytorch"

answer = llama2_wrapper(get_prompt(prompt), temperature=0.9)
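The user message above goes through get_prompt before reaching the model. Meta's Llama 2 chat models expect each turn wrapped in [INST] ... [/INST], with an optional system prompt inside <<SYS>> markers. The sketch below builds that single-turn template; build_llama2_prompt and DEFAULT_SYSTEM are hypothetical names, and we are assuming (not quoting the library source) that get_prompt produces something comparable.

```python
# Hypothetical sketch of the Llama 2 chat prompt template for a single turn.
# Assumption: llama2_wrapper.get_prompt builds a similar string; this is not its source.
B_INST, E_INST = "[INST]", "[/INST]"
B_SYS, E_SYS = "<<SYS>>\n", "\n<</SYS>>\n\n"

DEFAULT_SYSTEM = "You are a helpful assistant."  # illustrative system prompt


def build_llama2_prompt(message: str, system_prompt: str = DEFAULT_SYSTEM) -> str:
    """Wrap one user message in Llama 2's [INST] / <<SYS>> markers."""
    return f"{B_INST} {B_SYS}{system_prompt}{E_SYS}{message.strip()} {E_INST}"


print(build_llama2_prompt("Do you know Pytorch"))
```

The beginning-of-sequence token is left out here because tokenizers usually add it themselves.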

Run a GPTQ Llama 2 model on an NVIDIA GPU, colab example:

from llama2_wrapper import LLAMA2_WRAPPER

llama2_wrapper = LLAMA2_WRAPPER(backend_type="gptq")

# Automatically downloading model to: ./models/Llama-2-7b-Chat-GPTQ

Run Llama 2 7B in bitsandbytes 8-bit mode from a local model_path:

from llama2_wrapper import LLAMA2_WRAPPER

llama2_wrapper = LLAMA2_WRAPPER(

model_path = "./models/Llama-2-7b-chat-hf",

backend_type = "transformers",

load_in_8bit = True

)

Benchmark

Run the benchmark script to measure performance on your device. benchmark.py loads the same .env as app.py:

python benchmark.py

You can also set the number of iterations, the backend_type, and the model_path for the benchmark run (these override the .env args):

python benchmark.py --iter NB_OF_ITERATIONS --backend_type gptq

The default number of iterations is 5. You can lower it for a faster result or raise it for a more accurate one, but please only report results with at least 5 iterations.
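Averaging over several iterations smooths out run-to-run noise in tokens/sec. The sketch below shows one way to compute such an average; tokens_per_second and fake_generate are hypothetical stand-ins, not code from benchmark.py, and a real benchmark would call the model and count the tokens it actually generated.

```python
# Hypothetical sketch: mean generation throughput over several iterations.
import time
from statistics import mean
from typing import Callable


def tokens_per_second(
    generate_fn: Callable[[], int],
    iterations: int = 5,
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Call generate_fn `iterations` times and return the mean tokens/sec."""
    if iterations < 1:
        raise ValueError("iterations must be >= 1")
    rates = []
    for _ in range(iterations):
        start = clock()
        n_tokens = generate_fn()  # number of tokens produced in this run
        elapsed = clock() - start
        rates.append(n_tokens / elapsed)
    return mean(rates)


def fake_generate() -> int:
    """Stand-in for a model call: sleeps briefly and 'produces' 32 tokens."""
    time.sleep(0.01)
    return 32


print(f"{tokens_per_second(fake_generate, iterations=5):.1f} tokens/sec")
```

This averages per-iteration rates; dividing total tokens by total time is an equally reasonable choice and weights long runs more heavily. The injectable clock makes the function easy to test with fake timings.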

This colab example also shows how to benchmark a GPTQ model on a free Google Colab T4 GPU.

Some benchmark performance:

| Model | Precision | Device | RAM / GPU VRAM | Speed (tokens/sec) | Load time (s) |
|---|---|---|---|---|---|
| Llama-2-7b-chat-hf | 8 bit | NVIDIA RTX 2080 Ti | 7.7 GB VRAM | 3.76 | 641.36 |
| Llama-2-7b-Chat-GPTQ | 4 bit | NVIDIA RTX 2080 Ti | 5.8 GB VRAM | 18.85 | 192.91 |
| Llama-2-7b-Chat-GPTQ | 4 bit | Google Colab T4 | 5.8 GB VRAM | 18.19 | 37.44 |
| llama-2-7b-chat.ggmlv3.q4_0 | 4 bit | Apple M1 Pro CPU | 5.4 GB RAM | 17.90 | 0.18 |
| llama-2-7b-chat.ggmlv3.q4_0 | 4 bit | Apple M2 CPU | 5.4 GB RAM | 13.70 | 0.13 |
| llama-2-7b-chat.ggmlv3.q4_0 | 4 bit | Apple M2 Metal | 5.4 GB RAM | 12.60 | 0.10 |
| llama-2-7b-chat.ggmlv3.q2_K | 2 bit | Intel i7-8700 | 4.5 GB RAM | 7.88 | 31.90 |

Check or contribute your device's performance in the full performance doc.

Download Llama-2 Models

Llama 2 is a collection of pre-trained and fine-tuned generative text models ranging in scale from 7 billion to 70 billion parameters.

Llama-2-7b-Chat-GPTQ contains the GPTQ model files for Meta's Llama 2 7B Chat. The 4-bit GPTQ Llama 2 model requires less GPU VRAM to run.

Model List

| Model Name | set MODEL_PATH in .env | Download URL |
|---|---|---|
| meta-llama/Llama-2-7b-chat-hf | /path-to/Llama-2-7b-chat-hf | Link |
| meta-llama/Llama-2-13b-chat-hf | /path-to/Llama-2-13b-chat-hf | Link |
| meta-llama/Llama-2-70b-chat-hf | /path-to/Llama-2-70b-chat-hf | Link |
| meta-llama/Llama-2-7b-hf | /path-to/Llama-2-7b-hf | Link |
| meta-llama/Llama-2-13b-hf | /path-to/Llama-2-13b-hf | Link |
| meta-llama/Llama-2-70b-hf | /path-to/Llama-2-70b-hf | Link |
| TheBloke/Llama-2-7b-Chat-GPTQ | /path-to/Llama-2-7b-Chat-GPTQ | Link |
| TheBloke/Llama-2-7B-Chat-GGML | /path-to/llama-2-7b-chat.ggmlv3.q4_0.bin | Link |
| ... | ... | ... |

Running the 4-bit model Llama-2-7b-Chat-GPTQ requires a GPU with 6 GB of VRAM.

Running the 4-bit model llama-2-7b-chat.ggmlv3.q4_0.bin requires a CPU with 6 GB of RAM. TheBloke/Llama-2-7B-Chat-GGML also provides other 2-, 3-, 4-, 5-, 6-, and 8-bit GGML models that can be used.

Download Script

These models can be downloaded from the links with commands like:

# Make sure you have git-lfs installed (https://git-lfs.com)

git lfs install

git clone git@hf.co:meta-llama/Llama-2-7b-chat-hf

To download the official Llama 2 models, you need to request access at https://ai.meta.com/llama/ and also enable access on the Hugging Face repos, such as meta-llama/Llama-2-7b-chat-hf. Requests are processed within hours.

For GPTQ models like TheBloke/Llama-2-7b-Chat-GPTQ, you can download them directly without requesting access.

For GGML models like TheBloke/Llama-2-7B-Chat-GGML, you can download them directly without requesting access.

Tips

Run on Nvidia GPU

Running the model requires around 14 GB of GPU VRAM for Llama-2-7b and 28 GB of GPU VRAM for Llama-2-13b.
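These figures follow from a rule of thumb: at 16-bit precision each weight takes 2 bytes, so about 7 billion weights need about 14 GB. The sketch below applies that arithmetic. weight_memory_gb is a hypothetical helper that uses nominal parameter counts and counts weights only; for Llama-2-13b it gives 26 GB, so the ~28 GB quoted here presumably includes runtime overhead such as activations and the KV cache.

```python
# Back-of-the-envelope estimate of weight memory only (hypothetical helper).
# Ignores activations, KV cache, and framework overhead, so real usage is higher.


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Bytes = params * bits / 8, reported in decimal GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Nominal parameter counts; the real models are slightly smaller.
for name, params in [("Llama-2-7b", 7e9), ("Llama-2-13b", 13e9)]:
    for bits in (16, 8, 4):
        print(f"{name} @ {bits}-bit: ~{weight_memory_gb(params, bits):.1f} GB")
```

The same arithmetic explains why 8-bit loading roughly halves memory and 4-bit roughly quarters it, before overhead is added.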

If you are running on multiple GPUs, the model is automatically loaded across them and the VRAM usage is split. This lets you run Llama-2-7b (which requires 14 GB of GPU VRAM) on a setup such as two GPUs with 11 GB of VRAM each.
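One simple way to picture this split is to fill each GPU with consecutive layers until its memory budget is reached, then move on to the next GPU. The sketch below does exactly that. split_layers is hypothetical, the per-layer sizes are illustrative (about 14 GB spread evenly over 32 layers, ignoring embeddings and the output head), and the actual loader's placement logic may differ.

```python
# Simplified sketch: assign consecutive layers to GPUs until each budget is full.
# Hypothetical helper; the real loader's placement strategy may differ.


def split_layers(layer_gb: list[float], gpu_budgets_gb: list[float]) -> list[int]:
    """Return the GPU index assigned to each layer, filling GPUs in order."""
    assignment = []
    gpu, used = 0, 0.0
    for size in layer_gb:
        # Advance to the next GPU while this layer would overflow the current one.
        while gpu < len(gpu_budgets_gb) and used + size > gpu_budgets_gb[gpu]:
            gpu, used = gpu + 1, 0.0
        if gpu == len(gpu_budgets_gb):
            raise MemoryError("model does not fit in the given GPUs")
        assignment.append(gpu)
        used += size
    return assignment


# Illustrative numbers: ~14 GB of weights as 32 equal layers, two 11 GB GPUs.
layers = [14 / 32] * 32
plan = split_layers(layers, [11.0, 11.0])
print(plan.count(0), "layers on GPU 0,", plan.count(1), "layers on GPU 1")
# 25 layers on GPU 0, 7 layers on GPU 1
```

Keeping layers contiguous means activations only cross between GPUs once per forward pass in this picture, which is why sequential filling is a natural first approach.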

Run bitsandbytes 8 bit

If you do not have enough memory, you can set LOAD_IN_8BIT to True in .env. This reduces memory usage by around half with slightly degraded model quality. It is compatible with the CPU, GPU, and Metal backends.

Llama-2-7b with 8-bit compression can run on a single GPU with at least 8 GB of VRAM, such as an NVIDIA RTX 2080 Ti, RTX 4080, T4, or V100 (16 GB).
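8-bit loading halves memory because each weight is stored in one byte instead of two. The sketch below illustrates the idea with plain absmax int8 quantization. quantize_absmax and dequantize are hypothetical helpers, and this is a simplification: bitsandbytes' LLM.int8() additionally keeps outlier features in 16-bit. The rounding error (at most half a quantization step) is one source of the slightly degraded quality mentioned above.

```python
# Simplified absmax int8 quantization; NOT bitsandbytes' LLM.int8(), which also
# handles outlier features separately. Illustrates 1 byte per weight vs. 2.


def quantize_absmax(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] with a single per-tensor scale."""
    max_abs = max(abs(w) for w in weights) or 1.0  # avoid dividing by zero
    scale = max_abs / 127
    return [round(w / scale) for w in weights], scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from int8 codes."""
    return [v * scale for v in q]


w = [0.12, -0.5, 0.031, 0.9, -0.77]
q, s = quantize_absmax(w)
restored = dequantize(q, s)
max_err = max(abs(a - b) for a, b in zip(w, restored))
print(q)
print(f"max abs error: {max_err:.4f} (bound: scale/2 = {s / 2:.4f})")
```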

Run GPTQ 4 bit

To run a 4-bit Llama 2 model such as Llama-2-7b-Chat-GPTQ, set BACKEND_TYPE to gptq in .env, as in the example .env.7b_gptq_example.

Make sure you have downloaded the 4-bit model from Llama-2-7b-Chat-GPTQ and set the MODEL_PATH and arguments in .env file.

Llama-2-7b-Chat-GPTQ can run on a single GPU with 6 GB of VRAM.

If you encounter an issue like NameError: name 'autogptq_cuda_256' is not defined, please refer to here.
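GPTQ models store weights in 4 bits, roughly a quarter of 16-bit storage, which is part of why the 7B model fits on a 6 GB GPU. The sketch below shows only the storage side: plain round-to-nearest 4-bit quantization with two codes packed per byte. quantize_4bit and dequantize_4bit are hypothetical helpers. GPTQ itself picks the quantized values more carefully, using second-order information to compensate for rounding error, and real checkpoints often use per-group scales and zero points.

```python
# Simplified 4-bit round-to-nearest quantization with nibble packing.
# NOT the GPTQ algorithm; it only illustrates the 4-bit storage format idea.


def quantize_4bit(weights: list[float]) -> tuple[bytes, float, float]:
    """Return (packed codes, scale, offset) for 16 evenly spaced levels."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 15 or 1.0  # 16 levels: codes 0..15
    codes = [min(15, max(0, round((w - lo) / scale))) for w in weights]
    if len(codes) % 2:
        codes.append(0)  # pad to an even count so codes pair into bytes
    # Two 4-bit codes per byte: low nibble first, then high nibble.
    packed = bytes(codes[i] | (codes[i + 1] << 4) for i in range(0, len(codes), 2))
    return packed, scale, lo


def dequantize_4bit(packed: bytes, scale: float, lo: float, n: int) -> list[float]:
    """Unpack nibbles and map codes back to approximate floats."""
    codes = []
    for byte in packed:
        codes.extend((byte & 0x0F, byte >> 4))
    return [lo + c * scale for c in codes[:n]]


w = [0.1, -0.4, 0.25, 0.8, -0.05]
packed, scale, lo = quantize_4bit(w)
print(len(packed), "bytes for", len(w), "weights")  # 3 bytes for 5 weights
restored = dequantize_4bit(packed, scale, lo, len(w))
```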

Run on CPU

Running Llama 2 models on CPU requires llama.cpp and its Python bindings (llama-cpp-python), which are already installed.

Download a GGML model such as llama-2-7b-chat.ggmlv3.q4_0.bin by following the Download Llama-2 Models section. The llama-2-7b-chat.ggmlv3.q4_0.bin model requires at least 6 GB of RAM to run on CPU.

Copy a config such as .env.7b_ggmlv3_q4_0_example from env_examples to .env.

Run the web UI with python app.py.

Mac Metal Acceleration

For Mac users, you can also set up Metal acceleration by installing these dependencies:

pip uninstall llama-cpp-python -y

CMAKE_ARGS="-DLLAMA_METAL=on" FORCE_CMAKE=1 pip install -U llama-cpp-python --no-cache-dir

pip install 'llama-cpp-python[server]'

or check details:

AMD/Nvidia GPU Acceleration

If you would like to use AMD/Nvidia GPU for acceleration, check this:

License

MIT - see MIT License

This project lets users adapt it freely, including for proprietary purposes; the MIT License only requires that its copyright and permission notice be included in copies of the software.

Contributing

Kindly read our Contributing Guide to learn and understand our development process.