Note

This English README is automatically generated by the markdown translation plugin in this project, and may not be 100% correct.

ChatGPT Academic Optimization


If you like this project, please give it a Star. If you've come up with more useful academic shortcuts or functional plugins, feel free to open an issue or pull request. We also have a README in English translated by this project itself.

Note

Please note that only functions marked in red support reading files, and some functions are located in the plugin dropdown menu. We also welcome new plugin PRs and handle them with the highest priority!

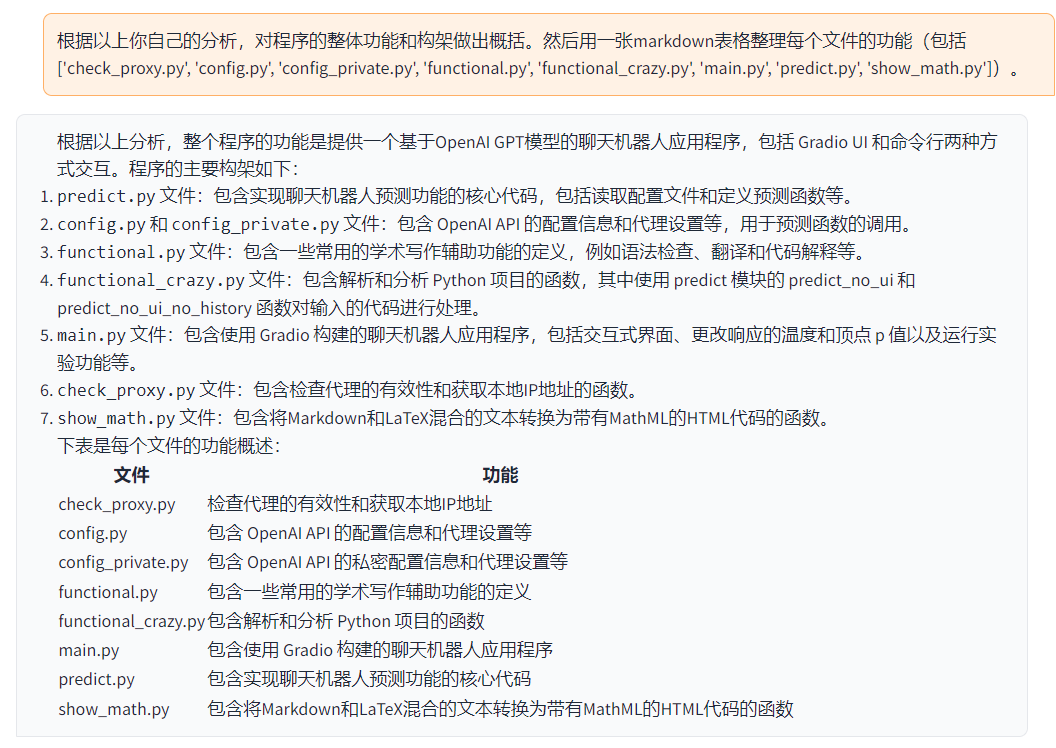

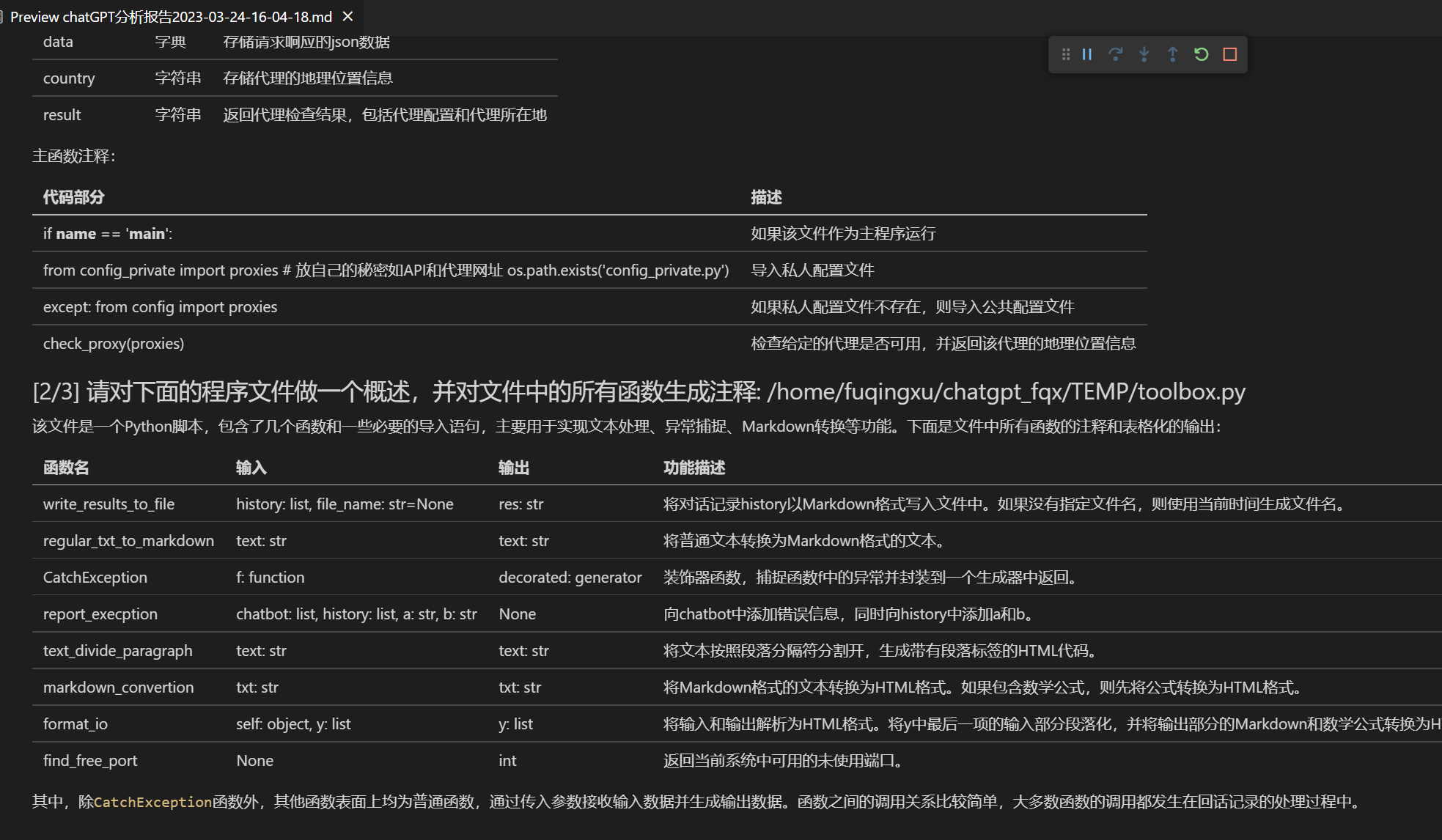

The functionality of each file in this project is detailed in the project's self-analysis report, self_analysis.md. As versions iterate, you can also click the relevant function plugin at any time to have GPT regenerate the self-analysis report. Frequently asked questions are summarized in the wiki.

| Function | Description |

|---|---|

| One-Click Polish | Supports one-click polishing and finding grammar errors in academic papers. |

| One-Key Translation Between Chinese and English | One-click translation between Chinese and English. |

| One-Key Code Interpretation | Can correctly display and interpret code. |

| Custom Shortcut Keys | Supports custom shortcut keys. |

| Configure Proxy Server | Supports configuring proxy servers. |

| Modular Design | Supports custom high-order function plugins and [function plugins], and plugins support hot updates. |

| Self-programming Analysis | [Function Plugin] [One-Key Read] (https://github.com/binary-husky/chatgpt_academic/wiki/chatgpt-academic%E9%A1%B9%E7%9B%AE%E8%87%AA%E8%AF%91%E8%A7%A3%E6%8A%A5%E5%91%8A) The source code of this project is analyzed. |

| Program Analysis | [Function Plugin] One-click can analyze the project tree of other Python/C/C++/Java/Lua/... projects |

| Read the Paper | [Function Plugin] One-click interpretation of the full text of latex paper and generation of abstracts |

| Latex Full Text Translation, Proofreading | [Function Plugin] One-click translation or proofreading of latex papers. |

| Batch Comment Generation | [Function Plugin] One-click batch generation of function comments |

| Chat Analysis Report Generation | [Function Plugin] After running, an automatic summary report will be generated |

| Arxiv Assistant | [Function Plugin] Enter the arxiv article url to translate the abstract and download the PDF with one click |

| Full-text Translation Function of PDF Paper | [Function Plugin] Extract the title & abstract of the PDF paper + translate the full text (multithreading) |

| Google Scholar Integration Assistant | [Function Plugin] Given any Google Scholar search page URL, let gpt help you choose interesting articles. |

| Formula / Picture / Table Display | Can display both the tex form and the rendering form of formulas at the same time, support formula and code highlighting |

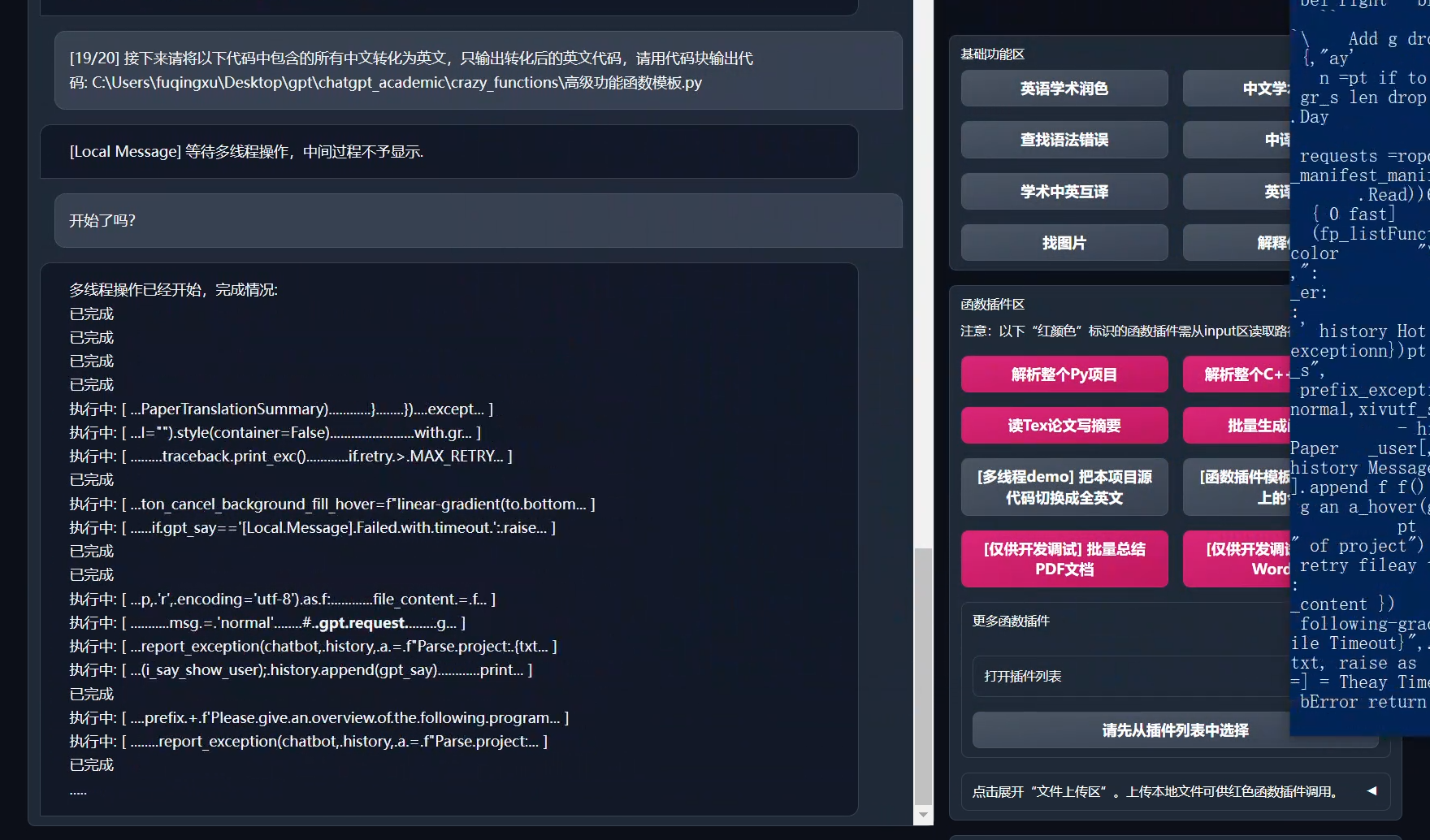

| Multithreaded Function Plugin Support | Supports multi-threaded calling chatgpt, one-click processing of massive text or programs |

| Start Dark Gradio Theme | Add /?__dark-theme=true at the end of the browser url to switch to dark theme |

| Multiple LLM Models support, API2D interface support | It must feel nice to be served by both GPT3.5, GPT4, and Tsinghua ChatGLM! |

| Huggingface non-Science Net Online Experience | After logging in to huggingface, copy this space |

| ... | ... |

New interface (switch between "left-right layout" and "up-down layout" by modifying the LAYOUT option in config.py)

All buttons are dynamically generated by reading functional.py, so you can add custom functions at will and free up your clipboard

Proofreading / correcting

If the output contains formulas, it will be displayed in both the tex form and the rendering form at the same time, which is convenient for copying and reading

Don't want to read the project code? Just feed the whole project to ChatGPT

Mixed calls to multiple large language models (ChatGLM + OpenAI-GPT3.5 + API2D-GPT4)

Mixed calls to multiple large language models, Hugging Face beta version (the Hugging Face version does not support ChatGLM)

Installation-Method 1: Run directly (Windows, Linux or MacOS)

- Download project

git clone https://github.com/binary-husky/chatgpt_academic.git

cd chatgpt_academic

- Configure API_KEY and proxy settings

In config.py, configure the overseas Proxy and OpenAI API KEY as follows:

1. If you are in China, you need to set up an overseas proxy to use the OpenAI API smoothly. Please read config.py carefully for setup details (first set USE_PROXY to True, then edit proxies according to the instructions).

2. Configure the OpenAI API KEY. You need to register and obtain an API KEY on the OpenAI website. Once you get the API KEY, you can configure it in the config.py file.

3. Issues related to proxy networks (network timeouts, proxy failures) are summarized at https://github.com/binary-husky/chatgpt_academic/issues/1

(P.S. When the program runs, it first checks whether a private configuration file named config_private.py exists and, if so, uses the settings in it to override the same-name settings in config.py. Therefore, if you understand this configuration reading logic, we strongly recommend creating a new file named config_private.py next to config.py and copying the settings from config.py into it. config_private.py is not tracked by git, which keeps your private information more secure.)
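The override order described above can be sketched in a few lines of Python. This is only an illustration of the idea, not the project's actual loader: the helper name `read_conf` is hypothetical, and the two configuration files are stood in for by `SimpleNamespace` objects so the example runs on its own.

```python
from types import SimpleNamespace


def read_conf(name, default_conf, private_conf=None):
    """Return a setting, preferring the private config when it defines the name.

    Hypothetical helper: it only illustrates the lookup order described in
    this README (config_private.py first, then config.py).
    """
    if private_conf is not None and hasattr(private_conf, name):
        return getattr(private_conf, name)
    return getattr(default_conf, name)


# Stand-ins for config.py and config_private.py (placeholder values).
config = SimpleNamespace(API_KEY="sk-placeholder", USE_PROXY=False)
config_private = SimpleNamespace(API_KEY="sk-my-real-key")

api_key = read_conf("API_KEY", config, config_private)      # taken from the private file
use_proxy = read_conf("USE_PROXY", config, config_private)  # falls back to config.py
```

With this order, a setting only needs to appear in config_private.py when it differs from the default or is sensitive.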

- Install dependencies

# (Option One) Recommended

python -m pip install -r requirements.txt

# (Option Two) If you use anaconda, the steps are similar:

# (Option Two.1) conda create -n gptac_venv python=3.11

# (Option Two.2) conda activate gptac_venv

# (Option Two.3) python -m pip install -r requirements.txt

# Note: Use the official pip index or the Aliyun mirror. Other mirrors (such as some university mirrors) may cause problems. To switch the index temporarily:

# python -m pip install -r requirements.txt -i https://mirrors.aliyun.com/pypi/simple/

To support Tsinghua ChatGLM, you need to install additional dependencies (not recommended if you are unfamiliar with Python or your computer is not powerful enough):

python -m pip install -r request_llm/requirements_chatglm.txt

- Run

python main.py

- Test function plugins

- Test Python project analysis

In the input area, enter `./crazy_functions/test_project/python/dqn`, and then click "Analyze the entire Python project"

- Test self-code interpretation

Click "[Multithreading Demo] Interpretation of This Project Itself (Source Code Interpretation)"

- Test the experimental function plugin template (it asks GPT what happened today in history). You can use this function as a template to implement more complex functions.

Click "[Function Plugin Template Demo] Today in History"

- There are more functions to choose from in the function plugin area drop-down menu.
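As a rough idea of what a plugin in the spirit of the "Today in History" template could look like, here is a minimal sketch. It is not the project's plugin interface: the function name, the `ask_llm` callable, and the list of (question, answer) pairs used as chat state are all assumptions made for illustration.

```python
import datetime


def today_in_history_plugin(ask_llm, today=None):
    """Yield successive chat states while asking the model about today's date.

    Hypothetical sketch: `ask_llm` is any callable that takes a prompt string
    and returns the model's reply as a string.
    """
    today = today or datetime.date.today()
    prompt = (
        f"What notable events happened in history on {today.month}/{today.day}? "
        "List two and briefly describe each."
    )
    chat = [(prompt, "[waiting for the model...]")]
    yield list(chat)  # first update: show the question immediately

    chat[-1] = (prompt, ask_llm(prompt))
    yield list(chat)  # second update: show the answer


# Usage with a stub in place of a real model call.
states = list(today_in_history_plugin(lambda p: "stub answer", datetime.date(2023, 4, 1)))
```

Writing the plugin as a generator lets the interface refresh after each step, which matters for longer, multi-step plugins.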

Installation-Method 2: Use Docker (Linux)

- ChatGPT only (recommended for most people)

# download project

git clone https://github.com/binary-husky/chatgpt_academic.git

cd chatgpt_academic

# configure overseas Proxy and OpenAI API KEY

Edit config.py with any text editor

# Install

docker build -t gpt-academic .

# Run

docker run --rm -it --net=host gpt-academic

# Test function plug-in

## Test the function plugin template (it asks GPT what happened today in history). You can use this function as a template to implement more complex functions.

Click "[Function Plugin Template Demo] Today in History"

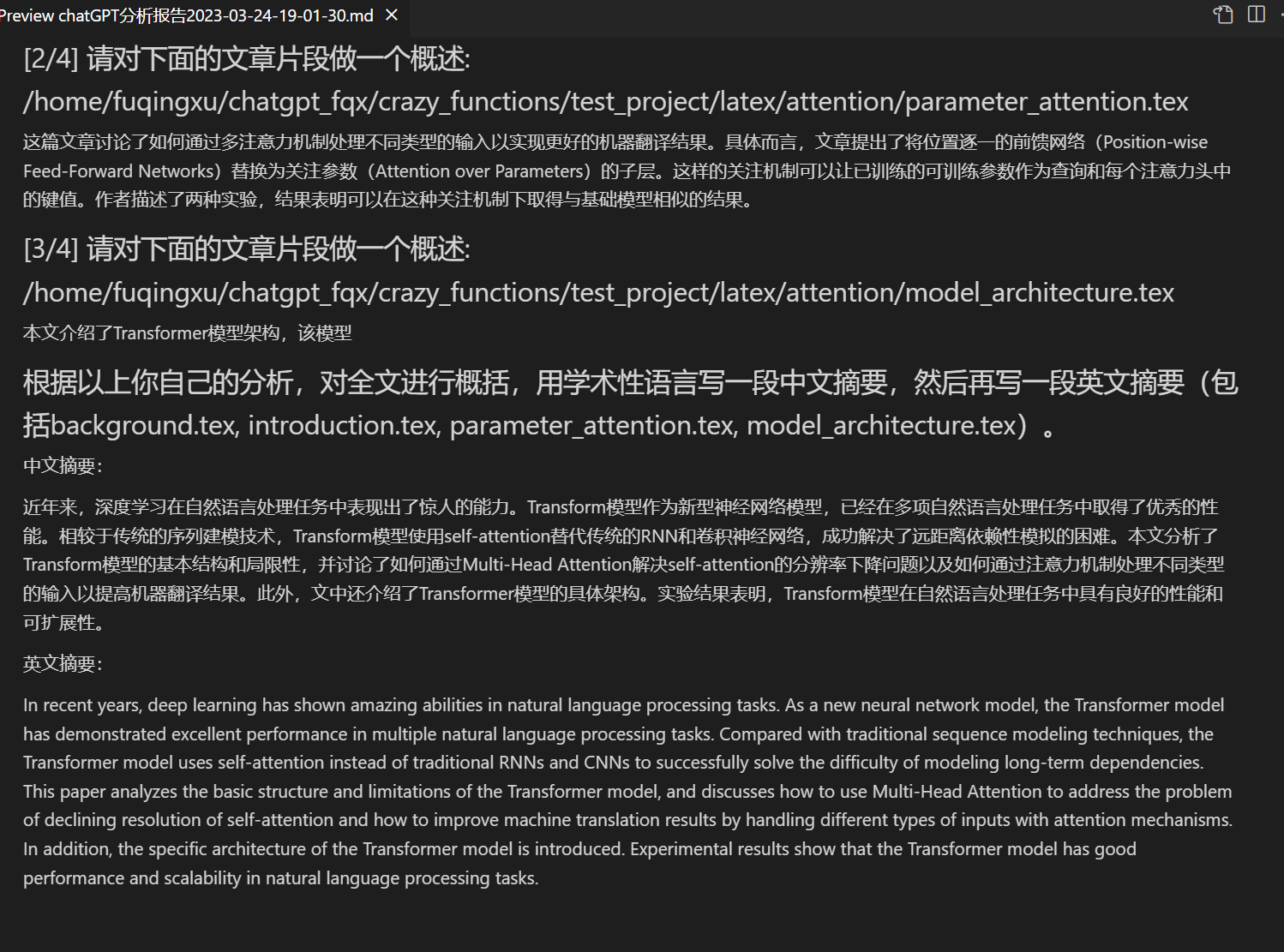

## Test Abstract Writing for Latex Projects

Enter ./crazy_functions/test_project/latex/attention in the input area, and then click "Read Tex Paper and Write Abstract"

## Test Python Project Analysis

Enter ./crazy_functions/test_project/python/dqn in the input area and click "Analyze the entire Python project."

More functions are available in the function plugin area drop-down menu.

- ChatGPT+ChatGLM (requires good familiarity with Docker and a sufficiently powerful computer)

# Modify dockerfile

cd docs && nano Dockerfile+ChatGLM

# How to build (Dockerfile+ChatGLM is in the docs directory, so cd docs first)

docker build -t gpt-academic --network=host -f Dockerfile+ChatGLM .

# How to run (1) Run directly:

docker run --rm -it --net=host --gpus=all gpt-academic

# How to run (2) Enter the container to make some adjustments before running:

docker run --rm -it --net=host --gpus=all gpt-academic bash

Installation-Method 3: Other Deployment Methods

Remote cloud server deployment: please visit [Deployment Wiki-1](https://github.com/binary-husky/chatgpt_academic/wiki/%E4%BA%91%E6%9C%8D%E5%8A%A1%E5%99%A8%E8%BF%9C%E7%A8%8B%E9%83%A8%E7%BD%B2%E6%8C%87%E5%8D%97)

Use WSL2 (Windows Subsystem for Linux): please visit Deployment Wiki-2

Installation-Proxy Configuration

Method 1: Conventional method

Method 2: Step-by-step tutorial for newcomers

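For the conventional method, the proxy-related part of config.py might look roughly like the sketch below. `USE_PROXY` and `proxies` are the option names mentioned in the installation steps; the proxy address and the dictionary format (a requests-style mapping from URL scheme to proxy URL) are assumptions, so follow the comments in your own config.py where they differ.

```python
# Sketch of the proxy-related settings in config.py.
# The address below is a placeholder assumption: replace it with the
# protocol, host, and port of your own proxy.
USE_PROXY = True

PROXY_ADDRESS = "socks5h://localhost:1080"  # hypothetical local proxy endpoint

if USE_PROXY:
    # Assumed format: requests-style mapping from URL scheme to proxy URL.
    proxies = {"http": PROXY_ADDRESS, "https": PROXY_ADDRESS}
else:
    proxies = None
```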

Customizing Convenient Buttons (Customizing Academic Shortcuts)

Open core_functional.py with any text editor, add an entry like the one below, and restart the program. (Once the button has been added and is visible, its prefix and suffix can be modified on the fly, and those changes take effect without a restart.) For example:

"Super English to Chinese translation": {

# Prefix, which will be added before your input. For example, to describe your requirements, such as translation, code interpretation, polishing, etc.

"Prefix": "Please translate the following content into Chinese and use a markdown table to interpret the proprietary terms in the text one by one:\n\n",

# Suffix, which will be added after your input. For example, combined with the prefix, you can put your input content in quotes.

"Suffix": "",

},
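To make the role of the two fields concrete, the sketch below shows how a prefix and suffix could be wrapped around the user's input to form the final prompt. The helper `apply_shortcut` and the example entry are hypothetical and are not taken from the project's code; only the "Prefix"/"Suffix" keys follow the entry format shown above.

```python
def apply_shortcut(entry, user_input):
    """Build the final prompt: prefix first, then the input, then the suffix."""
    return entry["Prefix"] + user_input + entry["Suffix"]


# Hypothetical entry that puts the user's input in quotes, as the
# suffix comment above suggests.
shortcuts = {
    "Explain quoted text": {
        "Prefix": 'Explain the following text:\n\n"',
        "Suffix": '"',
    },
}

prompt = apply_shortcut(shortcuts["Explain quoted text"], "attention is all you need")
```

An empty suffix, as in the translation example above, simply leaves the input at the end of the prompt.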

Some Function Displays

Image Display:


If a program can understand and analyze itself:

Analysis of any Python/Cpp project:

One-click reading comprehension and summary generation of Latex papers

Automatic report generation

Modular functional design

Source code translation to English

Todo and version planning:

- version 3.2+ (todo): Function plugin supports more parameter interfaces

- version 3.1: Support for querying multiple GPT models at the same time! Support for api2d and for load balancing across multiple API keys

- version 3.0: Support for chatglm and other small LLMs

- version 2.6: Refactored the plugin structure, improved interactivity, added more plugins

- version 2.5: Self-updating; fixed the problem of text being too long and tokens overflowing when summarizing the source code of large projects

- version 2.4: (1) Added PDF full text translation function; (2) Added function to switch input area position; (3) Added vertical layout option; (4) Multi-threaded function plugin optimization.

- version 2.3: Enhanced multi-threaded interactivity

- version 2.2: Function plugin supports hot reloading

- version 2.1: Foldable layout

- version 2.0: Introduction of modular function plugins

- version 1.0: Basic functions

Reference and learning

The code design of this project has referenced many other excellent projects, including:

# Reference project 1: Borrowed many tips from ChuanhuChatGPT

https://github.com/GaiZhenbiao/ChuanhuChatGPT

# Reference project 2: Tsinghua ChatGLM-6B:

https://github.com/THUDM/ChatGLM-6B