+

+  +

+

+

+

+🤗 Diffusers is the go-to library for state-of-the-art pretrained diffusion models for generating images, audio, and even 3D structures of molecules. Whether you're looking for a simple inference solution or training your own diffusion models, 🤗 Diffusers is a modular toolbox that supports both. Our library is designed with a focus on [usability over performance](https://huggingface.co/docs/diffusers/conceptual/philosophy#usability-over-performance), [simple over easy](https://huggingface.co/docs/diffusers/conceptual/philosophy#simple-over-easy), and [customizability over abstractions](https://huggingface.co/docs/diffusers/conceptual/philosophy#tweakable-contributorfriendly-over-abstraction).
+
+🤗 Diffusers offers three core components:
+
+- State-of-the-art [diffusion pipelines](https://huggingface.co/docs/diffusers/api/pipelines/overview) that can be run in inference with just a few lines of code.
+- Interchangeable noise [schedulers](https://huggingface.co/docs/diffusers/api/schedulers/overview) for different diffusion speeds and output quality.
+- Pretrained [models](https://huggingface.co/docs/diffusers/api/models) that can be used as building blocks, and combined with schedulers, for creating your own end-to-end diffusion systems.
+
+## Installation
+
+We recommend installing 🤗 Diffusers in a virtual environment from PyPI or Conda. For more details about installing [PyTorch](https://pytorch.org/get-started/locally/) and [Flax](https://flax.readthedocs.io/en/latest/#installation), please refer to their official documentation.
+
+### PyTorch
+
+With `pip` (official package):
+
+```bash
+pip install --upgrade diffusers[torch]
+```
+
+With `conda` (maintained by the community):
+
+```sh
+conda install -c conda-forge diffusers
+```
+
+### Flax
+
+With `pip` (official package):
+
+```bash
+pip install --upgrade diffusers[flax]
+```
+
+### Apple Silicon (M1/M2) support
+
+Please refer to the [How to use Stable Diffusion in Apple Silicon](https://huggingface.co/docs/diffusers/optimization/mps) guide.
+
+## Quickstart
+
+Generating outputs is super easy with 🤗 Diffusers. To generate an image from text, use the `from_pretrained` method to load any pretrained diffusion model (browse the [Hub](https://huggingface.co/models?library=diffusers&sort=downloads) for 4000+ checkpoints):
+
+```python
+from diffusers import DiffusionPipeline
+import torch
+
+pipeline = DiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", torch_dtype=torch.float16)
+pipeline.to("cuda")
+pipeline("An image of a squirrel in Picasso style").images[0]
+```
+
+You can also dig into the models and schedulers toolbox to build your own diffusion system:
+
+```python
+from diffusers import DDPMScheduler, UNet2DModel
+from PIL import Image
+import torch
+import numpy as np
+
+scheduler = DDPMScheduler.from_pretrained("google/ddpm-cat-256")
+model = UNet2DModel.from_pretrained("google/ddpm-cat-256").to("cuda")
+scheduler.set_timesteps(50)
+
+sample_size = model.config.sample_size
+noise = torch.randn((1, 3, sample_size, sample_size)).to("cuda")
+input = noise
+
+for t in scheduler.timesteps:
+    with torch.no_grad():
+        noisy_residual = model(input, t).sample
+        prev_noisy_sample = scheduler.step(noisy_residual, t, input).prev_sample
+        input = prev_noisy_sample
+
+image = (input / 2 + 0.5).clamp(0, 1)
+image = image.cpu().permute(0, 2, 3, 1).numpy()[0]
+image = Image.fromarray((image * 255).round().astype("uint8"))
+image
+```
+
+Check out the [Quickstart](https://huggingface.co/docs/diffusers/quicktour) to launch your diffusion journey today!
+
+## How to navigate the documentation
+
+| **Documentation** | **What can I learn?** |
+|---|---|
+| [Tutorial](https://huggingface.co/docs/diffusers/tutorials/tutorial_overview) | A basic crash course for learning how to use the library's most important features like using models and schedulers to build your own diffusion system, and training your own diffusion model. |
+| [Loading](https://huggingface.co/docs/diffusers/using-diffusers/loading_overview) | Guides for how to load and configure all the components (pipelines, models, and schedulers) of the library, as well as how to use different schedulers. |
+| [Pipelines for inference](https://huggingface.co/docs/diffusers/using-diffusers/pipeline_overview) | Guides for how to use pipelines for different inference tasks, batched generation, controlling generated outputs and randomness, and how to contribute a pipeline to the library. |
+| [Optimization](https://huggingface.co/docs/diffusers/optimization/opt_overview) | Guides for how to optimize your diffusion model to run faster and consume less memory. |
+| [Training](https://huggingface.co/docs/diffusers/training/overview) | Guides for how to train a diffusion model for different tasks with different training techniques. |
+
+## Contribution
+
+We ❤️ contributions from the open-source community!
+If you want to contribute to this library, please check out our [Contribution guide](https://github.com/huggingface/diffusers/blob/main/CONTRIBUTING.md).
+You can look out for [issues](https://github.com/huggingface/diffusers/issues) you'd like to tackle to contribute to the library.
+- See [Good first issues](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22) for general opportunities to contribute
+- See [New model/pipeline](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+pipeline%2Fmodel%22) to contribute exciting new diffusion models / diffusion pipelines
+- See [New scheduler](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+scheduler%22)
+
+Also, say 👋 in our public Discord channel. We discuss the hottest trends about diffusion models, help each other with contributions, personal projects or just hang out ☕.

+

+

+## Popular Tasks & Pipelines

+

+


+

+ +

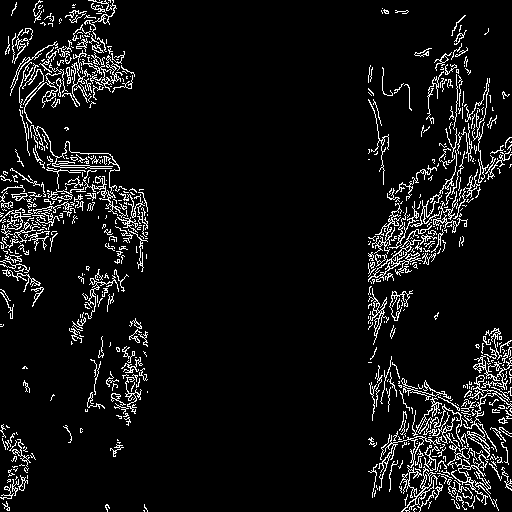

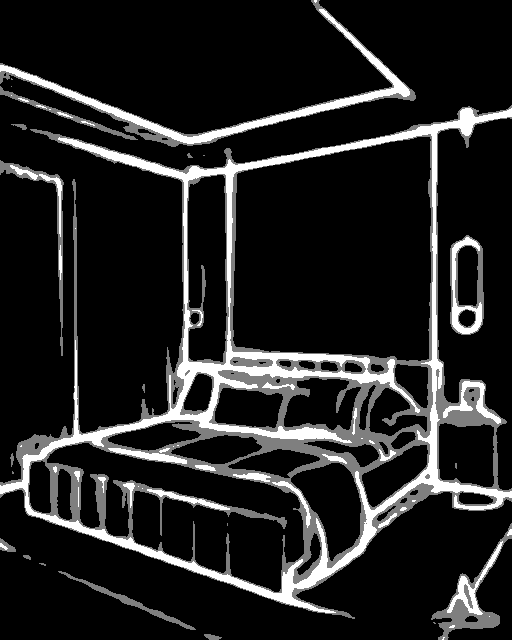

+Prepare the conditioning:

+

+```python
+import cv2
+import numpy as np
+from PIL import Image
+from diffusers.utils import load_image
+
+canny_image = load_image(
+    "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/landscape.png"
+)
+canny_image = np.array(canny_image)
+
+low_threshold = 100
+high_threshold = 200
+
+# detect edges; the result is a single-channel uint8 edge map
+canny_image = cv2.Canny(canny_image, low_threshold, high_threshold)
+
+# zero out the middle columns of the image, where the pose will be overlaid
+zero_start = canny_image.shape[1] // 4
+zero_end = zero_start + canny_image.shape[1] // 2
+canny_image[:, zero_start:zero_end] = 0
+
+# replicate the edge map across three channels and convert back to a PIL image
+canny_image = canny_image[:, :, None]
+canny_image = np.concatenate([canny_image, canny_image, canny_image], axis=2)
+canny_image = Image.fromarray(canny_image)
+```
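To make the masking step in the snippet above concrete, here is a small pure-Python sketch of the same column arithmetic on a toy edge map. The helper name `zero_middle_columns` and the nested-list representation are illustrative assumptions, not part of `diffusers` or OpenCV; the documented code works on a NumPy array in place instead.

```python
def zero_middle_columns(edge_map):
    """Return a copy of a 2D edge map (list of rows) with its middle half zeroed.

    Hypothetical helper for illustration only; it mirrors the slice
    canny_image[:, zero_start:zero_end] = 0 from the snippet above.
    """
    width = len(edge_map[0])
    zero_start = width // 4             # the left quarter is kept
    zero_end = zero_start + width // 2  # the middle half is cleared
    return [
        [0 if zero_start <= col < zero_end else value for col, value in enumerate(row)]
        for row in edge_map
    ]


# A toy 2x8 "edge map" in which every pixel is an edge.
toy_edges = [[255] * 8 for _ in range(2)]
masked = zero_middle_columns(toy_edges)
print(masked[0])
```

With a width of 8, columns 2 through 5 are cleared, which leaves room in the centre of the frame for the pose conditioning.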

+

+

+


+

+ +

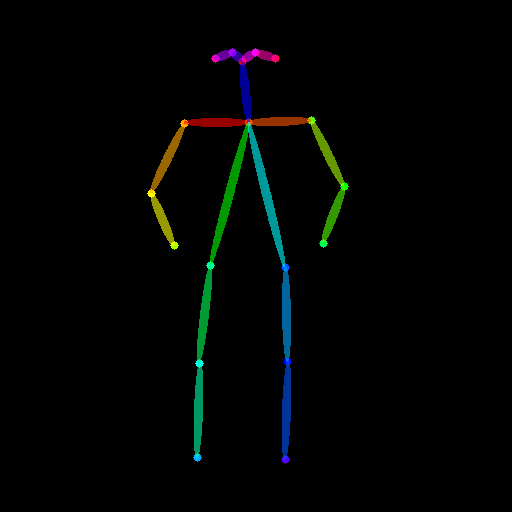

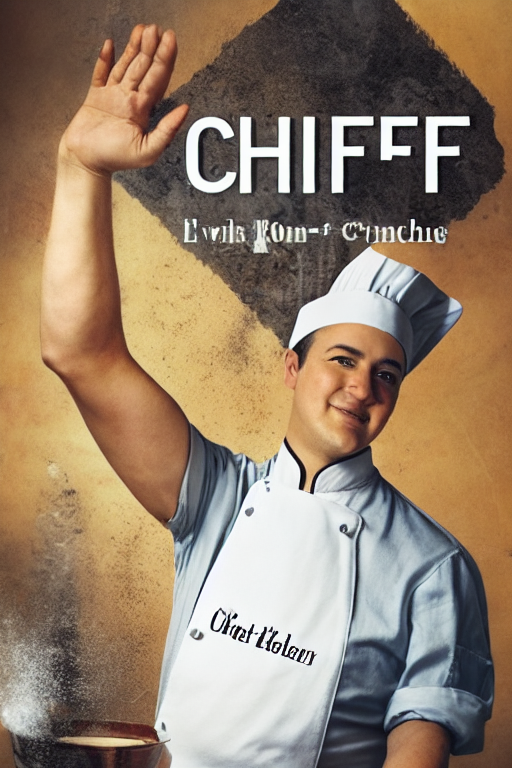

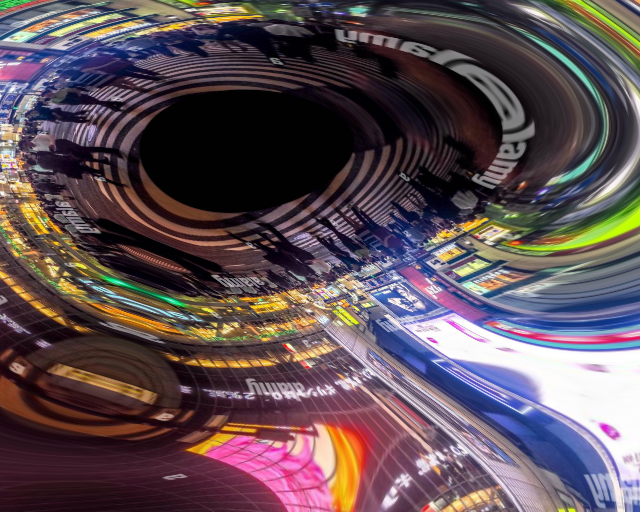

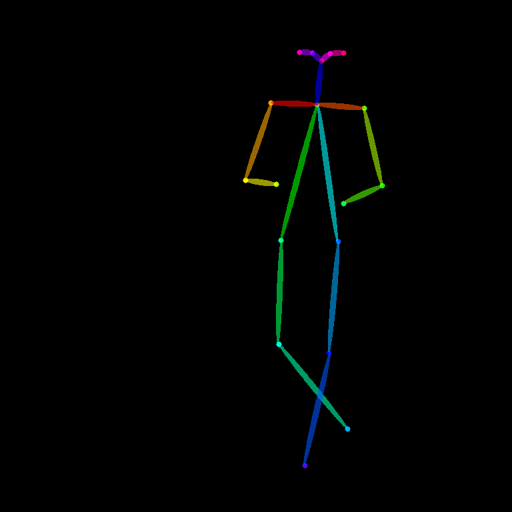

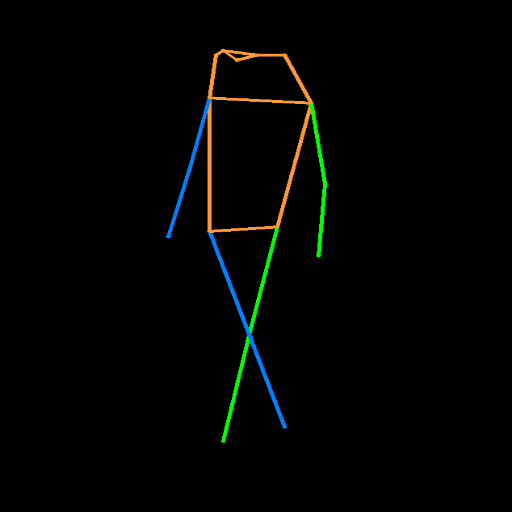

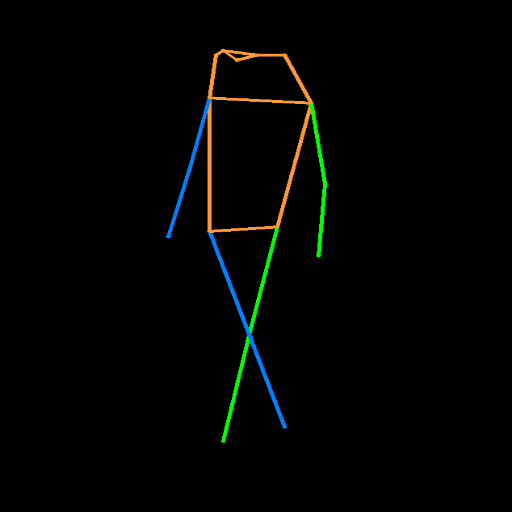

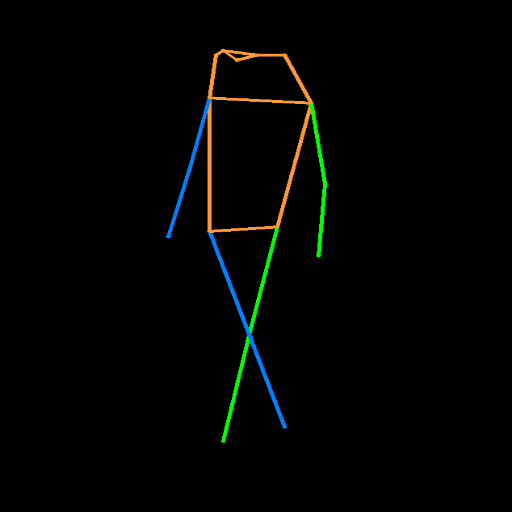

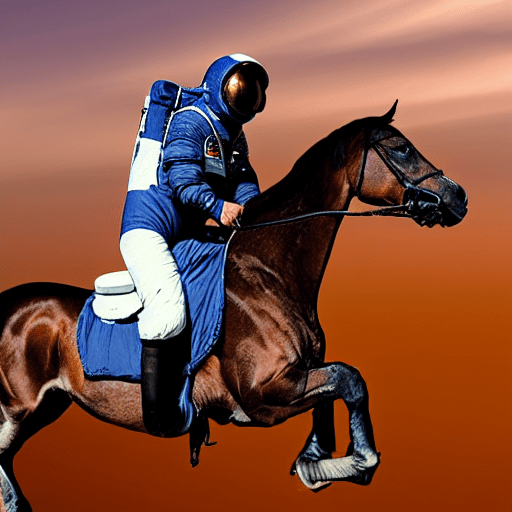

+### Openpose conditioning

+

+The original image:

+

+

+


+

+ +

+Prepare the conditioning:

+

+```python
+from controlnet_aux import OpenposeDetector
+from diffusers.utils import load_image
+
+openpose = OpenposeDetector.from_pretrained("lllyasviel/ControlNet")
+
+openpose_image = load_image(
+    "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/person.png"
+)
+openpose_image = openpose(openpose_image)
+```

+

+

+


+

+ +

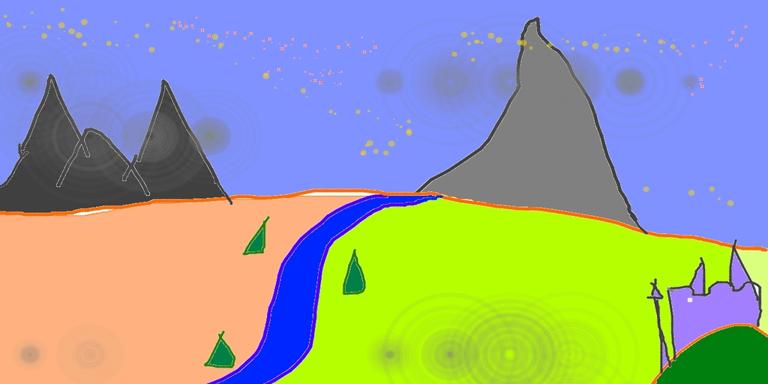

+### Running ControlNet with multiple conditionings

+

+```python

+from diffusers import StableDiffusionControlNetPipeline, ControlNetModel, UniPCMultistepScheduler

+import torch

+

+controlnet = [

+ ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-openpose", torch_dtype=torch.float16),

+ ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny", torch_dtype=torch.float16),

+]

+

+pipe = StableDiffusionControlNetPipeline.from_pretrained(

+ "runwayml/stable-diffusion-v1-5", controlnet=controlnet, torch_dtype=torch.float16

+)

+pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)

+

+pipe.enable_xformers_memory_efficient_attention()

+pipe.enable_model_cpu_offload()

+

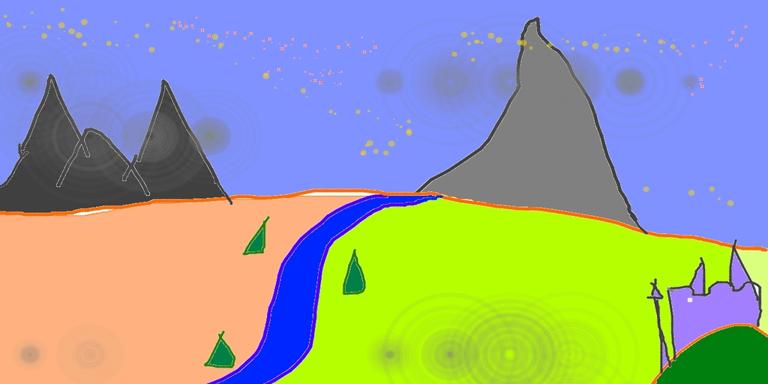

+prompt = "a giant standing in a fantasy landscape, best quality"

+negative_prompt = "monochrome, lowres, bad anatomy, worst quality, low quality"

+

+generator = torch.Generator(device="cpu").manual_seed(1)

+

+images = [openpose_image, canny_image]

+

+image = pipe(

+ prompt,

+ images,

+ num_inference_steps=20,

+ generator=generator,

+ negative_prompt=negative_prompt,

+ controlnet_conditioning_scale=[1.0, 0.8],

+).images[0]

+

+image.save("./multi_controlnet_output.png")

+```
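In the call above, `controlnet_conditioning_scale=[1.0, 0.8]` supplies one weight per ControlNet, in the same order as the `controlnet` list. As a rough mental model (an assumption about the mechanism, not a copy of the library code), each ControlNet's residuals are multiplied by its scale and the results are summed. The function `combine_residuals` and the toy numbers below are hypothetical.

```python
def combine_residuals(residuals_per_controlnet, scales):
    """Scale each ControlNet's residuals and sum them element-wise.

    Hypothetical illustration: real residuals are tensors, plain lists are
    used here so the arithmetic is easy to follow.
    """
    if len(residuals_per_controlnet) != len(scales):
        raise ValueError("expected exactly one scale per ControlNet")
    combined = [0.0] * len(residuals_per_controlnet[0])
    for residuals, scale in zip(residuals_per_controlnet, scales):
        for i, value in enumerate(residuals):
            combined[i] += scale * value
    return combined


openpose_residuals = [1.0, 2.0, 3.0]   # toy values standing in for the pose ControlNet
canny_residuals = [10.0, 20.0, 30.0]   # toy values standing in for the canny ControlNet
combined = combine_residuals([openpose_residuals, canny_residuals], scales=[1.0, 0.8])
print(combined)
```

Lowering the second scale reduces how strongly the canny edges constrain the result relative to the pose.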

+

+

+


+

+ +

+### Guess Mode

+

+Guess Mode is [a ControlNet feature that was implemented](https://github.com/lllyasviel/ControlNet#guess-mode--non-prompt-mode) after the publication of [the paper](https://arxiv.org/abs/2302.05543). The description states:

+

+>In this mode, the ControlNet encoder will try best to recognize the content of the input control map, like depth map, edge map, scribbles, etc, even if you remove all prompts.

+

+#### The core implementation:

+

+It adjusts the scale of the output residuals from ControlNet by a fixed ratio depending on the block depth. The shallowest DownBlock corresponds to `0.1`. As the blocks get deeper, the scale increases exponentially, and the scale for the output of the MidBlock becomes `1.0`.

+

+Since the core implementation is just this, **it does not have any impact on prompt conditioning**. While it is common to use it without specifying any prompts, it is also possible to provide prompts if desired.
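The scaling rule described above can be sketched in a few lines of plain Python. This assumes 12 down-block residuals plus one mid-block residual (the count is an assumption for a Stable Diffusion v1.5-sized ControlNet) and log-spaced scales running from `0.1` to `1.0`. `guess_mode_scales` is a hypothetical helper name, not a `diffusers` function.

```python
def guess_mode_scales(num_down_residuals=12):
    """Log-spaced scales from 0.1 (shallowest down block) to 1.0 (mid block).

    Hypothetical helper: returns num_down_residuals + 1 values, with the last
    one applied to the mid-block residual.
    """
    steps = num_down_residuals  # number of intervals between the first and last scale
    return [10 ** (-1 + i / steps) for i in range(num_down_residuals + 1)]


scales = guess_mode_scales()
for depth, scale in enumerate(scales):
    print(f"residual {depth:2d}: scale {scale:.3f}")
```

Because the spacing is logarithmic, each residual is scaled by a constant factor relative to the previous one, so deeper blocks dominate the conditioning signal.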

+

+#### Usage:

+

+Just specify `guess_mode=True` when calling `pipe()`. A `guidance_scale` between 3.0 and 5.0 is [recommended](https://github.com/lllyasviel/ControlNet#guess-mode--non-prompt-mode).
+
+```py
+from diffusers import StableDiffusionControlNetPipeline, ControlNetModel
+import torch
+
+controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny")
+pipe = StableDiffusionControlNetPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", controlnet=controlnet).to(
+    "cuda"
+)
+# an empty prompt is allowed here: guess mode relies on the control image alone
+image = pipe("", image=canny_image, guess_mode=True, guidance_scale=3.0).images[0]
+image.save("guess_mode_generated.png")
+```

+

+#### Output image comparison:

+Canny Control Example

+

+|no guess_mode with prompt|guess_mode without prompt|

+|---|---|

+|

+


+

+

+## Available checkpoints

+

+ControlNet requires a *control image* in addition to the text-to-image *prompt*.

+Each pretrained model is trained with a different conditioning method, so each one expects a different kind of control image. For example, Canny edge conditioning requires the control image to be the output of a Canny filter, while depth conditioning requires the control image to be a depth map. See the overview and image examples below to learn more.

+

+All checkpoints can be found under the authors' namespace [lllyasviel](https://huggingface.co/lllyasviel).
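Because each checkpoint expects a specific kind of control image, it can help to keep the pairing explicit in code. The mapping below is a small illustrative sketch: the checkpoint ids are the ones listed in the v1.0 table in this section, while the dictionary, the short conditioning keys, and the `checkpoint_for` helper are hypothetical names chosen for this example.

```python
# Conditioning type -> ControlNet v1.0 checkpoint id (ids taken from the table in this section).
CONTROLNET_V10_CHECKPOINTS = {
    "canny": "lllyasviel/sd-controlnet-canny",
    "depth": "lllyasviel/sd-controlnet-depth",
    "hed": "lllyasviel/sd-controlnet-hed",
    "mlsd": "lllyasviel/sd-controlnet-mlsd",
    "normal": "lllyasviel/sd-controlnet-normal",
    "openpose": "lllyasviel/sd-controlnet-openpose",
    "scribble": "lllyasviel/sd-controlnet-scribble",
    "seg": "lllyasviel/sd-controlnet-seg",
}


def checkpoint_for(conditioning):
    """Look up the checkpoint id for a conditioning type (hypothetical helper)."""
    try:
        return CONTROLNET_V10_CHECKPOINTS[conditioning]
    except KeyError:
        known = ", ".join(sorted(CONTROLNET_V10_CHECKPOINTS))
        raise ValueError(f"unknown conditioning {conditioning!r}; expected one of: {known}") from None


print(checkpoint_for("canny"))
```

The returned id can then be passed to `ControlNetModel.from_pretrained`, as in the examples earlier in this document.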

+

+**13.04.2024 Update**: The author has released improved controlnet checkpoints v1.1 - see [here](#controlnet-v1.1).

+

+### ControlNet v1.0

+

+| Model Name | Control Image Overview| Control Image Example | Generated Image Example |

+|---|---|---|---|


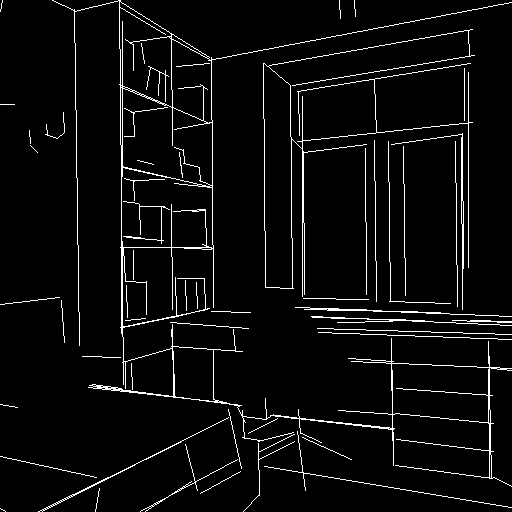

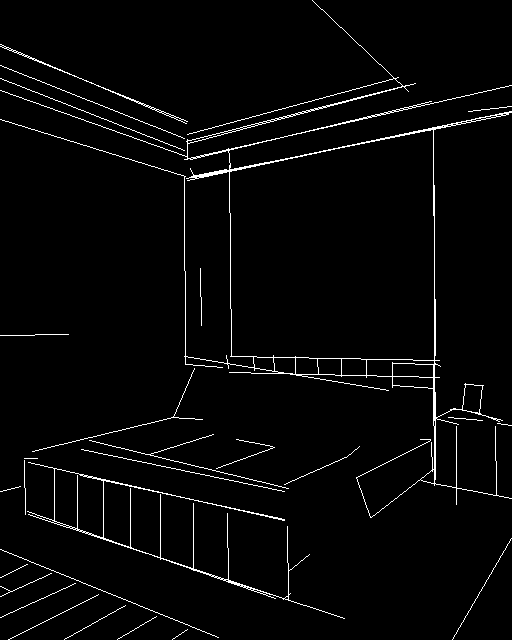

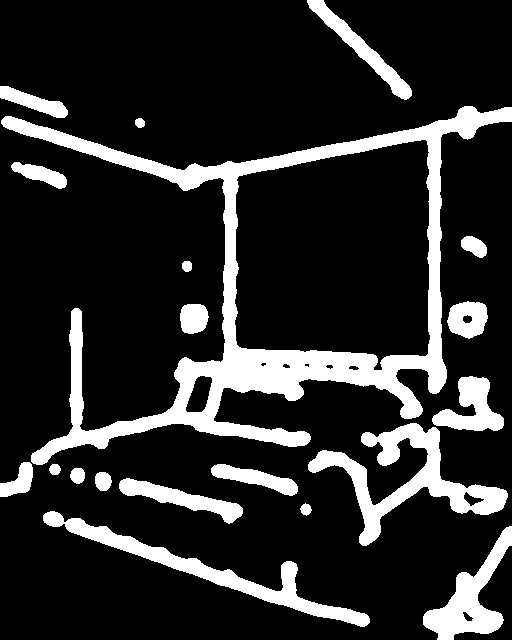

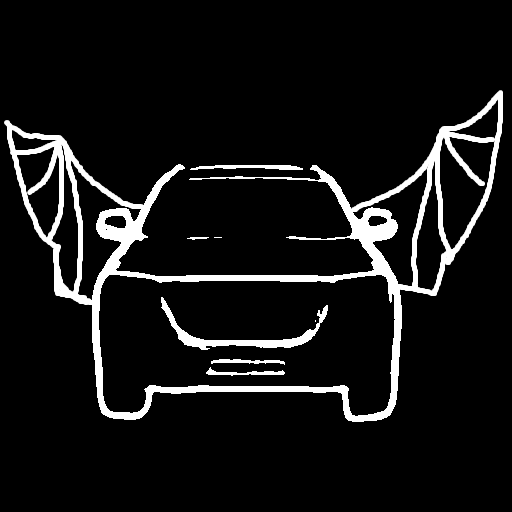

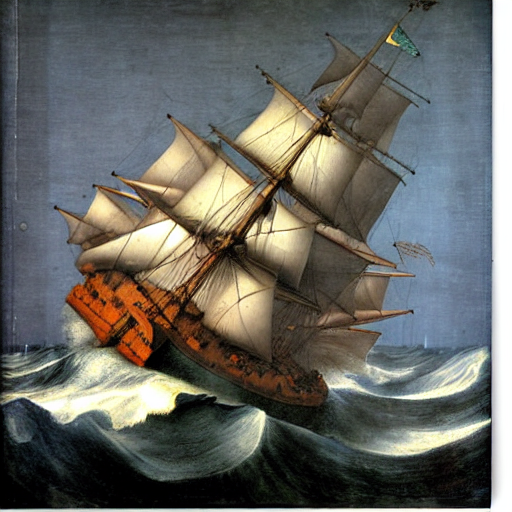

+|[lllyasviel/sd-controlnet-canny](https://huggingface.co/lllyasviel/sd-controlnet-canny) *Trained with canny edge detection* | A monochrome image with white edges on a black background.| | |
+|[lllyasviel/sd-controlnet-depth](https://huggingface.co/lllyasviel/sd-controlnet-depth) *Trained with Midas depth estimation* | A grayscale image with black representing deep areas and white representing shallow areas.| | |
+|[lllyasviel/sd-controlnet-hed](https://huggingface.co/lllyasviel/sd-controlnet-hed) *Trained with HED edge detection (soft edge)* | A monochrome image with white soft edges on a black background.| | |
+|[lllyasviel/sd-controlnet-mlsd](https://huggingface.co/lllyasviel/sd-controlnet-mlsd) *Trained with M-LSD line detection* | A monochrome image composed only of white straight lines on a black background.| | |
+|[lllyasviel/sd-controlnet-normal](https://huggingface.co/lllyasviel/sd-controlnet-normal) *Trained with normal map* | A [normal mapped](https://en.wikipedia.org/wiki/Normal_mapping) image.| | |

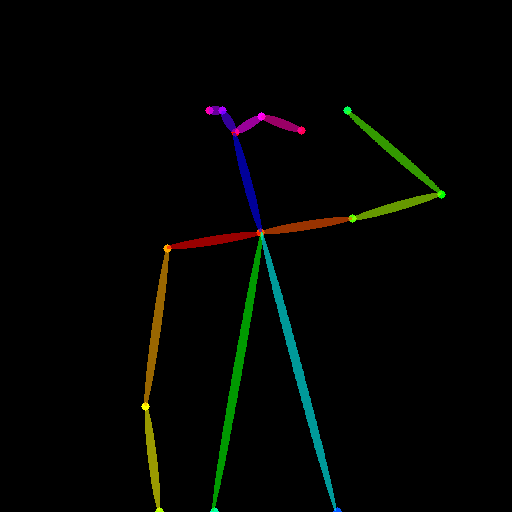


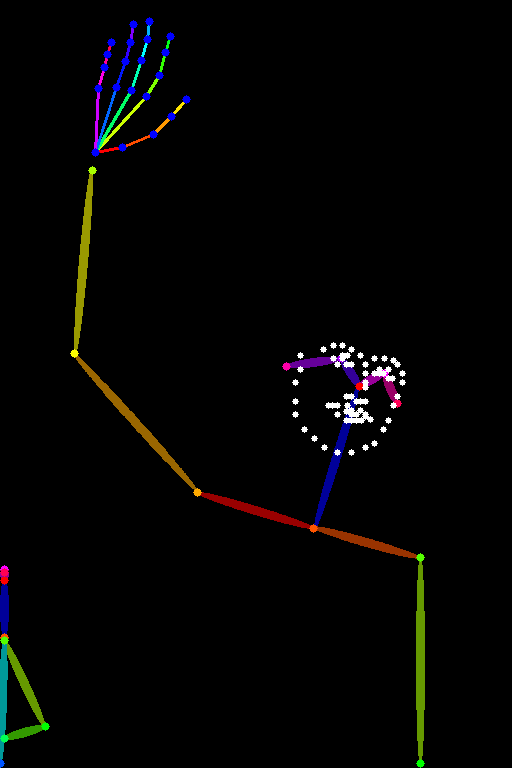

+|[lllyasviel/sd-controlnet-openpose](https://huggingface.co/lllyasviel/sd-controlnet-openpose) *Trained with OpenPose bone image* | An [OpenPose bone](https://github.com/CMU-Perceptual-Computing-Lab/openpose) image.| | |
+|[lllyasviel/sd-controlnet-scribble](https://huggingface.co/lllyasviel/sd-controlnet-scribble) *Trained with human scribbles* | A hand-drawn monochrome image with white outlines on a black background.| | |
+|[lllyasviel/sd-controlnet-seg](https://huggingface.co/lllyasviel/sd-controlnet-seg) *Trained with semantic segmentation* | An image following the [ADE20K](https://groups.csail.mit.edu/vision/datasets/ADE20K/) segmentation protocol.| | |

+

+### ControlNet v1.1

+

+| Model Name | Control Image Overview| Condition Image | Control Image Example | Generated Image Example |

+|---|---|---|---|---|

+|[lllyasviel/control_v11p_sd15_canny](https://huggingface.co/lllyasviel/control_v11p_sd15_canny)| *Trained with canny edge detection* | A monochrome image with white edges on a black background.| | |
+|[lllyasviel/control_v11e_sd15_ip2p](https://huggingface.co/lllyasviel/control_v11e_sd15_ip2p)| *Trained with pixel to pixel instruction* | No condition.| | |
+|[lllyasviel/control_v11p_sd15_inpaint](https://huggingface.co/lllyasviel/control_v11p_sd15_inpaint)| *Trained with image inpainting* | No condition.| | |
+|[lllyasviel/control_v11p_sd15_mlsd](https://huggingface.co/lllyasviel/control_v11p_sd15_mlsd)| *Trained with multi-level line segment detection* | An image with annotated line segments.| | |
+|[lllyasviel/control_v11f1p_sd15_depth](https://huggingface.co/lllyasviel/control_v11f1p_sd15_depth)| *Trained with depth estimation* | An image with depth information, usually represented as a grayscale image.| | |
+|[lllyasviel/control_v11p_sd15_normalbae](https://huggingface.co/lllyasviel/control_v11p_sd15_normalbae)| *Trained with surface normal estimation* | An image with surface normal information, usually represented as a color-coded image.| | |
+|[lllyasviel/control_v11p_sd15_seg](https://huggingface.co/lllyasviel/control_v11p_sd15_seg)| *Trained with image segmentation* | An image with segmented regions, usually represented as a color-coded image.| | |
+|[lllyasviel/control_v11p_sd15_lineart](https://huggingface.co/lllyasviel/control_v11p_sd15_lineart)| *Trained with line art generation* | An image with line art, usually black lines on a white background.| | |
+|[lllyasviel/control_v11p_sd15s2_lineart_anime](https://huggingface.co/lllyasviel/control_v11p_sd15s2_lineart_anime)| *Trained with anime line art generation* | An image with anime-style line art.| | |
+|[lllyasviel/control_v11p_sd15_openpose](https://huggingface.co/lllyasviel/control_v11p_sd15_openpose)| *Trained with human pose estimation* | An image with human poses, usually represented as a set of keypoints or skeletons.| | |
+|[lllyasviel/control_v11p_sd15_scribble](https://huggingface.co/lllyasviel/control_v11p_sd15_scribble)| *Trained with scribble-based image generation* | An image with scribbles, usually random or user-drawn strokes.| | |
+|[lllyasviel/control_v11p_sd15_softedge](https://huggingface.co/lllyasviel/control_v11p_sd15_softedge)| *Trained with soft edge image generation* | An image with soft edges, usually to create a more painterly or artistic effect.| | |
+|[lllyasviel/control_v11e_sd15_shuffle](https://huggingface.co/lllyasviel/control_v11e_sd15_shuffle)| *Trained with image shuffling* | An image with shuffled patches or regions.| | |
+|[lllyasviel/control_v11f1e_sd15_tile](https://huggingface.co/lllyasviel/control_v11f1e_sd15_tile)| *Trained with image tiling* | A blurry image or part of an image.| | |

+

+## StableDiffusionControlNetPipeline

+[[autodoc]] StableDiffusionControlNetPipeline

+ - all

+ - __call__

+ - enable_attention_slicing

+ - disable_attention_slicing

+ - enable_vae_slicing

+ - disable_vae_slicing

+ - enable_xformers_memory_efficient_attention

+ - disable_xformers_memory_efficient_attention

+ - load_textual_inversion

+

+## StableDiffusionControlNetImg2ImgPipeline

+[[autodoc]] StableDiffusionControlNetImg2ImgPipeline

+ - all

+ - __call__

+ - enable_attention_slicing

+ - disable_attention_slicing

+ - enable_vae_slicing

+ - disable_vae_slicing

+ - enable_xformers_memory_efficient_attention

+ - disable_xformers_memory_efficient_attention

+ - load_textual_inversion

+

+## StableDiffusionControlNetInpaintPipeline

+[[autodoc]] StableDiffusionControlNetInpaintPipeline

+ - all

+ - __call__

+ - enable_attention_slicing

+ - disable_attention_slicing

+ - enable_vae_slicing

+ - disable_vae_slicing

+ - enable_xformers_memory_efficient_attention

+ - disable_xformers_memory_efficient_attention

+ - load_textual_inversion

+

+## FlaxStableDiffusionControlNetPipeline

+[[autodoc]] FlaxStableDiffusionControlNetPipeline

+ - all

+ - __call__

+

diff --git a/diffusers/docs/source/en/api/pipelines/cycle_diffusion.md b/diffusers/docs/source/en/api/pipelines/cycle_diffusion.md

new file mode 100644

index 0000000000000000000000000000000000000000..3ff0d768879a5b073c6e987e6e9eb5e5d8fe3742

--- /dev/null

+++ b/diffusers/docs/source/en/api/pipelines/cycle_diffusion.md

@@ -0,0 +1,33 @@

+

+

+# Cycle Diffusion

+

+Cycle Diffusion is a text-guided image-to-image generation model proposed in [Unifying Diffusion Models' Latent Space, with Applications to CycleDiffusion and Guidance](https://huggingface.co/papers/2210.05559) by Chen Henry Wu and Fernando De la Torre.

+

+The abstract from the paper is:

+

+*Diffusion models have achieved unprecedented performance in generative modeling. The commonly-adopted formulation of the latent code of diffusion models is a sequence of gradually denoised samples, as opposed to the simpler (e.g., Gaussian) latent space of GANs, VAEs, and normalizing flows. This paper provides an alternative, Gaussian formulation of the latent space of various diffusion models, as well as an invertible DPM-Encoder that maps images into the latent space. While our formulation is purely based on the definition of diffusion models, we demonstrate several intriguing consequences. (1) Empirically, we observe that a common latent space emerges from two diffusion models trained independently on related domains. In light of this finding, we propose CycleDiffusion, which uses DPM-Encoder for unpaired image-to-image translation. Furthermore, applying CycleDiffusion to text-to-image diffusion models, we show that large-scale text-to-image diffusion models can be used as zero-shot image-to-image editors. (2) One can guide pre-trained diffusion models and GANs by controlling the latent codes in a unified, plug-and-play formulation based on energy-based models. Using the CLIP model and a face recognition model as guidance, we demonstrate that diffusion models have better coverage of low-density sub-populations and individuals than GANs.*

+

+


+

+*Trained with spatial color palette* | An image with an 8x8 color palette.| | |
+|[TencentARC/t2iadapter_canny_sd14v1](https://huggingface.co/TencentARC/t2iadapter_canny_sd14v1) *Trained with canny edge detection* | A monochrome image with white edges on a black background.| | |
+|[TencentARC/t2iadapter_sketch_sd14v1](https://huggingface.co/TencentARC/t2iadapter_sketch_sd14v1) *Trained with [PidiNet](https://github.com/zhuoinoulu/pidinet) edge detection* | A hand-drawn monochrome image with white outlines on a black background.| | |
+|[TencentARC/t2iadapter_depth_sd14v1](https://huggingface.co/TencentARC/t2iadapter_depth_sd14v1) *Trained with Midas depth estimation* | A grayscale image with black representing deep areas and white representing shallow areas.| | |
+|[TencentARC/t2iadapter_openpose_sd14v1](https://huggingface.co/TencentARC/t2iadapter_openpose_sd14v1) *Trained with OpenPose bone image* | An [OpenPose bone](https://github.com/CMU-Perceptual-Computing-Lab/openpose) image.| | |
+|[TencentARC/t2iadapter_keypose_sd14v1](https://huggingface.co/TencentARC/t2iadapter_keypose_sd14v1) *Trained with mmpose skeleton image* | An [mmpose skeleton](https://github.com/open-mmlab/mmpose) image.| | |
+|[TencentARC/t2iadapter_seg_sd14v1](https://huggingface.co/TencentARC/t2iadapter_seg_sd14v1) *Trained with semantic segmentation* | A [custom](https://github.com/TencentARC/T2I-Adapter/discussions/25) segmentation protocol image.| | |

+|[TencentARC/t2iadapter_canny_sd15v2](https://huggingface.co/TencentARC/t2iadapter_canny_sd15v2)||

+|[TencentARC/t2iadapter_depth_sd15v2](https://huggingface.co/TencentARC/t2iadapter_depth_sd15v2)||

+|[TencentARC/t2iadapter_sketch_sd15v2](https://huggingface.co/TencentARC/t2iadapter_sketch_sd15v2)||

+|[TencentARC/t2iadapter_zoedepth_sd15v1](https://huggingface.co/TencentARC/t2iadapter_zoedepth_sd15v1)||

+

+## Combining multiple adapters

+

+[`MultiAdapter`] can be used for applying multiple conditionings at once.

+

+Here we use the keypose adapter for the character posture and the depth adapter for creating the scene.

+

+```py

+import torch

+from PIL import Image

+from diffusers.utils import load_image

+

+cond_keypose = load_image(

+ "https://huggingface.co/datasets/diffusers/docs-images/resolve/main/t2i-adapter/keypose_sample_input.png"

+)

+cond_depth = load_image(

+ "https://huggingface.co/datasets/diffusers/docs-images/resolve/main/t2i-adapter/depth_sample_input.png"

+)

+cond = [[cond_keypose, cond_depth]]

+

+prompt = ["A man walking in an office room with a nice view"]

+```

+

+The two control images look as such:

+

+

+

+

+

+`MultiAdapter` combines keypose and depth adapters.

+

+`adapter_conditioning_scale` balances the relative influence of the different adapters.

+

+```py

+from diffusers import StableDiffusionAdapterPipeline, MultiAdapter, T2IAdapter

+

+adapters = MultiAdapter(

+ [

+ T2IAdapter.from_pretrained("TencentARC/t2iadapter_keypose_sd14v1"),

+ T2IAdapter.from_pretrained("TencentARC/t2iadapter_depth_sd14v1"),

+ ]

+)

+adapters = adapters.to(torch.float16)

+

+pipe = StableDiffusionAdapterPipeline.from_pretrained(

+ "CompVis/stable-diffusion-v1-4",

+ torch_dtype=torch.float16,

+ adapter=adapters,

+)

+

+images = pipe(prompt, cond, adapter_conditioning_scale=[0.8, 0.8])

+```
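As a rough mental model of what `adapter_conditioning_scale` does, the sketch below combines per-adapter features as a weighted sum. It is a simplified illustration in plain Python, not the library's implementation: `combine_adapter_features` and the toy feature lists are hypothetical, and the assumption that scaled features are simply added together is ours.

```python
from typing import List, Sequence


def combine_adapter_features(
    features: Sequence[Sequence[float]], scales: Sequence[float]
) -> List[float]:
    """Weighted sum of per-adapter feature vectors (hypothetical helper)."""
    if len(features) != len(scales):
        raise ValueError("need exactly one scale per adapter")
    length = len(features[0])
    if any(len(feature) != length for feature in features):
        raise ValueError("all adapters must produce features of the same size")
    combined = [0.0] * length
    for feature, scale in zip(features, scales):
        for i, value in enumerate(feature):
            combined[i] += scale * value
    return combined


keypose_features = [1.0, 0.0, 2.0]  # toy stand-in for the keypose adapter output
depth_features = [0.0, 1.0, 2.0]  # toy stand-in for the depth adapter output

# Equal scales give both conditionings the same influence.
balanced = combine_adapter_features([keypose_features, depth_features], [0.8, 0.8])
# A larger first scale lets the pose dominate over the depth map.
pose_heavy = combine_adapter_features([keypose_features, depth_features], [1.0, 0.2])
```

Under this reading, raising one entry of `adapter_conditioning_scale` relative to the other shifts the result toward that adapter's control image.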

+

+

+

+

+## T2I Adapter vs ControlNet

+

+T2I-Adapter is similar to [ControlNet](https://huggingface.co/docs/diffusers/main/en/api/pipelines/controlnet).
+T2I-Adapter uses a smaller auxiliary network that runs only once for the entire diffusion process, rather than at every denoising step.
+However, T2I-Adapter performs slightly worse than ControlNet.

+
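To make the cost difference concrete, the toy sketch below counts how often the auxiliary network is evaluated during sampling. It assumes the ControlNet branch is evaluated at every denoising step while the adapter runs once; `auxiliary_forward_passes` is a hypothetical helper, and the count ignores details such as classifier-free guidance batching.

```python
def auxiliary_forward_passes(num_inference_steps: int, runs_every_step: bool) -> int:
    """Count evaluations of the auxiliary (conditioning) network during sampling."""
    if num_inference_steps < 1:
        raise ValueError("num_inference_steps must be positive")
    return num_inference_steps if runs_every_step else 1


steps = 50
t2i_adapter_calls = auxiliary_forward_passes(steps, runs_every_step=False)
controlnet_calls = auxiliary_forward_passes(steps, runs_every_step=True)
print(t2i_adapter_calls, controlnet_calls)  # prints "1 50"
```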

+## StableDiffusionAdapterPipeline

+[[autodoc]] StableDiffusionAdapterPipeline

+ - all

+ - __call__

+ - enable_attention_slicing

+ - disable_attention_slicing

+ - enable_vae_slicing

+ - disable_vae_slicing

+ - enable_xformers_memory_efficient_attention

+ - disable_xformers_memory_efficient_attention

diff --git a/diffusers/docs/source/en/api/pipelines/stable_diffusion/depth2img.md b/diffusers/docs/source/en/api/pipelines/stable_diffusion/depth2img.md

new file mode 100644

index 0000000000000000000000000000000000000000..09814f387b724071d5c29a28dec9efd9b2bfc02f

--- /dev/null

+++ b/diffusers/docs/source/en/api/pipelines/stable_diffusion/depth2img.md

@@ -0,0 +1,40 @@

+

+

+# Depth-to-image

+

+The Stable Diffusion model can also infer depth based on an image using [MiDaS](https://github.com/isl-org/MiDaS). This allows you to condition the generation of new images on a text prompt and an initial image, and to pass a `depth_map` to preserve the image structure.

+
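A minimal usage sketch follows, assuming the `stabilityai/stable-diffusion-2-depth` checkpoint and a CUDA device; neither is named in the text above. `depth_guided_edit` is a hypothetical wrapper, and the `strength` default is only an illustrative value.

```python
def depth_guided_edit(init_image, prompt, negative_prompt=None, strength=0.7):
    """Edit `init_image` with a text prompt while preserving its depth structure."""
    # Imported lazily so that defining this helper needs neither a GPU nor a download.
    import torch
    from diffusers import StableDiffusionDepth2ImgPipeline

    pipe = StableDiffusionDepth2ImgPipeline.from_pretrained(
        "stabilityai/stable-diffusion-2-depth",  # assumed checkpoint
        torch_dtype=torch.float16,
    ).to("cuda")
    # No `depth_map` is passed here, so the pipeline estimates depth from `init_image`.
    result = pipe(
        prompt=prompt,
        image=init_image,
        negative_prompt=negative_prompt,
        strength=strength,
    )
    return result.images[0]
```

The helper would be called with a PIL image, for example one returned by `diffusers.utils.load_image`, together with a prompt describing the desired output.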

+


+| Pipeline | Supported tasks | Space |
+|---|---|---|
+| StableDiffusion | text-to-image | |
+| StableDiffusionImg2Img | image-to-image | |
+| StableDiffusionInpaint | inpainting | |
+| StableDiffusionDepth2Img | depth-to-image | |
+| StableDiffusionImageVariation | image variation | |
+| StableDiffusionPipelineSafe | filtered text-to-image | |
+| StableDiffusion2 | text-to-image, inpainting, depth-to-image, super-resolution | |
+| StableDiffusionXL | text-to-image, image-to-image | |
+| StableDiffusionLatentUpscale | super-resolution | |
+| StableDiffusionUpscale | super-resolution | |
+| StableDiffusionLDM3D | text-to-rgb, text-to-depth | |

+

+Whichever way you choose to contribute, we strive to be part of an open, welcoming, and kind community. Please read our [code of conduct](https://github.com/huggingface/diffusers/blob/main/CODE_OF_CONDUCT.md) and be mindful to respect it during your interactions. We also recommend you become familiar with the [ethical guidelines](https://huggingface.co/docs/diffusers/conceptual/ethical_guidelines) that guide our project and ask you to adhere to the same principles of transparency and responsibility.

+

+We enormously value feedback from the community, so please do not be afraid to speak up if you believe you have valuable feedback that can help improve the library - every message, comment, issue, and pull request (PR) is read and considered.

+

+## Overview

+

+You can contribute in many ways ranging from answering questions on issues to adding new diffusion models to

+the core library.

+

+In the following, we give an overview of different ways to contribute, ranked by difficulty in ascending order. All of them are valuable to the community.

+

+* 1. Asking and answering questions on [the Diffusers discussion forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers) or on [Discord](https://discord.gg/G7tWnz98XR).

+* 2. Opening new issues on [the GitHub Issues tab](https://github.com/huggingface/diffusers/issues/new/choose)

+* 3. Answering issues on [the GitHub Issues tab](https://github.com/huggingface/diffusers/issues)

+* 4. Fix a simple issue, marked by the "Good first issue" label, see [here](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22).

+* 5. Contribute to the [documentation](https://github.com/huggingface/diffusers/tree/main/docs/source).

+* 6. Contribute a [Community Pipeline](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3Acommunity-examples)

+* 7. Contribute to the [examples](https://github.com/huggingface/diffusers/tree/main/examples).

+* 8. Fix a more difficult issue, marked by the "Good second issue" label, see [here](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22Good+second+issue%22).

+* 9. Add a new pipeline, model, or scheduler, see ["New Pipeline/Model"](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+pipeline%2Fmodel%22) and ["New scheduler"](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+scheduler%22) issues. For this contribution, please have a look at [Design Philosophy](https://github.com/huggingface/diffusers/blob/main/PHILOSOPHY.md).

+

+As said before, **all contributions are valuable to the community**.

+In the following, we will explain each contribution a bit more in detail.

+

+For all contributions 4.-9., you will need to open a PR. How to do so is explained in detail in [Opening a pull request](#how-to-open-a-pr).

+

+### 1. Asking and answering questions on the Diffusers discussion forum or on the Diffusers Discord

+

+Any question or comment related to the Diffusers library can be asked on the [discussion forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/) or on [Discord](https://discord.gg/G7tWnz98XR). Such questions and comments include (but are not limited to):

+- Reports of training or inference experiments in an attempt to share knowledge

+- Presentation of personal projects

+- Questions about non-official training examples

+- Project proposals

+- General feedback

+- Paper summaries

+- Asking for help on personal projects that build on top of the Diffusers library

+- General questions

+- Ethical questions regarding diffusion models

+- ...

+

+Every question that is asked on the forum or on Discord actively encourages the community to publicly share knowledge and might very well help a beginner in the future who has the same question you have. Please do pose any questions you might have.

+In the same spirit, you are of immense help to the community by answering such questions because this way you are publicly documenting knowledge for everybody to learn from.

+

+**Please** keep in mind that the more effort you put into asking or answering a question, the higher

+the quality of the publicly documented knowledge. In the same way, well-posed and well-answered questions create a high-quality knowledge database accessible to everybody, while badly posed questions or answers reduce the overall quality of the public knowledge database.

+In short, a high-quality question or answer is *precise*, *concise*, *relevant*, *easy-to-understand*, *accessible*, and *well-formatted/well-posed*. For more information, please have a look through the [How to write a good issue](#how-to-write-a-good-issue) section.

+

+**NOTE about channels**:

+[*The forum*](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) is much better indexed by search engines, such as Google. Posts are ranked by popularity rather than chronologically. Hence, it's easier to look up questions and answers that were posted some time ago.

+In addition, questions and answers posted in the forum can easily be linked to.

+In contrast, *Discord* has a chat-like format that invites fast back-and-forth communication.

+While it will most likely take less time for you to get an answer to your question on Discord, your

+question won't be visible anymore over time. Also, it's much harder to find information that was posted a while back on Discord. We therefore strongly recommend using the forum for high-quality questions and answers in an attempt to create long-lasting knowledge for the community. If discussions on Discord lead to very interesting answers and conclusions, we recommend posting the results on the forum to make the information more available for future readers.

+

+### 2. Opening new issues on the GitHub issues tab

+

+The 🧨 Diffusers library is robust and reliable thanks to the users who notify us of

+the problems they encounter. So thank you for reporting an issue.

+

+Remember, GitHub issues are reserved for technical questions directly related to the Diffusers library, bug reports, feature requests, or feedback on the library design.

+

+In a nutshell, this means that everything that is **not** related to the **code of the Diffusers library** (including the documentation) should **not** be asked on GitHub, but rather on either the [forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) or [Discord](https://discord.gg/G7tWnz98XR).

+

+**Please consider the following guidelines when opening a new issue**:

+- Make sure you have searched whether your issue has already been asked before (use the search bar on GitHub under Issues).

+- Please never report a new issue on another (related) issue. If another issue is highly related, please

+open a new issue nevertheless and link to the related issue.

+- Make sure your issue is written in English. Please use one of the great, free online translation services, such as [DeepL](https://www.deepl.com/translator) to translate from your native language to English if you are not comfortable in English.

+- Check whether your issue might be solved by updating to the newest Diffusers version. Before posting your issue, please make sure that the version printed by `python -c "import diffusers; print(diffusers.__version__)"` matches or is higher than the latest Diffusers release.

+- Remember that the more effort you put into opening a new issue, the higher the quality of your answer will be and the better the overall quality of the Diffusers issues.
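The version check from the guidelines above can be sketched in a few lines of Python. This is an illustrative helper, not part of Diffusers: `release_tuple` and `is_up_to_date` are hypothetical names, the version strings in the example are made up, and you would still need to look up the latest release yourself (for example on PyPI).

```python
import re
from typing import Tuple


def release_tuple(version: str) -> Tuple[int, ...]:
    """Return the leading numeric components, e.g. '0.19.3' -> (0, 19, 3)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"not a version string: {version!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def is_up_to_date(installed: str, latest: str) -> bool:
    """True if `installed` is at least as new as `latest` (suffixes like '.dev0' are ignored)."""
    a, b = release_tuple(installed), release_tuple(latest)
    width = max(len(a), len(b))
    # Pad with zeros so that '0.19' and '0.19.0' compare as equal.
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


# Made-up version numbers, for illustration only.
print(is_up_to_date("0.18.2", "0.19.3"))
```

In practice, `installed` would come from `diffusers.__version__`. The comparison is deliberately crude; a dedicated version-parsing library handles pre-releases more carefully.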

+

+New issues usually include the following.

+

+#### 2.1. Reproducible, minimal bug reports.

+

+A bug report should always have a reproducible code snippet and be as minimal and concise as possible.

+This means in more detail:

+- Narrow the bug down as much as you can, **do not just dump your whole code file**

+- Format your code

+- Do not include any external libraries other than Diffusers and the libraries it depends on.

+- **Always** provide all necessary information about your environment; for this, you can run: `diffusers-cli env` in your shell and copy-paste the displayed information to the issue.

+- Explain the issue. If the reader doesn't know what the issue is and why it is an issue, they cannot solve it.

+- **Always** make sure the reader can reproduce your issue with as little effort as possible. If your code snippet cannot be run because of missing libraries or undefined variables, the reader cannot help you. Make sure your reproducible code snippet is as minimal as possible and can be copy-pasted into a simple Python shell.

+- If in order to reproduce your issue a model and/or dataset is required, make sure the reader has access to that model or dataset. You can always upload your model or dataset to the [Hub](https://huggingface.co) to make it easily downloadable. Try to keep your model and dataset as small as possible, to make the reproduction of your issue as effortless as possible.
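If `diffusers-cli env` is not available in your environment, a comparable summary can be collected with the standard library alone. The snippet below is a fallback sketch under that assumption: `collect_environment` is a hypothetical helper, the default package list is only a guess at what is relevant, and its output does not replicate the CLI's report.

```python
import platform
import sys
from importlib import metadata
from typing import Dict, Sequence


def collect_environment(
    packages: Sequence[str] = ("diffusers", "torch", "transformers"),
) -> Dict[str, str]:
    """Gather Python, platform, and package versions for a bug report."""
    info = {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
    }
    for name in packages:
        try:
            info[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            # Report missing packages explicitly instead of failing.
            info[name] = "not installed"
    return info


for key, value in collect_environment().items():
    print(f"{key}: {value}")
```

Pasting this output next to a short, self-contained reproduction script gives the reader most of what they need to rerun your code.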

+

+For more information, please have a look through the [How to write a good issue](#how-to-write-a-good-issue) section.

+

+You can open a bug report [here](https://github.com/huggingface/diffusers/issues/new/choose).

+

+#### 2.2. Feature requests.

+

+A world-class feature request addresses the following points:

+

+1. Motivation first:

+* Is it related to a problem/frustration with the library? If so, please explain

+why. Providing a code snippet that demonstrates the problem is best.

+* Is it related to something you would need for a project? We'd love to hear

+about it!

+* Is it something you worked on and think could benefit the community?

+Awesome! Tell us what problem it solved for you.

+2. Write a *full paragraph* describing the feature;

+3. Provide a **code snippet** that demonstrates its future use;

+4. In case this is related to a paper, please attach a link;

+5. Attach any additional information (drawings, screenshots, etc.) you think may help.

+

+You can open a feature request [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=&template=feature_request.md&title=).

+

+#### 2.3 Feedback.

+

+Feedback about the library design and why it is good or not good helps the core maintainers immensely to build a user-friendly library. To understand the philosophy behind the current design, please have a look [here](https://huggingface.co/docs/diffusers/conceptual/philosophy). If you feel like a certain design choice does not fit with the current design philosophy, please explain why and how it should be changed. If a certain design choice follows the design philosophy too strictly and thereby restricts use cases, explain why and how it should be changed.

+If a certain design choice is very useful for you, please also leave a note as this is great feedback for future design decisions.

+

+You can open an issue about feedback [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=&template=feedback.md&title=).

+

+#### 2.4 Technical questions.

+

+Technical questions are mainly about why certain code of the library was written in a certain way, or what a certain part of the code does. Please make sure to link to the code in question and please provide detail on

+why this part of the code is difficult to understand.

+

+You can open an issue about a technical question [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=bug&template=bug-report.yml).

+

+#### 2.5 Proposal to add a new model, scheduler, or pipeline.

+

+If the diffusion model community released a new model, pipeline, or scheduler that you would like to see in the Diffusers library, please provide the following information:

+

+* Short description of the diffusion pipeline, model, or scheduler and link to the paper or public release.

+* Link to any of its open-source implementations.

+* Link to the model weights if they are available.

+

+If you are willing to contribute to the model yourself, let us know so we can best guide you. Also, don't forget

+to tag the original author of the component (model, scheduler, pipeline, etc.) by GitHub handle if you can find it.

+

+You can open a request for a model/pipeline/scheduler [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=New+model%2Fpipeline%2Fscheduler&template=new-model-addition.yml).

+

+### 3. Answering issues on the GitHub issues tab

+

+Answering issues on GitHub might require some technical knowledge of Diffusers, but we encourage everybody to give it a try even if you are not 100% certain that your answer is correct.

+Some tips to give a high-quality answer to an issue:

+- Be as concise and minimal as possible

+- Stay on topic. An answer to the issue should concern the issue and only the issue.

+- Provide links to code, papers, or other sources that support your point.

+- Answer in code. If a simple code snippet is the answer to the issue or shows how the issue can be solved, please provide a fully reproducible code snippet.

+

+Also, many issues tend to be simply off-topic, duplicates of other issues, or irrelevant. It is of great help to the maintainers if you can answer such issues by encouraging the author of the issue to be more precise, providing the link to a duplicated issue, or redirecting them to [the forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) or [Discord](https://discord.gg/G7tWnz98XR).

+

+If you have verified that the reported bug is real and requires a correction in the source code, please have a look at the next sections.

+

+For all of the following contributions, you will need to open a PR. How to do so is explained in detail in the [Opening a pull request](#how-to-open-a-pr) section.

+

+### 4. Fixing a `Good first issue`

+

+*Good first issues* are marked by the [Good first issue](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22) label. Usually, the issue already

+explains how a potential solution should look so that it is easier to fix.

+If the issue hasn't been closed and you would like to try to fix this issue, you can just leave a message saying "I would like to try this issue." There are usually three scenarios:

+- a.) The issue description already proposes a fix. In this case and if the solution makes sense to you, you can open a PR or draft PR to fix it.

+- b.) The issue description does not propose a fix. In this case, you can ask what a proposed fix could look like and someone from the Diffusers team should answer shortly. If you have a good idea of how to fix it, feel free to directly open a PR.

+- c.) There is already an open PR to fix the issue, but the issue hasn't been closed yet. If the PR has gone stale, you can simply open a new PR and link to the stale PR. PRs often go stale if the original contributor who wanted to fix the issue suddenly cannot find the time anymore to proceed. This often happens in open-source and is very normal. In this case, the community will be very happy if you give it a new try and leverage the knowledge of the existing PR. If there is already a PR and it is active, you can help the author by giving suggestions, reviewing the PR or even asking whether you can contribute to the PR.

+

+

+### 5. Contribute to the documentation

+

+A good library **always** has good documentation! The official documentation is often one of the first points of contact for new users of the library, and therefore contributing to the documentation is a **highly valuable contribution**.

+

+Contributing to the documentation can take many forms:

+

+- Correcting spelling or grammatical errors.

+- Correcting incorrect formatting of the docstring. If you see that the official documentation is weirdly displayed or a link is broken, we are very happy if you take some time to correct it.

+- Correcting the shape or dimensions of a docstring input or output tensor.

+- Clarifying documentation that is hard to understand or incorrect.

+- Updating outdated code examples.

+- Translating the documentation to another language.

+

+Anything displayed on [the official Diffusers doc page](https://huggingface.co/docs/diffusers/index) is part of the official documentation and can be corrected or adjusted in the respective [documentation source](https://github.com/huggingface/diffusers/tree/main/docs/source).

+

+Please have a look at [this page](https://github.com/huggingface/diffusers/tree/main/docs) on how to verify changes made to the documentation locally.

+

+

+### 6. Contribute a community pipeline

+

+[Pipelines](https://huggingface.co/docs/diffusers/api/pipelines/overview) are usually the first point of contact between the Diffusers library and the user.

+Pipelines are examples of how to use Diffusers [models](https://huggingface.co/docs/diffusers/api/models) and [schedulers](https://huggingface.co/docs/diffusers/api/schedulers/overview).

+We support two types of pipelines:

+

+- Official Pipelines

+- Community Pipelines

+

+Both official and community pipelines follow the same design and consist of the same type of components.

+

+Official pipelines are tested and maintained by the core maintainers of Diffusers. Their code

+resides in [src/diffusers/pipelines](https://github.com/huggingface/diffusers/tree/main/src/diffusers/pipelines).

+In contrast, community pipelines are contributed and maintained purely by the **community** and are **not** tested.

+They reside in [examples/community](https://github.com/huggingface/diffusers/tree/main/examples/community) and while they can be accessed via the [PyPI diffusers package](https://pypi.org/project/diffusers/), their code is not part of the PyPI distribution.

+

+The reason for the distinction is that the core maintainers of the Diffusers library cannot maintain and test all

+possible ways diffusion models can be used for inference, but some of them may be of interest to the community.

+Officially released diffusion pipelines, such as Stable Diffusion, are added to the core src/diffusers/pipelines package, which ensures high quality of maintenance, no backward-breaking code changes, and testing.

+More bleeding-edge pipelines should be added as community pipelines. If usage for a community pipeline is high, the pipeline can be moved to the official pipelines upon request from the community. This is one of the ways we strive to be a community-driven library.

+

+To add a community pipeline, one should add a

+

+Whichever way you choose to contribute, we strive to be part of an open, welcoming, and kind community. Please, read our [code of conduct](https://github.com/huggingface/diffusers/blob/main/CODE_OF_CONDUCT.md) and be mindful to respect it during your interactions. We also recommend you become familiar with the [ethical guidelines](https://huggingface.co/docs/diffusers/conceptual/ethical_guidelines) that guide our project and ask you to adhere to the same principles of transparency and responsibility.

+

+We enormously value feedback from the community, so please do not be afraid to speak up if you believe you have valuable feedback that can help improve the library - every message, comment, issue, and pull request (PR) is read and considered.

+

+## Overview

+

+You can contribute in many ways ranging from answering questions on issues to adding new diffusion models to

+the core library.

+

+In the following, we give an overview of different ways to contribute, ranked by difficulty in ascending order. All of them are valuable to the community.

+

+* 1. Asking and answering questions on [the Diffusers discussion forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers) or on [Discord](https://discord.gg/G7tWnz98XR).

+* 2. Opening new issues on [the GitHub Issues tab](https://github.com/huggingface/diffusers/issues/new/choose)

+* 3. Answering issues on [the GitHub Issues tab](https://github.com/huggingface/diffusers/issues)

+* 4. Fix a simple issue, marked by the "Good first issue" label, see [here](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22).

+* 5. Contribute to the [documentation](https://github.com/huggingface/diffusers/tree/main/docs/source).

+* 6. Contribute a [Community Pipeline](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3Acommunity-examples)

+* 7. Contribute to the [examples](https://github.com/huggingface/diffusers/tree/main/examples).

+* 8. Fix a more difficult issue, marked by the "Good second issue" label, see [here](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22Good+second+issue%22).

+* 9. Add a new pipeline, model, or scheduler, see ["New Pipeline/Model"](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+pipeline%2Fmodel%22) and ["New scheduler"](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+scheduler%22) issues. For this contribution, please have a look at [Design Philosophy](https://github.com/huggingface/diffusers/blob/main/PHILOSOPHY.md).

+

+As said before, **all contributions are valuable to the community**.

+In the following, we will explain each contribution a bit more in detail.

+

+For all contributions 4.-9. you will need to open a PR. It is explained in detail how to do so in [Opening a pull requst](#how-to-open-a-pr)

+

+### 1. Asking and answering questions on the Diffusers discussion forum or on the Diffusers Discord

+

+Any question or comment related to the Diffusers library can be asked on the [discussion forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/) or on [Discord](https://discord.gg/G7tWnz98XR). Such questions and comments include (but are not limited to):

+- Reports of training or inference experiments in an attempt to share knowledge

+- Presentation of personal projects

+- Questions to non-official training examples

+- Project proposals

+- General feedback

+- Paper summaries

+- Asking for help on personal projects that build on top of the Diffusers library

+- General questions

+- Ethical questions regarding diffusion models

+- ...

+

+Every question that is asked on the forum or on Discord actively encourages the community to publicly

+share knowledge and might very well help a beginner in the future that has the same question you're

+having. Please do pose any questions you might have.

+In the same spirit, you are of immense help to the community by answering such questions because this way you are publicly documenting knowledge for everybody to learn from.

+

+**Please** keep in mind that the more effort you put into asking or answering a question, the higher

+the quality of the publicly documented knowledge. In the same way, well-posed and well-answered questions create a high-quality knowledge database accessible to everybody, while badly posed questions or answers reduce the overall quality of the public knowledge database.

+In short, a high quality question or answer is *precise*, *concise*, *relevant*, *easy-to-understand*, *accesible*, and *well-formated/well-posed*. For more information, please have a look through the [How to write a good issue](#how-to-write-a-good-issue) section.

+

+**NOTE about channels**:

+[*The forum*](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) is much better indexed by search engines, such as Google. Posts are ranked by popularity rather than chronologically. Hence, it's easier to look up questions and answers that we posted some time ago.

+In addition, questions and answers posted in the forum can easily be linked to.

+In contrast, *Discord* has a chat-like format that invites fast back-and-forth communication.

+While it will most likely take less time for you to get an answer to your question on Discord, your

+question won't be visible anymore over time. Also, it's much harder to find information that was posted a while back on Discord. We therefore strongly recommend using the forum for high-quality questions and answers in an attempt to create long-lasting knowledge for the community. If discussions on Discord lead to very interesting answers and conclusions, we recommend posting the results on the forum to make the information more available for future readers.

+

+### 2. Opening new issues on the GitHub issues tab

+

+The 🧨 Diffusers library is robust and reliable thanks to the users who notify us of

+the problems they encounter. So thank you for reporting an issue.

+

+Remember, GitHub issues are reserved for technical questions directly related to the Diffusers library, bug reports, feature requests, or feedback on the library design.

+

+In a nutshell, this means that everything that is **not** related to the **code of the Diffusers library** (including the documentation) should **not** be asked on GitHub, but rather on either the [forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) or [Discord](https://discord.gg/G7tWnz98XR).

+

+**Please consider the following guidelines when opening a new issue**:

+- Make sure you have searched whether your issue has already been reported (use the search bar on GitHub under Issues).

+- Please never report a new issue in the comments of another (related) issue. If another issue is highly related, please open a new issue nevertheless and link to the related issue.

+- Make sure your issue is written in English. Please use one of the great, free online translation services, such as [DeepL](https://www.deepl.com/translator) to translate from your native language to English if you are not comfortable in English.

+- Check whether your issue might be solved by updating to the newest Diffusers version. Before posting your issue, please make sure that `python -c "import diffusers; print(diffusers.__version__)"` prints a version that matches or is higher than the latest released Diffusers version.

+- Remember that the more effort you put into opening a new issue, the higher the quality of your answer will be and the better the overall quality of the Diffusers issues.
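The version check from the guidelines above can also be scripted. The following is a minimal sketch and not part of Diffusers: `release_tuple` and `is_up_to_date` are hypothetical helper names, and the latest released version is assumed to be looked up by hand (for example, on the PyPI project page). Pre-release suffixes such as `.dev0` are ignored, which is a deliberate simplification.

```python
import re


def release_tuple(version: str) -> tuple:
    """Return the leading numeric release components, e.g. "0.21.0.dev0" -> (0, 21, 0)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"Unrecognized version string: {version!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def is_up_to_date(installed: str, latest: str) -> bool:
    """Return True if the installed release is equal to or newer than the latest release.

    Simplification: suffixes such as ".dev0" or "rc1" are ignored, so a
    development build compares equal to the release with the same numbers.
    """
    a, b = release_tuple(installed), release_tuple(latest)
    width = max(len(a), len(b))
    # Pad the shorter tuple with zeros so that "0.21" and "0.21.0" compare equal.
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b
```

The first argument would typically be the string printed by the `diffusers.__version__` command shown above; if the function returns `False`, upgrading before opening an issue is worth a try.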

+

+New issues usually fall into one of the following categories.

+

+#### 2.1. Reproducible, minimal bug reports.

+

+A bug report should always have a reproducible code snippet and be as minimal and concise as possible.

+In more detail, this means:

+- Narrow the bug down as much as you can, **do not just dump your whole code file**

+- Format your code

+- Do not include any external libraries other than Diffusers and the libraries it depends on.

+- **Always** provide all necessary information about your environment; for this, you can run: `diffusers-cli env` in your shell and copy-paste the displayed information to the issue.

+- Explain the issue. If the reader doesn't know what the issue is and why it is an issue, she cannot solve it.

+- **Always** make sure the reader can reproduce your issue with as little effort as possible. If your code snippet cannot be run because of missing libraries or undefined variables, the reader cannot help you. Make sure your reproducible code snippet is as minimal as possible and can be copy-pasted into a simple Python shell.

+- If in order to reproduce your issue a model and/or dataset is required, make sure the reader has access to that model or dataset. You can always upload your model or dataset to the [Hub](https://huggingface.co) to make it easily downloadable. Try to keep your model and dataset as small as possible, to make the reproduction of your issue as effortless as possible.
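`diffusers-cli env` remains the recommended way to collect environment details. If the CLI is unavailable (for example, because the bug is that Diffusers fails to install or import), a comparable summary can be assembled by hand. The sketch below uses only the Python standard library; `collect_env_info` and its default package list are illustrative assumptions and do not reproduce what `diffusers-cli env` actually prints.

```python
import platform
import sys
from importlib import metadata


def collect_env_info(packages=("diffusers", "torch", "transformers", "accelerate")):
    """Return a dict describing the platform, Python, and selected package versions.

    The default package list is only an example; adjust it to the libraries
    that your reproduction snippet imports.
    """
    info = {
        "platform": platform.platform(),
        "python": sys.version.split()[0],
    }
    for name in packages:
        try:
            info[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            # Report missing packages explicitly instead of failing.
            info[name] = "not installed"
    return info


if __name__ == "__main__":
    # Print a Markdown list that can be pasted into the issue.
    for key, value in collect_env_info().items():
        print(f"- {key}: {value}")
```

Pasting this output next to the reproduction snippet gives the reader the same kind of context that the CLI report would.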

+

+For more information, please have a look through the [How to write a good issue](#how-to-write-a-good-issue) section.

+

+You can open a bug report [here](https://github.com/huggingface/diffusers/issues/new/choose).

+

+#### 2.2. Feature requests.

+

+A world-class feature request addresses the following points:

+

+1. Motivation first:

+   * Is it related to a problem/frustration with the library? If so, please explain why. Providing a code snippet that demonstrates the problem is best.

+   * Is it related to something you would need for a project? We'd love to hear about it!

+   * Is it something you worked on and think could benefit the community? Awesome! Tell us what problem it solved for you.

+2. Write a *full paragraph* describing the feature;

+3. Provide a **code snippet** that demonstrates its future use;

+4. In case this is related to a paper, please attach a link;

+5. Attach any additional information (drawings, screenshots, etc.) you think may help.

+

+You can open a feature request [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=&template=feature_request.md&title=).

+

+#### 2.3. Feedback.

+

+Feedback about the library design and why it is good or not good helps the core maintainers immensely to build a user-friendly library. To understand the philosophy behind the current design, please have a look [here](https://huggingface.co/docs/diffusers/conceptual/philosophy). If you feel like a certain design choice does not fit with the current design philosophy, please explain why and how it should be changed. If a certain design choice follows the design philosophy too strictly and thereby restricts use cases, explain why and how it should be changed.

+If a certain design choice is very useful for you, please also leave a note as this is great feedback for future design decisions.

+

+You can open an issue about feedback [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=&template=feedback.md&title=).

+

+#### 2.4. Technical questions.

+

+Technical questions are mainly about why certain code of the library was written in a certain way, or what a certain part of the code does. Please make sure to link to the code in question and please provide detail on

+why this part of the code is difficult to understand.

+

+You can open an issue about a technical question [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=bug&template=bug-report.yml).

+

+#### 2.5. Proposal to add a new model, scheduler, or pipeline.

+

+If the diffusion model community released a new model, pipeline, or scheduler that you would like to see in the Diffusers library, please provide the following information:

+

+* Short description of the diffusion pipeline, model, or scheduler and link to the paper or public release.

+* Link to any of its open-source implementations.

+* Link to the model weights if they are available.

+

+If you are willing to contribute to the model yourself, let us know so we can best guide you. Also, don't forget

+to tag the original author of the component (model, scheduler, pipeline, etc.) by GitHub handle if you can find it.

+

+You can open a request for a model/pipeline/scheduler [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=New+model%2Fpipeline%2Fscheduler&template=new-model-addition.yml).

+

+### 3. Answering issues on the GitHub issues tab

+

+Answering issues on GitHub might require some technical knowledge of Diffusers, but we encourage everybody to give it a try even if you are not 100% certain that your answer is correct.

+Some tips to give a high-quality answer to an issue:

+- Be as concise and minimal as possible

+- Stay on topic. An answer to the issue should concern the issue and only the issue.

+- Provide links to code, papers, or other sources that prove or support your point.

+- Answer in code. If a simple code snippet is the answer to the issue or shows how the issue can be solved, please provide a fully reproducible code snippet.

+

+Also, many issues tend to be simply off-topic, duplicates of other issues, or irrelevant. It is of great help to the maintainers if you can answer such issues by encouraging the author to be more precise, providing the link to the duplicate issue, or redirecting them to [the forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) or [Discord](https://discord.gg/G7tWnz98XR).

+

+If you have verified that the reported bug is valid and requires a correction in the source code, please have a look at the next sections.

+

+For all of the following contributions, you will need to open a PR. The [Opening a pull request](#how-to-open-a-pr) section explains in detail how to do so.

+

+### 4. Fixing a `Good first issue`

+

+*Good first issues* are marked by the [Good first issue](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22) label. Usually, the issue already

+explains how a potential solution should look so that it is easier to fix.

+If the issue hasn't been closed and you would like to try to fix it, you can just leave a message such as "I would like to try this issue". There are usually three scenarios:

+- a.) The issue description already proposes a fix. In this case and if the solution makes sense to you, you can open a PR or draft PR to fix it.

+- b.) The issue description does not propose a fix. In this case, you can ask what a proposed fix could look like and someone from the Diffusers team should answer shortly. If you have a good idea of how to fix it, feel free to directly open a PR.

+- c.) There is already an open PR to fix the issue, but the issue hasn't been closed yet. If the PR has gone stale, you can simply open a new PR and link to the stale PR. PRs often go stale if the original contributor who wanted to fix the issue suddenly cannot find the time anymore to proceed. This often happens in open-source and is very normal. In this case, the community will be very happy if you give it a new try and leverage the knowledge of the existing PR. If there is already a PR and it is active, you can help the author by giving suggestions, reviewing the PR or even asking whether you can contribute to the PR.

+

+

+### 5. Contribute to the documentation

+