license: apache-2.0

language:

- ar

datasets:

- MIRACL

tags:

- miniDense

- passage-retrieval

- knowledge-distillation

- middle-training

- sentence-transformers

pretty_name: >-

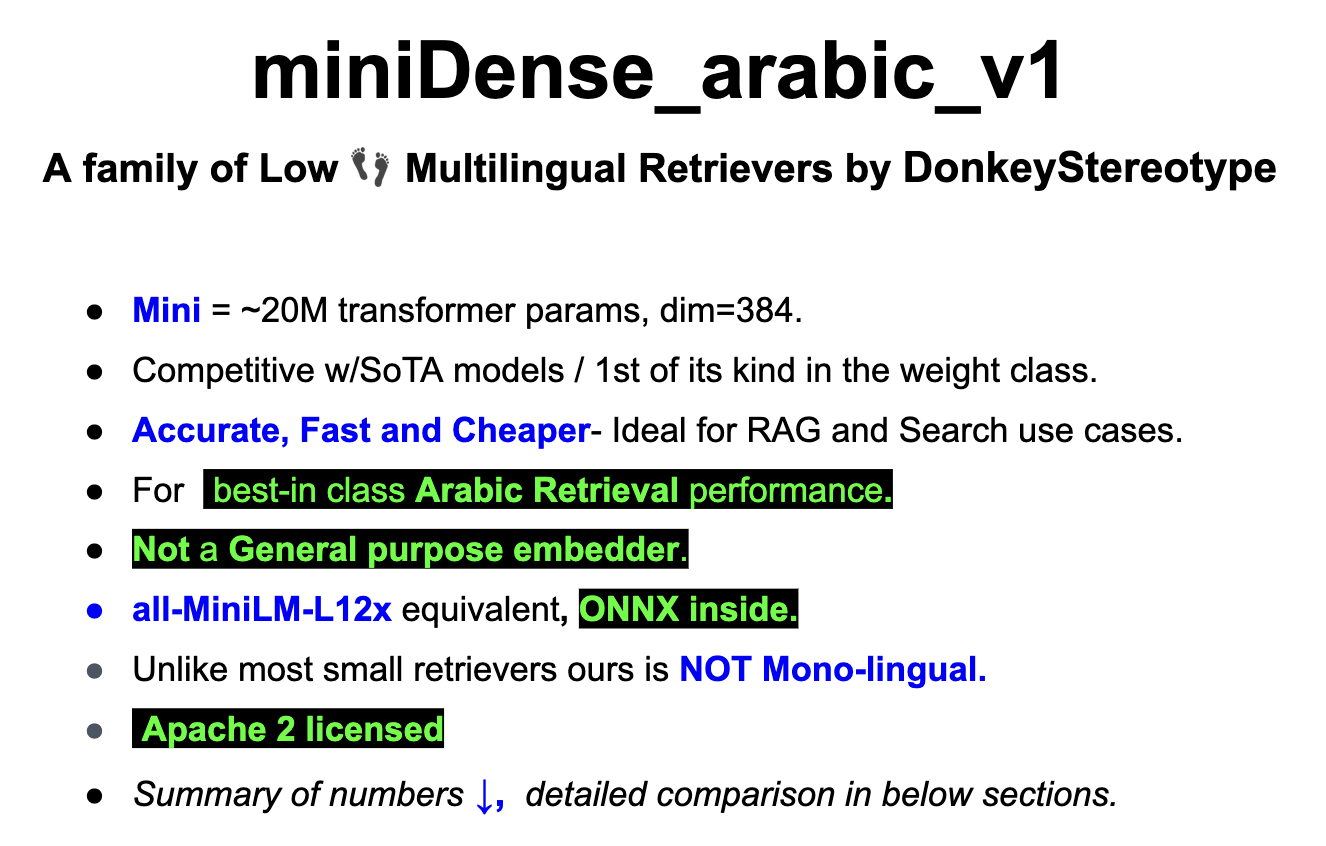

miniDense is a family of High-quality, Light Weight and Easy deploy

multilingual embedders / retrievers, primarily focussed on Indo-Aryan and

Indo-Dravidian Languages.

library_name: transformers

pipeline_tag: sentence-similarity

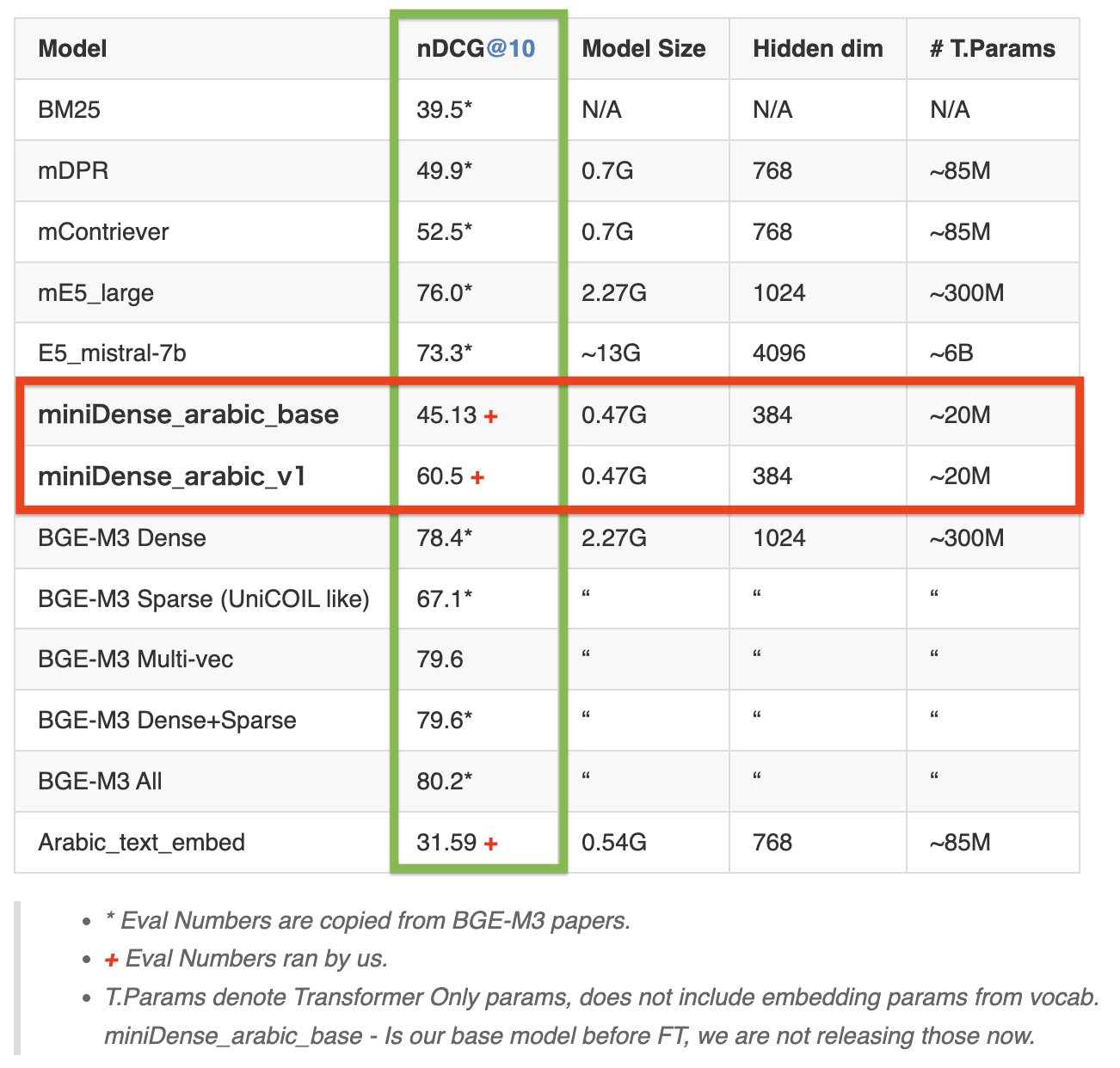

Table 1: Arabic retrieval performance on the MIRACL dev set (measured by nDCG@10)

Architecture:

- Model: BERT.

- Tokenizer: XLM-Roberta's Tokenizer.

- Vocab: 250K

Table Of Contents

- Request, Terms, Disclaimers:

- Detailed comparison & Our Contribution:

- ONNX & GGUF Status:

- Usage:

- FAQs

- MTEB numbers

- Roadmap

- Notes on Reproducing:

- Reference:

- Note on model bias

Request, Terms, Disclaimers:

https://github.com/sponsors/PrithivirajDamodaran

Detailed comparison & Our Contribution:

English language famously have all-minilm series models which were great for quick experimentations and for certain production workloads. The Idea is to have same for the other popular langauges, starting with Indo-Aryan and Indo-Dravidian languages. Our innovation is in bringing high quality models which easy to serve and embeddings are cheaper to store without ANY pretraining or expensive finetuning. For instance, all-minilm are finetuned on 1-Billion pairs. We offer a very lean model but with a huge vocabulary - around 250K. We will add more details here.

Table 2: Detailed Arabic retrieval performance on the MIRACL dev set (measured by nDCG@10)

Full set of evaluation numbers for our model

{'NDCG@1': 0.50449, 'NDCG@3': 0.52437, 'NDCG@5': 0.55649, 'NDCG@10': 0.60599, 'NDCG@100': 0.64745, 'NDCG@1000': 0.65717}

{'MAP@1': 0.34169, 'MAP@3': 0.45784, 'MAP@5': 0.48922, 'MAP@10': 0.51316, 'MAP@100': 0.53012, 'MAP@1000': 0.53069}

{'Recall@10': 0.72479, 'Recall@50': 0.87686, 'Recall@100': 0.91178, 'Recall@200': 0.93593, 'Recall@500': 0.96254, 'Recall@1000': 0.97557}

{'P@1': 0.50449, 'P@3': 0.29604, 'P@5': 0.21581, 'P@10': 0.13149, 'P@100': 0.01771, 'P@1000': 0.0019}

{'MRR@10': 0.61833, 'MRR@100': 0.62314, 'MRR@1000': 0.62329}

ONNX & GGUF Status:

| Variant | Status |

|---|---|

| FP16 ONNX | ✅ |

| GGUF | WIP |

Usage:

With Sentence Transformers:

from sentence_transformers import SentenceTransformer

import scipy.spatial

model = SentenceTransformer('prithivida/miniDense_arabic_v1')

corpus = [

'رجل يأكل',

'الناس يأكلون قطعة خبز',

'فتاة تحمل طفل',

'رجل يركب حصان',

'امرأة تعزف على الجيتار',

'شخصان يدفعان عربة عبر الغابة',

'شخص يركب حصانًا أبيض في حقل مغلق',

'قرد يقرع الطبل',

'فهد يطارد فريسة',

'أكلت النساء بعض سلطات الفاكهة'

]

queries = [

'شخص يأكل المعكرونة',

'شخص يرتدي زي الغوريلا يعزف على الطبل'

]

corpus_embeddings = model.encode(corpus)

query_embeddings = model.encode(queries)

# Find the closest 3 sentences of the corpus for each query sentence based on cosine similarity

closest_n = 3

for query, query_embedding in zip(queries, query_embeddings):

distances = scipy.spatial.distance.cdist([query_embedding], corpus_embeddings, "cosine")[0]

results = zip(range(len(distances)), distances)

results = sorted(results, key=lambda x: x[1])

print("\n======================\n")

print("Query:", query)

print("\nTop 3 most similar sentences in corpus:\n")

for idx, distance in results[0:closest_n]:

print(corpus[idx].strip(), "(Score: %.4f)" % (1-distance))

# Optional: How to quantize the embeddings

# binary_embeddings = quantize_embeddings(embeddings, precision="ubinary")

With Huggingface Transformers:

- T.B.A

FAQs:

How can I reduce overall inference cost?

- You can host these models without heavy torch dependency using the ONNX flavours of these models via FlashEmbed library.

How do I reduce vector storage cost?

Use Binary and Scalar Quantisation

How do I offer hybrid search to improve accuracy?

MIRACL paper shows simply combining BM25 is a good starting point for a Hybrid option: The below numbers are with mDPR model, but miniDense_arabic_v1 should give a even better hybrid performance.

| Language | ISO | nDCG@10 BM25 | nDCG@10 mDPR | nDCG@10 Hybrid |

|---|---|---|---|---|

| Arabic | ar | 0.395 | 0.499 | 0.673 |

Note: MIRACL paper shows a different (higher) value for BM25 Arabic, So we are taking that value from BGE-M3 paper, rest all are form the MIRACL paper.

MTEB Retrieval numbers:

MTEB is a general purpose embedding evaluation benchmark covering wide range of tasks, but miniDense models (like BGE-M3) are predominantly tuned for retireval tasks aimed at search & IR based usecases. So it makes sense to evaluate our models in retrieval slice of the MTEB benchmark.

MIRACL Retrieval

Refer tables above

Sadeem Question Retrieval

Table 3: Detailed Arabic retrieval performance on the SadeemQA eval set (measured by nDCG@10)

Long Document Retrieval

This is very ambitious eval because we have not trained for long context, the max_len was 512 for all the models below except BGE-M3 which had 8192 context and finetuned for long doc.

Table 4: Detailed Arabic retrieval performance on the MultiLongDoc dev set (measured by nDCG@10)

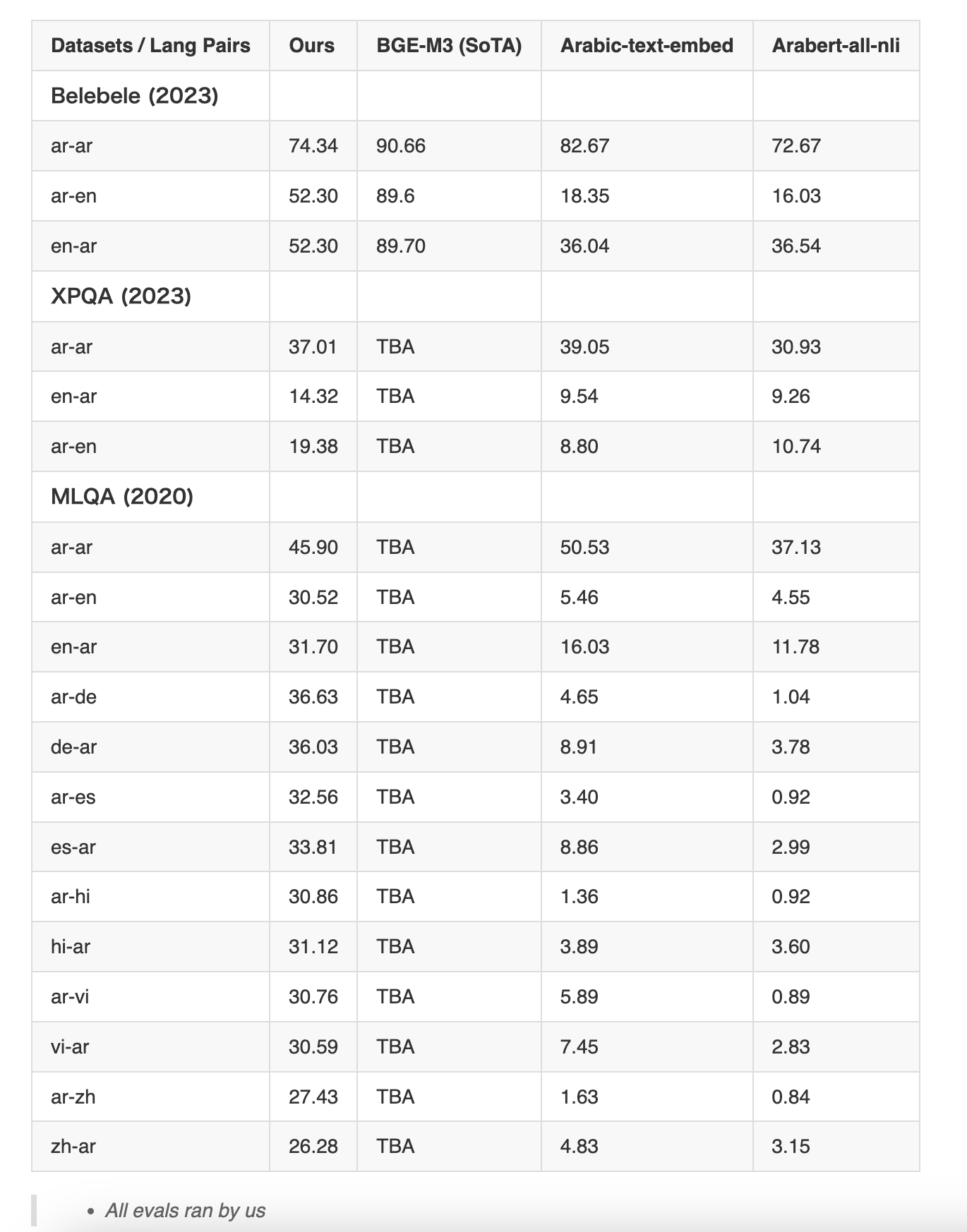

X-lingual Retrieval

Except BGE-M3 all are monolingual arabic models so they have no notion of any other languages. But the below table shows how our model understands arabic in context with other languages. This explains it's overall competitive performance when compared to models that are a LOT larger.

Table 5: Detailed Arabic retrieval performance on the 3 X-lingual test set (measured by nDCG@10)

Roadmap

We will add miniDense series of models for all popular languages as we see fit or based on community requests in phases. Some of the languages we have in our list are

- Spanish

- Tamil

- German

- English ?

Notes on reproducing:

We welcome anyone to reproduce our results. Here are some tips and observations:

- Use CLS Pooling (not mean) and Inner Product (not cosine).

- There may be minor differences in the numbers when reproducing, for instance BGE-M3 reports a nDCG@10 of 59.3 for MIRACL hindi and we Observed only 58.9.

Here are our numbers for the full hindi run on BGE-M3

{'NDCG@1': 0.49714, 'NDCG@3': 0.5115, 'NDCG@5': 0.53908, 'NDCG@10': 0.58936, 'NDCG@100': 0.6457, 'NDCG@1000': 0.65336}

{'MAP@1': 0.28845, 'MAP@3': 0.42424, 'MAP@5': 0.46455, 'MAP@10': 0.49955, 'MAP@100': 0.51886, 'MAP@1000': 0.51933}

{'Recall@10': 0.73032, 'Recall@50': 0.8987, 'Recall@100': 0.93974, 'Recall@200': 0.95763, 'Recall@500': 0.97813, 'Recall@1000': 0.9902}

{'P@1': 0.49714, 'P@3': 0.33048, 'P@5': 0.24629, 'P@10': 0.15543, 'P@100': 0.0202, 'P@1000': 0.00212}

{'MRR@10': 0.60893, 'MRR@100': 0.615, 'MRR@1000': 0.6151}

Fair warning BGE-M3 is $ expensive to evaluate, probably* that's why it's not part of any of the retrieval slice of MTEB benchmarks.

Reference:

- All Cohere numbers are copied form here

- BGE M3-Embedding: Multi-Lingual, Multi-Functionality, Multi-Granularity Text Embeddings Through Self-Knowledge Distillation

- Making a MIRACL: Multilingual Information Retrieval Across a Continuum of Languages

- IndicIRSuite: Multilingual Dataset and Neural Information Models for Indian Languages

Note on model bias:

- Like any model this model might carry inherent biases from the base models and the datasets it was pretrained and finetuned on. Please use responsibly.