
Today, we’re releasing our first pretrained Open Language Models (OLMo) at the Allen Institute for AI (AI2): a set of 7 billion parameter models and one 1 billion parameter variant. This line of work was probably the main reason I joined AI2, and it is the biggest lever I see for enacting meaningful change in how AI is used, studied, and discussed in the short term.

Links at the top because that's what you want:

* Core 7B model: allenai/OLMo-7B

* 7B model twin (different GPU hardware): allenai/OLMo-7B-Twin-2T

* 1B model: allenai/OLMo-1B

* Dataset: allenai/dolma

* Paper (arxiv soon): https://allenai.org/olmo/olmo-paper.pdf

* My personal blog post: https://www.interconnects.ai/p/olmo

OLMo represents a new type of LLM, one that enables new approaches to ML research and deployment, because on a key axis of openness it is something entirely different. OLMo is built so that scientists can develop and execute research directions at every point in the development process, which was previously not possible due to incomplete information and tools.

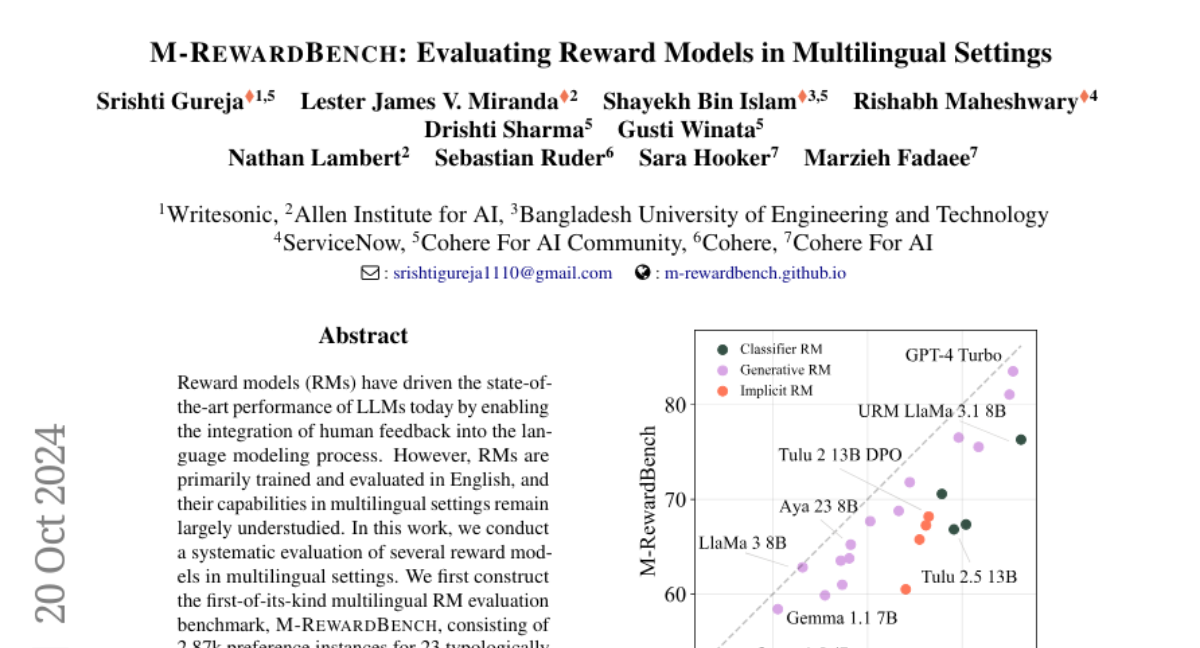

Depending on the evaluation method, OLMo 1 is either the best 7 billion parameter base model available for download or one of the best. This relies on a new way of thinking in which models are judged on their parameter and token budgets together, similar to how scaling laws are measured for LLMs.
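
To make "parameter plus token budget" a bit more concrete, here is a minimal sketch of one way to put models on a common axis. It assumes the widely used rule of thumb from the scaling-law literature that training compute is roughly 6 × parameters × tokens. That approximation, the function name, and every budget below are my illustrative assumptions, not figures or methods from the OLMo paper.

```python
def approx_training_flops(n_params: float, n_tokens: float) -> float:
    """Rough training compute using the common C ~= 6 * N * D rule of thumb.

    This is an assumed approximation for illustration; it ignores
    architecture details such as attention cost and sequence length.
    """
    return 6.0 * n_params * n_tokens


# Hypothetical (parameter count, training tokens) budgets, for illustration only.
budgets = {
    "model_a (7B params, 2T tokens)": (7e9, 2e12),
    "model_b (7B params, 1T tokens)": (7e9, 1e12),
    "model_c (1B params, 2T tokens)": (1e9, 2e12),
}

for name, (n_params, n_tokens) in budgets.items():
    flops = approx_training_flops(n_params, n_tokens)
    print(f"{name}: ~{flops:.2e} training FLOPs")
```

Two models with the same parameter count can land far apart on this axis, which is why comparing "7B models" by size alone can be misleading.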

We're just getting started, so please help us learn how to be more scientific with LLMs!
