---
language:
- en
license: apache-2.0
library_name: transformers
tags:
- code
datasets:
- Intel/orca_dpo_pairs
model-index:
- name: Orca-SOLAR-4x10.7b
  results:
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: AI2 Reasoning Challenge (25-Shot)
      type: ai2_arc
      config: ARC-Challenge
      split: test
      args:
        num_few_shot: 25
    metrics:
    - type: acc_norm
      value: 68.52
      name: normalized accuracy
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: HellaSwag (10-Shot)
      type: hellaswag
      split: validation
      args:
        num_few_shot: 10
    metrics:
    - type: acc_norm
      value: 86.78
      name: normalized accuracy
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: MMLU (5-Shot)
      type: cais/mmlu
      config: all
      split: test
      args:
        num_few_shot: 5
    metrics:
    - type: acc
      value: 67.03
      name: accuracy
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: TruthfulQA (0-shot)
      type: truthful_qa
      config: multiple_choice
      split: validation
      args:
        num_few_shot: 0
    metrics:
    - type: mc2
      value: 64.54
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: Winogrande (5-shot)
      type: winogrande
      config: winogrande_xl
      split: validation
      args:
        num_few_shot: 5
    metrics:
    - type: acc
      value: 83.9
      name: accuracy
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: GSM8k (5-shot)
      type: gsm8k
      config: main
      split: test
      args:
        num_few_shot: 5
    metrics:
    - type: acc
      value: 68.23
      name: accuracy
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b
      name: Open LLM Leaderboard
---

🌞🚀 Orca-SOLAR-4x10.7_36B

Merge of four Solar-10.7B instruct finetunes.

🌟 Usage

This SOLAR model loves to code. In my experience, if you ask it a code question, it will use almost all of the available token limit to complete the code.

However, this can also work to its detriment. If the request is complex, it may not finish the code within the available limit. This behavior is not caused by an EOS token problem, as the model finishes its sentences normally when the question is not about code.

Your mileage may vary.
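One way to tell whether a response was cut off by the token budget, rather than finished naturally, is to look for an EOS token among the newly generated token IDs. The sketch below is a minimal illustration under that assumption and is not part of the model's tooling; `hit_token_limit` and its parameter names are hypothetical.

```python
def hit_token_limit(new_token_ids, max_new_tokens, eos_token_id):
    """Return True if generation likely stopped because the budget ran out.

    new_token_ids: list of generated token IDs, excluding the prompt tokens.
    max_new_tokens: the budget that was passed to generation.
    eos_token_id: the tokenizer's end-of-sequence token ID.
    """
    # Fewer tokens than the budget means the model stopped on its own.
    if len(new_token_ids) < max_new_tokens:
        return False
    # The budget was used up; it is only a clean finish if EOS was emitted.
    return eos_token_id not in new_token_ids


# Hypothetical token IDs, with 2 standing in for the EOS token.
print(hit_token_limit([5, 6, 7], max_new_tokens=3, eos_token_id=2))
print(hit_token_limit([5, 6, 2], max_new_tokens=3, eos_token_id=2))
```

With `transformers`, the new tokens for a single prompt can typically be taken as `outputs[0][inputs["input_ids"].shape[1]:].tolist()`, since `generate` returns the prompt followed by the continuation for decoder-only models. If the check returns True for a code request, raising `max_new_tokens` is the obvious first thing to try.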

🌎 HF Spaces

This 36B-parameter model is capable of running on free-tier hardware (CPU only, GGUF).

- Try the model here
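For a rough sense of why a quantized GGUF build matters on CPU-only hardware, the weight footprint can be estimated from the parameter count and the number of bits stored per weight. This back-of-the-envelope sketch uses the 36B figure above. The helper name and the 16/8/4-bit values are illustrative assumptions: real GGUF quant types use different effective bit widths, and inference needs extra memory for the KV cache and runtime overhead.

```python
def weight_footprint_gib(num_params, bits_per_weight):
    """Approximate size of the model weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 2**30


NUM_PARAMS = 36e9  # parameter count stated in this card

# Illustrative bit widths only; not the bit widths of any specific GGUF quant.
for bits in (16, 8, 4):
    size = weight_footprint_gib(NUM_PARAMS, bits)
    print(f"{bits:>2} bits per weight: about {size:.1f} GiB")
```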

🌅 Code Example

This example is also available in Colab.

    from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

    def generate_response(prompt):
        """
        Generate a response from the model based on the input prompt.

        Args:
            prompt (str): Prompt for the model.

        Returns:
            str: The generated response from the model.
        """
        # Tokenize the input prompt and move it to the model's device
        inputs = tokenizer(prompt, return_tensors="pt").to(model.device)

        # Generate output tokens
        outputs = model.generate(
            **inputs,
            max_new_tokens=512,
            eos_token_id=tokenizer.eos_token_id,
            pad_token_id=tokenizer.pad_token_id,
        )

        # Decode the generated tokens to a string
        response = tokenizer.decode(outputs[0], skip_special_tokens=True)
        return response

    # Load the model and tokenizer (4-bit loading needs the bitsandbytes package)
    model_id = "macadeliccc/Orca-SOLAR-4x10.7b"
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        quantization_config=BitsAndBytesConfig(load_in_4bit=True),
    )

    prompt = "Explain the proof of Fermat's Last Theorem and its implications in number theory."

    print("Response:")
    print(generate_response(prompt), "\n")

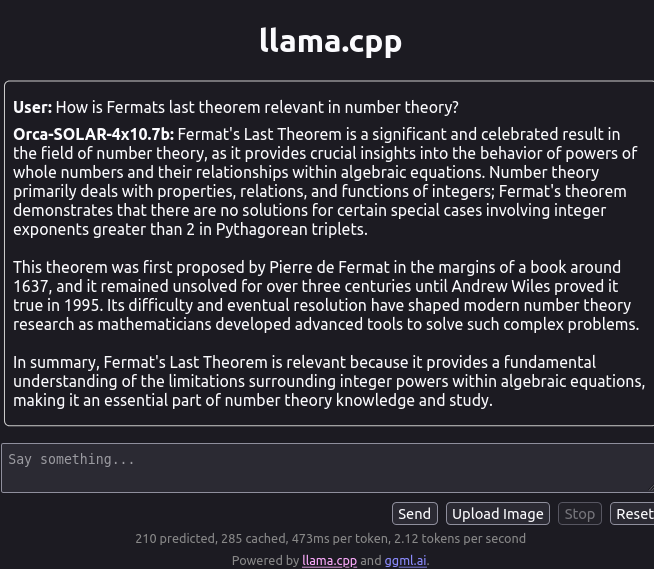

Llama.cpp

GGUF Quants available here

Evaluations

https://huggingface.co/datasets/open-llm-leaderboard/details_macadeliccc__Orca-SOLAR-4x10.7b

📚 Citations

    @misc{kim2023solar,
      title={SOLAR 10.7B: Scaling Large Language Models with Simple yet Effective Depth Up-Scaling},
      author={Dahyun Kim and Chanjun Park and Sanghoon Kim and Wonsung Lee and Wonho Song and Yunsu Kim and Hyeonwoo Kim and Yungi Kim and Hyeonju Lee and Jihoo Kim and Changbae Ahn and Seonghoon Yang and Sukyung Lee and Hyunbyung Park and Gyoungjin Gim and Mikyoung Cha and Hwalsuk Lee and Sunghun Kim},
      year={2023},
      eprint={2312.15166},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
    }

Open LLM Leaderboard Evaluation Results

Detailed results can be found here

| Metric | Value |
|---|---|
| Avg. | 73.17 |
| AI2 Reasoning Challenge (25-Shot) | 68.52 |
| HellaSwag (10-Shot) | 86.78 |
| MMLU (5-Shot) | 67.03 |
| TruthfulQA (0-shot) | 64.54 |
| Winogrande (5-shot) | 83.90 |
| GSM8k (5-shot) | 68.23 |
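
The "Avg." row appears to be the unweighted mean of the six benchmark scores. The short check below reproduces it from the values in the table; the dictionary layout and the variable names are illustrative only.

```python
# Scores copied from the table above.
scores = {
    "AI2 Reasoning Challenge (25-Shot)": 68.52,
    "HellaSwag (10-Shot)": 86.78,
    "MMLU (5-Shot)": 67.03,
    "TruthfulQA (0-shot)": 64.54,
    "Winogrande (5-shot)": 83.90,
    "GSM8k (5-shot)": 68.23,
}

# Unweighted mean across the six benchmarks.
average = sum(scores.values()) / len(scores)
print(f"Avg. = {average:.2f}")  # prints "Avg. = 73.17"
```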