---
license: apache-2.0
base_model: microsoft/swin-tiny-patch4-window7-224
tags:
- generated_from_trainer
datasets:
- imagefolder
metrics:
- accuracy
model-index:
- name: swin-tiny-patch4-window7-224-finetuned-parkinson-classification
  results:
  - task:
      name: Image Classification
      type: image-classification
    dataset:
      name: imagefolder
      type: imagefolder
      config: default
      split: train
      args: default
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.9090909090909091
---

swin-tiny-patch4-window7-224-finetuned-parkinson-classification

This model is a fine-tuned version of microsoft/swin-tiny-patch4-window7-224 on the imagefolder dataset. It achieves the following results on the evaluation set:

- Loss: 0.4966

- Accuracy: 0.9091

Model description

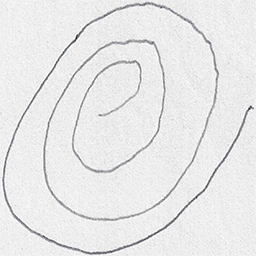

This model was trained on a dataset of spiral drawings made by both Parkinson's patients and healthy people, which I imported into Google Colab from Kaggle: https://www.kaggle.com/datasets/kmader/parkinsons-drawings/data. I then adapted the Hugging Face image classification tutorial (https://colab.research.google.com/github/huggingface/notebooks/blob/main/examples/image_classification.ipynb) to produce the following notebook:

https://colab.research.google.com/drive/1oRjwgHjmaQYRU1qf-TTV7cg1qMZXgMaO?usp=sharing

The model assigns each drawing to one of two classes:

- Healthy

- Parkinson
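For readers who want to try the classifier, a minimal inference sketch follows. It assumes the `transformers` and `Pillow` packages are installed. The helper names `top_prediction` and `classify_spiral`, the image path, and the `<namespace>` part of the repository ID are all hypothetical, since this card does not state where the model is published.

```python
from typing import Dict, List


def top_prediction(predictions: List[Dict]) -> Dict:
    """Return the highest-scoring entry from an image-classification result.

    The pipeline returns a list of {"label": ..., "score": ...} dicts.
    """
    return max(predictions, key=lambda p: p["score"])


def classify_spiral(image_path: str, model_id: str) -> Dict:
    """Classify one spiral drawing and return its most likely class."""
    # Imported lazily so top_prediction can be used without transformers installed.
    from transformers import pipeline

    classifier = pipeline("image-classification", model=model_id)
    return top_prediction(classifier(image_path))


# Example call (both arguments are placeholders, not values from this card):
# classify_spiral(
#     "spiral.png",
#     "<namespace>/swin-tiny-patch4-window7-224-finetuned-parkinson-classification",
# )
```

The returned label is whatever class name the checkpoint stores, so its spelling or capitalisation may differ from the list above.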

Spiral drawing example:

Intended uses & limitations

Acknowledgements

The data came from the paper: Zham P, Kumar DK, Dabnichki P, Poosapadi Arjunan S and Raghav S (2017) Distinguishing Different Stages of Parkinson’s Disease Using Composite Index of Speed and Pen-Pressure of Sketching a Spiral. Front. Neurol. 8:435. doi: 10.3389/fneur.2017.00435

https://www.frontiersin.org/articles/10.3389/fneur.2017.00435/full

Data licence: https://creativecommons.org/licenses/by-nc-nd/4.0/

Training and evaluation data

More information needed

Training procedure

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 20
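How these values fit together can be sketched in plain Python. The sketch assumes a single training device and takes the total of 30 optimizer steps from the last row of the training results table. The functions `effective_batch_size` and `linear_lr` are illustrative names that mirror the shape of a linear warmup-then-decay schedule; they are not the trainer's own code.

```python
import math

LEARNING_RATE = 5e-5
TRAIN_BATCH_SIZE = 32
GRADIENT_ACCUMULATION_STEPS = 4
WARMUP_RATIO = 0.1
TOTAL_STEPS = 30  # assumption: final value of the Step column in the results table


def effective_batch_size(per_device: int, accumulation: int, devices: int = 1) -> int:
    """Number of samples contributing to each optimizer update."""
    return per_device * accumulation * devices


def linear_lr(step: int, total_steps: int, base_lr: float, warmup_ratio: float) -> float:
    """Learning rate at a given optimizer step: linear warmup, then linear decay to zero."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))
    return base_lr * remaining


if __name__ == "__main__":
    print(effective_batch_size(TRAIN_BATCH_SIZE, GRADIENT_ACCUMULATION_STEPS))
    for step in (0, 1, 3, 15, 30):
        print(step, linear_lr(step, TOTAL_STEPS, LEARNING_RATE, WARMUP_RATIO))
```

Under these assumptions the warmup lasts 3 steps, after which the rate falls linearly from 5e-05 to zero at step 30, and 32 × 4 reproduces the listed total_train_batch_size of 128.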

Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|---|---|---|---|---|
| No log | 1.0 | 1 | 0.6801 | 0.4545 |
| No log | 2.0 | 3 | 0.8005 | 0.3636 |
| No log | 3.0 | 5 | 0.6325 | 0.6364 |
| No log | 4.0 | 6 | 0.5494 | 0.8182 |
| No log | 5.0 | 7 | 0.5214 | 0.8182 |
| No log | 6.0 | 9 | 0.5735 | 0.7273 |
| 0.3063 | 7.0 | 11 | 0.4966 | 0.9091 |
| 0.3063 | 8.0 | 12 | 0.4557 | 0.9091 |
| 0.3063 | 9.0 | 13 | 0.4444 | 0.9091 |
| 0.3063 | 10.0 | 15 | 0.6226 | 0.6364 |
| 0.3063 | 11.0 | 17 | 0.8224 | 0.4545 |
| 0.3063 | 12.0 | 18 | 0.8127 | 0.4545 |
| 0.3063 | 13.0 | 19 | 0.7868 | 0.4545 |
| 0.2277 | 14.0 | 21 | 0.8195 | 0.4545 |
| 0.2277 | 15.0 | 23 | 0.7499 | 0.4545 |
| 0.2277 | 16.0 | 24 | 0.7022 | 0.5455 |
| 0.2277 | 17.0 | 25 | 0.6755 | 0.5455 |
| 0.2277 | 18.0 | 27 | 0.6277 | 0.6364 |
| 0.2277 | 19.0 | 29 | 0.5820 | 0.6364 |
| 0.1867 | 20.0 | 30 | 0.5784 | 0.6364 |
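Two things can be read off this table with a short script. The sketch below copies the epoch, validation loss, and accuracy columns, then searches for the smallest evaluation-set size whose achievable accuracies match every reported value, and locates the best epochs. The function names are illustrative, and the inferred set size is a deduction from the rounded figures, not something the card states.

```python
# (epoch, validation_loss, accuracy) copied from the training results table
RESULTS = [
    (1, 0.6801, 0.4545), (2, 0.8005, 0.3636), (3, 0.6325, 0.6364),
    (4, 0.5494, 0.8182), (5, 0.5214, 0.8182), (6, 0.5735, 0.7273),
    (7, 0.4966, 0.9091), (8, 0.4557, 0.9091), (9, 0.4444, 0.9091),
    (10, 0.6226, 0.6364), (11, 0.8224, 0.4545), (12, 0.8127, 0.4545),
    (13, 0.7868, 0.4545), (14, 0.8195, 0.4545), (15, 0.7499, 0.4545),
    (16, 0.7022, 0.5455), (17, 0.6755, 0.5455), (18, 0.6277, 0.6364),
    (19, 0.5820, 0.6364), (20, 0.5784, 0.6364),
]


def smallest_eval_set_size(accuracies, max_size=1000, tol=5e-5):
    """Smallest n such that every accuracy is k/n (to 4 decimals) for some integer k."""
    for n in range(1, max_size + 1):
        if all(abs(round(a * n) / n - a) <= tol for a in accuracies):
            return n
    return None


def first_best_accuracy_epoch(results):
    """First epoch that reaches the highest accuracy in the table."""
    best = max(acc for _, _, acc in results)
    return next(epoch for epoch, _, acc in results if acc == best)


def lowest_loss_epoch(results):
    """Epoch with the lowest validation loss."""
    return min(results, key=lambda row: row[1])[0]


if __name__ == "__main__":
    print(smallest_eval_set_size([acc for _, _, acc in RESULTS]))
    print(first_best_accuracy_epoch(RESULTS))
    print(lowest_loss_epoch(RESULTS))
```

Every accuracy in the table is consistent with a multiple of 1/11, which suggests an evaluation set of about 11 images, so a single image shifts accuracy by roughly 9 points. The headline figures (loss 0.4966, accuracy 0.9091) match epoch 7, the first epoch to reach the top accuracy, and not epoch 9, which has the lowest validation loss, or the final epoch. That pattern would be consistent with the best checkpoint being selected by accuracy, though the card does not confirm it.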

Framework versions

- Transformers 4.35.2

- Pytorch 2.1.0+cu121

- Datasets 2.16.1

- Tokenizers 0.15.0