---
license: apache-2.0
language:
- en
base_model:
- Ultralytics/YOLO11
tags:
- yolo
- yolo11
- nsfw
pipeline_tag: object-detection
---

🔞 WARNING: SENSITIVE CONTENT 🔞

THIS MEDIA CONTAINS SENSITIVE CONTENT (E.G. NUDITY, VIOLENCE, PROFANITY, PORN) THAT SOME PEOPLE MAY FIND OFFENSIVE. YOU MUST BE 18 OR OLDER TO VIEW THIS CONTENT.

EraX-NSFW-V1.0

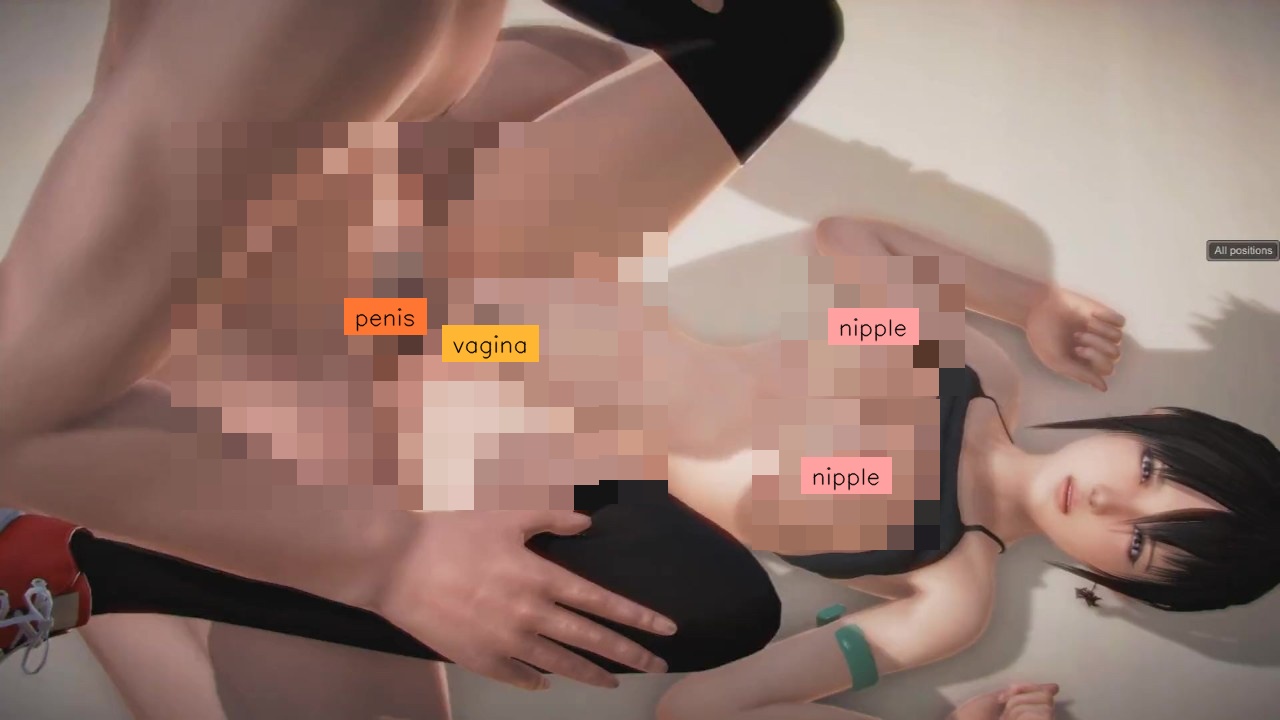

A highly efficient model for NSFW detection. It is well suited to screening images and videos before publication, or to limiting children's access to harmful content. You can simply predict the classes and their bounding boxes, mask the predicted harmful object(s), or mask the entire image. See the deployment code below.

- Developed by:

- Phạm Đình Thục (thuc.pd@erax.ai)

- Mr. Nguyễn Anh Nguyên (nguyen@erax.ai)

- Model version: v1.0

- License: Apache 2.0

Model Details / Overview

- Model Architecture: YOLO11 (nano, small, medium)

- Task: Object Detection (NSFW Detection)

- Dataset: Private datasets (collected from the Internet).

- Training set: 31890 images.

- Validation set: 11538 images.

- Classes: anus, make_love, nipple, penis, vagina.

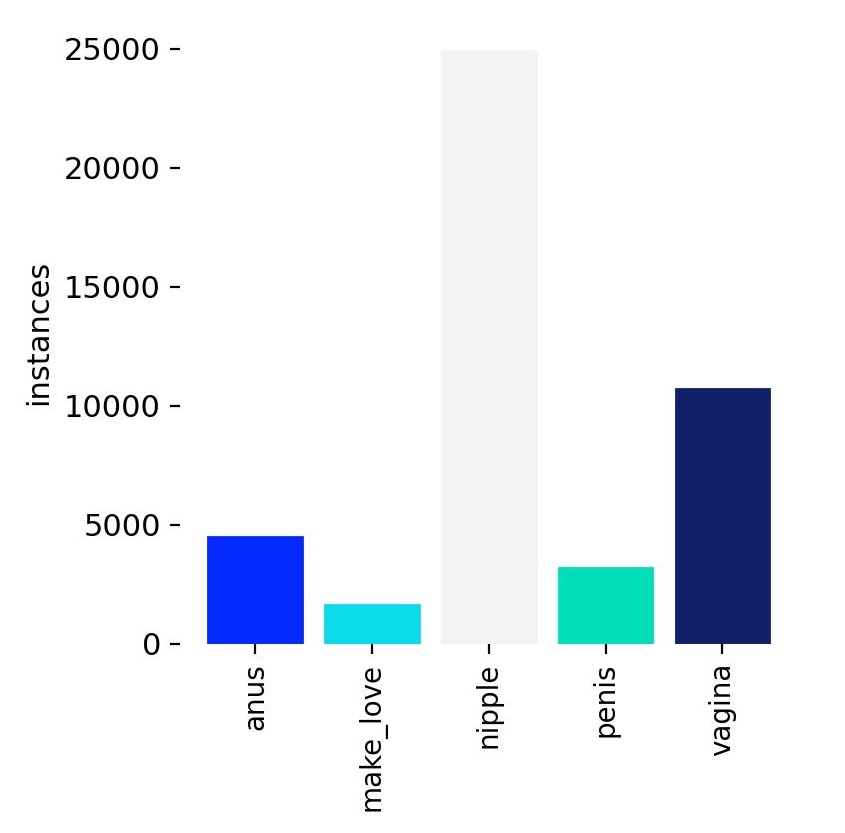

Labels

Training Configuration

- Model Weights Files:

- Nano: erax_nsfw_yolo11n.pt

- Small: erax_nsfw_yolo11s.pt

- Medium: erax_nsfw_yolo11m.pt

- Number of Epochs: 100

- Learning Rate: 0.01

- Batch Size: 208

- Image Size: 640x640

- Training server: 8 x NVIDIA RTX A4000 (16GB GDDR6)

- Training time: ~10 hours
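The settings listed above can be mapped onto an Ultralytics training call. The following is a minimal sketch under stated assumptions, not the authors' training script (that lives in the repository linked in the Training section): the dataset file `nsfw.yaml` and the starting checkpoint `yolo11n.pt` are hypothetical placeholders, mapping "Learning Rate" to the `lr0` argument is an assumption, and the batch size is assumed to be the total across all 8 GPUs.

```python
# Hyperparameters copied from the Training Configuration list.
TRAIN_ARGS = {
    "epochs": 100,
    "lr0": 0.01,                # assumed to correspond to "Learning Rate"
    "batch": 208,               # assumed total batch size across all GPUs
    "imgsz": 640,
    "device": list(range(8)),   # GPU indices 0..7 for the 8 x RTX A4000 server
}


def train(base_weights: str = "yolo11n.pt", data_yaml: str = "nsfw.yaml"):
    """Fine-tune a YOLO11 checkpoint; both default paths are hypothetical."""
    # Imported lazily so the configuration can be inspected without ultralytics installed.
    from ultralytics import YOLO

    model = YOLO(base_weights)
    return model.train(data=data_yaml, **TRAIN_ARGS)


# train()  # uncomment to start training once the dataset YAML exists
```

With these values each of the 8 GPUs would process 26 images per step, since 208 divides evenly by 8.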

Evaluation Metrics

Key metrics from the model evaluation on the validation set are coming soon.

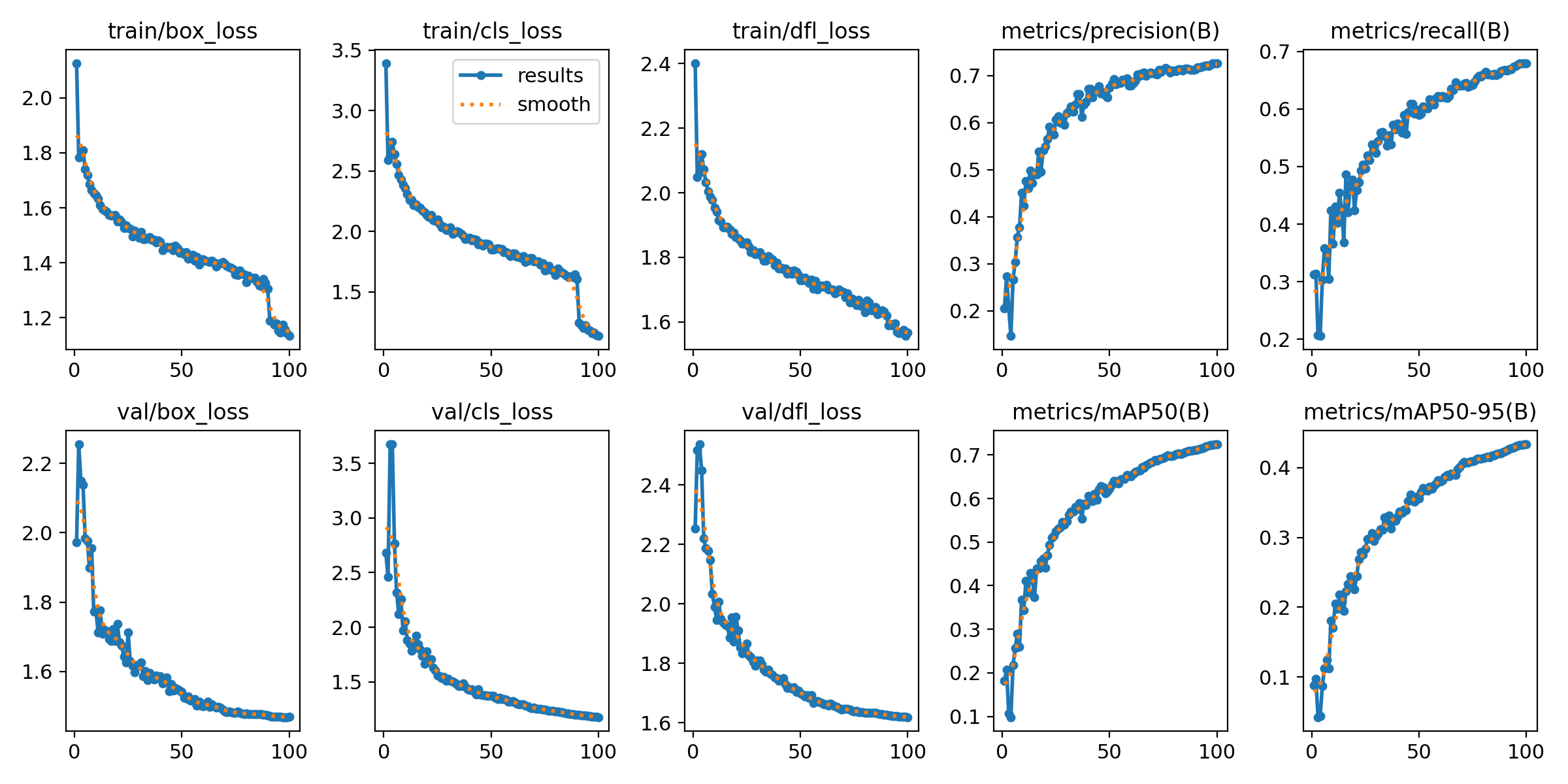

Training Validation Results

Training and Validation Losses

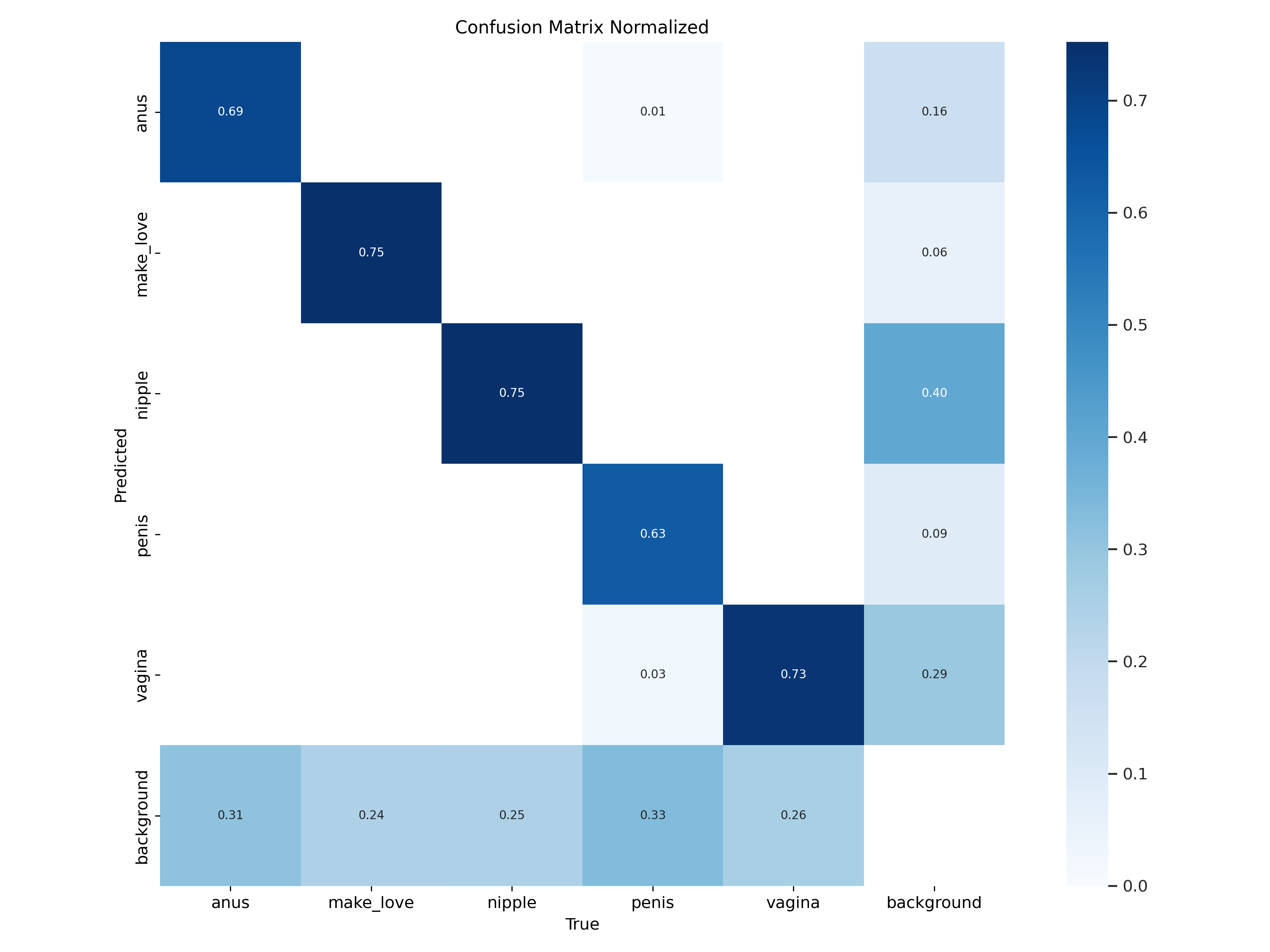

Confusion Matrix

Inference

To use the trained model, follow these steps:

- Install the necessary packages:

```bash
pip install ultralytics supervision huggingface-hub
```

- Download the pretrained model:

```python
from huggingface_hub import snapshot_download

snapshot_download(repo_id="erax-ai/EraX-NSFW-V1.0", local_dir="./", force_download=True)
```

- Simple use case:

```python
from ultralytics import YOLO
import supervision as sv

IOU_THRESHOLD = 0.3
CONFIDENCE_THRESHOLD = 0.2

# Pick one of the three released checkpoints.
# pretrained_path = "erax_nsfw_yolo11n.pt"
# pretrained_path = "erax_nsfw_yolo11s.pt"
pretrained_path = "erax_nsfw_yolo11m.pt"

image_path_list = ["test_images/img_1.jpg", "test_images/img_2.jpg"]

model = YOLO(pretrained_path)
results = model(image_path_list, conf=CONFIDENCE_THRESHOLD, iou=IOU_THRESHOLD)

for result in results:
    annotated_image = result.orig_img.copy()
    h, w = annotated_image.shape[:2]
    anchor = max(h, w)  # longer side, used to scale the label text and pixel size

    detections = sv.Detections.from_ultralytics(result)

    label_annotator = sv.LabelAnnotator(
        text_color=sv.Color.BLACK,
        text_position=sv.Position.CENTER,
        text_scale=anchor / 1700,
    )
    # Use a positive integer pixel size.
    pixelate_annotator = sv.PixelateAnnotator(pixel_size=max(1, anchor // 50))

    # Pixelate the detected regions first, then draw the class labels on top.
    annotated_image = pixelate_annotator.annotate(
        scene=annotated_image, detections=detections
    )
    annotated_image = label_annotator.annotate(
        scene=annotated_image, detections=detections
    )

    sv.plot_image(annotated_image, size=(10, 10))
```
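The overview also mentions masking the entire image instead of individual objects. One possible way to do that is sketched below with plain NumPy; the function names `pixelate_full_image` and `moderate` and the default block size of 32 are illustrative assumptions, not part of the released code.

```python
import numpy as np


def pixelate_full_image(image: np.ndarray, block: int = 32) -> np.ndarray:
    """Pixelate the whole image by repeating one sampled pixel per block x block tile."""
    h, w = image.shape[:2]
    small = image[::block, ::block]  # keep the top-left pixel of every tile
    big = small.repeat(block, axis=0).repeat(block, axis=1)
    return big[:h, :w]  # crop back to the original size


def moderate(image: np.ndarray, num_detections: int, block: int = 32) -> np.ndarray:
    """Return the image untouched if nothing was detected, otherwise fully pixelated."""
    if num_detections == 0:
        return image
    return pixelate_full_image(image, block)
```

Inside the inference loop this could be called as `moderate(result.orig_img, len(detections))`, so that any single detection hides the whole frame.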

Training

Scripts for training: https://github.com/EraX-JS-Company/EraX-NSFW-V1.0

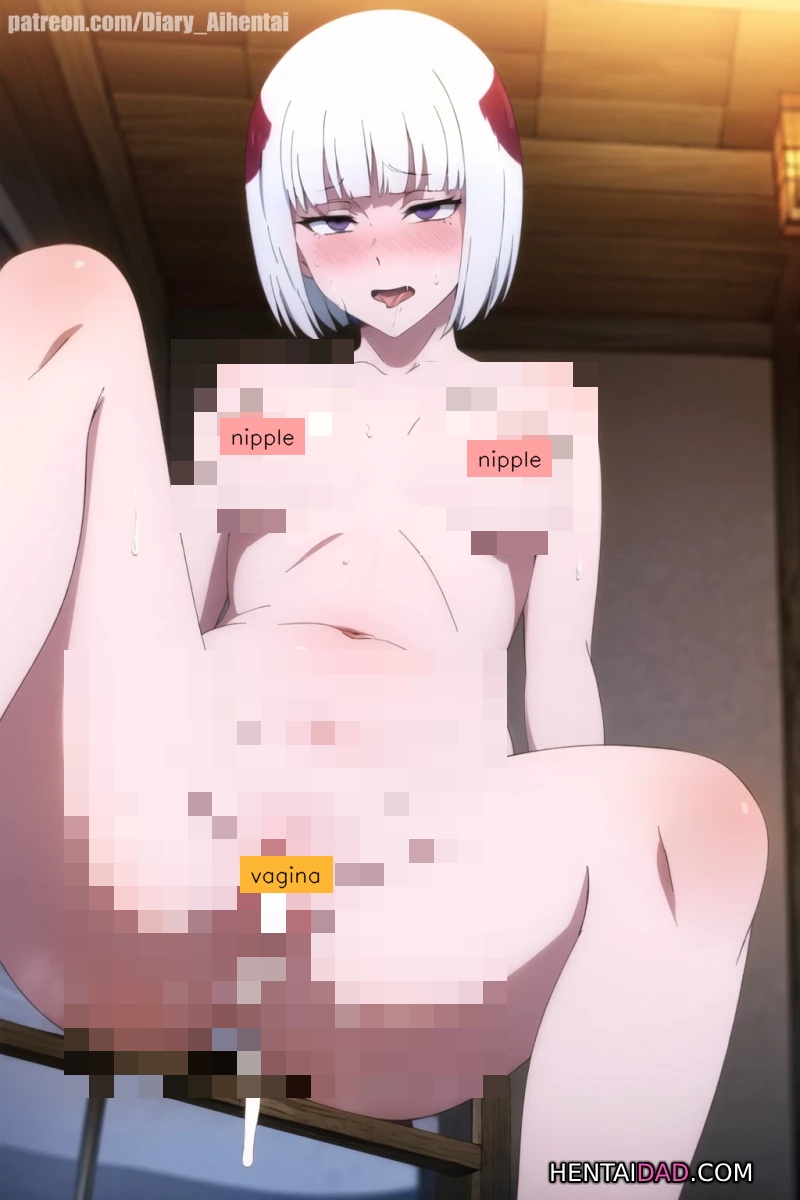

More examples

Example 03: Using the make_love class is the SAFEST option, as it covers the entire context.
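One way to act on this observation is to let `make_love` boxes take priority when choosing what to mask. The sketch below is an assumption about how that could be done, not the authors' method: the helper `select_mask_boxes` is hypothetical, and it keeps every `make_love` box plus any other box that is not already fully covered by one.

```python
import numpy as np


def select_mask_boxes(xyxy, class_names) -> np.ndarray:
    """Keep make_love boxes and drop part-level boxes that lie fully inside one of them."""
    xyxy = np.asarray(xyxy, dtype=float).reshape(-1, 4)
    names = np.asarray(class_names, dtype=str)
    is_ctx = names == "make_love"
    ctx = xyxy[is_ctx]
    keep = is_ctx.copy()
    for i in np.flatnonzero(~is_ctx):
        x1, y1, x2, y2 = xyxy[i]
        # True for each make_love box that fully contains box i.
        inside = (
            (ctx[:, 0] <= x1) & (ctx[:, 1] <= y1)
            & (ctx[:, 2] >= x2) & (ctx[:, 3] >= y2)
        )
        keep[i] = not inside.any()
    return xyxy[keep]
```

The input is expected as `(x1, y1, x2, y2)` pixel coordinates with one class name per box, which matches the `xyxy` layout used by `sv.Detections`.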

Citation

If you find our project useful, we would appreciate it if you could star our repository and cite our work as follows:

```bibtex
@article{EraX-NSFW-V1.0,
  author       = {Phạm Đình Thục and
                  Mr. Nguyễn Anh Nguyên and
                  Đoàn Thành Khang and
                  Mr. Trần Hải Khương and
                  Mr. Trương Công Đức and
                  Phan Nguyễn Tuấn Kha and
                  Phạm Huỳnh Nhật},
  title        = {EraX-NSFW-V1.0: A Highly Efficient Model for NSFW Detection},
  organization = {EraX JS Company},
  year         = {2024},
  url          = {https://huggingface.co/erax-ai/EraX-NSFW-V1.0}
}
```