OneFormer

Overview

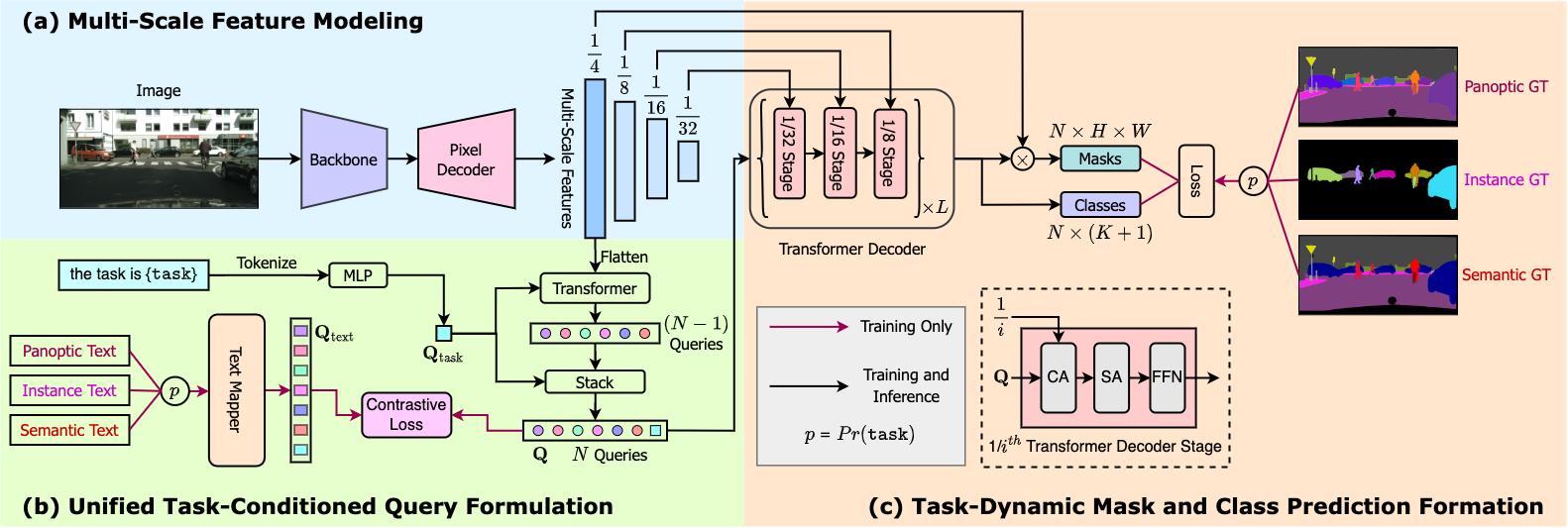

The OneFormer model was proposed in OneFormer: One Transformer to Rule Universal Image Segmentation by Jitesh Jain, Jiachen Li, MangTik Chiu, Ali Hassani, Nikita Orlov and Humphrey Shi. OneFormer is a universal image segmentation framework that can be trained on a single panoptic dataset to perform semantic, instance, and panoptic segmentation tasks. OneFormer uses a task token to condition the model on the task in focus, making the architecture task-guided for training and task-dynamic for inference.

The abstract from the paper is the following:

Universal Image Segmentation is not a new concept. Past attempts to unify image segmentation in the last decades include scene parsing, panoptic segmentation, and, more recently, new panoptic architectures. However, such panoptic architectures do not truly unify image segmentation because they need to be trained individually on the semantic, instance, or panoptic segmentation to achieve the best performance. Ideally, a truly universal framework should be trained only once and achieve SOTA performance across all three image segmentation tasks. To that end, we propose OneFormer, a universal image segmentation framework that unifies segmentation with a multi-task train-once design. We first propose a task-conditioned joint training strategy that enables training on ground truths of each domain (semantic, instance, and panoptic segmentation) within a single multi-task training process. Secondly, we introduce a task token to condition our model on the task at hand, making our model task-dynamic to support multi-task training and inference. Thirdly, we propose using a query-text contrastive loss during training to establish better inter-task and inter-class distinctions. Notably, our single OneFormer model outperforms specialized Mask2Former models across all three segmentation tasks on ADE20k, CityScapes, and COCO, despite the latter being trained on each of the three tasks individually with three times the resources. With new ConvNeXt and DiNAT backbones, we observe even more performance improvement. We believe OneFormer is a significant step towards making image segmentation more universal and accessible.

The architecture of OneFormer is illustrated in a figure taken from the original paper.

This model was contributed by Jitesh Jain. The original code can be found here.

Usage tips

- OneFormer requires two inputs during inference: an image and a task token.

- During training, OneFormer only uses panoptic annotations.

- If you want to train the model in a distributed environment across multiple nodes, you should update the get_num_masks function in the OneFormerLoss class of modeling_oneformer.py. When training on multiple nodes, this should be set to the average number of target masks across all nodes, as can be seen in the original implementation here.

- One can use OneFormerProcessor to prepare input images, task inputs and optional targets for the model. OneFormerProcessor wraps OneFormerImageProcessor and CLIPTokenizer into a single instance to both prepare the images and encode the task inputs.

- To get the final segmentation, depending on the task, you can call post_process_semantic_segmentation(), post_process_instance_segmentation() or post_process_panoptic_segmentation(). All three tasks can be solved using the OneFormerForUniversalSegmentation output; panoptic segmentation accepts an optional label_ids_to_fuse argument to fuse instances of the target object(s) (e.g. sky) together.
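The multi-node tip about get_num_masks boils down to simple arithmetic: every node contributes its local count of target masks, the counts are summed, and the sum is divided by the number of nodes. The plain-Python sketch below assumes the per-node counts have already been gathered (in a real run the sum would come from a collective such as torch.distributed.all_reduce). The helper name average_num_masks is hypothetical, and the clamp to at least 1 is an assumed safeguard against dividing the loss by zero, not something stated on this page.

```python
def average_num_masks(per_node_mask_counts):
    """Hypothetical helper: average the number of target masks over all nodes.

    per_node_mask_counts: number of target masks seen on each node/rank.
    """
    world_size = len(per_node_mask_counts)
    if world_size == 0:
        raise ValueError("need at least one node")
    # An all_reduce(SUM) would give every rank this same total ...
    total = sum(per_node_mask_counts)
    # ... which is then divided by the world size and clamped to >= 1
    # (assumed safeguard so the loss normalization never divides by zero).
    return max(total / world_size, 1.0)


print(average_num_masks([12, 20, 7, 9]))  # 12.0
```

Using the average rather than the local count keeps the loss scale identical on every node, so gradients are normalized consistently after they are synchronized.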

Resources

A list of official Hugging Face and community (indicated by 🌎) resources to help you get started with OneFormer.

- Demo notebooks regarding inference + fine-tuning on custom data can be found here.

If you’re interested in submitting a resource to be included here, please feel free to open a Pull Request and we will review it. The resource should ideally demonstrate something new instead of duplicating an existing resource.

OneFormer specific outputs

class transformers.models.oneformer.modeling_oneformer.OneFormerModelOutput

( encoder_hidden_states: Optional = None pixel_decoder_hidden_states: Optional = None transformer_decoder_hidden_states: Optional = None transformer_decoder_object_queries: FloatTensor = None transformer_decoder_contrastive_queries: Optional = None transformer_decoder_mask_predictions: FloatTensor = None transformer_decoder_class_predictions: FloatTensor = None transformer_decoder_auxiliary_predictions: Optional = None text_queries: Optional = None task_token: FloatTensor = None attentions: Optional = None )

Parameters

- encoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, num_channels, height, width). Hidden-states (also called feature maps) of the encoder model at the output of each stage.

- pixel_decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, num_channels, height, width). Hidden-states (also called feature maps) of the pixel decoder model at the output of each stage.

- transformer_decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, sequence_length, hidden_size). Hidden-states (also called feature maps) of the transformer decoder at the output of each stage.

- transformer_decoder_object_queries (torch.FloatTensor of shape (batch_size, num_queries, hidden_dim)) — Output object queries from the last layer in the transformer decoder.

- transformer_decoder_contrastive_queries (torch.FloatTensor of shape (batch_size, num_queries, hidden_dim)) — Contrastive queries from the transformer decoder.

- transformer_decoder_mask_predictions (torch.FloatTensor of shape (batch_size, num_queries, height, width)) — Mask predictions from the last layer in the transformer decoder.

- transformer_decoder_class_predictions (torch.FloatTensor of shape (batch_size, num_queries, num_classes+1)) — Class predictions from the last layer in the transformer decoder.

- transformer_decoder_auxiliary_predictions (Tuple of Dict of str, torch.FloatTensor, optional) — Tuple of class and mask predictions from each layer of the transformer decoder.

- text_queries (torch.FloatTensor, optional, of shape (batch_size, num_queries, hidden_dim)) — Text queries derived from the input text list used for calculating contrastive loss during training.

- task_token (torch.FloatTensor of shape (batch_size, hidden_dim)) — 1D task token to condition the queries.

- attentions (tuple(tuple(torch.FloatTensor)), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of tuple(torch.FloatTensor) (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Self- and cross-attention weights from the transformer decoder.

Class for outputs of OneFormerModel. This class returns all the needed hidden states to compute the logits.
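The transformer_decoder_contrastive_queries and text_queries fields exist to support the query-text contrastive loss mentioned in the abstract. The NumPy sketch below shows a generic CLIP-style symmetric contrastive loss between two sets of paired embeddings, using the contrastive_temperature default of 0.07 from OneFormerConfig. It is an illustration under assumptions, not the code in modeling_oneformer.py: it treats row i of each input as a matching pair, uses a fixed temperature (the config describes 0.07 only as an initial value), and the function name is hypothetical.

```python
import numpy as np


def query_text_contrastive_loss(queries, text_queries, temperature=0.07):
    """Symmetric CLIP-style contrastive loss (illustrative sketch).

    queries, text_queries: arrays of shape (num_queries, hidden_dim); row i of
    each is treated as a matching pair.
    """
    # L2-normalize so that the dot product is a cosine similarity
    q = queries / np.linalg.norm(queries, axis=-1, keepdims=True)
    t = text_queries / np.linalg.norm(text_queries, axis=-1, keepdims=True)
    logits = q @ t.T / temperature  # (num_queries, num_queries)

    def cross_entropy_diag(x):
        # numerically stable log-softmax over each row; the target is the diagonal
        x = x - x.max(axis=1, keepdims=True)
        log_probs = x - np.log(np.exp(x).sum(axis=1, keepdims=True))
        return -np.mean(np.diag(log_probs))

    # average the query->text and text->query directions
    return 0.5 * (cross_entropy_diag(logits) + cross_entropy_diag(logits.T))
```

Matched pairs are pulled together while every other pair in the batch acts as a negative, which is how such a loss sharpens the inter-task and inter-class distinctions the paper describes.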

class transformers.models.oneformer.modeling_oneformer.OneFormerForUniversalSegmentationOutput

( loss: Optional = None class_queries_logits: FloatTensor = None masks_queries_logits: FloatTensor = None auxiliary_predictions: List = None encoder_hidden_states: Optional = None pixel_decoder_hidden_states: Optional = None transformer_decoder_hidden_states: Optional = None transformer_decoder_object_queries: FloatTensor = None transformer_decoder_contrastive_queries: Optional = None transformer_decoder_mask_predictions: FloatTensor = None transformer_decoder_class_predictions: FloatTensor = None transformer_decoder_auxiliary_predictions: Optional = None text_queries: Optional = None task_token: FloatTensor = None attentions: Optional = None )

Parameters

- loss (torch.Tensor, optional) — The computed loss, returned when labels are present.

- class_queries_logits (torch.FloatTensor) — A tensor of shape (batch_size, num_queries, num_labels + 1) representing the proposed classes for each query. Note the + 1 is needed because we incorporate the null class.

- masks_queries_logits (torch.FloatTensor) — A tensor of shape (batch_size, num_queries, height, width) representing the proposed masks for each query.

- auxiliary_predictions (List of Dict of str, torch.FloatTensor, optional) — List of class and mask predictions from each layer of the transformer decoder.

- encoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, num_channels, height, width). Hidden-states (also called feature maps) of the encoder model at the output of each stage.

- pixel_decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, num_channels, height, width). Hidden-states (also called feature maps) of the pixel decoder model at the output of each stage.

- transformer_decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, sequence_length, hidden_size). Hidden-states (also called feature maps) of the transformer decoder at the output of each stage.

- transformer_decoder_object_queries (torch.FloatTensor of shape (batch_size, num_queries, hidden_dim)) — Output object queries from the last layer in the transformer decoder.

- transformer_decoder_contrastive_queries (torch.FloatTensor of shape (batch_size, num_queries, hidden_dim)) — Contrastive queries from the transformer decoder.

- transformer_decoder_mask_predictions (torch.FloatTensor of shape (batch_size, num_queries, height, width)) — Mask predictions from the last layer in the transformer decoder.

- transformer_decoder_class_predictions (torch.FloatTensor of shape (batch_size, num_queries, num_classes+1)) — Class predictions from the last layer in the transformer decoder.

- transformer_decoder_auxiliary_predictions (List of Dict of str, torch.FloatTensor, optional) — List of class and mask predictions from each layer of the transformer decoder.

- text_queries (torch.FloatTensor, optional, of shape (batch_size, num_queries, hidden_dim)) — Text queries derived from the input text list used for calculating contrastive loss during training.

- task_token (torch.FloatTensor of shape (batch_size, hidden_dim)) — 1D task token to condition the queries.

- attentions (tuple(tuple(torch.FloatTensor)), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of tuple(torch.FloatTensor) (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Self- and cross-attention weights from the transformer decoder.

Class for outputs of OneFormerForUniversalSegmentation.

This output can be passed directly to post_process_semantic_segmentation(), post_process_instance_segmentation() or post_process_panoptic_segmentation(), depending on the task. See OneFormerImageProcessor for details regarding usage.

OneFormerConfig

class transformers.OneFormerConfig

( backbone_config: Optional = None backbone: Optional = None use_pretrained_backbone: bool = False use_timm_backbone: bool = False backbone_kwargs: Optional = None ignore_value: int = 255 num_queries: int = 150 no_object_weight: int = 0.1 class_weight: float = 2.0 mask_weight: float = 5.0 dice_weight: float = 5.0 contrastive_weight: float = 0.5 contrastive_temperature: float = 0.07 train_num_points: int = 12544 oversample_ratio: float = 3.0 importance_sample_ratio: float = 0.75 init_std: float = 0.02 init_xavier_std: float = 1.0 layer_norm_eps: float = 1e-05 is_training: bool = False use_auxiliary_loss: bool = True output_auxiliary_logits: bool = True strides: Optional = [4, 8, 16, 32] task_seq_len: int = 77 text_encoder_width: int = 256 text_encoder_context_length: int = 77 text_encoder_num_layers: int = 6 text_encoder_vocab_size: int = 49408 text_encoder_proj_layers: int = 2 text_encoder_n_ctx: int = 16 conv_dim: int = 256 mask_dim: int = 256 hidden_dim: int = 256 encoder_feedforward_dim: int = 1024 norm: str = 'GN' encoder_layers: int = 6 decoder_layers: int = 10 use_task_norm: bool = True num_attention_heads: int = 8 dropout: float = 0.1 dim_feedforward: int = 2048 pre_norm: bool = False enforce_input_proj: bool = False query_dec_layers: int = 2 common_stride: int = 4 **kwargs )

Parameters

- backbone_config (PretrainedConfig, optional, defaults to SwinConfig) — The configuration of the backbone model.

- backbone (str, optional) — Name of backbone to use when backbone_config is None. If use_pretrained_backbone is True, this will load the corresponding pretrained weights from the timm or transformers library. If use_pretrained_backbone is False, this loads the backbone’s config and uses that to initialize the backbone with random weights.

- use_pretrained_backbone (bool, optional, defaults to False) — Whether to use pretrained weights for the backbone.

- use_timm_backbone (bool, optional, defaults to False) — Whether to load backbone from the timm library. If False, the backbone is loaded from the transformers library.

- backbone_kwargs (dict, optional) — Keyword arguments to be passed to AutoBackbone when loading from a checkpoint, e.g. {'out_indices': (0, 1, 2, 3)}. Cannot be specified if backbone_config is set.

- ignore_value (int, optional, defaults to 255) — Value in the ground-truth labels to ignore when calculating the loss.

- num_queries (int, optional, defaults to 150) — Number of object queries.

- no_object_weight (float, optional, defaults to 0.1) — Weight for no-object class predictions.

- class_weight (float, optional, defaults to 2.0) — Weight for the classification cross-entropy loss.

- mask_weight (float, optional, defaults to 5.0) — Weight for the binary cross-entropy loss.

- dice_weight (float, optional, defaults to 5.0) — Weight for the dice loss.

- contrastive_weight (float, optional, defaults to 0.5) — Weight for the contrastive loss.

- contrastive_temperature (float, optional, defaults to 0.07) — Initial value for scaling the contrastive logits.

- train_num_points (int, optional, defaults to 12544) — Number of points to sample while calculating losses on mask predictions.

- oversample_ratio (float, optional, defaults to 3.0) — Ratio to decide how many points to oversample.

- importance_sample_ratio (float, optional, defaults to 0.75) — Ratio of points that are sampled via importance sampling.

- init_std (float, optional, defaults to 0.02) — Standard deviation for normal initialization.

- init_xavier_std (float, optional, defaults to 1.0) — Standard deviation for Xavier uniform initialization.

- layer_norm_eps (float, optional, defaults to 1e-05) — Epsilon for layer normalization.

- is_training (bool, optional, defaults to False) — Whether to run in training or inference mode.

- use_auxiliary_loss (bool, optional, defaults to True) — Whether to calculate loss using intermediate predictions from the transformer decoder.

- output_auxiliary_logits (bool, optional, defaults to True) — Whether to return intermediate predictions from the transformer decoder.

- strides (list, optional, defaults to [4, 8, 16, 32]) — List containing the strides for feature maps in the encoder.

- task_seq_len (int, optional, defaults to 77) — Sequence length for tokenizing text list input.

- text_encoder_width (int, optional, defaults to 256) — Hidden size for the text encoder.

- text_encoder_context_length (int, optional, defaults to 77) — Input sequence length for the text encoder.

- text_encoder_num_layers (int, optional, defaults to 6) — Number of transformer layers in the text encoder.

- text_encoder_vocab_size (int, optional, defaults to 49408) — Vocabulary size of the tokenizer.

- text_encoder_proj_layers (int, optional, defaults to 2) — Number of layers in the MLP that projects the text queries.

- text_encoder_n_ctx (int, optional, defaults to 16) — Number of learnable text context queries.

- conv_dim (int, optional, defaults to 256) — Feature map dimension to map outputs from the backbone.

- mask_dim (int, optional, defaults to 256) — Dimension for feature maps in the pixel decoder.

- hidden_dim (int, optional, defaults to 256) — Dimension for hidden states in the transformer decoder.

- encoder_feedforward_dim (int, optional, defaults to 1024) — Dimension of the FFN layer in the pixel decoder.

- norm (str, optional, defaults to "GN") — Type of normalization.

- encoder_layers (int, optional, defaults to 6) — Number of layers in the pixel decoder.

- decoder_layers (int, optional, defaults to 10) — Number of layers in the transformer decoder.

- use_task_norm (bool, optional, defaults to True) — Whether to normalize the task token.

- num_attention_heads (int, optional, defaults to 8) — Number of attention heads in the transformer layers of the pixel and transformer decoders.

- dropout (float, optional, defaults to 0.1) — Dropout probability for the pixel and transformer decoders.

- dim_feedforward (int, optional, defaults to 2048) — Dimension of the FFN layer in the transformer decoder.

- pre_norm (bool, optional, defaults to False) — Whether to normalize hidden states before the attention layers in the transformer decoder.

- enforce_input_proj (bool, optional, defaults to False) — Whether to project hidden states in the transformer decoder.

- query_dec_layers (int, optional, defaults to 2) — Number of layers in the query transformer.

- common_stride (int, optional, defaults to 4) — Common stride used for features in the pixel decoder.
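The train_num_points, oversample_ratio and importance_sample_ratio parameters control which pixels of each predicted mask enter the mask losses. With the defaults, 12544 × 3.0 = 37632 candidate points are drawn, the int(0.75 × 12544) = 9408 most uncertain ones are kept, and 12544 − 9408 = 3136 fresh random points are added. The NumPy sketch below illustrates this PointRend-style importance sampling (the scheme used by Mask2Former), assuming OneFormer follows the same recipe; the function name is hypothetical, uncertainty is taken as the negative absolute logit, and the nearest-neighbour lookup is a simplification of the bilinear point sampling a real implementation would use.

```python
import numpy as np


def sample_points_by_uncertainty(mask_logits, num_points=12544, oversample_ratio=3.0,
                                 importance_sample_ratio=0.75, rng=None):
    """Pick normalized (y, x) point coordinates for one (height, width) mask logit map.

    Returns an array of shape (num_points, 2) with values in [0, 1).
    """
    rng = np.random.default_rng() if rng is None else rng
    height, width = mask_logits.shape
    num_sampled = int(num_points * oversample_ratio)
    num_uncertain = int(importance_sample_ratio * num_points)
    num_random = num_points - num_uncertain

    # 1) oversample candidate points uniformly at random
    candidates = rng.random((num_sampled, 2))
    # nearest-neighbour lookup of the logit at each candidate (simplification)
    rows = (candidates[:, 0] * height).astype(int)
    cols = (candidates[:, 1] * width).astype(int)
    # 2) uncertainty: logits close to the 0 decision boundary are most uncertain
    uncertainty = -np.abs(mask_logits[rows, cols])
    # 3) keep the most uncertain candidates ...
    top = np.argsort(uncertainty)[::-1][:num_uncertain]
    uncertain_points = candidates[top]
    # 4) ... and top up with fresh uniformly random points for coverage
    random_points = rng.random((num_random, 2))
    return np.concatenate([uncertain_points, random_points], axis=0)
```

Concentrating most points near mask boundaries keeps the loss focused on the hard pixels while the random share preserves coverage of the whole mask, at a fraction of the memory cost of a full-resolution loss.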

This is the configuration class to store the configuration of a OneFormerModel. It is used to instantiate a OneFormer model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the OneFormer shi-labs/oneformer_ade20k_swin_tiny architecture trained on ADE20k-150.

Configuration objects inherit from PretrainedConfig and can be used to control the model outputs. Read the documentation from PretrainedConfig for more information.

Examples:

>>> from transformers import OneFormerConfig, OneFormerModel

>>> # Initializing a OneFormer shi-labs/oneformer_ade20k_swin_tiny configuration
>>> configuration = OneFormerConfig()

>>> # Initializing a model (with random weights) from the shi-labs/oneformer_ade20k_swin_tiny style configuration
>>> model = OneFormerModel(configuration)

>>> # Accessing the model configuration
>>> configuration = model.config

OneFormerImageProcessor

class transformers.OneFormerImageProcessor

( do_resize: bool = True size: Dict = None resample: Resampling = <Resampling.BILINEAR: 2> do_rescale: bool = True rescale_factor: float = 0.00392156862745098 do_normalize: bool = True image_mean: Union = None image_std: Union = None ignore_index: Optional = None do_reduce_labels: bool = False repo_path: Optional = 'shi-labs/oneformer_demo' class_info_file: str = None num_text: Optional = None num_labels: Optional = None **kwargs )

Parameters

- do_resize (bool, optional, defaults to True) — Whether to resize the input to a certain size.

- size (int, optional, defaults to 800) — Resize the input to the given size. Only has an effect if do_resize is set to True. If size is a sequence like (width, height), the output size will be matched to this. If size is an int, the smaller edge of the image will be matched to this number, i.e., if height > width, then the image will be rescaled to (size * height / width, size).

- resample (int, optional, defaults to Resampling.BILINEAR) — An optional resampling filter. This can be one of PIL.Image.Resampling.NEAREST, PIL.Image.Resampling.BOX, PIL.Image.Resampling.BILINEAR, PIL.Image.Resampling.HAMMING, PIL.Image.Resampling.BICUBIC or PIL.Image.Resampling.LANCZOS. Only has an effect if do_resize is set to True.

- do_rescale (bool, optional, defaults to True) — Whether to rescale the input to a certain scale.

- rescale_factor (float, optional, defaults to 1/255) — Rescale the input by the given factor. Only has an effect if do_rescale is set to True.

- do_normalize (bool, optional, defaults to True) — Whether or not to normalize the input with mean and standard deviation.

- image_mean (List[float], optional, defaults to [0.485, 0.456, 0.406]) — The sequence of means for each channel, to be used when normalizing images. Defaults to the ImageNet mean.

- image_std (List[float], optional, defaults to [0.229, 0.224, 0.225]) — The sequence of standard deviations for each channel, to be used when normalizing images. Defaults to the ImageNet std.

- ignore_index (int, optional) — Label to be assigned to background pixels in segmentation maps. If provided, segmentation map pixels denoted with 0 (background) will be replaced with ignore_index.

- do_reduce_labels (bool, optional, defaults to False) — Whether or not to decrement all label values of segmentation maps by 1. Usually used for datasets where 0 is used for background, and background itself is not included in all classes of a dataset (e.g. ADE20k). The background label will be replaced by ignore_index.

- repo_path (str, optional, defaults to "shi-labs/oneformer_demo") — Path to a Hub repo or local directory containing the JSON file with class information for the dataset. If unset, will look for class_info_file in the current working directory.

- class_info_file (str, optional) — JSON file containing class information for the dataset. See shi-labs/oneformer_demo/cityscapes_panoptic.json for an example.

- num_text (int, optional) — Number of text entries in the text input list.

- num_labels (int, optional) — The number of labels in the segmentation map.
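The do_reduce_labels and ignore_index options describe a label remapping that is easy to get wrong. The NumPy sketch below shows the mapping as the parameter descriptions state it: every class id k becomes k − 1, and former background pixels (label 0) are sent to ignore_index instead of becoming −1. It assumes ignore_index=255, matching the ignore_value default of OneFormerConfig; reduce_labels is a hypothetical helper, and the library's own implementation may handle edge cases (such as pixels already equal to ignore_index) differently.

```python
import numpy as np


def reduce_labels(segmentation_map, ignore_index=255):
    """Shift labels down by one and send the former background (0) to ignore_index."""
    seg = np.asarray(segmentation_map, dtype=np.int64)
    background = seg == 0
    reduced = seg - 1                   # class k becomes k - 1
    reduced[background] = ignore_index  # 0 would have become -1; ignore it instead
    return reduced


print(reduce_labels([[0, 1, 2], [3, 0, 150]]))
# [[255   0   1]
#  [  2 255 149]]
```

On a dataset such as ADE20k, this turns the 150 object classes into ids 0 to 149 so they line up with the model's class indices, while background pixels are excluded from the loss.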

Constructs a OneFormer image processor. The image processor can be used to prepare image(s), task input(s) and optional text inputs and targets for the model.

This image processor inherits from BaseImageProcessor which contains most of the main methods. Users should refer to this superclass for more information regarding those methods.

preprocess

( images: Union task_inputs: Optional = None segmentation_maps: Union = None instance_id_to_semantic_id: Optional = None do_resize: Optional = None size: Optional = None resample: Resampling = None do_rescale: Optional = None rescale_factor: Optional = None do_normalize: Optional = None image_mean: Union = None image_std: Union = None ignore_index: Optional = None do_reduce_labels: Optional = None return_tensors: Union = None data_format: Union = <ChannelDimension.FIRST: 'channels_first'> input_data_format: Union = None )
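The do_rescale and do_normalize arguments of preprocess amount to a per-pixel affine transform. The NumPy sketch below assumes the image has already been resized, applies rescaling before normalization, uses the default rescale_factor (1/255) and the ImageNet mean and std listed for OneFormerImageProcessor, and returns channels-first data to match the default data_format. rescale_and_normalize is a hypothetical helper for illustration, not part of the library.

```python
import numpy as np

IMAGENET_MEAN = np.array([0.485, 0.456, 0.406])
IMAGENET_STD = np.array([0.229, 0.224, 0.225])


def rescale_and_normalize(image_hwc_uint8, rescale_factor=1 / 255,
                          mean=IMAGENET_MEAN, std=IMAGENET_STD):
    """Rescale then normalize an (H, W, 3) uint8 image; return a (3, H, W) float array."""
    pixels = image_hwc_uint8.astype(np.float64) * rescale_factor  # [0, 255] -> [0, 1]
    pixels = (pixels - mean) / std  # per-channel standardization (broadcast over H, W)
    return pixels.transpose(2, 0, 1)  # channels-last -> channels-first


white = np.full((2, 3, 3), 255, dtype=np.uint8)
print(rescale_and_normalize(white).shape)  # (3, 2, 3)
```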

encode_inputs

( pixel_values_list: List task_inputs: List segmentation_maps: Union = None instance_id_to_semantic_id: Union = None ignore_index: Optional = None do_reduce_labels: bool = False return_tensors: Union = None input_data_format: Union = None ) → BatchFeature

Parameters

- pixel_values_list (List[ImageInput]) — List of images (pixel values) to be padded. Each image should be a tensor of shape (channels, height, width).

- task_inputs (List[str]) — List of task values.

- segmentation_maps (ImageInput, optional) — The corresponding semantic segmentation maps with the pixel-wise annotations.

- (bool, optional, defaults to True) — Whether or not to pad images up to the largest image in a batch and create a pixel mask. If left to the default, will return a pixel mask that is:

  - 1 for pixels that are real (i.e. not masked),

  - 0 for pixels that are padding (i.e. masked).

- instance_id_to_semantic_id (List[Dict[int, int]] or Dict[int, int], optional) — A mapping between object instance ids and class ids. If passed, segmentation_maps is treated as an instance segmentation map where each pixel represents an instance id. Can be provided as a single dictionary with a global/dataset-level mapping or as a list of dictionaries (one per image), to map instance ids in each image separately.

- return_tensors (str or TensorType, optional) — If set, will return tensors instead of NumPy arrays. If set to 'pt', return PyTorch torch.Tensor objects.

- input_data_format (str or ChannelDimension, optional) — The channel dimension format of the input image. If not provided, it will be inferred from the input image.

Returns

A BatchFeature with the following fields:

- pixel_values — Pixel values to be fed to a model.

- pixel_mask — Pixel mask to be fed to a model (when =True or if pixel_mask is in self.model_input_names).

- mask_labels — Optional list of mask labels of shape (labels, height, width) to be fed to a model (when annotations are provided).

- class_labels — Optional list of class labels of shape (labels) to be fed to a model (when annotations are provided). They identify the labels of mask_labels, e.g. the label of mask_labels[i][j] is class_labels[i][j].

- text_inputs — Optional list of text string entries to be fed to a model (when annotations are provided). They identify the binary masks present in the image.

Pad images up to the largest image in a batch and create a corresponding pixel_mask.
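Padding to the largest image and building the matching pixel_mask can be sketched in NumPy as follows. The sketch assumes channels-first images and that padding is added at the bottom and right (each image is copied into the top-left corner); pad_and_create_pixel_mask is a hypothetical helper, not the library's implementation. The mask follows the convention given above: 1 for real pixels, 0 for padding.

```python
import numpy as np


def pad_and_create_pixel_mask(images):
    """Pad a list of (C, H, W) arrays to the largest H and W in the batch.

    Returns (pixel_values, pixel_mask): pixel_mask is 1 for real pixels, 0 for padding.
    """
    max_h = max(img.shape[1] for img in images)
    max_w = max(img.shape[2] for img in images)
    channels = images[0].shape[0]
    pixel_values = np.zeros((len(images), channels, max_h, max_w), dtype=images[0].dtype)
    pixel_mask = np.zeros((len(images), max_h, max_w), dtype=np.int64)
    for i, img in enumerate(images):
        _, h, w = img.shape
        # copy each image into the top-left corner; the rest stays zero padding
        pixel_values[i, :, :h, :w] = img
        pixel_mask[i, :h, :w] = 1
    return pixel_values, pixel_mask


values, mask = pad_and_create_pixel_mask([np.ones((3, 2, 4)), np.ones((3, 5, 3))])
print(values.shape, mask.shape)  # (2, 3, 5, 4) (2, 5, 4)
```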

OneFormer addresses semantic segmentation with a mask classification paradigm, thus input segmentation maps will be converted to lists of binary masks and their respective labels. Let’s see an example: assuming segmentation_maps = [[2,6,7,9]], the output will contain mask_labels = [[1,0,0,0],[0,1,0,0],[0,0,1,0],[0,0,0,1]] (four binary masks) and class_labels = [2,6,7,9], the labels for each mask.
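The conversion in the example can be reproduced with a few lines of NumPy: one binary mask per distinct class id present in the map, plus the list of those ids. This is a minimal sketch of the mask classification target format, not the library's code; to_binary_masks is a hypothetical helper, and dropping ignore_index pixels is an assumption about how ignored labels would be handled.

```python
import numpy as np


def to_binary_masks(segmentation_map, ignore_index=None):
    """Convert an (H, W) semantic map into (num_masks, H, W) binary masks plus labels."""
    seg = np.asarray(segmentation_map)
    class_labels = np.unique(seg)  # sorted distinct class ids
    if ignore_index is not None:
        class_labels = class_labels[class_labels != ignore_index]
    # one binary mask per class: mask_labels[i] == (seg == class_labels[i])
    mask_labels = (seg[None, :, :] == class_labels[:, None, None]).astype(np.float32)
    return mask_labels, class_labels


mask_labels, class_labels = to_binary_masks([[2, 6, 7, 9]])
print(class_labels)          # [2 6 7 9]
print(mask_labels[:, 0, :])  # the four one-hot rows from the example
```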

post_process_semantic_segmentation

( outputs target_sizes: Optional = None ) → List[torch.Tensor]

Parameters

- outputs (OneFormerForUniversalSegmentationOutput) — Raw outputs of the model.

- target_sizes (List[Tuple[int, int]], optional) — List of length (batch_size), where each list item (Tuple[int, int]) corresponds to the requested final size (height, width) of each prediction. If left to None, predictions will not be resized.

Returns

List[torch.Tensor]

A list of length batch_size, where each item is a semantic segmentation map of shape (height, width) corresponding to the target_sizes entry (if target_sizes is specified). Each entry of each torch.Tensor corresponds to a semantic class id.

Converts the output of OneFormerForUniversalSegmentation into semantic segmentation maps. Only supports PyTorch.
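Turning per-query predictions into a semantic map means combining class_queries_logits and masks_queries_logits. The NumPy sketch below follows the recipe commonly used by MaskFormer-family models, which is assumed here rather than copied from the library: a softmax over classes with the trailing null class dropped, a sigmoid over mask logits, a sum over queries of class probability times mask probability for every pixel, and an argmax over classes. Resizing to target_sizes is omitted, and semantic_from_queries is a hypothetical name.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def semantic_from_queries(class_queries_logits, masks_queries_logits):
    """Merge per-query class scores and masks into one semantic map per image.

    class_queries_logits: (batch, num_queries, num_labels + 1); last column = null class
    masks_queries_logits: (batch, num_queries, height, width)
    Returns (batch, height, width) integer class ids.
    """
    # drop the null ("no object") column after the softmax
    class_probs = softmax(class_queries_logits, axis=-1)[..., :-1]
    mask_probs = 1.0 / (1.0 + np.exp(-masks_queries_logits))  # sigmoid
    # per-pixel class score = sum over queries of class prob * mask prob
    scores = np.einsum("bqc,bqhw->bchw", class_probs, mask_probs)
    return scores.argmax(axis=1)
```

Because every query contributes to every pixel in proportion to its confidence, overlapping masks are resolved softly rather than by a hard per-query decision.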

post_process_instance_segmentation

( outputs task_type: str = 'instance' is_demo: bool = True threshold: float = 0.5 mask_threshold: float = 0.5 overlap_mask_area_threshold: float = 0.8 target_sizes: Optional = None return_coco_annotation: Optional = False ) → List[Dict]

Parameters

- outputs (OneFormerForUniversalSegmentationOutput) — The outputs from OneFormerForUniversalSegmentation.

- task_type (str, optional, defaults to "instance") — The post-processing depends on the task token input. If the task_type is "panoptic", we need to ignore the stuff predictions.

- is_demo (bool, optional, defaults to True) — Whether the model is in demo mode. If True, use threshold to predict final masks.

- threshold (float, optional, defaults to 0.5) — The probability score threshold to keep predicted instance masks.

- mask_threshold (float, optional, defaults to 0.5) — Threshold to use when turning the predicted masks into binary values.

- overlap_mask_area_threshold (float, optional, defaults to 0.8) — The overlap mask area threshold to merge or discard small disconnected parts within each binary instance mask.

- target_sizes (List[Tuple], optional) — List of length (batch_size), where each list item (Tuple[int, int]) corresponds to the requested final size (height, width) of each prediction in the batch. If left to None, predictions will not be resized.

- return_coco_annotation (bool, optional, defaults to False) — Whether to return predictions in COCO format.

Returns

List[Dict]

A list of dictionaries, one per image, each dictionary containing two keys:

- segmentation — a tensor of shape (height, width) where each pixel represents a segment_id, set to None if no mask is found above threshold. If target_sizes is specified, segmentation is resized to the corresponding target_sizes entry.

- segments_info — A dictionary that contains additional information on each segment.

  - id — an integer representing the segment_id.

  - label_id — An integer representing the label / semantic class id corresponding to segment_id.

  - was_fused — a boolean, True if label_id was in label_ids_to_fuse, False otherwise. Multiple instances of the same class / label were fused and assigned a single segment_id.

  - score — Prediction score of segment with segment_id.

Converts the output of OneFormerForUniversalSegmentation into image instance segmentation predictions. Only supports PyTorch.
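The threshold and mask_threshold parameters act at two levels: mask_threshold binarizes each predicted mask, and threshold filters whole instances by a confidence score. The NumPy sketch below scores each query as its class probability times the average mask probability inside its binarized mask, a Mask2Former-style scoring assumed here for illustration. The library method also performs steps this sketch omits (for example, selecting top-scoring query/class pairs and, for task_type="panoptic", ignoring stuff predictions). Both function names are hypothetical.

```python
import numpy as np


def instance_scores(class_probs, mask_logits, mask_threshold=0.5):
    """Score each query as class confidence times mean mask confidence.

    class_probs: (num_queries,) probability of each query's predicted class
    mask_logits: (num_queries, height, width)
    Returns (scores, binary_masks).
    """
    mask_probs = 1.0 / (1.0 + np.exp(-mask_logits))
    binary_masks = mask_probs > mask_threshold
    flat_probs = mask_probs.reshape(len(mask_probs), -1)
    flat_masks = binary_masks.reshape(len(binary_masks), -1)
    # average mask probability inside each binarized mask (eps avoids 0/0)
    mask_scores = (flat_probs * flat_masks).sum(1) / (flat_masks.sum(1) + 1e-6)
    return class_probs * mask_scores, binary_masks


def keep_instances(class_probs, mask_logits, threshold=0.5, mask_threshold=0.5):
    """Keep only the instances whose combined score exceeds threshold."""
    scores, masks = instance_scores(class_probs, mask_logits, mask_threshold)
    keep = scores > threshold
    return scores[keep], masks[keep]
```

A confident class prediction attached to an empty or very uncertain mask therefore ends up with a low score and is dropped.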

post_process_panoptic_segmentation

( outputs threshold: float = 0.5 mask_threshold: float = 0.5 overlap_mask_area_threshold: float = 0.8 label_ids_to_fuse: Optional = None target_sizes: Optional = None ) → List[Dict]

Parameters

- outputs (OneFormerForUniversalSegmentationOutput) — The outputs from OneFormerForUniversalSegmentation.

- threshold (float, optional, defaults to 0.5) — The probability score threshold to keep predicted instance masks.

- mask_threshold (float, optional, defaults to 0.5) — Threshold to use when turning the predicted masks into binary values.

- overlap_mask_area_threshold (float, optional, defaults to 0.8) — The overlap mask area threshold to merge or discard small disconnected parts within each binary instance mask.

- label_ids_to_fuse (Set[int], optional) — The labels in this set will have all their instances fused together. For instance, we could say there can only be one sky in an image, but several persons, so the label ID for sky would be in that set, but not the one for person.

- target_sizes (List[Tuple], optional) — List of length (batch_size), where each list item (Tuple[int, int]) corresponds to the requested final size (height, width) of each prediction in the batch. If left to None, predictions will not be resized.

Returns

List[Dict]

A list of dictionaries, one per image, each dictionary containing two keys:

- segmentation — a tensor of shape (height, width) where each pixel represents a segment_id, set to None if no mask is found above threshold. If target_sizes is specified, segmentation is resized to the corresponding target_sizes entry.

- segments_info — A dictionary that contains additional information on each segment.

  - id — an integer representing the segment_id.

  - label_id — An integer representing the label / semantic class id corresponding to segment_id.

  - was_fused — a boolean, True if label_id was in label_ids_to_fuse, False otherwise. Multiple instances of the same class / label were fused and assigned a single segment_id.

  - score — Prediction score of segment with segment_id.

Converts the output of OneFormerForUniversalSegmentation into image panoptic segmentation predictions. Only supports PyTorch.
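The interplay of mask_threshold, overlap_mask_area_threshold and label_ids_to_fuse can be illustrated with a small NumPy sketch modeled on MaskFormer-family panoptic merging. It assumes the queries have already been filtered by threshold and the null class, so only their mask probabilities, scores and labels are passed in. Each pixel goes to the query with the highest score-weighted mask probability; a query is discarded if the area it wins is not larger than overlap_mask_area_threshold times the area of its own binarized mask; queries whose label is in label_ids_to_fuse share one segment id. merge_panoptic is a hypothetical helper, and here unassigned pixels keep the value 0.

```python
import numpy as np


def merge_panoptic(mask_probs, pred_scores, pred_labels, mask_threshold=0.5,
                   overlap_mask_area_threshold=0.8, label_ids_to_fuse=None):
    """Assign each pixel to one segment and build a segments_info list.

    mask_probs:  (num_queries, H, W) sigmoid mask probabilities of kept queries
    pred_scores: (num_queries,) class confidence of each kept query
    pred_labels: (num_queries,) predicted class id of each kept query
    """
    label_ids_to_fuse = set() if label_ids_to_fuse is None else set(label_ids_to_fuse)
    height, width = mask_probs.shape[1:]
    segmentation = np.zeros((height, width), dtype=np.int32)  # 0 = unassigned
    segments, fused_ids = [], {}
    current_id = 0
    if len(pred_scores) == 0:
        return segmentation, segments
    # each pixel goes to the query with the highest score-weighted mask probability
    owner = (mask_probs * pred_scores[:, None, None]).argmax(axis=0)
    for k, label in enumerate(pred_labels.tolist()):
        mask_k = owner == k
        area = mask_k.sum()
        original_area = (mask_probs[k] >= mask_threshold).sum()
        # discard queries that own no pixels or lost too much area to other queries
        if area == 0 or original_area == 0:
            continue
        if not area / original_area > overlap_mask_area_threshold:
            continue
        if label in fused_ids:  # reuse the id of an earlier fused segment
            segment_id = fused_ids[label]
        else:
            current_id += 1
            segment_id = current_id
        segmentation[mask_k] = segment_id
        segments.append({"id": segment_id, "label_id": label,
                         "was_fused": label in label_ids_to_fuse,
                         "score": round(float(pred_scores[k]), 6)})
        if label in label_ids_to_fuse:
            fused_ids[label] = segment_id
    return segmentation, segments
```

With label_ids_to_fuse={5}, two separate sky predictions (label 5) in this sketch end up sharing one segment id, while without fusing they stay distinct segments.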

OneFormerProcessor

class transformers.OneFormerProcessor

( image_processor = None tokenizer = None max_seq_length: int = 77 task_seq_length: int = 77 **kwargs )

Parameters

- image_processor (OneFormerImageProcessor) — The image processor is a required input.

- tokenizer (CLIPTokenizer or CLIPTokenizerFast) — The tokenizer is a required input.
- max_seq_length (int, optional, defaults to 77) — Sequence length for input text list.
- task_seq_length (int, optional, defaults to 77) — Sequence length for input task token.

Constructs a OneFormer processor which wraps OneFormerImageProcessor and CLIPTokenizer/CLIPTokenizerFast into a single processor that inherits both the image processor and tokenizer functionalities.

This method forwards all its arguments to OneFormerImageProcessor.encode_inputs() and then tokenizes the task_inputs. Please refer to the docstring of this method for more information.
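Tokenizing the task inputs to a fixed length (task_seq_length, 77 by default) is what gives task_inputs its (batch_size, sequence_length) shape. The sketch below imitates that step with a hypothetical whitespace tokenizer and a toy vocabulary standing in for CLIPTokenizer. The "the task is ..." prompt template is an assumption about how the image processor phrases each task, and the real CLIP tokenizer also adds start and end tokens that this toy omits, so treat it as an illustration of the padding and truncation only.

```python
TASK_SEQ_LENGTH = 77  # default task_seq_length in the OneFormerProcessor signature
PAD_ID = 0            # hypothetical padding ID for the toy vocabulary below

# Hypothetical toy vocabulary; the real processor uses CLIPTokenizer(Fast).
TOY_VOCAB = {"the": 1, "task": 2, "is": 3, "semantic": 4, "instance": 5, "panoptic": 6}


def encode_task(task, seq_length=TASK_SEQ_LENGTH):
    """Format a task name as a prompt, tokenize it, then truncate or pad it to seq_length."""
    if task not in ("semantic", "instance", "panoptic"):
        raise ValueError(f"unsupported task: {task!r}")
    prompt = f"the task is {task}"  # assumed prompt template
    ids = [TOY_VOCAB[word] for word in prompt.split()][:seq_length]
    return ids + [PAD_ID] * (seq_length - len(ids))


batch = [encode_task(t) for t in ["semantic", "panoptic"]]
print(len(batch), len(batch[0]))  # 2 77, i.e. (batch_size, task_seq_length)
print(batch[1][:5])               # [1, 2, 3, 6, 0]
```

Padding every prompt to the same length is what lets a batch of task tokens be stacked into a single tensor.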

This method forwards all its arguments to OneFormerImageProcessor.post_process_instance_segmentation(). Please refer to the docstring of this method for more information.

This method forwards all its arguments to OneFormerImageProcessor.post_process_panoptic_segmentation(). Please refer to the docstring of this method for more information.

This method forwards all its arguments to OneFormerImageProcessor.post_process_semantic_segmentation(). Please refer to the docstring of this method for more information.

OneFormerModel

class transformers.OneFormerModel

( config: OneFormerConfig )

Parameters

- config (OneFormerConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The bare OneFormer Model outputting raw hidden-states without any specific head on top. This model is a PyTorch nn.Module sub-class. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values: Tensor, task_inputs: Tensor, text_inputs: Optional = None, pixel_mask: Optional = None, output_hidden_states: Optional = None, output_attentions: Optional = None, return_dict: Optional = None ) → transformers.models.oneformer.modeling_oneformer.OneFormerModelOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using OneFormerProcessor. See OneFormerProcessor.__call__() for details.
- task_inputs (torch.FloatTensor of shape (batch_size, sequence_length)) — Task inputs. Task inputs can be obtained using OneFormerProcessor. See OneFormerProcessor.__call__() for details.
- pixel_mask (torch.LongTensor of shape (batch_size, height, width), optional) — Mask to avoid performing attention on padding pixel values. Mask values selected in [0, 1]:
  - 1 for pixels that are real (i.e. not masked),
  - 0 for pixels that are padding (i.e. masked).
- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.
- output_attentions (bool, optional) — Whether or not to return the attentions tensors of Detr’s decoder attention layers.
- return_dict (bool, optional) — Whether or not to return a OneFormerModelOutput instead of a plain tuple.
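A pixel_mask is needed when images of different sizes are padded to a common size inside one batch. The plain-Python sketch below builds masks following the convention above (1 for real pixels, 0 for padding), assuming padding is added at the bottom and on the right of each image. In practice OneFormerProcessor returns this mask for you; build_pixel_masks is a hypothetical helper used only to show the layout.

```python
def build_pixel_masks(image_sizes):
    """Return one max_h x max_w mask per image: 1 = real pixel, 0 = padding.

    image_sizes: list of (height, width) tuples. Padding is assumed to be
    added at the bottom and on the right of each image.
    """
    max_h = max(h for h, _ in image_sizes)
    max_w = max(w for _, w in image_sizes)
    masks = []
    for h, w in image_sizes:
        # A pixel is real only if it lies inside the original image extent.
        mask = [[1 if (y < h and x < w) else 0 for x in range(max_w)] for y in range(max_h)]
        masks.append(mask)
    return masks


masks = build_pixel_masks([(2, 3), (3, 2)])
for m in masks:
    print(m)
# [[1, 1, 1], [1, 1, 1], [0, 0, 0]]
# [[1, 1, 0], [1, 1, 0], [1, 1, 0]]
```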

Returns

transformers.models.oneformer.modeling_oneformer.OneFormerModelOutput or tuple(torch.FloatTensor)

A transformers.models.oneformer.modeling_oneformer.OneFormerModelOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (OneFormerConfig) and inputs.

- encoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, num_channels, height, width). Hidden-states (also called feature maps) of the encoder model at the output of each stage.
- pixel_decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, num_channels, height, width). Hidden-states (also called feature maps) of the pixel decoder model at the output of each stage.
- transformer_decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, sequence_length, hidden_size). Hidden-states (also called feature maps) of the transformer decoder at the output of each stage.
- transformer_decoder_object_queries (torch.FloatTensor of shape (batch_size, num_queries, hidden_dim)) — Output object queries from the last layer in the transformer decoder.
- transformer_decoder_contrastive_queries (torch.FloatTensor of shape (batch_size, num_queries, hidden_dim)) — Contrastive queries from the transformer decoder.
- transformer_decoder_mask_predictions (torch.FloatTensor of shape (batch_size, num_queries, height, width)) — Mask predictions from the last layer in the transformer decoder.
- transformer_decoder_class_predictions (torch.FloatTensor of shape (batch_size, num_queries, num_classes+1)) — Class predictions from the last layer in the transformer decoder.
- transformer_decoder_auxiliary_predictions (Tuple of Dict of str, torch.FloatTensor, optional) — Tuple of class and mask predictions from each layer of the transformer decoder.
- text_queries (torch.FloatTensor, optional, of shape (batch_size, num_queries, hidden_dim)) — Text queries derived from the input text list used for calculating contrastive loss during training.
- task_token (torch.FloatTensor of shape (batch_size, hidden_dim)) — 1D task token to condition the queries.
- attentions (tuple(tuple(torch.FloatTensor)), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of tuple(torch.FloatTensor) (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Self and Cross Attentions weights from transformer decoder.


The OneFormerModel forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Example:

>>> import torch
>>> from PIL import Image
>>> import requests
>>> from transformers import OneFormerProcessor, OneFormerModel

>>> # download testing image
>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"
>>> image = Image.open(requests.get(url, stream=True).raw)

>>> # load processor for preprocessing the inputs
>>> processor = OneFormerProcessor.from_pretrained("shi-labs/oneformer_ade20k_swin_tiny")
>>> model = OneFormerModel.from_pretrained("shi-labs/oneformer_ade20k_swin_tiny")
>>> inputs = processor(image, ["semantic"], return_tensors="pt")

>>> with torch.no_grad():
...     outputs = model(**inputs)

>>> mask_predictions = outputs.transformer_decoder_mask_predictions
>>> class_predictions = outputs.transformer_decoder_class_predictions

>>> f"👉 Mask Predictions Shape: {list(mask_predictions.shape)}, Class Predictions Shape: {list(class_predictions.shape)}"
'👉 Mask Predictions Shape: [1, 150, 128, 171], Class Predictions Shape: [1, 150, 151]'

OneFormerForUniversalSegmentation

class transformers.OneFormerForUniversalSegmentation

( config: OneFormerConfig )

Parameters

- config (OneFormerConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

OneFormer Model for instance, semantic and panoptic image segmentation. This model is a PyTorch nn.Module sub-class. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values: Tensor, task_inputs: Tensor, text_inputs: Optional = None, mask_labels: Optional = None, class_labels: Optional = None, pixel_mask: Optional = None, output_auxiliary_logits: Optional = None, output_hidden_states: Optional = None, output_attentions: Optional = None, return_dict: Optional = None ) → transformers.models.oneformer.modeling_oneformer.OneFormerForUniversalSegmentationOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using OneFormerProcessor. See OneFormerProcessor.__call__() for details.
- task_inputs (torch.FloatTensor of shape (batch_size, sequence_length)) — Task inputs. Task inputs can be obtained using OneFormerProcessor. See OneFormerProcessor.__call__() for details.
- pixel_mask (torch.LongTensor of shape (batch_size, height, width), optional) — Mask to avoid performing attention on padding pixel values. Mask values selected in [0, 1]:
  - 1 for pixels that are real (i.e. not masked),
  - 0 for pixels that are padding (i.e. masked).
- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.
- output_attentions (bool, optional) — Whether or not to return the attentions tensors of Detr’s decoder attention layers.
- return_dict (bool, optional) — Whether or not to return a OneFormerModelOutput instead of a plain tuple.
- text_inputs (List[torch.Tensor], optional) — Tensor of shape (num_queries, sequence_length) to be fed to a model.
- mask_labels (List[torch.Tensor], optional) — List of mask labels of shape (num_labels, height, width) to be fed to a model.
- class_labels (List[torch.LongTensor], optional) — List of target class labels of shape (num_labels,) to be fed to a model. They identify the labels of mask_labels, e.g. the label of mask_labels[i][j] is class_labels[i][j].
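mask_labels and class_labels are paired by index: for image i, class_labels[i][j] is the class of the binary mask mask_labels[i][j]. One way to derive such a pair from a semantic segmentation map is to emit one binary mask per class present while skipping an "unlabelled" value. The plain-Python sketch below does that for a single image; the helper semantic_map_to_mask_labels and the ignore value 255 are assumptions for illustration, and normally you would let the processor prepare these labels.

```python
IGNORE_VALUE = 255  # assumed "unlabelled" pixel value, a common convention


def semantic_map_to_mask_labels(semantic_map, ignore_value=IGNORE_VALUE):
    """Split an H x W semantic map into (mask_labels, class_labels) for one image.

    mask_labels[j] is a binary H x W mask whose class is class_labels[j], so the
    masks have shape (num_labels, height, width) and the labels (num_labels,).
    """
    present = sorted({c for row in semantic_map for c in row if c != ignore_value})
    mask_labels = [[[1 if c == cls else 0 for c in row] for row in semantic_map] for cls in present]
    class_labels = present
    return mask_labels, class_labels


semantic_map = [
    [0, 0, 3],
    [0, 3, 3],
    [255, 7, 7],
]
masks, classes = semantic_map_to_mask_labels(semantic_map)
print(classes)   # [0, 3, 7]
print(masks[1])  # [[0, 0, 1], [0, 1, 1], [0, 0, 0]]
```

Pixels with the ignore value belong to no mask, so they contribute nothing to either list.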

Returns

transformers.models.oneformer.modeling_oneformer.OneFormerForUniversalSegmentationOutput or tuple(torch.FloatTensor)

A transformers.models.oneformer.modeling_oneformer.OneFormerForUniversalSegmentationOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (OneFormerConfig) and inputs.

- loss (torch.Tensor, optional) — The computed loss, returned when labels are present.
- class_queries_logits (torch.FloatTensor) — A tensor of shape (batch_size, num_queries, num_labels + 1) representing the proposed classes for each query. Note the + 1 is needed because we incorporate the null class.
- masks_queries_logits (torch.FloatTensor) — A tensor of shape (batch_size, num_queries, height, width) representing the proposed masks for each query.
- auxiliary_predictions (List of Dict of str, torch.FloatTensor, optional) — List of class and mask predictions from each layer of the transformer decoder.
- encoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, num_channels, height, width). Hidden-states (also called feature maps) of the encoder model at the output of each stage.
- pixel_decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, num_channels, height, width). Hidden-states (also called feature maps) of the pixel decoder model at the output of each stage.
- transformer_decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, sequence_length, hidden_size). Hidden-states (also called feature maps) of the transformer decoder at the output of each stage.
- transformer_decoder_object_queries (torch.FloatTensor of shape (batch_size, num_queries, hidden_dim)) — Output object queries from the last layer in the transformer decoder.
- transformer_decoder_contrastive_queries (torch.FloatTensor of shape (batch_size, num_queries, hidden_dim)) — Contrastive queries from the transformer decoder.
- transformer_decoder_mask_predictions (torch.FloatTensor of shape (batch_size, num_queries, height, width)) — Mask predictions from the last layer in the transformer decoder.
- transformer_decoder_class_predictions (torch.FloatTensor of shape (batch_size, num_queries, num_classes+1)) — Class predictions from the last layer in the transformer decoder.
- transformer_decoder_auxiliary_predictions (List of Dict of str, torch.FloatTensor, optional) — List of class and mask predictions from each layer of the transformer decoder.
- text_queries (torch.FloatTensor, optional, of shape (batch_size, num_queries, hidden_dim)) — Text queries derived from the input text list used for calculating contrastive loss during training.
- task_token (torch.FloatTensor of shape (batch_size, hidden_dim)) — 1D task token to condition the queries.
- attentions (tuple(tuple(torch.FloatTensor)), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of tuple(torch.FloatTensor) (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Self and Cross Attentions weights from transformer decoder.
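To see why the extra null class matters, class_queries_logits and masks_queries_logits can be combined into a semantic map: softmax each query's class logits and drop the trailing null class, apply a sigmoid to each mask logit, then for every pixel sum the class probabilities of all queries weighted by that query's mask probability and take the argmax. This is the MaskFormer-style semantic inference that post_process_semantic_segmentation appears to follow. The pure-Python version below is a simplified sketch for one image at mask resolution (no resizing to target_sizes), and semantic_inference, softmax and sigmoid are hypothetical helper names.

```python
import math


def softmax(values):
    # Subtract the max for numerical stability before exponentiating.
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def semantic_inference(class_logits, mask_logits):
    """Simplified MaskFormer-style semantic inference for a single image.

    class_logits: num_queries lists of (num_classes + 1) logits; the last entry is the null class
    mask_logits:  num_queries masks, each an H x W nested list of logits
    Returns an H x W nested list of class IDs.
    """
    # Per-query class probabilities with the trailing null class removed.
    class_probs = [softmax(logits)[:-1] for logits in class_logits]
    num_classes = len(class_probs[0])
    height, width = len(mask_logits[0]), len(mask_logits[0][0])
    semantic_map = []
    for y in range(height):
        row = []
        for x in range(width):
            # Score of class c = sum over queries of p(c | query) * p(pixel in query's mask).
            scores = [
                sum(probs[c] * sigmoid(mask[y][x]) for probs, mask in zip(class_probs, mask_logits))
                for c in range(num_classes)
            ]
            row.append(max(range(num_classes), key=scores.__getitem__))
        semantic_map.append(row)
    return semantic_map


# Two real classes plus null. Query 0 is class 0 on the left pixel, query 1 is
# class 1 on the right pixel, and query 2 is mostly "null" but covers both pixels.
class_logits = [[4.0, 0.0, 0.0], [0.0, 4.0, 0.0], [0.0, 0.0, 6.0]]
mask_logits = [[[5.0, -5.0]], [[-5.0, 5.0]], [[5.0, 5.0]]]
print(semantic_inference(class_logits, mask_logits))  # [[0, 1]]
```

Because the null-class probability is discarded, the third query barely affects the result even though its mask covers the whole image.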


The OneFormerForUniversalSegmentation forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Example:

Universal segmentation example:

>>> from transformers import OneFormerProcessor, OneFormerForUniversalSegmentation
>>> from PIL import Image
>>> import requests
>>> import torch

>>> # load OneFormer fine-tuned on ADE20k for universal segmentation
>>> processor = OneFormerProcessor.from_pretrained("shi-labs/oneformer_ade20k_swin_tiny")
>>> model = OneFormerForUniversalSegmentation.from_pretrained("shi-labs/oneformer_ade20k_swin_tiny")

>>> url = (
...     "https://huggingface.co/datasets/hf-internal-testing/fixtures_ade20k/resolve/main/ADE_val_00000001.jpg"
... )
>>> image = Image.open(requests.get(url, stream=True).raw)

>>> # Semantic Segmentation
>>> inputs = processor(image, ["semantic"], return_tensors="pt")

>>> with torch.no_grad():
...     outputs = model(**inputs)

>>> # model predicts class_queries_logits of shape `(batch_size, num_queries, num_labels + 1)`
>>> # and masks_queries_logits of shape `(batch_size, num_queries, height, width)`
>>> class_queries_logits = outputs.class_queries_logits
>>> masks_queries_logits = outputs.masks_queries_logits

>>> # you can pass the outputs to the processor for semantic postprocessing;
>>> # PIL reports image.size as (width, height), so it is reversed to (height, width)
>>> predicted_semantic_map = processor.post_process_semantic_segmentation(
...     outputs, target_sizes=[image.size[::-1]]
... )[0]
>>> f"👉 Semantic Predictions Shape: {list(predicted_semantic_map.shape)}"
'👉 Semantic Predictions Shape: [512, 683]'

>>> # Instance Segmentation
>>> inputs = processor(image, ["instance"], return_tensors="pt")

>>> with torch.no_grad():
...     outputs = model(**inputs)

>>> # model predicts class_queries_logits of shape `(batch_size, num_queries, num_labels + 1)`
>>> # and masks_queries_logits of shape `(batch_size, num_queries, height, width)`
>>> class_queries_logits = outputs.class_queries_logits
>>> masks_queries_logits = outputs.masks_queries_logits

>>> # you can pass the outputs to the processor for instance postprocessing
>>> predicted_instance_map = processor.post_process_instance_segmentation(
...     outputs, target_sizes=[image.size[::-1]]
... )[0]["segmentation"]
>>> f"👉 Instance Predictions Shape: {list(predicted_instance_map.shape)}"
'👉 Instance Predictions Shape: [512, 683]'

>>> # Panoptic Segmentation
>>> inputs = processor(image, ["panoptic"], return_tensors="pt")

>>> with torch.no_grad():
...     outputs = model(**inputs)

>>> # model predicts class_queries_logits of shape `(batch_size, num_queries, num_labels + 1)`
>>> # and masks_queries_logits of shape `(batch_size, num_queries, height, width)`
>>> class_queries_logits = outputs.class_queries_logits
>>> masks_queries_logits = outputs.masks_queries_logits

>>> # you can pass the outputs to the processor for panoptic postprocessing
>>> predicted_panoptic_map = processor.post_process_panoptic_segmentation(
...     outputs, target_sizes=[image.size[::-1]]
... )[0]["segmentation"]
>>> f"👉 Panoptic Predictions Shape: {list(predicted_panoptic_map.shape)}"
'👉 Panoptic Predictions Shape: [512, 683]'