Datasets:

dataset_info:

features:

- name: instruction

dtype: string

- name: context

dtype: string

- name: response

dtype: string

- name: category

dtype: string

- name: instruction_original_en

dtype: string

- name: context_original_en

dtype: string

- name: response_original_en

dtype: string

- name: id

dtype: int64

splits:

- name: de

num_bytes: 25985140

num_examples: 15015

- name: en

num_bytes: 24125109

num_examples: 15015

- name: es

num_bytes: 25902709

num_examples: 15015

- name: fr

num_bytes: 26704314

num_examples: 15015

download_size: 65586669

dataset_size: 102717272

license: cc-by-sa-3.0

task_categories:

- text-generation

- text2text-generation

language:

- es

- de

- fr

tags:

- machine-translated

- instruction-following

pretty_name: Databricks Dolly Instructions Multilingual

size_categories:

- 10K<n<100K

Dataset Card for "databricks-dolly-15k-curated-multilingual"

A curated and multilingual version of the Databricks Dolly instructions dataset. It includes a programmatically and manually corrected version of the original en dataset. See below.

STATUS:

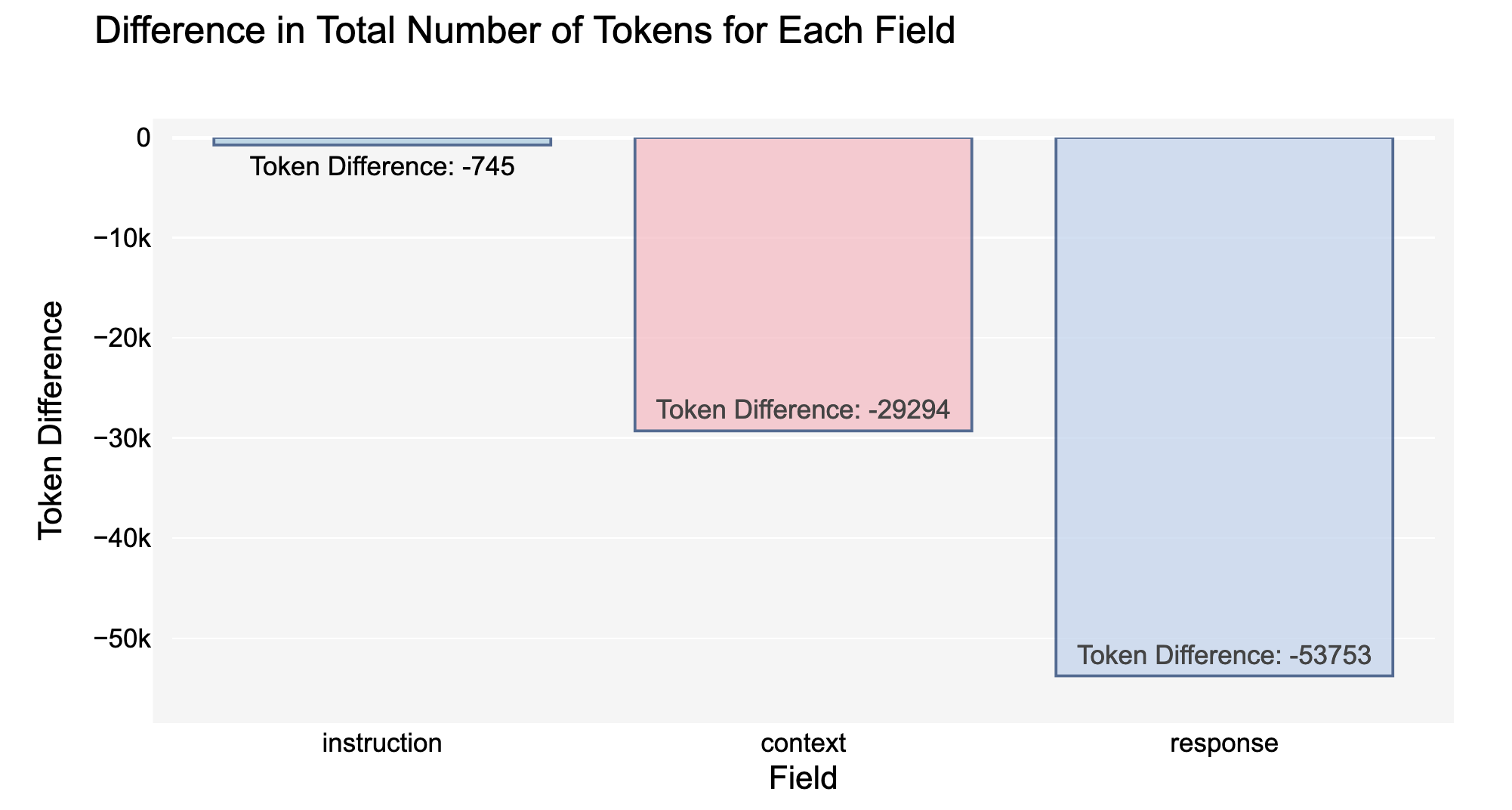

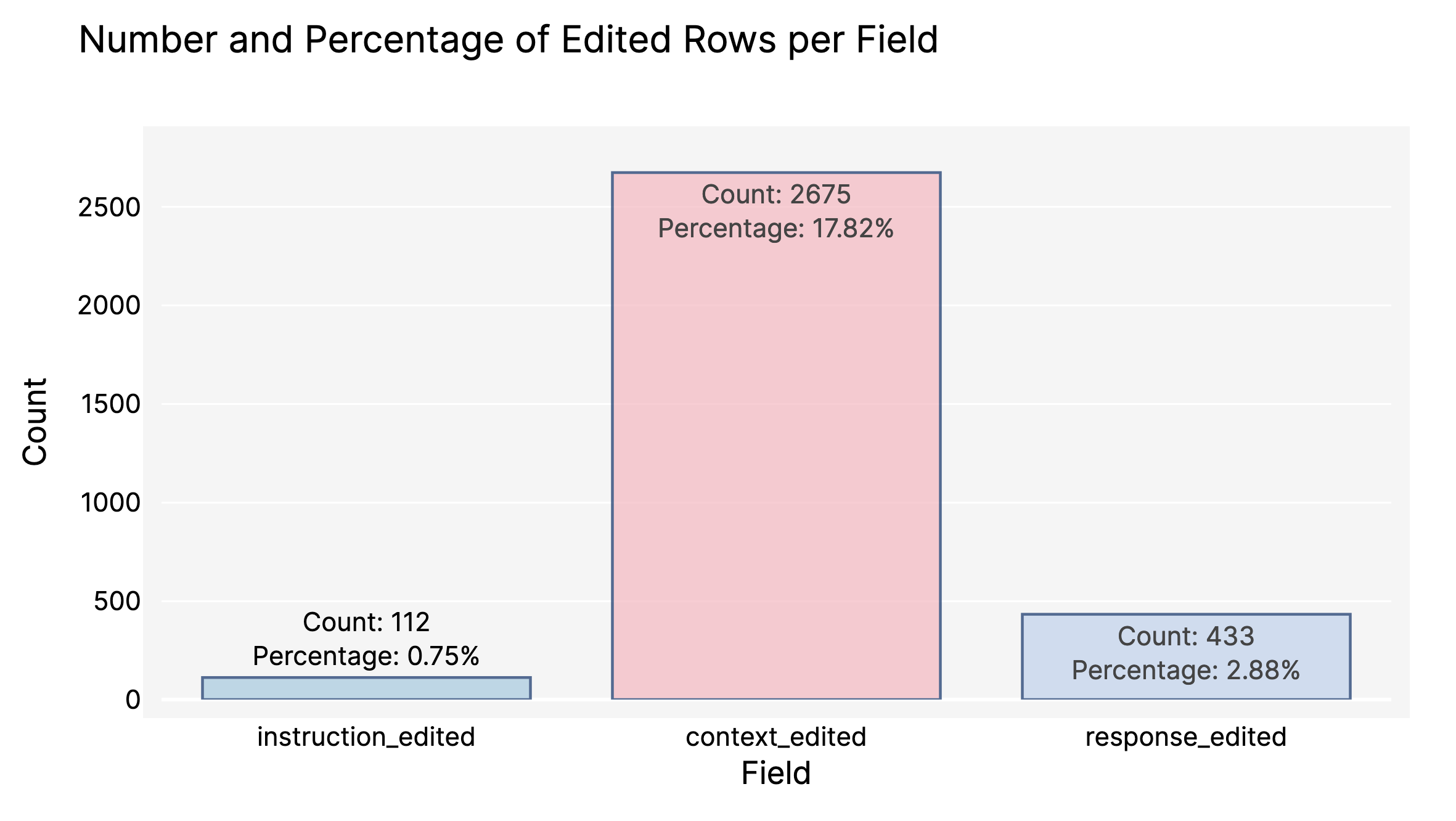

Currently, the original Dolly v2 English version has been curated by combining automatic processing with collaborative human curation using Argilla (~400 records have been manually edited and fixed). The following graph shows a summary of the number of edited fields.

Table of Contents

- Table of Contents

- Dataset Description

- Dataset Structure

- Dataset Creation

- Considerations for Using the Data

- Additional Information

Dataset Description

- Homepage: https://huggingface.co/datasets/argilla/databricks-dolly-15k-multilingual/

- Repository: https://huggingface.co/datasets/argilla/databricks-dolly-15k-multilingual/

- Paper:

- Leaderboard:

- Point of Contact: contact@argilla.io, https://github.com/argilla-io/argilla

Dataset Summary

This dataset collection is a curated and machine-translated version of the databricks-dolly-15k dataset originally created by Databricks, Inc. in 2023.

The goal is to give practitioners a starting point for training open-source instruction-following models with better-quality English data and translated data beyond English. However, as the translation quality will not be perfect, we highly recommend dedicating time to curate and fix translation issues. Below we explain how to load the datasets into Argilla for data curation and fixing. Additionally, we'll be improving the datasets made available here, with the help of different communities.

Currently, the original English version has been curated by combining automatic processing with collaborative human curation using Argilla (~400 records have been manually edited and fixed). The following graph shows a summary of the number of edited fields.

The main issues found in the original English data (many more likely remain) are the following:

- Some labelers misunderstood the usage of the context field. This context field is used as part of the prompt for instruction-tuning, and in other works it is called input (e.g., Alpaca). The name context has likely led some labelers to use it to provide the full context from which they extracted the response. This is problematic for some types of tasks (summarization, closed-qa, or information-extraction) because sometimes the context is shorter than or unrelated to the summary, or the information cannot be extracted from the context (closed-qa, information-extraction).

- Some labelers misunderstood how to give instructions for summarization or closed-qa. For example, they ask "Who is Thomas Jefferson?" and then provide a very long context and an equally long response.
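To illustrate the intended role of the context field, here is a minimal sketch of how an instruction and its context might be combined into a single prompt. The template and the sample record are hypothetical and only for illustration; they are not the exact format used by Dolly, Alpaca, or this dataset.

```python
def build_prompt(instruction: str, context: str = "") -> str:
    """Combine an instruction and an optional context into one prompt string."""
    # Hypothetical template: the section markers are an assumption.
    if context.strip():
        return (
            f"### Instruction:\n{instruction}\n\n"
            f"### Input:\n{context}\n\n"
            "### Response:\n"
        )
    # Records without context get a shorter prompt with no input section.
    return f"### Instruction:\n{instruction}\n\n### Response:\n"


# Made-up record using the field names of this dataset.
record = {
    "instruction": "When does the library close on weekdays?",
    "context": "The library opens at 9 am and closes at 5 pm on weekdays.",
    "response": "It closes at 5 pm.",
}
prompt = build_prompt(record["instruction"], record["context"])
print(prompt)
```

With this layout the response must be derivable from the context, which is why a context that is unrelated to or shorter than the response is a problem for closed-qa and summarization records.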

We programmatically identified records with these potential issues and ran a campaign to fix them. As a result, more than 400 records have been adapted. See below for statistics:
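The exact rules used to identify these records are not described here. As a rough sketch of what such a check could look like, the heuristic below flags context-dependent records whose context is no longer than the response. The category identifiers (written with underscores) and the word-count comparison are assumptions, not the procedure actually used in the campaign.

```python
# Assumed category identifiers for tasks where the response should be
# grounded in the context.
CONTEXT_DEPENDENT = {"summarization", "closed_qa", "information_extraction"}


def looks_suspicious(record: dict) -> bool:
    """Return True when a context-dependent record has a context that is
    not longer than its response (measured in whitespace-separated words)."""
    if record["category"] not in CONTEXT_DEPENDENT:
        return False
    context_words = len(record["context"].split())
    response_words = len(record["response"].split())
    return context_words <= response_words


# Made-up record: the context is only a source title, not a passage.
example = {
    "category": "summarization",
    "context": "Thomas Jefferson, Wikipedia",
    "response": "A long summary that could not have come from so short a context.",
}
print(looks_suspicious(example))
```

A check like this only produces candidates. Flagged records still need human review, for example in Argilla, before being edited.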

As a result of this curation process, the length of the fields, counted in number of tokens, has been reduced, especially for the responses:
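A comparison of this kind can be approximated per record. The sketch below assumes that, in the en split, a field such as response holds the curated text and response_original_en holds the text before curation. It uses whitespace-separated words as a stand-in for tokens, since the tokenizer behind the reported statistics is not stated.

```python
def count_tokens(text: str) -> int:
    """Approximate token count using whitespace splitting (an assumption)."""
    return len(text.split())


def token_reduction(record: dict, field: str = "response") -> int:
    """Tokens removed by curation: original length minus curated length."""
    original = count_tokens(record[f"{field}_original_en"])
    curated = count_tokens(record[field])
    return original - curated


# Made-up record for illustration.
sample = {
    "response": "Short answer.",
    "response_original_en": "A much longer original answer with many words.",
}
print(token_reduction(sample))
```

Summing token_reduction over all records for instruction, context, and response gives a per-field total comparable in spirit to the statistics mentioned above.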

If you want to browse and curate your dataset with Argilla, you can:

- Duplicate this Space. IMPORTANT: The Space's Visibility needs to be Public, but you can set up your own password and API keys following this guide.

- Set up two secrets: HF_TOKEN, and LANG for indicating the language split.

- Log in with admin/12345678 and start browsing and labeling.

- Start labeling. Every 5 min the validations will be stored on a Hub dataset in your personal HF space.

- Please get in touch to contribute fixes and improvements to the source datasets.
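For reference, the two secrets from the steps above could be read and validated in a script roughly as follows. This is a hypothetical sketch: the function name and the validation rules are assumptions, and the Space's actual startup code may work differently.

```python
import os

# Language splits listed in this dataset card.
SUPPORTED_SPLITS = ("de", "en", "es", "fr")


def read_space_config(env=None) -> dict:
    """Read HF_TOKEN and LANG from a mapping (defaults to os.environ)
    and check that LANG names one of the available splits."""
    env = os.environ if env is None else env
    token = env.get("HF_TOKEN")
    lang = env.get("LANG")
    if not token:
        raise ValueError("HF_TOKEN is not set")
    if lang not in SUPPORTED_SPLITS:
        raise ValueError(f"LANG must be one of {SUPPORTED_SPLITS}, got {lang!r}")
    return {"hf_token": token, "lang": lang}


# Example with an explicit mapping and a placeholder token.
config = read_space_config({"HF_TOKEN": "hf_placeholder", "LANG": "es"})
print(config["lang"])
```

Note that on many systems LANG is also a locale variable (for example en_US.UTF-8), so a check like this helps catch a secret that was never set.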

There's one split per language:

from datasets import load_dataset

# loads all splits

load_dataset("argilla/databricks-dolly-15k-curated-multilingual")

# loads only the Spanish split

load_dataset("argilla/databricks-dolly-15k-curated-multilingual", split="es")

Supported Tasks and Leaderboards

As described in the README of the original dataset, this dataset can be used for:

- Training LLMs

- Synthetic Data Generation

- Data Augmentation

Languages

Currently: es, fr, de, en

Join the Argilla Slack community if you want to help us include other languages.

Dataset Structure

Data Instances

[More Information Needed]

Data Fields

[More Information Needed]
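Until this section is filled in, the feature list in the dataset metadata can be summarized as a typed record. The sketch below mirrors the declared names and dtypes. The class name is hypothetical, and the comments on what each field holds are inferred from the field names rather than stated by the source.

```python
from typing import TypedDict


class DollyRecord(TypedDict):
    """One row of any language split, following the declared features."""
    instruction: str              # instruction text (inferred: in the split's language)
    context: str                  # optional input passage for the instruction
    response: str                 # response text
    category: str                 # task category of the record
    instruction_original_en: str  # inferred: original English instruction
    context_original_en: str      # inferred: original English context
    response_original_en: str     # inferred: original English response
    id: int                       # declared as int64 in the metadata


# Made-up row for illustration.
row: DollyRecord = {
    "instruction": "Nombra tres colores.",
    "context": "",
    "response": "Rojo, verde y azul.",
    "category": "brainstorming",
    "instruction_original_en": "Name three colors.",
    "context_original_en": "",
    "response_original_en": "Red, green and blue.",
    "id": 0,
}
```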

Data Splits

There's one split per language:

from datasets import load_dataset

# loads all splits

load_dataset("argilla/databricks-dolly-15k-multilingual")

# loads only the Spanish split

load_dataset("argilla/databricks-dolly-15k-multilingual", split="es")

Dataset Creation

These datasets were translated from the original English dataset using the DeepL API between the 13th and 14th of April 2023.

Curation Logbook

- 28/04/23: Removed reference markers left over from Wikipedia copy-pastes in 8113 rows. Applied to the context and response fields with the following regex:

r'\[[\w]+\]'
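As a quick illustration of what this pattern does, the sketch below applies it with Python's re module to a made-up sentence. The helper name is hypothetical, and the actual cleaning script may have differed.

```python
import re

# The pattern from the logbook entry: a bracket pair enclosing only word characters.
REFERENCE_PATTERN = re.compile(r'\[[\w]+\]')


def strip_references(text: str) -> str:
    """Remove bracketed markers such as [2] or [a] from the text."""
    return REFERENCE_PATTERN.sub("", text)


sample_text = "The city was founded in 1850.[2] It grew quickly[a] afterwards."
print(strip_references(sample_text))
# -> The city was founded in 1850. It grew quickly afterwards.
```

Because the pattern only allows word characters between the brackets, markers containing spaces such as [citation needed] are left untouched, while other bracketed single words such as [sic] are also removed.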

Source Data

Initial Data Collection and Normalization

Refer to the original dataset for more information.

Who are the source language producers?

[More Information Needed]

Annotations

Annotations are planned but not performed yet.

Annotation process

[More Information Needed]

Who are the annotators?

[More Information Needed]

Personal and Sensitive Information

[More Information Needed]

Considerations for Using the Data

Social Impact of Dataset

[More Information Needed]

Discussion of Biases

[More Information Needed]

Other Known Limitations

[More Information Needed]

Additional Information

Dataset Curators

[More Information Needed]

Licensing Information

This dataset can be used for any purpose, whether academic or commercial, under the terms of the Creative Commons Attribution-ShareAlike 3.0 Unported License.

Original dataset Owner: Databricks, Inc.

Citation Information

[More Information Needed]