Add files using upload-large-folder tool

- .gitattributes +1 -0

- README.md +131 -0

- aya-expanse-8B.png +0 -0

- config.json +28 -0

- generation_config.json +7 -0

- model-00001-of-00004.safetensors +3 -0

- model-00002-of-00004.safetensors +3 -0

- model-00003-of-00004.safetensors +3 -0

- model-00004-of-00004.safetensors +3 -0

- model.safetensors.index.json +265 -0

- special_tokens_map.json +23 -0

- tokenizer.json +3 -0

- tokenizer_config.json +330 -0

- winrates_by_lang.png +0 -0

- winrates_dolly.png +0 -0

- winrates_marenahard.png +0 -0

- winrates_marenahard_complete.png +0 -0

- winrates_step_by_step.png +0 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

README.md

ADDED

|

@@ -0,0 +1,131 @@

| 1 |

+

---

|

| 2 |

+

inference: false

|

| 3 |

+

library_name: transformers

|

| 4 |

+

language:

|

| 5 |

+

- en

|

| 6 |

+

- fr

|

| 7 |

+

- de

|

| 8 |

+

- es

|

| 9 |

+

- it

|

| 10 |

+

- pt

|

| 11 |

+

- ja

|

| 12 |

+

- ko

|

| 13 |

+

- zh

|

| 14 |

+

- ar

|

| 15 |

+

- el

|

| 16 |

+

- fa

|

| 17 |

+

- pl

|

| 18 |

+

- id

|

| 19 |

+

- cs

|

| 20 |

+

- he

|

| 21 |

+

- hi

|

| 22 |

+

- nl

|

| 23 |

+

- ro

|

| 24 |

+

- ru

|

| 25 |

+

- tr

|

| 26 |

+

- uk

|

| 27 |

+

- vi

|

| 28 |

+

license: cc-by-nc-4.0

|

| 29 |

+

extra_gated_prompt: "By submitting this form, you agree to the [License Agreement](https://cohere.com/c4ai-cc-by-nc-license) and acknowledge that the information you provide will be collected, used, and shared in accordance with Cohere’s [Privacy Policy]( https://cohere.com/privacy). You’ll receive email updates about C4AI and Cohere research, events, products and services. You can unsubscribe at any time."

|

| 30 |

+

extra_gated_fields:

|

| 31 |

+

Name: text

|

| 32 |

+

Affiliation: text

|

| 33 |

+

Country: country

|

| 34 |

+

I agree to use this model for non-commercial use ONLY: checkbox

|

| 35 |

+

---

|

| 36 |

+

|

| 37 |

+

# Model Card for Aya Expanse 8B

|

| 38 |

+

|

| 39 |

+

<img src="aya-expanse-8B.png" width="650" style="margin-left:'auto' margin-right:'auto' display:'block'"/>

|

| 40 |

+

|

| 41 |

+

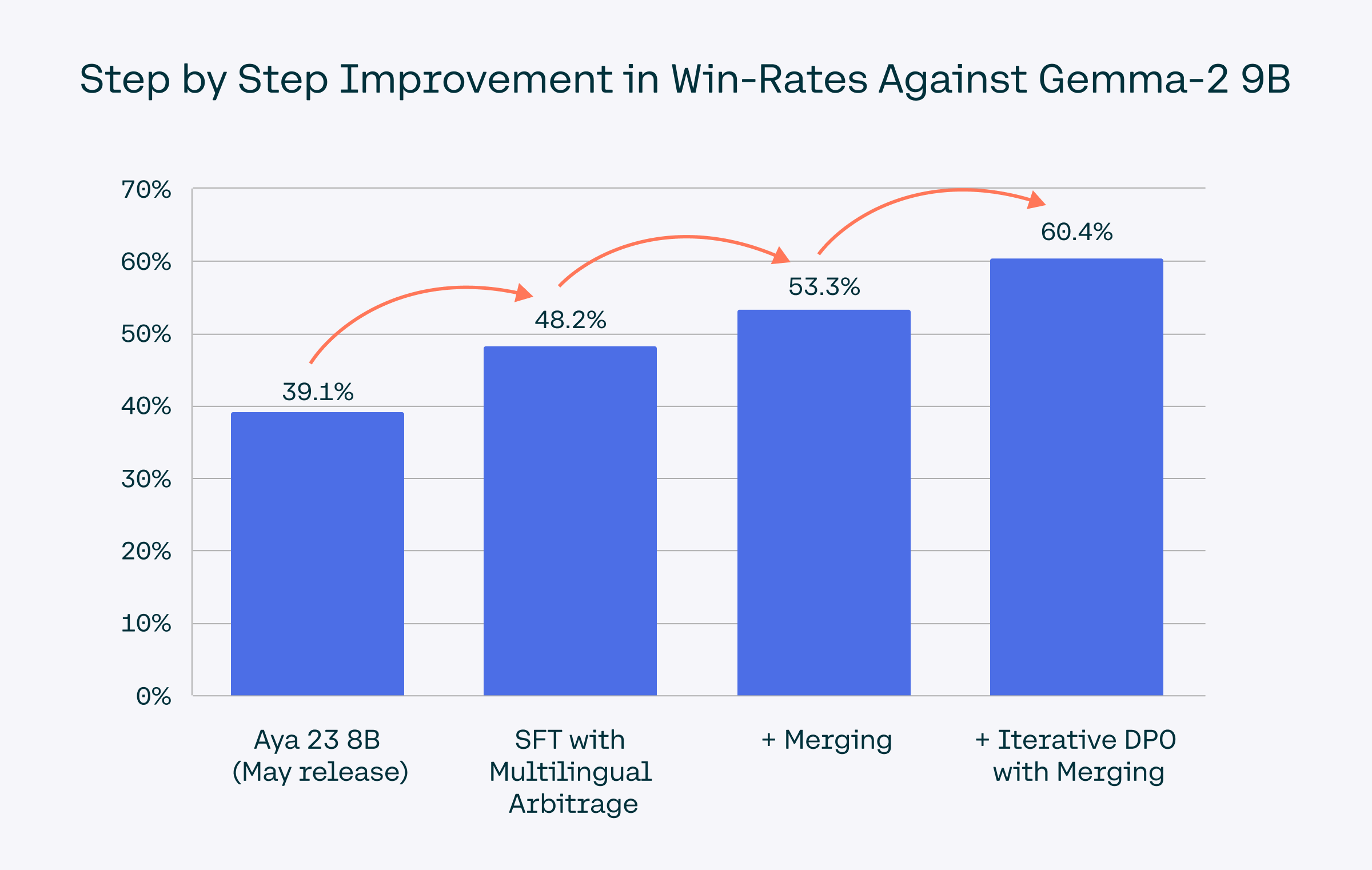

**Aya Expanse 8B** is an open-weight research release of a model with highly advanced multilingual capabilities. It focuses on pairing a highly performant pre-trained [Command family](https://huggingface.co/CohereForAI/c4ai-command-r-plus) of models with the result of a year’s dedicated research from [Cohere For AI](https://cohere.for.ai/), including [data arbitrage](https://arxiv.org/abs/2408.14960), [multilingual preference training](https://arxiv.org/abs/2407.02552), [safety tuning](https://arxiv.org/abs/2406.18682), and [model merging](https://arxiv.org/abs/2410.10801). The result is a powerful multilingual large language model.

|

| 42 |

+

|

| 43 |

+

This model card corresponds to the 8-billion-parameter version of the Aya Expanse model. We also released a 32-billion-parameter version, which you can find [here](https://huggingface.co/CohereForAI/aya-expanse-32B).

|

| 44 |

+

|

| 45 |

+

- Developed by: [Cohere For AI](https://cohere.for.ai/)

|

| 46 |

+

- Point of Contact: Cohere For AI: [cohere.for.ai](https://cohere.for.ai/)

|

| 47 |

+

- License: [CC-BY-NC](https://cohere.com/c4ai-cc-by-nc-license); use also requires adhering to [C4AI's Acceptable Use Policy](https://docs.cohere.com/docs/c4ai-acceptable-use-policy)

|

| 48 |

+

- Model: Aya Expanse 8B

|

| 49 |

+

- Model Size: 8 billion parameters

|

| 50 |

+

|

| 51 |

+

### Supported Languages

|

| 52 |

+

|

| 53 |

+

We cover 23 languages: Arabic, Chinese (simplified & traditional), Czech, Dutch, English, French, German, Greek, Hebrew, Hindi, Indonesian, Italian, Japanese, Korean, Persian, Polish, Portuguese, Romanian, Russian, Spanish, Turkish, Ukrainian, and Vietnamese.

|

| 54 |

+

|

| 55 |

+

### Try it: Aya Expanse in Action

|

| 56 |

+

|

| 57 |

+

Use the [Cohere playground](https://dashboard.cohere.com/playground/chat) or our [Hugging Face Space](https://huggingface.co/spaces/CohereForAI/aya_expanse) for interactive exploration.

|

| 58 |

+

|

| 59 |

+

### How to Use Aya Expanse

|

| 60 |

+

|

| 61 |

+

Install the transformers library and load Aya Expanse 8B as follows:

|

| 62 |

+

|

| 63 |

+

```python

|

| 64 |

+

from transformers import AutoTokenizer, AutoModelForCausalLM

|

| 65 |

+

|

| 66 |

+

model_id = "CohereForAI/aya-expanse-8b"

|

| 67 |

+

tokenizer = AutoTokenizer.from_pretrained(model_id)

|

| 68 |

+

model = AutoModelForCausalLM.from_pretrained(model_id)

|

| 69 |

+

|

| 70 |

+

# Format the message with the chat template

|

| 71 |

+

messages = [{"role": "user", "content": "Anneme onu ne kadar sevdiğimi anlatan bir mektup yaz"}]

|

| 72 |

+

input_ids = tokenizer.apply_chat_template(messages, tokenize=True, add_generation_prompt=True, return_tensors="pt")

|

| 73 |

+

## <BOS_TOKEN><|START_OF_TURN_TOKEN|><|USER_TOKEN|>Anneme onu ne kadar sevdiğimi anlatan bir mektup yaz<|END_OF_TURN_TOKEN|><|START_OF_TURN_TOKEN|><|CHATBOT_TOKEN|>

|

| 74 |

+

|

| 75 |

+

gen_tokens = model.generate(

|

| 76 |

+

input_ids,

|

| 77 |

+

max_new_tokens=100,

|

| 78 |

+

do_sample=True,

|

| 79 |

+

temperature=0.3,

|

| 80 |

+

)

|

| 81 |

+

|

| 82 |

+

gen_text = tokenizer.decode(gen_tokens[0])

|

| 83 |

+

print(gen_text)

|

| 84 |

+

```
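
The call to `tokenizer.decode(gen_tokens[0])` above prints the prompt together with the completion, because `generate` on a decoder-only model returns the prompt ids followed by the new ids. A minimal sketch of separating the two is shown below; the helper name `new_tokens_only` and the toy ids are hypothetical and stand in for real model output.

```python
from typing import List, Sequence


def new_tokens_only(generated: Sequence[int], prompt_len: int) -> List[int]:
    """Return the ids that follow the first `prompt_len` ids of `generated`."""
    if prompt_len < 0 or prompt_len > len(generated):
        raise ValueError("prompt_len must be between 0 and len(generated)")
    return list(generated[prompt_len:])


# Toy ids (not real vocabulary entries): 3 prompt ids followed by 2 new ids.
prompt_ids = [5, 101, 102]
generated_ids = prompt_ids + [201, 202]

completion_ids = new_tokens_only(generated_ids, len(prompt_ids))
print(completion_ids)
```

With the snippet above, and assuming `input_ids` is a tensor as returned there, this would look like `new_tokens_only(gen_tokens[0].tolist(), input_ids.shape[-1])`, and passing `skip_special_tokens=True` to `tokenizer.decode` drops the turn markers from the printed text.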

|

| 85 |

+

|

| 86 |

+

### Example Notebooks

|

| 87 |

+

|

| 88 |

+

**Fine-Tuning:**

|

| 89 |

+

- [Detailed Fine-Tuning Notebook](https://colab.research.google.com/drive/1ryPYXzqb7oIn2fchMLdCNSIH5KfyEtv4).

|

| 90 |

+

|

| 91 |

+

**Community-Contributed Use Cases:**

|

| 92 |

+

|

| 93 |

+

The following notebooks, contributed by *Cohere For AI Community* members, show how Aya Expanse can be applied to different use cases:

|

| 94 |

+

- [Multilingual Writing Assistant](https://colab.research.google.com/drive/1SRLWQ0HdYN_NbRMVVUHTDXb-LSMZWF60)

|

| 95 |

+

- [AyaMCooking](https://colab.research.google.com/drive/1-cnn4LXYoZ4ARBpnsjQM3sU7egOL_fLB?usp=sharing)

|

| 96 |

+

- [Multilingual Question-Answering System](https://colab.research.google.com/drive/1bbB8hzyzCJbfMVjsZPeh4yNEALJFGNQy?usp=sharing)

|

| 97 |

+

|

| 98 |

+

|

| 99 |

+

## Model Details

|

| 100 |

+

|

| 101 |

+

**Input**: The model accepts text input only.

|

| 102 |

+

|

| 103 |

+

**Output**: The model generates text only.

|

| 104 |

+

|

| 105 |

+

**Model Architecture**: Aya Expanse 8B is an auto-regressive language model that uses an optimized transformer architecture. Post-training includes supervised finetuning, preference training, and model merging.

|

| 106 |

+

|

| 107 |

+

**Languages covered**: The model is particularly optimized for multilinguality and supports the following languages: Arabic, Chinese (simplified & traditional), Czech, Dutch, English, French, German, Greek, Hebrew, Hindi, Indonesian, Italian, Japanese, Korean, Persian, Polish, Portuguese, Romanian, Russian, Spanish, Turkish, Ukrainian, and Vietnamese.

|

| 108 |

+

|

| 109 |

+

**Context length**: 8K

|

| 110 |

+

|

| 111 |

+

For more details about how the model was trained, check out [our blogpost](https://huggingface.co/blog/aya-expanse).

|

| 112 |

+

|

| 113 |

+

### Evaluation

|

| 114 |

+

|

| 115 |

+

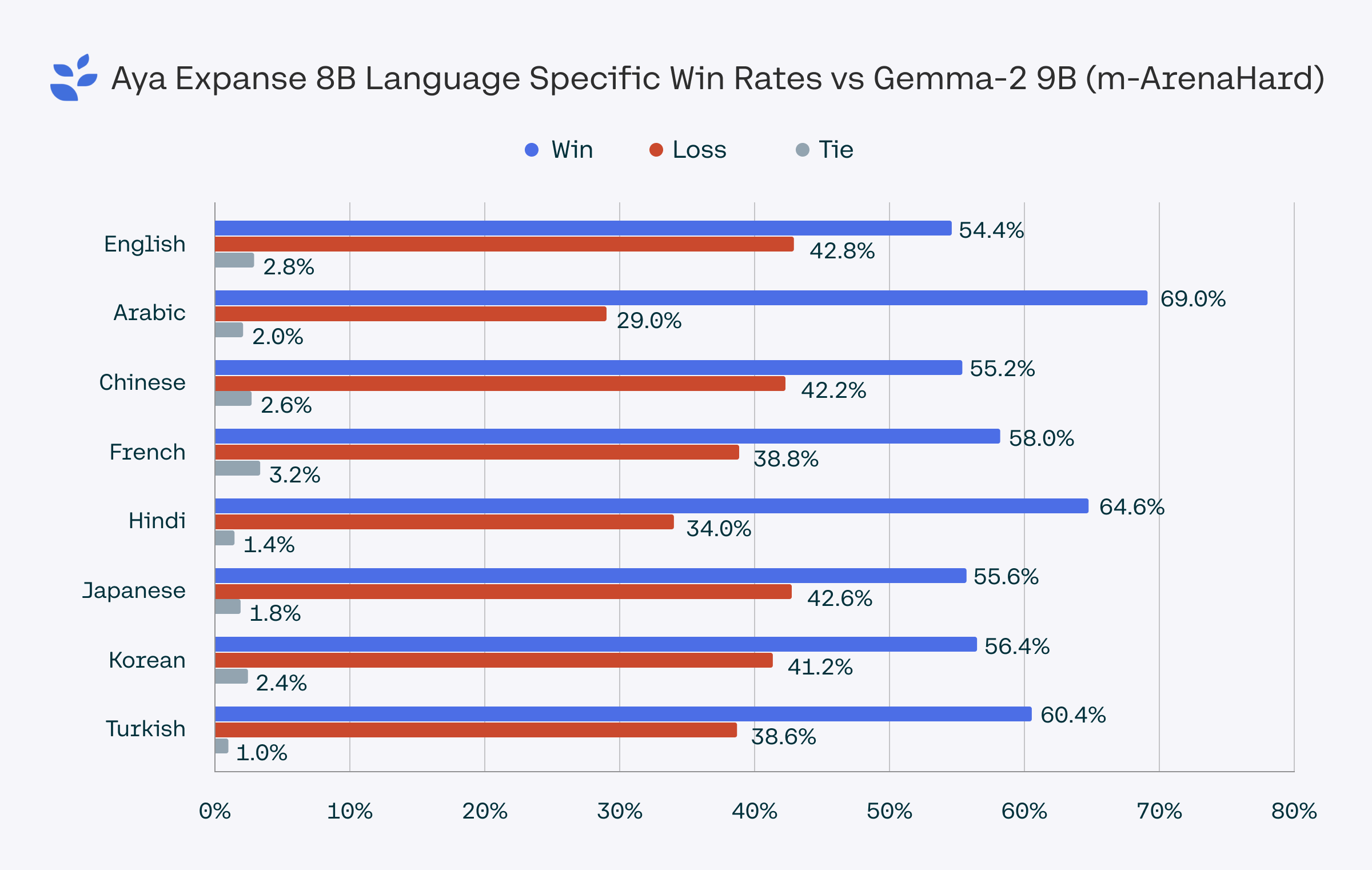

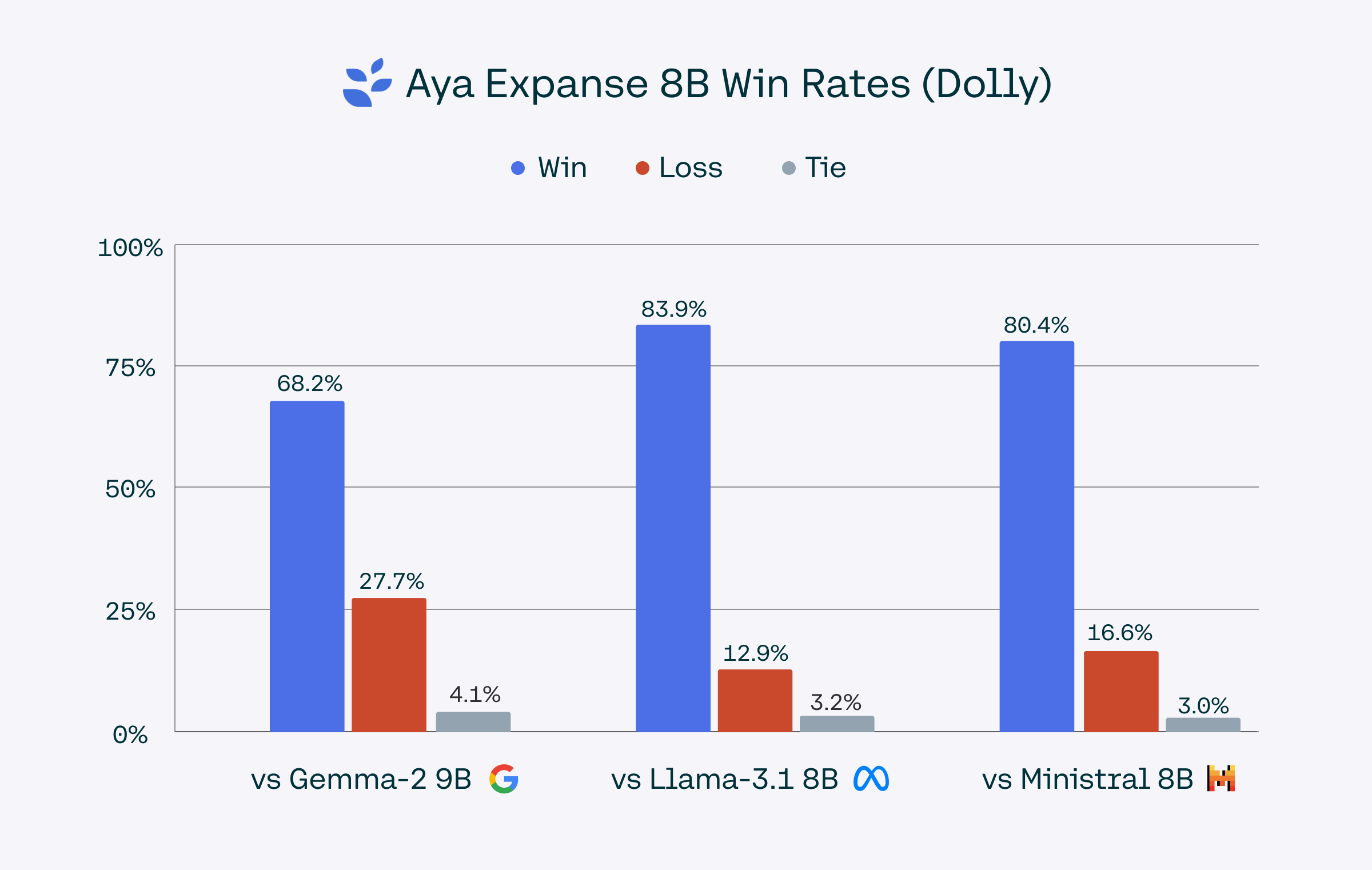

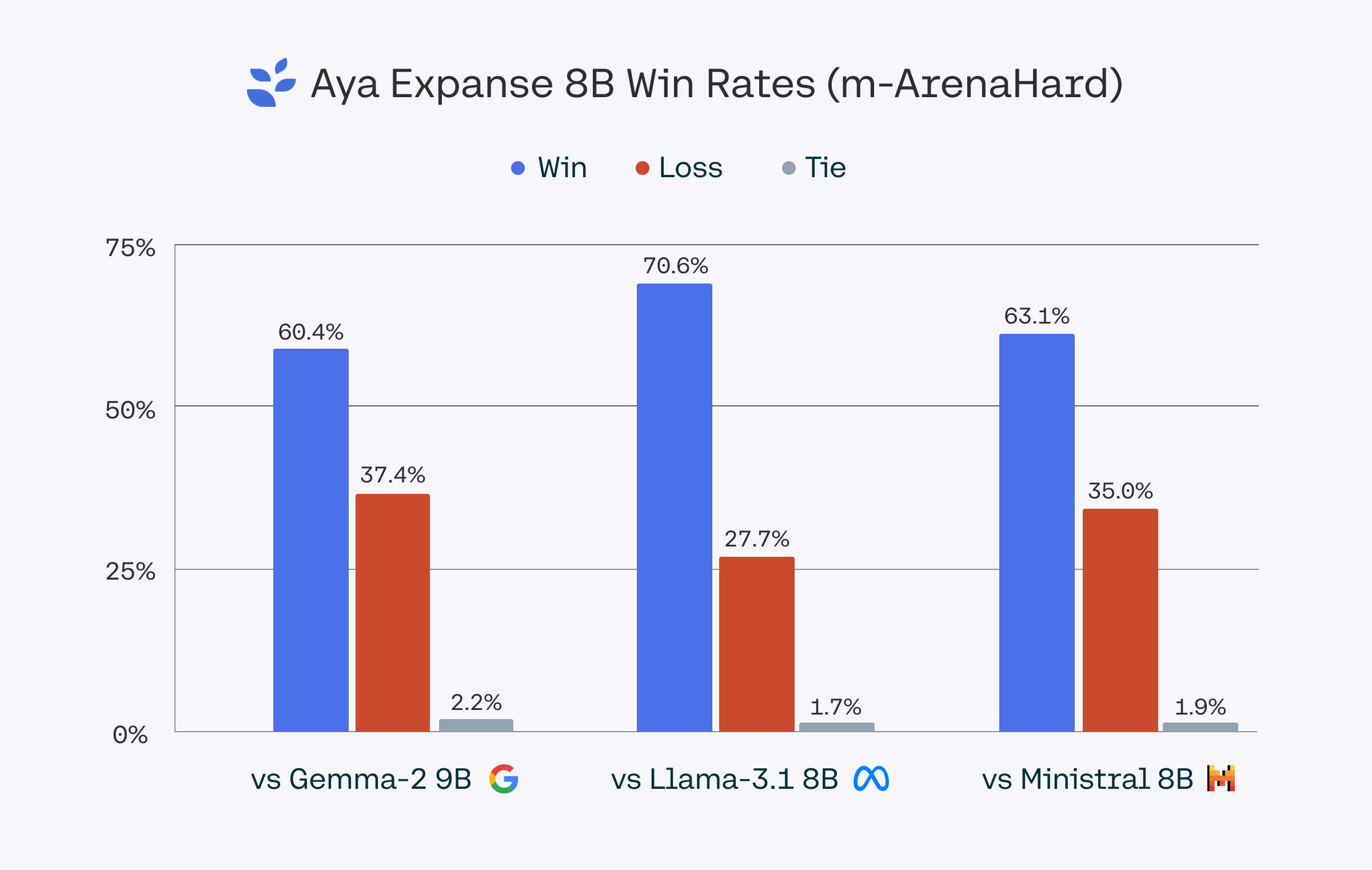

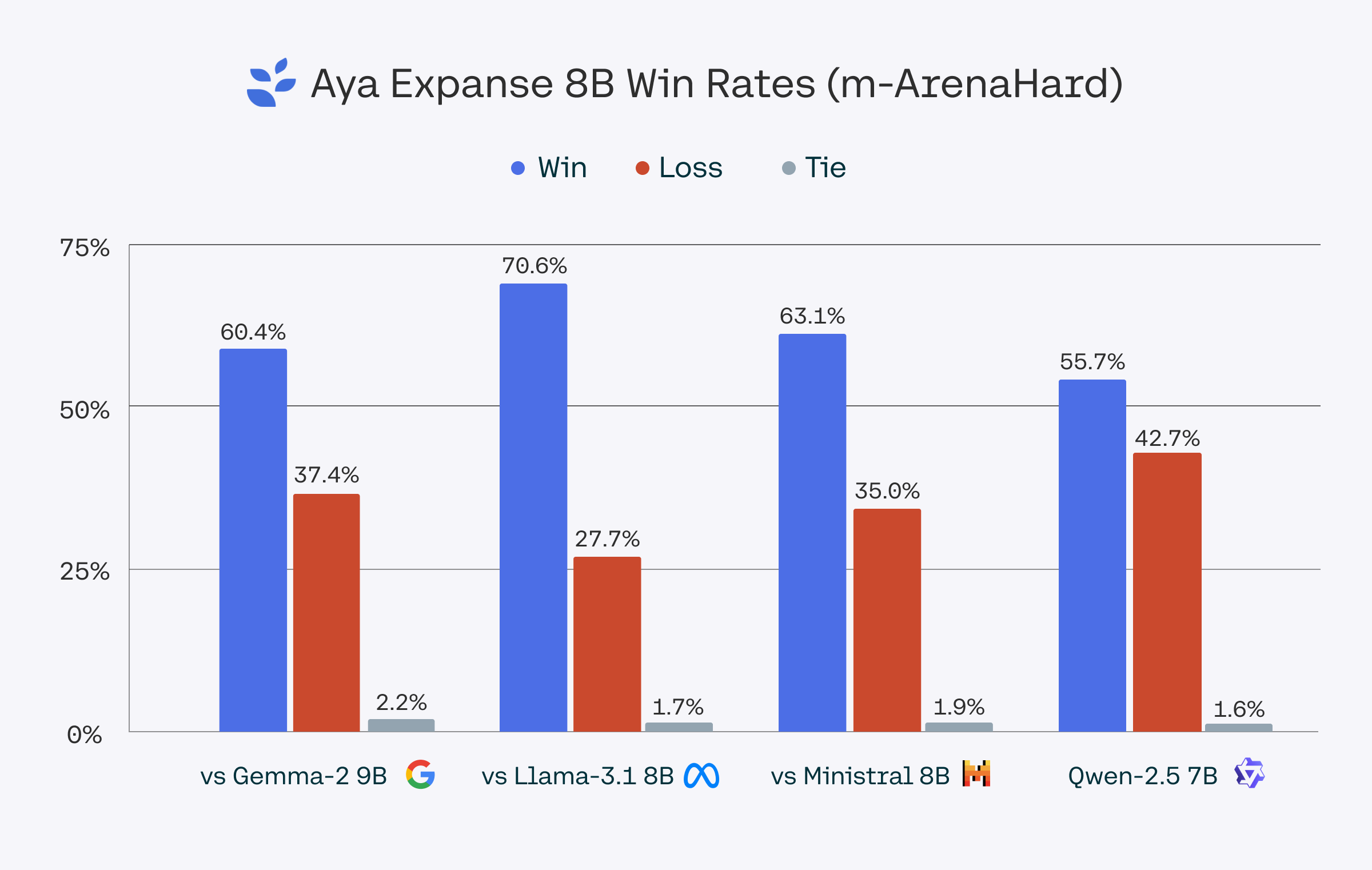

We evaluated Aya Expanse 8B against Gemma 2 9B, Llama 3.1 8B, Ministral 8B, and Qwen 2.5 7B using Dolly and m-ArenaHard, a dataset based on the [Arena-Hard-Auto dataset](https://huggingface.co/datasets/lmarena-ai/arena-hard-auto-v0.1) and translated to the 23 languages we support in Aya Expanse 8B. Win-rates were determined using gpt-4o-2024-08-06 as a judge. For a conservative benchmark, we report results from gpt-4o-2024-08-06, though gpt-4o-mini scores showed even stronger performance.
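
As a rough illustration of what a pairwise win-rate means, the sketch below turns a list of per-prompt judge verdicts into a single rate. The verdict labels, the treatment of ties (counted in the denominator), and the sample list are all assumptions made for illustration; the model card does not specify how ties were handled, and none of these values are actual evaluation results.

```python
from collections import Counter
from typing import Iterable


def win_rate(verdicts: Iterable[str]) -> float:
    """Fraction of comparisons labelled "win" among win/loss/tie verdicts.

    Assumption: ties stay in the denominator. Other conventions exist.
    """
    counts = Counter(verdicts)
    total = counts["win"] + counts["loss"] + counts["tie"]
    if total == 0:
        raise ValueError("no verdicts to aggregate")
    return counts["win"] / total


# Made-up verdicts for four prompts, purely to exercise the function.
sample_verdicts = ["win", "win", "loss", "tie"]
print(win_rate(sample_verdicts))
```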

|

| 116 |

+

|

| 117 |

+

The m-ArenaHard dataset, used to evaluate Aya Expanse’s capabilities, is publicly available [here](https://huggingface.co/datasets/CohereForAI/m-ArenaHard).

|

| 118 |

+

|

| 119 |

+

<img src="winrates_marenahard_complete.png" width="650" style="margin-left:'auto' margin-right:'auto' display:'block'"/>

|

| 120 |

+

<img src="winrates_dolly.png" width="650" style="margin-left:'auto' margin-right:'auto' display:'block'"/>

|

| 121 |

+

<img src="winrates_by_lang.png" width="650" style="margin-left:'auto' margin-right:'auto' display:'block'"/>

|

| 122 |

+

<img src="winrates_step_by_step.png" width="650" style="margin-left:'auto' margin-right:'auto' display:'block'"/>

|

| 123 |

+

|

| 124 |

+

|

| 125 |

+

### Model Card Contact

|

| 126 |

+

|

| 127 |

+

For errors or additional questions about details in this model card, contact info@for.ai.

|

| 128 |

+

|

| 129 |

+

### Terms of Use

|

| 130 |

+

|

| 131 |

+

We hope that the release of this model will make community-based research efforts more accessible, by releasing the weights of a highly performant multilingual model to researchers all over the world. This model is governed by a [CC-BY-NC](https://cohere.com/c4ai-cc-by-nc-license) License with an acceptable use addendum, and also requires adhering to [C4AI's Acceptable Use Policy](https://docs.cohere.com/docs/c4ai-acceptable-use-policy).

|

aya-expanse-8B.png

ADDED

|

config.json

ADDED

|

@@ -0,0 +1,28 @@

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "models/8b-unsharded/20241013_013504_most_profession/ckpt-1239",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"CohereForCausalLM"

|

| 5 |

+

],

|

| 6 |

+

"attention_bias": false,

|

| 7 |

+

"attention_dropout": 0.0,

|

| 8 |

+

"bos_token_id": 5,

|

| 9 |

+

"eos_token_id": 255001,

|

| 10 |

+

"hidden_act": "silu",

|

| 11 |

+

"hidden_size": 4096,

|

| 12 |

+

"initializer_range": 0.02,

|

| 13 |

+

"intermediate_size": 14336,

|

| 14 |

+

"layer_norm_eps": 1e-05,

|

| 15 |

+

"logit_scale": 0.125,

|

| 16 |

+

"max_position_embeddings": 8192,

|

| 17 |

+

"model_type": "cohere",

|

| 18 |

+

"num_attention_heads": 32,

|

| 19 |

+

"num_hidden_layers": 32,

|

| 20 |

+

"num_key_value_heads": 8,

|

| 21 |

+

"pad_token_id": 0,

|

| 22 |

+

"rope_theta": 10000,

|

| 23 |

+

"torch_dtype": "float16",

|

| 24 |

+

"transformers_version": "4.44.0",

|

| 25 |

+

"use_cache": true,

|

| 26 |

+

"use_qk_norm": false,

|

| 27 |

+

"vocab_size": 256000

|

| 28 |

+

}
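
The values in this config are enough for a back-of-the-envelope check of the model's size and of its key/value cache footprint. The sketch below is an estimate under stated assumptions, not a description of the actual implementation: it assumes the head dimension is `hidden_size / num_attention_heads`, no bias terms, one norm weight vector per layer plus one final norm, and an output projection that shares the embedding matrix.

```python
# Values copied from the config.json shown in this commit.
config = {
    "hidden_size": 4096,
    "intermediate_size": 14336,
    "num_attention_heads": 32,
    "num_hidden_layers": 32,
    "num_key_value_heads": 8,
    "vocab_size": 256000,
    "max_position_embeddings": 8192,
}
BYTES_PER_VALUE = 2  # torch_dtype is float16

h = config["hidden_size"]
layers = config["num_hidden_layers"]

# Grouped-query attention: several query heads share each key/value head.
head_dim = h // config["num_attention_heads"]  # assumed, not stated in config
queries_per_kv_head = config["num_attention_heads"] // config["num_key_value_heads"]
kv_width = config["num_key_value_heads"] * head_dim

# KV cache: one key and one value vector of width kv_width per layer per token.
kv_bytes_per_token = 2 * layers * kv_width * BYTES_PER_VALUE
kv_bytes_full_context = kv_bytes_per_token * config["max_position_embeddings"]

# Parameter estimate under the assumptions listed in the lead-in.
attn_params = 2 * h * h + 2 * h * kv_width        # q_proj, o_proj, k_proj, v_proj
mlp_params = 3 * h * config["intermediate_size"]  # gate_proj, up_proj, down_proj
per_layer = attn_params + mlp_params + h          # plus input_layernorm weight
total_params = config["vocab_size"] * h + layers * per_layer + h  # + final norm
weight_bytes = total_params * BYTES_PER_VALUE

print(head_dim, queries_per_kv_head, kv_bytes_per_token, total_params, weight_bytes)
```

Under these assumptions the estimate comes to about 8.03 billion parameters, and the resulting float16 byte count equals the `total_size` of 16056066048 recorded in `model.safetensors.index.json`, which suggests the assumptions are consistent with the checkpoint layout.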

|

generation_config.json

ADDED

|

@@ -0,0 +1,7 @@

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"bos_token_id": 5,

|

| 4 |

+

"eos_token_id": 255001,

|

| 5 |

+

"pad_token_id": 0,

|

| 6 |

+

"transformers_version": "4.44.0"

|

| 7 |

+

}

|

model-00001-of-00004.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4caed059a6c25f86bc9c576c852438b220179a410725e8c6e02a02567bb25811

|

| 3 |

+

size 4915779640

|

model-00002-of-00004.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c98d47d38a411c1f71b6ef21d85890f90f8b215df49ee11679b2f21deb411ddd

|

| 3 |

+

size 4915824616

|

model-00003-of-00004.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d879c402bed6398a700784fade48fd3fc9941d86c621caf0973fedc54b2c8400

|

| 3 |

+

size 4999719496

|

model-00004-of-00004.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d0510958ff72c271230ddc0c880c49102bcd8dedc6d00ab290cffb51efe335f3

|

| 3 |

+

size 1224771920

|

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,265 @@

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 16056066048

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"model.embed_tokens.weight": "model-00001-of-00004.safetensors",

|

| 7 |

+

"model.layers.0.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

| 8 |

+

"model.layers.0.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

| 9 |

+

"model.layers.0.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

| 10 |

+

"model.layers.0.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

| 11 |

+

"model.layers.0.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

| 12 |

+

"model.layers.0.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

| 13 |

+

"model.layers.0.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 14 |

+

"model.layers.0.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 15 |

+

"model.layers.1.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

| 16 |

+

"model.layers.1.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

| 17 |

+

"model.layers.1.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

| 18 |

+

"model.layers.1.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

| 19 |

+

"model.layers.1.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

| 20 |

+

"model.layers.1.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

| 21 |

+

"model.layers.1.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 22 |

+

"model.layers.1.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 23 |

+

"model.layers.10.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 24 |

+

"model.layers.10.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 25 |

+

"model.layers.10.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 26 |

+

"model.layers.10.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 27 |

+

"model.layers.10.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 28 |

+

"model.layers.10.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 29 |

+

"model.layers.10.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 30 |

+

"model.layers.10.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 31 |

+

"model.layers.11.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 32 |

+

"model.layers.11.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 33 |

+

"model.layers.11.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 34 |

+

"model.layers.11.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 35 |

+

"model.layers.11.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 36 |

+

"model.layers.11.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 37 |

+

"model.layers.11.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 38 |

+

"model.layers.11.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 39 |

+

"model.layers.12.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 40 |

+

"model.layers.12.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 41 |

+

"model.layers.12.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 42 |

+

"model.layers.12.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 43 |

+

"model.layers.12.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 44 |

+

"model.layers.12.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 45 |

+

"model.layers.12.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 46 |

+

"model.layers.12.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 47 |

+

"model.layers.13.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 48 |

+

"model.layers.13.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 49 |

+

"model.layers.13.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 50 |

+

"model.layers.13.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 51 |

+

"model.layers.13.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 52 |

+

"model.layers.13.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 53 |

+

"model.layers.13.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 54 |

+

"model.layers.13.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 55 |

+

"model.layers.14.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 56 |

+

"model.layers.14.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 57 |

+

"model.layers.14.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 58 |

+

"model.layers.14.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 59 |

+

"model.layers.14.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 60 |

+

"model.layers.14.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 61 |

+

"model.layers.14.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 62 |

+

"model.layers.14.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 63 |

+

"model.layers.15.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 64 |

+

"model.layers.15.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 65 |

+

"model.layers.15.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 66 |

+

"model.layers.15.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 67 |

+

"model.layers.15.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 68 |

+

"model.layers.15.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 69 |

+

"model.layers.15.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 70 |

+

"model.layers.15.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 71 |

+

"model.layers.16.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 72 |

+

"model.layers.16.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 73 |

+

"model.layers.16.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 74 |

+

"model.layers.16.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 75 |

+

"model.layers.16.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 76 |

+

"model.layers.16.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 77 |

+

"model.layers.16.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 78 |

+

"model.layers.16.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 79 |

+

"model.layers.17.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 80 |

+

"model.layers.17.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 81 |

+

"model.layers.17.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 82 |

+

"model.layers.17.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 83 |

+

"model.layers.17.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 84 |

+

"model.layers.17.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 85 |

+

"model.layers.17.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 86 |

+

"model.layers.17.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 87 |

+

"model.layers.18.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 88 |

+

"model.layers.18.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 89 |

+

"model.layers.18.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 90 |

+

"model.layers.18.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 91 |

+

"model.layers.18.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 92 |

+

"model.layers.18.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 93 |

+

"model.layers.18.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 94 |

+

"model.layers.18.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 95 |

+

"model.layers.19.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 96 |

+

"model.layers.19.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 97 |

+

"model.layers.19.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 98 |

+

"model.layers.19.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 99 |

+

"model.layers.19.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 100 |

+

"model.layers.19.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 101 |

+

"model.layers.19.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 102 |

+

"model.layers.19.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 103 |

+

"model.layers.2.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

| 104 |

+

"model.layers.2.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

| 105 |

+

"model.layers.2.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

| 106 |

+

"model.layers.2.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

| 107 |

+

"model.layers.2.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

| 108 |

+

"model.layers.2.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

| 109 |

+

"model.layers.2.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 110 |

+

"model.layers.2.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 111 |

+

"model.layers.20.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 112 |

+

"model.layers.20.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 113 |

+

"model.layers.20.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 114 |

+

"model.layers.20.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 115 |

+

"model.layers.20.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 116 |

+

"model.layers.20.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 117 |

+

"model.layers.20.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 118 |

+

"model.layers.20.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 119 |

+

"model.layers.21.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 120 |

+

"model.layers.21.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 121 |

+

"model.layers.21.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 122 |

+

"model.layers.21.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 123 |

+

"model.layers.21.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 124 |

+

"model.layers.21.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 125 |

+

"model.layers.21.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 126 |

+

"model.layers.21.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 127 |

+

"model.layers.22.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 128 |

+

"model.layers.22.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 129 |

+

"model.layers.22.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 130 |

+

"model.layers.22.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 131 |

+

"model.layers.22.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 132 |

+

"model.layers.22.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 133 |

+

"model.layers.22.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 134 |

+

"model.layers.22.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 135 |

+

"model.layers.23.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 136 |

+

"model.layers.23.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 137 |

+

"model.layers.23.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 138 |

+

"model.layers.23.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 139 |

+

"model.layers.23.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 140 |

+

"model.layers.23.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 141 |

+

"model.layers.23.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 142 |

+

"model.layers.23.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 143 |

+

"model.layers.24.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 144 |

+

"model.layers.24.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 145 |

+

"model.layers.24.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 146 |

+

"model.layers.24.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 147 |

+

"model.layers.24.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 148 |

+

"model.layers.24.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 149 |

+

"model.layers.24.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 150 |

+

"model.layers.24.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 151 |

+

"model.layers.25.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 152 |

+

"model.layers.25.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 153 |

+

"model.layers.25.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 154 |

+

"model.layers.25.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 155 |

+

"model.layers.25.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 156 |

+

"model.layers.25.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 157 |

+

"model.layers.25.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 158 |

+

"model.layers.25.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 159 |

+

"model.layers.26.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 160 |

+

"model.layers.26.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 161 |

+

"model.layers.26.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 162 |

+

"model.layers.26.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 163 |

+

"model.layers.26.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 164 |

+

"model.layers.26.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 165 |

+

"model.layers.26.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 166 |

+

"model.layers.26.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 167 |

+

"model.layers.27.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 168 |

+

"model.layers.27.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 169 |

+

"model.layers.27.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 170 |

+

"model.layers.27.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 171 |

+

"model.layers.27.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 172 |

+

"model.layers.27.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 173 |

+

"model.layers.27.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 174 |

+

"model.layers.27.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 175 |

+

"model.layers.28.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 176 |

+

"model.layers.28.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 177 |

+

"model.layers.28.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 178 |

+

"model.layers.28.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 179 |

+

"model.layers.28.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 180 |

+

"model.layers.28.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 181 |

+

"model.layers.28.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 182 |

+

"model.layers.28.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 183 |

+

"model.layers.29.input_layernorm.weight": "model-00004-of-00004.safetensors",

|

| 184 |

+

"model.layers.29.mlp.down_proj.weight": "model-00004-of-00004.safetensors",

|

| 185 |

+

"model.layers.29.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",

|

| 186 |

+

"model.layers.29.mlp.up_proj.weight": "model-00004-of-00004.safetensors",

|

| 187 |

+

"model.layers.29.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 188 |

+

"model.layers.29.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 189 |

+

"model.layers.29.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 190 |

+

"model.layers.29.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 191 |

+

"model.layers.3.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

| 192 |

+

"model.layers.3.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

| 193 |

+

"model.layers.3.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

| 194 |

+

"model.layers.3.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

| 195 |

+

"model.layers.3.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

| 196 |

+

"model.layers.3.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

| 197 |

+

"model.layers.3.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 198 |

+

"model.layers.3.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 199 |

+

"model.layers.30.input_layernorm.weight": "model-00004-of-00004.safetensors",

|

| 200 |

+

"model.layers.30.mlp.down_proj.weight": "model-00004-of-00004.safetensors",

|

| 201 |

+

"model.layers.30.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",

|

| 202 |

+

"model.layers.30.mlp.up_proj.weight": "model-00004-of-00004.safetensors",

|

| 203 |

+

"model.layers.30.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",

|

| 204 |

+

"model.layers.30.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",

|

| 205 |

+

"model.layers.30.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",

|

| 206 |

+

"model.layers.30.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",

|

| 207 |

+

"model.layers.31.input_layernorm.weight": "model-00004-of-00004.safetensors",

|

| 208 |

+

"model.layers.31.mlp.down_proj.weight": "model-00004-of-00004.safetensors",

|

| 209 |

+

"model.layers.31.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",

|

| 210 |

+

"model.layers.31.mlp.up_proj.weight": "model-00004-of-00004.safetensors",

|

| 211 |

+

"model.layers.31.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",

|

| 212 |

+

"model.layers.31.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",

|

| 213 |

+

"model.layers.31.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",

|

| 214 |

+

"model.layers.31.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",

|

| 215 |

+

"model.layers.4.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

| 216 |

+

"model.layers.4.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

| 217 |

+

"model.layers.4.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

| 218 |

+

"model.layers.4.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

| 219 |

+

"model.layers.4.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

| 220 |

+

"model.layers.4.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

| 221 |

+

"model.layers.4.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 222 |

+

"model.layers.4.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 223 |

+

"model.layers.5.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

| 224 |

+

"model.layers.5.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

| 225 |

+

"model.layers.5.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

| 226 |

+

"model.layers.5.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

| 227 |

+

"model.layers.5.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

| 228 |

+

"model.layers.5.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

| 229 |

+

"model.layers.5.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 230 |

+

"model.layers.5.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 231 |

+

"model.layers.6.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 232 |

+

"model.layers.6.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 233 |

+

"model.layers.6.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

| 234 |

+

"model.layers.6.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 235 |

+

"model.layers.6.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

| 236 |

+

"model.layers.6.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

| 237 |

+

"model.layers.6.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 238 |

+

"model.layers.6.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 239 |

+

"model.layers.7.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 240 |

+

"model.layers.7.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 241 |

+

"model.layers.7.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 242 |

+

"model.layers.7.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 243 |

+

"model.layers.7.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 244 |

+

"model.layers.7.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 245 |

+

"model.layers.7.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 246 |

+

"model.layers.7.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 247 |

+

"model.layers.8.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 248 |

+

"model.layers.8.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 249 |

+

"model.layers.8.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 250 |

+

"model.layers.8.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 251 |

+

"model.layers.8.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 252 |

+

"model.layers.8.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 253 |

+

"model.layers.8.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 254 |

+

"model.layers.8.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 255 |

+

"model.layers.9.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 256 |

+

"model.layers.9.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 257 |

+

"model.layers.9.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 258 |

+

"model.layers.9.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 259 |

+

"model.layers.9.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 260 |

+

"model.layers.9.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 261 |

+

"model.layers.9.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 262 |

+

"model.layers.9.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 263 |

+

"model.norm.weight": "model-00004-of-00004.safetensors"

|

| 264 |

+

}

|

| 265 |

+

}

|
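The shard assignments above do not always follow layer boundaries: layers 6 and 29, for example, have tensors in two different shard files. A minimal sketch of how such an index could be inspected follows. It assumes the standard sharded-checkpoint layout in which the mapping lives under a top-level `weight_map` key. The helper names `tensors_by_shard` and `layers_split_across_shards` are hypothetical, and only a handful of entries are copied from the listing.

```python
import json
from collections import defaultdict

# A few entries copied from the listing above; the real index has many more.
# Assumption: the mapping sits under a top-level "weight_map" key.
INDEX_JSON = """
{
  "weight_map": {
    "model.layers.29.mlp.up_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.29.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.6.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.norm.weight": "model-00004-of-00004.safetensors"
  }
}
"""


def tensors_by_shard(index):
    """Group tensor names by the shard file that stores them."""
    shards = defaultdict(list)
    for name, shard in index["weight_map"].items():
        shards[shard].append(name)
    return {shard: sorted(names) for shard, names in shards.items()}


def layers_split_across_shards(index):
    """Return layer numbers whose tensors live in more than one shard."""
    layer_shards = defaultdict(set)
    for name, shard in index["weight_map"].items():
        parts = name.split(".")
        # Per-layer tensors are named "model.layers.<N>.<...>".
        if len(parts) > 2 and parts[1] == "layers":
            layer_shards[int(parts[2])].add(shard)
    return sorted(n for n, files in layer_shards.items() if len(files) > 1)


index = json.loads(INDEX_JSON)
print(layers_split_across_shards(index))
```

A loader that reads one layer at a time would need to open both shard files for any layer this function reports.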

special_tokens_map.json

ADDED

|

@@ -0,0 +1,23 @@

|

| 1 |

+

{

|

| 2 |

+

"bos_token": {

|

| 3 |

+

"content": "<BOS_TOKEN>",

|

| 4 |

+

"lstrip": false,

|

| 5 |

+

"normalized": false,

|

| 6 |

+

"rstrip": false,

|

| 7 |

+

"single_word": false

|

| 8 |

+

},

|

| 9 |

+

"eos_token": {

|

| 10 |

+

"content": "<|END_OF_TURN_TOKEN|>",

|

| 11 |

+

"lstrip": false,

|

| 12 |

+

"normalized": false,

|

| 13 |

+

"rstrip": false,

|

| 14 |

+

"single_word": false

|

| 15 |

+

},

|

| 16 |

+

"pad_token": {

|

| 17 |

+

"content": "<PAD>",

|

| 18 |

+

"lstrip": false,

|

| 19 |

+

"normalized": false,

|

| 20 |

+

"rstrip": false,

|

| 21 |

+

"single_word": false

|

| 22 |

+

}

|

| 23 |

+

}

|
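Each entry in the file above wraps the token string in an object with whitespace-handling flags. As a rough sketch, the plain strings can be pulled out as shown below; the helper name `token_strings` is hypothetical, and the JSON is abbreviated to the `content` fields from the listing.

```python
import json

# Abbreviated copy of the file above: only the "content" field of each entry.
SPECIAL_TOKENS_MAP = """
{
  "bos_token": {"content": "<BOS_TOKEN>"},
  "eos_token": {"content": "<|END_OF_TURN_TOKEN|>"},
  "pad_token": {"content": "<PAD>"}
}
"""


def token_strings(special_tokens_map):
    """Map each role (bos/eos/pad) to its literal token string."""
    result = {}
    for role, entry in special_tokens_map.items():
        # Entries may be full objects (as here) or bare strings.
        result[role] = entry["content"] if isinstance(entry, dict) else entry
    return result


tokens = token_strings(json.loads(SPECIAL_TOKENS_MAP))
print(tokens["eos_token"])
```

Note that the end-of-sequence role is filled by `<|END_OF_TURN_TOKEN|>`, not by a token named `<EOS_TOKEN>`.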

tokenizer.json

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c69a7ea6c0927dfac8c349186ebcf0466a4723c21cbdb2e850cf559f0bee92b8

|

| 3 |

+

size 12777433

|
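The three lines above are a Git LFS pointer, not the tokenizer itself: the repository stores only the spec version, a SHA-256 object ID, and the byte size of the real file. A small sketch of reading such a pointer, with the hypothetical helper name `parse_lfs_pointer`, might look like this:

```python
# Pointer text copied from the diff above.
POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:c69a7ea6c0927dfac8c349186ebcf0466a4723c21cbdb2e850cf559f0bee92b8
size 12777433
"""


def parse_lfs_pointer(text):
    """Split a Git LFS pointer into its version, hash, and size fields."""
    fields = {}
    for line in text.strip().splitlines():
        # Each line is "<key> <value>", separated by a single space.
        key, _, value = line.partition(" ")
        fields[key] = value
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algorithm": algorithm,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


pointer = parse_lfs_pointer(POINTER)
print(pointer["size_bytes"])
```

The size field shows that the actual `tokenizer.json` is roughly 12.8 MB, which is why the commit adds it to `.gitattributes` as an LFS-tracked file.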

tokenizer_config.json

ADDED

|

@@ -0,0 +1,330 @@

|

| 1 |

+

{

|

| 2 |

+

"add_bos_token": true,

|

| 3 |

+

"add_eos_token": false,

|

| 4 |

+

"add_prefix_space": false,

|

| 5 |

+

"added_tokens_decoder": {

|

| 6 |

+

"0": {

|

| 7 |

+

"content": "<PAD>",

|

| 8 |

+

"lstrip": false,

|

| 9 |

+

"normalized": false,

|

| 10 |

+

"rstrip": false,

|

| 11 |

+

"single_word": false,

|

| 12 |

+

"special": true

|

| 13 |

+

},

|

| 14 |

+

"1": {

|

| 15 |

+

"content": "<UNK>",

|

| 16 |

+

"lstrip": false,

|

| 17 |

+

"normalized": false,

|

| 18 |

+

"rstrip": false,

|

| 19 |

+

"single_word": false,

|

| 20 |

+

"special": true

|

| 21 |

+

},

|

| 22 |

+

"2": {

|

| 23 |

+

"content": "<CLS>",

|

| 24 |

+

"lstrip": false,

|

| 25 |

+

"normalized": false,

|

| 26 |

+

"rstrip": false,

|

| 27 |

+

"single_word": false,

|

| 28 |

+

"special": true

|

| 29 |

+

},

|

| 30 |

+

"3": {

|

| 31 |

+

"content": "<SEP>",

|

| 32 |

+

"lstrip": false,

|

| 33 |

+

"normalized": false,

|

| 34 |

+

"rstrip": false,

|

| 35 |

+

"single_word": false,

|

| 36 |

+

"special": true

|

| 37 |

+

},

|

| 38 |

+

"4": {

|

| 39 |

+

"content": "<MASK_TOKEN>",

|

| 40 |

+

"lstrip": false,

|

| 41 |

+

"normalized": false,

|

| 42 |

+

"rstrip": false,

|

| 43 |

+

"single_word": false,

|

| 44 |

+

"special": true

|

| 45 |

+

},

|

| 46 |

+

"5": {

|

| 47 |

+

"content": "<BOS_TOKEN>",

|

| 48 |

+

"lstrip": false,

|

| 49 |

+

"normalized": false,

|

| 50 |

+

"rstrip": false,

|

| 51 |

+

"single_word": false,

|

| 52 |

+

"special": true

|

| 53 |

+

},

|

| 54 |

+

"6": {

|

| 55 |

+

"content": "<EOS_TOKEN>",

|

| 56 |

+

"lstrip": false,

|

| 57 |

+

"normalized": false,

|

| 58 |

+

"rstrip": false,

|

| 59 |

+

"single_word": false,

|

| 60 |

+

"special": true

|

| 61 |

+

},

|

| 62 |

+

"7": {

|

| 63 |

+

"content": "<EOP_TOKEN>",

|

| 64 |

+

"lstrip": false,

|

| 65 |

+

"normalized": false,

|

| 66 |

+

"rstrip": false,

|

| 67 |

+

"single_word": false,

|

| 68 |

+

"special": true

|

| 69 |

+

},

|

| 70 |

+

"255000": {

|

| 71 |

+

"content": "<|START_OF_TURN_TOKEN|>",

|

| 72 |

+

"lstrip": false,

|

| 73 |

+

"normalized": false,

|

| 74 |

+

"rstrip": false,

|

| 75 |

+

"single_word": false,

|

| 76 |

+

"special": false

|

| 77 |

+

},

|

| 78 |

+

"255001": {

|

| 79 |

+

"content": "<|END_OF_TURN_TOKEN|>",

|

| 80 |

+

"lstrip": false,

|

| 81 |

+

"normalized": false,

|

| 82 |

+

"rstrip": false,

|

| 83 |

+

"single_word": false,

|

| 84 |

+

"special": true

|

| 85 |

+

},

|

| 86 |

+

"255002": {

|

| 87 |

+

"content": "<|YES_TOKEN|>",

|

| 88 |

+

"lstrip": false,

|

| 89 |

+

"normalized": false,

|

| 90 |

+

"rstrip": false,

|

| 91 |

+

"single_word": false,

|

| 92 |

+

"special": false

|

| 93 |

+

},

|

| 94 |

+

"255003": {

|

| 95 |

+

"content": "<|NO_TOKEN|>",

|

| 96 |

+

"lstrip": false,

|

| 97 |

+

"normalized": false,

|

| 98 |

+

"rstrip": false,

|

| 99 |

+

"single_word": false,

|

| 100 |

+

"special": false

|

| 101 |

+

},

|

| 102 |

+

"255004": {

|

| 103 |

+

"content": "<|GOOD_TOKEN|>",

|

| 104 |

+

"lstrip": false,

|

| 105 |

+

"normalized": false,

|

| 106 |

+

"rstrip": false,

|

| 107 |

+

"single_word": false,

|

| 108 |

+

"special": false

|

| 109 |

+

},

|

| 110 |

+

"255005": {

|

| 111 |

+

"content": "<|BAD_TOKEN|>",

|

| 112 |

+

"lstrip": false,

|

| 113 |

+

"normalized": false,

|

| 114 |

+

"rstrip": false,

|

| 115 |

+

"single_word": false,

|

| 116 |

+

"special": false

|

| 117 |

+

},

|

| 118 |

+

"255006": {

|

| 119 |

+

"content": "<|USER_TOKEN|>",

|

| 120 |

+

"lstrip": false,

|

| 121 |

+

"normalized": false,

|

| 122 |

+

"rstrip": false,

|

| 123 |

+

"single_word": false,

|

| 124 |

+

"special": false

|

| 125 |

+

},

|

| 126 |

+

"255007": {

|

| 127 |

+

"content": "<|CHATBOT_TOKEN|>",

|

| 128 |

+

"lstrip": false,

|

| 129 |

+

"normalized": false,

|

| 130 |

+

"rstrip": false,

|

| 131 |

+

"single_word": false,

|

| 132 |

+

"special": false

|

| 133 |

+

},

|

| 134 |

+

"255008": {

|

| 135 |

+

"content": "<|SYSTEM_TOKEN|>",

|

| 136 |

+

"lstrip": false,

|

| 137 |

+

"normalized": false,

|

| 138 |

+

"rstrip": false,

|

| 139 |

+

"single_word": false,

|

| 140 |

+

"special": false

|

| 141 |

+

},

|

| 142 |

+

"255009": {

|

| 143 |

+

"content": "<|USER_0_TOKEN|>",

|

| 144 |

+

"lstrip": false,

|

| 145 |

+

"normalized": false,

|

| 146 |

+

"rstrip": false,

|

| 147 |

+

"single_word": false,

|

| 148 |

+

"special": false

|

| 149 |

+

},

|

| 150 |

+

"255010": {

|

| 151 |

+

"content": "<|USER_1_TOKEN|>",

|

| 152 |

+

"lstrip": false,

|

| 153 |

+

"normalized": false,

|

| 154 |

+

"rstrip": false,

|

| 155 |

+

"single_word": false,

|

| 156 |

+

"special": false

|

| 157 |

+

},

|

| 158 |

+

"255011": {

|

| 159 |

+

"content": "<|USER_2_TOKEN|>",

|

| 160 |

+

"lstrip": false,

|

| 161 |

+

"normalized": false,

|

| 162 |

+

"rstrip": false,

|

| 163 |

+

"single_word": false,

|

| 164 |

+

"special": false

|

| 165 |

+

},

|

| 166 |

+

"255012": {

|

| 167 |

+

"content": "<|USER_3_TOKEN|>",

|

| 168 |

+

"lstrip": false,

|

| 169 |

+

"normalized": false,

|

| 170 |

+

"rstrip": false,

|

| 171 |

+

"single_word": false,

|

| 172 |

+

"special": false

|

| 173 |

+

},

|

| 174 |

+

"255013": {

|

| 175 |

+

"content": "<|USER_4_TOKEN|>",

|

| 176 |

+

"lstrip": false,

|

| 177 |

+

"normalized": false,

|

| 178 |

+

"rstrip": false,

|

| 179 |

+

"single_word": false,

|

| 180 |

+

"special": false

|

| 181 |