---
license: apache-2.0
datasets:
- CaptionEmporium/coyo-hd-11m-llavanext
- CortexLM/midjourney-v6
language:
- en
base_model:
- black-forest-labs/FLUX.1-dev
pipeline_tag: image-to-image
library_name: diffusers
---

This repository provides an IP-Adapter checkpoint for the FLUX.1-dev model by Black Forest Labs.

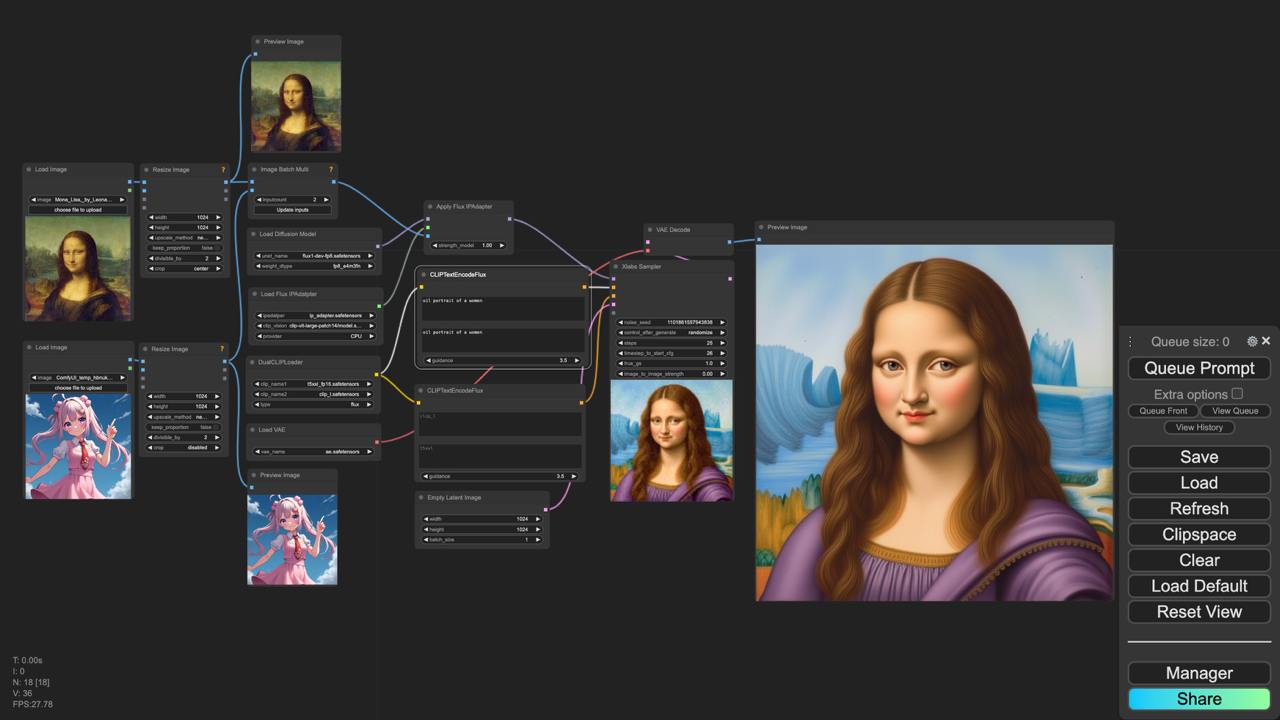

See our GitHub for ComfyUI workflows.

Models

The IP-Adapter was trained at 512x512 resolution for 150k steps and then at 1024x1024 for 350k steps, preserving the aspect ratio of the training images. We release a v2 version, which can be used directly in ComfyUI!
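The training schedule above resizes images to a target resolution while keeping their aspect ratio. The sketch below shows one common way to do that: match the target pixel area (512² or 1024²) and snap each side to a multiple of 16. The area-matching rule and the multiple of 16 (8x VAE downsampling times 2x2 patching in FLUX-style models) are assumptions for illustration, and `aspect_preserving_size` is a hypothetical helper, not the released training code.

```python
import math


def snap(x: float, multiple: int) -> int:
    # Round to the nearest multiple, never going below one multiple.
    return max(multiple, int(round(x / multiple)) * multiple)


def aspect_preserving_size(width: int, height: int, target: int, multiple: int = 16) -> tuple[int, int]:
    """Return (w, h) covering about target*target pixels with the input aspect ratio.

    Hypothetical bucketing rule; assumes sides must be multiples of 16.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    aspect = width / height
    area = target * target
    # Solve w * h = area subject to w / h = aspect.
    new_w = math.sqrt(area * aspect)
    new_h = new_w / aspect
    return snap(new_w, multiple), snap(new_h, multiple)


# Stage 1: 512x512 for 150k steps; stage 2: 1024x1024 for 350k steps.
for stage_res in (512, 1024):
    print(stage_res, aspect_preserving_size(1600, 900, stage_res))
```

Snapping to the nearest multiple keeps the pixel count close to the target, at the cost of a small aspect-ratio error.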

Please see our ComfyUI custom nodes installation guide.

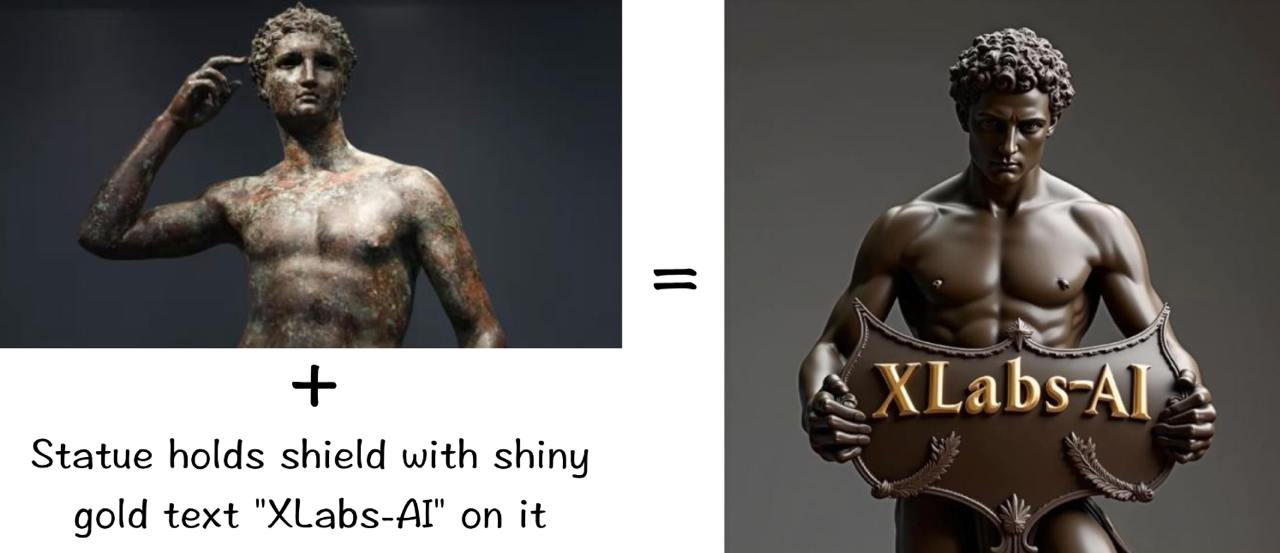

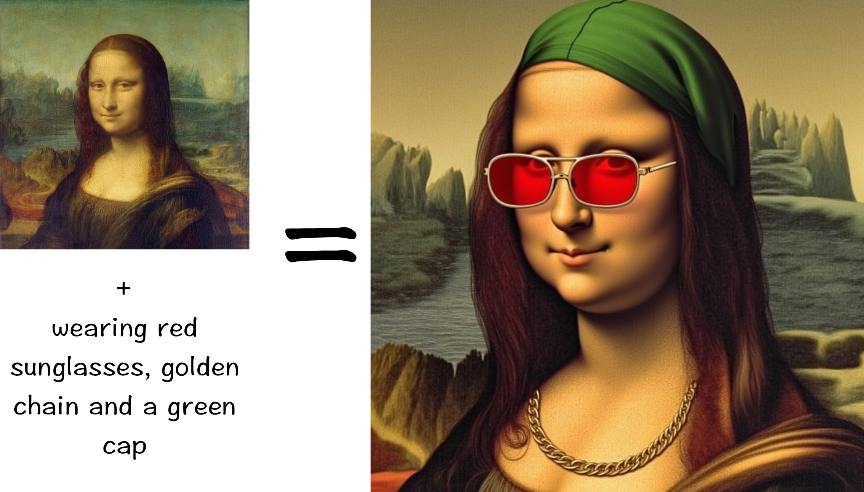

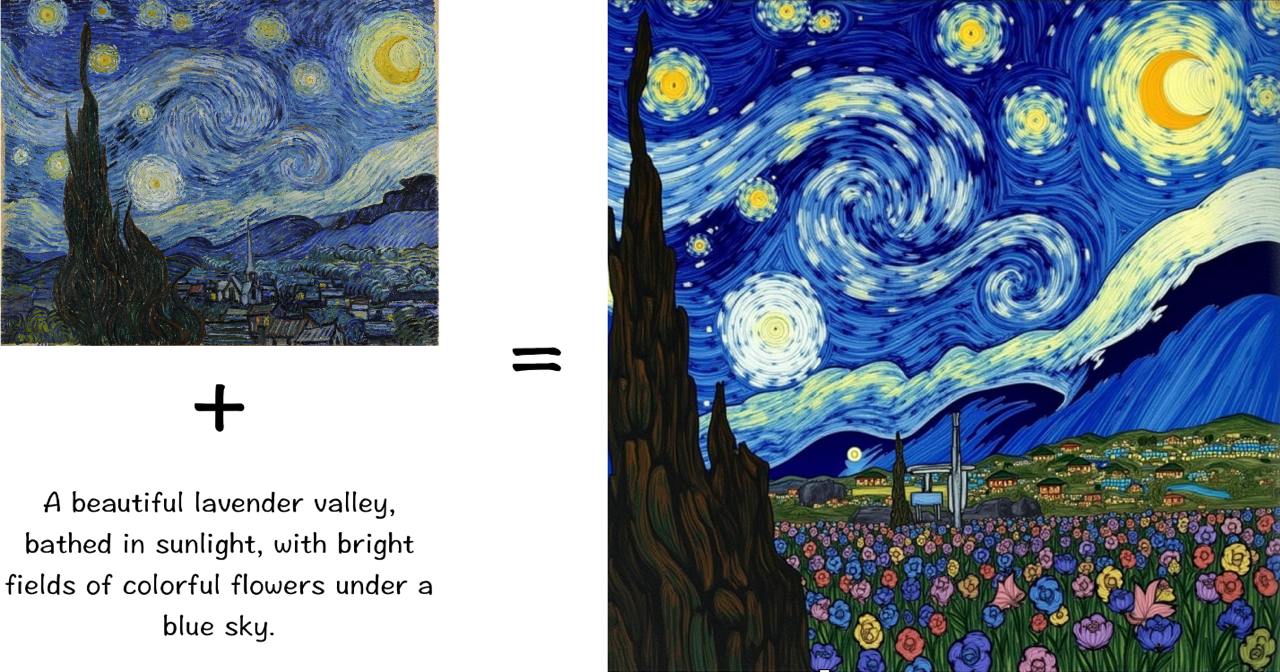

Examples

See example results from our models below.

Some generation results, together with their input images, are also provided under "Files and versions".

Inference

To try our models, you have two options:

- Use `main.py` from our official repo

- Use our custom nodes for ComfyUI and test them with the provided workflows (see the `/workflows` folder)

Instructions for ComfyUI

- Go to `ComfyUI/custom_nodes`.

- Clone x-flux-comfyui. The path should be `ComfyUI/custom_nodes/x-flux-comfyui/*`, where `*` stands for all the files in the cloned repo.

- Go to `ComfyUI/custom_nodes/x-flux-comfyui/` and run `python setup.py`.

- Update x-flux-comfyui with `git pull` or reinstall it.

- Download Clip-L `model.safetensors` from OpenAI ViT CLIP large, and put it in `ComfyUI/models/clip_vision/*`.

- Download our IPAdapter from Hugging Face, and put it in `ComfyUI/models/xlabs/ipadapters/*`.

- Use the `Flux Load IPAdapter` and `Apply Flux IPAdapter` nodes, choose the right CLIP model, and enjoy your generations.

- You can find an example workflow in the `workflows` folder of this repo.
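The installation steps place files in three folders under the ComfyUI root. As a quick sanity check, the small script below reports whether each of those folders exists and is non-empty. It is a hypothetical helper written for this card, not part of x-flux-comfyui; it only checks the relative paths listed in the steps and assumes you pass it the path of your ComfyUI root.

```python
from __future__ import annotations

from pathlib import Path

# Relative locations taken from the installation steps (hypothetical checker).
EXPECTED_DIRS = {
    "custom nodes": Path("custom_nodes") / "x-flux-comfyui",
    "CLIP vision model": Path("models") / "clip_vision",
    "IP-Adapter weights": Path("models") / "xlabs" / "ipadapters",
}


def check_comfyui_layout(comfy_root: str | Path) -> dict[str, bool]:
    """Map each expected folder to True if it exists and contains at least one entry."""
    root = Path(comfy_root)
    status = {}
    for label, rel in EXPECTED_DIRS.items():
        folder = root / rel
        # is_dir() short-circuits, so iterdir() is only called on real directories.
        status[label] = folder.is_dir() and any(folder.iterdir())
    return status


# Usage: point it at your ComfyUI installation.
for label, ok in check_comfyui_layout("ComfyUI").items():
    print("OK     " if ok else "MISSING", label)
```

A non-empty folder does not prove the right files are present; it only catches the common mistake of placing weights in the wrong directory.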

If you get bad results, try adjusting the IP strength.
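To build intuition for what the IP strength controls, here is a toy NumPy sketch of the decoupled cross-attention idea from the original IP-Adapter design: the text-conditioned attention output is kept as-is, and a separate image-conditioned attention output is added on top, multiplied by a strength factor. It assumes the strength in the ComfyUI nodes acts as such a multiplier; FLUX itself uses joint text-image attention blocks, so this is an illustration of the concept, not this repository's implementation. `ip_adapter_attention` and `ip_strength` are hypothetical names.

```python
import numpy as np


def softmax(x: np.ndarray) -> np.ndarray:
    # Numerically stable softmax over the last axis.
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)


def attention(q: np.ndarray, k: np.ndarray, v: np.ndarray) -> np.ndarray:
    # Scaled dot-product attention: softmax(q k^T / sqrt(d)) v.
    d = q.shape[-1]
    return softmax(q @ k.T / np.sqrt(d)) @ v


def ip_adapter_attention(q, k_txt, v_txt, k_img, v_img, ip_strength=1.0):
    # Text branch is unchanged; the image branch is added, scaled by ip_strength.
    return attention(q, k_txt, v_txt) + ip_strength * attention(q, k_img, v_img)


rng = np.random.default_rng(0)
q = rng.normal(size=(4, 8))                # 4 latent tokens, head dim 8
k_txt, v_txt = rng.normal(size=(2, 6, 8))  # 6 text tokens
k_img, v_img = rng.normal(size=(2, 3, 8))  # 3 image-prompt tokens
base = attention(q, k_txt, v_txt)
for s in (0.0, 0.5, 1.0):
    out = ip_adapter_attention(q, k_txt, v_txt, k_img, v_img, ip_strength=s)
    # Distance from the text-only output grows linearly with the strength.
    print(s, float(np.linalg.norm(out - base)))
```

Under this model, a strength of 0 ignores the reference image entirely and larger values pull the result further toward it, which is why sweeping the strength is a reasonable first step when results look off.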

Limitations

The IP Adapter is currently in beta.

We do not guarantee good results on the first try; it may take several attempts to get a good result.

License

Our weights fall under the FLUX.1 [dev] Non-Commercial License.