Initial GPTQ model commit

README.md

ADDED

|

@@ -0,0 +1,388 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

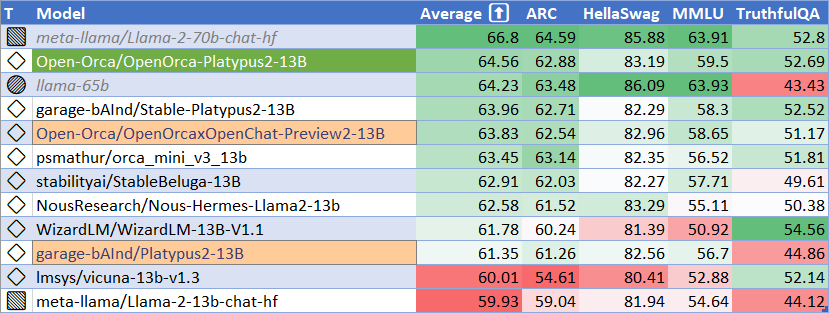

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

datasets:

|

| 3 |

+

- garage-bAInd/Open-Platypus

|

| 4 |

+

inference: false

|

| 5 |

+

language:

|

| 6 |

+

- en

|

| 7 |

+

license: other

|

| 8 |

+

model_creator: Open-Orca

|

| 9 |

+

model_link: https://huggingface.co/Open-Orca/OpenOrca-Platypus2-13B

|

| 10 |

+

model_name: OpenOrca Platypus2 13B

|

| 11 |

+

model_type: llama

|

| 12 |

+

quantized_by: TheBloke

|

| 13 |

+

---

|

| 14 |

+

|

| 15 |

+

<!-- header start -->

|

| 16 |

+

<div style="width: 100%;">

|

| 17 |

+

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

|

| 18 |

+

</div>

|

| 19 |

+

<div style="display: flex; justify-content: space-between; width: 100%;">

|

| 20 |

+

<div style="display: flex; flex-direction: column; align-items: flex-start;">

|

| 21 |

+

<p><a href="https://discord.gg/theblokeai">Chat & support: my new Discord server</a></p>

|

| 22 |

+

</div>

|

| 23 |

+

<div style="display: flex; flex-direction: column; align-items: flex-end;">

|

| 24 |

+

<p><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

|

| 25 |

+

</div>

|

| 26 |

+

</div>

|

| 27 |

+

<!-- header end -->

|

| 28 |

+

|

| 29 |

+

# OpenOrca Platypus2 13B - GPTQ

|

| 30 |

+

- Model creator: [Open-Orca](https://huggingface.co/Open-Orca)

|

| 31 |

+

- Original model: [OpenOrca Platypus2 13B](https://huggingface.co/Open-Orca/OpenOrca-Platypus2-13B)

|

| 32 |

+

|

| 33 |

+

## Description

|

| 34 |

+

|

| 35 |

+

This repo contains GPTQ model files for [Open-Orca's OpenOrca Platypus2 13B](https://huggingface.co/Open-Orca/OpenOrca-Platypus2-13B).

|

| 36 |

+

|

| 37 |

+

Multiple GPTQ parameter permutations are provided; see Provided Files below for details of the options provided, their parameters, and the software used to create them.

|

| 38 |

+

|

| 39 |

+

## Repositories available

|

| 40 |

+

|

| 41 |

+

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/OpenOrca-Platypus2-13B-GPTQ)

|

| 42 |

+

* [2, 3, 4, 5, 6 and 8-bit GGML models for CPU+GPU inference](https://huggingface.co/TheBloke/OpenOrca-Platypus2-13B-GGML)

|

| 43 |

+

* [Open-Orca's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/Open-Orca/OpenOrca-Platypus2-13B)

|

| 44 |

+

|

| 45 |

+

## Prompt template: Alpaca-InstructOnly

|

| 46 |

+

|

| 47 |

+

```
### Instruction:

{prompt}

### Response:
```
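The template can be filled in programmatically. The following is a minimal sketch, not code from the model authors; the constant `ALPACA_INSTRUCT_ONLY` and the helper `build_prompt` are hypothetical names introduced only for illustration.

```python
# Alpaca-InstructOnly template as shown above; {prompt} is the only placeholder.
ALPACA_INSTRUCT_ONLY = "### Instruction:\n\n{prompt}\n\n### Response:\n"


def build_prompt(user_prompt: str) -> str:
    """Wrap a user request in the Alpaca-InstructOnly template (hypothetical helper)."""
    # Surrounding whitespace is stripped so the blank lines of the template stay intact.
    return ALPACA_INSTRUCT_ONLY.format(prompt=user_prompt.strip())


if __name__ == "__main__":
    print(build_prompt("Tell me about AI"))
```

Formatting the template (rather than the user text) means braces inside the user's request are passed through unchanged.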

|

| 54 |

+

|

| 55 |

+

## Provided files and GPTQ parameters

|

| 56 |

+

|

| 57 |

+

Multiple quantisation parameters are provided, to allow you to choose the best one for your hardware and requirements.

|

| 58 |

+

|

| 59 |

+

Each separate quant is in a different branch. See below for instructions on fetching from different branches.

|

| 60 |

+

|

| 61 |

+

All GPTQ files are made with AutoGPTQ.

|

| 62 |

+

|

| 63 |

+

<details>

|

| 64 |

+

<summary>Explanation of GPTQ parameters</summary>

|

| 65 |

+

|

| 66 |

+

- Bits: The bit size of the quantised model.

|

| 67 |

+

- GS: GPTQ group size. Higher numbers use less VRAM, but have lower quantisation accuracy. "None" means no grouping is used, which gives the lowest VRAM usage.

|

| 68 |

+

- Act Order: True or False. Also known as `desc_act`. True results in better quantisation accuracy. Some GPTQ clients have issues with models that use Act Order plus Group Size.

|

| 69 |

+

- Damp %: A GPTQ parameter that affects how samples are processed for quantisation. 0.01 is default, but 0.1 results in slightly better accuracy.

|

| 70 |

+

- GPTQ dataset: The dataset used for quantisation. Using a dataset more appropriate to the model's training can improve quantisation accuracy. Note that the GPTQ dataset is not the same as the dataset used to train the model - please refer to the original model repo for details of the training dataset(s).

|

| 71 |

+

- Sequence Length: The length of the dataset sequences used for quantisation. Ideally this is the same as the model sequence length. For some very long sequence models (16+K), a lower sequence length may have to be used. Note that a lower sequence length does not limit the sequence length of the quantised model. It only impacts the quantisation accuracy on longer inference sequences.

|

| 72 |

+

- ExLlama Compatibility: Whether this file can be loaded with ExLlama, which currently only supports Llama models in 4-bit.

|

| 73 |

+

|

| 74 |

+

</details>

|

| 75 |

+

|

| 76 |

+

| Branch | Bits | GS | Act Order | Damp % | GPTQ Dataset | Seq Len | Size | ExLlama | Desc |
| ------ | ---- | -- | --------- | ------ | ------------ | ------- | ---- | ------- | ---- |
| [main](https://huggingface.co/TheBloke/OpenOrca-Platypus2-13B-GPTQ/tree/main) | 4 | 128 | No | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 7.26 GB | Yes | Most compatible option. Good inference speed in AutoGPTQ and GPTQ-for-LLaMa. Lower inference quality than other options. |
| [gptq-4bit-32g-actorder_True](https://huggingface.co/TheBloke/OpenOrca-Platypus2-13B-GPTQ/tree/gptq-4bit-32g-actorder_True) | 4 | 32 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 8.00 GB | Yes | 4-bit, with Act Order and group size 32g. Gives highest possible inference quality, with maximum VRAM usage. Poor AutoGPTQ CUDA speed. |
| [gptq-4bit-64g-actorder_True](https://huggingface.co/TheBloke/OpenOrca-Platypus2-13B-GPTQ/tree/gptq-4bit-64g-actorder_True) | 4 | 64 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 7.51 GB | Yes | 4-bit, with Act Order and group size 64g. Uses less VRAM than 32g, but with slightly lower accuracy. Poor AutoGPTQ CUDA speed. |
| [gptq-4bit-128g-actorder_True](https://huggingface.co/TheBloke/OpenOrca-Platypus2-13B-GPTQ/tree/gptq-4bit-128g-actorder_True) | 4 | 128 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 7.26 GB | Yes | 4-bit, with Act Order and group size 128g. Uses even less VRAM than 64g, but with slightly lower accuracy. Poor AutoGPTQ CUDA speed. |
| [gptq-8bit--1g-actorder_True](https://huggingface.co/TheBloke/OpenOrca-Platypus2-13B-GPTQ/tree/gptq-8bit--1g-actorder_True) | 8 | None | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 13.36 GB | No | 8-bit, with Act Order. No group size, to lower VRAM requirements and to improve AutoGPTQ speed. |
| [gptq-8bit-128g-actorder_True](https://huggingface.co/TheBloke/OpenOrca-Platypus2-13B-GPTQ/tree/gptq-8bit-128g-actorder_True) | 8 | 128 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 13.65 GB | No | 8-bit, with group size 128g for higher inference quality and with Act Order for even higher accuracy. Poor AutoGPTQ CUDA speed. |
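To make the group-size trade-off in the table more concrete, here is a rough back-of-the-envelope sketch of storage cost per weight. It rests on an assumption that is not stated in this README: every group stores one 16-bit scale plus one zero point as wide as the quantised weights. `approx_bits_per_weight` is a hypothetical helper, not an AutoGPTQ function, and it ignores unquantised layers such as embeddings.

```python
from typing import Optional


def approx_bits_per_weight(bits: int, group_size: Optional[int],
                           scale_bits: int = 16) -> float:
    """Estimate the average storage cost of one quantised weight, in bits.

    Assumption (not from the README): each group carries one scale of
    `scale_bits` bits and one zero point of `bits` bits. With no grouping
    ("None" in the table) the per-group overhead is treated as negligible.
    """
    if group_size is None:
        return float(bits)
    return bits + (scale_bits + bits) / group_size


if __name__ == "__main__":
    # (bits, group size) pairs taken from the table above.
    for bits, gs in [(4, 32), (4, 64), (4, 128), (8, None), (8, 128)]:
        print(f"{bits}-bit, GS={gs}: ~{approx_bits_per_weight(bits, gs):.3f} bits/weight")
```

Under this assumption, smaller groups cost more bits per weight, which is in line with the ordering of file sizes listed in the table.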

|

| 84 |

+

|

| 85 |

+

## How to download from branches

|

| 86 |

+

|

| 87 |

+

- In text-generation-webui, you can add `:branch` to the end of the download name, e.g. `TheBloke/OpenOrca-Platypus2-13B-GPTQ:gptq-4bit-32g-actorder_True`

|

| 88 |

+

- With Git, you can clone a branch with:

|

| 89 |

+

```
git clone --single-branch --branch gptq-4bit-32g-actorder_True https://huggingface.co/TheBloke/OpenOrca-Platypus2-13B-GPTQ
```

|

| 92 |

+

- In Python Transformers code, the branch is the `revision` parameter; see below.
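A branch can also be fetched from a plain Python script. The sketch below is an illustration, not part of the original instructions: it assumes the `huggingface_hub` package is installed and uses its `snapshot_download` function, whose `revision` argument accepts a branch name. The helpers `split_repo_and_branch` and `download_branch` are hypothetical names; the first one simply parses the `repo:branch` form used by text-generation-webui.

```python
from typing import Tuple


def split_repo_and_branch(spec: str, default_branch: str = "main") -> Tuple[str, str]:
    """Split 'owner/repo:branch' into (repo_id, branch); fall back to the default branch."""
    repo_id, _, branch = spec.partition(":")
    return repo_id, branch or default_branch


def download_branch(spec: str) -> str:
    """Download every file of the requested branch and return the local folder path."""
    # Imported lazily so the parsing helper works without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    repo_id, revision = split_repo_and_branch(spec)
    return snapshot_download(repo_id=repo_id, revision=revision)


if __name__ == "__main__":
    spec = "TheBloke/OpenOrca-Platypus2-13B-GPTQ:gptq-4bit-32g-actorder_True"
    print(split_repo_and_branch(spec))
    # Uncomment to download several GB of model files:
    # print(download_branch(spec))
```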

|

| 93 |

+

|

| 94 |

+

## How to easily download and use this model in [text-generation-webui](https://github.com/oobabooga/text-generation-webui).

|

| 95 |

+

|

| 96 |

+

Please make sure you're using the latest version of [text-generation-webui](https://github.com/oobabooga/text-generation-webui).

|

| 97 |

+

|

| 98 |

+

It is strongly recommended to use the text-generation-webui one-click-installers unless you know how to make a manual install.

|

| 99 |

+

|

| 100 |

+

1. Click the **Model tab**.

|

| 101 |

+

2. Under **Download custom model or LoRA**, enter `TheBloke/OpenOrca-Platypus2-13B-GPTQ`.

|

| 102 |

+

- To download from a specific branch, enter for example `TheBloke/OpenOrca-Platypus2-13B-GPTQ:gptq-4bit-32g-actorder_True`

|

| 103 |

+

- see Provided Files above for the list of branches for each option.

|

| 104 |

+

3. Click **Download**.

|

| 105 |

+

4. The model will start downloading. Once it's finished it will say "Done"

|

| 106 |

+

5. In the top left, click the refresh icon next to **Model**.

|

| 107 |

+

6. In the **Model** dropdown, choose the model you just downloaded: `OpenOrca-Platypus2-13B-GPTQ`

|

| 108 |

+

7. The model will automatically load, and is now ready for use!

|

| 109 |

+

8. If you want any custom settings, set them and then click **Save settings for this model** followed by **Reload the Model** in the top right.

|

| 110 |

+

* Note that you do not need to set GPTQ parameters any more. These are set automatically from the file `quantize_config.json`.

|

| 111 |

+

9. Once you're ready, click the **Text Generation tab** and enter a prompt to get started!

|

| 112 |

+

|

| 113 |

+

## How to use this GPTQ model from Python code

|

| 114 |

+

|

| 115 |

+

First make sure you have [AutoGPTQ](https://github.com/PanQiWei/AutoGPTQ) 0.3.1 or later installed:

|

| 116 |

+

|

| 117 |

+

```
pip3 install auto-gptq
```

|

| 120 |

+

|

| 121 |

+

If you have problems installing AutoGPTQ, please build from source instead:

|

| 122 |

+

```
pip3 uninstall -y auto-gptq
git clone https://github.com/PanQiWei/AutoGPTQ
cd AutoGPTQ
pip3 install .
```

|

| 128 |

+

|

| 129 |

+

Then try the following example code:

|

| 130 |

+

|

| 131 |

+

```python
from transformers import AutoTokenizer, pipeline, logging
from auto_gptq import AutoGPTQForCausalLM

model_name_or_path = "TheBloke/OpenOrca-Platypus2-13B-GPTQ"

use_triton = False

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True)

# quantize_config=None: the GPTQ parameters are read from quantize_config.json in the repo
model = AutoGPTQForCausalLM.from_quantized(model_name_or_path,
        use_safetensors=True,
        trust_remote_code=False,
        device="cuda:0",
        use_triton=use_triton,
        quantize_config=None)

"""
# To download from a specific branch, use the revision parameter, as in this example:
# Note that `revision` requires AutoGPTQ 0.3.1 or later!

model = AutoGPTQForCausalLM.from_quantized(model_name_or_path,
        revision="gptq-4bit-32g-actorder_True",
        use_safetensors=True,
        trust_remote_code=False,
        device="cuda:0",
        quantize_config=None)
"""

prompt = "Tell me about AI"
prompt_template = f'''### Instruction:

{prompt}

### Response:
'''

print("\n\n*** Generate:")

input_ids = tokenizer(prompt_template, return_tensors='pt').input_ids.cuda()
# do_sample=True is required for temperature to have any effect
output = model.generate(inputs=input_ids, do_sample=True, temperature=0.7, max_new_tokens=512)
print(tokenizer.decode(output[0]))

# Inference can also be done using transformers' pipeline

# Prevent printing spurious transformers error when using pipeline with AutoGPTQ
logging.set_verbosity(logging.CRITICAL)

print("*** Pipeline:")
pipe = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
    max_new_tokens=512,
    do_sample=True,
    temperature=0.7,
    top_p=0.95,
    repetition_penalty=1.15
)

print(pipe(prompt_template)[0]['generated_text'])
```
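The decoded output of `model.generate` contains the prompt as well as the completion, so callers often want only the text after `### Response:`. The following is a minimal sketch of one way to do that. `extract_response` is a hypothetical helper, and the default `</s>` end-of-sequence string is an assumption based on the Llama tokenizer.

```python
RESPONSE_MARKER = "### Response:"


def extract_response(decoded: str, eos_token: str = "</s>") -> str:
    """Return only the model's answer from a decoded prompt-plus-completion string."""
    # Keep what follows the first marker; if the marker is missing, keep everything.
    _, found, tail = decoded.partition(RESPONSE_MARKER)
    text = tail if found else decoded
    # Drop the end-of-sequence token (assumed to be "</s>") and surrounding whitespace.
    return text.replace(eos_token, "").strip()


if __name__ == "__main__":
    sample = "<s> ### Instruction:\n\nTell me about AI\n\n### Response:\nAI is a broad field.</s>"
    print(extract_response(sample))
```

Passing `skip_special_tokens=True` to `tokenizer.decode` is an alternative way to drop the special tokens before splitting.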

|

| 192 |

+

|

| 193 |

+

## Compatibility

|

| 194 |

+

|

| 195 |

+

The files provided will work with AutoGPTQ (CUDA and Triton modes), GPTQ-for-LLaMa (only CUDA has been tested), and Occ4m's GPTQ-for-LLaMa fork.

|

| 196 |

+

|

| 197 |

+

ExLlama works with Llama models in 4-bit. Please see the Provided Files table above for per-file compatibility.

|

| 198 |

+

|

| 199 |

+

<!-- footer start -->

|

| 200 |

+

## Discord

|

| 201 |

+

|

| 202 |

+

For further support, and discussions on these models and AI in general, join us at:

|

| 203 |

+

|

| 204 |

+

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

|

| 205 |

+

|

| 206 |

+

## Thanks, and how to contribute.

|

| 207 |

+

|

| 208 |

+

Thanks to the [chirper.ai](https://chirper.ai) team!

|

| 209 |

+

|

| 210 |

+

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

|

| 211 |

+

|

| 212 |

+

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

|

| 213 |

+

|

| 214 |

+

Donors will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

|

| 215 |

+

|

| 216 |

+

* Patreon: https://patreon.com/TheBlokeAI

|

| 217 |

+

* Ko-Fi: https://ko-fi.com/TheBlokeAI

|

| 218 |

+

|

| 219 |

+

**Special thanks to**: Luke from CarbonQuill, Aemon Algiz.

|

| 220 |

+

|

| 221 |

+

**Patreon special mentions**: Ajan Kanaga, David Ziegler, Raymond Fosdick, SuperWojo, Sam, webtim, Steven Wood, knownsqashed, Tony Hughes, Junyu Yang, J, Olakabola, Dan Guido, Stephen Murray, John Villwock, vamX, William Sang, Sean Connelly, LangChain4j, Olusegun Samson, Fen Risland, Derek Yates, Karl Bernard, transmissions 11, Trenton Dambrowitz, Pieter, Preetika Verma, Swaroop Kallakuri, Andrey, Slarti, Jonathan Leane, Michael Levine, Kalila, Joseph William Delisle, Rishabh Srivastava, Deo Leter, Luke Pendergrass, Spencer Kim, Geoffrey Montalvo, Thomas Belote, Jeffrey Morgan, Mandus, ya boyyy, Matthew Berman, Magnesian, Ai Maven, senxiiz, Alps Aficionado, Luke @flexchar, Raven Klaugh, Imad Khwaja, Gabriel Puliatti, Johann-Peter Hartmann, usrbinkat, Spiking Neurons AB, Artur Olbinski, chris gileta, danny, Willem Michiel, WelcomeToTheClub, Deep Realms, alfie_i, Dave, Leonard Tan, NimbleBox.ai, Randy H, Daniel P. Andersen, Pyrater, Will Dee, Elle, Space Cruiser, Gabriel Tamborski, Asp the Wyvern, Illia Dulskyi, Nikolai Manek, Sid, Brandon Frisco, Nathan LeClaire, Edmond Seymore, Enrico Ros, Pedro Madruga, Eugene Pentland, John Detwiler, Mano Prime, Stanislav Ovsiannikov, Alex, Vitor Caleffi, K, biorpg, Michael Davis, Lone Striker, Pierre Kircher, theTransient, Fred von Graf, Sebastain Graf, Vadim, Iucharbius, Clay Pascal, Chadd, Mesiah Bishop, terasurfer, Rainer Wilmers, Alexandros Triantafyllidis, Stefan Sabev, Talal Aujan, Cory Kujawski, Viktor Bowallius, subjectnull, ReadyPlayerEmma, zynix

|

| 222 |

+

|

| 223 |

+

|

| 224 |

+

Thank you to all my generous patrons and donors!

|

| 225 |

+

|

| 226 |

+

<!-- footer end -->

|

| 227 |

+

|

| 228 |

+

# Original model card: Open-Orca's OpenOrca Platypus2 13B

|

| 229 |

+

|

| 230 |

+

|

| 231 |

+

<p><h1>🐋 The First OrcaPlatypus! 🐋</h1></p>

|

| 232 |

+

|

| 233 |

+

|

| 234 |

+

|

| 235 |

+

|

| 236 |

+

# OpenOrca-Platypus2-13B

|

| 237 |

+

|

| 238 |

+

OpenOrca-Platypus2-13B is a merge of [`garage-bAInd/Platypus2-13B`](https://huggingface.co/garage-bAInd/Platypus2-13B) and [`Open-Orca/OpenOrcaxOpenChat-Preview2-13B`](https://huggingface.co/Open-Orca/OpenOrcaxOpenChat-Preview2-13B).

|

| 239 |

+

|

| 240 |

+

This model is more than the sum of its parts! We are happy to be teaming up with the [Platypus](https://platypus-llm.github.io/) team to bring you a new model which once again tops the leaderboards!

|

| 241 |

+

|

| 242 |

+

Want to visualize our full (pre-filtering) dataset? Check out our [Nomic Atlas Map](https://atlas.nomic.ai/map/c1b88b47-2d9b-47e0-9002-b80766792582/2560fd25-52fe-42f1-a58f-ff5eccc890d2).

|

| 243 |

+

|

| 244 |

+

|

| 245 |

+

[<img src="https://huggingface.co/Open-Orca/OpenOrca-Preview1-13B/resolve/main/OpenOrca%20Nomic%20Atlas.png" alt="Atlas Nomic Dataset Map" width="400" height="400" />](https://atlas.nomic.ai/map/c1b88b47-2d9b-47e0-9002-b80766792582/2560fd25-52fe-42f1-a58f-ff5eccc890d2)

|

| 246 |

+

|

| 247 |

+

|

| 248 |

+

We are in the process of training more models, so keep a lookout on our org for releases coming soon with exciting partners.

|

| 249 |

+

|

| 250 |

+

We will also give sneak-peek announcements on our Discord, which you can find here:

|

| 251 |

+

|

| 252 |

+

https://AlignmentLab.ai

|

| 253 |

+

|

| 254 |

+

# Benchmark Metrics

|

| 255 |

+

|

| 256 |

+

|

| 257 |

+

|

| 258 |

+

| Metric                | Value |
|-----------------------|-------|
| MMLU (5-shot)         | 59.5  |
| ARC (25-shot)         | 62.88 |
| HellaSwag (10-shot)   | 83.19 |
| TruthfulQA (0-shot)   | 52.69 |
| Avg.                  | 64.56 |
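The average row can be checked with a few lines of arithmetic. This is only a sanity-check sketch using the four values from the table; the variable names are illustrative.

```python
# Benchmark scores copied from the table above.
scores = {
    "MMLU (5-shot)": 59.5,
    "ARC (25-shot)": 62.88,
    "HellaSwag (10-shot)": 83.19,
    "TruthfulQA (0-shot)": 52.69,
}

# Unweighted mean of the four benchmarks.
average = sum(scores.values()) / len(scores)

if __name__ == "__main__":
    print(f"Average over {len(scores)} benchmarks: {average:.3f}")
```

The computed mean agrees with the reported average to within rounding of the last digit.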

|

| 265 |

+

|

| 266 |

+

We use [Language Model Evaluation Harness](https://github.com/EleutherAI/lm-evaluation-harness) to run the benchmark tests above, using the same version as the HuggingFace LLM Leaderboard. Please see below for detailed instructions on reproducing benchmark results.

|

| 267 |

+

|

| 268 |

+

|

| 269 |

+

# Model Details

|

| 270 |

+

|

| 271 |

+

* **Trained by**: **Platypus2-13B** trained by Cole Hunter & Ariel Lee; **OpenOrcaxOpenChat-Preview2-13B** trained by Open-Orca

|

| 272 |

+

* **Model type:** **OpenOrca-Platypus2-13B** is an auto-regressive language model based on the LLaMA 2 transformer architecture.

|

| 273 |

+

* **Language(s)**: English

|

| 274 |

+

* **License for Platypus2-13B base weights**: Non-Commercial Creative Commons license ([CC BY-NC-4.0](https://creativecommons.org/licenses/by-nc/4.0/))

|

| 275 |

+

* **License for OpenOrcaxOpenChat-Preview2-13B base weights**: LLaMa-2 commercial

|

| 276 |

+

|

| 277 |

+

|

| 278 |

+

# Prompt Template for base Platypus2-13B

|

| 279 |

+

```
### Instruction:

<prompt> (without the <>)

### Response:
```

|

| 286 |

+

|

| 287 |

+

|

| 288 |

+

# Prompt Template for base OpenOrcaxOpenChat-Preview2-13B

|

| 289 |

+

|

| 290 |

+

OpenChat Llama2 V1: see [OpenOrcaxOpenChat-Preview2-13B](https://huggingface.co/Open-Orca/OpenOrcaxOpenChat-Preview2-13B) for additional information.

|

| 291 |

+

|

| 292 |

+

|

| 293 |

+

# Training Datasets

|

| 294 |

+

|

| 295 |

+

`garage-bAInd/Platypus2-13B` was trained using the STEM and logic-based dataset [`garage-bAInd/Open-Platypus`](https://huggingface.co/datasets/garage-bAInd/Open-Platypus).

|

| 296 |

+

|

| 297 |

+

Please see our [paper](https://platypus-llm.github.io/Platypus.pdf) and [project webpage](https://platypus-llm.github.io) for additional information.

|

| 298 |

+

|

| 299 |

+

[`Open-Orca/OpenOrcaxOpenChat-Preview2-13B`](https://huggingface.co/Open-Orca/OpenOrcaxOpenChat-Preview2-13B) was trained using a refined subset of most of the GPT-4 data from the [OpenOrca dataset](https://huggingface.co/datasets/Open-Orca/OpenOrca).

|

| 300 |

+

|

| 301 |

+

|

| 302 |

+

# Training Procedure

|

| 303 |

+

|

| 304 |

+

`garage-bAInd/Platypus2-13B` was instruction fine-tuned using LoRA on 1 A100 80GB. For training details and inference instructions please see the [Platypus](https://github.com/arielnlee/Platypus) GitHub repo.

|

| 305 |

+

|

| 306 |

+

|

| 307 |

+

# Reproducing Evaluation Results

|

| 308 |

+

|

| 309 |

+

Install LM Evaluation Harness:

|

| 310 |

+

```
# clone repository
git clone https://github.com/EleutherAI/lm-evaluation-harness.git
# change to repo directory
cd lm-evaluation-harness
# check out the correct commit
git checkout b281b0921b636bc36ad05c0b0b0763bd6dd43463
# install
pip install -e .
```

|

| 320 |

+

Each task was evaluated on a single A100 80GB GPU.

|

| 321 |

+

|

| 322 |

+

ARC:

|

| 323 |

+

```
python main.py --model hf-causal-experimental --model_args pretrained=Open-Orca/OpenOrca-Platypus2-13B --tasks arc_challenge --batch_size 1 --no_cache --write_out --output_path results/OpenOrca-Platypus2-13B/arc_challenge_25shot.json --device cuda --num_fewshot 25
```

|

| 326 |

+

|

| 327 |

+

HellaSwag:

|

| 328 |

+

```
python main.py --model hf-causal-experimental --model_args pretrained=Open-Orca/OpenOrca-Platypus2-13B --tasks hellaswag --batch_size 1 --no_cache --write_out --output_path results/OpenOrca-Platypus2-13B/hellaswag_10shot.json --device cuda --num_fewshot 10
```

|

| 331 |

+

|

| 332 |

+

MMLU:

|

| 333 |

+

```
python main.py --model hf-causal-experimental --model_args pretrained=Open-Orca/OpenOrca-Platypus2-13B --tasks hendrycksTest-* --batch_size 1 --no_cache --write_out --output_path results/OpenOrca-Platypus2-13B/mmlu_5shot.json --device cuda --num_fewshot 5
```

|

| 336 |

+

|

| 337 |

+

TruthfulQA:

|

| 338 |

+

```
python main.py --model hf-causal-experimental --model_args pretrained=Open-Orca/OpenOrca-Platypus2-13B --tasks truthfulqa_mc --batch_size 1 --no_cache --write_out --output_path results/OpenOrca-Platypus2-13B/truthfulqa_0shot.json --device cuda
```
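The four commands above differ only in the task name, the output file, and the few-shot count, so they can be generated from a small table. The sketch below only builds and prints the command lines; it does not run them. `TASKS` and `build_command` are hypothetical names, and every flag is copied from the commands above.

```python
import shlex
from typing import List, Optional

MODEL = "Open-Orca/OpenOrca-Platypus2-13B"

# (task name, output file stem, few-shot count); None means the flag is omitted.
TASKS = [
    ("arc_challenge", "arc_challenge_25shot", 25),
    ("hellaswag", "hellaswag_10shot", 10),
    ("hendrycksTest-*", "mmlu_5shot", 5),
    ("truthfulqa_mc", "truthfulqa_0shot", None),
]


def build_command(task: str, out_name: str, num_fewshot: Optional[int]) -> List[str]:
    """Build the argument list for one benchmark run of the evaluation harness."""
    cmd = [
        "python", "main.py",
        "--model", "hf-causal-experimental",
        "--model_args", f"pretrained={MODEL}",
        "--tasks", task,
        "--batch_size", "1",
        "--no_cache",
        "--write_out",
        "--output_path", f"results/OpenOrca-Platypus2-13B/{out_name}.json",
        "--device", "cuda",
    ]
    if num_fewshot is not None:
        cmd += ["--num_fewshot", str(num_fewshot)]
    return cmd


if __name__ == "__main__":
    for task in TASKS:
        # shlex.join quotes arguments such as the MMLU wildcard for safe shell use.
        print(shlex.join(build_command(*task)))
```

Each list can be passed to `subprocess.run` from inside the `lm-evaluation-harness` directory if the runs should be launched from Python.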

|

| 341 |

+

|

| 342 |

+

|

| 343 |

+

# Limitations and bias

|

| 344 |

+

|

| 345 |

+

Llama 2 and fine-tuned variants are a new technology that carries risks with use. Testing conducted to date has been in English, and has not covered, nor could it cover, all scenarios. For these reasons, as with all LLMs, the potential outputs of Llama 2 and any fine-tuned variant cannot be predicted in advance, and the model may in some instances produce inaccurate, biased or other objectionable responses to user prompts. Therefore, before deploying any applications of Llama 2 variants, developers should perform safety testing and tuning tailored to their specific applications of the model.

|

| 346 |

+

|

| 347 |

+

Please see the Responsible Use Guide available at https://ai.meta.com/llama/responsible-use-guide/

|

| 348 |

+

|

| 349 |

+

|

| 350 |

+

# Citations

|

| 351 |

+

|

| 352 |

+

```bibtex
@misc{touvron2023llama,
    title={Llama 2: Open Foundation and Fine-Tuned Chat Models},
    author={Hugo Touvron and Louis Martin and Kevin Stone and Peter Albert and Amjad Almahairi and Yasmine Babaei and Nikolay Bashlykov and Soumya Batra and Prajjwal Bhargava and Shruti Bhosale and Dan Bikel and Lukas Blecher and Cristian Canton Ferrer and Moya Chen and Guillem Cucurull and David Esiobu and Jude Fernandes and Jeremy Fu and Wenyin Fu and Brian Fuller and Cynthia Gao and Vedanuj Goswami and Naman Goyal and Anthony Hartshorn and Saghar Hosseini and Rui Hou and Hakan Inan and Marcin Kardas and Viktor Kerkez and Madian Khabsa and Isabel Kloumann and Artem Korenev and Punit Singh Koura and Marie-Anne Lachaux and Thibaut Lavril and Jenya Lee and Diana Liskovich and Yinghai Lu and Yuning Mao and Xavier Martinet and Todor Mihaylov and Pushkar Mishra and Igor Molybog and Yixin Nie and Andrew Poulton and Jeremy Reizenstein and Rashi Rungta and Kalyan Saladi and Alan Schelten and Ruan Silva and Eric Michael Smith and Ranjan Subramanian and Xiaoqing Ellen Tan and Binh Tang and Ross Taylor and Adina Williams and Jian Xiang Kuan and Puxin Xu and Zheng Yan and Iliyan Zarov and Yuchen Zhang and Angela Fan and Melanie Kambadur and Sharan Narang and Aurelien Rodriguez and Robert Stojnic and Sergey Edunov and Thomas Scialom},
    year={2023},
    eprint={2307.09288},
    archivePrefix={arXiv}
}
```

|

| 360 |

+

```bibtex
@article{hu2021lora,
    title={LoRA: Low-Rank Adaptation of Large Language Models},
    author={Hu, Edward J. and Shen, Yelong and Wallis, Phillip and Allen-Zhu, Zeyuan and Li, Yuanzhi and Wang, Shean and Chen, Weizhu},
    journal={CoRR},
    year={2021}
}
```

|

| 368 |

+

```bibtex
@software{OpenOrcaxOpenChatPreview2,
    title = {OpenOrcaxOpenChatPreview2: Llama2-13B Model Instruct-tuned on Filtered OpenOrcaV1 GPT-4 Dataset},
    author = {Guan Wang and Bleys Goodson and Wing Lian and Eugene Pentland and Austin Cook and Chanvichet Vong and "Teknium"},
    year = {2023},
    publisher = {HuggingFace},
    journal = {HuggingFace repository},
    howpublished = {\url{https://huggingface.co/Open-Orca/OpenOrcaxOpenChat-Preview2-13B}},
}
```

|

| 378 |

+

```bibtex
@software{openchat,
    title = {{OpenChat: Advancing Open-source Language Models with Imperfect Data}},
    author = {Wang, Guan and Cheng, Sijie and Yu, Qiying and Liu, Changling},
    doi = {10.5281/zenodo.8105775},
    url = {https://github.com/imoneoi/openchat},
    version = {pre-release},
    year = {2023},
    month = {7},
}
```

|