metadata

language:

- sv

license: apache-2.0

tags:

- hf-asr-leaderboard

- generated_from_trainer

datasets:

- mozilla-foundation/common_voice_11_0

model-index:

- name: whisper-small-se

results: []

whisper-small-se

This model is a fine-tuned version of openai/whisper-small on the Common Voice 11.0 dataset.

Model description

The base model, openai/whisper-small, was initially trained on 680,000 hours of audio with corresponding transcripts collected from the internet. Of this data, 65% was English audio and 83% had English transcripts.

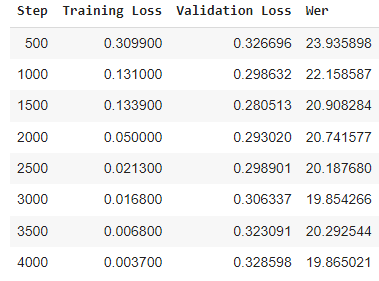

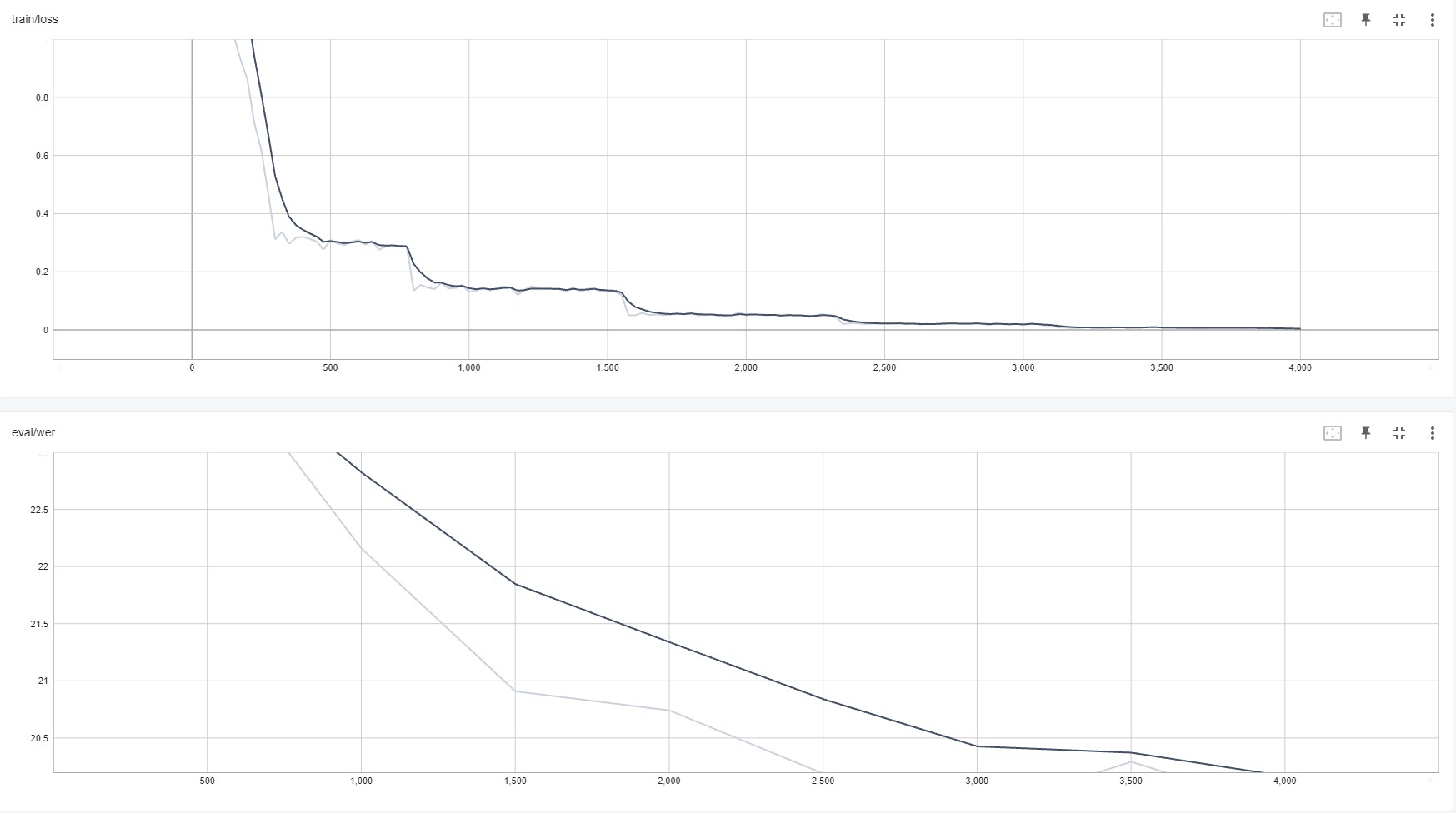

The model was then fine-tuned on the Swedish data from Common Voice 11.0 for 4000 iterations, the first 500 of which were warm-up, achieving a word error rate (WER) of 19.865.
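For readers unfamiliar with the metric, WER is the word-level edit distance between the reference transcript and the model output, divided by the number of reference words. The sketch below is a minimal pure-Python illustration, not the evaluation code used for this model. The function name `word_error_rate` and the Swedish example sentences are hypothetical, and no text normalization is applied, which a real evaluation would typically include.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER as a fraction: word-level edit distance / reference length.

    Illustrative sketch only; splits on whitespace and does no normalization.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] = edit distance between the reference words processed so far
    # (initially none) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # Hypothetical example: one of five reference words is dropped.
    score = word_error_rate("det är en fin dag", "det är fin dag")
    print(f"WER: {100 * score:.1f}%")
```

The function returns a fraction; multiply by 100 to express it as a percentage, which is presumably how the figure reported above is scaled.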

Intended uses & limitations

More information needed

Training and evaluation data

More information needed

Training procedure

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- training_steps: 500

- mixed_precision_training: Native AMP
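To show how `learning_rate`, `lr_scheduler_type: linear`, and `lr_scheduler_warmup_steps` interact, the sketch below computes the learning rate at a given step under a linear schedule with warm-up: the rate ramps from 0 to the peak during warm-up, then decays linearly to 0 at the final step. It is a plain-Python approximation of that behaviour, not the trainer's own code. The function name `linear_warmup_lr` is hypothetical, and the default `total_steps=4000` follows the iteration count given in the model description; pass a different value to model a different run length.

```python
def linear_warmup_lr(
    step: int,
    peak_lr: float = 1e-5,     # learning_rate from the list above
    warmup_steps: int = 500,   # lr_scheduler_warmup_steps from the list above
    total_steps: int = 4000,   # assumption: iteration count from the description
) -> float:
    """Learning rate at `step` for a linear schedule with linear warm-up."""
    if step < warmup_steps:
        # Warm-up phase: scale linearly from 0 up to peak_lr.
        return peak_lr * step / max(1, warmup_steps)
    # Decay phase: scale linearly from peak_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / max(1, total_steps - warmup_steps)


if __name__ == "__main__":
    for step in (0, 250, 500, 2250, 4000):
        print(step, linear_warmup_lr(step))
```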

Training results

Model Plot

Framework versions

- Transformers 4.26.0.dev0

- PyTorch 1.12.1+cu113

- Datasets 2.7.1

- Tokenizers 0.13.2