Upload folder using huggingface_hub (#3)

- 436a4866fd8bbac54e4a4c75c7dcd91ba276cc1d35d837a444c0812f5148d3ce (a18385ece4d3df46bbf68e60b97013bc4a47b4c5)

- 86338f9249f324c13f82985e2db1bc5b5059b6ed8f2891e900807d0e32526497 (840c7e4cfaceaa1d4c72af0bdc4225e4646ee327)

- README.md +2 -2

- config.json +1 -1

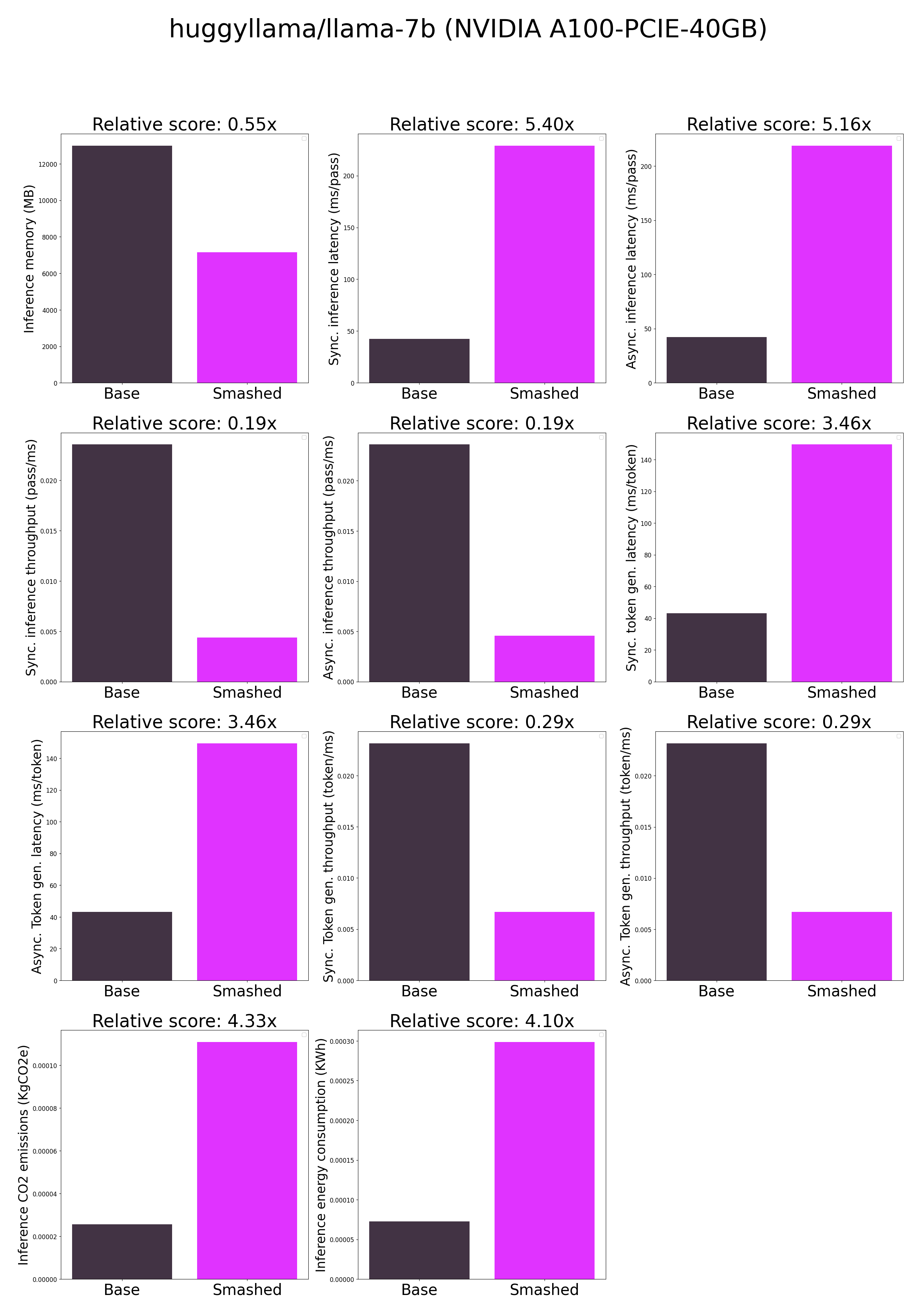

- plots.png +0 -0

- smash_config.json +1 -1

README.md CHANGED

    @@ -39,7 +39,7 @@ tags:
     **Frequently Asked Questions**
     - ***How does the compression work?*** The model is compressed with llm-int8.
     - ***How does the model quality change?*** The quality of the model output might vary compared to the base model.
    -- ***How is the model efficiency evaluated?*** These results were obtained on
    +- ***How is the model efficiency evaluated?*** These results were obtained on NVIDIA A100-PCIE-40GB with configuration described in `model/smash_config.json` and are obtained after a hardware warmup. The smashed model is directly compared to the original base model. Efficiency results may vary in other settings (e.g. other hardware, image size, batch size, ...). We recommend to directly run them in the use-case conditions to know if the smashed model can benefit you.
     - ***What is the model format?*** We use safetensors.
     - ***What calibration data has been used?*** If needed by the compression method, we used WikiText as the calibration data.
     - ***What is the naming convention for Pruna Huggingface models?*** We take the original model name and append "turbo", "tiny", or "green" if the smashed model has a measured inference speed, inference memory, or inference energy consumption which is less than 90% of the original base model.
    @@ -60,7 +60,7 @@ You can run the smashed model with these steps:
     ```python
     from transformers import AutoModelForCausalLM, AutoTokenizer
     
    -model = AutoModelForCausalLM.from_pretrained("PrunaAI/huggyllama-llama-7b-
    +model = AutoModelForCausalLM.from_pretrained("PrunaAI/huggyllama-llama-7b-bnb-8bit-smashed",
     trust_remote_code=True, device_map='auto')
     tokenizer = AutoTokenizer.from_pretrained("huggyllama/llama-7b")
     
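The README FAQ describes two mechanics: efficiency is measured after a hardware warmup, and a suffix ("turbo", "tiny", "green") is appended when a measured metric falls below 90% of the base model's value. The sketch here illustrates both under stated assumptions: `measure_latency`, `naming_suffixes`, and the metric keys are hypothetical names; "inference speed" is read as inference time (lower is better); and since the FAQ does not say how several suffixes would be combined, the function simply returns every qualifying suffix. It is not Pruna's benchmarking code.

```python
import time
from typing import Callable, Dict, List


def measure_latency(fn: Callable[[], object], warmup: int = 3, runs: int = 10) -> float:
    """Hypothetical helper: mean wall-clock seconds per call of `fn`,
    measured after `warmup` untimed calls.

    For GPU workloads, `fn` should synchronize the device (e.g. call
    torch.cuda.synchronize()) so asynchronous kernels are included.
    """
    for _ in range(warmup):
        fn()  # warmup calls are executed but not timed
    start = time.perf_counter()
    for _ in range(runs):
        fn()
    return (time.perf_counter() - start) / runs


# Assumed mapping from suffix to metric; lower is better for all three.
SUFFIX_METRICS = {"turbo": "latency_s", "tiny": "memory_gb", "green": "energy_kwh"}


def naming_suffixes(base: Dict[str, float], smashed: Dict[str, float],
                    ratio: float = 0.9) -> List[str]:
    """Suffixes whose metric on the smashed model is strictly below ratio * base."""
    return [suffix for suffix, key in SUFFIX_METRICS.items()
            if smashed[key] < ratio * base[key]]


if __name__ == "__main__":
    # Made-up numbers for illustration only, not measurements of this model.
    base = {"latency_s": 1.0, "memory_gb": 10.0, "energy_kwh": 1.0}
    smashed = {"latency_s": 1.2, "memory_gb": 6.0, "energy_kwh": 0.95}
    print(naming_suffixes(base, smashed))  # ['tiny']
    print(measure_latency(lambda: sum(range(10_000)), warmup=2, runs=5))
```

With these illustrative inputs only memory drops below the 90% threshold, so only "tiny" qualifies.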

config.json CHANGED

    @@ -1,5 +1,5 @@
     {
    -"_name_or_path": "/tmp/
    +"_name_or_path": "/tmp/tmp0d1dv2jd",
     "architectures": [
     "LlamaForCausalLM"
     ],
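In this commit the `config.json` hunk only swaps one `_name_or_path` value for another, and the new value looks like a run-specific temporary directory; the `smash_config.json` hunk likewise only changes `cache_dir`. To confirm that two revisions of such a config differ only in path-like keys, a minimal standard-library sketch could look like this. The function names and the default set of "volatile" keys are assumptions for illustration, and the old path in the usage lines is a hypothetical placeholder because the diff view truncates it.

```python
import json
from typing import Any, Dict, Iterable, Set

_MISSING = object()  # sentinel: a missing key never compares equal to a real value


def changed_keys(old: Dict[str, Any], new: Dict[str, Any]) -> Set[str]:
    """Top-level keys that were added, removed, or whose value differs."""
    return {k for k in old.keys() | new.keys()
            if old.get(k, _MISSING) != new.get(k, _MISSING)}


def only_volatile_changes(old_text: str, new_text: str,
                          volatile: Iterable[str] = ("_name_or_path", "cache_dir")) -> bool:
    """True if two JSON documents differ only in keys assumed to hold run-specific paths."""
    return changed_keys(json.loads(old_text), json.loads(new_text)) <= set(volatile)


if __name__ == "__main__":
    old = '{"_name_or_path": "/tmp/tmp_old", "architectures": ["LlamaForCausalLM"]}'  # placeholder
    new = '{"_name_or_path": "/tmp/tmp0d1dv2jd", "architectures": ["LlamaForCausalLM"]}'
    print(only_volatile_changes(old, new))  # True
```

Comparing parsed JSON rather than raw text makes the check insensitive to whitespace and key order.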

plots.png CHANGED

smash_config.json CHANGED

    @@ -8,7 +8,7 @@
     "compilers": "None",
     "task": "text_text_generation",
     "device": "cuda",
    -"cache_dir": "/ceph/hdd/staff/charpent/.cache/
    +"cache_dir": "/ceph/hdd/staff/charpent/.cache/models4gxc1509",
     "batch_size": 1,
     "n_quantization_bits": 8,
     "tokenizer": "LlamaTokenizerFast(name_or_path='huggyllama/llama-7b', vocab_size=32000, model_max_length=2048, is_fast=True, padding_side='left', truncation_side='right', special_tokens={'bos_token': '<s>', 'eos_token': '</s>', 'unk_token': '<unk>'}, clean_up_tokenization_spaces=False), added_tokens_decoder={\n\t0: AddedToken(\"<unk>\", rstrip=False, lstrip=False, single_word=False, normalized=True, special=True),\n\t1: AddedToken(\"<s>\", rstrip=False, lstrip=False, single_word=False, normalized=True, special=True),\n\t2: AddedToken(\"</s>\", rstrip=False, lstrip=False, single_word=False, normalized=True, special=True),\n}",
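The README FAQ says the model is compressed with llm-int8, and `smash_config.json` records `"n_quantization_bits": 8`. As a rough numerical illustration of that idea, here is a simplified NumPy sketch of LLM.int8()-style mixed-precision decomposition. Feature dimensions whose activations reach a magnitude threshold (6.0, which is the default `llm_int8_threshold` in transformers' `BitsAndBytesConfig`) are multiplied in floating point. The remaining dimensions use per-row absmax int8 quantization of the activations and per-output-column int8 quantization of the weights, with int32 accumulation. This is not the bitsandbytes kernel, and the threshold actually used for this model is not recorded in the diff. The function names are hypothetical.

```python
import numpy as np


def absmax_quantize_rows(x: np.ndarray):
    """Quantize each row to int8 so that its largest |value| maps to 127."""
    scale = np.abs(x).max(axis=1, keepdims=True) / 127.0
    scale[scale == 0] = 1.0  # all-zero rows: any scale works, avoid dividing by 0
    q = np.round(x / scale).astype(np.int8)
    return q, scale


def int8_matmul_with_outliers(x: np.ndarray, w: np.ndarray,
                              threshold: float = 6.0) -> np.ndarray:
    """Approximate x @ w for activations x: (tokens, d_in) and weights w: (d_in, d_out)."""
    # A feature dimension is an outlier if any token's activation reaches the threshold.
    outliers = np.any(np.abs(x) >= threshold, axis=0)
    regular = ~outliers
    # Outlier dimensions: ordinary floating-point matmul (zeros if there are none).
    out = x[:, outliers] @ w[outliers, :]
    if regular.any():
        # Regular dimensions: int8 per row of x, int8 per output column of w.
        xq, sx = absmax_quantize_rows(x[:, regular])      # (tokens, r), (tokens, 1)
        wq, sw = absmax_quantize_rows(w[regular, :].T)     # (d_out, r), (d_out, 1)
        acc = xq.astype(np.int32) @ wq.T.astype(np.int32)  # exact integer accumulation
        out = out + acc * sx * sw.T                        # dequantize back to float
    return out


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.normal(size=(4, 16))
    x[:, 3] = 10.0  # one synthetic outlier feature dimension
    w = rng.normal(size=(16, 8))
    err = np.abs(int8_matmul_with_outliers(x, w) - x @ w).max()
    print(f"max abs error vs. float matmul: {err:.4f}")
```

Keeping the outlier dimension in floating point means its large values do not inflate the int8 scales of the other dimensions, which is the motivation for the decomposition.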