Upload folder using huggingface_hub (#3)

- 86ce1a2a5663a9a94ea04f6e92e6eabd40efc0d4f1fb6c1a57c376231a028fe4 (be552d4ce3bee87708c562d0aa22658b1c8192fb)

- 1e9d184d562fdf90e69d4d85c2b7788ac4816bfdf11dac285045cfe91490defb (52cf3de97134b7b04c691c9a857bdce1d7ea0a11)

- README.md +2 -2

- config.json +2 -2

- plots.png +0 -0

- smash_config.json +1 -1

README.md

CHANGED

@@ -34,7 +34,7 @@ tags:
 
 ## Results
 
-
+
 
 **Frequently Asked Questions**
 - ***How does the compression work?*** The model is compressed with llm-int8.
@@ -61,7 +61,7 @@ You can run the smashed model with these steps:
 from transformers import AutoModelForCausalLM, AutoTokenizer
 
 model = AutoModelForCausalLM.from_pretrained("PrunaAI/codellama-CodeLlama-7b-Python-hf-bnb-8bit-smashed",
-trust_remote_code=True)
+trust_remote_code=True, device_map='auto')
 tokenizer = AutoTokenizer.from_pretrained("codellama/CodeLlama-7b-Python-hf")
 
 input_ids = tokenizer("What is the color of prunes?,", return_tensors='pt').to(model.device)["input_ids"]
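For orientation, the README snippet as it reads after this change can be sketched as a single function. This is a minimal sketch, not the README's full text: the function name `generate_completion`, the `max_new_tokens` default, and the decoding step are assumptions, since the hunk ends at the `input_ids` line. It also assumes `transformers`, `accelerate`, and `bitsandbytes` are installed and a CUDA device is available.

```python
MODEL_ID = "PrunaAI/codellama-CodeLlama-7b-Python-hf-bnb-8bit-smashed"
TOKENIZER_ID = "codellama/CodeLlama-7b-Python-hf"


def generate_completion(prompt, max_new_tokens=64):
    """Load the smashed model as in the updated README and generate text."""
    # Imported lazily so this module can be imported without the heavy
    # dependencies (transformers, accelerate, bitsandbytes) installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    # device_map="auto" is the argument added by this commit; it relies on
    # the accelerate package to place the weights on the available device(s).
    model = AutoModelForCausalLM.from_pretrained(
        MODEL_ID, trust_remote_code=True, device_map="auto"
    )
    tokenizer = AutoTokenizer.from_pretrained(TOKENIZER_ID)

    # Move the tokenized prompt to the device the model was placed on.
    input_ids = tokenizer(prompt, return_tensors="pt").to(model.device)["input_ids"]

    # max_new_tokens and the decoding step are assumptions; they are not
    # part of the README lines shown in the hunk.
    outputs = model.generate(input_ids, max_new_tokens=max_new_tokens)
    return tokenizer.decode(outputs[0], skip_special_tokens=True)
```

Calling `generate_completion("What is the color of prunes?,")` would download the weights on first use, so it is best run on a machine with enough GPU memory for an 8-bit 7B model.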

config.json

CHANGED

@@ -1,5 +1,5 @@
 {
-"_name_or_path": "/tmp/
+"_name_or_path": "/tmp/tmpehf7tv62",
 "architectures": [
 "LlamaForCausalLM"
 ],
@@ -20,7 +20,7 @@
 "quantization_config": {
 "bnb_4bit_compute_dtype": "bfloat16",
 "bnb_4bit_quant_type": "fp4",
-"bnb_4bit_use_double_quant":
+"bnb_4bit_use_double_quant": false,
 "llm_int8_enable_fp32_cpu_offload": false,
 "llm_int8_has_fp16_weight": false,
 "llm_int8_skip_modules": [
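The boolean quantization flags touched by this hunk can be checked without loading the model. The sketch below uses only the standard library and a hand-written fragment containing the keys visible in the diff; the helper name `quantization_flags` is hypothetical, and keys whose values are cut off in the hunk (such as `llm_int8_skip_modules`) are deliberately left out.

```python
import json

# Fragment built from the keys visible in the config.json hunk above.
# The real file contains more fields than are shown here.
CONFIG_FRAGMENT = """
{
  "architectures": ["LlamaForCausalLM"],
  "quantization_config": {
    "bnb_4bit_compute_dtype": "bfloat16",
    "bnb_4bit_quant_type": "fp4",
    "bnb_4bit_use_double_quant": false,
    "llm_int8_enable_fp32_cpu_offload": false,
    "llm_int8_has_fp16_weight": false
  }
}
"""


def quantization_flags(config_text):
    """Return only the boolean entries of quantization_config."""
    config = json.loads(config_text)
    quant = config.get("quantization_config", {})
    # bool is checked explicitly so string settings such as the dtype
    # and quant type are skipped.
    return {key: value for key, value in quant.items() if isinstance(value, bool)}


flags = quantization_flags(CONFIG_FRAGMENT)
for name, enabled in sorted(flags.items()):
    print(f"{name}: {enabled}")
```

To inspect a downloaded copy, the same function could be applied to the text of the local `config.json` instead of the fragment.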

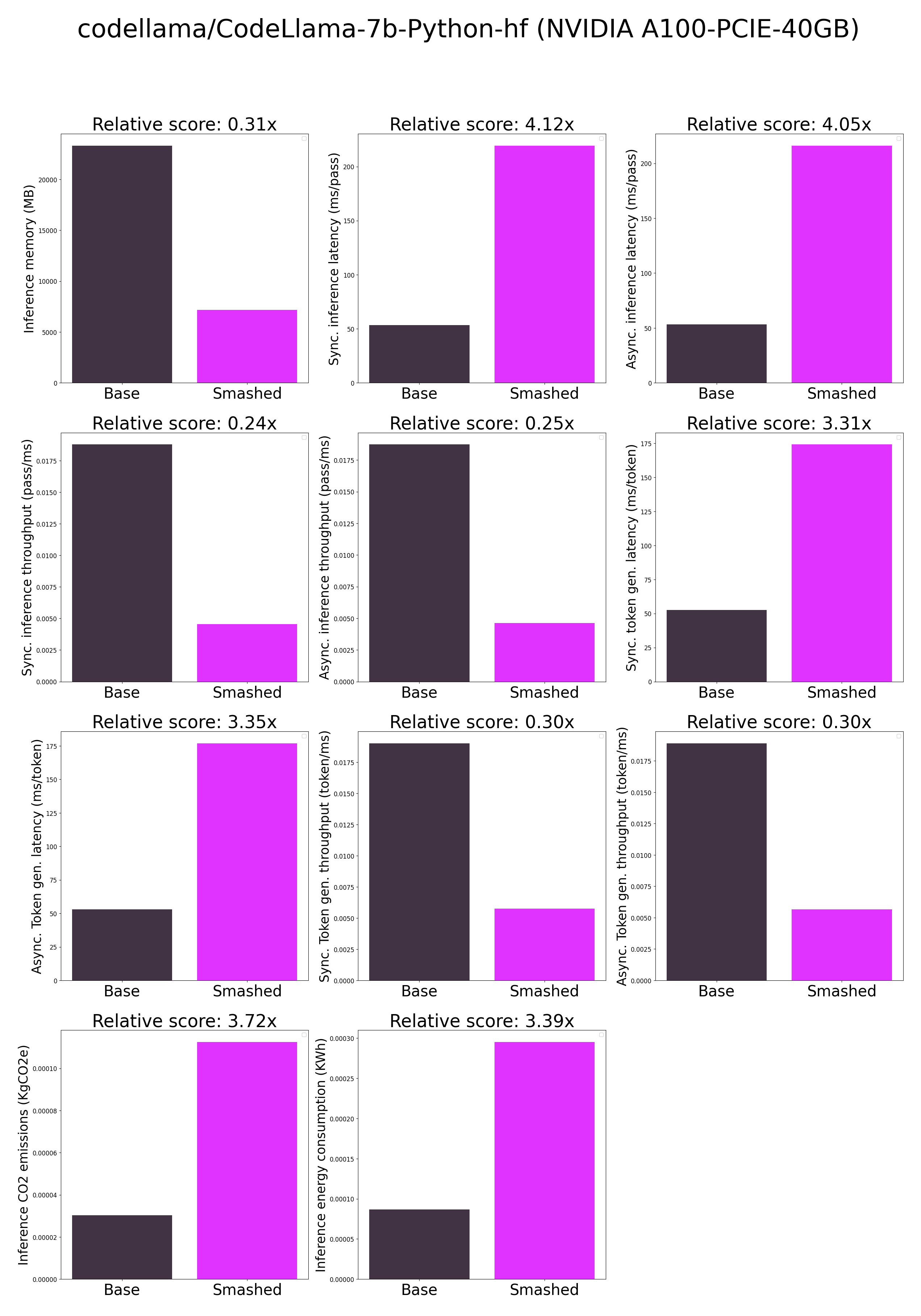

plots.png

ADDED


smash_config.json

CHANGED

@@ -8,7 +8,7 @@
 "compilers": "None",
 "task": "text_text_generation",
 "device": "cuda",
-"cache_dir": "/ceph/hdd/staff/charpent/.cache/
+"cache_dir": "/ceph/hdd/staff/charpent/.cache/modelsr74xdfqc",
 "batch_size": 1,
 "model_name": "codellama/CodeLlama-7b-Python-hf",
 "pruning_ratio": 0.0,