license: apache-2.0

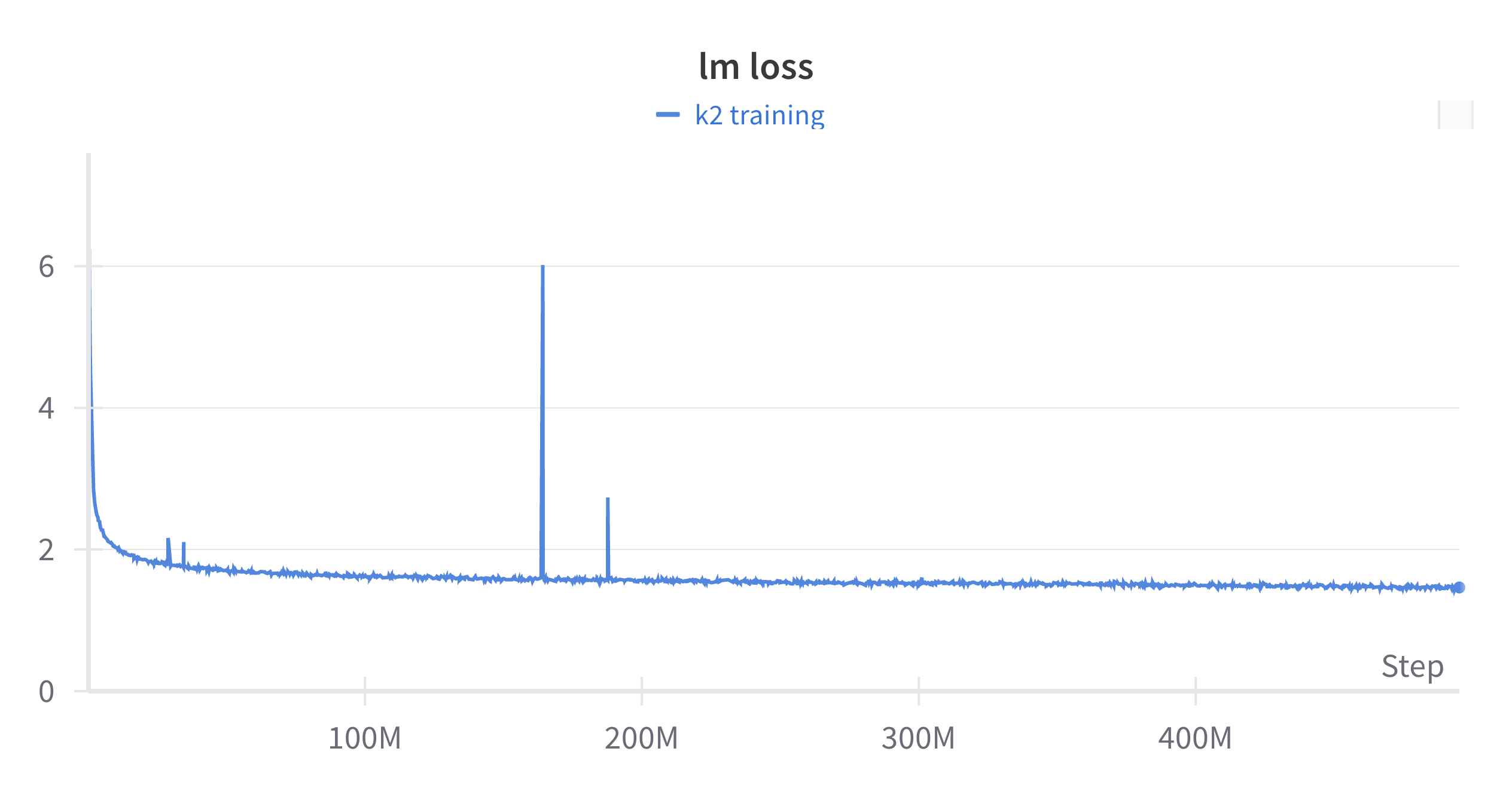

LLM360 Research Suite: K2 Loss Spike 2

We encountered two major loss spikes while training K2.

- The first loss spike occurred after 160 checkpoints and lasted for ~34 checkpoints. We restarted training at checkpoint 160, and training returned to normal.

- The second loss spike occurred at checkpoint 186, after we restarted training to fix the first loss spike, and lasted for ~8 checkpoints.

- For every spike checkpoint, we also uploaded the corresponding normal checkpoint for easy comparison. You can find the different checkpoints in separate branches.

We are releasing these checkpoints so others can study this interesting phenomenon in large model training.

Purpose

Loss spikes are still a relatively poorly understood phenomenon. By making these spikes and the associated training details available, we hope others will use these artifacts to further the world's knowledge on this topic.

All Checkpoints

| Checkpoints | |
|---|---|
| Checkpoint 186 | Checkpoint 194 |
| Checkpoint 188 | Checkpoint 196 |
| Checkpoint 190 | Checkpoint 198 |
| Checkpoint 192 | Checkpoint 200 |

[to find all branches: git branch -a]
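If you prefer to enumerate the branches from a script, the following is a minimal sketch that wraps `git branch -a` and tidies its output. It assumes you already have a local clone of this repository and that `git` is on your `PATH`. The helper names `parse_branches` and `list_branches` are hypothetical, and the branch names in the usage note are placeholders, not the actual branch names of this repository.

```python
import subprocess


def parse_branches(git_output: str) -> list[str]:
    """Extract unique branch names from the text printed by `git branch -a`."""
    names = set()
    for line in git_output.splitlines():
        line = line.strip()
        if not line or " -> " in line:
            # Skip blank lines and symbolic refs such as
            # "remotes/origin/HEAD -> origin/main".
            continue
        if line.startswith("* "):
            line = line[2:]  # "* " marks the currently checked-out branch
        parts = line.split("/", 2)
        if parts[0] == "remotes" and len(parts) == 3:
            line = parts[2]  # drop the "remotes/<remote>/" prefix
        names.add(line)
    return sorted(names)


def list_branches(repo_dir: str) -> list[str]:
    """Run `git branch -a` inside a local clone and return its branch names."""
    result = subprocess.run(
        ["git", "branch", "-a"],
        cwd=repo_dir,
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_branches(result.stdout)
```

For example, `list_branches("path/to/local/clone")` returns a sorted list of branch names, from which you can pick a spike checkpoint and its corresponding normal checkpoint and switch to either with `git checkout <branch>`.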

Loss Spikes on the LLM360 Evaluation Suite

View all the evaluations on our Weights & Biases here

About the LLM360 Research Suite

The LLM360 Research Suite is a comprehensive set of large language model (LLM) artifacts from Amber, CrystalCoder, and K2 for academic and industry researchers to explore LLM training dynamics. Additional resources can be found at llm360.ai.

Citation

BibTeX:

@misc{

title={LLM360-K2-65B: Scaling Up Open and Transparent Language Models},

author={The LLM360 Team},

year={2024},

}