---
license: creativeml-openrail-m
tags:
- stable-diffusion
- prompt-generator
- distilgpt2
datasets:
- FredZhang7/krea-ai-prompts
- Gustavosta/Stable-Diffusion-Prompts
- bartman081523/stable-diffusion-discord-prompts
---

# DistilGPT2 Stable Diffusion Model Card

DistilGPT2 Stable Diffusion is a text-to-text model that generates creative, coherent prompts for text-to-image models from any input text. It was fine-tuned on 2.03 million descriptive Stable Diffusion prompts collected from the Stable Diffusion Discord, Lexica.art, and Krea.ai (the Krea.ai prompts were hand-picked by me). I filtered the hand-picked prompts based on the images Stable Diffusion v1.4 produced from them.

Compared with other GPT2-based prompt generation models, this one runs forward propagation 50% faster and uses 40% less disk space and RAM, because DistilGPT2 keeps the GPT2 architecture but has 6 transformer layers instead of 12.

## PyTorch

```bash
pip install --upgrade transformers torch
```

```python
# download DistilGPT2 Stable Diffusion if you haven't already
import os
import urllib.request

if not os.path.exists('./distil-sd-gpt2.pt'):
    print('Downloading model...')
    urllib.request.urlretrieve('https://huggingface.co/FredZhang7/distilgpt2-stable-diffusion/resolve/main/distil-sd-gpt2.pt', './distil-sd-gpt2.pt')
    print('Model downloaded.')
```
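The download step only checks whether a file already exists at `./distil-sd-gpt2.pt`, so a download interrupted halfway leaves a truncated checkpoint that is never re-fetched and will likely make `torch.load` fail later. The following is a minimal sketch of a more defensive variant; the helper name `download_if_missing` and the `.part` temporary-file convention are illustrative assumptions, not part of this model's release.

```python
import os
import urllib.request


def download_if_missing(url: str, dest: str) -> str:
    """Download `url` to `dest` unless `dest` already exists.

    The file is first written to a temporary `.part` path and only renamed
    to `dest` once the transfer has finished, so an interrupted download
    never leaves a truncated file at `dest`.
    """
    if os.path.exists(dest):
        return dest
    tmp = dest + '.part'
    try:
        urllib.request.urlretrieve(url, tmp)
        # os.replace is atomic when tmp and dest are on the same filesystem
        os.replace(tmp, dest)
    finally:
        # remove the partial file if the download or the rename failed
        if os.path.exists(tmp):
            os.remove(tmp)
    return dest
```

It takes the same URL and destination as the original snippet, e.g. `download_if_missing('https://huggingface.co/FredZhang7/distilgpt2-stable-diffusion/resolve/main/distil-sd-gpt2.pt', './distil-sd-gpt2.pt')`.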

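```python
import torch
from transformers import GPT2Tokenizer, GPT2LMHeadModel

# load the pretrained DistilGPT2 tokenizer and add a padding token
tokenizer = GPT2Tokenizer.from_pretrained('distilgpt2')
tokenizer.add_special_tokens({'pad_token': '[PAD]'})
tokenizer.model_max_length = 512  # `max_len` is the deprecated name for this attribute

# load the base DistilGPT2 architecture, then overwrite its weights with the fine-tuned ones;
# map_location='cpu' lets the checkpoint load on machines without a GPU
model = GPT2LMHeadModel.from_pretrained('distilgpt2')
model.load_state_dict(torch.load('distil-sd-gpt2.pt', map_location='cpu'))
```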

# generate text using fine-tuned model

from transformers import pipeline

nlp = pipeline('text-generation', model=model, tokenizer=tokenizer)

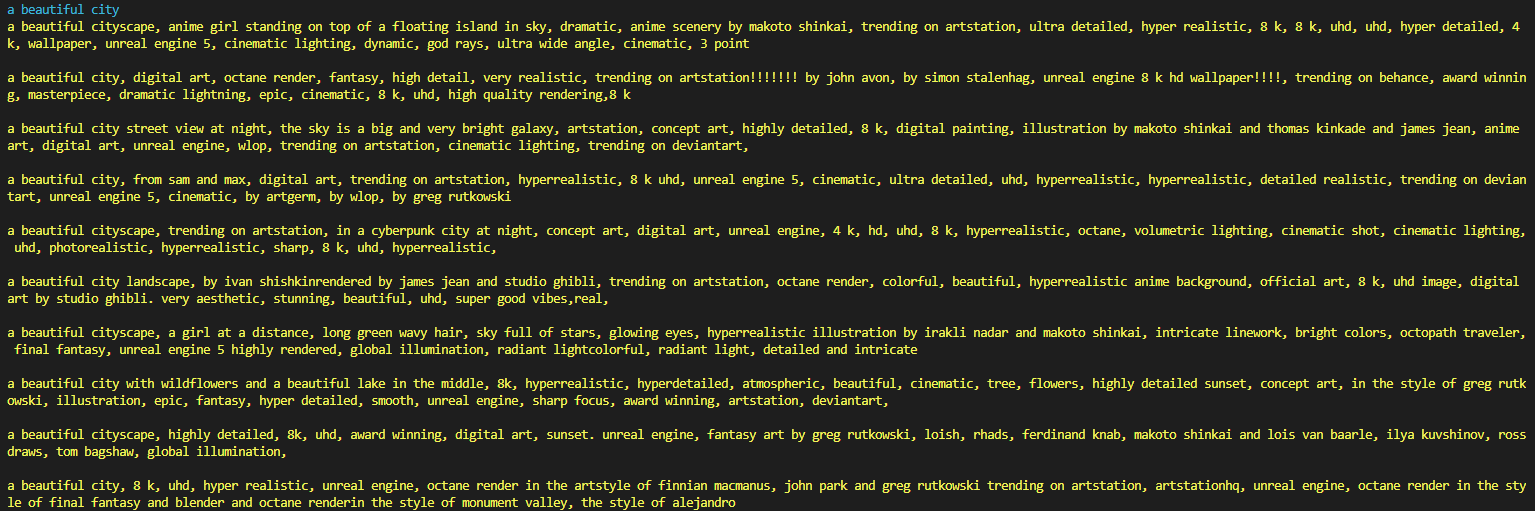

ins = "a beautiful city"

# generate 10 samples

outs = nlp(ins, max_length=80, num_return_sequences=10)

# print the 10 samples

for i in range(len(outs)):

outs[i] = str(outs[i]['generated_text']).replace(' ', '')

print('\033[96m' + ins + '\033[0m')

print('\033[93m' + '\n\n'.join(outs) + '\033[0m')
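Sampling 10 sequences can return the same prompt more than once. If you only want distinct prompts, the post-processing and printing can be factored into two small helpers. This is a hypothetical sketch: the names `clean_prompts` and `colorize` are illustrative, and it assumes each pipeline result is a dict with a `'generated_text'` key, as the code above already relies on.

```python
from __future__ import annotations


def clean_prompts(samples: list[dict]) -> list[str]:
    """Turn text-generation pipeline output into a list of unique prompts.

    Runs of whitespace are collapsed to single spaces, empty results are
    skipped, and exact duplicates are dropped while keeping the first
    occurrence, so the original sampling order is preserved.
    """
    seen = set()
    prompts = []
    for sample in samples:
        text = ' '.join(str(sample['generated_text']).split())
        if text and text not in seen:
            seen.add(text)
            prompts.append(text)
    return prompts


def colorize(ins: str, prompts: list[str]) -> str:
    """Return the input in bright cyan, then the prompts in bright yellow (ANSI escape codes)."""
    return '\033[96m' + ins + '\033[0m\n' + '\033[93m' + '\n\n'.join(prompts) + '\033[0m'
```

Call `clean_prompts` on the raw pipeline result, before it is turned into plain strings, for example `prompts = clean_prompts(nlp(ins, max_length=80, num_return_sequences=10, do_sample=True))` followed by `print(colorize(ins, prompts))`. The printed output matches the two `print` calls, minus any duplicate prompts.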