---
license: openrail++
language:
- en
library_name: diffusers
tags:
- text-to-image
- prior
- unclip
- kandinskyv2.2
---
Introduction

This ECLIPSE checkpoint is a tiny (33M-parameter), non-diffusion text-to-image prior model trained on CC12M data.

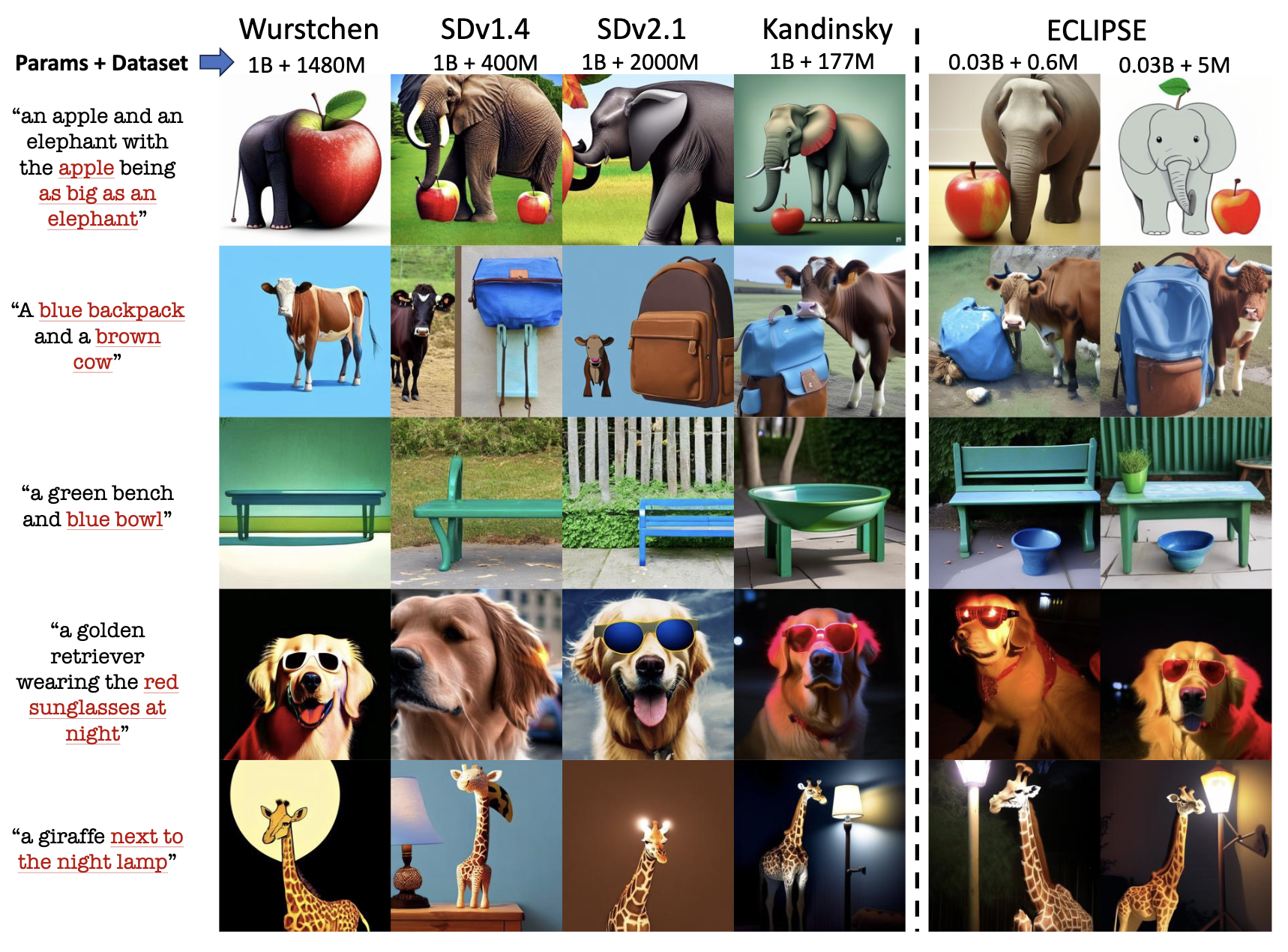

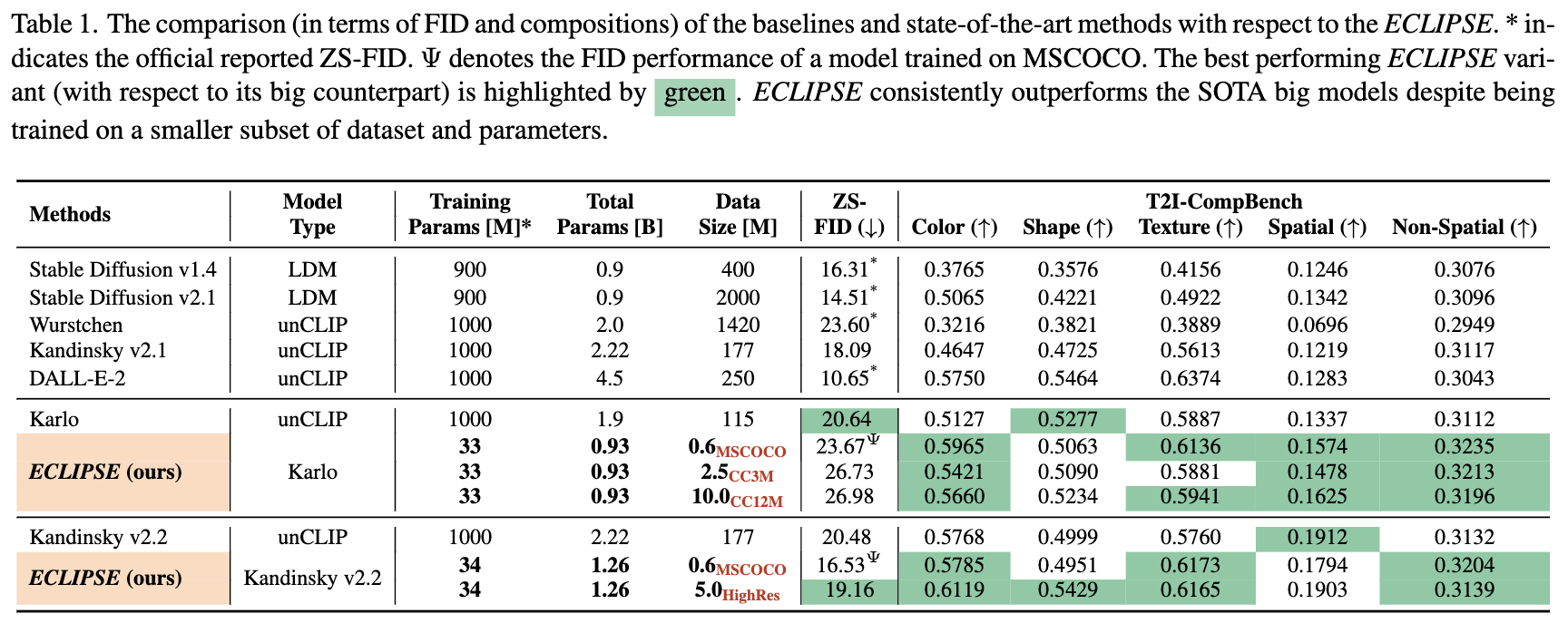

Despite being so small and trained on a limited amount of data, ECLIPSE priors achieve results comparable to those of 1-billion-parameter T2I prior models trained on millions of image-text pairs.
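The parameter count can be checked on a loaded prior by summing the sizes of its parameter tensors. The snippet below is a minimal sketch, assuming the prior behaves like a PyTorch `torch.nn.Module` (it exposes `.parameters()`, whose items have `.numel()` and `.requires_grad`). `count_parameters` and `format_millions` are hypothetical helpers, not part of the ECLIPSE repository.

```python
def count_parameters(model, trainable_only=False):
    """Return the total number of scalar parameters in a PyTorch-style module.

    Assumes `model.parameters()` yields tensors exposing `.numel()` and
    `.requires_grad`, as `torch.nn.Module` parameters do.
    """
    return sum(
        p.numel()
        for p in model.parameters()
        if not trainable_only or p.requires_grad
    )


def format_millions(n_params):
    """Format a raw parameter count in millions, e.g. 33_000_000 -> '33.0M'."""
    return f"{n_params / 1e6:.1f}M"
```

If the assumption holds, calling `format_millions(count_parameters(prior))` on a loaded ECLIPSE prior should report a value close to 33M.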

- Project Page: https://eclipse-t2i.vercel.app

- GitHub: https://github.com/eclipse-t2i/eclipse-inference

Evaluations

Installation

git clone git@github.com:eclipse-t2i/eclipse-inference.git

cd eclipse-inference

conda create -p ./venv python=3.9

conda activate ./venv

pip install -r requirements.txt

Run Inference

This repository supports two pre-trained image decoders: Karlo-v1-alpha and Kandinsky-v2.2. Note that the ECLIPSE prior is not a diffusion model, whereas the image decoders are.
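The inference snippets in this README pair each decoder with its own ECLIPSE prior checkpoint. As a rough sketch of how that pairing could be kept in one place, the lookup below collects the Hugging Face repository ids that appear in those snippets. The `ECLIPSE_CHECKPOINTS` table and the `checkpoints_for` helper are hypothetical names introduced for illustration, not part of the repository.

```python
# Hugging Face repository ids copied from the inference snippets in this README.
ECLIPSE_CHECKPOINTS = {
    "karlo": {
        "prior": "ECLIPSE-Community/ECLIPSE_Karlo_Prior",
        "decoder": "kakaobrain/karlo-v1-alpha",
    },
    "kandinsky": {
        "prior": "ECLIPSE-Community/ECLIPSE_KandinskyV22_Prior",
        "prior_pipeline": "kandinsky-community/kandinsky-2-2-prior",
        "decoder": "kandinsky-community/kandinsky-2-2-decoder",
    },
}


def checkpoints_for(decoder):
    """Return the repository ids to load for a given decoder name (hypothetical helper)."""
    key = decoder.strip().lower()
    if key not in ECLIPSE_CHECKPOINTS:
        supported = ", ".join(sorted(ECLIPSE_CHECKPOINTS))
        raise ValueError(f"Unknown decoder {decoder!r}; expected one of: {supported}")
    return ECLIPSE_CHECKPOINTS[key]
```

For example, `checkpoints_for("karlo")["prior"]` gives the id to pass to `PriorTransformer.from_pretrained`, which avoids accidentally combining a prior with the wrong decoder.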

Karlo Inference

from src.pipelines.pipeline_unclip import UnCLIPPipeline

from src.priors.prior_transformer import PriorTransformer

# Load the ECLIPSE prior trained for the Karlo decoder.

prior = PriorTransformer.from_pretrained("ECLIPSE-Community/ECLIPSE_Karlo_Prior")

# Swap the ECLIPSE prior into the Karlo unCLIP pipeline.

pipe = UnCLIPPipeline.from_pretrained("kakaobrain/karlo-v1-alpha", prior=prior).to("cuda")

prompt = "black apples in the basket"

images = pipe(prompt, decoder_guidance_scale=7.5).images

# In a notebook, this displays the first generated image.

images[0]

Kandinsky Inference

from src.pipelines.pipeline_kandinsky_prior import KandinskyPriorPipeline

from src.priors.prior_transformer import PriorTransformer

from diffusers import DiffusionPipeline

# Load the ECLIPSE prior trained for the Kandinsky v2.2 decoder.

prior = PriorTransformer.from_pretrained("ECLIPSE-Community/ECLIPSE_KandinskyV22_Prior")

# Swap the ECLIPSE prior into the Kandinsky prior pipeline.

pipe_prior = KandinskyPriorPipeline.from_pretrained("kandinsky-community/kandinsky-2-2-prior", prior=prior).to("cuda")

# The Kandinsky v2.2 decoder is a diffusion model and is loaded unchanged.

pipe = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-2-decoder").to("cuda")

prompt = "black apples in the basket"

# The prior maps the prompt to image embeddings; the decoder renders them.

image_embeds, negative_image_embeds = pipe_prior(prompt).to_tuple()

images = pipe(
    num_inference_steps=50,
    image_embeds=image_embeds,
    negative_image_embeds=negative_image_embeds,
).images

# In a notebook, this displays the first generated image.

images[0]
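The bare `images[0]` expression only displays the result in a notebook; in a plain script the image is discarded. The sketch below shows one way to write the outputs to disk, assuming the pipeline returns PIL images (the diffusers default), which expose a `.save(path)` method. The helper names and the `outputs` directory are hypothetical choices for illustration.

```python
import re
from pathlib import Path


def prompt_to_filename(prompt, index=0, ext="png"):
    """Turn a prompt into a filesystem-friendly file name."""
    # Lowercase, then collapse every run of non-alphanumerics into one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", prompt.lower()).strip("-")
    return f"{slug or 'image'}-{index}.{ext}"


def save_images(images, prompt, out_dir="outputs"):
    """Save each image under `out_dir` and return the written paths.

    Assumes every item in `images` has a PIL-style `.save(path)` method.
    """
    out_path = Path(out_dir)
    out_path.mkdir(parents=True, exist_ok=True)
    saved = []
    for i, image in enumerate(images):
        target = out_path / prompt_to_filename(prompt, i)
        image.save(target)
        saved.append(target)
    return saved
```

After either inference snippet, `save_images(images, prompt)` would write one PNG per generated image, named after the prompt.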

Limitations

The model is intended for research purposes only, as a demonstration of how to reduce unnecessary resource usage in existing T2I research.

Because this prior model is trained on a very small LAION subset with CLIP supervision, it inherits the limitations of the CLIP model, such as:

- Lack of spatial understanding.

- Inability to render legible text.

- Complex compositionality remains a major challenge; it may improve as CLIP itself improves.

- While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases.