---

license: apache-2.0
base_model: hustvl/yolos-tiny
tags:
- generated_from_trainer
- medical
- science
model-index:
- name: yolos-tiny-Brain_Tumor_Detection
  results: []
datasets:
- Francesco/brain-tumor-m2pbp
language:
- en
pipeline_tag: object-detection

---

# yolos-tiny-Brain_Tumor_Detection

This model is a fine-tuned version of [hustvl/yolos-tiny](https://huggingface.co/hustvl/yolos-tiny).
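As a rough illustration of how a checkpoint like this might be loaded for inference, the sketch below uses the `object-detection` pipeline from Transformers. The repository id `DunnBC22/yolos-tiny-Brain_Tumor_Detection` is an assumption inferred from the model name and the GitHub account linked in this card, and `detect_tumors` and `format_detection` are hypothetical helper names, not part of the original project.

```python
# Assumption: this Hub repository id is inferred from the model name and the
# author's GitHub handle; replace it with the actual id if it differs.
MODEL_ID = "DunnBC22/yolos-tiny-Brain_Tumor_Detection"


def detect_tumors(image_path, threshold=0.5, model_id=MODEL_ID):
    """Run object detection on one image and return the pipeline output.

    The output is a list of dicts with "score", "label" and "box" keys,
    where "box" holds xmin, ymin, xmax and ymax in pixels.
    """
    # Imported lazily so this module loads even without transformers installed.
    from transformers import pipeline

    detector = pipeline("object-detection", model=model_id)
    return detector(image_path, threshold=threshold)


def format_detection(det):
    """Render one detection dict as a short human-readable string."""
    box = det["box"]
    return (
        f'{det["label"]} {det["score"]:.2f} '
        f'({box["xmin"]}, {box["ymin"]}, {box["xmax"]}, {box["ymax"]})'
    )
```

Calling `detect_tumors("scan.png")` (a hypothetical file path) downloads the checkpoint on first use; each returned entry can be passed to `format_detection` for printing.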

## Model description

For more information on how it was created, check out the following link: https://github.com/DunnBC22/Vision_Audio_and_Multimodal_Projects/blob/main/Computer%20Vision/Object%20Detection/Brain%20Tumors/Brain_Tumor_m2pbp_Object_Detection_YOLOS.ipynb

**If you intend to try this project yourself, I highly recommend using (at least) the yolos-small checkpoint.**

## Intended uses & limitations

This model is intended to demonstrate my ability to solve a complex problem using technology.

## Training and evaluation data

Dataset Source: https://huggingface.co/datasets/Francesco/brain-tumor-m2pbp

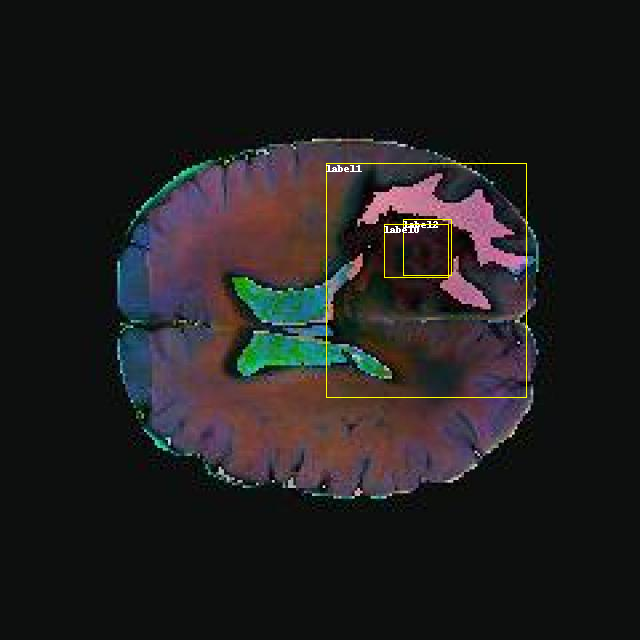

**Example**

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 20
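To make the `linear` scheduler setting concrete, here is a minimal sketch of how the learning rate would decay from 5e-05 to zero over training. It assumes no warmup steps (none are listed above), and the total step count in the usage note is a hypothetical figure, since the number of training samples is not stated in this card.

```python
def linear_lr(step, total_steps, base_lr=5e-05, warmup_steps=0):
    """Learning rate at a given optimizer step under a linear schedule.

    Assumption: warmup_steps defaults to 0 because the card lists no warmup.
    """
    if step < warmup_steps:
        # Linear ramp from 0 up to base_lr during warmup.
        return base_lr * step / max(1, warmup_steps)
    # Linear decay from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)
```

For example, with a hypothetical 1,000 optimizer steps in total, `linear_lr(500, 1000)` gives half the initial rate and `linear_lr(1000, 1000)` gives zero.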

### Training results

| Metric Name | IoU | Area | maxDets | Metric Value |
|:-----:|:-----:|:-----:|:-----:|:-----:|
| Average Precision (AP) | 0.50:0.95 | all | 100 | 0.185 |
| Average Precision (AP) | 0.50 | all | 100 | 0.448 |
| Average Precision (AP) | 0.75 | all | 100 | 0.126 |
| Average Precision (AP) | 0.50:0.95 | small | 100 | 0.001 |
| Average Precision (AP) | 0.50:0.95 | medium | 100 | 0.080 |
| Average Precision (AP) | 0.50:0.95 | large | 100 | 0.296 |
| Average Recall (AR) | 0.50:0.95 | all | 1 | 0.254 |
| Average Recall (AR) | 0.50:0.95 | all | 10 | 0.353 |
| Average Recall (AR) | 0.50:0.95 | all | 100 | 0.407 |
| Average Recall (AR) | 0.50:0.95 | small | 100 | 0.036 |
| Average Recall (AR) | 0.50:0.95 | medium | 100 | 0.312 |
| Average Recall (AR) | 0.50:0.95 | large | 100 | 0.565 |
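The IoU thresholds in these results appear to follow the COCO evaluation format, where a predicted box counts as a match only if its intersection over union (IoU) with a ground-truth box reaches the threshold. The sketch below shows that overlap measure for two boxes given as `(xmin, ymin, xmax, ymax)`; `box_iou` is an illustrative helper, not the code used to produce the numbers above.

```python
def box_iou(a, b):
    """Intersection over union of two (xmin, ymin, xmax, ymax) boxes."""
    ax1, ay1, ax2, ay2 = a
    bx1, by1, bx2, by2 = b

    # Width and height of the overlapping region (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - inter

    return inter / union if union > 0 else 0.0
```

Two 10x10 boxes offset horizontally by 5 pixels overlap in a 5x10 region, so their IoU is 50 / 150, about 0.33. That pair would not count as a match even at the most lenient threshold of 0.50.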

### Framework versions

- Transformers 4.31.0

- PyTorch 2.0.1+cu118

- Datasets 2.14.2

- Tokenizers 0.13.3