Commit fa83bd9

Parent(s): 92e5c4f

Upload folder using huggingface_hub

- .gitattributes +2 -9

- README.md +143 -0

- config.json +32 -0

- merges.txt +0 -0

- pytorch_model.bin +3 -0

- special_tokens_map.json +32 -0

- stats.png +0 -0

- tokenizer_config.json +67 -0

- vocab.json +0 -0

.gitattributes

CHANGED

|

@@ -1,35 +1,28 @@

|

|

| 1 |

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

*.bin filter=lfs diff=lfs merge=lfs -text

|

|

|

|

| 4 |

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

-

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

-

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

-

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

-

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

-

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

-

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

-

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

-

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

-

*.

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

| 1 |

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bin.* filter=lfs diff=lfs merge=lfs -text

|

| 5 |

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

|

|

|

| 6 |

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

| 11 |

*.model filter=lfs diff=lfs merge=lfs -text

|

| 12 |

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

|

| 13 |

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 14 |

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 15 |

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 16 |

*.pb filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

|

| 17 |

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 18 |

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 19 |

*.rar filter=lfs diff=lfs merge=lfs -text

|

|

|

|

| 20 |

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 21 |

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

| 22 |

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 23 |

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 24 |

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 25 |

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 26 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.zstandard filter=lfs diff=lfs merge=lfs -text

|

| 28 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|
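To see which of the uploaded files the updated `.gitattributes` would route through Git LFS, the patterns can be approximated in a few lines of Python. This is a minimal sketch, not Git's own matcher: it applies `fnmatch` to the file's base name, which agrees with Git for simple patterns such as `*.bin` but does not cover path patterns such as `saved_model/**/*`. The helper name `is_lfs_tracked` and the chosen subset of patterns are assumptions made for illustration.

```python
from fnmatch import fnmatch
from pathlib import PurePosixPath

# Subset of the LFS patterns on the new side of the .gitattributes diff
# (illustrative; path patterns like saved_model/**/* are left out on purpose).
LFS_PATTERNS = ["*.bin", "*.bin.*", "*.pt", "*.pth", "*.zstandard", "*tfevents*"]


def is_lfs_tracked(path: str, patterns=LFS_PATTERNS) -> bool:
    """Approximate check: does the file's base name match any LFS pattern?"""
    name = PurePosixPath(path).name
    return any(fnmatch(name, pattern) for pattern in patterns)


# Files taken from the commit's file list.
for filename in ["pytorch_model.bin", "config.json", "vocab.json", "stats.png"]:
    print(filename, is_lfs_tracked(filename))
```

For an authoritative answer inside a real checkout, `git check-attr filter -- <path>` reports the attribute Git actually applies.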

README.md

ADDED

|

@@ -0,0 +1,143 @@

|

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

language:

|

| 4 |

+

- ar

|

| 5 |

+

- he

|

| 6 |

+

- vi

|

| 7 |

+

- id

|

| 8 |

+

- jv

|

| 9 |

+

- ms

|

| 10 |

+

- tl

|

| 11 |

+

- lv

|

| 12 |

+

- lt

|

| 13 |

+

- eu

|

| 14 |

+

- ml

|

| 15 |

+

- ta

|

| 16 |

+

- te

|

| 17 |

+

- hy

|

| 18 |

+

- bn

|

| 19 |

+

- mr

|

| 20 |

+

- hi

|

| 21 |

+

- ur

|

| 22 |

+

- af

|

| 23 |

+

- da

|

| 24 |

+

- en

|

| 25 |

+

- de

|

| 26 |

+

- sv

|

| 27 |

+

- fr

|

| 28 |

+

- it

|

| 29 |

+

- pt

|

| 30 |

+

- ro

|

| 31 |

+

- es

|

| 32 |

+

- el

|

| 33 |

+

- os

|

| 34 |

+

- tg

|

| 35 |

+

- fa

|

| 36 |

+

- ja

|

| 37 |

+

- ka

|

| 38 |

+

- ko

|

| 39 |

+

- th

|

| 40 |

+

- bxr

|

| 41 |

+

- xal

|

| 42 |

+

- mn

|

| 43 |

+

- sw

|

| 44 |

+

- yo

|

| 45 |

+

- be

|

| 46 |

+

- bg

|

| 47 |

+

- ru

|

| 48 |

+

- uk

|

| 49 |

+

- pl

|

| 50 |

+

- my

|

| 51 |

+

- uz

|

| 52 |

+

- ba

|

| 53 |

+

- kk

|

| 54 |

+

- ky

|

| 55 |

+

- tt

|

| 56 |

+

- az

|

| 57 |

+

- cv

|

| 58 |

+

- tr

|

| 59 |

+

- tk

|

| 60 |

+

- tyv

|

| 61 |

+

- sax

|

| 62 |

+

- et

|

| 63 |

+

- fi

|

| 64 |

+

- hu

|

| 65 |

+

|

| 66 |

+

pipeline_tag: text-generation

|

| 67 |

+

tags:

|

| 68 |

+

- multilingual

|

| 69 |

+

- PyTorch

|

| 70 |

+

- Transformers

|

| 71 |

+

- gpt3

|

| 72 |

+

- gpt2

|

| 73 |

+

- Deepspeed

|

| 74 |

+

- Megatron

|

| 75 |

+

datasets:

|

| 76 |

+

- mc4

|

| 77 |

+

- wikipedia

|

| 78 |

+

thumbnail: "https://github.com/sberbank-ai/mgpt"

|

| 79 |

+

---

|

| 80 |

+

|

| 81 |

+

# Multilingual GPT model

|

| 82 |

+

|

| 83 |

+

We introduce a family of autoregressive GPT-like models with 1.3 billion parameters trained on 61 languages from 25 language families using Wikipedia and the Colossal Clean Crawled Corpus.

|

| 84 |

+

|

| 85 |

+

We reproduce the GPT-3 architecture using GPT-2 sources and the sparse attention mechanism. The [Deepspeed](https://github.com/microsoft/DeepSpeed) and [Megatron](https://github.com/NVIDIA/Megatron-LM) frameworks allow us to effectively parallelize the training and inference steps. The resulting models show performance on par with the recently released [XGLM](https://arxiv.org/pdf/2112.10668.pdf) models while covering more languages and enhancing NLP possibilities for low-resource languages.

|

| 86 |

+

|

| 87 |

+

## Code

|

| 88 |

+

The source code for the mGPT XL model is available on [GitHub](https://github.com/sberbank-ai/mgpt).

|

| 89 |

+

|

| 90 |

+

## Paper

|

| 91 |

+

mGPT: Few-Shot Learners Go Multilingual

|

| 92 |

+

|

| 93 |

+

[Abstract](https://arxiv.org/abs/2204.07580) [PDF](https://arxiv.org/pdf/2204.07580.pdf)

|

| 94 |

+

|

| 95 |

+

|

| 96 |

+

|

| 97 |

+

```

|

| 98 |

+

@misc{https://doi.org/10.48550/arxiv.2204.07580,

|

| 99 |

+

doi = {10.48550/ARXIV.2204.07580},

|

| 100 |

+

|

| 101 |

+

url = {https://arxiv.org/abs/2204.07580},

|

| 102 |

+

|

| 103 |

+

author = {Shliazhko, Oleh and Fenogenova, Alena and Tikhonova, Maria and Mikhailov, Vladislav and Kozlova, Anastasia and Shavrina, Tatiana},

|

| 104 |

+

|

| 105 |

+

keywords = {Computation and Language (cs.CL), Artificial Intelligence (cs.AI), FOS: Computer and information sciences, FOS: Computer and information sciences, I.2; I.2.7, 68-06, 68-04, 68T50, 68T01},

|

| 106 |

+

|

| 107 |

+

title = {mGPT: Few-Shot Learners Go Multilingual},

|

| 108 |

+

|

| 109 |

+

publisher = {arXiv},

|

| 110 |

+

|

| 111 |

+

year = {2022},

|

| 112 |

+

|

| 113 |

+

copyright = {Creative Commons Attribution 4.0 International}

|

| 114 |

+

}

|

| 115 |

+

|

| 116 |

+

```

|

| 117 |

+

|

| 118 |

+

|

| 119 |

+

## Languages

|

| 120 |

+

|

| 121 |

+

The model supports 61 languages:

|

| 122 |

+

|

| 123 |

+

ISO codes:

|

| 124 |

+

```ar he vi id jv ms tl lv lt eu ml ta te hy bn mr hi ur af da en de sv fr it pt ro es el os tg fa ja ka ko th bxr xal mn sw yo be bg ru uk pl my uz ba kk ky tt az cv tr tk tyv sax et fi hu```

|

| 125 |

+

|

| 126 |

+

|

| 127 |

+

Languages:

|

| 128 |

+

|

| 129 |

+

```Arabic, Hebrew, Vietnamese, Indonesian, Javanese, Malay, Tagalog, Latvian, Lithuanian, Basque, Malayalam, Tamil, Telugu, Armenian, Bengali, Marathi, Hindi, Urdu, Afrikaans, Danish, English, German, Swedish, French, Italian, Portuguese, Romanian, Spanish, Greek, Ossetian, Tajik, Persian, Japanese, Georgian, Korean, Thai, Buryat, Kalmyk, Mongolian, Swahili, Yoruba, Belarusian, Bulgarian, Russian, Ukrainian, Polish, Burmese, Uzbek, Bashkir, Kazakh, Kyrgyz, Tatar, Azerbaijani, Chuvash, Turkish, Turkmen, Tuvan, Yakut, Estonian, Finnish, Hungarian```

|

| 130 |

+

|

| 131 |

+

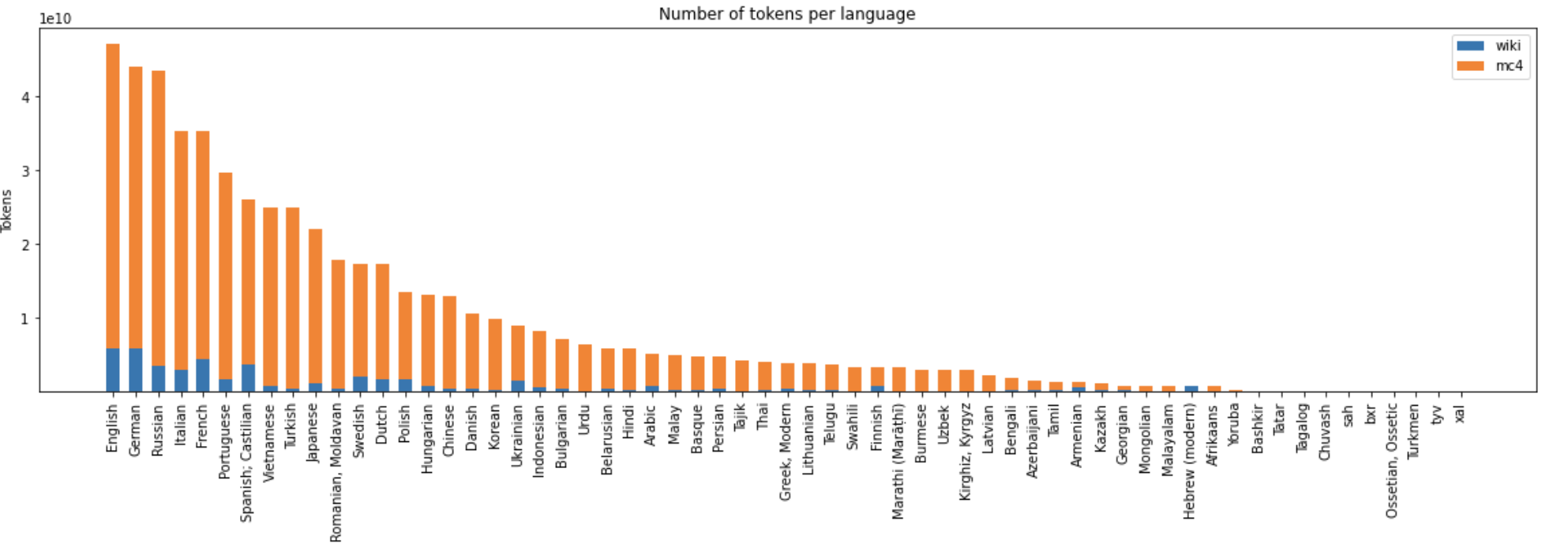

## Training Data Statistics

|

| 132 |

+

|

| 133 |

+

- Size: 488 billion UTF characters

|

| 134 |

+

|

| 135 |

+

|

| 136 |

+

<img style="text-align:center; display:block;" src="https://huggingface.co/sberbank-ai/mGPT/resolve/main/stats.png">

|

| 137 |

+

"General training corpus statistics"

|

| 138 |

+

|

| 139 |

+

|

| 140 |

+

## Details

|

| 141 |

+

The model was trained with a sequence length of 512 using the Megatron and Deepspeed libraries by the [SberDevices](https://sberdevices.ru/) team on a dataset of 600 GB of text in 61 languages. The model has seen 440 billion BPE tokens in total.

|

| 142 |

+

|

| 143 |

+

Total training time was around 14 days on 256 Nvidia V100 GPUs.

|
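The training figures quoted in the README's Details section can be turned into rough throughput numbers. The sketch below is back-of-the-envelope arithmetic only: it assumes all 256 GPUs were busy for the full 14 days, which the README states only approximately ("around 14 days"), and the variable names are chosen for illustration.

```python
# Figures quoted in the README's "Details" section.
TOKENS_SEEN = 440_000_000_000  # BPE tokens seen during training
SEQ_LEN = 512                  # training sequence length
NUM_GPUS = 256                 # Nvidia V100 GPUs
TRAIN_DAYS = 14                # "around 14 days", so results are approximate

# Total GPU time in seconds, assuming every GPU ran for the whole period.
gpu_seconds = TRAIN_DAYS * 24 * 60 * 60 * NUM_GPUS

# Number of 512-token training sequences and average per-GPU throughput.
num_sequences = TOKENS_SEEN // SEQ_LEN
tokens_per_gpu_second = TOKENS_SEEN / gpu_seconds

print(f"training sequences: {num_sequences:,}")
print(f"tokens per GPU-second: {tokens_per_gpu_second:.0f}")
```

Under these assumptions the run processed roughly 860 million sequences at an average on the order of 1,400 tokens per second per GPU.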

config.json

ADDED

|

@@ -0,0 +1,32 @@

|

| 1 |

+

{

|

| 2 |

+

"activation_function": "gelu_new",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"GPT2LMHeadModel"

|

| 5 |

+

],

|

| 6 |

+

"attn_pdrop": 0.1,

|

| 7 |

+

"bos_token_id": 0,

|

| 8 |

+

"embd_pdrop": 0.1,

|

| 9 |

+

"eos_token_id": 5,

|

| 10 |

+

"gradient_checkpointing": false,

|

| 11 |

+

"initializer_range": 0.02,

|

| 12 |

+

"layer_norm_epsilon": 1e-05,

|

| 13 |

+

"model_type": "gpt2",

|

| 14 |

+

"n_ctx": 2048,

|

| 15 |

+

"n_embd": 2048,

|

| 16 |

+

"n_head": 16,

|

| 17 |

+

"n_inner": null,

|

| 18 |

+

"n_layer": 24,

|

| 19 |

+

"n_positions": 2048,

|

| 20 |

+

"pad_token_id": 1,

|

| 21 |

+

"resid_pdrop": 0.1,

|

| 22 |

+

"scale_attn_weights": true,

|

| 23 |

+

"summary_activation": null,

|

| 24 |

+

"summary_first_dropout": 0.1,

|

| 25 |

+

"summary_proj_to_labels": true,

|

| 26 |

+

"summary_type": "cls_index",

|

| 27 |

+

"summary_use_proj": true,

|

| 28 |

+

"torch_dtype": "float32",

|

| 29 |

+

"transformers_version": "4.10.3",

|

| 30 |

+

"use_cache": true,

|

| 31 |

+

"vocab_size": 100000

|

| 32 |

+

}

|
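The hyperparameters in `config.json` are enough to estimate the parameter count of the model. The following is a sketch under stated assumptions: it uses the standard GPT-2 layer layout (fused QKV projection, a 4x MLP when `n_inner` is null, two layer norms per block plus a final one) and assumes the output head shares weights with the token embedding, so it is not counted separately. The function name `gpt2_param_count` is hypothetical.

```python
# Values copied from the config.json diff above.
config = {
    "n_embd": 2048,
    "n_layer": 24,
    "n_positions": 2048,
    "vocab_size": 100000,
    "n_inner": None,  # null in the JSON: the MLP width defaults to 4 * n_embd
}


def gpt2_param_count(cfg: dict) -> int:
    """Estimate trainable parameters of a GPT-2 style model with a tied LM head."""
    d = cfg["n_embd"]
    inner = cfg["n_inner"] or 4 * d
    # Token and position embeddings.
    embeddings = cfg["vocab_size"] * d + cfg["n_positions"] * d
    # Attention: fused QKV projection plus output projection (weights + biases).
    attention = (d * 3 * d + 3 * d) + (d * d + d)
    # MLP: up-projection and down-projection (weights + biases).
    mlp = (d * inner + inner) + (inner * d + d)
    # Two layer norms per block, each with a weight and a bias vector.
    layer_norms = 2 * (2 * d)
    per_layer = attention + mlp + layer_norms
    final_norm = 2 * d
    return embeddings + cfg["n_layer"] * per_layer + final_norm


total = gpt2_param_count(config)
print(f"{total:,} parameters (~{total / 1e9:.2f}B)")
```

With these values the estimate comes to about 1.42 billion parameters including embeddings, in the same range as the 1.3 billion quoted in the README; the exact figure depends on which tensors are counted.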

merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c45c522411e64dbed6da1ed0ce0839bf03931d3a17b80182d06d4dab8d8b2fbb

|

| 3 |

+

size 3446232428

|
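The three lines added for `pytorch_model.bin` are a Git LFS pointer rather than the weights themselves: the repository stores the spec version, a SHA-256 object ID, and the size in bytes, while the binary lives in LFS storage. A minimal sketch of reading such a pointer follows; the function name `parse_lfs_pointer` and the returned dictionary layout are assumptions for illustration.

```python
# Pointer contents copied from the pytorch_model.bin diff above.
POINTER_TEXT = """\
version https://git-lfs.github.com/spec/v1
oid sha256:c45c522411e64dbed6da1ed0ce0839bf03931d3a17b80182d06d4dab8d8b2fbb
size 3446232428
"""


def parse_lfs_pointer(text: str) -> dict:
    """Split each 'key value' line, then separate the hash algorithm from the digest."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


pointer = parse_lfs_pointer(POINTER_TEXT)
print(pointer["hash_algo"], f"{pointer['size_bytes'] / 1e9:.2f} GB")
```

The digest can be compared against a locally computed `hashlib.sha256` of the downloaded file to verify its integrity.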

special_tokens_map.json

ADDED

|

@@ -0,0 +1,32 @@

|

| 1 |

+

{

|

| 2 |

+

"bos_token": {

|

| 3 |

+

"content": "<s>",

|

| 4 |

+

"lstrip": false,

|

| 5 |

+

"normalized": true,

|

| 6 |

+

"rstrip": false,

|

| 7 |

+

"single_word": false

|

| 8 |

+

},

|

| 9 |

+

"eos_token": {

|

| 10 |

+

"content": "<|endoftext|>",

|

| 11 |

+

"lstrip": false,

|

| 12 |

+

"normalized": true,

|

| 13 |

+

"rstrip": false,

|

| 14 |

+

"single_word": false

|

| 15 |

+

},

|

| 16 |

+

"mask_token": "<mask>",

|

| 17 |

+

"pad_token": {

|

| 18 |

+

"content": "<pad>",

|

| 19 |

+

"lstrip": false,

|

| 20 |

+

"normalized": true,

|

| 21 |

+

"rstrip": false,

|

| 22 |

+

"single_word": false

|

| 23 |

+

},

|

| 24 |

+

"sep_token": "</s>",

|

| 25 |

+

"unk_token": {

|

| 26 |

+

"content": "<unk>",

|

| 27 |

+

"lstrip": false,

|

| 28 |

+

"normalized": true,

|

| 29 |

+

"rstrip": false,

|

| 30 |

+

"single_word": false

|

| 31 |

+

}

|

| 32 |

+

}

|

stats.png

ADDED

|

tokenizer_config.json

ADDED

|

@@ -0,0 +1,67 @@

|

| 1 |

+

{

|

| 2 |

+

"add_bos_token": false,

|

| 3 |

+

"add_prefix_space": false,

|

| 4 |

+

"added_tokens_decoder": {

|

| 5 |

+

"0": {

|

| 6 |

+

"content": "<s>",

|

| 7 |

+

"lstrip": false,

|

| 8 |

+

"normalized": true,

|

| 9 |

+

"rstrip": false,

|

| 10 |

+

"single_word": false,

|

| 11 |

+

"special": true

|

| 12 |

+

},

|

| 13 |

+

"1": {

|

| 14 |

+

"content": "<pad>",

|

| 15 |

+

"lstrip": false,

|

| 16 |

+

"normalized": true,

|

| 17 |

+

"rstrip": false,

|

| 18 |

+

"single_word": false,

|

| 19 |

+

"special": true

|

| 20 |

+

},

|

| 21 |

+

"2": {

|

| 22 |

+

"content": "</s>",

|

| 23 |

+

"lstrip": false,

|

| 24 |

+

"normalized": false,

|

| 25 |

+

"rstrip": false,

|

| 26 |

+

"single_word": false,

|

| 27 |

+

"special": true

|

| 28 |

+

},

|

| 29 |

+

"3": {

|

| 30 |

+

"content": "<unk>",

|

| 31 |

+

"lstrip": false,

|

| 32 |

+

"normalized": true,

|

| 33 |

+

"rstrip": false,

|

| 34 |

+

"single_word": false,

|

| 35 |

+

"special": true

|

| 36 |

+

},

|

| 37 |

+

"4": {

|

| 38 |

+

"content": "<mask>",

|

| 39 |

+

"lstrip": false,

|

| 40 |

+

"normalized": false,

|

| 41 |

+

"rstrip": false,

|

| 42 |

+

"single_word": false,

|

| 43 |

+

"special": true

|

| 44 |

+

},

|

| 45 |

+

"5": {

|

| 46 |

+

"content": "<|endoftext|>",

|

| 47 |

+

"lstrip": false,

|

| 48 |

+

"normalized": true,

|

| 49 |

+

"rstrip": false,

|

| 50 |

+

"single_word": false,

|

| 51 |

+

"special": true

|

| 52 |

+

}

|

| 53 |

+

},

|

| 54 |

+

"bos_token": "<s>",

|

| 55 |

+

"clean_up_tokenization_spaces": true,

|

| 56 |

+

"eos_token": "<|endoftext|>",

|

| 57 |

+

"errors": "replace",

|

| 58 |

+

"mask_token": "<mask>",

|

| 59 |

+

"model_max_length": 2048,

|

| 60 |

+

"pad_token": "<pad>",

|

| 61 |

+

"padding_side": "left",

|

| 62 |

+

"sep_token": "</s>",

|

| 63 |

+

"tokenizer_class": "GPT2Tokenizer",

|

| 64 |

+

"truncation_side": "left",

|

| 65 |

+

"trust_remote_code": false,

|

| 66 |

+

"unk_token": "<unk>"

|

| 67 |

+

}

|
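The special-token IDs in `config.json` have to agree with the token strings in `tokenizer_config.json`, otherwise generation may stop or pad on the wrong token. The check below is a sketch that hard-codes the values shown in the diffs above instead of reading the files; the function name `check_special_tokens` is hypothetical.

```python
# Token IDs from config.json.
config_ids = {"bos_token_id": 0, "eos_token_id": 5, "pad_token_id": 1}

# id -> content, from "added_tokens_decoder" in tokenizer_config.json.
added_tokens = {
    0: "<s>", 1: "<pad>", 2: "</s>",
    3: "<unk>", 4: "<mask>", 5: "<|endoftext|>",
}

# name -> content, from the top-level keys of tokenizer_config.json.
tokenizer_tokens = {
    "bos_token": "<s>",
    "eos_token": "<|endoftext|>",
    "pad_token": "<pad>",
}


def check_special_tokens(ids: dict, decoder: dict, tokens: dict) -> list:
    """Return (name, id, decoded, expected) for every token whose ID and string disagree."""
    mismatches = []
    for name, content in tokens.items():
        token_id = ids[f"{name}_id"]
        decoded = decoder.get(token_id)
        if decoded != content:
            mismatches.append((name, token_id, decoded, content))
    return mismatches


print(check_special_tokens(config_ids, added_tokens, tokenizer_tokens))
```

An empty list means the three IDs in `config.json` resolve to the same strings the tokenizer declares.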

vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|