---
license: mit
language:
- zh
tags:
- gpt2
- vit
---

# Model overview

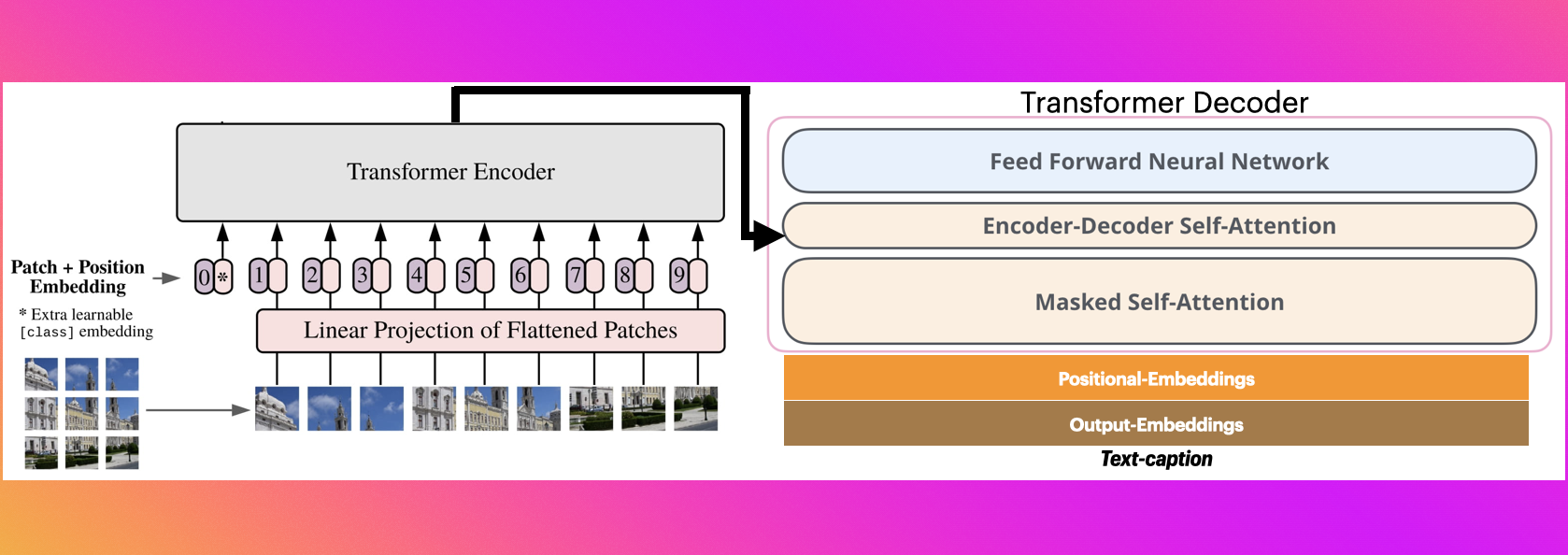

1. ViT acts as the encoder for the image, and GPT-2 acts as the decoder that generates the caption.
2. The ViT model is `google/vit-base-patch16-224`; the GPT-2 model is `yuanzhoulvpi/gpt2_chinese`.
3. The model supports Chinese.
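
To make the encoder side more concrete: the name `google/vit-base-patch16-224` indicates 224×224 input images split into 16×16 patches. The sketch below works out how many encoder tokens the GPT-2 decoder would attend to per image, assuming the standard ViT layout with one extra `[CLS]` token. `vit_sequence_length` is a hypothetical helper written for illustration, not part of the model's code.

```python
def vit_sequence_length(image_size: int = 224, patch_size: int = 16,
                        cls_token: bool = True) -> int:
    """Number of tokens a ViT encoder produces for one square image."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size  # 224 // 16 = 14
    num_patches = patches_per_side ** 2          # 14 * 14 = 196
    # Assumption: one [CLS] token is prepended, as in the standard ViT layout.
    return num_patches + (1 if cls_token else 0)


print(vit_sequence_length())  # 196 patches + 1 [CLS] token = 197
```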


# Training code

[https://github.com/yuanzhoulvpi2017/zero_nlp/tree/main/vit-gpt2-image-chinese-captioning](https://github.com/yuanzhoulvpi2017/zero_nlp/tree/main/vit-gpt2-image-chinese-captioning)

# Inference code

```python
from transformers import (
    VisionEncoderDecoderModel,
    AutoTokenizer,
    ViTImageProcessor,
)
import torch
from PIL import Image
```


```python
# Hub model ID. A local checkpoint directory such as
# "vit-gpt2-image-chinese-captioning/checkpoint-3200" can be used instead.
vision_encoder_decoder_model_name_or_path = "yuanzhoulvpi/vit-gpt2-image-chinese-captioning"

processor = ViTImageProcessor.from_pretrained(vision_encoder_decoder_model_name_or_path)
tokenizer = AutoTokenizer.from_pretrained(vision_encoder_decoder_model_name_or_path)
model = VisionEncoderDecoderModel.from_pretrained(vision_encoder_decoder_model_name_or_path)

# Run on the GPU when one is available, otherwise on the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model.to(device)
```


```python
max_length = 16
num_beams = 4
gen_kwargs = {"max_length": max_length, "num_beams": num_beams}


def predict_step(image_paths):
    """Return one caption string per image path."""
    images = []
    for image_path in image_paths:
        i_image = Image.open(image_path)
        # Convert grayscale, palette, or RGBA images to 3-channel RGB.
        if i_image.mode != "RGB":
            i_image = i_image.convert(mode="RGB")
        images.append(i_image)

    # Preprocess all images into one batch tensor on the model's device.
    pixel_values = processor(images=images, return_tensors="pt").pixel_values
    pixel_values = pixel_values.to(device)

    # Generate caption token IDs with beam search.
    output_ids = model.generate(pixel_values, **gen_kwargs)

    preds = tokenizer.batch_decode(output_ids, skip_special_tokens=True)
    preds = [pred.strip() for pred in preds]
    return preds


predict_step(['bigdata/image_data/train-1000200.jpg'])
```
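
`predict_step` sends every path it receives through the model as a single batch, so a long list of images may use a lot of memory. One possible workaround, sketched below, is to feed it fixed-size chunks. `caption_in_batches` and `batch_size` are hypothetical names introduced for illustration. The helper takes the captioning function as an argument, so it is shown here with a stand-in function rather than the real model.

```python
from typing import Callable, List, Sequence


def caption_in_batches(
    image_paths: Sequence[str],
    predict: Callable[[List[str]], List[str]],
    batch_size: int = 8,
) -> List[str]:
    """Call `predict` on consecutive chunks of `image_paths` and join the results."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    captions: List[str] = []
    for start in range(0, len(image_paths), batch_size):
        chunk = list(image_paths[start:start + batch_size])
        captions.extend(predict(chunk))
    return captions


# Stand-in for predict_step so the sketch runs without loading the model.
def fake_predict(paths: List[str]) -> List[str]:
    return [f"caption for {p}" for p in paths]


print(caption_in_batches(["a.jpg", "b.jpg", "c.jpg"], fake_predict, batch_size=2))
```

With the model loaded, `caption_in_batches(paths, predict_step)` would apply the same chunking to real images.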


# Results

## example 1

## example 2