Update README.md

README.md

CHANGED

|

@@ -1,29 +1,56 @@

|

|

| 1 |

---

|

| 2 |

license: mit

|

| 3 |

-

license_link: https://huggingface.co/microsoft/Florence-2-

|

| 4 |

pipeline_tag: image-text-to-text

|

| 5 |

tags:

|

| 6 |

- vision

|

|

|

|

|

|

|

| 7 |

---

|

| 8 |

-

|

| 9 |

-

# Florence-2: Advancing a Unified Representation for a Variety of Vision Tasks

|

| 10 |

|

| 11 |

## Model Summary

|

| 12 |

|

| 13 |

-

|

| 14 |

|

| 15 |

-

|

| 16 |

|

| 17 |

-

|

| 18 |

-

+ [Florence-2 technical report](https://arxiv.org/abs/2311.06242).

|

| 19 |

-

+ [Jupyter Notebook for inference and visualization of Florence-2-large model](https://huggingface.co/microsoft/Florence-2-large/blob/main/sample_inference.ipynb)

|

| 20 |

|

| 21 |

-

|

| 22 |

-

|

| 23 |

-

|

| 24 |

-

|

| 25 |

-

|

| 26 |

-

|

| 27 |

|

| 28 |

## How to Get Started with the Model

|

| 29 |

|

|

@@ -31,17 +58,15 @@ Use the code below to get started with the model.

|

|

| 31 |

|

| 32 |

```python

|

| 33 |

import requests

|

| 34 |

-

|

| 35 |

from PIL import Image

|

| 36 |

from transformers import AutoProcessor, AutoModelForCausalLM

|

| 37 |

|

| 38 |

-

|

| 39 |

-

|

| 40 |

-

processor = AutoProcessor.from_pretrained("microsoft/Florence-2-large-ft", trust_remote_code=True)

|

| 41 |

|

| 42 |

prompt = "<OD>"

|

| 43 |

|

| 44 |

-

url = "https://huggingface.co/

|

| 45 |

image = Image.open(requests.get(url, stream=True).raw)

|

| 46 |

|

| 47 |

inputs = processor(text=prompt, images=image, return_tensors="pt")

|

|

@@ -58,199 +83,20 @@ generated_text = processor.batch_decode(generated_ids, skip_special_tokens=False

|

|

| 58 |

parsed_answer = processor.post_process_generation(generated_text, task="<OD>", image_size=(image.width, image.height))

|

| 59 |

|

| 60 |

print(parsed_answer)

|

| 61 |

-

|

| 62 |

-

```

|

| 63 |

-

|

| 64 |

-

|

| 65 |

-

## Tasks

|

| 66 |

-

|

| 67 |

-

This model is capable of performing different tasks through changing the prompts.

|

| 68 |

-

|

| 69 |

-

First, let's define a function to run a prompt.

|

| 70 |

-

|

| 71 |

-

<details>

|

| 72 |

-

<summary> Click to expand </summary>

|

| 73 |

-

|

| 74 |

-

```python

|

| 75 |

-

import requests

|

| 76 |

-

|

| 77 |

-

from PIL import Image

|

| 78 |

-

from transformers import AutoProcessor, AutoModelForCausalLM

|

| 79 |

-

|

| 80 |

-

|

| 81 |

-

model = AutoModelForCausalLM.from_pretrained("microsoft/Florence-2-large-ft", trust_remote_code=True)

|

| 82 |

-

processor = AutoProcessor.from_pretrained("microsoft/Florence-2-large-ft", trust_remote_code=True)

|

| 83 |

-

|

| 84 |

-

url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/tasks/car.jpg?download=true"

|

| 85 |

-

image = Image.open(requests.get(url, stream=True).raw)

|

| 86 |

-

|

| 87 |

-

def run_example(task_prompt, text_input=None):

|

| 88 |

-

if text_input is None:

|

| 89 |

-

prompt = task_prompt

|

| 90 |

-

else:

|

| 91 |

-

prompt = task_prompt + text_input

|

| 92 |

-

inputs = processor(text=prompt, images=image, return_tensors="pt")

|

| 93 |

-

generated_ids = model.generate(

|

| 94 |

-

input_ids=inputs["input_ids"],

|

| 95 |

-

pixel_values=inputs["pixel_values"],

|

| 96 |

-

max_new_tokens=1024,

|

| 97 |

-

num_beams=3

|

| 98 |

-

)

|

| 99 |

-

generated_text = processor.batch_decode(generated_ids, skip_special_tokens=False)[0]

|

| 100 |

-

|

| 101 |

-

parsed_answer = processor.post_process_generation(generated_text, task=task_prompt, image_size=(image.width, image.height))

|

| 102 |

-

|

| 103 |

-

print(parsed_answer)

|

| 104 |

```

|

| 105 |

-

</details>

|

| 106 |

-

|

| 107 |

-

Here are the tasks `Florence-2` could perform:

|

| 108 |

-

|

| 109 |

-

<details>

|

| 110 |

-

<summary> Click to expand </summary>

|

| 111 |

-

|

| 112 |

-

|

| 113 |

-

|

| 114 |

-

### Caption

|

| 115 |

-

```python

|

| 116 |

-

prompt = "<CAPTION>"

|

| 117 |

-

run_example(prompt)

|

| 118 |

-

```

|

| 119 |

-

|

| 120 |

-

### Detailed Caption

|

| 121 |

-

```python

|

| 122 |

-

prompt = "<DETAILED_CAPTION>"

|

| 123 |

-

run_example(prompt)

|

| 124 |

-

```

|

| 125 |

-

|

| 126 |

-

### More Detailed Caption

|

| 127 |

-

```python

|

| 128 |

-

prompt = "<MORE_DETAILED_CAPTION>"

|

| 129 |

-

run_example(prompt)

|

| 130 |

-

```

|

| 131 |

-

|

| 132 |

-

### Caption to Phrase Grounding

|

| 133 |

-

caption to phrase grounding task requires additional text input, i.e. caption.

|

| 134 |

-

|

| 135 |

-

Caption to phrase grounding results format:

|

| 136 |

-

{'\<CAPTION_TO_PHRASE_GROUNDING>': {'bboxes': [[x1, y1, x2, y2], ...], 'labels': ['', '', ...]}}

|

| 137 |

-

```python

|

| 138 |

-

task_prompt = "<CAPTION_TO_PHRASE_GROUNDING>"

|

| 139 |

-

results = run_example(task_prompt, text_input="A green car parked in front of a yellow building.")

|

| 140 |

-

```

|

| 141 |

-

|

| 142 |

-

### Object Detection

|

| 143 |

-

|

| 144 |

-

OD results format:

|

| 145 |

-

{'\<OD>': {'bboxes': [[x1, y1, x2, y2], ...],

|

| 146 |

-

'labels': ['label1', 'label2', ...]} }

|

| 147 |

-

|

| 148 |

-

```python

|

| 149 |

-

prompt = "<OD>"

|

| 150 |

-

run_example(prompt)

|

| 151 |

-

```

|

| 152 |

-

|

| 153 |

-

### Dense Region Caption

|

| 154 |

-

Dense region caption results format:

|

| 155 |

-

{'\<DENSE_REGION_CAPTION>' : {'bboxes': [[x1, y1, x2, y2], ...],

|

| 156 |

-

'labels': ['label1', 'label2', ...]} }

|

| 157 |

-

```python

|

| 158 |

-

prompt = "<DENSE_REGION_CAPTION>"

|

| 159 |

-

run_example(prompt)

|

| 160 |

-

```

|

| 161 |

-

|

| 162 |

-

### Region proposal

|

| 163 |

-

Dense region caption results format:

|

| 164 |

-

{'\<REGION_PROPOSAL>': {'bboxes': [[x1, y1, x2, y2], ...],

|

| 165 |

-

'labels': ['', '', ...]}}

|

| 166 |

-

```python

|

| 167 |

-

prompt = "<REGION_PROPOSAL>"

|

| 168 |

-

run_example(prompt)

|

| 169 |

-

```

|

| 170 |

-

|

| 171 |

-

|

| 172 |

-

### OCR

|

| 173 |

-

|

| 174 |

-

```python

|

| 175 |

-

prompt = "<OCR>"

|

| 176 |

-

run_example(prompt)

|

| 177 |

-

```

|

| 178 |

-

|

| 179 |

-

### OCR with Region

|

| 180 |

-

OCR with region output format:

|

| 181 |

-

{'\<OCR_WITH_REGION>': {'quad_boxes': [[x1, y1, x2, y2, x3, y3, x4, y4], ...], 'labels': ['text1', ...]}}

|

| 182 |

-

```python

|

| 183 |

-

prompt = "<OCR_WITH_REGION>"

|

| 184 |

-

run_example(prompt)

|

| 185 |

-

```

|

| 186 |

-

|

| 187 |

-

for More detailed examples, please refer to [notebook](https://huggingface.co/microsoft/Florence-2-large/blob/main/sample_inference.ipynb)

|

| 188 |

-

</details>

|

| 189 |

-

|

| 190 |

-

# Benchmarks

|

| 191 |

-

|

| 192 |

-

## Florence-2 Zero-shot performance

|

| 193 |

-

|

| 194 |

-

The following table presents the zero-shot performance of generalist vision foundation models on image captioning and object detection evaluation tasks. These models have not been exposed to the training data of the evaluation tasks during their training phase.

|

| 195 |

-

|

| 196 |

-

| Method | #params | COCO Cap. test CIDEr | NoCaps val CIDEr | TextCaps val CIDEr | COCO Det. val2017 mAP |

|

| 197 |

-

|--------|---------|----------------------|------------------|--------------------|-----------------------|

|

| 198 |

-

| Flamingo | 80B | 84.3 | - | - | - |

|

| 199 |

-

| Florence-2-base| 0.23B | 133.0 | 118.7 | 70.1 | 34.7 |

|

| 200 |

-

| Florence-2-large| 0.77B | 135.6 | 120.8 | 72.8 | 37.5 |

|

| 201 |

-

|

| 202 |

-

|

| 203 |

-

The following table continues the comparison with performance on other vision-language evaluation tasks.

|

| 204 |

-

|

| 205 |

-

| Method | Flickr30k test R@1 | Refcoco val Accuracy | Refcoco test-A Accuracy | Refcoco test-B Accuracy | Refcoco+ val Accuracy | Refcoco+ test-A Accuracy | Refcoco+ test-B Accuracy | Refcocog val Accuracy | Refcocog test Accuracy | Refcoco RES val mIoU |

|

| 206 |

-

|--------|----------------------|----------------------|-------------------------|-------------------------|-----------------------|--------------------------|--------------------------|-----------------------|------------------------|----------------------|

|

| 207 |

-

| Kosmos-2 | 78.7 | 52.3 | 57.4 | 47.3 | 45.5 | 50.7 | 42.2 | 60.6 | 61.7 | - |

|

| 208 |

-

| Florence-2-base | 83.6 | 53.9 | 58.4 | 49.7 | 51.5 | 56.4 | 47.9 | 66.3 | 65.1 | 34.6 |

|

| 209 |

-

| Florence-2-large | 84.4 | 56.3 | 61.6 | 51.4 | 53.6 | 57.9 | 49.9 | 68.0 | 67.0 | 35.8 |

|

| 210 |

-

|

| 211 |

|

|

|

|

| 212 |

|

| 213 |

-

##

|

| 214 |

|

| 215 |

-

|

| 216 |

-

|

| 217 |

-

The table below compares the performance of specialist and generalist models on various captioning and Visual Question Answering (VQA) tasks. Specialist models are fine-tuned specifically for each task, whereas generalist models are fine-tuned in a task-agnostic manner across all tasks. The symbol "▲" indicates the usage of external OCR as input.

|

| 218 |

-

|

| 219 |

-

| Method | # Params | COCO Caption Karpathy test CIDEr | NoCaps val CIDEr | TextCaps val CIDEr | VQAv2 test-dev Acc | TextVQA test-dev Acc | VizWiz VQA test-dev Acc |

|

| 220 |

-

|----------------|----------|-----------------------------------|------------------|--------------------|--------------------|----------------------|-------------------------|

|

| 221 |

-

| **Specialist Models** | | | | | | | |

|

| 222 |

-

| CoCa | 2.1B | 143.6 | 122.4 | - | 82.3 | - | - |

|

| 223 |

-

| BLIP-2 | 7.8B | 144.5 | 121.6 | - | 82.2 | - | - |

|

| 224 |

-

| GIT2 | 5.1B | 145.0 | 126.9 | 148.6 | 81.7 | 67.3 | 71.0 |

|

| 225 |

-

| Flamingo | 80B | 138.1 | - | - | 82.0 | 54.1 | 65.7 |

|

| 226 |

-

| PaLI | 17B | 149.1 | 127.0 | 160.0▲ | 84.3 | 58.8 / 73.1▲ | 71.6 / 74.4▲ |

|

| 227 |

-

| PaLI-X | 55B | 149.2 | 126.3 | 147.0 / 163.7▲ | 86.0 | 71.4 / 80.8▲ | 70.9 / 74.6▲ |

|

| 228 |

-

| **Generalist Models** | | | | | | | |

|

| 229 |

-

| Unified-IO | 2.9B | - | 100.0 | - | 77.9 | - | 57.4 |

|

| 230 |

-

| Florence-2-base-ft | 0.23B | 140.0 | 116.7 | 143.9 | 79.7 | 63.6 | 63.6 |

|

| 231 |

-

| Florence-2-large-ft | 0.77B | 143.3 | 124.9 | 151.1 | 81.7 | 73.5 | 72.6 |

|

| 232 |

-

|

| 233 |

-

|

| 234 |

-

| Method | # Params | COCO Det. val2017 mAP | Flickr30k test R@1 | RefCOCO val Accuracy | RefCOCO test-A Accuracy | RefCOCO test-B Accuracy | RefCOCO+ val Accuracy | RefCOCO+ test-A Accuracy | RefCOCO+ test-B Accuracy | RefCOCOg val Accuracy | RefCOCOg test Accuracy | RefCOCO RES val mIoU |

|

| 235 |

-

|----------------------|----------|-----------------------|--------------------|----------------------|-------------------------|-------------------------|------------------------|---------------------------|---------------------------|------------------------|-----------------------|------------------------|

|

| 236 |

-

| **Specialist Models** | | | | | | | | | | | | |

|

| 237 |

-

| SeqTR | - | - | - | 83.7 | 86.5 | 81.2 | 71.5 | 76.3 | 64.9 | 74.9 | 74.2 | - |

|

| 238 |

-

| PolyFormer | - | - | - | 90.4 | 92.9 | 87.2 | 85.0 | 89.8 | 78.0 | 85.8 | 85.9 | 76.9 |

|

| 239 |

-

| UNINEXT | 0.74B | 60.6 | - | 92.6 | 94.3 | 91.5 | 85.2 | 89.6 | 79.8 | 88.7 | 89.4 | - |

|

| 240 |

-

| Ferret | 13B | - | - | 89.5 | 92.4 | 84.4 | 82.8 | 88.1 | 75.2 | 85.8 | 86.3 | - |

|

| 241 |

-

| **Generalist Models** | | | | | | | | | | | | |

|

| 242 |

-

| UniTAB | - | - | - | 88.6 | 91.1 | 83.8 | 81.0 | 85.4 | 71.6 | 84.6 | 84.7 | - |

|

| 243 |

-

| Florence-2-base-ft | 0.23B | 41.4 | 84.0 | 92.6 | 94.8 | 91.5 | 86.8 | 91.7 | 82.2 | 89.8 | 82.2 | 78.0 |

|

| 244 |

-

| Florence-2-large-ft| 0.77B | 43.4 | 85.2 | 93.4 | 95.3 | 92.0 | 88.3 | 92.9 | 83.6 | 91.2 | 91.7 | 80.5 |

|

| 245 |

-

|

| 246 |

|

| 247 |

## BibTex and citation info

|

| 248 |

|

| 249 |

```

|

| 250 |

-

@

|

| 251 |

-

|

| 252 |

-

|

| 253 |

-

|

| 254 |

-

year={2023}

|

| 255 |

}

|

| 256 |

```

|

|

|

|

| 1 |

---

|

| 2 |

license: mit

|

| 3 |

+

license_link: https://huggingface.co/microsoft/Florence-2-base-ft/resolve/main/LICENSE

|

| 4 |

pipeline_tag: image-text-to-text

|

| 5 |

tags:

|

| 6 |

- vision

|

| 7 |

+

- ocr

|

| 8 |

+

- segmentation

|

| 9 |

---

|

| 10 |

+

# TF-ID: Table/Figure IDentifier for academic papers

|

|

|

|

| 11 |

|

| 12 |

## Model Summary

|

| 13 |

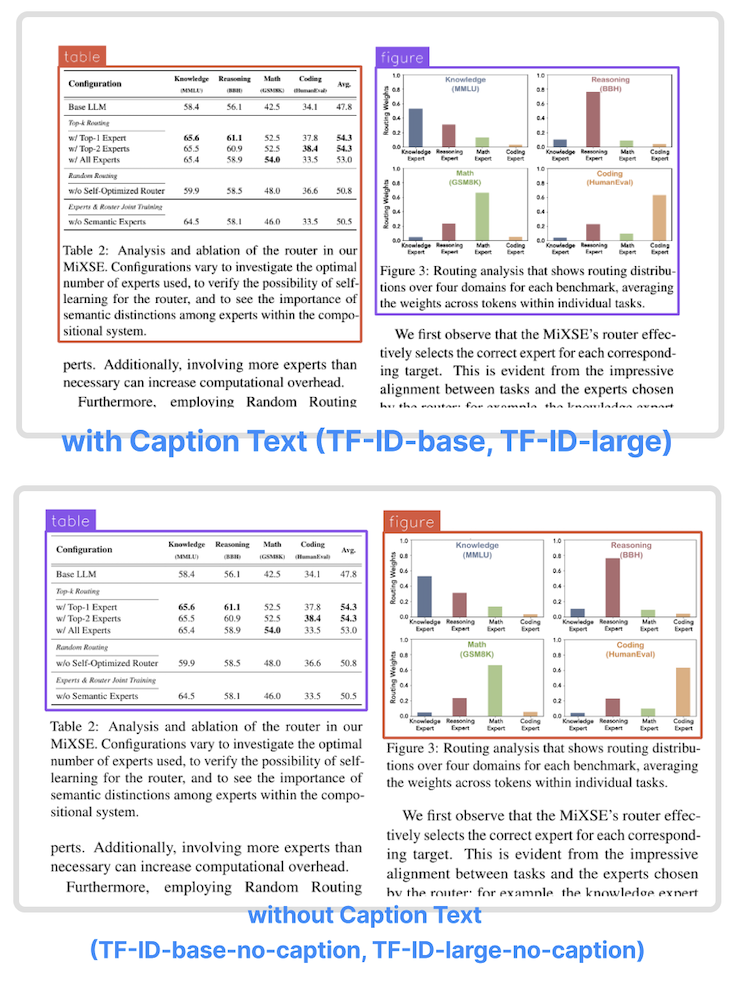

|

| 14 |

+

TF-ID (Table/Figure IDentifier) is a family of object detection models, created by [Yifei Hu](https://x.com/hu_yifei), that are finetuned to extract tables and figures from academic papers. The models come in four versions:

|

| 15 |

+

| Model | Model size | Model Description |

|

| 16 |

+

| ------- | ------------- | ------------- |

|

| 17 |

+

| TF-ID-base[[HF]](https://huggingface.co/yifeihu/TF-ID-base) | 0.23B | Extract tables/figures and their caption text |

|

| 18 |

+

| TF-ID-large[[HF]](https://huggingface.co/yifeihu/TF-ID-large) | 0.77B | Extract tables/figures and their caption text |

|

| 19 |

+

| TF-ID-base-no-caption[[HF]](https://huggingface.co/yifeihu/TF-ID-base-no-caption) | 0.23B | Extract tables/figures without caption text |

|

| 20 |

+

| TF-ID-large-no-caption[[HF]](https://huggingface.co/yifeihu/TF-ID-large-no-caption) | 0.77B | Extract tables/figures without caption text |

|

| 21 |

+

All TF-ID models are finetuned from [microsoft/Florence-2](https://huggingface.co/microsoft/Florence-2-large-ft) checkpoints.

|

| 22 |

+

|

| 23 |

+

The models were finetuned with papers from Hugging Face Daily Papers. All bounding boxes are manually annotated and checked by humans.

|

| 24 |

|

| 25 |

+

TF-ID models take an image of a single paper page as the input, and return bounding boxes for all tables and figures in the given page.

|

| 26 |

|

| 27 |

+

TF-ID-base and TF-ID-large draw bounding boxes around tables/figures and their caption text.

|

|

|

|

|

|

|

| 28 |

|

| 29 |

+

TF-ID-base-no-caption and TF-ID-large-no-caption draw bounding boxes around tables/figures without their caption text.

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

Object Detection results format:

|

| 34 |

+

{'\<OD>': {'bboxes': [[x1, y1, x2, y2], ...],

|

| 35 |

+

'labels': ['label1', 'label2', ...]} }
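
The result format above stores boxes and labels as two parallel lists. As a minimal sketch of how such a result could be consumed, the snippet below groups boxes by label. The helper name `group_boxes_by_label` and the label strings `"figure"` and `"table"` are assumptions made for illustration; check the labels your model actually returns.

```python
from collections import defaultdict


def group_boxes_by_label(parsed_answer, task="<OD>"):
    """Return {label: [[x1, y1, x2, y2], ...]} from a parsed detection result."""
    detections = parsed_answer[task]
    grouped = defaultdict(list)
    # bboxes and labels are parallel lists: the i-th label describes the i-th box.
    for box, label in zip(detections["bboxes"], detections["labels"]):
        grouped[label].append(box)
    return dict(grouped)


# Hypothetical result for one page; the label names are placeholders.
example = {
    "<OD>": {
        "bboxes": [[34.0, 50.5, 580.2, 310.0], [40.1, 400.0, 570.9, 720.3]],
        "labels": ["figure", "table"],
    }
}
print(group_boxes_by_label(example))
```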

|

| 36 |

+

|

| 37 |

+

## Benchmarks

|

| 38 |

+

|

| 39 |

+

We tested the models on paper pages outside the training dataset. The papers are a subset of Hugging Face Daily Papers.

|

| 40 |

+

|

| 41 |

+

An output counts as correct only if the model draws correct bounding boxes for every table/figure in the given page.

|

| 42 |

+

|

| 43 |

+

| Model | Total Images | Correct Output | Success Rate |

|

| 44 |

+

|---------------------------------------------------------------|--------------|----------------|--------------|

|

| 45 |

+

| TF-ID-base[[HF]](https://huggingface.co/yifeihu/TF-ID-base) | 258 | 251 | 97.29% |

|

| 46 |

+

| TF-ID-large[[HF]](https://huggingface.co/yifeihu/TF-ID-large) | 258 | 253 | 98.06% |

|

| 47 |

+

|

| 48 |

+

| Model | Total Images | Correct Output | Success Rate |

|

| 49 |

+

|---------------------------------------------------------------|--------------|----------------|--------------|

|

| 50 |

+

| TF-ID-base-no-caption[[HF]](https://huggingface.co/yifeihu/TF-ID-base-no-caption) | 261 | 253 | 96.93% |

|

| 51 |

+

| TF-ID-large-no-caption[[HF]](https://huggingface.co/yifeihu/TF-ID-large-no-caption) | 261 | 254 | 97.32% |
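
The success rate in both tables is the number of correct outputs divided by the total number of test images. The short sketch below recomputes the percentages from the counts listed above; the dictionary layout and the function name `success_rate` are illustrative choices, not part of the released code.

```python
# (correct outputs, total images) per model, copied from the tables above.
results = {
    "TF-ID-base": (251, 258),
    "TF-ID-large": (253, 258),
    "TF-ID-base-no-caption": (253, 261),
    "TF-ID-large-no-caption": (254, 261),
}


def success_rate(correct, total):
    """Return the share of fully correct pages as a percentage with two decimals."""
    return round(100 * correct / total, 2)


for name, (correct, total) in results.items():
    print(f"{name}: {success_rate(correct, total):.2f}%")
```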

|

| 52 |

+

|

| 53 |

+

Depending on the use case, some "incorrect" outputs may still be usable. For example, the model may draw two bounding boxes for one figure that has two child components.
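
If a downstream use case needs a single region for such a figure, one possible workaround is to replace the boxes with their enclosing box. This is only a sketch under that assumption: `enclosing_box` is a hypothetical helper, not part of TF-ID or Florence-2, and deciding which boxes belong to the same figure is left to the caller.

```python
def enclosing_box(boxes):
    """Return the smallest [x1, y1, x2, y2] box that contains every input box."""
    if not boxes:
        raise ValueError("at least one box is required")
    xs1, ys1, xs2, ys2 = zip(*boxes)
    return [min(xs1), min(ys1), max(xs2), max(ys2)]


# Two hypothetical boxes covering the left and right halves of one figure.
left_half = [10, 20, 100, 200]
right_half = [110, 25, 220, 190]
print(enclosing_box([left_half, right_half]))
```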

|

| 54 |

|

| 55 |

## How to Get Started with the Model

|

| 56 |

|

|

|

|

| 58 |

|

| 59 |

```python

|

| 60 |

import requests

|

|

|

|

| 61 |

from PIL import Image

|

| 62 |

from transformers import AutoProcessor, AutoModelForCausalLM

|

| 63 |

|

| 64 |

+

model = AutoModelForCausalLM.from_pretrained("yifeihu/TF-ID-large-no-caption", trust_remote_code=True)

|

| 65 |

+

processor = AutoProcessor.from_pretrained("yifeihu/TF-ID-large-no-caption", trust_remote_code=True)

|

|

|

|

| 66 |

|

| 67 |

prompt = "<OD>"

|

| 68 |

|

| 69 |

+

url = "https://huggingface.co/yifeihu/TF-ID-base/resolve/main/arxiv_2305_10853_5.png?download=true"

|

| 70 |

image = Image.open(requests.get(url, stream=True).raw)

|

| 71 |

|

| 72 |

inputs = processor(text=prompt, images=image, return_tensors="pt")

|

|

|

|

| 83 |

parsed_answer = processor.post_process_generation(generated_text, task="<OD>", image_size=(image.width, image.height))

|

| 84 |

|

| 85 |

print(parsed_answer)

|

| 86 |

```
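
To extract the detected regions from the page, the floating-point boxes in `parsed_answer` first need to become integer pixel rectangles that stay inside the image. The snippet below is a minimal sketch of that step; `to_crop_boxes` is a hypothetical helper, and the example values and label names are made up for illustration.

```python
import math


def to_crop_boxes(parsed_answer, image_size, task="<OD>"):
    """Convert detections to (label, (left, top, right, bottom)) integer rectangles."""
    width, height = image_size
    detections = parsed_answer[task]
    crops = []
    for (x1, y1, x2, y2), label in zip(detections["bboxes"], detections["labels"]):
        # Round outward so the crop never cuts into the detected region,
        # then clamp to the image bounds.
        left = max(0, math.floor(x1))
        top = max(0, math.floor(y1))
        right = min(width, math.ceil(x2))
        bottom = min(height, math.ceil(y2))
        # Skip degenerate boxes that have no area after clamping.
        if right > left and bottom > top:
            crops.append((label, (left, top, right, bottom)))
    return crops


# Hypothetical parsed result for a 600x800 page image.
sample = {
    "<OD>": {
        "bboxes": [[-3.2, 50.5, 580.2, 310.0], [40.1, 400.0, 570.9, 805.7]],
        "labels": ["figure", "table"],
    }
}
print(to_crop_boxes(sample, image_size=(600, 800)))
```

With a PIL image, each rectangle can then be passed to `image.crop(box)`, which accepts a `(left, upper, right, lower)` tuple.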

|

| 87 |

|

| 88 |

+

To visualize the results, see [this tutorial notebook](https://colab.research.google.com/github/roboflow-ai/notebooks/blob/main/notebooks/how-to-finetune-florence-2-on-detection-dataset.ipynb).

|

| 89 |

|

| 90 |

+

## Finetuning Code and Dataset

|

| 91 |

|

| 92 |

+

Coming soon!

|

| 93 |

|

| 94 |

## BibTex and citation info

|

| 95 |

|

| 96 |

```

|

| 97 |

+

@misc{TF-ID,

|

| 98 |

+

url={https://huggingface.co/yifeihu/TF-ID-base},

|

| 99 |

+

title={TF-ID: Table/Figure IDentifier for academic papers},

|

| 100 |

+

author={"Yifei Hu"}

|

|

|

|

| 101 |

}

|

| 102 |

```

|