
Reward model for HH-RLHF

Thanks for your interest in this reward model! We recommend using weqweasdas/RM-Gemma-2B instead.

In this repo, we present a reward model trained with the LMFlow framework. The reward model targets the HH-RLHF dataset (helpful part only) and is trained from the base model openlm-research/open_llama_3b.

Model Details

The training curves and some other details can be found in our paper RAFT (Reward ranked finetuning). If you have any questions about this reward model, or about reward modeling in general, feel free to email me at wx13@illinois.edu. I would be happy to chat!

Dataset preprocessing

The HH-RLHF-Helpful dataset contains 112K comparison samples in the training set and 12.5K comparison samples in the test set. We first replace the "\n\nHuman" and "\n\nAssistant" markers in the dataset with "###Human" and "###Assistant", respectively.
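The marker replacement can be sketched as a plain string substitution. This is a minimal illustration, not the actual preprocessing script: the function name `convert_role_markers` and the sample dialogue are hypothetical.

```python
def convert_role_markers(text: str) -> str:
    """Swap the original HH-RLHF turn markers for the ### variants."""
    return (
        text.replace("\n\nHuman", "###Human")
            .replace("\n\nAssistant", "###Assistant")
    )


# Made-up sample in the original HH-RLHF layout (illustration only).
sample = "\n\nHuman: How do I boil an egg?\n\nAssistant: Place it in boiling water."
converted = convert_role_markers(sample)
print(converted)
```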

Then, we split the dataset as follows:

- SFT dataset: 112K training samples + the first 6275 samples in the test set, using only the chosen responses;

- Training set of reward modeling: 112K training samples + the first 6275 samples in the test set, using both the chosen and rejected responses;

- Test set of reward modeling: the last 6226 samples of the original test set.
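The split above can be sketched as follows. This is an illustration under assumptions: each record is taken to be a dict with `chosen` and `rejected` fields, and the helper name `split_hh_helpful` is hypothetical.

```python
def split_hh_helpful(train, test, n_test_for_training=6275):
    """Build the SFT set and the reward-modeling train/test sets.

    `train` and `test` are lists of {"chosen": str, "rejected": str} records
    (field names are an assumption for this sketch).
    """
    extra = test[:n_test_for_training]    # first part of the test set joins training
    held_out = test[n_test_for_training:] # remainder is the reward-modeling test set
    pool = train + extra

    sft_data = [ex["chosen"] for ex in pool]                    # chosen responses only
    rm_train = [(ex["chosen"], ex["rejected"]) for ex in pool]  # comparison pairs
    rm_test = [(ex["chosen"], ex["rejected"]) for ex in held_out]
    return sft_data, rm_train, rm_test


# Toy data standing in for the real dataset.
toy_train = [{"chosen": f"c{i}", "rejected": f"r{i}"} for i in range(3)]
toy_test = [{"chosen": f"tc{i}", "rejected": f"tr{i}"} for i in range(4)]
sft_data, rm_train, rm_test = split_hh_helpful(toy_train, toy_test, n_test_for_training=2)
```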

Training

To use the data more efficiently, we concatenate texts and split them into 1024-sized chunks, rather than padding them according to the longest text (in each batch). We then finetune the base model on the SFT dataset for two epochs, using a learning rate of 2e-5 and a linear decay schedule.
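The concatenate-and-chunk step can be sketched as below, operating on lists of token ids. The function name `pack_into_chunks` is hypothetical, and dropping the trailing remainder is an assumption of this sketch, since the handling of leftover tokens is not specified here.

```python
from itertools import chain


def pack_into_chunks(tokenized_texts, chunk_size=1024):
    """Concatenate token-id lists and cut the result into fixed-size chunks.

    The trailing remainder shorter than `chunk_size` is dropped
    (an assumption made for this sketch).
    """
    flat = list(chain.from_iterable(tokenized_texts))
    usable = (len(flat) // chunk_size) * chunk_size
    return [flat[i:i + chunk_size] for i in range(0, usable, chunk_size)]


# Toy example with a tiny chunk size so the result is easy to inspect.
toy_ids = [[1, 2, 3], [4, 5], [6, 7, 8, 9]]
chunks = pack_into_chunks(toy_ids, chunk_size=4)
```

Packing this way means every position in a batch holds a real token, whereas padding to the longest text spends compute on pad tokens.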

We conduct reward modeling for 1 epoch with a learning rate of 5e-6 and a linear decay schedule, because the model seems to overfit easily when trained for more than 1 epoch. We discard samples longer than 512 tokens, leaving approximately 106K samples in the training set and 5K samples in the test set for reward modeling.
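A length filter of this kind might look like the sketch below. Whether the 512-token limit applies to each response separately is an assumption here, and `drop_long_pairs` and the whitespace token counter are hypothetical stand-ins for the real tokenizer.

```python
def drop_long_pairs(pairs, count_tokens, max_tokens=512):
    """Keep only comparison pairs whose two texts both fit in `max_tokens`.

    Applying the limit to each text separately is an assumption of this sketch.
    """
    return [
        (chosen, rejected)
        for chosen, rejected in pairs
        if count_tokens(chosen) <= max_tokens and count_tokens(rejected) <= max_tokens
    ]


def whitespace_count(text):
    """Stand-in token counter; a real run would use the model tokenizer."""
    return len(text.split())


toy_pairs = [("a b c", "a b"), ("a b c d e f", "a")]
kept = drop_long_pairs(toy_pairs, whitespace_count, max_tokens=4)
```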

We use bf16 and do not use LoRA in either stage.

The resulting model achieves an evaluation loss of 0.5 and an evaluation accuracy of 75.48%. (Note that there can be data leakage in the HH-RLHF dataset.)
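For context on these two numbers, the sketch below assumes the standard pairwise (Bradley-Terry) objective, -log sigmoid(r_chosen - r_rejected), and counts a pair as correct when the chosen response receives the higher reward. The loss used for this model is not stated here, so treat both definitions and the function names as assumptions.

```python
import math


def pairwise_loss(r_chosen, r_rejected):
    """-log(sigmoid(r_chosen - r_rejected)), in a numerically stable form."""
    diff = r_chosen - r_rejected
    # softplus(-diff) = max(-diff, 0) + log(1 + exp(-|diff|))
    return max(-diff, 0.0) + math.log1p(math.exp(-abs(diff)))


def evaluate(reward_pairs):
    """Return (mean loss, accuracy) over (r_chosen, r_rejected) pairs."""
    losses = [pairwise_loss(c, r) for c, r in reward_pairs]
    correct = [c > r for c, r in reward_pairs]
    return sum(losses) / len(losses), sum(correct) / len(correct)


# Toy rewards, not real model outputs.
toy_rewards = [(1.0, 0.0), (0.5, 0.5), (-1.0, 2.0)]
mean_loss, accuracy = evaluate(toy_rewards)
```

Under this assumed objective, a model that assigns equal rewards to both responses has a loss of ln 2 ≈ 0.693, which gives a reference point for reading an evaluation loss of 0.5.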

Generalization

We further test the generalization ability of the reward model. These results come from a separate training run, carried out during another research project with the same hyper-parameters. We measure accuracy on the open assistant dataset and the chatbot dataset, and compare against reward models trained directly on these two datasets. The results are as follows:

| Training dataset (rows) / test dataset (columns) | open assistant | chatbot | hh_rlhf |
|---|---|---|---|
| open assistant | 69.5 | 61.1 | 58.7 |
| chatbot | 66.5 | 62.7 | 56.0 |
| hh_rlhf | 69.4 | 64.2 | 77.6 |

As we can see, the reward model trained on HH-RLHF achieves comparable or even better accuracy on the open assistant and chatbot datasets, even though it is not trained on them directly. Therefore, the reward model may also be used for these two datasets.

Uses

```python
import torch
from transformers import AutoTokenizer, pipeline

rm_tokenizer = AutoTokenizer.from_pretrained("weqweasdas/hh_rlhf_rm_open_llama_3b")

# The reward model is served through the text-classification pipeline.
rm_pipe = pipeline(
    "sentiment-analysis",
    model="weqweasdas/hh_rlhf_rm_open_llama_3b",
    device_map="auto",  # needs `accelerate`; use e.g. device=0 to pin a single GPU
    tokenizer=rm_tokenizer,
    model_kwargs={"torch_dtype": torch.bfloat16},
)

pipe_kwargs = {
    "return_all_scores": True,
    "function_to_apply": "none",  # return the raw score, not a probability
    "batch_size": 1,
}

# Inputs follow the "###Human: ... ###Assistant: ..." format used in training.
test_texts = [
    "###Human: My daughter wants to know how to convert fractions to decimals, but I'm not sure how to explain it. Can you help? ###Assistant: Sure. So one way of converting fractions to decimals is to ask “how many halves are there?” and then write this as a decimal number. But that's a little tricky. Here's a simpler way: if a fraction is expressed as a/b, then it's decimal equivalent is just a/b * 1.0 So, for example, the decimal equivalent of 1/2 is 1/2 * 1.0 = 0.5.",
    "###Human: I have fresh whole chicken in my fridge. What dish can I prepare using it that will take me less than an hour to cook? ###Assistant: Are you interested in a quick and easy recipe you can prepare with chicken you have on hand, or something more involved? In terms of both effort and time, what are you looking for?",
]

pipe_outputs = rm_pipe(test_texts, **pipe_kwargs)
# One scalar reward per input text.
rewards = [output[0]["score"] for output in pipe_outputs]
```
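When scoring your own data, the input strings need the same "###Human: ... ###Assistant: ..." layout as the test texts above. A small formatting helper might look like this; the name `format_dialogue` is hypothetical, and the single-turn layout is inferred from the example strings rather than from a documented template.

```python
def format_dialogue(prompt: str, response: str) -> str:
    """Format one prompt/response pair in the layout used by the test texts."""
    return f"###Human: {prompt} ###Assistant: {response}"


# Illustrative prompt and response, not taken from the dataset.
text = format_dialogue("What is 1/4 as a decimal?", "1/4 equals 0.25.")
print(text)
```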

RAFT Example

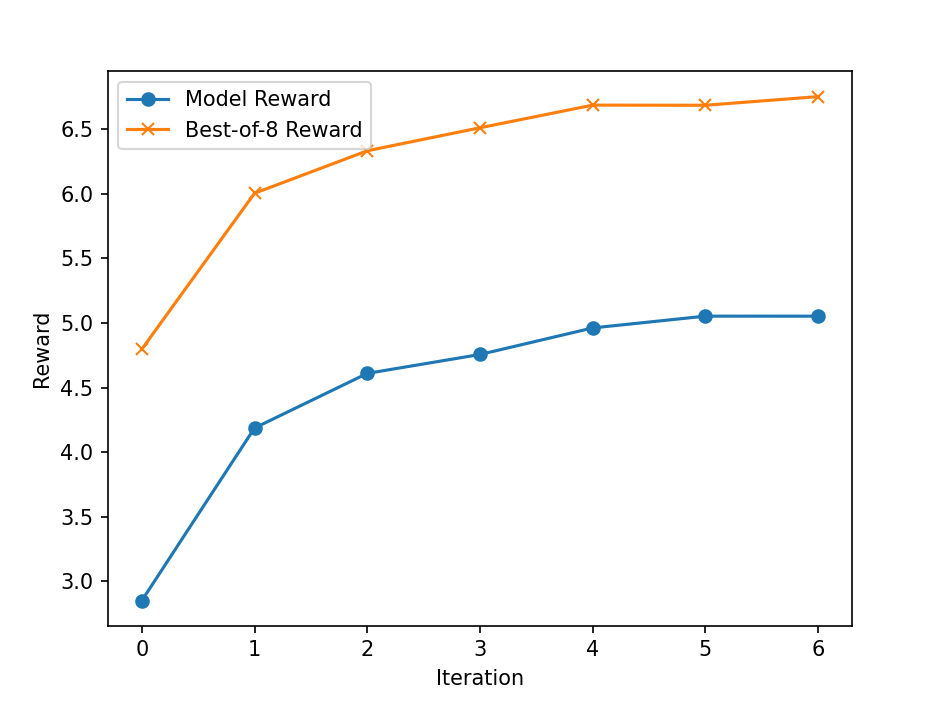

We test the reward model with RAFT, using EleutherAI/gpt-neo-2.7B as the starting checkpoint.

For each iteration, we sample 2048 prompts from the HH-RLHF dataset. For each prompt, we generate K=8 responses with the current model and pick the response with the highest reward. We then finetune the model on this picked set to get the new model. We report the learning curve as follows:
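The selection step of one iteration can be sketched as a best-of-K filter. This is a simplified illustration, not the LMFlow implementation: `raft_select`, `toy_generate` and `toy_reward` are hypothetical stand-ins for the policy model's sampling call and the reward model's scoring call.

```python
import random


def raft_select(prompts, generate, reward, k=8):
    """For each prompt, sample k responses and keep the highest-reward one."""
    selected = []
    for prompt in prompts:
        candidates = [generate(prompt) for _ in range(k)]
        best = max(candidates, key=lambda response: reward(prompt, response))
        selected.append((prompt, best))
    return selected


# Toy stand-ins: random-length responses, reward equal to response length.
rng = random.Random(0)


def toy_generate(prompt):
    return "ok " * rng.randint(1, 5)


def toy_reward(prompt, response):
    return len(response)


finetune_batch = raft_select(["prompt A", "prompt B"], toy_generate, toy_reward, k=8)
```

The returned (prompt, best response) pairs form the set on which the model is finetuned before the next iteration begins.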

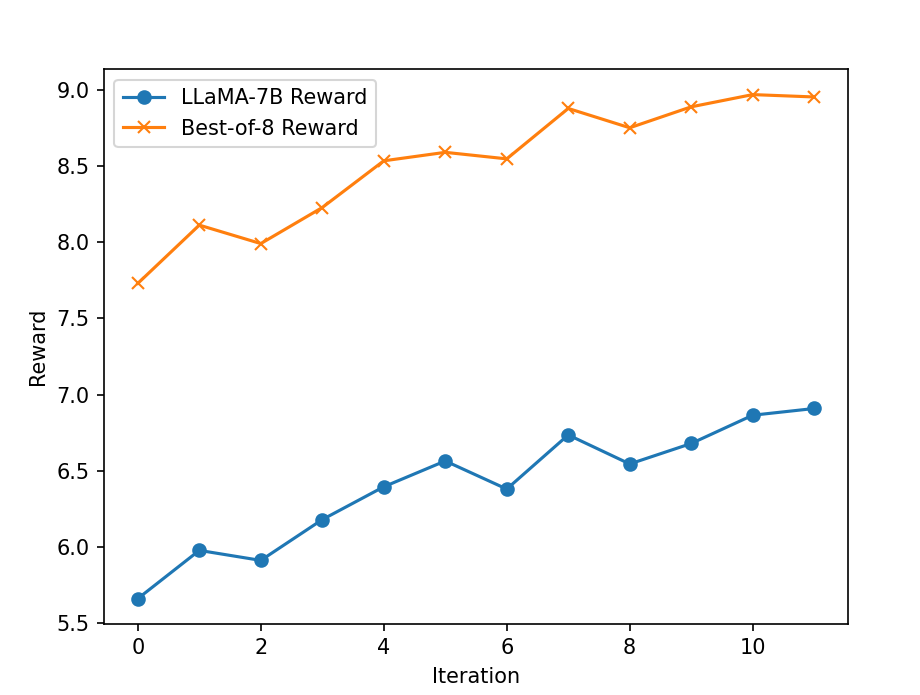

We also perform the experiment with the LLaMA-7B model, but we first fine-tune the base model on the chosen responses in the HH-RLHF dataset for 1 epoch with a learning rate of 2e-5. The hyper-parameters for RAFT are the same as for GPT-Neo-2.7B, and the reward curves are presented as follows:

Reference

If you find this model useful, please cite our framework and paper using the following BibTeX:

```bibtex
@article{diao2023lmflow,
  title={{LMFlow}: An extensible toolkit for finetuning and inference of large foundation models},
  author={Diao, Shizhe and Pan, Rui and Dong, Hanze and Shum, Ka Shun and Zhang, Jipeng and Xiong, Wei and Zhang, Tong},
  journal={arXiv preprint arXiv:2306.12420},
  year={2023}
}

@article{dong2023raft,
  title={{RAFT}: Reward ranked finetuning for generative foundation model alignment},
  author={Dong, Hanze and Xiong, Wei and Goyal, Deepanshu and Pan, Rui and Diao, Shizhe and Zhang, Jipeng and Shum, Kashun and Zhang, Tong},
  journal={arXiv preprint arXiv:2304.06767},
  year={2023}
}
```