huwenxing

committed on

Commit

·

a9522ba

1

Parent(s):

83fdb73

First model version

- README.md +195 -0

- config.json +32 -0

- configuration_internlm.py +121 -0

- generation_config.json +7 -0

- modeling_internlm.py +1086 -0

- pytorch_model-00001-of-00008.bin +3 -0

- pytorch_model-00002-of-00008.bin +3 -0

- pytorch_model-00003-of-00008.bin +3 -0

- pytorch_model-00004-of-00008.bin +3 -0

- pytorch_model-00005-of-00008.bin +3 -0

- pytorch_model-00006-of-00008.bin +3 -0

- pytorch_model-00007-of-00008.bin +3 -0

- pytorch_model-00008-of-00008.bin +3 -0

- pytorch_model.bin.index.json +458 -0

- special_tokens_map.json +6 -0

- tokenization_internlm.py +242 -0

- tokenizer.model +3 -0

- tokenizer_config.json +15 -0

README.md

ADDED

|

@@ -0,0 +1,195 @@

| 1 |

+

---

|

| 2 |

+

pipeline_tag: text-generation

|

| 3 |

+

---

|

| 4 |

+

# InternLM

|

| 5 |

+

|

| 6 |

+

<div align="center">

|

| 7 |

+

|

| 8 |

+

<img src="https://github.com/InternLM/InternLM/assets/22529082/b9788105-8892-4398-8b47-b513a292378e" width="200"/>

|

| 9 |

+

<div> </div>

|

| 10 |

+

<div align="center">

|

| 11 |

+

<b><font size="5">InternLM</font></b>

|

| 12 |

+

<sup>

|

| 13 |

+

<a href="https://internlm.intern-ai.org.cn/">

|

| 14 |

+

<i><font size="4">HOT</font></i>

|

| 15 |

+

</a>

|

| 16 |

+

</sup>

|

| 17 |

+

<div> </div>

|

| 18 |

+

</div>

|

| 19 |

+

|

| 20 |

+

[](https://github.com/internLM/OpenCompass/)

|

| 21 |

+

|

| 22 |

+

[💻Github Repo](https://github.com/InternLM/InternLM) • [🤔Reporting Issues](https://github.com/InternLM/InternLM/issues/new)

|

| 23 |

+

|

| 24 |

+

</div>

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

## Introduction

|

| 28 |

+

|

| 29 |

+

InternLM has open-sourced a 7 billion parameter base model and a chat model tailored for practical scenarios. The model has the following characteristics:

|

| 30 |

+

- It leverages trillions of high-quality tokens for training to establish a powerful knowledge base.

|

| 31 |

+

- It supports an 8k context window length, enabling longer input sequences and stronger reasoning capabilities.

|

| 32 |

+

- It provides a versatile toolset for users to flexibly build their own workflows.

|

| 33 |

+

|

| 34 |

+

## InternLM-7B

|

| 35 |

+

|

| 36 |

+

### Performance Evaluation

|

| 37 |

+

|

| 38 |

+

We evaluated InternLM comprehensively with the open-source evaluation tool [OpenCompass](https://github.com/internLM/OpenCompass/). The evaluation covered five capability dimensions: disciplinary competence, language competence, knowledge competence, reasoning competence, and comprehension competence. Some of the results are shown below; see the [OpenCompass leaderboard](https://opencompass.org.cn/rank) for more.

|

| 39 |

+

|

| 40 |

+

| Datasets\Models | **InternLM-Chat-7B** | **InternLM-7B** | LLaMA-7B | Baichuan-7B | ChatGLM2-6B | Alpaca-7B | Vicuna-7B |

|

| 41 |

+

| -------------------- | --------------------- | ---------------- | --------- | --------- | ------------ | --------- | ---------- |

|

| 42 |

+

| C-Eval(Val) | 53.2 | 53.4 | 24.2 | 42.7 | 50.9 | 28.9 | 31.2 |

|

| 43 |

+

| MMLU | 50.8 | 51.0 | 35.2* | 41.5 | 46.0 | 39.7 | 47.3 |

|

| 44 |

+

| AGIEval | 42.5 | 37.6 | 20.8 | 24.6 | 39.0 | 24.1 | 26.4 |

|

| 45 |

+

| CommonSenseQA | 75.2 | 59.5 | 65.0 | 58.8 | 60.0 | 68.7 | 66.7 |

|

| 46 |

+

| BUSTM | 74.3 | 50.6 | 48.5 | 51.3 | 55.0 | 48.8 | 62.5 |

|

| 47 |

+

| CLUEWSC | 78.6 | 59.1 | 50.3 | 52.8 | 59.8 | 50.3 | 52.2 |

|

| 48 |

+

| MATH | 6.4 | 7.1 | 2.8 | 3.0 | 6.6 | 2.2 | 2.8 |

|

| 49 |

+

| GSM8K | 34.5 | 31.2 | 10.1 | 9.7 | 29.2 | 6.0 | 15.3 |

|

| 50 |

+

| HumanEval | 14.0 | 10.4 | 14.0 | 9.2 | 9.2 | 9.2 | 11.0 |

|

| 51 |

+

| RACE(High) | 76.3 | 57.4 | 46.9* | 28.1 | 66.3 | 40.7 | 54.0 |

|

| 52 |

+

|

| 53 |

+

- The evaluation results were obtained from [OpenCompass 20230706](https://github.com/internLM/OpenCompass/) (values marked with `*` are taken from the original papers). The evaluation configuration can be found in the configuration files provided by [OpenCompass](https://github.com/internLM/OpenCompass/).

|

| 54 |

+

- The numbers may differ between versions of [OpenCompass](https://github.com/internLM/OpenCompass/), so please refer to the latest evaluation results of [OpenCompass](https://github.com/internLM/OpenCompass/).

|

| 55 |

+

|

| 56 |

+

|

| 57 |

+

**Limitations:** Although we have made efforts to ensure the safety of the model during the training process and to encourage the model to generate text that complies with ethical and legal requirements, the model may still produce unexpected outputs due to its size and probabilistic generation paradigm. For example, the generated responses may contain biases, discrimination, or other harmful content. Please do not propagate such content. We are not responsible for any consequences resulting from the dissemination of harmful information.

|

| 58 |

+

|

| 59 |

+

### Import from Transformers

|

| 60 |

+

To load the InternLM 7B Chat model using Transformers, use the following code:

|

| 61 |

+

```python

|

| 62 |

+

import torch

|

| 63 |

+

from transformers import AutoTokenizer, AutoModelForCausalLM

|

| 64 |

+

tokenizer = AutoTokenizer.from_pretrained("internlm/internlm-chat-7b", trust_remote_code=True)

|

| 65 |

+

# Set `torch_dtype=torch.float16` to load the model in float16; otherwise it is loaded as float32, which may cause an out-of-memory (OOM) error.

|

| 66 |

+

model = AutoModelForCausalLM.from_pretrained("internlm/internlm-chat-7b", torch_dtype=torch.float16, trust_remote_code=True).cuda()

|

| 67 |

+

model = model.eval()

|

| 68 |

+

response, history = model.chat(tokenizer, "hello", history=[])

|

| 69 |

+

print(response)

|

| 70 |

+

# Hello! How can I help you today?

|

| 71 |

+

response, history = model.chat(tokenizer, "please provide three suggestions about time management", history=history)

|

| 72 |

+

print(response)

|

| 73 |

+

# Sure, here are three tips for effective time management:

|

| 74 |

+

#

|

| 75 |

+

# 1. Prioritize tasks based on importance and urgency: Make a list of all your tasks and categorize them into "important and urgent," "important but not urgent," and "not important but urgent." Focus on completing the tasks in the first category before moving on to the others.

|

| 76 |

+

# 2. Use a calendar or planner: Write down deadlines and appointments in a calendar or planner so you don't forget them. This will also help you schedule your time more effectively and avoid overbooking yourself.

|

| 77 |

+

# 3. Minimize distractions: Try to eliminate any potential distractions when working on important tasks. Turn off notifications on your phone, close unnecessary tabs on your computer, and find a quiet place to work if possible.

|

| 78 |

+

#

|

| 79 |

+

# Remember, good time management skills take practice and patience. Start with small steps and gradually incorporate these habits into your daily routine.

|

| 80 |

+

```
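
The comment in the example above about float16 versus float32 can be made concrete with a rough estimate. The sketch below is only illustrative: it assumes a round 7 billion parameters (the exact count is not stated here) and counts the weights alone, ignoring activations and the key/value cache.

```python
# Rough weight-memory estimate. ASSUMED_PARAMS is a round figure chosen for
# illustration, not an exact parameter count for InternLM-7B.
ASSUMED_PARAMS = 7_000_000_000

# Bytes needed to store one parameter in each dtype.
BYTES_PER_PARAM = {"float32": 4, "float16": 2}


def weight_memory_gib(num_params: int, dtype: str) -> float:
    """Return the memory needed to hold the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


for dtype in ("float32", "float16"):
    print(f"{dtype}: ~{weight_memory_gib(ASSUMED_PARAMS, dtype):.1f} GiB")
```

Under these assumptions float16 halves the footprint of the weights, which is why the loading call passes `torch_dtype=torch.float16`.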

|

| 81 |

+

|

| 82 |

+

The responses can be streamed using `stream_chat`:

|

| 83 |

+

|

| 84 |

+

```python

|

| 85 |

+

import torch

|

| 86 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 87 |

+

|

| 88 |

+

model_path = "internlm/internlm-chat-7b"

|

| 89 |

+

model = AutoModelForCausalLM.from_pretrained(model_path, torch_dtype=torch.float16, trust_remote_code=True)

|

| 90 |

+

tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

|

| 91 |

+

|

| 92 |

+

model = model.eval()

|

| 93 |

+

length = 0

|

| 94 |

+

for response, history in model.stream_chat(tokenizer, "Hello", history=[]):

|

| 95 |

+

print(response[length:], flush=True, end="")

|

| 96 |

+

length = len(response)

|

| 97 |

+

```
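
The loop above slices `response[length:]`, which suggests that each item yielded by `stream_chat` is the full response so far rather than just the new piece. The sketch below illustrates that pattern without loading a model; `fake_stream_chat` is a hypothetical stand-in written for this example and is not part of InternLM.

```python
from typing import Iterator, List, Tuple


def fake_stream_chat(reply: str) -> Iterator[Tuple[str, List]]:
    """Hypothetical stand-in for `model.stream_chat`: yields the reply so far."""
    for end in range(1, len(reply) + 1):
        yield reply[:end], []


def print_incrementally(stream: Iterator[Tuple[str, List]]) -> str:
    """Print only the newly generated suffix of each cumulative response."""
    length = 0
    response = ""
    for response, _history in stream:
        # Everything before `length` was already printed on earlier iterations.
        print(response[length:], flush=True, end="")
        length = len(response)
    print()
    return response


final = print_incrementally(fake_stream_chat("Hello! How can I help?"))
```

Tracking `length` avoids reprinting text that is already on screen, so the output grows smoothly as new tokens arrive.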

|

| 98 |

+

|

| 99 |

+

### Dialogue

|

| 100 |

+

|

| 101 |

+

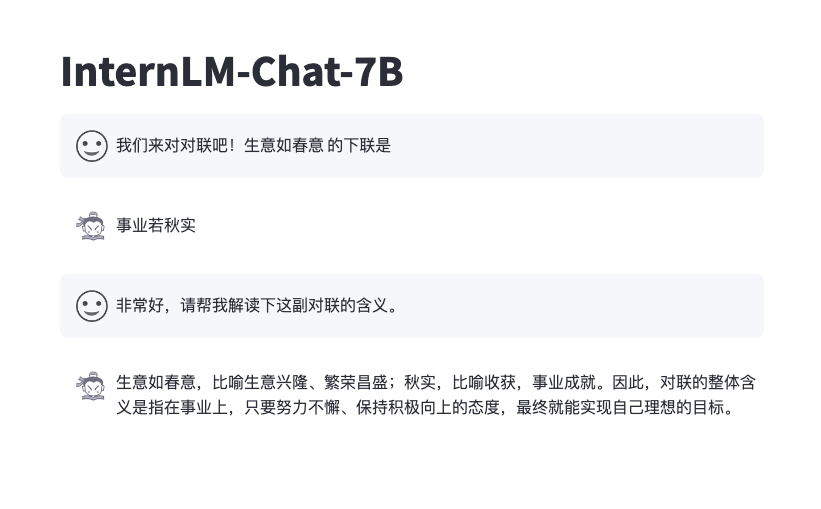

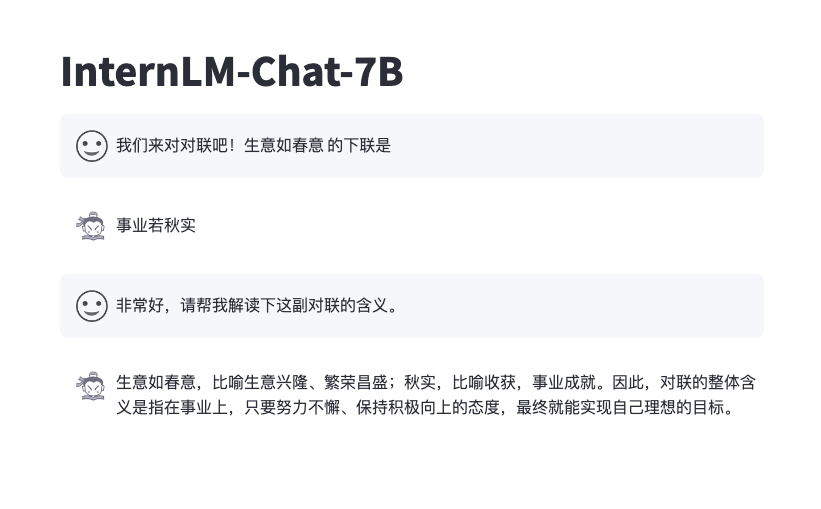

You can interact with the InternLM Chat 7B model through a frontend interface by running the following code:

|

| 102 |

+

```bash

|

| 103 |

+

pip install streamlit==1.24.0

|

| 104 |

+

pip install transformers==4.30.2

|

| 105 |

+

streamlit run web_demo.py

|

| 106 |

+

```

|

| 107 |

+

The result looks like this:

|

| 108 |

+

|

| 109 |

+

|

| 110 |

+

|

| 111 |

+

## Open Source License

|

| 112 |

+

|

| 113 |

+

The code is licensed under Apache-2.0, while model weights are fully open for academic research and also allow **free** commercial usage. To apply for a commercial license, please fill in the [application form (English)](https://wj.qq.com/s2/12727483/5dba/)/[申请表(中文)](https://wj.qq.com/s2/12725412/f7c1/). For other questions or collaborations, please contact <internlm@pjlab.org.cn>.

|

| 114 |

+

|

| 115 |

+

## Introduction

|

| 116 |

+

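InternLM (书生·浦语) includes a 7-billion-parameter base model and a chat model tailored for practical scenarios (InternLM-7B). The model has the following characteristics: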

|

| 117 |

+

- It is trained on trillions of high-quality tokens to build a strong knowledge base;

|

| 118 |

+

- It supports an 8k context window, allowing longer inputs and stronger reasoning;

|

| 119 |

+

- It offers general tool-calling capability, so users can flexibly build their own workflows;

|

| 120 |

+

|

| 121 |

+

## InternLM-7B

|

| 122 |

+

|

| 123 |

+

### Performance Evaluation

|

| 124 |

+

|

| 125 |

+

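We used the open-source evaluation tool [OpenCompass](https://github.com/internLM/OpenCompass/) to evaluate InternLM comprehensively across five capability dimensions: disciplinary competence, language competence, knowledge competence, reasoning competence, and comprehension competence. Some of the results are shown in the table below; see the [OpenCompass leaderboard](https://opencompass.org.cn/rank) for more.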

|

| 126 |

+

|

| 127 |

+

| Datasets\Models | **InternLM-Chat-7B** | **InternLM-7B** | LLaMA-7B | Baichuan-7B | ChatGLM2-6B | Alpaca-7B | Vicuna-7B |

|

| 128 |

+

| -------------------- | --------------------- | ---------------- | --------- | --------- | ------------ | --------- | ---------- |

|

| 129 |

+

| C-Eval(Val) | 53.2 | 53.4 | 24.2 | 42.7 | 50.9 | 28.9 | 31.2 |

|

| 130 |

+

| MMLU | 50.8 | 51.0 | 35.2* | 41.5 | 46.0 | 39.7 | 47.3 |

|

| 131 |

+

| AGIEval | 42.5 | 37.6 | 20.8 | 24.6 | 39.0 | 24.1 | 26.4 |

|

| 132 |

+

| CommonSenseQA | 75.2 | 59.5 | 65.0 | 58.8 | 60.0 | 68.7 | 66.7 |

|

| 133 |

+

| BUSTM | 74.3 | 50.6 | 48.5 | 51.3 | 55.0 | 48.8 | 62.5 |

|

| 134 |

+

| CLUEWSC | 78.6 | 59.1 | 50.3 | 52.8 | 59.8 | 50.3 | 52.2 |

|

| 135 |

+

| MATH | 6.4 | 7.1 | 2.8 | 3.0 | 6.6 | 2.2 | 2.8 |

|

| 136 |

+

| GSM8K | 34.5 | 31.2 | 10.1 | 9.7 | 29.2 | 6.0 | 15.3 |

|

| 137 |

+

| HumanEval | 14.0 | 10.4 | 14.0 | 9.2 | 9.2 | 9.2 | 11.0 |

|

| 138 |

+

| RACE(High) | 76.3 | 57.4 | 46.9* | 28.1 | 66.3 | 40.7 | 54.0 |

|

| 139 |

+

|

| 140 |

+

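- The evaluation results above were obtained from [OpenCompass 20230706](https://github.com/internLM/OpenCompass/) (values marked with `*` are taken from the original papers). Test details can be found in the configuration files provided by [OpenCompass](https://github.com/internLM/OpenCompass/).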

|

| 141 |

+

- The numbers may differ between versions of [OpenCompass](https://github.com/internLM/OpenCompass/), so please refer to the latest evaluation results of [OpenCompass](https://github.com/internLM/OpenCompass/).

|

| 142 |

+

|

| 143 |

+

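**Limitations:** Although we paid close attention to model safety during training and did our best to encourage the model to generate text that complies with ethical and legal requirements, the model may still produce unexpected outputs because of its size and its probabilistic generation paradigm. For example, responses may contain bias, discrimination, or other harmful content. Please do not propagate such content. This project is not responsible for any consequences resulting from the dissemination of harmful information.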

|

| 144 |

+

|

| 145 |

+

### Import from Transformers

|

| 146 |

+

Load the InternLM 7B Chat model with the following code:

|

| 147 |

+

```python

|

| 148 |

+

import torch

|

| 149 |

+

from transformers import AutoTokenizer, AutoModelForCausalLM

|

| 150 |

+

tokenizer = AutoTokenizer.from_pretrained("internlm/internlm-chat-7b", trust_remote_code=True)

|

| 151 |

+

# `torch_dtype=torch.float16` loads the model in float16; otherwise transformers loads it as float32, which may exhaust GPU memory.

|

| 152 |

+

model = AutoModelForCausalLM.from_pretrained("internlm/internlm-chat-7b", torch_dtype=torch.float16, trust_remote_code=True).cuda()

|

| 153 |

+

model = model.eval()

|

| 154 |

+

response, history = model.chat(tokenizer, "你好", history=[])

|

| 155 |

+

print(response)

|

| 156 |

+

# 你好!有什么我可以帮助你的吗?

|

| 157 |

+

response, history = model.chat(tokenizer, "请提供三个管理时间的建议。", history=history)

|

| 158 |

+

print(response)

|

| 159 |

+

# 当然可以!以下是三个管理时间的建议:

|

| 160 |

+

# 1. 制定计划:制定一个详细的计划,包括每天要完成的任务和活动。这将有助于您更好地组织时间,并确保您能够按时完成任务。

|

| 161 |

+

# 2. 优先级:将任务按照优先级排序,先完成最重要的任务。这将确保您能够在最短的时间内完成最重要的任务,从而节省时间。

|

| 162 |

+

# 3. 集中注意力:避免分心,集中注意力完成任务。关闭社交媒体和电子邮件通知,专注于任务,这将帮助您更快地完成任务,并减少错误的可能性。

|

| 163 |

+

```

|

| 164 |

+

|

| 165 |

+

For streaming generation, use the `stream_chat` interface:

|

| 166 |

+

|

| 167 |

+

```python

|

| 168 |

+

import torch

|

| 169 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 170 |

+

|

| 171 |

+

model_path = "internlm/internlm-chat-7b"

|

| 172 |

+

model = AutoModelForCausalLM.from_pretrained(model_path, torch_dtype=torch.float16, trust_remote_code=True)

|

| 173 |

+

tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

|

| 174 |

+

|

| 175 |

+

model = model.eval()

|

| 176 |

+

length = 0

|

| 177 |

+

for response, history in model.stream_chat(tokenizer, "你好", history=[]):

|

| 178 |

+

print(response[length:], flush=True, end="")

|

| 179 |

+

length = len(response)

|

| 180 |

+

```

|

| 181 |

+

|

| 182 |

+

### Dialogue via a Web Frontend

|

| 183 |

+

You can launch a frontend interface to interact with the InternLM Chat 7B model by running the following code:

|

| 184 |

+

```bash

|

| 185 |

+

pip install streamlit==1.24.0

|

| 186 |

+

pip install transformers==4.30.2

|

| 187 |

+

streamlit run web_demo.py

|

| 188 |

+

```

|

| 189 |

+

The result looks like this:

|

| 190 |

+

|

| 191 |

+

|

| 192 |

+

|

| 193 |

+

## Open Source License

|

| 194 |

+

|

| 195 |

+

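The code in this repository is open-sourced under the Apache-2.0 license. The model weights are fully open for academic research, and a free commercial-use license can be applied for ([application form](https://wj.qq.com/s2/12725412/f7c1/)). For other questions or collaborations, please contact <internlm@pjlab.org.cn>.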

|

config.json

ADDED

|

@@ -0,0 +1,32 @@

| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"InternLMForCausalLM"

|

| 4 |

+

],

|

| 5 |

+

"auto_map": {

|

| 6 |

+

"AutoConfig": "configuration_internlm.InternLMConfig",

|

| 7 |

+

"AutoModel": "modeling_internlm.InternLMForCausalLM",

|

| 8 |

+

"AutoModelForCausalLM": "modeling_internlm.InternLMForCausalLM"

|

| 9 |

+

},

|

| 10 |

+

"bias": true,

|

| 11 |

+

"bos_token_id": 1,

|

| 12 |

+

"eos_token_id": 2,

|

| 13 |

+

"hidden_act": "silu",

|

| 14 |

+

"hidden_size": 4096,

|

| 15 |

+

"initializer_range": 0.02,

|

| 16 |

+

"intermediate_size": 11008,

|

| 17 |

+

"max_position_embeddings": 2048,

|

| 18 |

+

"model_type": "internlm",

|

| 19 |

+

"num_attention_heads": 32,

|

| 20 |

+

"num_hidden_layers": 32,

|

| 21 |

+

"pad_token_id": 2,

|

| 22 |

+

"rms_norm_eps": 1e-06,

|

| 23 |

+

"tie_word_embeddings": false,

|

| 24 |

+

"torch_dtype": "float16",

|

| 25 |

+

"transformers_version": "4.29.2",

|

| 26 |

+

"use_cache": true,

|

| 27 |

+

"vocab_size": 103168,

|

| 28 |

+

"rotary": {

|

| 29 |

+

"base": 10000,

|

| 30 |

+

"type": "dynamic"

|

| 31 |

+

}

|

| 32 |

+

}
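
As a rough sanity check on the "7B" name, the values in this config can be turned into a per-head dimension and an approximate parameter count. The sketch below is an estimate under stated assumptions, not the author's accounting: it assumes a LLaMA-style decoder layer (four attention projections whose biases follow the `bias` flag, a three-matrix gated MLP without biases, and two RMSNorm weights per layer) plus a final norm. The helper names are made up for illustration.

```python
# Values copied from the config.json shown above.
config = {
    "hidden_size": 4096,
    "intermediate_size": 11008,
    "num_attention_heads": 32,
    "num_hidden_layers": 32,
    "vocab_size": 103168,
    "bias": True,
    "tie_word_embeddings": False,
}


def head_dim(cfg: dict) -> int:
    """Dimension of each attention head."""
    return cfg["hidden_size"] // cfg["num_attention_heads"]


def estimate_params(cfg: dict) -> int:
    """Approximate parameter count, ASSUMING a LLaMA-style layer layout."""
    h, m = cfg["hidden_size"], cfg["intermediate_size"]
    attention = 4 * h * h + (4 * h if cfg["bias"] else 0)  # q, k, v, o projections
    mlp = 3 * h * m                                        # gate, up, down matrices
    norms = 2 * h                                          # two RMSNorm weights
    per_layer = attention + mlp + norms
    embeddings = cfg["vocab_size"] * h
    # An untied output head adds a second vocab_size x hidden_size matrix.
    output_head = 0 if cfg["tie_word_embeddings"] else embeddings
    final_norm = h
    return cfg["num_hidden_layers"] * per_layer + embeddings + output_head + final_norm


print("head_dim:", head_dim(config))
print(f"estimated parameters: {estimate_params(config) / 1e9:.2f}B")
```

If the real layer layout differs from these assumptions, the total shifts accordingly; the point is only that the listed sizes are consistent with a model of roughly seven billion parameters.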

|

configuration_internlm.py

ADDED

|

@@ -0,0 +1,121 @@

| 1 |

+

# coding=utf-8

|

| 2 |

+

# Copyright 2022 EleutherAI and the HuggingFace Inc. team. All rights reserved.

|

| 3 |

+

#

|

| 4 |

+

# This code is based on EleutherAI's GPT-NeoX library and the GPT-NeoX

|

| 5 |

+

# and OPT implementations in this library. It has been modified from its

|

| 6 |

+

# original forms to accommodate minor architectural differences compared

|

| 7 |

+

# to GPT-NeoX and OPT used by the Meta AI team that trained the model.

|

| 8 |

+

#

|

| 9 |

+

# Licensed under the Apache License, Version 2.0 (the "License");

|

| 10 |

+

# you may not use this file except in compliance with the License.

|

| 11 |

+

# You may obtain a copy of the License at

|

| 12 |

+

#

|

| 13 |

+

# http://www.apache.org/licenses/LICENSE-2.0

|

| 14 |

+

#

|

| 15 |

+

# Unless required by applicable law or agreed to in writing, software

|

| 16 |

+

# distributed under the License is distributed on an "AS IS" BASIS,

|

| 17 |

+

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 18 |

+

# See the License for the specific language governing permissions and

|

| 19 |

+

# limitations under the License.

|

| 20 |

+

""" InternLM model configuration"""

|

| 21 |

+

|

| 22 |

+

from transformers.configuration_utils import PretrainedConfig

|

| 23 |

+

from transformers.utils import logging

|

| 24 |

+

|

| 25 |

+

logger = logging.get_logger(__name__)

|

| 26 |

+

|

| 27 |

+

INTERNLM_PRETRAINED_CONFIG_ARCHIVE_MAP = {}

|

| 28 |

+

|

| 29 |

+

|

| 30 |

+

class InternLMConfig(PretrainedConfig):

|

| 31 |

+

r"""

|

| 32 |

+

This is the configuration class to store the configuration of an [`InternLMModel`]. It is used to instantiate

|

| 33 |

+

an InternLM model according to the specified arguments, defining the model architecture. Instantiating a

|

| 34 |

+

configuration with the defaults will yield a similar configuration to that of the InternLM-7B.

|

| 35 |

+

|

| 36 |

+

Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

|

| 37 |

+

documentation from [`PretrainedConfig`] for more information.

|

| 38 |

+

|

| 39 |

+

|

| 40 |

+

Args:

|

| 41 |

+

vocab_size (`int`, *optional*, defaults to 103168):

|

| 42 |

+

Vocabulary size of the InternLM model. Defines the number of different tokens that can be represented by the

|

| 43 |

+

`input_ids` passed when calling [`InternLMModel`].

|

| 44 |

+

hidden_size (`int`, *optional*, defaults to 4096):

|

| 45 |

+

Dimension of the hidden representations.

|

| 46 |

+

intermediate_size (`int`, *optional*, defaults to 11008):

|

| 47 |

+

Dimension of the MLP representations.

|

| 48 |

+

num_hidden_layers (`int`, *optional*, defaults to 32):

|

| 49 |

+

Number of hidden layers in the Transformer decoder.

|

| 50 |

+

num_attention_heads (`int`, *optional*, defaults to 32):

|

| 51 |

+

Number of attention heads for each attention layer in the Transformer decoder.

|

| 52 |

+

hidden_act (`str` or `function`, *optional*, defaults to `"silu"`):

|

| 53 |

+

The non-linear activation function (function or string) in the decoder.

|

| 54 |

+

max_position_embeddings (`int`, *optional*, defaults to 2048):

|

| 55 |

+

The maximum sequence length that this model might ever be used with. Typically set this to something large

|

| 56 |

+

just in case (e.g., 512 or 1024 or 2048).

|

| 57 |

+

initializer_range (`float`, *optional*, defaults to 0.02):

|

| 58 |

+

The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

|

| 59 |

+

rms_norm_eps (`float`, *optional*, defaults to 1e-6):

|

| 60 |

+

The epsilon used by the rms normalization layers.

|

| 61 |

+

use_cache (`bool`, *optional*, defaults to `True`):

|

| 62 |

+

Whether or not the model should return the last key/values attentions (not used by all models). Only

|

| 63 |

+

relevant if `config.is_decoder=True`.

|

| 64 |

+

tie_word_embeddings (`bool`, *optional*, defaults to `False`):

|

| 65 |

+

Whether to tie the input and output word embeddings.

|

| 66 |

+

Example:

|

| 67 |

+

|

| 68 |

+

```python

|

| 69 |

+

>>> from transformers import InternLMModel, InternLMConfig

|

| 70 |

+

|

| 71 |

+

>>> # Initializing an InternLM internlm-7b style configuration

|

| 72 |

+

>>> configuration = InternLMConfig()

|

| 73 |

+

|

| 74 |

+

>>> # Initializing a model from the internlm-7b style configuration

|

| 75 |

+

>>> model = InternLMModel(configuration)

|

| 76 |

+

|

| 77 |

+

>>> # Accessing the model configuration

|

| 78 |

+

>>> configuration = model.config

|

| 79 |

+

```"""

|

| 80 |

+

model_type = "internlm"

|

| 81 |

+

_auto_class = "AutoConfig"

|

| 82 |

+

|

| 83 |

+

def __init__( # pylint: disable=W0102

|

| 84 |

+

self,

|

| 85 |

+

vocab_size=103168,

|

| 86 |

+

hidden_size=4096,

|

| 87 |

+

intermediate_size=11008,

|

| 88 |

+

num_hidden_layers=32,

|

| 89 |

+

num_attention_heads=32,

|

| 90 |

+

hidden_act="silu",

|

| 91 |

+

max_position_embeddings=2048,

|

| 92 |

+

initializer_range=0.02,

|

| 93 |

+

rms_norm_eps=1e-6,

|

| 94 |

+

use_cache=True,

|

| 95 |

+

pad_token_id=0,

|

| 96 |

+

bos_token_id=1,

|

| 97 |

+

eos_token_id=2,

|

| 98 |

+

tie_word_embeddings=False,

|

| 99 |

+

bias=True,

|

| 100 |

+

rotary={"base": 10000, "type": "dynamic"}, # pylint: disable=W0102

|

| 101 |

+

**kwargs,

|

| 102 |

+

):

|

| 103 |

+

self.vocab_size = vocab_size

|

| 104 |

+

self.max_position_embeddings = max_position_embeddings

|

| 105 |

+

self.hidden_size = hidden_size

|

| 106 |

+

self.intermediate_size = intermediate_size

|

| 107 |

+

self.num_hidden_layers = num_hidden_layers

|

| 108 |

+

self.num_attention_heads = num_attention_heads

|

| 109 |

+

self.hidden_act = hidden_act

|

| 110 |

+

self.initializer_range = initializer_range

|

| 111 |

+

self.rms_norm_eps = rms_norm_eps

|

| 112 |

+

self.use_cache = use_cache

|

| 113 |

+

self.bias = bias

|

| 114 |

+

self.rotary = rotary

|

| 115 |

+

super().__init__(

|

| 116 |

+

pad_token_id=pad_token_id,

|

| 117 |

+

bos_token_id=bos_token_id,

|

| 118 |

+

eos_token_id=eos_token_id,

|

| 119 |

+

tie_word_embeddings=tie_word_embeddings,

|

| 120 |

+

**kwargs,

|

| 121 |

+

)
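
The `# pylint: disable=W0102` comments in `__init__` silence pylint's dangerous-default-value warning, which fires because `rotary` defaults to a dict literal that is created once and shared by every call. The sketch below shows one common alternative, a `None` default with a per-instance copy. `SketchConfig` and `DEFAULT_ROTARY` are hypothetical names used only for illustration; this is not how `InternLMConfig` is written.

```python
from typing import Optional

# Hypothetical module-level default, mirroring the values used in the diff above.
DEFAULT_ROTARY = {"base": 10000, "type": "dynamic"}


class SketchConfig:
    """Illustrative stand-in; not the real InternLMConfig."""

    def __init__(self, rotary: Optional[dict] = None):
        # Copy the dict so that each instance owns its own settings and
        # mutating one config never leaks into another or into the default.
        self.rotary = dict(DEFAULT_ROTARY) if rotary is None else dict(rotary)


a = SketchConfig()
b = SketchConfig()
a.rotary["base"] = 500000  # change one instance only
print(a.rotary["base"], b.rotary["base"], DEFAULT_ROTARY["base"])
```

With a shared mutable default, the assignment on `a` would also be visible through `b`; copying per instance keeps the configs independent.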

|

generation_config.json

ADDED

|

@@ -0,0 +1,7 @@

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"bos_token_id": 1,

|

| 4 |

+

"eos_token_id": 2,

|

| 5 |

+

"pad_token_id": 2,

|

| 6 |

+

"transformers_version": "4.29.2"

|

| 7 |

+

}

|

modeling_internlm.py

ADDED

|

@@ -0,0 +1,1086 @@


|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

# coding=utf-8
# Copyright 2022 EleutherAI and the HuggingFace Inc. team. All rights reserved.
#
# This code is based on EleutherAI's GPT-NeoX library and the GPT-NeoX
# and OPT implementations in this library. It has been modified from its
# original forms to accommodate minor architectural differences compared
# to GPT-NeoX and OPT used by the Meta AI team that trained the model.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
""" PyTorch InternLM model."""
import math
import queue
import threading
from typing import List, Optional, Tuple, Union

import torch
import torch.utils.checkpoint
from torch import nn
from torch.nn import BCEWithLogitsLoss, CrossEntropyLoss, MSELoss
from transformers.activations import ACT2FN
from transformers.generation.streamers import BaseStreamer
from transformers.modeling_outputs import (
    BaseModelOutputWithPast,
    CausalLMOutputWithPast,
    SequenceClassifierOutputWithPast,
)
from transformers.modeling_utils import PreTrainedModel
from transformers.utils import (
    add_start_docstrings,
    add_start_docstrings_to_model_forward,
    logging,
    replace_return_docstrings,
)

from .configuration_internlm import InternLMConfig

logger = logging.get_logger(__name__)

_CONFIG_FOR_DOC = "InternLMConfig"


# Copied from transformers.models.bart.modeling_bart._make_causal_mask
def _make_causal_mask(
    input_ids_shape: torch.Size, dtype: torch.dtype, device: torch.device, past_key_values_length: int = 0
):
    """
    Make the causal mask used for uni-directional (autoregressive) self-attention.
    """
    bsz, tgt_len = input_ids_shape
    mask = torch.full((tgt_len, tgt_len), torch.tensor(torch.finfo(dtype).min, device=device), device=device)
    mask_cond = torch.arange(mask.size(-1), device=device)
    mask.masked_fill_(mask_cond < (mask_cond + 1).view(mask.size(-1), 1), 0)
    mask = mask.to(dtype)

    if past_key_values_length > 0:
        mask = torch.cat([torch.zeros(tgt_len, past_key_values_length, dtype=dtype, device=device), mask], dim=-1)
    return mask[None, None, :, :].expand(bsz, 1, tgt_len, tgt_len + past_key_values_length)

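The layout that `_make_causal_mask` produces can be illustrated without tensors. The following is a minimal, dependency-free sketch, not the code used by the model: the helper name `causal_mask_rows` is hypothetical, and `float("-inf")` stands in for `torch.finfo(dtype).min`. It shows that query position `i` may attend to every cached (past) position and to current positions `j <= i`.

```python
NEG = float("-inf")  # assumption: stand-in for torch.finfo(dtype).min


def causal_mask_rows(tgt_len, past_len=0):
    """Return a tgt_len x (past_len + tgt_len) additive mask as nested lists."""
    rows = []
    for i in range(tgt_len):
        # Cached key/value positions are always visible, so they get 0.
        past = [0.0] * past_len
        # Current positions are visible only up to and including position i.
        current = [0.0 if j <= i else NEG for j in range(tgt_len)]
        rows.append(past + current)
    return rows


if __name__ == "__main__":
    for row in causal_mask_rows(3, past_len=2):
        print(row)
```

In the tensor version the same matrix is additionally broadcast to shape `[bsz, 1, tgt_len, tgt_len + past_key_values_length]` so that it can be added to the attention scores.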

# Copied from transformers.models.bart.modeling_bart._expand_mask
def _expand_mask(mask: torch.Tensor, dtype: torch.dtype, tgt_len: Optional[int] = None):
    """
    Expands attention_mask from `[bsz, seq_len]` to `[bsz, 1, tgt_seq_len, src_seq_len]`.
    """
    bsz, src_len = mask.size()
    tgt_len = tgt_len if tgt_len is not None else src_len

    expanded_mask = mask[:, None, None, :].expand(bsz, 1, tgt_len, src_len).to(dtype)

    inverted_mask = 1.0 - expanded_mask

    return inverted_mask.masked_fill(inverted_mask.to(torch.bool), torch.finfo(dtype).min)

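As a rough illustration of what `_expand_mask` computes, the sketch below converts a 1/0 padding mask into an additive mask using plain lists. The function name `expand_padding_mask` is hypothetical, the singleton head axis is omitted for brevity, and `float("-inf")` is used in place of `torch.finfo(dtype).min`; treat it as a sketch under those assumptions.

```python
NEG = float("-inf")  # assumption: stand-in for torch.finfo(dtype).min


def expand_padding_mask(mask, tgt_len=None):
    """Map a [bsz][src_len] mask of 1/0 to a [bsz][tgt_len][src_len] additive mask.

    Kept tokens (1) become 0.0 and padded tokens (0) become a large negative
    value, so adding the result to attention scores suppresses padding.
    """
    expanded = []
    for row in mask:
        n_queries = tgt_len if tgt_len is not None else len(row)
        additive = [0.0 if keep else NEG for keep in row]
        # Every query position shares the same per-key padding pattern.
        expanded.append([list(additive) for _ in range(n_queries)])
    return expanded


if __name__ == "__main__":
    print(expand_padding_mask([[1, 1, 0]], tgt_len=2))
```

The padding mask and the causal mask are both additive, which is why the model can combine them by summation before the softmax.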

class InternLMRMSNorm(nn.Module):
    """RMSNorm implementation."""

    def __init__(self, hidden_size, eps=1e-6):
        """
        InternLMRMSNorm is equivalent to T5LayerNorm
        """
        super().__init__()
        self.weight = nn.Parameter(torch.ones(hidden_size))
        self.variance_epsilon = eps

    def forward(self, hidden_states):
        variance = hidden_states.to(torch.float32).pow(2).mean(-1, keepdim=True)
        hidden_states = hidden_states * torch.rsqrt(variance + self.variance_epsilon)

        # convert into half-precision if necessary
        if self.weight.dtype in [torch.float16, torch.bfloat16]:
            hidden_states = hidden_states.to(self.weight.dtype)

        return self.weight * hidden_states

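The normalization in `InternLMRMSNorm.forward` amounts to `y = weight * x / sqrt(mean(x**2) + eps)`: unlike standard layer normalization, no mean is subtracted and no bias is added. A minimal scalar sketch of that formula, using only the standard library (the function name `rms_norm` is hypothetical and the half-precision cast is left out):

```python
import math


def rms_norm(x, weight, eps=1e-6):
    """Scale a vector by the reciprocal of its root mean square, then by weight."""
    mean_sq = sum(v * v for v in x) / len(x)  # mean of squares over the last axis
    scale = 1.0 / math.sqrt(mean_sq + eps)    # counterpart of torch.rsqrt(variance + eps)
    return [w * v * scale for w, v in zip(weight, x)]


if __name__ == "__main__":
    out = rms_norm([3.0, 4.0], [1.0, 1.0])
    print(out)
```

With unit weights the output has a root mean square very close to 1, which is the property the layer relies on.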

class InternLMRotaryEmbedding(torch.nn.Module):
    """Implement InternLM's rotary embedding.

    Args:
        dim (int): Dimension of each self-attention head.
        max_position_embeddings (int, optional): Model's training length. Defaults to 2048.
        base (int, optional): Base used to compute the rotation angles of the rotary position encoding. Defaults to 10000.
        device (Any, optional): Running device. Defaults to None.
    """
    def __init__(self, dim, max_position_embeddings=2048, base=10000, device=None):
        super().__init__()
        inv_freq = 1.0 / (base ** (torch.arange(0, dim, 2).float().to(device) / dim))
        self.register_buffer("inv_freq", inv_freq, persistent=False)

        # Build here to make `torch.jit.trace` work.
        self.max_seq_len_cached = max_position_embeddings
        t = torch.arange(self.max_seq_len_cached, device=self.inv_freq.device, dtype=self.inv_freq.dtype)
        freqs = torch.einsum("i,j->ij", t, self.inv_freq)
        # Different from paper, but it uses a different permutation in order to obtain the same calculation
        emb = torch.cat((freqs, freqs), dim=-1)
        self.register_buffer("cos_cached", emb.cos()[None, None, :, :], persistent=False)
        self.register_buffer("sin_cached", emb.sin()[None, None, :, :], persistent=False)

    def forward(self, x, seq_len=None):
        # x: [bs, num_attention_heads, seq_len, head_size]
        # This `if` block is unlikely to be run after we build sin/cos in `__init__`. Keep the logic here just in case.
        if seq_len > self.max_seq_len_cached:
            self.max_seq_len_cached = seq_len
            t = torch.arange(self.max_seq_len_cached, device=x.device, dtype=self.inv_freq.dtype)
            freqs = torch.einsum("i,j->ij", t, self.inv_freq)
            # Different from paper, but it uses a different permutation in order to obtain the same calculation
            emb = torch.cat((freqs, freqs), dim=-1).to(x.device)
            self.register_buffer("cos_cached", emb.cos()[None, None, :, :], persistent=False)
            self.register_buffer("sin_cached", emb.sin()[None, None, :, :], persistent=False)
        return (
            self.cos_cached[:, :, :seq_len, ...].to(dtype=x.dtype),
            self.sin_cached[:, :, :seq_len, ...].to(dtype=x.dtype),
        )

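The cached tables built in `InternLMRotaryEmbedding.__init__` can be reproduced by hand for small sizes. The sketch below is a standard-library approximation and not the model's code: the function name `rotary_tables` is hypothetical and the two leading broadcast axes are dropped. It computes the inverse frequencies `base ** (-i / dim)` for even `i`, takes the outer product with the positions, and duplicates the angles along the last axis as `torch.cat((freqs, freqs), dim=-1)` does.

```python
import math


def rotary_tables(dim, seq_len, base=10000):
    """Return (cos, sin) tables of shape [seq_len][dim] as nested lists."""
    # One inverse frequency per pair of channels: base^(-i/dim) for i = 0, 2, 4, ...
    inv_freq = [1.0 / (base ** (i / dim)) for i in range(0, dim, 2)]
    cos, sin = [], []
    for t in range(seq_len):
        angles = [t * f for f in inv_freq]  # outer product of position and frequency
        angles = angles + angles            # duplicate halves, as in cat((freqs, freqs))
        cos.append([math.cos(a) for a in angles])
        sin.append([math.sin(a) for a in angles])
    return cos, sin


if __name__ == "__main__":
    cos, sin = rotary_tables(dim=4, seq_len=3)
    print(cos[1])
    print(sin[1])
```

Position 0 always yields `cos = 1` and `sin = 0`, so the first token is left unrotated, and low channel indices rotate faster than high ones.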

class InternLMDynamicNTKScalingRotaryEmbedding(torch.nn.Module):
    """Implement InternLM's DynamicNTK extrapolation method, extending the model's supported context length to 16K.

    Args:
        dim (int): Dimension of each self-attention head.
        max_position_embeddings (int, optional): Model's training length. Defaults to 2048.

| 153 |

+