Sharing

The Hugging Face Hub is a platform for sharing, discovering, and consuming models of all different types and sizes. We highly recommend sharing your model on the Hub to push open-source machine learning forward for everyone!

This guide will show you how to share a model to the Hub from Transformers.

Set up

To share a model to the Hub, you need a Hugging Face account. Create a User Access Token (stored in the cache by default) and log in to your account from either the command line or a notebook.

huggingface-cli login
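If you prefer to log in from Python, for example inside a script, the huggingface_hub library provides a login() function. The following is a minimal sketch, assuming your token is stored in an environment variable. The helper names read_token and login_to_hub are hypothetical, and the variable name HF_TOKEN is an assumption you can change.

```python
import os


def read_token(env_var: str = "HF_TOKEN"):
    """Return the token stored in `env_var`, or None if it is unset or blank."""
    token = os.environ.get(env_var, "").strip()
    return token or None


def login_to_hub(env_var: str = "HF_TOKEN") -> bool:
    """Log in with a token from the environment; return False if none is set."""
    token = read_token(env_var)
    if token is None:
        return False
    # Imported lazily so read_token() works even without huggingface_hub installed.
    from huggingface_hub import login

    login(token=token)
    return True
```

In a notebook, notebook_login() from the same library opens an interactive prompt instead.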

Repository features

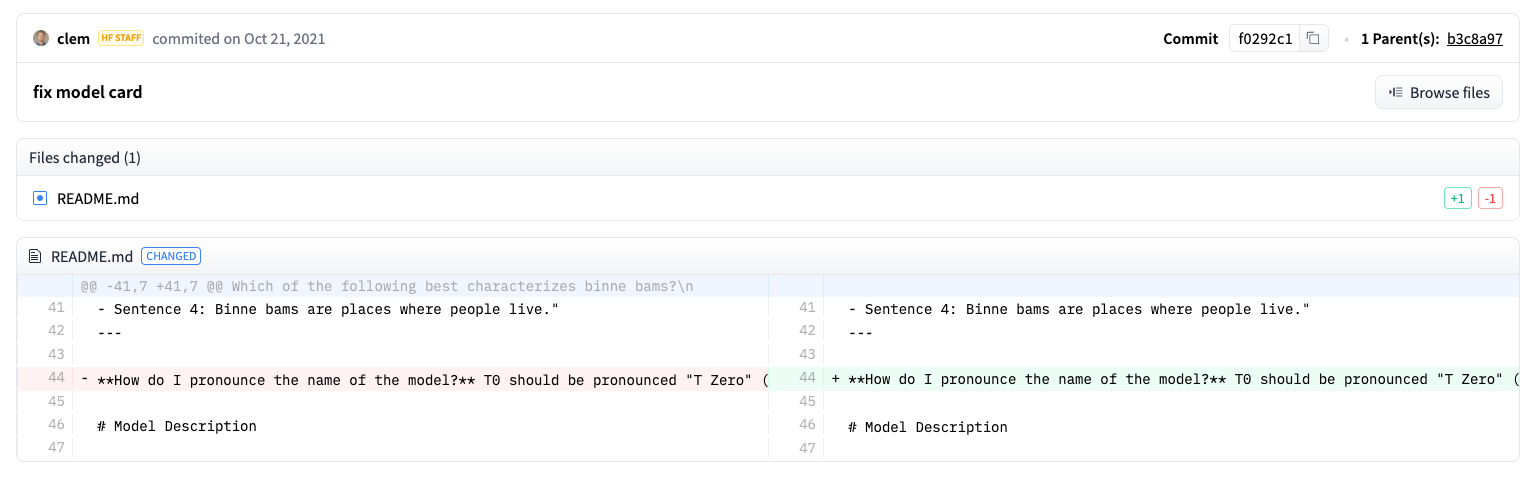

Each model repository features versioning, commit history, and diff visualization.

Versioning is based on Git and Git Large File Storage (LFS), and it enables revisions, a way to specify a model version with a commit hash, tag or branch.

For example, use the revision parameter in from_pretrained() to load a specific model version from a commit hash.

from transformers import AutoModel

model = AutoModel.from_pretrained("julien-c/EsperBERTo-small", revision="4c77982")

Model repositories also support gating to control who can access a model. Gating is common for allowing a select group of users to preview a research model before it’s made public.

A model repository also includes an inference widget for users to directly interact with a model on the Hub.

Check out the Hub Models documentation for more information.

Model framework conversion

Reach a wider audience by making a model available in PyTorch, TensorFlow, and Flax. While users can still load a model if they’re using a different framework, it is slower because Transformers needs to convert the checkpoint on the fly. It is faster to convert the checkpoint first.

Set from_tf=True to convert a checkpoint from TensorFlow to PyTorch and then save it.

from transformers import DistilBertForSequenceClassification

pt_model = DistilBertForSequenceClassification.from_pretrained("path/to/awesome-name-you-picked", from_tf=True)

pt_model.save_pretrained("path/to/awesome-name-you-picked")

Uploading a model

There are several ways to upload a model to the Hub depending on your workflow preference. You can push a model with Trainer, use a callback for TensorFlow models, call push_to_hub() directly on a model, or use the Hub web interface.

Trainer

Trainer can push a model directly to the Hub after training. Set push_to_hub=True in TrainingArguments and pass it to Trainer. Once training is complete, call push_to_hub() to upload the model.

push_to_hub() automatically adds useful information like training hyperparameters and results to the model card.

from transformers import TrainingArguments, Trainer

training_args = TrainingArguments(output_dir="my-awesome-model", push_to_hub=True)

trainer = Trainer(
    model=model,
    args=training_args,
    train_dataset=small_train_dataset,
    eval_dataset=small_eval_dataset,
    compute_metrics=compute_metrics,
)

trainer.push_to_hub()

PushToHubCallback

For TensorFlow models, add the PushToHubCallback to the fit method.

from transformers import PushToHubCallback

push_to_hub_callback = PushToHubCallback(
    output_dir="./your_model_save_path", tokenizer=tokenizer, hub_model_id="your-username/my-awesome-model"
)

model.fit(tf_train_dataset, validation_data=tf_validation_dataset, epochs=3, callbacks=[push_to_hub_callback])

PushToHubMixin

The PushToHubMixin provides functionality for pushing a model or tokenizer to the Hub.

Call push_to_hub() directly on a model to upload it to the Hub. It creates a repository under your namespace with the model name specified in push_to_hub().

model.push_to_hub("my-awesome-model")

Other objects like a tokenizer or TensorFlow model are also pushed to the Hub in the same way.

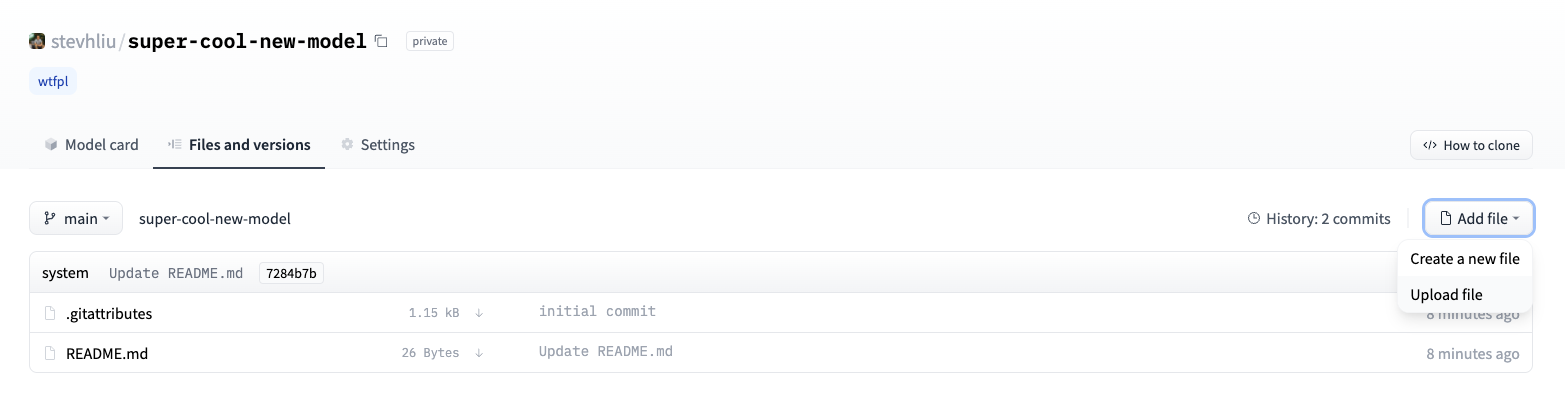

tokenizer.push_to_hub("my-awesome-model")

Your Hugging Face profile should now display the newly created model repository. Navigate to the Files tab to see all the uploaded files.

Refer to the Upload files to the Hub guide for more information about pushing files to the Hub.
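The two push_to_hub() calls above can be wrapped in one helper so the model and tokenizer always land in the same repository. This is a minimal sketch, not part of Transformers: the function name push_model_and_tokenizer and the commit messages are hypothetical, while private and commit_message are existing push_to_hub() parameters.

```python
def push_model_and_tokenizer(model, tokenizer, repo_id, private=True):
    """Push a model and its tokenizer to the same Hub repository.

    `model` and `tokenizer` are assumed to expose push_to_hub(), as
    Transformers models and tokenizers do.
    """
    # private=True keeps the repository hidden until you are ready to share it.
    model.push_to_hub(repo_id, private=private, commit_message="Upload model")
    tokenizer.push_to_hub(repo_id, private=private, commit_message="Upload tokenizer")
    return repo_id


# Usage (assumes `model` and `tokenizer` are already loaded and you are logged in):
# push_model_and_tokenizer(model, tokenizer, "my-awesome-model")
```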

Hub web interface

The Hub web interface is a no-code approach for uploading a model.

- Create a new repository by selecting New Model.

Add some information about your model:

- Select the owner of the repository. This can be yourself or any of the organizations you belong to.

- Pick a name for your model, which will also be the repository name.

- Choose whether your model is public or private.

- Set the license usage.

Click on Create model to create the model repository.

Select the Files tab and click on the Add file button to drag-and-drop a file to your repository. Add a commit message and click on Commit changes to main to commit the file.
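If you later want to script these steps instead of clicking through them, the huggingface_hub library offers equivalents for creating a repository and committing a file. The sketch below is one possible way to do it, assuming api is a huggingface_hub.HfApi instance and that you are already logged in. The function name create_and_upload and the commit message are hypothetical.

```python
from pathlib import Path


def create_and_upload(api, repo_id, file_path, private=False):
    """Create a model repository (if needed) and upload a single file to it.

    `api` is assumed to be a `huggingface_hub.HfApi` instance.
    """
    file_path = Path(file_path)
    # exist_ok=True avoids an error when the repository already exists.
    api.create_repo(repo_id, private=private, exist_ok=True)
    # The file is committed to the repository root under its own name.
    api.upload_file(
        path_or_fileobj=str(file_path),
        path_in_repo=file_path.name,
        repo_id=repo_id,
        commit_message=f"Upload {file_path.name}",
    )
    return file_path.name


# Usage (requires huggingface_hub and a logged-in account):
# from huggingface_hub import HfApi
# create_and_upload(HfApi(), "your-username/my-awesome-model", "config.json")
```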

Model card

Model cards inform users about a model's performance, limitations, potential biases, and ethical considerations. It is highly recommended to add a model card to your repository!

A model card is a README.md file in your repository. Add this file by:

- manually creating and uploading a README.md file

- clicking on the Edit model card button in the repository

Take a look at the Llama 3.1 model card for an example of what to include on a model card.

Learn more about other model card metadata (carbon emissions, license, link to paper, etc.) available in the Model Cards guide.
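To make the README.md structure concrete, here is a minimal sketch that builds a model card with a YAML metadata block followed by Markdown text. It uses only the standard library. The helper names build_model_card and write_model_card are hypothetical, and the card covers just two metadata fields (license and tags) as an illustration.

```python
from pathlib import Path


def build_model_card(title, description, license_id, tags):
    """Return README.md text: a YAML metadata block followed by Markdown."""
    lines = ["---", f"license: {license_id}"]
    if tags:
        lines.append("tags:")
        lines += [f"- {tag}" for tag in tags]
    lines += ["---", "", f"# {title}", "", description, ""]
    return "\n".join(lines)


def write_model_card(directory, **fields):
    """Write the card to README.md inside `directory` and return its path."""
    path = Path(directory) / "README.md"
    path.write_text(build_model_card(**fields), encoding="utf-8")
    return path
```

You can then upload the resulting README.md like any other file in the repository.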
