Spaces:

Running

on

Zero

Commit

•

2ce2e82

1

Parent(s):

b7393af

Upload 4 files


LICENSE

ADDED

|

@@ -0,0 +1,201 @@


| 1 |

+

Apache License

|

| 2 |

+

Version 2.0, January 2004

|

| 3 |

+

http://www.apache.org/licenses/

|

| 4 |

+

|

| 5 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 6 |

+

|

| 7 |

+

1. Definitions.

|

| 8 |

+

|

| 9 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 10 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 11 |

+

|

| 12 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 13 |

+

the copyright owner that is granting the License.

|

| 14 |

+

|

| 15 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 16 |

+

other entities that control, are controlled by, or are under common

|

| 17 |

+

control with that entity. For the purposes of this definition,

|

| 18 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 19 |

+

direction or management of such entity, whether by contract or

|

| 20 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 21 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 22 |

+

|

| 23 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 24 |

+

exercising permissions granted by this License.

|

| 25 |

+

|

| 26 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 27 |

+

including but not limited to software source code, documentation

|

| 28 |

+

source, and configuration files.

|

| 29 |

+

|

| 30 |

+

"Object" form shall mean any form resulting from mechanical

|

| 31 |

+

transformation or translation of a Source form, including but

|

| 32 |

+

not limited to compiled object code, generated documentation,

|

| 33 |

+

and conversions to other media types.

|

| 34 |

+

|

| 35 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 36 |

+

Object form, made available under the License, as indicated by a

|

| 37 |

+

copyright notice that is included in or attached to the work

|

| 38 |

+

(an example is provided in the Appendix below).

|

| 39 |

+

|

| 40 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 41 |

+

form, that is based on (or derived from) the Work and for which the

|

| 42 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 43 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 44 |

+

of this License, Derivative Works shall not include works that remain

|

| 45 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 46 |

+

the Work and Derivative Works thereof.

|

| 47 |

+

|

| 48 |

+

"Contribution" shall mean any work of authorship, including

|

| 49 |

+

the original version of the Work and any modifications or additions

|

| 50 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 51 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 52 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 53 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 54 |

+

means any form of electronic, verbal, or written communication sent

|

| 55 |

+

to the Licensor or its representatives, including but not limited to

|

| 56 |

+

communication on electronic mailing lists, source code control systems,

|

| 57 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 58 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 59 |

+

excluding communication that is conspicuously marked or otherwise

|

| 60 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 61 |

+

|

| 62 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 63 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 64 |

+

subsequently incorporated within the Work.

|

| 65 |

+

|

| 66 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 67 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 68 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 69 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 70 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 71 |

+

Work and such Derivative Works in Source or Object form.

|

| 72 |

+

|

| 73 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 74 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 75 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 76 |

+

(except as stated in this section) patent license to make, have made,

|

| 77 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 78 |

+

where such license applies only to those patent claims licensable

|

| 79 |

+

by such Contributor that are necessarily infringed by their

|

| 80 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 81 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 82 |

+

institute patent litigation against any entity (including a

|

| 83 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 84 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 85 |

+

or contributory patent infringement, then any patent licenses

|

| 86 |

+

granted to You under this License for that Work shall terminate

|

| 87 |

+

as of the date such litigation is filed.

|

| 88 |

+

|

| 89 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 90 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 91 |

+

modifications, and in Source or Object form, provided that You

|

| 92 |

+

meet the following conditions:

|

| 93 |

+

|

| 94 |

+

(a) You must give any other recipients of the Work or

|

| 95 |

+

Derivative Works a copy of this License; and

|

| 96 |

+

|

| 97 |

+

(b) You must cause any modified files to carry prominent notices

|

| 98 |

+

stating that You changed the files; and

|

| 99 |

+

|

| 100 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 101 |

+

that You distribute, all copyright, patent, trademark, and

|

| 102 |

+

attribution notices from the Source form of the Work,

|

| 103 |

+

excluding those notices that do not pertain to any part of

|

| 104 |

+

the Derivative Works; and

|

| 105 |

+

|

| 106 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 107 |

+

distribution, then any Derivative Works that You distribute must

|

| 108 |

+

include a readable copy of the attribution notices contained

|

| 109 |

+

within such NOTICE file, excluding those notices that do not

|

| 110 |

+

pertain to any part of the Derivative Works, in at least one

|

| 111 |

+

of the following places: within a NOTICE text file distributed

|

| 112 |

+

as part of the Derivative Works; within the Source form or

|

| 113 |

+

documentation, if provided along with the Derivative Works; or,

|

| 114 |

+

within a display generated by the Derivative Works, if and

|

| 115 |

+

wherever such third-party notices normally appear. The contents

|

| 116 |

+

of the NOTICE file are for informational purposes only and

|

| 117 |

+

do not modify the License. You may add Your own attribution

|

| 118 |

+

notices within Derivative Works that You distribute, alongside

|

| 119 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 120 |

+

that such additional attribution notices cannot be construed

|

| 121 |

+

as modifying the License.

|

| 122 |

+

|

| 123 |

+

You may add Your own copyright statement to Your modifications and

|

| 124 |

+

may provide additional or different license terms and conditions

|

| 125 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 126 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 127 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 128 |

+

the conditions stated in this License.

|

| 129 |

+

|

| 130 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 131 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 132 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 133 |

+

this License, without any additional terms or conditions.

|

| 134 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 135 |

+

the terms of any separate license agreement you may have executed

|

| 136 |

+

with Licensor regarding such Contributions.

|

| 137 |

+

|

| 138 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 139 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 140 |

+

except as required for reasonable and customary use in describing the

|

| 141 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 142 |

+

|

| 143 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 144 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 145 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 146 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 147 |

+

implied, including, without limitation, any warranties or conditions

|

| 148 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 149 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 150 |

+

appropriateness of using or redistributing the Work and assume any

|

| 151 |

+

risks associated with Your exercise of permissions under this License.

|

| 152 |

+

|

| 153 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 154 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 155 |

+

unless required by applicable law (such as deliberate and grossly

|

| 156 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 157 |

+

liable to You for damages, including any direct, indirect, special,

|

| 158 |

+

incidental, or consequential damages of any character arising as a

|

| 159 |

+

result of this License or out of the use or inability to use the

|

| 160 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 161 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 162 |

+

other commercial damages or losses), even if such Contributor

|

| 163 |

+

has been advised of the possibility of such damages.

|

| 164 |

+

|

| 165 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 166 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 167 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 168 |

+

or other liability obligations and/or rights consistent with this

|

| 169 |

+

License. However, in accepting such obligations, You may act only

|

| 170 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 171 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 172 |

+

defend, and hold each Contributor harmless for any liability

|

| 173 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 174 |

+

of your accepting any such warranty or additional liability.

|

| 175 |

+

|

| 176 |

+

END OF TERMS AND CONDITIONS

|

| 177 |

+

|

| 178 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 179 |

+

|

| 180 |

+

To apply the Apache License to your work, attach the following

|

| 181 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 182 |

+

replaced with your own identifying information. (Don't include

|

| 183 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 184 |

+

comment syntax for the file format. We also recommend that a

|

| 185 |

+

file or class name and description of purpose be included on the

|

| 186 |

+

same "printed page" as the copyright notice for easier

|

| 187 |

+

identification within third-party archives.

|

| 188 |

+

|

| 189 |

+

Copyright [yyyy] [name of copyright owner]

|

| 190 |

+

|

| 191 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 192 |

+

you may not use this file except in compliance with the License.

|

| 193 |

+

You may obtain a copy of the License at

|

| 194 |

+

|

| 195 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 196 |

+

|

| 197 |

+

Unless required by applicable law or agreed to in writing, software

|

| 198 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 199 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 200 |

+

See the License for the specific language governing permissions and

|

| 201 |

+

limitations under the License.

|

README.md

CHANGED

|

@@ -11,4 +11,73 @@ license: apache-2.0

|

|

| 11 |

short_description: Pearl-7B, an xtraordinary Space

|

| 12 |

---

|

| 13 |

|

| 14 |

-



| 14 |

+

# MANATEE(lm) : Market Analysis based on language model architectures

|

| 15 |

+

[](https://badge.fury.io/py/tensorflow) [](https://opensource.org/licenses/Apache-2.0)

|

| 16 |

+

|

| 17 |

+

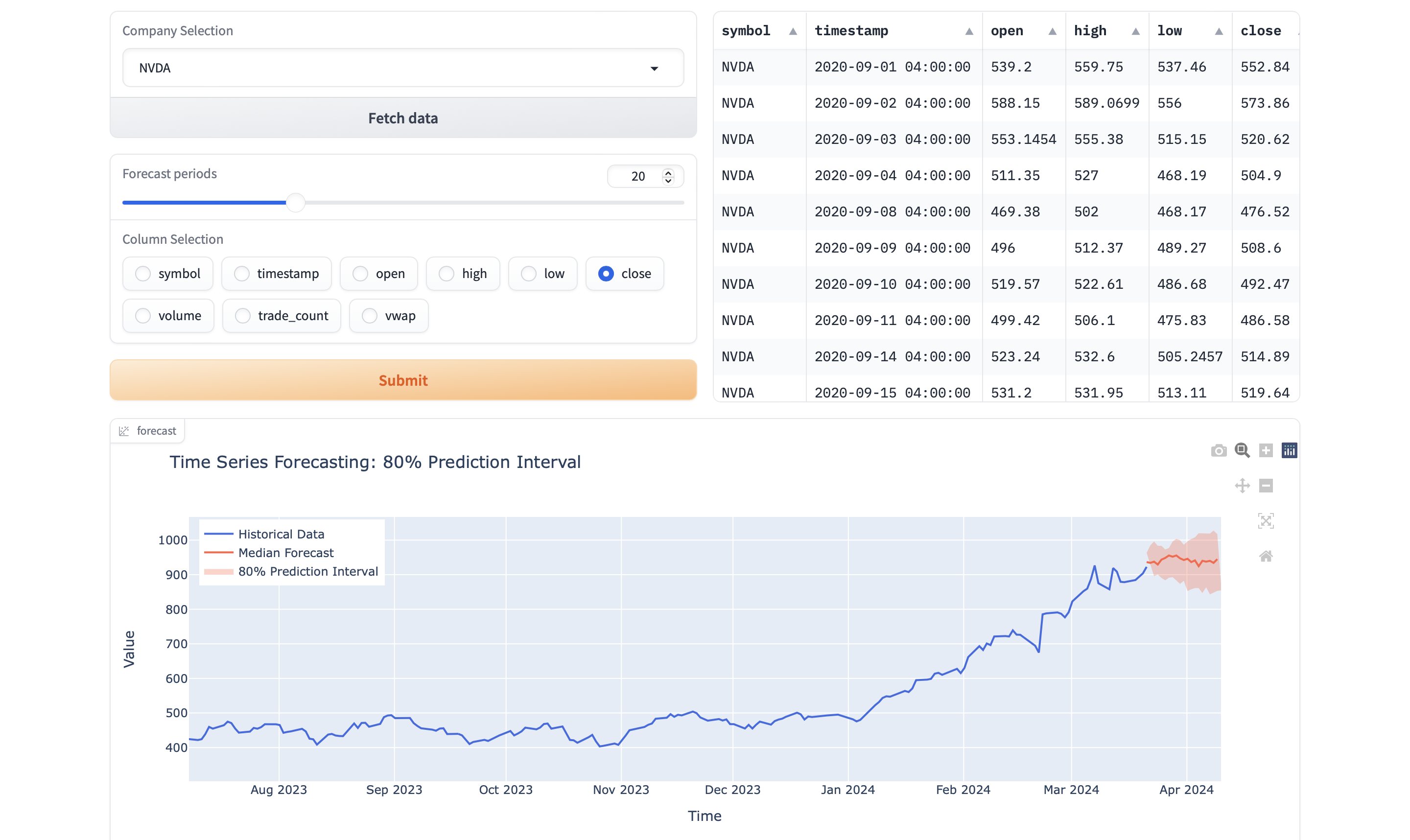

This project applies a large language model (LLM) to time series forecasting, following the paper "Chronos: Learning the Language of Time Series" from Amazon Web Services and Amazon Supply Chain Optimization Technologies. MANATEE fetches, computes, and plots historical data for financial securities, using the Alpaca APIs for data access, Polars for data manipulation, and Plotly for visualization. It also calculates indicators such as the rolling mean and the Relative Strength Index (RSI) to help analyze the past performance of stocks and crypto assets.
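As a rough illustration of the two indicators mentioned above, here is a minimal sketch in plain Python. It is not the project's implementation (which relies on Polars): the function names `rolling_mean` and `rsi` are hypothetical, and the RSI variant shown uses simple averages of gains and losses over the window, which is only one of several common conventions.

```python
from typing import List, Optional


def rolling_mean(prices: List[float], window: int) -> List[Optional[float]]:
    """Mean of the last `window` prices; None until enough data is available."""
    out: List[Optional[float]] = []
    for i in range(len(prices)):
        if i + 1 < window:
            out.append(None)
        else:
            out.append(sum(prices[i + 1 - window:i + 1]) / window)
    return out


def rsi(prices: List[float], window: int = 14) -> Optional[float]:
    """RSI over the last `window` price changes, using simple averages.

    Assumption: simple-average variant; Wilder's smoothing gives other values.
    """
    if len(prices) < window + 1:
        return None  # not enough price changes to fill the window
    recent = prices[-(window + 1):]
    changes = [b - a for a, b in zip(recent, recent[1:])]
    avg_gain = sum(c for c in changes if c > 0) / window
    avg_loss = sum(-c for c in changes if c < 0) / window
    if avg_loss == 0:
        return 100.0  # no losses in the window (convention chosen for this sketch)
    rs = avg_gain / avg_loss
    return 100.0 - 100.0 / (1.0 + rs)
```

A value near 100 means the window contained mostly gains, a value near 0 mostly losses.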

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

From the source:

|

| 22 |

+

> In this work, we take a step back and ask: what are the fundamental differences between a language model that predicts the next token, and a time series forecasting model that predicts the next values? Despite the apparent distinction — tokens from a finite dictionary versus values from an unbounded, usually continuous domain — both endeavors fundamentally aim to model the sequential structure of the data to predict future patterns. Shouldn't good language models “just work” on time series? This naive question prompts us to challenge the necessity of time-series-specific modifications, and answering it led us to develop Chronos, a language modeling framework minimally adapted for time series forecasting. Chronos tokenizes time series into discrete bins through simple scaling and quantization of real values. In this way, we can train off-the-shelf language models on this “language of time series,” with no changes to the model architecture. Remarkably, this straightforward approach proves to be effective and efficient, underscoring the potential for language model architectures to address a broad range of time series problems with minimal modifications.

|

| 23 |

+

[...]
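The "scaling and quantization" step described in the quote can be sketched as follows. This is a simplified illustration, not the Chronos code: the function names, the bin count, and the bin range are assumptions chosen for readability, and the sketch uses mean-absolute-value scaling with uniform bins.

```python
from typing import List, Tuple


def mean_scale(values: List[float]) -> Tuple[List[float], float]:
    """Divide every value by the mean absolute value of the series."""
    scale = sum(abs(v) for v in values) / len(values)
    if scale == 0:
        scale = 1.0  # avoid dividing by zero on an all-zero series
    return [v / scale for v in values], scale


def quantize(scaled: List[float], num_bins: int = 10,
             low: float = -5.0, high: float = 5.0) -> List[int]:
    """Map each scaled value to the index of a uniform bin in [low, high]."""
    width = (high - low) / num_bins
    tokens = []
    for v in scaled:
        clipped = min(max(v, low), high)  # out-of-range values go to edge bins
        index = int((clipped - low) / width)
        tokens.append(min(index, num_bins - 1))  # `high` belongs to the last bin
    return tokens


def dequantize(tokens: List[int], scale: float, num_bins: int = 10,
               low: float = -5.0, high: float = 5.0) -> List[float]:
    """Map token ids back to bin centers and undo the scaling."""
    width = (high - low) / num_bins
    return [(low + (t + 0.5) * width) * scale for t in tokens]
```

The resulting token ids play the role of words in a vocabulary, which is what lets an unmodified language model architecture be trained on them. Dequantization is lossy: each value comes back as the center of its bin.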

|

| 24 |

+

|

| 25 |

+

## Dependencies

|

| 26 |

+

### Libraries Used:

|

| 27 |

+

|

| 28 |

+

1. **`json`**: A built-in Python library for parsing JSON data. No need for installation.

|

| 29 |

+

|

| 30 |

+

2. **`datetime` & `time`**: Built-in Python libraries for handling date and time. Used here for defining time frames for data fetching. No installation required.

|

| 31 |

+

|

| 32 |

+

3. **`plotly`** (`plotly.express`, imported as `px`): Provides a high-level interface to Plotly for creating interactive plots. Install via pip:

|

| 33 |

+

```shell

|

| 34 |

+

pip3 install plotly

|

| 35 |

+

```

|

| 36 |

+

|

| 37 |

+

4. **`polars`** (as `pl`): A fast DataFrames library ideal for financial time-series data. Install using pip:

|

| 38 |

+

```shell

|

| 39 |

+

pip3 install polars

|

| 40 |

+

```

|

| 41 |

+

|

| 42 |

+

5. **`alpaca-py`**: A Python library for the Alpaca API. It provides access to historical stock and crypto data as well as trading operations. Install using pip:

|

| 43 |

+

```shell

|

| 44 |

+

pip3 install alpaca-py

|

| 45 |

+

```

|

| 46 |

+

|

| 47 |

+

### Installation Guide

|

| 48 |

+

|

| 49 |

+

To install all the dependencies, you can use the following command:

|

| 50 |

+

|

| 51 |

+

```shell

|

| 52 |

+

pip3 install plotly polars alpaca-py transformers torch gradio spaces

|

| 53 |

+

```

|

| 54 |

+

|

| 55 |

+

Note: Ensure you have Python installed on your system before proceeding with the installation of these libraries.

|

| 56 |

+

|

| 57 |

+

## Best Practices

|

| 58 |

+

- **API Keys Management**: For security reasons, avoid hardcoding your API keys into the script. Consider using environment variables or a secure vault service.

|

| 59 |

+

|

| 60 |

+

- **Data Privacy**: When handling financial data, it's crucial to comply with data protection regulations (such as GDPR, CCPA). Ensure you have the right to use and share the data fetched through this tool.

|

| 61 |

+

|

| 62 |

+

- **Error Handling**: The script includes basic error handling, but for production use, consider implementing more comprehensive try-except blocks to handle network errors, API limit exceptions, and data inconsistencies.

|

| 63 |

+

|

| 64 |

+

- **Plotting Considerations**: This tool uses Plotly for visualization, which is very versatile but can be resource-intensive for large datasets. For analyzing large datasets, consider creating plots with fewer data points or aggregating the data before plotting.

|

| 65 |

+

|

| 66 |

+

- **Resource Management**: When dealing with large datasets or numerous API requests, monitor your system's and the API's usage to avoid overloading.

|

| 67 |

+

|

| 68 |

+

- **Dependency Updates**: Regularly update your dependencies. Financial APIs and data handling libraries evolve, and keeping them up to date can improve security and efficiency and give you access to new features.
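Two of the practices above, keeping API keys out of the script and handling transient failures, can be sketched with the standard library alone. The environment variable names `ALPACA_API_KEY` and `ALPACA_SECRET_KEY` and the helper names are assumptions made for this example, not names required by Alpaca or used by this project.

```python
import os
import time
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def load_api_credentials(
    key_var: str = "ALPACA_API_KEY",        # hypothetical variable name
    secret_var: str = "ALPACA_SECRET_KEY",  # hypothetical variable name
) -> Tuple[str, str]:
    """Read the API key pair from the environment instead of hardcoding it."""
    key = os.environ.get(key_var)
    secret = os.environ.get(secret_var)
    missing = [name for name, value in ((key_var, key), (secret_var, secret))
               if not value]
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
    return key, secret


def with_retries(
    call: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `call`, retrying on any exception with exponential backoff."""
    for attempt in range(attempts):
        try:
            return call()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** attempt)  # waits 1s, 2s, 4s, ...
    raise ValueError("attempts must be at least 1")
```

In practice you would likely narrow the `except` clause to the network and rate-limit errors raised by the client you use, so that programming errors are not retried.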

|

| 69 |

+

|

| 70 |

+

## Citing this project

|

| 71 |

+

If you use this code in your research, please use the following BibTeX entry.

|

| 72 |

+

|

| 73 |

+

```BibTeX

|

| 74 |

+

@misc{louisbrulenaudet2023,

|

| 75 |

+

author = {Louis Brulé Naudet},

|

| 76 |

+

title = {MANATEE(lm) : Market Analysis based on language model architectures},

|

| 77 |

+

howpublished = {\url{https://huggingface.co/spaces/louisbrulenaudet/manatee}},

|

| 78 |

+

year = {2024}

|

| 79 |

+

}

|

| 80 |

+

|

| 81 |

+

```

|

| 82 |

+

## Feedback

|

| 83 |

+

If you have any feedback, please reach out at [louisbrulenaudet@icloud.com](mailto:louisbrulenaudet@icloud.com).

|

app.py

ADDED

|

@@ -0,0 +1,332 @@


| 1 |

+

# -*- coding: utf-8 -*-

|

| 2 |

+

# Copyright (c) Louis Brulé Naudet. All Rights Reserved.

|

| 3 |

+

# This software may be used and distributed according to the terms of the License Agreement.

|

| 4 |

+

#

|

| 5 |

+

# Unless required by applicable law or agreed to in writing, software

|

| 6 |

+

# distributed under the License is distributed on an "AS IS" BASIS,

|

| 7 |

+

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 8 |

+

# See the License for the specific language governing permissions and

|

| 9 |

+

# limitations under the License.

|

| 10 |

+

|

| 11 |

+

import os

|

| 12 |

+

from threading import Thread

|

| 13 |

+

from typing import Iterator

|

| 14 |

+

|

| 15 |

+

import gradio as gr

|

| 16 |

+

import spaces

|

| 17 |

+

import torch

|

| 18 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer, TextIteratorStreamer

|

| 19 |

+

|

| 20 |

+

MAX_MAX_NEW_TOKENS = 2048

|

| 21 |

+

DEFAULT_MAX_NEW_TOKENS = 1024

|

| 22 |

+

|

| 23 |

+

MAX_INPUT_TOKEN_LENGTH = int(os.getenv("MAX_INPUT_TOKEN_LENGTH", "4096"))

|

| 24 |

+

|

| 25 |

+

def setup(

|

| 26 |

+

model_id: str,

|

| 27 |

+

description: str

|

| 28 |

+

) -> tuple:

|

| 29 |

+

"""

|

| 30 |

+

Set up the model and tokenizer for a given pre-trained model ID.

|

| 31 |

+

|

| 32 |

+

Parameters

|

| 33 |

+

----------

|

| 34 |

+

model_id : str

|

| 35 |

+

The ID of the pre-trained model to load.

|

| 36 |

+

|

| 37 |

+

description : str

|

| 38 |

+

A string containing additional description information.

|

| 39 |

+

|

| 40 |

+

Returns

|

| 41 |

+

-------

|

| 42 |

+

tuple

|

| 43 |

+

A tuple containing the loaded model, tokenizer, and updated description.

|

| 44 |

+

If an error occurs during setup, model and tokenizer are None, and an error message is appended to the description.

|

| 45 |

+

"""

|

| 46 |

+

if not torch.cuda.is_available():

|

| 47 |

+

description += "\n<p>Running on CPU 🥶 This demo does not work on CPU.</p>"

|

| 48 |

+

|

| 49 |

+

return None, None, description

|

| 50 |

+

|

| 51 |

+

try:

|

| 52 |

+

# Load the model and tokenizer

|

| 53 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 54 |

+

model_id,

|

| 55 |

+

torch_dtype=torch.bfloat16,

|

| 56 |

+

device_map="auto"

|

| 57 |

+

)

|

| 58 |

+

|

| 59 |

+

tokenizer = AutoTokenizer.from_pretrained(

|

| 60 |

+

model_id

|

| 61 |

+

)

|

| 62 |

+

|

| 63 |

+

tokenizer.use_default_system_prompt = False

|

| 64 |

+

|

| 65 |

+

# Update the description

|

| 66 |

+

description += "\n<p>Model and tokenizer set up successfully.</p>"

|

| 67 |

+

|

| 68 |

+

return model, tokenizer, description

|

| 69 |

+

|

| 70 |

+

except Exception as e:

|

| 71 |

+

# If an error occurs during setup, append the error message to the description

|

| 72 |

+

description += f"\n<p>Error occurred during model setup: {str(e)}</p>"

|

| 73 |

+

|

| 74 |

+

return None, None, description

|

| 75 |

+

|

| 76 |

+

|

| 77 |

+

def preprocess_conversation(

|

| 78 |

+

message: str,

|

| 79 |

+

chat_history: list,

|

| 80 |

+

system_prompt: str

|

| 81 |

+

) -> list:

|

| 82 |

+

"""

|

| 83 |

+

Preprocess the conversation history by formatting it appropriately.

|

| 84 |

+

|

| 85 |

+

Parameters

|

| 86 |

+

----------

|

| 87 |

+

message : str

|

| 88 |

+

The user's message.

|

| 89 |

+

|

| 90 |

+

chat_history : list

|

| 91 |

+

The conversation history, where each element is a tuple (user_message, assistant_response).

|

| 92 |

+

|

| 93 |

+

system_prompt : str

|

| 94 |

+

The system prompt.

|

| 95 |

+

|

| 96 |

+

Returns

|

| 97 |

+

-------

|

| 98 |

+

list

|

| 99 |

+

The formatted conversation history.

|

| 100 |

+

"""

|

| 101 |

+

conversation = []

|

| 102 |

+

|

| 103 |

+

if system_prompt:

|

| 104 |

+

conversation.append(

|

| 105 |

+

{

|

| 106 |

+

"role": "system",

|

| 107 |

+

"content": system_prompt

|

| 108 |

+

}

|

| 109 |

+

)

|

| 110 |

+

|

| 111 |

+

for user, assistant in chat_history:

|

| 112 |

+

conversation.extend(

|

| 113 |

+

[

|

| 114 |

+

{

|

| 115 |

+

"role": "user",

|

| 116 |

+

"content": user

|

| 117 |

+

},

|

| 118 |

+

{

|

| 119 |

+

"role": "assistant",

|

| 120 |

+

"content": assistant

|

| 121 |

+

}

|

| 122 |

+

]

|

| 123 |

+

)

|

| 124 |

+

|

| 125 |

+

conversation.append(

|

| 126 |

+

{

|

| 127 |

+

"role": "user",

|

| 128 |

+

"content": message

|

| 129 |

+

}

|

| 130 |

+

)

|

| 131 |

+

|

| 132 |

+

return conversation

|

| 133 |

+

|

| 134 |

+

|

| 135 |

+

def trim_input_ids(

|

| 136 |

+

input_ids: torch.Tensor,

|

| 137 |

+

max_length: int

|

| 138 |

+

) -> torch.Tensor:

|

| 139 |

+

"""

|

| 140 |

+

Trim the input token IDs if they exceed the maximum length.

|

| 141 |

+

|

| 142 |

+

Parameters

|

| 143 |

+

----------

|

| 144 |

+

input_ids : torch.Tensor

|

| 145 |

+

The input token IDs.

|

| 146 |

+

|

| 147 |

+

max_length : int

|

| 148 |

+

The maximum length allowed.

|

| 149 |

+

|

| 150 |

+

Returns

|

| 151 |

+

-------

|

| 152 |

+

torch.Tensor

|

| 153 |

+

The trimmed input token IDs.

|

| 154 |

+

"""

|

| 155 |

+

if input_ids.shape[1] > max_length:

|

| 156 |

+

input_ids = input_ids[:, -max_length:]

|

| 157 |

+

print(f"Trimmed input from conversation as it was longer than {max_length} tokens.")

|

| 158 |

+

|

| 159 |

+

return input_ids

|

| 160 |

+

|

| 161 |

+

|

| 162 |

+

@spaces.GPU

|

| 163 |

+

def generate(

|

| 164 |

+

message: str,

|

| 165 |

+

chat_history: list,

|

| 166 |

+

system_prompt: str,

|

| 167 |

+

max_new_tokens: int = 1024,

|

| 168 |

+

temperature: float = 0.6,

|

| 169 |

+

top_p: float = 0.9,

|

| 170 |

+

top_k: int = 50,

|

| 171 |

+

repetition_penalty: float = 1.0,

|

| 172 |

+

) -> Iterator[str]:

|

| 173 |

+

"""

|

| 174 |

+

Generate a response to a given message within a conversation context.

|

| 175 |

+

|

| 176 |

+

This function utilizes a pre-trained language model to generate a response to a given message, considering the conversation context provided in the chat history.

|

| 177 |

+

|

| 178 |

+

Parameters

|

| 179 |

+

----------

|

| 180 |

+

message : str

|

| 181 |

+

The user's message for which a response is generated.

|

| 182 |

+

|

| 183 |

+

chat_history : list

|

| 184 |

+

A list containing tuples representing the conversation history. Each tuple should consist of two elements: the user's message and the assistant's response.

|

| 185 |

+

|

| 186 |

+

system_prompt : str

|

| 187 |

+

The system prompt, if any, to be included in the conversation context.

|

| 188 |

+

|

| 189 |

+

max_new_tokens : int, optional

|

| 190 |

+

The maximum number of tokens to generate for the response (default is 1024).

|

| 191 |

+

|

| 192 |

+

temperature : float, optional

|

| 193 |

+

The temperature parameter controlling the randomness of token generation (default is 0.6).

|

| 194 |

+

|

| 195 |

+

top_p : float, optional

|

| 196 |

+

The cumulative probability cutoff for token generation (default is 0.9).

|

| 197 |

+

|

| 198 |

+

top_k : int, optional

|

| 199 |

+

The number of top tokens to consider for token generation (default is 50).

|

| 200 |

+

|

| 201 |

+

repetition_penalty : float, optional

|

| 202 |

+

The repetition penalty controlling the likelihood of repeating tokens in the generated sequence (default is 1).

|

| 203 |

+

|

| 204 |

+

Yields

|

| 205 |

+

------

|

| 206 |

+

str

|

| 207 |

+

A generated response to the given message.

|

| 208 |

+

|

| 209 |

+

Notes

|

| 210 |

+

-----

|

| 211 |

+

- This function moves the input tensors to a CUDA device, so it requires a GPU and will fail on a CPU-only machine.

|

| 212 |

+

- The conversation history should be provided in the form of a list of tuples, where each tuple represents a user message followed by the assistant's response.

|

| 213 |

+

"""

|

| 214 |

+

global tokenizer

|

| 215 |

+

global model

|

| 216 |

+

|

| 217 |

+

conversation = preprocess_conversation(

|

| 218 |

+

message=message,

|

| 219 |

+

chat_history=chat_history,

|

| 220 |

+

system_prompt=system_prompt

|

| 221 |

+

)

|

| 222 |

+

|

| 223 |

+

input_ids = tokenizer.apply_chat_template(

|

| 224 |

+

conversation,

|

| 225 |

+

return_tensors="pt",

|

| 226 |

+

add_generation_prompt=True

|

| 227 |

+

)

|

| 228 |

+

input_ids = trim_input_ids(

|

| 229 |

+

input_ids=input_ids,

|

| 230 |

+

max_length=MAX_INPUT_TOKEN_LENGTH

|

| 231 |

+

)

|

| 232 |

+

|

| 233 |

+

input_ids = input_ids.to(

|

| 234 |

+

torch.device("cuda")

|

| 235 |

+

)

|

| 236 |

+

|

| 237 |

+

streamer = TextIteratorStreamer(

|

| 238 |

+

tokenizer,

|

| 239 |

+

timeout=10.0,

|

| 240 |

+

skip_prompt=True,

|

| 241 |

+

skip_special_tokens=True

|

| 242 |

+

)

|

| 243 |

+

|

| 244 |

+

generate_kwargs = {

|

| 245 |

+

"input_ids": input_ids,

|

| 246 |

+

"streamer": streamer,

|

| 247 |

+

"max_new_tokens": max_new_tokens,

|

| 248 |

+

"do_sample": False,

|

| 249 |

+

"num_beams": 1,

|

| 250 |

+

"repetition_penalty": repetition_penalty,

|

| 251 |

+

"eos_token_id": tokenizer.eos_token_id

|

| 252 |

+

}

|

| 253 |

+

|

| 254 |

+

t = Thread(

|

| 255 |

+

target=model.generate,

|

| 256 |

+

kwargs=generate_kwargs

|

| 257 |

+

)

|

| 258 |

+

t.start()

|

| 259 |

+

|

| 260 |

+

outputs = []

|

| 261 |

+

|

| 262 |

+

for text in streamer:

|

| 263 |

+

outputs.append(text)

|

| 264 |

+

|

| 265 |

+

yield "".join(outputs)

|

| 266 |

+

|

| 267 |

+

|

| 268 |

+

model, tokenizer, description = setup(

|

| 269 |

+

model_id="louisbrulenaudet/Pearl-7B-0211-ties",

|

| 270 |

+

description=description

|

| 271 |

+

)

|

| 272 |

+

|

| 273 |

+

chat_interface = gr.ChatInterface(

|

| 274 |

+

fn=generate,

|

| 275 |

+

additional_inputs=[

|

| 276 |

+

gr.Textbox(label="System prompt", lines=6),

|

| 277 |

+

gr.Slider(

|

| 278 |

+

label="Max new tokens",

|

| 279 |

+

minimum=1,

|

| 280 |

+

maximum=1048,

|

| 281 |

+

step=1,

|

| 282 |

+

value=1048,

|

| 283 |

+

),

|

| 284 |

+

gr.Slider(

|

| 285 |

+

label="Top-p (nucleus sampling)",

|

| 286 |

+

minimum=0.05,

|

| 287 |

+

maximum=1.0,

|

| 288 |

+

step=0.05,

|

| 289 |

+

value=0.9,

|

| 290 |

+

),

|

| 291 |

+

gr.Slider(

|

| 292 |

+

label="Top-k",

|

| 293 |

+

minimum=1,

|

| 294 |

+

maximum=1000,

|

| 295 |

+

step=1,

|

| 296 |

+

value=50,

|

| 297 |

+

),

|

| 298 |

+

gr.Slider(

|

| 299 |

+

label="Repetition penalty",

|

| 300 |

+

minimum=1.0,

|

| 301 |

+

maximum=2.0,

|

| 302 |

+

step=0.05,

|

| 303 |

+

value=1,

|

| 304 |

+

),

|

| 305 |

+

],

|

| 306 |

+

stop_btn=None,

|

| 307 |

+

examples=[

|

| 308 |

+

["implement snake game using pygame"],

|

| 309 |

+

["Can you explain briefly to me what is the Python programming language?"],

|

| 310 |

+

["write a program to find the factorial of a number"],

|

| 311 |

+

],

|

| 312 |

+

)

|

| 313 |

+

|

| 314 |

+

|

| 315 |

+

DESCRIPTION = """\

|

| 316 |

+

# Pearl-7B-0211-ties, an extraordinary 7B model

|

| 317 |

+

|

| 318 |

+

This space showcases the <a style='color:white;' href='https://huggingface.co/louisbrulenaudet/Pearl-7B-0211-ties'>Pearl-7B-0211-ties</a>

|

| 319 |

+

model by Louis Brulé Naudet, a language model with 7.24 billion parameters that achieves a score exceeding 75.10 on the Open LLM Leaderboard

|

| 320 |

+

(average).

|

| 321 |

+

"""

|

| 322 |

+

|

| 323 |

+

with gr.Blocks() as demo:

|

| 324 |

+

gr.Markdown(

|

| 325 |

+

value=DESCRIPTION

|

| 326 |

+

)

|

| 327 |

+

chat_interface.render()

|

| 328 |

+

|

| 329 |

+

if __name__ == "__main__":

|

| 330 |

+

demo.queue().launch(

|

| 331 |

+

show_api=False

|

| 332 |

+

)

|
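The streaming pattern used by `generate` in `app.py` (a worker thread runs the model while the caller iterates over a streamer) can be illustrated with the standard library alone. The sketch below is not the Space's code: `fake_model_generate` and `stream_response` are hypothetical names, and a `queue.Queue` stands in for `TextIteratorStreamer` so the example runs without a model or GPU.

```python
import queue
import threading
from typing import Iterator, List

# Marker object the producer sends to signal that generation has finished.
_SENTINEL = object()


def fake_model_generate(chunks: List[str], out: queue.Queue) -> None:
    """Hypothetical stand-in for model.generate: push text chunks, then a sentinel."""
    for chunk in chunks:
        out.put(chunk)
    out.put(_SENTINEL)


def stream_response(chunks: List[str], timeout: float = 10.0) -> Iterator[str]:
    """Yield the accumulated response each time the worker produces a new chunk."""
    out: queue.Queue = queue.Queue()
    worker = threading.Thread(target=fake_model_generate, args=(chunks, out))
    worker.start()

    outputs: List[str] = []
    while True:
        # Block until the next chunk arrives; raises queue.Empty after `timeout` seconds.
        item = out.get(timeout=timeout)
        if item is _SENTINEL:
            break
        outputs.append(item)
        yield "".join(outputs)

    worker.join()


if __name__ == "__main__":
    for partial in stream_response(["Hel", "lo", " world"]):
        print(partial)
```

Each yielded value is the full text so far rather than only the newest chunk, which is the shape a chat UI typically expects when it redraws the message on every update.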

requirements.txt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

gradio==4.22.0

|

| 2 |

+

transformers==4.38.2

|

| 3 |

+

spaces==0.24.2

|