Update README.md

README.md

CHANGED

@@ -1,10 +1,112 @@

---
title: Grpo Sql Optimizer
-emoji:
colorFrom: pink
colorTo: red
sdk: static
pinned: false
---

---
title: Grpo Sql Optimizer
emoji: 🧠
colorFrom: pink
colorTo: red
sdk: static
pinned: false
---

# GRPO Training for SQL Query Optimization (DuckDB-Verifiable Rewards)

Fine-tuned `Qwen/Qwen2.5-0.5B-Instruct` using **GRPO (Group Relative Policy Optimization)** to optimize SQL queries with **verifiable rewards**: we **execute** both the original and the rewritten SQL against a real DuckDB database and score the rewrite on measured speedup and correctness.

- **Repo (source of truth):** https://github.com/OfficialAbhinavSingh/SQL-Query-Optimization-Environment-
- **Model:** https://huggingface.co/laterabhi/grpo-sql-optimizer
- **Space:** https://huggingface.co/spaces/laterabhi/grpo-sql-optimizer

---

## Why this matters

LLMs often generate SQL that is syntactically valid but **slow** (or subtly wrong) at scale. Classic training setups use heuristic scoring, which can be gamed. This project trains and evaluates SQL optimization with **execution-grounded feedback**.

---

## Environment (5 tasks, increasing difficulty)

We use the **SQL Query Optimization Environment** (OpenEnv-compliant), backed by an in-memory DuckDB dataset:

- `users` (10k), `orders` (500k), `events` (1M), `products` (1k)

Tasks:
1. `task_1_basic_antipatterns` (easy)
2. `task_2_correlated_subqueries` (medium)
3. `task_3_wildcard_scan` (medium-hard)
4. `task_4_implicit_join` (hard)
5. `task_5_window_functions` (expert)
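
The core check the environment performs, running the original and the rewritten query and comparing timings and results, can be sketched as follows. This is a minimal illustration, not the repo's code: it uses Python's built-in `sqlite3` purely as a stand-in for DuckDB so that it runs without extra dependencies, and the tiny tables, the example queries, and the helper names `timed_rows` and `same_rows` are all hypothetical.

```python
import sqlite3
import time
from collections import Counter


def timed_rows(con, sql):
    """Run a query and return (rows, elapsed milliseconds)."""
    start = time.perf_counter()
    rows = con.execute(sql).fetchall()
    return rows, (time.perf_counter() - start) * 1000.0


def same_rows(a, b):
    """Order-independent comparison: same rows with the same multiplicities."""
    return Counter(a) == Counter(b)


# Stand-in database (assumption: the real environment uses DuckDB and far larger tables).
con = sqlite3.connect(":memory:")
con.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
con.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, amount REAL)")
con.executemany("INSERT INTO users VALUES (?, ?)", [(i, f"user{i}") for i in range(200)])
con.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(i, i % 200, float(i % 50)) for i in range(5000)],
)

# Hypothetical example: a correlated subquery and an equivalent join-based rewrite.
original_sql = """
    SELECT u.id, (SELECT COUNT(*) FROM orders o WHERE o.user_id = u.id) AS n
    FROM users u
"""
optimized_sql = """
    SELECT u.id, COUNT(o.id) AS n
    FROM users u LEFT JOIN orders o ON o.user_id = u.id
    GROUP BY u.id
"""

original_rows, original_ms = timed_rows(con, original_sql)
optimized_rows, optimized_ms = timed_rows(con, optimized_sql)

results_match = same_rows(original_rows, optimized_rows)
speedup = original_ms / max(optimized_ms, 1e-6)  # guard against a zero timing
print(f"results match: {results_match}, speedup: {speedup:.2f}x")
```

Comparing rows as multisets keeps the check independent of row order while still catching duplicated or missing rows. Single-run timings are noisy, so a real harness would likely repeat each query and aggregate.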

---

## Reward function (execution-grounded)

Composite reward in [0, 1], combining:

- **execution_speedup (35%)**: measured ratio `original_ms / optimized_ms` from DuckDB
- **result_correctness (20%)**: results match check (order-independent for large outputs)
- **issue_detection (25%)**: anti-pattern detection vs ground-truth keywords per task
- **approval_correctness (8%)**
- **summary_quality (7%)**
- **severity_labels (5%)**

This is designed to be **hard to game**: “fast but wrong” loses correctness; “verbose but slow” loses speedup.
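
The weights above sum to 100%, so the composite can be read as a weighted average of six component scores. The sketch below shows that combination under stated assumptions: each component is taken to be already normalized to [0, 1], and the mapping from the raw speedup ratio into [0, 1] (`speedup_score`, with a hypothetical cap of 4x) is illustrative only; the repo's actual normalization may differ.

```python
# Component weights as listed above (they sum to 1.0).
WEIGHTS = {
    "execution_speedup": 0.35,
    "result_correctness": 0.20,
    "issue_detection": 0.25,
    "approval_correctness": 0.08,
    "summary_quality": 0.07,
    "severity_labels": 0.05,
}


def speedup_score(original_ms, optimized_ms, cap=4.0):
    """Map original_ms / optimized_ms into [0, 1].

    Assumption: no speedup (ratio <= 1) scores 0 and a ratio of `cap` or
    more scores 1, with linear interpolation in between.
    """
    ratio = original_ms / max(optimized_ms, 1e-6)
    return min(max((ratio - 1.0) / (cap - 1.0), 0.0), 1.0)


def composite_reward(components):
    """Weighted sum of component scores, each expected in [0, 1]."""
    return sum(WEIGHTS[name] * components.get(name, 0.0) for name in WEIGHTS)


# "Fast but wrong": full speedup credit, zero correctness, everything else perfect.
fast_but_wrong = composite_reward({
    "execution_speedup": speedup_score(120.0, 20.0),
    "result_correctness": 0.0,
    "issue_detection": 1.0,
    "approval_correctness": 1.0,
    "summary_quality": 1.0,
    "severity_labels": 1.0,
})
print(round(fast_but_wrong, 2))
```

Under these assumed scores, a rewrite that is fast but returns wrong results forfeits the full 20% correctness weight, so it can never reach the top of the reward range.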

---

## Training setup (GRPO)

- **Algorithm:** GRPO (group-relative policy optimization)
- **Base model:** `Qwen/Qwen2.5-0.5B-Instruct`
- **Group size:** 4 completions per prompt
- **Notebook:** Kaggle (linked in the repo README)
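
GRPO scores each completion relative to the other completions sampled for the same prompt, rather than against a learned value function. A minimal sketch of that group-relative normalization for a group of 4 follows. The reward values are made up for illustration, and the exact normalization (population standard deviation, the `eps` term) is an assumption rather than the notebook's code.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize rewards within one prompt's group: (r - mean) / (std + eps)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for the 4 completions sampled for a single prompt.
group_rewards = [0.31, 0.62, 0.45, 0.58]
advantages = group_relative_advantages(group_rewards)

for reward, advantage in zip(group_rewards, advantages):
    print(f"reward={reward:.2f}  advantage={advantage:+.3f}")
```

Completions that score above their group's mean receive positive advantages and are reinforced; those below the mean are discouraged. If all 4 completions earn the same reward, every advantage is zero and that prompt contributes no learning signal.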

|

| 62 |

+

|

| 63 |

+

---

|

| 64 |

+

|

| 65 |

+

## Results (from the GitHub repo)

|

| 66 |

+

|

| 67 |

+

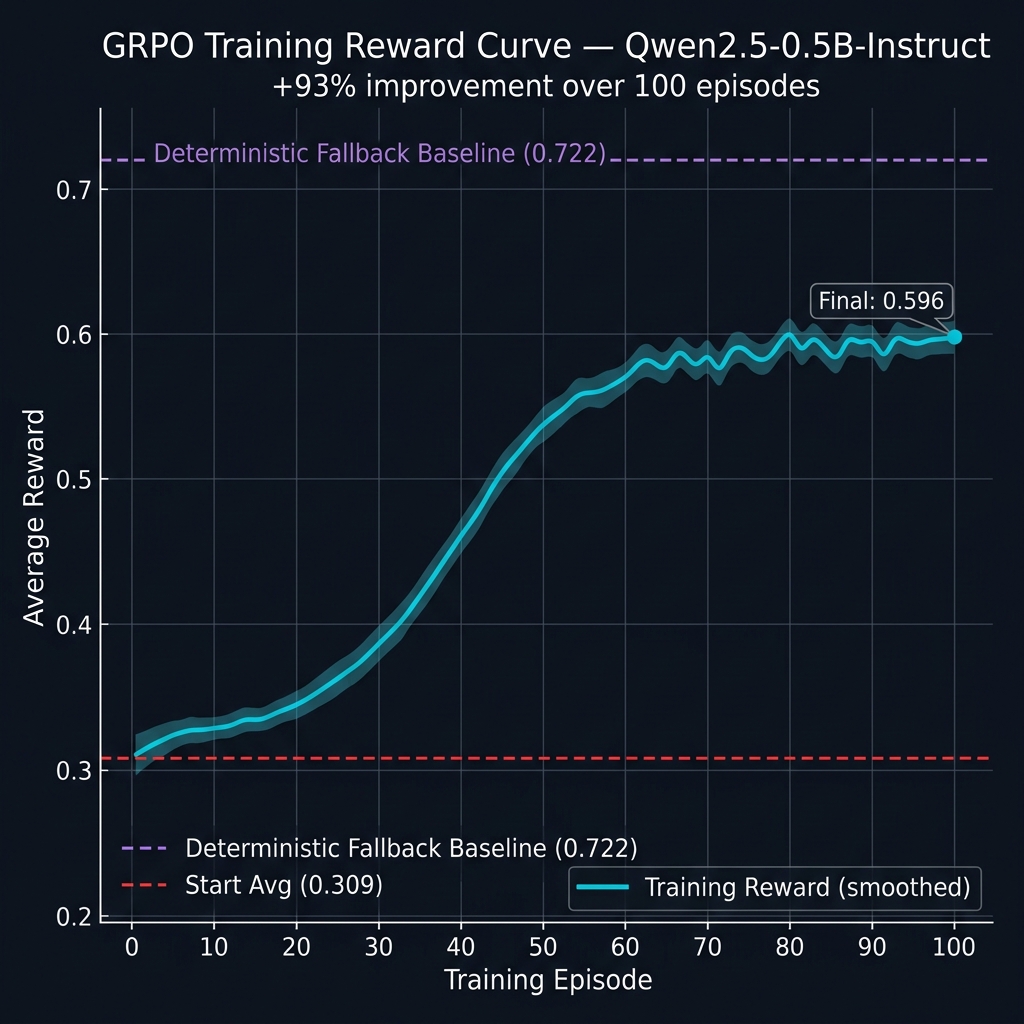

### Training progress (100 episodes)

|

| 68 |

+

|

| 69 |

+

| Metric | Value |

|

| 70 |

+

|--------|-------|

|

| 71 |

+

| Start avg (ep 1–10) | 0.3090 |

|

| 72 |

+

| End avg (ep 91–100) | 0.5962 |

|

| 73 |

+

| Improvement | **+93%** |
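
The improvement figure is the relative change between the two averages in the table, which can be checked directly:

```python
start_avg = 0.3090  # average reward, episodes 1-10
end_avg = 0.5962    # average reward, episodes 91-100

relative_improvement = (end_avg - start_avg) / start_avg
print(f"{relative_improvement:.1%}")  # 92.9%, which rounds to the reported +93%
```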

**Reward curve:**

### Final evaluation (per task)

| Task | Difficulty | Score |
|------|-----------|-------|
| task_1_basic_antipatterns | easy | **0.7500** ✅ |
| task_2_correlated_subqueries | medium | **0.8313** ✅ |
| task_3_wildcard_scan | medium-hard | **0.6563** ✅ |
| task_4_implicit_join | hard | **0.6563** ✅ |
| task_5_window_functions | expert | **0.6500** ✅ |

> **Task 5 note:** `task_5_window_functions` is the **expert** scenario, so it is expected to score lowest. This is not an error; it is simply the hardest task.

|

| 90 |

+

|

| 91 |

+

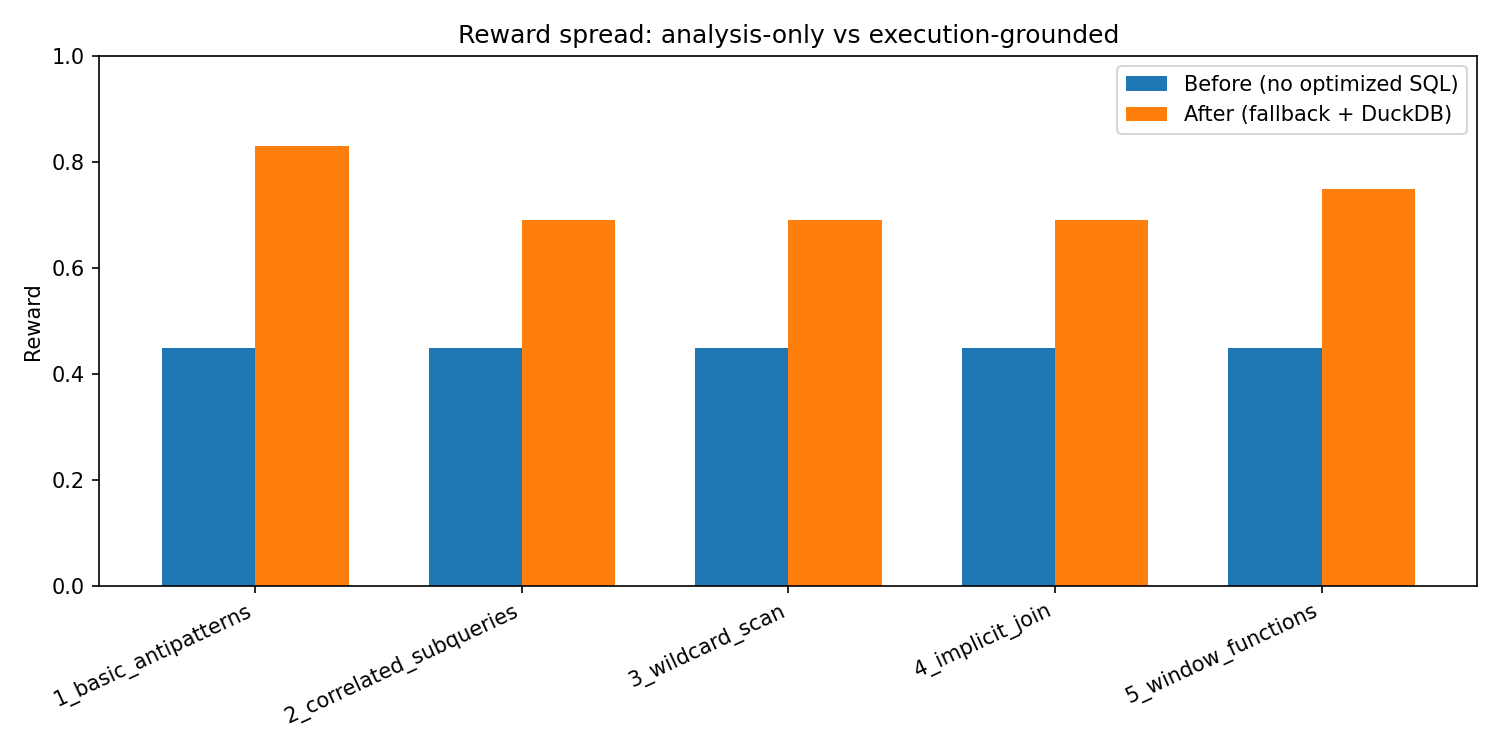

### “Before / After” (environment-only, no API keys)

|

| 92 |

+

|

| 93 |

+

We also provide a reproducible before/after contrast:

|

| 94 |

+

- **Before:** suggestions present but `optimized_query` empty (no speedup/correctness signal)

|

| 95 |

+

- **After:** deterministic fallback policy with a real optimized query

---

## How to reproduce (locally)

```bash
git clone https://github.com/OfficialAbhinavSingh/SQL-Query-Optimization-Environment-.git
cd SQL-Query-Optimization-Environment-
pip install -r requirements.txt

# Baselines (fallback + optional LLM if HF_TOKEN set)
python baseline_runner.py

# Environment-only before/after (no API keys)
python training/eval_before_after.py --save-dir results