Spaces:

Runtime error

Runtime error

Commit

•

0366b8b

1

Parent(s):

e14ba5f

Upload folder using huggingface_hub

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- .gitattributes +1 -0

- .gitignore +10 -0

- LICENSE +201 -0

- README.md +257 -12

- assets/00.gif +0 -0

- assets/01.gif +0 -0

- assets/02.gif +0 -0

- assets/03.gif +0 -0

- assets/04.gif +0 -0

- assets/05.gif +0 -0

- assets/06.gif +0 -0

- assets/07.gif +0 -0

- assets/08.gif +0 -0

- assets/09.gif +0 -0

- assets/10.gif +0 -0

- assets/11.gif +0 -0

- assets/12.gif +0 -0

- assets/13.gif +3 -0

- assets/72105_388.mp4_00-00.png +0 -0

- assets/72105_388.mp4_00-01.png +0 -0

- assets/72109_125.mp4_00-00.png +0 -0

- assets/72109_125.mp4_00-01.png +0 -0

- assets/72110_255.mp4_00-00.png +0 -0

- assets/72110_255.mp4_00-01.png +0 -0

- assets/74302_1349_frame1.png +0 -0

- assets/74302_1349_frame3.png +0 -0

- assets/Japan_v2_1_070321_s3_frame1.png +0 -0

- assets/Japan_v2_1_070321_s3_frame3.png +0 -0

- assets/Japan_v2_2_062266_s2_frame1.png +0 -0

- assets/Japan_v2_2_062266_s2_frame3.png +0 -0

- assets/frame0001_05.png +0 -0

- assets/frame0001_09.png +0 -0

- assets/frame0001_10.png +0 -0

- assets/frame0001_11.png +0 -0

- assets/frame0016_10.png +0 -0

- assets/frame0016_11.png +0 -0

- configs/inference_512_v1.0.yaml +103 -0

- configs/training_1024_v1.0/config.yaml +166 -0

- configs/training_1024_v1.0/run.sh +37 -0

- configs/training_512_v1.0/config.yaml +166 -0

- configs/training_512_v1.0/run.sh +37 -0

- gradio_app.py +82 -0

- lvdm/basics.py +100 -0

- lvdm/common.py +94 -0

- lvdm/data/base.py +23 -0

- lvdm/data/webvid.py +202 -0

- lvdm/distributions.py +95 -0

- lvdm/ema.py +76 -0

- lvdm/models/autoencoder.py +275 -0

- lvdm/models/autoencoder_dualref.py +1177 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

assets/13.gif filter=lfs diff=lfs merge=lfs -text

|

.gitignore

ADDED

|

@@ -0,0 +1,10 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

.DS_Store

|

| 2 |

+

*pyc

|

| 3 |

+

.vscode

|

| 4 |

+

__pycache__

|

| 5 |

+

*.egg-info

|

| 6 |

+

|

| 7 |

+

checkpoints

|

| 8 |

+

results

|

| 9 |

+

backup

|

| 10 |

+

LOG

|

LICENSE

ADDED

|

@@ -0,0 +1,201 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Apache License

|

| 2 |

+

Version 2.0, January 2004

|

| 3 |

+

http://www.apache.org/licenses/

|

| 4 |

+

|

| 5 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 6 |

+

|

| 7 |

+

1. Definitions.

|

| 8 |

+

|

| 9 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 10 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 11 |

+

|

| 12 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 13 |

+

the copyright owner that is granting the License.

|

| 14 |

+

|

| 15 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 16 |

+

other entities that control, are controlled by, or are under common

|

| 17 |

+

control with that entity. For the purposes of this definition,

|

| 18 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 19 |

+

direction or management of such entity, whether by contract or

|

| 20 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 21 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 22 |

+

|

| 23 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 24 |

+

exercising permissions granted by this License.

|

| 25 |

+

|

| 26 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 27 |

+

including but not limited to software source code, documentation

|

| 28 |

+

source, and configuration files.

|

| 29 |

+

|

| 30 |

+

"Object" form shall mean any form resulting from mechanical

|

| 31 |

+

transformation or translation of a Source form, including but

|

| 32 |

+

not limited to compiled object code, generated documentation,

|

| 33 |

+

and conversions to other media types.

|

| 34 |

+

|

| 35 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 36 |

+

Object form, made available under the License, as indicated by a

|

| 37 |

+

copyright notice that is included in or attached to the work

|

| 38 |

+

(an example is provided in the Appendix below).

|

| 39 |

+

|

| 40 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 41 |

+

form, that is based on (or derived from) the Work and for which the

|

| 42 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 43 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 44 |

+

of this License, Derivative Works shall not include works that remain

|

| 45 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 46 |

+

the Work and Derivative Works thereof.

|

| 47 |

+

|

| 48 |

+

"Contribution" shall mean any work of authorship, including

|

| 49 |

+

the original version of the Work and any modifications or additions

|

| 50 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 51 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 52 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 53 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 54 |

+

means any form of electronic, verbal, or written communication sent

|

| 55 |

+

to the Licensor or its representatives, including but not limited to

|

| 56 |

+

communication on electronic mailing lists, source code control systems,

|

| 57 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 58 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 59 |

+

excluding communication that is conspicuously marked or otherwise

|

| 60 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 61 |

+

|

| 62 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 63 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 64 |

+

subsequently incorporated within the Work.

|

| 65 |

+

|

| 66 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 67 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 68 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 69 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 70 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 71 |

+

Work and such Derivative Works in Source or Object form.

|

| 72 |

+

|

| 73 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 74 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 75 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 76 |

+

(except as stated in this section) patent license to make, have made,

|

| 77 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 78 |

+

where such license applies only to those patent claims licensable

|

| 79 |

+

by such Contributor that are necessarily infringed by their

|

| 80 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 81 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 82 |

+

institute patent litigation against any entity (including a

|

| 83 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 84 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 85 |

+

or contributory patent infringement, then any patent licenses

|

| 86 |

+

granted to You under this License for that Work shall terminate

|

| 87 |

+

as of the date such litigation is filed.

|

| 88 |

+

|

| 89 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 90 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 91 |

+

modifications, and in Source or Object form, provided that You

|

| 92 |

+

meet the following conditions:

|

| 93 |

+

|

| 94 |

+

(a) You must give any other recipients of the Work or

|

| 95 |

+

Derivative Works a copy of this License; and

|

| 96 |

+

|

| 97 |

+

(b) You must cause any modified files to carry prominent notices

|

| 98 |

+

stating that You changed the files; and

|

| 99 |

+

|

| 100 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 101 |

+

that You distribute, all copyright, patent, trademark, and

|

| 102 |

+

attribution notices from the Source form of the Work,

|

| 103 |

+

excluding those notices that do not pertain to any part of

|

| 104 |

+

the Derivative Works; and

|

| 105 |

+

|

| 106 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 107 |

+

distribution, then any Derivative Works that You distribute must

|

| 108 |

+

include a readable copy of the attribution notices contained

|

| 109 |

+

within such NOTICE file, excluding those notices that do not

|

| 110 |

+

pertain to any part of the Derivative Works, in at least one

|

| 111 |

+

of the following places: within a NOTICE text file distributed

|

| 112 |

+

as part of the Derivative Works; within the Source form or

|

| 113 |

+

documentation, if provided along with the Derivative Works; or,

|

| 114 |

+

within a display generated by the Derivative Works, if and

|

| 115 |

+

wherever such third-party notices normally appear. The contents

|

| 116 |

+

of the NOTICE file are for informational purposes only and

|

| 117 |

+

do not modify the License. You may add Your own attribution

|

| 118 |

+

notices within Derivative Works that You distribute, alongside

|

| 119 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 120 |

+

that such additional attribution notices cannot be construed

|

| 121 |

+

as modifying the License.

|

| 122 |

+

|

| 123 |

+

You may add Your own copyright statement to Your modifications and

|

| 124 |

+

may provide additional or different license terms and conditions

|

| 125 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 126 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 127 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 128 |

+

the conditions stated in this License.

|

| 129 |

+

|

| 130 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 131 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 132 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 133 |

+

this License, without any additional terms or conditions.

|

| 134 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 135 |

+

the terms of any separate license agreement you may have executed

|

| 136 |

+

with Licensor regarding such Contributions.

|

| 137 |

+

|

| 138 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 139 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 140 |

+

except as required for reasonable and customary use in describing the

|

| 141 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 142 |

+

|

| 143 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 144 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 145 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 146 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 147 |

+

implied, including, without limitation, any warranties or conditions

|

| 148 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 149 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 150 |

+

appropriateness of using or redistributing the Work and assume any

|

| 151 |

+

risks associated with Your exercise of permissions under this License.

|

| 152 |

+

|

| 153 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 154 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 155 |

+

unless required by applicable law (such as deliberate and grossly

|

| 156 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 157 |

+

liable to You for damages, including any direct, indirect, special,

|

| 158 |

+

incidental, or consequential damages of any character arising as a

|

| 159 |

+

result of this License or out of the use or inability to use the

|

| 160 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 161 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 162 |

+

other commercial damages or losses), even if such Contributor

|

| 163 |

+

has been advised of the possibility of such damages.

|

| 164 |

+

|

| 165 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 166 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 167 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 168 |

+

or other liability obligations and/or rights consistent with this

|

| 169 |

+

License. However, in accepting such obligations, You may act only

|

| 170 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 171 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 172 |

+

defend, and hold each Contributor harmless for any liability

|

| 173 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 174 |

+

of your accepting any such warranty or additional liability.

|

| 175 |

+

|

| 176 |

+

END OF TERMS AND CONDITIONS

|

| 177 |

+

|

| 178 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 179 |

+

|

| 180 |

+

To apply the Apache License to your work, attach the following

|

| 181 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 182 |

+

replaced with your own identifying information. (Don't include

|

| 183 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 184 |

+

comment syntax for the file format. We also recommend that a

|

| 185 |

+

file or class name and description of purpose be included on the

|

| 186 |

+

same "printed page" as the copyright notice for easier

|

| 187 |

+

identification within third-party archives.

|

| 188 |

+

|

| 189 |

+

Copyright Tencent

|

| 190 |

+

|

| 191 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 192 |

+

you may not use this file except in compliance with the License.

|

| 193 |

+

You may obtain a copy of the License at

|

| 194 |

+

|

| 195 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 196 |

+

|

| 197 |

+

Unless required by applicable law or agreed to in writing, software

|

| 198 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 199 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 200 |

+

See the License for the specific language governing permissions and

|

| 201 |

+

limitations under the License.

|

README.md

CHANGED

|

@@ -1,12 +1,257 @@

|

|

| 1 |

-

|

| 2 |

-

|

| 3 |

-

|

| 4 |

-

|

| 5 |

-

|

| 6 |

-

|

| 7 |

-

|

| 8 |

-

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

|

| 12 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

## ___***ToonCrafter: Generative Cartoon Interpolation***___

|

| 2 |

+

<!-- {: width="50%"} -->

|

| 3 |

+

<!--  -->

|

| 4 |

+

<div align="center">

|

| 5 |

+

|

| 6 |

+

|

| 7 |

+

|

| 8 |

+

</div>

|

| 9 |

+

|

| 10 |

+

## 🔆 Introduction

|

| 11 |

+

|

| 12 |

+

⚠️ Please check our [disclaimer](#disc) first.

|

| 13 |

+

|

| 14 |

+

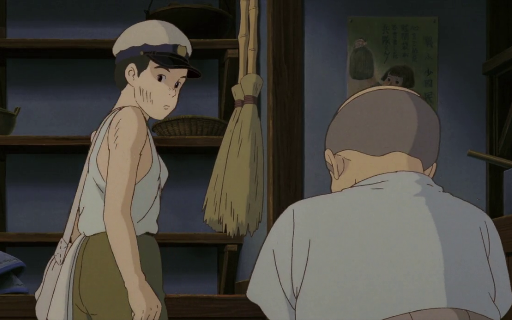

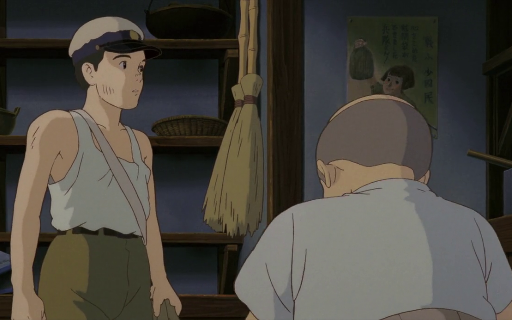

🤗 ToonCrafter can interpolate two cartoon images by leveraging the pre-trained image-to-video diffusion priors. Please check our project page and paper for more information. <br>

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

### 1.1 Showcases (512x320)

|

| 23 |

+

<table class="center">

|

| 24 |

+

<tr style="font-weight: bolder;text-align:center;">

|

| 25 |

+

<td>Input starting frame</td>

|

| 26 |

+

<td>Input ending frame</td>

|

| 27 |

+

<td>Generated video</td>

|

| 28 |

+

</tr>

|

| 29 |

+

<tr>

|

| 30 |

+

<td>

|

| 31 |

+

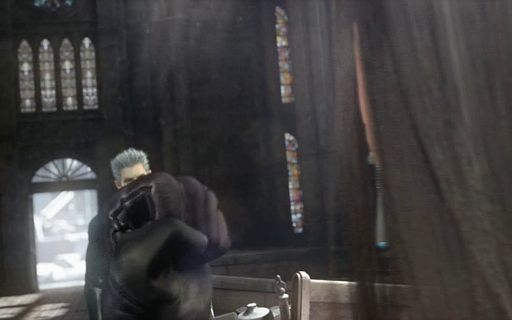

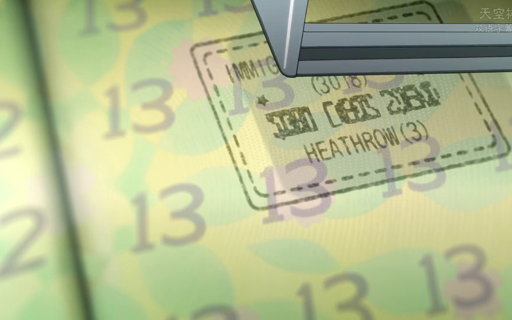

<img src=assets/72109_125.mp4_00-00.png width="250">

|

| 32 |

+

</td>

|

| 33 |

+

<td>

|

| 34 |

+

<img src=assets/72109_125.mp4_00-01.png width="250">

|

| 35 |

+

</td>

|

| 36 |

+

<td>

|

| 37 |

+

<img src=assets/00.gif width="250">

|

| 38 |

+

</td>

|

| 39 |

+

</tr>

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

<tr>

|

| 43 |

+

<td>

|

| 44 |

+

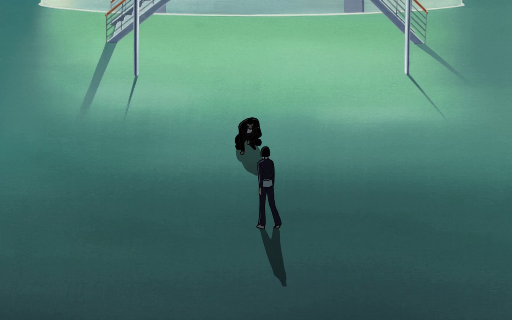

<img src=assets/Japan_v2_2_062266_s2_frame1.png width="250">

|

| 45 |

+

</td>

|

| 46 |

+

<td>

|

| 47 |

+

<img src=assets/Japan_v2_2_062266_s2_frame3.png width="250">

|

| 48 |

+

</td>

|

| 49 |

+

<td>

|

| 50 |

+

<img src=assets/03.gif width="250">

|

| 51 |

+

</td>

|

| 52 |

+

</tr>

|

| 53 |

+

<tr>

|

| 54 |

+

<td>

|

| 55 |

+

<img src=assets/Japan_v2_1_070321_s3_frame1.png width="250">

|

| 56 |

+

</td>

|

| 57 |

+

<td>

|

| 58 |

+

<img src=assets/Japan_v2_1_070321_s3_frame3.png width="250">

|

| 59 |

+

</td>

|

| 60 |

+

<td>

|

| 61 |

+

<img src=assets/02.gif width="250">

|

| 62 |

+

</td>

|

| 63 |

+

</tr>

|

| 64 |

+

<tr>

|

| 65 |

+

<td>

|

| 66 |

+

<img src=assets/74302_1349_frame1.png width="250">

|

| 67 |

+

</td>

|

| 68 |

+

<td>

|

| 69 |

+

<img src=assets/74302_1349_frame3.png width="250">

|

| 70 |

+

</td>

|

| 71 |

+

<td>

|

| 72 |

+

<img src=assets/01.gif width="250">

|

| 73 |

+

</td>

|

| 74 |

+

</tr>

|

| 75 |

+

</table>

|

| 76 |

+

|

| 77 |

+

### 1.2 Sparse sketch guidance

|

| 78 |

+

<table class="center">

|

| 79 |

+

<tr style="font-weight: bolder;text-align:center;">

|

| 80 |

+

<td>Input starting frame</td>

|

| 81 |

+

<td>Input ending frame</td>

|

| 82 |

+

<td>Input sketch guidance</td>

|

| 83 |

+

<td>Generated video</td>

|

| 84 |

+

</tr>

|

| 85 |

+

<tr>

|

| 86 |

+

<td>

|

| 87 |

+

<img src=assets/72105_388.mp4_00-00.png width="200">

|

| 88 |

+

</td>

|

| 89 |

+

<td>

|

| 90 |

+

<img src=assets/72105_388.mp4_00-01.png width="200">

|

| 91 |

+

</td>

|

| 92 |

+

<td>

|

| 93 |

+

<img src=assets/06.gif width="200">

|

| 94 |

+

</td>

|

| 95 |

+

<td>

|

| 96 |

+

<img src=assets/07.gif width="200">

|

| 97 |

+

</td>

|

| 98 |

+

</tr>

|

| 99 |

+

|

| 100 |

+

<tr>

|

| 101 |

+

<td>

|

| 102 |

+

<img src=assets/72110_255.mp4_00-00.png width="200">

|

| 103 |

+

</td>

|

| 104 |

+

<td>

|

| 105 |

+

<img src=assets/72110_255.mp4_00-01.png width="200">

|

| 106 |

+

</td>

|

| 107 |

+

<td>

|

| 108 |

+

<img src=assets/12.gif width="200">

|

| 109 |

+

</td>

|

| 110 |

+

<td>

|

| 111 |

+

<img src=assets/13.gif width="200">

|

| 112 |

+

</td>

|

| 113 |

+

</tr>

|

| 114 |

+

|

| 115 |

+

|

| 116 |

+

</table>

|

| 117 |

+

|

| 118 |

+

|

| 119 |

+

### 2. Applications

|

| 120 |

+

#### 2.1 Cartoon Sketch Interpolation (see project page for more details)

|

| 121 |

+

<table class="center">

|

| 122 |

+

<tr style="font-weight: bolder;text-align:center;">

|

| 123 |

+

<td>Input starting frame</td>

|

| 124 |

+

<td>Input ending frame</td>

|

| 125 |

+

<td>Generated video</td>

|

| 126 |

+

</tr>

|

| 127 |

+

|

| 128 |

+

<tr>

|

| 129 |

+

<td>

|

| 130 |

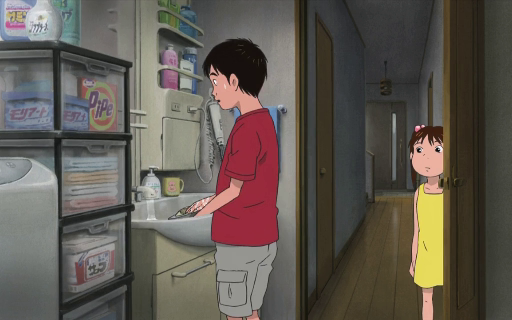

+

<img src=assets/frame0001_10.png width="250">

|

| 131 |

+

</td>

|

| 132 |

+

<td>

|

| 133 |

+

<img src=assets/frame0016_10.png width="250">

|

| 134 |

+

</td>

|

| 135 |

+

<td>

|

| 136 |

+

<img src=assets/10.gif width="250">

|

| 137 |

+

</td>

|

| 138 |

+

</tr>

|

| 139 |

+

|

| 140 |

+

|

| 141 |

+

<tr>

|

| 142 |

+

<td>

|

| 143 |

+

<img src=assets/frame0001_11.png width="250">

|

| 144 |

+

</td>

|

| 145 |

+

<td>

|

| 146 |

+

<img src=assets/frame0016_11.png width="250">

|

| 147 |

+

</td>

|

| 148 |

+

<td>

|

| 149 |

+

<img src=assets/11.gif width="250">

|

| 150 |

+

</td>

|

| 151 |

+

</tr>

|

| 152 |

+

|

| 153 |

+

</table>

|

| 154 |

+

|

| 155 |

+

|

| 156 |

+

#### 2.2 Reference-based Sketch Colorization

|

| 157 |

+

<table class="center">

|

| 158 |

+

<tr style="font-weight: bolder;text-align:center;">

|

| 159 |

+

<td>Input sketch</td>

|

| 160 |

+

<td>Input reference</td>

|

| 161 |

+

<td>Colorization results</td>

|

| 162 |

+

</tr>

|

| 163 |

+

|

| 164 |

+

<tr>

|

| 165 |

+

<td>

|

| 166 |

+

<img src=assets/04.gif width="250">

|

| 167 |

+

</td>

|

| 168 |

+

<td>

|

| 169 |

+

<img src=assets/frame0001_05.png width="250">

|

| 170 |

+

</td>

|

| 171 |

+

<td>

|

| 172 |

+

<img src=assets/05.gif width="250">

|

| 173 |

+

</td>

|

| 174 |

+

</tr>

|

| 175 |

+

|

| 176 |

+

|

| 177 |

+

<tr>

|

| 178 |

+

<td>

|

| 179 |

+

<img src=assets/08.gif width="250">

|

| 180 |

+

</td>

|

| 181 |

+

<td>

|

| 182 |

+

<img src=assets/frame0001_09.png width="250">

|

| 183 |

+

</td>

|

| 184 |

+

<td>

|

| 185 |

+

<img src=assets/09.gif width="250">

|

| 186 |

+

</td>

|

| 187 |

+

</tr>

|

| 188 |

+

|

| 189 |

+

</table>

|

| 190 |

+

|

| 191 |

+

|

| 192 |

+

|

| 193 |

+

|

| 194 |

+

|

| 195 |

+

|

| 196 |

+

|

| 197 |

+

## 📝 Changelog

|

| 198 |

+

- [ ] Add sketch control and colorization function.

|

| 199 |

+

- __[2024.05.29]__: 🔥🔥 Release code and model weights.

|

| 200 |

+

- __[2024.05.28]__: Launch the project page and update the arXiv preprint.

|

| 201 |

+

<br>

|

| 202 |

+

|

| 203 |

+

|

| 204 |

+

## 🧰 Models

|

| 205 |

+

|

| 206 |

+

|Model|Resolution|GPU Mem. & Inference Time (A100, ddim 50steps)|Checkpoint|

|

| 207 |

+

|:---------|:---------|:--------|:--------|

|

| 208 |

+

|ToonCrafter_512|320x512| TBD (`perframe_ae=True`)|[Hugging Face](https://huggingface.co/Doubiiu/ToonCrafter/blob/main/model.ckpt)|

|

| 209 |

+

|

| 210 |

+

|

| 211 |

+

Currently, our ToonCrafter can support generating videos of up to 16 frames with a resolution of 512x320. The inference time can be reduced by using fewer DDIM steps.

|

| 212 |

+

|

| 213 |

+

|

| 214 |

+

|

| 215 |

+

## ⚙️ Setup

|

| 216 |

+

|

| 217 |

+

### Install Environment via Anaconda (Recommended)

|

| 218 |

+

```bash

|

| 219 |

+

conda create -n tooncrafter python=3.8.5

|

| 220 |

+

conda activate tooncrafter

|

| 221 |

+

pip install -r requirements.txt

|

| 222 |

+

```

|

| 223 |

+

|

| 224 |

+

|

| 225 |

+

## 💫 Inference

|

| 226 |

+

### 1. Command line

|

| 227 |

+

|

| 228 |

+

Download pretrained ToonCrafter_512 and put the `model.ckpt` in `checkpoints/tooncrafter_512_interp_v1/model.ckpt`.

|

| 229 |

+

```bash

|

| 230 |

+

sh scripts/run.sh

|

| 231 |

+

```

|

| 232 |

+

|

| 233 |

+

|

| 234 |

+

### 2. Local Gradio demo

|

| 235 |

+

|

| 236 |

+

Download the pretrained model and put it in the corresponding directory according to the previous guidelines.

|

| 237 |

+

```bash

|

| 238 |

+

python gradio_app.py

|

| 239 |

+

```

|

| 240 |

+

|

| 241 |

+

|

| 242 |

+

|

| 243 |

+

|

| 244 |

+

|

| 245 |

+

|

| 246 |

+

<!-- ## 🤝 Community Support -->

|

| 247 |

+

|

| 248 |

+

|

| 249 |

+

|

| 250 |

+

<a name="disc"></a>

|

| 251 |

+

## 📢 Disclaimer

|

| 252 |

+

Calm down. Our framework opens up the era of generative cartoon interpolation, but due to the variaity of generative video prior, the success rate is not guaranteed.

|

| 253 |

+

|

| 254 |

+

⚠️This is an open-source research exploration, instead of commercial products. It can't meet all your expectations.

|

| 255 |

+

|

| 256 |

+

This project strives to impact the domain of AI-driven video generation positively. Users are granted the freedom to create videos using this tool, but they are expected to comply with local laws and utilize it responsibly. The developers do not assume any responsibility for potential misuse by users.

|

| 257 |

+

****

|

assets/00.gif

ADDED

|

assets/01.gif

ADDED

|

assets/02.gif

ADDED

|

assets/03.gif

ADDED

|

assets/04.gif

ADDED

|

assets/05.gif

ADDED

|

assets/06.gif

ADDED

|

assets/07.gif

ADDED

|

assets/08.gif

ADDED

|

assets/09.gif

ADDED

|

assets/10.gif

ADDED

|

assets/11.gif

ADDED

|

assets/12.gif

ADDED

|

assets/13.gif

ADDED

|

Git LFS Details

|

assets/72105_388.mp4_00-00.png

ADDED

|

assets/72105_388.mp4_00-01.png

ADDED

|

assets/72109_125.mp4_00-00.png

ADDED

|

assets/72109_125.mp4_00-01.png

ADDED

|

assets/72110_255.mp4_00-00.png

ADDED

|

assets/72110_255.mp4_00-01.png

ADDED

|

assets/74302_1349_frame1.png

ADDED

|

assets/74302_1349_frame3.png

ADDED

|

assets/Japan_v2_1_070321_s3_frame1.png

ADDED

|

assets/Japan_v2_1_070321_s3_frame3.png

ADDED

|

assets/Japan_v2_2_062266_s2_frame1.png

ADDED

|

assets/Japan_v2_2_062266_s2_frame3.png

ADDED

|

assets/frame0001_05.png

ADDED

|

assets/frame0001_09.png

ADDED

|

assets/frame0001_10.png

ADDED

|

assets/frame0001_11.png

ADDED

|

assets/frame0016_10.png

ADDED

|

assets/frame0016_11.png

ADDED

|

configs/inference_512_v1.0.yaml

ADDED

|

@@ -0,0 +1,103 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model:

|

| 2 |

+

target: lvdm.models.ddpm3d.LatentVisualDiffusion

|

| 3 |

+

params:

|

| 4 |

+

rescale_betas_zero_snr: True

|

| 5 |

+

parameterization: "v"

|

| 6 |

+

linear_start: 0.00085

|

| 7 |

+

linear_end: 0.012

|

| 8 |

+

num_timesteps_cond: 1

|

| 9 |

+

timesteps: 1000

|

| 10 |

+

first_stage_key: video

|

| 11 |

+

cond_stage_key: caption

|

| 12 |

+

cond_stage_trainable: False

|

| 13 |

+

conditioning_key: hybrid

|

| 14 |

+

image_size: [40, 64]

|

| 15 |

+

channels: 4

|

| 16 |

+

scale_by_std: False

|

| 17 |

+

scale_factor: 0.18215

|

| 18 |

+

use_ema: False

|

| 19 |

+

uncond_type: 'empty_seq'

|

| 20 |

+

use_dynamic_rescale: true

|

| 21 |

+

base_scale: 0.7

|

| 22 |

+

fps_condition_type: 'fps'

|

| 23 |

+

perframe_ae: True

|

| 24 |

+

loop_video: true

|

| 25 |

+

unet_config:

|

| 26 |

+

target: lvdm.modules.networks.openaimodel3d.UNetModel

|

| 27 |

+

params:

|

| 28 |

+

in_channels: 8

|

| 29 |

+

out_channels: 4

|

| 30 |

+

model_channels: 320

|

| 31 |

+

attention_resolutions:

|

| 32 |

+

- 4

|

| 33 |

+

- 2

|

| 34 |

+

- 1

|

| 35 |

+

num_res_blocks: 2

|

| 36 |

+

channel_mult:

|

| 37 |

+

- 1

|

| 38 |

+

- 2

|

| 39 |

+

- 4

|

| 40 |

+

- 4

|

| 41 |

+

dropout: 0.1

|

| 42 |

+

num_head_channels: 64

|

| 43 |

+

transformer_depth: 1

|

| 44 |

+

context_dim: 1024

|

| 45 |

+

use_linear: true

|

| 46 |

+

use_checkpoint: True

|

| 47 |

+

temporal_conv: True

|

| 48 |

+

temporal_attention: True

|

| 49 |

+

temporal_selfatt_only: true

|

| 50 |

+

use_relative_position: false

|

| 51 |

+

use_causal_attention: False

|

| 52 |

+

temporal_length: 16

|

| 53 |

+

addition_attention: true

|

| 54 |

+

image_cross_attention: true

|

| 55 |

+

default_fs: 24

|

| 56 |

+

fs_condition: true

|

| 57 |

+

|

| 58 |

+

first_stage_config:

|

| 59 |

+

target: lvdm.models.autoencoder.AutoencoderKL_Dualref

|

| 60 |

+

params:

|

| 61 |

+

embed_dim: 4

|

| 62 |

+

monitor: val/rec_loss

|

| 63 |

+

ddconfig:

|

| 64 |

+

double_z: True

|

| 65 |

+

z_channels: 4

|

| 66 |

+

resolution: 256

|

| 67 |

+

in_channels: 3

|

| 68 |

+

out_ch: 3

|

| 69 |

+

ch: 128

|

| 70 |

+

ch_mult:

|

| 71 |

+

- 1

|

| 72 |

+

- 2

|

| 73 |

+

- 4

|

| 74 |

+

- 4

|

| 75 |

+

num_res_blocks: 2

|

| 76 |

+

attn_resolutions: []

|

| 77 |

+

dropout: 0.0

|

| 78 |

+

lossconfig:

|

| 79 |

+

target: torch.nn.Identity

|

| 80 |

+

|

| 81 |

+

cond_stage_config:

|

| 82 |

+

target: lvdm.modules.encoders.condition.FrozenOpenCLIPEmbedder

|

| 83 |

+

params:

|

| 84 |

+

freeze: true

|

| 85 |

+

layer: "penultimate"

|

| 86 |

+

|

| 87 |

+

img_cond_stage_config:

|

| 88 |

+

target: lvdm.modules.encoders.condition.FrozenOpenCLIPImageEmbedderV2

|

| 89 |

+

params:

|

| 90 |

+

freeze: true

|

| 91 |

+

|

| 92 |

+

image_proj_stage_config:

|

| 93 |

+

target: lvdm.modules.encoders.resampler.Resampler

|

| 94 |

+

params:

|

| 95 |

+

dim: 1024

|

| 96 |

+

depth: 4

|

| 97 |

+

dim_head: 64

|

| 98 |

+

heads: 12

|

| 99 |

+

num_queries: 16

|

| 100 |

+

embedding_dim: 1280

|

| 101 |

+

output_dim: 1024

|

| 102 |

+

ff_mult: 4

|

| 103 |

+

video_length: 16

|

configs/training_1024_v1.0/config.yaml

ADDED

|

@@ -0,0 +1,166 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model:

|

| 2 |

+

pretrained_checkpoint: checkpoints/dynamicrafter_1024_v1/model.ckpt

|

| 3 |

+

base_learning_rate: 1.0e-05

|

| 4 |

+

scale_lr: False

|

| 5 |

+

target: lvdm.models.ddpm3d.LatentVisualDiffusion

|

| 6 |

+

params:

|

| 7 |

+

rescale_betas_zero_snr: True

|

| 8 |

+

parameterization: "v"

|

| 9 |

+

linear_start: 0.00085

|

| 10 |

+

linear_end: 0.012

|

| 11 |

+

num_timesteps_cond: 1

|

| 12 |

+

log_every_t: 200

|

| 13 |

+

timesteps: 1000

|

| 14 |

+

first_stage_key: video

|

| 15 |

+

cond_stage_key: caption

|

| 16 |

+

cond_stage_trainable: False

|

| 17 |

+

image_proj_model_trainable: True

|

| 18 |

+

conditioning_key: hybrid

|

| 19 |

+

image_size: [72, 128]

|

| 20 |

+

channels: 4

|

| 21 |

+

scale_by_std: False

|

| 22 |

+

scale_factor: 0.18215

|

| 23 |

+

use_ema: False

|

| 24 |

+

uncond_prob: 0.05

|

| 25 |

+

uncond_type: 'empty_seq'

|

| 26 |

+

rand_cond_frame: true

|

| 27 |

+

use_dynamic_rescale: true

|

| 28 |

+

base_scale: 0.3

|

| 29 |

+

fps_condition_type: 'fps'

|

| 30 |

+

perframe_ae: True

|

| 31 |

+

|

| 32 |

+

unet_config:

|

| 33 |

+

target: lvdm.modules.networks.openaimodel3d.UNetModel

|

| 34 |

+

params:

|

| 35 |

+

in_channels: 8

|

| 36 |

+

out_channels: 4

|

| 37 |

+

model_channels: 320

|

| 38 |

+

attention_resolutions:

|

| 39 |

+

- 4

|

| 40 |

+

- 2

|

| 41 |

+

- 1

|

| 42 |

+

num_res_blocks: 2

|

| 43 |

+

channel_mult:

|

| 44 |

+

- 1

|

| 45 |

+

- 2

|

| 46 |

+

- 4

|

| 47 |

+

- 4

|

| 48 |

+

dropout: 0.1

|

| 49 |

+

num_head_channels: 64

|

| 50 |

+

transformer_depth: 1

|

| 51 |

+

context_dim: 1024

|

| 52 |

+

use_linear: true

|

| 53 |

+

use_checkpoint: True

|

| 54 |

+

temporal_conv: True

|

| 55 |

+

temporal_attention: True

|

| 56 |

+

temporal_selfatt_only: true

|

| 57 |

+

use_relative_position: false

|

| 58 |

+

use_causal_attention: False

|

| 59 |

+

temporal_length: 16

|

| 60 |

+

addition_attention: true

|

| 61 |

+

image_cross_attention: true

|

| 62 |

+

default_fs: 10

|

| 63 |

+

fs_condition: true

|

| 64 |

+

|

| 65 |

+

first_stage_config:

|

| 66 |

+

target: lvdm.models.autoencoder.AutoencoderKL

|

| 67 |

+

params:

|

| 68 |

+

embed_dim: 4

|

| 69 |

+

monitor: val/rec_loss

|

| 70 |

+

ddconfig:

|

| 71 |

+

double_z: True

|

| 72 |

+

z_channels: 4

|

| 73 |

+

resolution: 256

|

| 74 |

+

in_channels: 3

|

| 75 |

+

out_ch: 3

|

| 76 |

+

ch: 128

|

| 77 |

+

ch_mult:

|

| 78 |

+

- 1

|

| 79 |

+

- 2

|

| 80 |

+

- 4

|

| 81 |

+

- 4

|

| 82 |

+

num_res_blocks: 2

|

| 83 |

+

attn_resolutions: []

|

| 84 |

+

dropout: 0.0

|

| 85 |

+

lossconfig:

|

| 86 |

+

target: torch.nn.Identity

|

| 87 |

+

|

| 88 |

+

cond_stage_config:

|

| 89 |

+

target: lvdm.modules.encoders.condition.FrozenOpenCLIPEmbedder

|

| 90 |

+

params:

|

| 91 |

+

freeze: true

|

| 92 |

+

layer: "penultimate"

|

| 93 |

+

|

| 94 |

+

img_cond_stage_config:

|

| 95 |

+

target: lvdm.modules.encoders.condition.FrozenOpenCLIPImageEmbedderV2

|

| 96 |

+

params:

|

| 97 |

+

freeze: true

|

| 98 |

+

|

| 99 |

+

image_proj_stage_config:

|

| 100 |

+

target: lvdm.modules.encoders.resampler.Resampler

|

| 101 |

+

params:

|

| 102 |

+

dim: 1024

|

| 103 |

+

depth: 4

|

| 104 |

+

dim_head: 64

|

| 105 |

+

heads: 12

|

| 106 |

+

num_queries: 16

|

| 107 |

+

embedding_dim: 1280

|

| 108 |

+

output_dim: 1024

|

| 109 |

+

ff_mult: 4

|

| 110 |

+

video_length: 16

|

| 111 |

+

|

| 112 |

+

data:

|

| 113 |

+

target: utils_data.DataModuleFromConfig

|

| 114 |

+

params:

|

| 115 |

+

batch_size: 1

|

| 116 |

+

num_workers: 12

|

| 117 |

+

wrap: false

|

| 118 |

+

train:

|

| 119 |

+

target: lvdm.data.webvid.WebVid

|

| 120 |

+

params:

|

| 121 |

+

data_dir: <WebVid10M DATA>

|

| 122 |

+

meta_path: <.csv FILE>

|

| 123 |

+

video_length: 16

|

| 124 |

+

frame_stride: 6

|

| 125 |

+

load_raw_resolution: true

|

| 126 |

+

resolution: [576, 1024]

|

| 127 |

+

spatial_transform: resize_center_crop

|

| 128 |

+

random_fs: true ## if true, we uniformly sample fs with max_fs=frame_stride (above)

|

| 129 |

+

|

| 130 |

+

lightning:

|

| 131 |

+

precision: 16

|

| 132 |

+

# strategy: deepspeed_stage_2

|

| 133 |

+

trainer:

|

| 134 |

+

benchmark: True

|

| 135 |

+

accumulate_grad_batches: 2

|

| 136 |

+

max_steps: 100000

|

| 137 |

+

# logger

|

| 138 |

+

log_every_n_steps: 50

|

| 139 |

+

# val

|

| 140 |

+

val_check_interval: 0.5

|

| 141 |

+

gradient_clip_algorithm: 'norm'

|

| 142 |

+

gradient_clip_val: 0.5

|

| 143 |

+

callbacks:

|

| 144 |

+

model_checkpoint:

|

| 145 |

+

target: pytorch_lightning.callbacks.ModelCheckpoint

|

| 146 |

+

params:

|

| 147 |

+

every_n_train_steps: 9000 #1000

|

| 148 |

+

filename: "{epoch}-{step}"

|

| 149 |

+

save_weights_only: True

|

| 150 |

+

metrics_over_trainsteps_checkpoint:

|

| 151 |

+

target: pytorch_lightning.callbacks.ModelCheckpoint

|

| 152 |

+

params:

|

| 153 |

+

filename: '{epoch}-{step}'

|

| 154 |

+

save_weights_only: True

|

| 155 |

+

every_n_train_steps: 10000 #20000 # 3s/step*2w=

|

| 156 |

+

batch_logger:

|

| 157 |

+

target: callbacks.ImageLogger

|

| 158 |

+

params:

|

| 159 |

+

batch_frequency: 500

|

| 160 |

+

to_local: False

|

| 161 |

+

max_images: 8

|

| 162 |

+

log_images_kwargs:

|

| 163 |

+

ddim_steps: 50

|

| 164 |

+

unconditional_guidance_scale: 7.5

|

| 165 |

+

timestep_spacing: uniform_trailing

|

| 166 |

+

guidance_rescale: 0.7

|

configs/training_1024_v1.0/run.sh

ADDED

|

@@ -0,0 +1,37 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# NCCL configuration

|

| 2 |

+

# export NCCL_DEBUG=INFO

|

| 3 |

+

# export NCCL_IB_DISABLE=0

|

| 4 |

+

# export NCCL_IB_GID_INDEX=3

|

| 5 |

+

# export NCCL_NET_GDR_LEVEL=3

|

| 6 |

+

# export NCCL_TOPO_FILE=/tmp/topo.txt

|

| 7 |

+

|

| 8 |

+

# args

|

| 9 |

+

name="training_1024_v1.0"

|

| 10 |

+

config_file=configs/${name}/config.yaml

|

| 11 |

+

|

| 12 |

+

# save root dir for logs, checkpoints, tensorboard record, etc.

|

| 13 |

+

save_root="<YOUR_SAVE_ROOT_DIR>"

|

| 14 |

+

|

| 15 |

+

mkdir -p $save_root/$name

|

| 16 |

+

|

| 17 |

+

## run

|

| 18 |

+

CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 python3 -m torch.distributed.launch \

|

| 19 |

+

--nproc_per_node=$HOST_GPU_NUM --nnodes=1 --master_addr=127.0.0.1 --master_port=12352 --node_rank=0 \

|

| 20 |

+

./main/trainer.py \

|

| 21 |

+

--base $config_file \

|

| 22 |

+

--train \

|

| 23 |

+

--name $name \

|

| 24 |

+

--logdir $save_root \

|

| 25 |

+

--devices $HOST_GPU_NUM \

|

| 26 |

+

lightning.trainer.num_nodes=1

|

| 27 |

+

|

| 28 |

+

## debugging

|

| 29 |

+

# CUDA_VISIBLE_DEVICES=0,1,2,3 python3 -m torch.distributed.launch \

|

| 30 |

+

# --nproc_per_node=4 --nnodes=1 --master_addr=127.0.0.1 --master_port=12352 --node_rank=0 \

|

| 31 |

+

# ./main/trainer.py \

|

| 32 |

+

# --base $config_file \

|

| 33 |

+

# --train \

|

| 34 |

+

# --name $name \

|

| 35 |

+

# --logdir $save_root \

|

| 36 |

+

# --devices 4 \

|

| 37 |

+

# lightning.trainer.num_nodes=1

|

configs/training_512_v1.0/config.yaml

ADDED

|

@@ -0,0 +1,166 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|