title: det-metrics

tags:

- evaluate

- metric

description: >-

Modified cocoevals.py which is wrapped into torchmetrics' mAP metric with

numpy instead of torch dependency.

sdk: gradio

sdk_version: 4.44.0

app_file: app.py

pinned: false

emoji: 🕵️

SEA-AI/det-metrics

This Hugging Face metric uses seametrics.detection.PrecisionRecallF1Support under the hood to compute COCO-like metrics for object detection tasks. It is a modified cocoeval.py wrapped inside torchmetrics' mAP metric, but it works with numpy arrays instead of torch tensors.

Getting Started

To get started with det-metrics, make sure you have the necessary dependencies installed. This metric relies on the evaluate and seametrics libraries for metric calculation and integration with FiftyOne datasets.

Installation

First, ensure you have Python 3.8 or later installed. Then install the evaluate and seametrics dependencies using pip:

pip install evaluate git+https://github.com/SEA-AI/seametrics@develop

Basic Usage

Here's how to quickly evaluate your object detection models using SEA-AI/det-metrics:

import evaluate

# Define your predictions and references (dict values can also be numpy arrays)

predictions = [

{

"boxes": [[449.3, 197.75390625, 6.25, 7.03125], [334.3, 181.58203125, 11.5625, 6.85546875]],

"labels": [0, 0],

"scores": [0.153076171875, 0.72314453125],

}

]

references = [

{

"boxes": [[449.3, 197.75390625, 6.25, 7.03125], [334.3, 181.58203125, 11.5625, 6.85546875]],

"labels": [0, 0],

"area": [132.2, 83.8],

}

]

# Load SEA-AI/det-metrics and evaluate

module = evaluate.load("SEA-AI/det-metrics")

module.add(prediction=predictions, reference=references)

results = module.compute()

print(results)

This prints the following dictionary containing metrics for the detection model. Each model's results are stored under the model name, or under "custom" when no model names are available, as in this case. An additional "classes" key maps the labels to the respective indices of the results. If the results are class agnostic, the value of "classes" is None.

{

"classes": ...,

"custom": {

"metrics": ...,

"eval": ...,

"params": ...

}

}

- metrics: A dictionary containing performance metrics for each area range

- eval: Output of COCOeval.accumulate()

- params: COCOeval parameters object

See Output Values for more detailed information about the returned results structure, which includes metrics, eval, and params fields for each model passed as input.
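Because the returned dictionary mixes the shared "classes" key with one entry per model, it can help to separate the two before reading any values. The following is a minimal sketch, not part of det-metrics itself: the results dictionary is a hand-written stand-in with empty placeholders where the real output has values, and per_model_entries is a hypothetical helper name.

```python
# Hand-written stand-in for the dictionary returned by module.compute().
# The empty dicts are placeholders for the elided "..." values.
results = {
    "classes": None,  # None means the results are class agnostic
    "custom": {"metrics": {}, "eval": {}, "params": {}},
}


def per_model_entries(results):
    """Return {model_name: entry} for every key except the shared "classes" key."""
    return {name: entry for name, entry in results.items() if name != "classes"}


models = per_model_entries(results)
for name, entry in models.items():
    # Every model entry is expected to carry the three documented fields.
    print(name, sorted(entry))
```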

FiftyOne Integration

Integrate SEA-AI/det-metrics with FiftyOne datasets for enhanced analysis and visualization:

Class-agnostic Example

import evaluate

import logging

from seametrics.payload.processor import PayloadProcessor

logging.basicConfig(level=logging.WARNING)

# Configure your dataset and model details

processor = PayloadProcessor(

dataset_name="SAILING_DATASET_QA",

gt_field="ground_truth_det",

models=["yolov5n6_RGB_D2304-v1_9C", "tf1zoo_ssd-mobilenet-v2_agnostic_D2207"],

sequence_list=["Trip_14_Seq_1"],

data_type="rgb",

slices=["rgb"]

)

# Evaluate using SEA-AI/det-metrics

module = evaluate.load("SEA-AI/det-metrics", payload=processor.payload)

results = module.compute()

print(results)

This prints the following dictionary containing metrics for each detection model, keyed by model name.

{

"yolov5n6_RGB_D2304-v1_9C": {

"metrics": ...,

"eval": ...,

"params": ...

},

"tf1zoo_ssd-mobilenet-v2_agnostic_D2207": {

"metrics": ...,

"eval": ...,

"params": ...

}

}

- metrics: A dictionary containing performance metrics for each area range

- eval: Output of COCOeval.accumulate()

- params: COCOeval parameters object

See Output Values for more detailed information about the returned results structure, which includes metrics, eval, and params fields for each model passed as input.

Class-specific Example

import evaluate

import logging

from seametrics.payload.processor import PayloadProcessor

logging.basicConfig(level=logging.WARNING)

# Configure your dataset and model details

processor = PayloadProcessor(

dataset_name="SAILING_DATASET_QA",

gt_field="ground_truth_det",

models=["yolov5n6_RGB_D2304-v1_9C", "tf1zoo_ssd-mobilenet-v2_agnostic_D2207"],

sequence_list=["Trip_14_Seq_1"],

data_type="rgb",

slices=["rgb"]

)

# Evaluate using SEA-AI/det-metrics

module = evaluate.load("SEA-AI/det-metrics", payload=processor.payload, class_agnostic=False)

print("Used labels: \n", module.label_mapping)

results = module.compute()

print("Results: \n", results)

Used labels:

{

"SHIP": 0,

"FISHING_SHIP": 0,

"BOAT_WITHOUT_SAILS": 1,

...

}

Results:

{

"yolov5n6_RGB_D2304-v1_9C": {

"metrics": ..., # metrics are arrays instead of single numbers, where the indices represent class 0, 1, etc. from the label mapping

"eval": ...,

"params": ...

},

"tf1zoo_ssd-mobilenet-v2_agnostic_D2207": {

"metrics": ...,

"eval": ...,

"params": ...

}

}
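In the label mapping printed above, several string labels can share one numeric index (SHIP and FISHING_SHIP both map to 0), so position i of a per-class metrics array may stand for a group of labels. The sketch below inverts such a mapping to make that grouping visible. It uses only the excerpt shown above, and names_by_index is a hypothetical helper, not a det-metrics function.

```python
from collections import defaultdict

# Excerpt of the label mapping printed above; the real mapping has more entries.
label_mapping = {"SHIP": 0, "FISHING_SHIP": 0, "BOAT_WITHOUT_SAILS": 1}


def names_by_index(mapping):
    """Group string labels by the numeric class index they are merged into."""
    grouped = defaultdict(list)
    for name, index in mapping.items():
        grouped[index].append(name)
    return dict(grouped)


index_to_names = names_by_index(label_mapping)
print(index_to_names)
```

Looking up index_to_names[0] then tells you which labels contribute to the first element of each per-class array.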

Metric Settings

Customize your evaluation by specifying various parameters when loading SEA-AI/det-metrics:

- area_ranges_tuples: Define different area ranges for metrics calculation.

- bbox_format: Set the bounding box format (e.g., "xywh").

- iou_threshold: Choose the IOU threshold for determining correct detections.

- class_agnostic: Specify whether to calculate metrics disregarding class labels.

- label_mapping: Provide an optional mapping of string labels to numeric labels in the form of a dictionary (e.g., {"SHIP": 0, "BOAT": 1}). Defaults to a label mapping defined by the SEA.AI label merging map.
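To make the bbox_format and iou_threshold settings concrete, here is a minimal sketch of an IoU check for two boxes. It assumes that "xywh" means [x_min, y_min, width, height], as in COCO annotations, and that a detection counts as a match when its IoU is at least the threshold. Both function names are hypothetical, and this is not the matching code used inside seametrics.

```python
def iou_xywh(box_a, box_b):
    """IoU of two boxes given as [x_min, y_min, width, height] (assumed layout)."""
    ax1, ay1, aw, ah = box_a
    bx1, by1, bw, bh = box_b
    ax2, ay2 = ax1 + aw, ay1 + ah
    bx2, by2 = bx1 + bw, by1 + bh

    # Width and height of the overlap rectangle, clamped at zero for disjoint boxes.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    union = aw * ah + bw * bh - inter
    return inter / union if union > 0 else 0.0


def is_match(box_pred, box_gt, iou_threshold):
    """True when the overlap reaches the threshold (inclusive, by assumption)."""
    return iou_xywh(box_pred, box_gt) >= iou_threshold


print(iou_xywh([0, 0, 2, 2], [1, 0, 2, 2]))
print(is_match([0, 0, 2, 2], [1, 0, 2, 2], 0.5))
```

With a very low threshold such as 0.00001, almost any overlap between a prediction and a ground truth box is enough to count as a match.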

area_ranges_tuples = [

("all", [0, 1e5**2]),

("small", [0**2, 6**2]),

("medium", [6**2, 12**2]),

("large", [12**2, 1e5**2]),

]

module = evaluate.load(

"SEA-AI/det-metrics",

iou_threshold=[0.00001],

area_ranges_tuples=area_ranges_tuples,

)
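Each entry of area_ranges_tuples pairs a name with a [low, high] interval in square pixels, and an object can fall into more than one range (for example "all" plus one size bucket). The sketch below shows one way to read these tuples. Treating both bounds as inclusive is an assumption, and matching_ranges is a hypothetical helper rather than part of det-metrics.

```python
area_ranges_tuples = [
    ("all", [0, 1e5**2]),
    ("small", [0**2, 6**2]),
    ("medium", [6**2, 12**2]),
    ("large", [12**2, 1e5**2]),
]


def matching_ranges(area, ranges):
    """Names of every range whose [low, high] interval contains the area.

    Both bounds are treated as inclusive, which is an assumption of this sketch.
    """
    return [name for name, (low, high) in ranges if low <= area <= high]


print(matching_ranges(83.8, area_ranges_tuples))
print(matching_ranges(20.0, area_ranges_tuples))
```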

Output Values

For every model passed as input, the results contain the metrics, eval, and params fields. If no specific model was passed (usage without payload), the default model name “custom” will be used.

{

"model_1": {

"metrics": ...,

"eval": ...,

"params": ...

},

"model_2": {

"metrics": ...,

"eval": ...,

"params": ...

},

"model_3": {

"metrics": ...,

"eval": ...,

"params": ...

},

...

}

Metrics

The SEA-AI/det-metrics metrics dictionary provides a detailed breakdown of performance metrics for each specified area range:

- range: The area range considered.

- iouThr: The IOU threshold applied.

- maxDets: The maximum number of detections evaluated.

- tp/fp/fn: Counts of true positives, false positives, and false negatives.

- duplicates: Number of duplicate detections.

- precision/recall/f1: Calculated precision, recall, and F1 score.

- support: Number of ground truth boxes considered.

- fpi: Number of images with predictions but no ground truths.

- nImgs: Total number of images evaluated.

If det-metrics is computed with class_agnostic=False, all counts (tp/fp/fn/duplicates/support/fpi) and scores (precision/recall/f1) are arrays instead of single numbers. For a label mapping of {"SHIP": 0, "BOAT": 1}, an example array could be tp=np.array([10, 4]), which means there are 10 true positive ships and 4 true positive boats.
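The relationship between the counts and the scores can be sketched with the textbook definitions of precision, recall, and F1. This is an illustration only: how det-metrics treats duplicates is not covered here, returning 0.0 for an empty denominator is a choice made for this sketch, and the fp and fn values are made up to go with the tp example above.

```python
def safe_div(numerator, denominator):
    """Divide, returning 0.0 when the denominator is zero (a choice of this sketch)."""
    return numerator / denominator if denominator else 0.0


def precision_recall_f1(tp, fp, fn):
    """Textbook precision, recall, and F1 from raw counts."""
    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    f1 = safe_div(2 * precision * recall, precision + recall)
    return precision, recall, f1


# Per-class counts: index 0 = SHIP, index 1 = BOAT.
# tp follows the example above; fp and fn are invented for illustration.
tp, fp, fn = [10, 4], [2, 4], [0, 1]
per_class = [precision_recall_f1(*counts) for counts in zip(tp, fp, fn)]

for index, (precision, recall, f1) in enumerate(per_class):
    print(index, round(precision, 3), round(recall, 3), round(f1, 3))
```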

Eval

The SEA-AI/det-metrics evaluation dictionary provides details about evaluation metrics and results. Below is a description of each field:

params: Parameters used for evaluation, defining settings and conditions.

counts: Dimensions of parameters used in evaluation, represented as a list [T, R, K, A, M]:

- T: IoU threshold (default: [1e-10])

- R: Recall threshold (not used)

- K: Class index (class-agnostic, so only 0)

- A: Area range (0=all, 1=valid_n, 2=valid_w, 3=tiny, 4=small, 5=medium, 6=large)

- M: Max detections (default: [100])

date: The date when the evaluation was performed.

precision: A multi-dimensional array [TxRxKxAxM] storing precision values for each evaluation setting.

recall: A multi-dimensional array [TxKxAxM] storing maximum recall values for each evaluation setting.

scores: Scores for each detection.

TP: True Positives - correct detections matching ground truth.

FP: False Positives - incorrect detections not matching ground truth.

FN: False Negatives - ground truth objects not detected.

duplicates: Duplicate detections of the same object.

support: Number of ground truth objects for each category.

FPI: False Positives per Image.

TPC: True Positives per Category.

FPC: False Positives per Category.

sorted_conf: Confidence scores of detections sorted in descending order.

Note: precision and recall are set to -1 for settings with no ground truth objects.
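Since precision is laid out as [TxRxKxAxM] while recall drops the R axis, the same selection needs two different index tuples. The following sketch builds both from a single dictionary of axis positions. The helper name axis_index and the chosen positions are illustrative assumptions.

```python
PRECISION_AXES = ("T", "R", "K", "A", "M")  # order of the precision array axes
RECALL_AXES = ("T", "K", "A", "M")          # recall has no recall-threshold axis


def axis_index(selection, axes):
    """Build an index tuple in the given axis order from an {axis: position} dict."""
    return tuple(selection[axis] for axis in axes)


# First IoU threshold, first class, third area range, first maxDets setting.
selection = {"T": 0, "R": 0, "K": 0, "A": 2, "M": 0}
precision_idx = axis_index(selection, PRECISION_AXES)
recall_idx = axis_index(selection, RECALL_AXES)

print(precision_idx)  # (0, 0, 0, 2, 0)
print(recall_idx)     # (0, 0, 2, 0)
```

If the precision and recall entries are numpy arrays, such tuples can be used directly as indices, for example precision[precision_idx].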

Params

The params return value holds the COCO evaluation parameters, as known from pycocotools, in the form of a dictionary of evaluation settings that can be customized. Here’s a breakdown of what each parameter means:

- imgIds: List of image IDs to use for evaluation. By default, it evaluates on all images.

- catIds: List of category IDs to use for evaluation. By default, it evaluates on all categories.

- iouThrs: List of IoU (Intersection over Union) thresholds for evaluation. By default, it uses thresholds from 0.5 to 0.95 with a step of 0.05 (i.e., [0.5, 0.55, …, 0.95]).

- recThrs: List of recall thresholds for evaluation. By default, it uses 101 thresholds from 0 to 1 with a step of 0.01 (i.e., [0, 0.01, …, 1]).

- areaRng: Object area ranges for evaluation. This parameter defines the sizes of objects to evaluate. It is specified as a list of tuples, where each tuple represents a range of area in square pixels.

- maxDets: List of thresholds on maximum detections per image for evaluation. By default, it evaluates with thresholds of 1, 10, and 100 detections per image.

- iouType: Type of IoU calculation used for evaluation. It can be ‘segm’ (segmentation), ‘bbox’ (bounding box), or ‘keypoints’.

- class_agnostic: Boolean flag indicating whether category labels are ignored during evaluation (default is 1, meaning true).

- label_mapping: Dict of str: int pairs, mapping string labels to numeric labels, so that the payload labels can be mapped to numeric labels (default is a label mapping defined by the class merging structure). Should be provided only if class_agnostic=False.

Note: If useCats=0, category labels are ignored, as in proposal scoring. Multiple areaRngs [Ax2] and maxDets [Mx1] can be specified.
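The default threshold lists described for iouThrs and recThrs can be reproduced in a few lines. This sketch only rebuilds the values stated in the list above (0.5 to 0.95 in steps of 0.05, and 101 recall thresholds from 0 to 1). It does not read them from an actual params object, and the variable names are illustrative.

```python
# IoU thresholds 0.5, 0.55, ..., 0.95; rounding avoids floating point drift.
iou_thrs = [round(0.5 + 0.05 * i, 2) for i in range(10)]

# Recall thresholds 0, 0.01, ..., 1 (101 values).
rec_thrs = [round(0.01 * i, 2) for i in range(101)]

print(len(iou_thrs), iou_thrs[0], iou_thrs[-1])
print(len(rec_thrs), rec_thrs[0], rec_thrs[-1])
```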

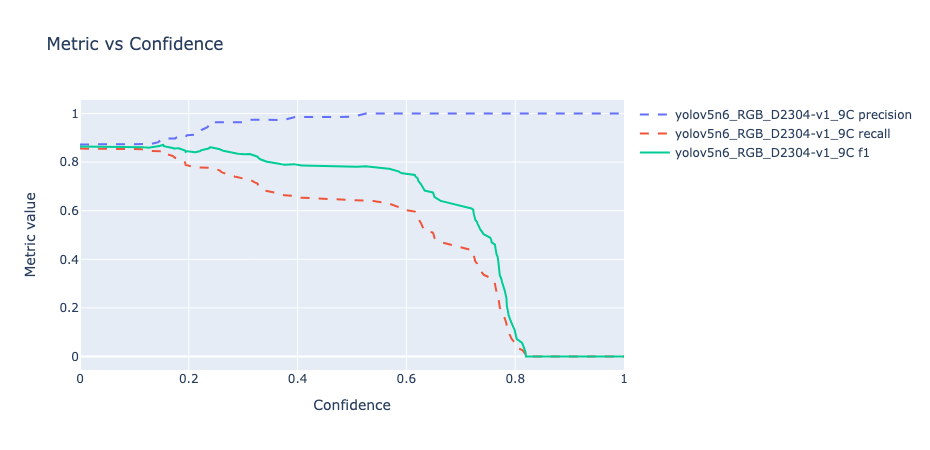

Confidence Curves

When you use module.generate_confidence_curves(), it creates a graph that shows how metrics like precision, recall, and f1 score change as you adjust confidence thresholds. This helps you see the trade-offs between precision (how accurate positive predictions are) and recall (how well the model finds all positive instances) at different confidence levels. As confidence scores go up, models usually have higher precision but may find fewer positive instances, reflecting their certainty in making correct predictions.
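The trade-off described above can be sketched by recomputing precision, recall, and F1 at several confidence thresholds. This is a simplified illustration, not what generate_confidence_curves() does internally: it assumes each detection already carries a fixed true-positive flag, and the detections and ground truth count are made-up numbers.

```python
def scores_at_threshold(detections, n_ground_truth, threshold):
    """Precision, recall, and F1 when only detections with score >= threshold are kept.

    detections is a list of (score, is_true_positive) pairs (a simplifying assumption).
    """
    kept = [is_tp for score, is_tp in detections if score >= threshold]
    tp = sum(kept)
    precision = tp / len(kept) if kept else 0.0
    recall = tp / n_ground_truth if n_ground_truth else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


# Made-up detections: (confidence score, matched a ground truth box?)
detections = [(0.9, True), (0.8, False), (0.6, True), (0.3, False)]
n_ground_truth = 3

for threshold in (0.25, 0.5, 0.85):
    precision, recall, f1 = scores_at_threshold(detections, n_ground_truth, threshold)
    print(threshold, round(precision, 3), round(recall, 3), round(f1, 3))
```

In this toy data, raising the threshold from 0.5 to 0.85 lifts precision to 1.0 while recall drops to one third, which is the pattern the curves are meant to reveal.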

Confidence Config

The confidence_config dictionary is set as {"T": 0, "R": 0, "K": 0, "A": 0, "M": 0}, where:

- T = 0: represents the IoU (Intersection over Union) threshold.

- R = 0: is the recall threshold, although it's currently not used.

- K = 0: indicates a class index for class-agnostic mean Average Precision (mAP), with only one class indexed at 0.

- A = 0: signifies that all object sizes are considered for evaluation. (0=all, 1=small, 2=medium, 3=large, ... depending on area ranges)

- M = 0: sets the default maximum detections (maxDets) to 100 in precision_recall_f1_support calculations.

import evaluate

import logging

from seametrics.payload.processor import PayloadProcessor

logging.basicConfig(level=logging.WARNING)

# Configure your dataset and model details

processor = PayloadProcessor(

dataset_name="SAILING_DATASET_QA",

gt_field="ground_truth_det",

models=["yolov5n6_RGB_D2304-v1_9C"],

sequence_list=["Trip_14_Seq_1"],

data_type="rgb",

)

# Evaluate using SEA-AI/det-metrics

module = evaluate.load("SEA-AI/det-metrics", payload=processor.payload)

results = module.compute()

# Plot confidence curves

confidence_config={"T": 0, "R": 0, "K": 0, "A": 0, "M": 0}

fig = module.generate_confidence_curves(results, confidence_config)

fig.show()

W&B logging

Calling module.wandb() logs the detection metric values for each model to Weights & Biases (W&B). The W&B key is stored as a Secret in this repository.

Params

- wandb_project: Name of the W&B project (default: 'detection_metrics')

import evaluate

import logging

from seametrics.payload.processor import PayloadProcessor

logging.basicConfig(level=logging.WARNING)

# Configure your dataset and model details

processor = PayloadProcessor(

dataset_name="SAILING_PANOPTIC_DATASET_QA_REVIEWED",

gt_field="ground_truth_det",

models=["ahoy-b2-IR"],

data_type="thermal",

slices=["thermal_right", "thermal_left", "thermal_narrow", "thermal_wide"],

)

AREA_RNGS = [[0, 1e5**2],

[0**2, 6**2],

[6**2, 12**2],

[12**2, 1e5**2]]

AREA_NAMES = ["all", "small", "medium", "large"]

area_ranges_tuples = list(zip(AREA_NAMES, AREA_RNGS))

# Evaluate using SEA-AI/det-metrics

module = evaluate.load("SEA-AI/det-metrics", payload=processor.payload, area_ranges_tuples=area_ranges_tuples)

results = module.compute()

# Log the results on W&B.

module.wandb(results)

Further References

- seametrics Library: Explore the seametrics GitHub repository for more details on the underlying library.

- pycocotools: SEA-AI/det-metrics calculations are based on pycocotools, a widely used library for COCO dataset evaluation.

- Understanding Metrics: For a deeper understanding of precision, recall, and other metrics, read this comprehensive guide.

Contribution

Your contributions are welcome! If you'd like to improve SEA-AI/det-metrics or add new features, please feel free to fork the repository, make your changes, and submit a pull request.