Commit d1721f9

Parent(s): 9946bad

First commit

Changed files:

- app.py +46 -0
- packages.txt +1 -0
- realesrgan/__pycache__/utils.cpython-37.pyc +0 -0
- realesrgan/utils.py +293 -0
- render0001.png +0 -0
- render0001_DC.png +0 -0
- render1546.png +0 -0
- render1546_DC.png +0 -0
- render1682.png +0 -0
- render1682_DC.png +0 -0
- requirements.txt +9 -0

app.py

ADDED

@@ -0,0 +1,46 @@
+import gradio as gr
+from PIL import Image
+
+from basicsr.archs.rrdbnet_arch import RRDBNet
+from realesrgan.utils import RealESRGANer
+
+# model load
+netscale = 4
+super_res_model = RRDBNet(num_in_ch=3, num_out_ch=3, num_feat=64, num_block=23, num_grow_ch=32, scale=4)
+super_res_upsampler = RealESRGANer(scale=netscale, model_path='model_zoo/RealESRGAN_x4plus.pth', model=super_res_model, tile=0,
+    tile_pad=10, pre_pad=0, half=False, gpu_id=None)
+fisheye_correction_model = RRDBNet(num_in_ch=3, num_out_ch=3, num_feat=64, num_block=23, num_grow_ch=32, scale=4)
+fisheye_correction_upsampler = RealESRGANer(scale=netscale, model_path='model_zoo/RealESRGAN_x4plus_fine_tuned_400k.pth', model=fisheye_correction_model, tile=0,
+    tile_pad=10, pre_pad=0, half=False, gpu_id=None)
+
+def predict(radio_btn, input_img):
+    out = None
+
+    # preprocess input
+    if(input_img is not None):
+        if(radio_btn == 'Super resolution'):
+            upsampler = super_res_upsampler
+        else:
+            upsampler = fisheye_correction_upsampler
+        output, _ = upsampler.enhance(input_img, outscale=4)
+
+        # convert to pil image
+        out = Image.fromarray(output)
+    return out
+
+
+gr.Interface(
+    fn=predict,
+    inputs=[
+        gr.Radio(choices=["Super resolution", "Distortion correction"], value="Super resolution", label="Select task:"), gr.inputs.Image()
+    ],
+    outputs=[
+        gr.inputs.Image()
+    ],
+    title="Real-ESRGAN moon distortion",
+    description="Description of the app",
+    examples=[
+        ["Super resolution", "render0001.png"], ["Super resolution", "render1546.png"], ["Super resolution", "render1682.png"],
+        ["Distortion correction", "render0001_DC.png"], ["Distortion correction", "render1546_DC.png"], ["Distortion correction", "render1682_DC.png"]
+    ]
+).launch()
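The task selection in `predict` (radio label in, upsampler out) can be sketched on its own. This is a minimal illustration, not the code from the commit: `NearestUpsampler` and `UPSAMPLERS` are hypothetical names, the stand-in repeats pixels instead of running a network, and images are modeled as nested lists so the sketch runs without NumPy, Pillow, or model weights.

```python
class NearestUpsampler:
    """Hypothetical stand-in that mimics the (output, mode) return of enhance()."""

    def __init__(self, scale):
        self.scale = scale

    def enhance(self, img, outscale=None):
        # Repeat every pixel `scale` times horizontally and every row
        # `scale` times vertically (nearest-neighbour upscaling).
        out = []
        for row in img:
            wide = [px for px in row for _ in range(self.scale)]
            out.extend(list(wide) for _ in range(self.scale))
        return out, 'L'


# Assumed mapping from the radio-button labels to one upsampler each.
UPSAMPLERS = {
    'Super resolution': NearestUpsampler(4),
    'Distortion correction': NearestUpsampler(4),
}


def predict(task, input_img):
    """Return the upscaled image, or None when no image was supplied."""
    if input_img is None:
        return None
    upsampler = UPSAMPLERS.get(task)
    if upsampler is None:
        raise ValueError(f'unknown task: {task!r}')
    output, _ = upsampler.enhance(input_img, outscale=4)
    return output
```

A dictionary lookup keeps the label-to-model mapping in one place and turns an unexpected label into an explicit error, whereas an `if`/`else` silently routes every other label to the second model.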

packages.txt

ADDED

@@ -0,0 +1 @@
+python3-opencv

realesrgan/__pycache__/utils.cpython-37.pyc

ADDED

Binary file (8.42 kB).

realesrgan/utils.py

ADDED

@@ -0,0 +1,293 @@
+import cv2
+import math
+import numpy as np
+import os
+import queue
+import threading
+import torch
+from basicsr.utils.download_util import load_file_from_url
+from torch.nn import functional as F
+
+ROOT_DIR = os.path.dirname(os.path.dirname(os.path.abspath(__file__)))
+
+
+class RealESRGANer():
+    """A helper class for upsampling images with RealESRGAN.
+
+    Args:
+        scale (int): Upsampling scale factor used in the networks. It is usually 2 or 4.
+        model_path (str): The path to the pretrained model. It can be urls (will first download it automatically).
+        model (nn.Module): The defined network. Default: None.
+        tile (int): As too large images result in the out of GPU memory issue, so this tile option will first crop
+            input images into tiles, and then process each of them. Finally, they will be merged into one image.
+            0 denotes for do not use tile. Default: 0.
+        tile_pad (int): The pad size for each tile, to remove border artifacts. Default: 10.
+        pre_pad (int): Pad the input images to avoid border artifacts. Default: 10.
+        half (float): Whether to use half precision during inference. Default: False.
+    """
+
+    def __init__(self,
+                 scale,
+                 model_path,
+                 model=None,
+                 tile=0,
+                 tile_pad=10,
+                 pre_pad=10,
+                 half=False,
+                 device=None,
+                 gpu_id=None):
+        self.scale = scale
+        self.tile_size = tile
+        self.tile_pad = tile_pad
+        self.pre_pad = pre_pad
+        self.mod_scale = None
+        self.half = half
+
+        # initialize model
+        if gpu_id:
+            self.device = torch.device(
+                f'cuda:{gpu_id}' if torch.cuda.is_available() else 'cpu') if device is None else device
+        else:
+            self.device = torch.device('cuda' if torch.cuda.is_available() else 'cpu') if device is None else device
+        # if the model_path starts with https, it will first download models to the folder: realesrgan/weights
+        if model_path.startswith('https://'):
+            model_path = load_file_from_url(
+                url=model_path, model_dir=os.path.join(ROOT_DIR, 'realesrgan/weights'), progress=True, file_name=None)
+        loadnet = torch.load(model_path, map_location=torch.device('cpu'))
+        # prefer to use params_ema
+        if 'params_ema' in loadnet:
+            keyname = 'params_ema'
+        else:
+            keyname = 'params'
+        model.load_state_dict(loadnet[keyname], strict=True)
+        model.eval()
+        self.model = model.to(self.device)
+        if self.half:
+            self.model = self.model.half()
+
+    def pre_process(self, img):
+        """Pre-process, such as pre-pad and mod pad, so that the images can be divisible
+        """
+        img = torch.from_numpy(np.transpose(img, (2, 0, 1))).float()
+        self.img = img.unsqueeze(0).to(self.device)
+        if self.half:
+            self.img = self.img.half()
+
+        # pre_pad
+        if self.pre_pad != 0:
+            self.img = F.pad(self.img, (0, self.pre_pad, 0, self.pre_pad), 'reflect')
+        # mod pad for divisible borders
+        if self.scale == 2:
+            self.mod_scale = 2
+        elif self.scale == 1:
+            self.mod_scale = 4
+        if self.mod_scale is not None:
+            self.mod_pad_h, self.mod_pad_w = 0, 0
+            _, _, h, w = self.img.size()
+            if (h % self.mod_scale != 0):
+                self.mod_pad_h = (self.mod_scale - h % self.mod_scale)
+            if (w % self.mod_scale != 0):
+                self.mod_pad_w = (self.mod_scale - w % self.mod_scale)
+            self.img = F.pad(self.img, (0, self.mod_pad_w, 0, self.mod_pad_h), 'reflect')
+
+    def process(self):
+        # model inference
+        self.output = self.model(self.img)
+
+    def tile_process(self):
+        """It will first crop input images to tiles, and then process each tile.
+        Finally, all the processed tiles are merged into one images.
+
+        Modified from: https://github.com/ata4/esrgan-launcher
+        """
+        batch, channel, height, width = self.img.shape
+        output_height = height * self.scale
+        output_width = width * self.scale
+        output_shape = (batch, channel, output_height, output_width)
+
+        # start with black image
+        self.output = self.img.new_zeros(output_shape)
+        tiles_x = math.ceil(width / self.tile_size)
+        tiles_y = math.ceil(height / self.tile_size)
+
+        # loop over all tiles
+        for y in range(tiles_y):
+            for x in range(tiles_x):
+                # extract tile from input image
+                ofs_x = x * self.tile_size
+                ofs_y = y * self.tile_size
+                # input tile area on total image
+                input_start_x = ofs_x
+                input_end_x = min(ofs_x + self.tile_size, width)
+                input_start_y = ofs_y
+                input_end_y = min(ofs_y + self.tile_size, height)
+
+                # input tile area on total image with padding
+                input_start_x_pad = max(input_start_x - self.tile_pad, 0)
+                input_end_x_pad = min(input_end_x + self.tile_pad, width)
+                input_start_y_pad = max(input_start_y - self.tile_pad, 0)
+                input_end_y_pad = min(input_end_y + self.tile_pad, height)
+
+                # input tile dimensions
+                input_tile_width = input_end_x - input_start_x
+                input_tile_height = input_end_y - input_start_y
+                tile_idx = y * tiles_x + x + 1
+                input_tile = self.img[:, :, input_start_y_pad:input_end_y_pad, input_start_x_pad:input_end_x_pad]
+
+                # upscale tile
+                try:
+                    with torch.no_grad():
+                        output_tile = self.model(input_tile)
+                except RuntimeError as error:
+                    print('Error', error)
+                print(f'\tTile {tile_idx}/{tiles_x * tiles_y}')
+
+                # output tile area on total image
+                output_start_x = input_start_x * self.scale
+                output_end_x = input_end_x * self.scale
+                output_start_y = input_start_y * self.scale
+                output_end_y = input_end_y * self.scale
+
+                # output tile area without padding
+                output_start_x_tile = (input_start_x - input_start_x_pad) * self.scale
+                output_end_x_tile = output_start_x_tile + input_tile_width * self.scale
+                output_start_y_tile = (input_start_y - input_start_y_pad) * self.scale
+                output_end_y_tile = output_start_y_tile + input_tile_height * self.scale
+
+                # put tile into output image
+                self.output[:, :, output_start_y:output_end_y,
+                            output_start_x:output_end_x] = output_tile[:, :, output_start_y_tile:output_end_y_tile,
+                                                                       output_start_x_tile:output_end_x_tile]
+
+    def post_process(self):
+        # remove extra pad
+        if self.mod_scale is not None:
+            _, _, h, w = self.output.size()
+            self.output = self.output[:, :, 0:h - self.mod_pad_h * self.scale, 0:w - self.mod_pad_w * self.scale]
+        # remove prepad
+        if self.pre_pad != 0:
+            _, _, h, w = self.output.size()
+            self.output = self.output[:, :, 0:h - self.pre_pad * self.scale, 0:w - self.pre_pad * self.scale]
+        return self.output
+
+    @torch.no_grad()
+    def enhance(self, img, outscale=None, alpha_upsampler='realesrgan'):
+        h_input, w_input = img.shape[0:2]
+        # img: numpy
+        img = img.astype(np.float32)
+        if np.max(img) > 256:  # 16-bit image
+            max_range = 65535
+            print('\tInput is a 16-bit image')
+        else:
+            max_range = 255
+        img = img / max_range
+        if len(img.shape) == 2:  # gray image
+            img_mode = 'L'
+            img = cv2.cvtColor(img, cv2.COLOR_GRAY2RGB)
+        elif img.shape[2] == 4:  # RGBA image with alpha channel
+            img_mode = 'RGBA'
+            alpha = img[:, :, 3]
+            img = img[:, :, 0:3]
+            img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
+            if alpha_upsampler == 'realesrgan':
+                alpha = cv2.cvtColor(alpha, cv2.COLOR_GRAY2RGB)
+        else:
+            img_mode = 'RGB'
+            img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
+
+        # ------------------- process image (without the alpha channel) ------------------- #
+        self.pre_process(img)
+        if self.tile_size > 0:
+            self.tile_process()
+        else:
+            self.process()
+        output_img = self.post_process()
+        output_img = output_img.data.squeeze().float().cpu().clamp_(0, 1).numpy()
+        output_img = np.transpose(output_img[[2, 1, 0], :, :], (1, 2, 0))
+        if img_mode == 'L':
+            output_img = cv2.cvtColor(output_img, cv2.COLOR_BGR2GRAY)
+
+        # ------------------- process the alpha channel if necessary ------------------- #
+        if img_mode == 'RGBA':
+            if alpha_upsampler == 'realesrgan':
+                self.pre_process(alpha)
+                if self.tile_size > 0:
+                    self.tile_process()
+                else:
+                    self.process()
+                output_alpha = self.post_process()
+                output_alpha = output_alpha.data.squeeze().float().cpu().clamp_(0, 1).numpy()
+                output_alpha = np.transpose(output_alpha[[2, 1, 0], :, :], (1, 2, 0))
+                output_alpha = cv2.cvtColor(output_alpha, cv2.COLOR_BGR2GRAY)
+            else:  # use the cv2 resize for alpha channel
+                h, w = alpha.shape[0:2]
+                output_alpha = cv2.resize(alpha, (w * self.scale, h * self.scale), interpolation=cv2.INTER_LINEAR)
+
+            # merge the alpha channel
+            output_img = cv2.cvtColor(output_img, cv2.COLOR_BGR2BGRA)
+            output_img[:, :, 3] = output_alpha
+
+        # ------------------------------ return ------------------------------ #
+        if max_range == 65535:  # 16-bit image
+            output = (output_img * 65535.0).round().astype(np.uint16)
+        else:
+            output = (output_img * 255.0).round().astype(np.uint8)
+
+        if outscale is not None and outscale != float(self.scale):
+            output = cv2.resize(
+                output, (
+                    int(w_input * outscale),
+                    int(h_input * outscale),
+                ), interpolation=cv2.INTER_LANCZOS4)
+
+        return output, img_mode
+
+
+class PrefetchReader(threading.Thread):
+    """Prefetch images.
+
+    Args:
+        img_list (list[str]): A image list of image paths to be read.
+        num_prefetch_queue (int): Number of prefetch queue.
+    """
+
+    def __init__(self, img_list, num_prefetch_queue):
+        super().__init__()
+        self.que = queue.Queue(num_prefetch_queue)
+        self.img_list = img_list
+
+    def run(self):
+        for img_path in self.img_list:
+            img = cv2.imread(img_path, cv2.IMREAD_UNCHANGED)
+            self.que.put(img)
+
+        self.que.put(None)
+
+    def __next__(self):
+        next_item = self.que.get()
+        if next_item is None:
+            raise StopIteration
+        return next_item
+
+    def __iter__(self):
+        return self
+
+
+class IOConsumer(threading.Thread):
+
+    def __init__(self, opt, que, qid):
+        super().__init__()
+        self._queue = que
+        self.qid = qid
+        self.opt = opt
+
+    def run(self):
+        while True:
+            msg = self._queue.get()
+            if isinstance(msg, str) and msg == 'quit':
+                break
+
+            output = msg['output']
+            save_path = msg['save_path']
+            cv2.imwrite(save_path, output)
+        print(f'IO worker {self.qid} is done.')
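The index arithmetic in `tile_process` is easier to follow without the tensors. The sketch below reproduces only that bookkeeping in plain Python; `tile_bounds` and the dictionary keys are hypothetical names introduced for illustration, and boxes are written as `(x0, y0, x1, y1)` with exclusive upper bounds. It is a reading aid under those assumptions, not a replacement for the method.

```python
import math


def tile_bounds(width, height, tile_size, tile_pad, scale):
    """Yield the padded input box, output box, and crop box for each tile."""
    tiles_x = math.ceil(width / tile_size)
    tiles_y = math.ceil(height / tile_size)
    for y in range(tiles_y):
        for x in range(tiles_x):
            # Unpadded tile area on the input image, clipped to the image.
            x0, y0 = x * tile_size, y * tile_size
            x1 = min(x0 + tile_size, width)
            y1 = min(y0 + tile_size, height)
            # Same area grown by tile_pad on every side, clipped again.
            px0, py0 = max(x0 - tile_pad, 0), max(y0 - tile_pad, 0)
            px1, py1 = min(x1 + tile_pad, width), min(y1 + tile_pad, height)
            # Where the unpadded area starts inside the upscaled padded tile.
            cx0, cy0 = (x0 - px0) * scale, (y0 - py0) * scale
            yield {
                'padded_input': (px0, py0, px1, py1),
                'output': (x0 * scale, y0 * scale, x1 * scale, y1 * scale),
                'crop': (cx0, cy0,
                         cx0 + (x1 - x0) * scale,
                         cy0 + (y1 - y0) * scale),
            }


if __name__ == '__main__':
    for box in tile_bounds(width=5, height=3, tile_size=2, tile_pad=1, scale=4):
        print(box)
```

Each tile is fed to the network with its padding, and only the `crop` region of the result is copied into the `output` box, so the padded margins give the network context at tile borders without appearing in the merged image.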

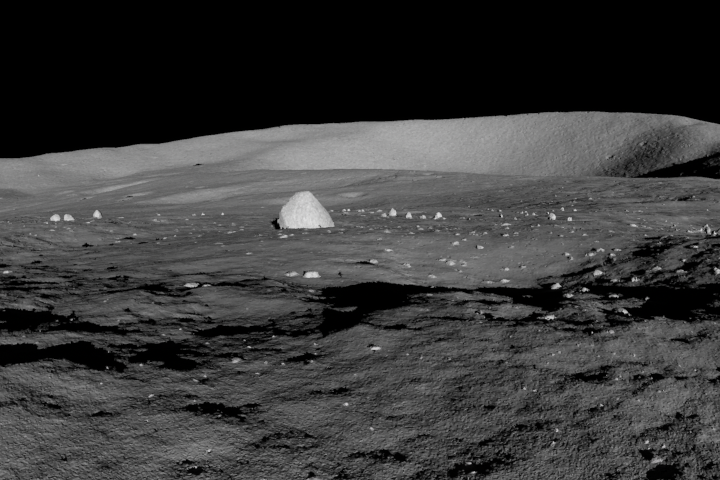

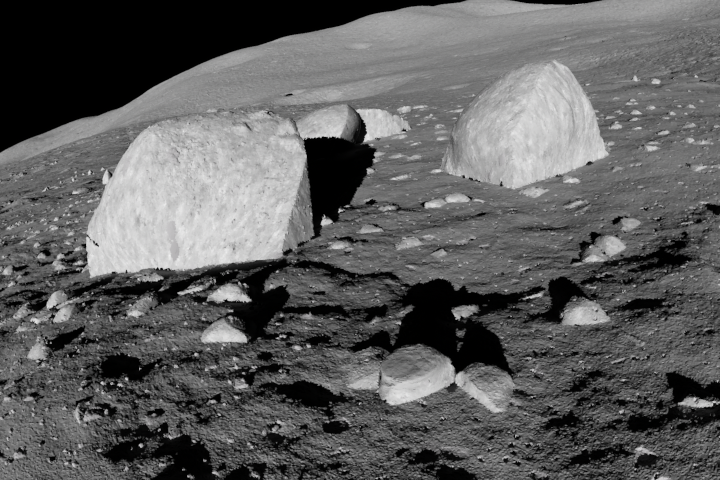

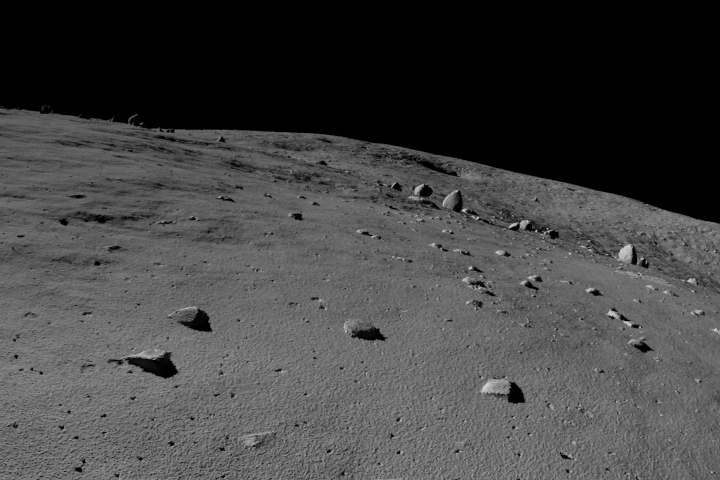

render0001.png

ADDED

render0001_DC.png

ADDED

render1546.png

ADDED

render1546_DC.png

ADDED

render1682.png

ADDED

render1682_DC.png

ADDED

requirements.txt

ADDED

@@ -0,0 +1,9 @@
+basicsr>=1.3.3.11
+facexlib>=0.2.0.3
+gfpgan>=0.2.1
+numpy
+opencv-python
+Pillow
+torch>=1.7
+torchvision
+tqdm