Upload 18 files

- 01.jpeg +0 -0
- 02.jpeg +0 -0
- 03.jpeg +0 -0
- 04.jpeg +0 -0
- 05.jpeg +0 -0
- 06.jpeg +0 -0
- 07.jpeg +0 -0
- 08.jpeg +0 -0
- 09.jpeg +0 -0
- 10.jpeg +0 -0
- 11.jpeg +0 -0
- 12.jpeg +0 -0
- README.txt +13 -0
- Wild Horses 2.jpeg +0 -0
- app.py +127 -0
- model.pth +3 -0
- model2.pth +3 -0
- requirements.txt +2 -0

01.jpeg

ADDED

02.jpeg

ADDED

03.jpeg

ADDED

04.jpeg

ADDED

05.jpeg

ADDED

06.jpeg

ADDED

07.jpeg

ADDED

08.jpeg

ADDED

09.jpeg

ADDED

10.jpeg

ADDED

11.jpeg

ADDED

12.jpeg

ADDED

README.txt

ADDED

@@ -0,0 +1,13 @@
+---
+title: ✏️Image to Line Drawing🖼️Gradio
+emoji: 🖼️❤️✏️
+colorFrom: green
+colorTo: indigo
+sdk: gradio
+sdk_version: 2.9.3
+app_file: app.py
+pinned: false
+license: mit
+---
+
+Check out the configuration reference at https://huggingface.co/docs/hub/spaces#reference
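
The README block above is a set of `key: value` settings enclosed between two `---` lines, followed by free text. As a rough illustration of how such a block can be separated from the body, here is a minimal sketch. The helper name `parse_front_matter` is hypothetical, and the parser is a deliberate simplification: it treats every value as a plain string and is not a substitute for a real YAML parser.

```python
def parse_front_matter(text):
    """Split a README into (config dict, body) using '---' delimiter lines.

    Simplified on purpose: every value stays a string, nested YAML is not handled.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text  # no opening delimiter, so there is no config block
    config = {}
    for i, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            # Closing delimiter found: everything after it is the body.
            body = "\n".join(lines[i + 1:]).strip()
            return config, body
        key, sep, value = line.partition(":")
        if sep:
            config[key.strip()] = value.strip()
    return {}, text  # no closing delimiter: treat the whole file as plain text


# Sample input modelled on the README in this commit (title shortened for the example).
readme = """---
title: Image to Line Drawing
sdk: gradio
sdk_version: 2.9.3
app_file: app.py
pinned: false
license: mit
---

Check out the configuration reference at https://huggingface.co/docs/hub/spaces#reference
"""

config, body = parse_front_matter(readme)
print(config["sdk"], config["sdk_version"], config["app_file"])
```

Note that `pinned` comes back as the string `"false"` here, not a boolean, which is one of the simplifications mentioned above.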

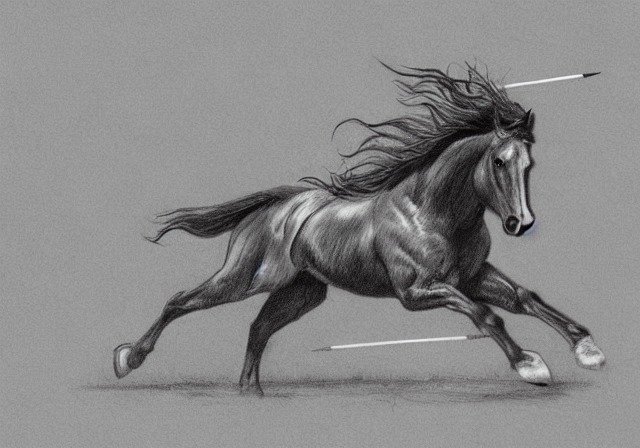

Wild Horses 2.jpeg

ADDED

app.py

ADDED

@@ -0,0 +1,127 @@
+import numpy as np
+import torch
+import torch.nn as nn
+import gradio as gr
+from PIL import Image
+import torchvision.transforms as transforms
+
+norm_layer = nn.InstanceNorm2d
+
+class ResidualBlock(nn.Module):
+    def __init__(self, in_features):
+        super(ResidualBlock, self).__init__()
+
+        conv_block = [ nn.ReflectionPad2d(1),
+                       nn.Conv2d(in_features, in_features, 3),
+                       norm_layer(in_features),
+                       nn.ReLU(inplace=True),
+                       nn.ReflectionPad2d(1),
+                       nn.Conv2d(in_features, in_features, 3),
+                       norm_layer(in_features)
+                     ]
+
+        self.conv_block = nn.Sequential(*conv_block)
+
+    def forward(self, x):
+        return x + self.conv_block(x)
+
+
+class Generator(nn.Module):
+    def __init__(self, input_nc, output_nc, n_residual_blocks=9, sigmoid=True):
+        super(Generator, self).__init__()
+
+        # Initial convolution block
+        model0 = [ nn.ReflectionPad2d(3),
+                   nn.Conv2d(input_nc, 64, 7),
+                   norm_layer(64),
+                   nn.ReLU(inplace=True) ]
+        self.model0 = nn.Sequential(*model0)
+
+        # Downsampling
+        model1 = []
+        in_features = 64
+        out_features = in_features*2
+        for _ in range(2):
+            model1 += [ nn.Conv2d(in_features, out_features, 3, stride=2, padding=1),
+                        norm_layer(out_features),
+                        nn.ReLU(inplace=True) ]
+            in_features = out_features
+            out_features = in_features*2
+        self.model1 = nn.Sequential(*model1)
+
+        model2 = []
+        # Residual blocks
+        for _ in range(n_residual_blocks):
+            model2 += [ResidualBlock(in_features)]
+        self.model2 = nn.Sequential(*model2)
+
+        # Upsampling
+        model3 = []
+        out_features = in_features//2
+        for _ in range(2):
+            model3 += [ nn.ConvTranspose2d(in_features, out_features, 3, stride=2, padding=1, output_padding=1),
+                        norm_layer(out_features),
+                        nn.ReLU(inplace=True) ]
+            in_features = out_features
+            out_features = in_features//2
+        self.model3 = nn.Sequential(*model3)
+
+        # Output layer
+        model4 = [ nn.ReflectionPad2d(3),
+                   nn.Conv2d(64, output_nc, 7)]
+        if sigmoid:
+            model4 += [nn.Sigmoid()]
+
+        self.model4 = nn.Sequential(*model4)
+
+    def forward(self, x, cond=None):
+        out = self.model0(x)
+        out = self.model1(out)
+        out = self.model2(out)
+        out = self.model3(out)
+        out = self.model4(out)
+
+        return out
+
+model1 = Generator(3, 1, 3)
+model1.load_state_dict(torch.load('model.pth', map_location=torch.device('cpu')))
+model1.eval()
+
+model2 = Generator(3, 1, 3)
+model2.load_state_dict(torch.load('model2.pth', map_location=torch.device('cpu')))
+model2.eval()
+
+def predict(input_img, ver):
+    input_img = Image.open(input_img)
+    transform = transforms.Compose([transforms.Resize(256, Image.BICUBIC), transforms.ToTensor()])
+    input_img = transform(input_img)
+    input_img = torch.unsqueeze(input_img, 0)
+
+    drawing = 0
+    with torch.no_grad():
+        if ver == 'Simple Lines':
+            drawing = model2(input_img)[0].detach()
+        else:
+            drawing = model1(input_img)[0].detach()
+
+    drawing = transforms.ToPILImage()(drawing)
+    return drawing
+
+title="Image to Line Drawings - Complex and Simple Portraits and Landscapes"
+examples=[
+['01.jpeg', 'Simple Lines'], ['02.jpeg', 'Simple Lines'], ['03.jpeg', 'Simple Lines'],
+['07.jpeg', 'Complex Lines'], ['08.jpeg', 'Complex Lines'], ['09.jpeg', 'Complex Lines'],
+['10.jpeg', 'Simple Lines'], ['11.jpeg', 'Simple Lines'], ['12.jpeg', 'Simple Lines'],
+['01.jpeg', 'Complex Lines'], ['02.jpeg', 'Complex Lines'], ['03.jpeg', 'Complex Lines'],
+['04.jpeg', 'Simple Lines'], ['05.jpeg', 'Simple Lines'], ['06.jpeg', 'Simple Lines'],
+['07.jpeg', 'Simple Lines'], ['08.jpeg', 'Simple Lines'], ['09.jpeg', 'Simple Lines'],
+['04.jpeg', 'Complex Lines'], ['05.jpeg', 'Complex Lines'], ['06.jpeg', 'Complex Lines'],
+['10.jpeg', 'Complex Lines'], ['11.jpeg', 'Complex Lines'], ['12.jpeg', 'Complex Lines'],
+['Wild Horses 2.jpeg', 'Complex Lines']
+]
+
+iface = gr.Interface(predict, [gr.inputs.Image(type='filepath'),
+    gr.inputs.Radio(['Complex Lines','Simple Lines'], type="value", default='Simple Lines', label='version')],
+    gr.outputs.Image(type="pil"), title=title,examples=examples)
+
+iface.launch()
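
The `Generator` in app.py halves the spatial size twice with stride-2 convolutions and doubles it twice with transposed convolutions, so the output drawing only matches the input size when each side is a multiple of 4. The sketch below works through that arithmetic in plain Python, using the standard output-size formulas for convolution and transposed convolution with dilation 1. The helper names (`conv_out`, `conv_transpose_out`, `generator_out_size`) are hypothetical and exist only for this illustration; the layer parameters are copied from app.py.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length of a convolution along one axis (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def conv_transpose_out(size, kernel, stride=1, padding=0, output_padding=0):
    """Output length of a transposed convolution along one axis (dilation 1)."""
    return (size - 1) * stride - 2 * padding + kernel + output_padding


def generator_out_size(size):
    """Trace one spatial dimension through the Generator layers in app.py."""
    # Initial block: ReflectionPad2d(3) adds 3 on each side, then a 7x7 conv.
    size = conv_out(size + 2 * 3, kernel=7)
    # Downsampling: two 3x3 convs with stride 2 and padding 1.
    for _ in range(2):
        size = conv_out(size, kernel=3, stride=2, padding=1)
    # Residual blocks: ReflectionPad2d(1) + 3x3 conv keep the size unchanged.
    # Upsampling: two 3x3 transposed convs, stride 2, padding 1, output_padding 1.
    for _ in range(2):
        size = conv_transpose_out(size, kernel=3, stride=2, padding=1, output_padding=1)
    # Output layer: ReflectionPad2d(3) then a 7x7 conv.
    return conv_out(size + 2 * 3, kernel=7)


print(generator_out_size(256))  # 256: a multiple of 4 is preserved
print(generator_out_size(341))  # 344: rounded up to the next multiple of 4
```

Since `transforms.Resize(256, ...)` only fixes the shorter side at 256, the longer side of a non-square photo can be any value, and the returned drawing may be up to 3 pixels larger than the resized input along that axis.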

model.pth

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:c686ced2a666b4850b4bb6ccf0748031c3eda9f822de73a34b8979970d90f0c6
+size 17173511

model2.pth

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:30a534781061f34e83bb9406b4335da4ff2616c95d22a585c1245aa8363e74e0
+size 17173511
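
The two `.pth` entries above are Git LFS pointer files, not the weights themselves: each records a spec version, a SHA-256 object id, and the size in bytes of the real file. As a rough sketch of how a downloaded checkpoint could be checked against its pointer, the helpers below parse the three fields and compare them with a byte string. The function names `parse_lfs_pointer` and `matches_pointer` are hypothetical, and the second half uses a small stand-in payload because the actual weights are not part of this page.

```python
import hashlib


def parse_lfs_pointer(text):
    """Parse the 'key value' lines of a Git LFS pointer file into a dict."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(data, pointer):
    """Return True if the bytes have the size and SHA-256 digest the pointer records."""
    if pointer["algo"] != "sha256":
        raise ValueError("this sketch only handles sha256 object ids")
    return (len(data) == pointer["size"]
            and hashlib.sha256(data).hexdigest() == pointer["digest"])


# Pointer text for model.pth, copied from the diff above.
pointer = parse_lfs_pointer("""version https://git-lfs.github.com/spec/v1
oid sha256:c686ced2a666b4850b4bb6ccf0748031c3eda9f822de73a34b8979970d90f0c6
size 17173511
""")
print(pointer["algo"], pointer["size"])

# Stand-in payload: build a matching pointer for it, then verify the check works.
payload = b"stand-in bytes, not the real checkpoint"
stand_in = {
    "version": pointer["version"],
    "algo": "sha256",
    "digest": hashlib.sha256(payload).hexdigest(),
    "size": len(payload),
}
print(matches_pointer(payload, stand_in))         # True
print(matches_pointer(payload + b"x", stand_in))  # False
```

Both pointers report the same size, 17173511 bytes, which is consistent with the two checkpoints sharing the `Generator(3, 1, 3)` architecture; the differing object ids show the weights themselves differ.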

requirements.txt

ADDED

@@ -0,0 +1,2 @@
+torch
+torchvision
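
With every file in the commit listed, one inexpensive sanity check is to confirm that each row of the `examples` list in app.py points at a file that was actually uploaded, since a path that does not match an uploaded name exactly cannot be loaded when the example is used. The sketch below is one way to do that. The helper name `missing_example_files` and the sample rows are illustrative assumptions, and the mismatched path in `bad_examples` is made up for the demonstration.

```python
def missing_example_files(examples, uploaded_files):
    """Return the example image paths that are not among the uploaded file names."""
    available = set(uploaded_files)
    return [row[0] for row in examples if row[0] not in available]


# Image files from the commit's file list: 01.jpeg ... 12.jpeg plus one named photo.
uploaded = [f"{i:02d}.jpeg" for i in range(1, 13)] + ["Wild Horses 2.jpeg"]

good_examples = [
    ["01.jpeg", "Simple Lines"],
    ["12.jpeg", "Complex Lines"],
    ["Wild Horses 2.jpeg", "Complex Lines"],
]
# Hypothetical row whose path does not match any uploaded file name.
bad_examples = good_examples + [["wild-horses.jpeg", "Complex Lines"]]

print(missing_example_files(good_examples, uploaded))  # []
print(missing_example_files(bad_examples, uploaded))   # ['wild-horses.jpeg']
```

In a real Space the same idea could be applied with `os.path.exists` on each path before the interface is built, so a mismatch is reported as a clear message at startup.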