Upload 8 files

- README.md +22 -35

- config.json +7 -3

- generation_config.json +2 -2

- image.png +0 -0

- special_tokens_map.json +30 -0

- tokenizer.model +3 -0

- tokenizer_config.json +32 -24

- web_streamlit_for_wukong.py +305 -0

README.md

CHANGED

|

@@ -1,38 +1,25 @@

|

|

|

|

|

| 1 |

---

|

| 2 |

license: apache-2.0

|

| 3 |

---

|

| 4 |

-

|

| 5 |

-

|

| 6 |

-

-

|

| 7 |

-

|

| 8 |

-

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

|

| 12 |

-

|

| 13 |

-

|

| 14 |

-

|

| 15 |

-

|

| 16 |

-

|

| 17 |

-

|

| 18 |

-

|

| 19 |

-

|

| 20 |

-

|

| 21 |

-

|

| 22 |

-

|

| 23 |

-

|

| 24 |

-

|

| 25 |

-

|

| 26 |

-

## Caveats and Recommendations

|

| 27 |

-

- **Known limitations:** [List known limitations of the model]

|

| 28 |

-

- **Best practices:** [Suggestions on best practices for implementation of the model]

|

| 29 |

-

|

| 30 |

-

## Change Log

|

| 31 |

-

- **[Date]:** Model version 1.0 released.

|

| 32 |

-

|

| 33 |

-

## Contact Information

|

| 34 |

-

- **Maintainer(s):** [Contact details for the person or team responsible for maintaining the model]

|

| 35 |

-

- **Issues:** [Information on where to report issues or bugs]

|

| 36 |

-

|

| 37 |

-

## License

|

| 38 |

-

- **Model license:** [Details of the model's usage license, if applicable]

|

|

|

|

| 1 |

+

|

| 2 |

---

|

| 3 |

license: apache-2.0

|

| 4 |

---

|

| 5 |

+

|

| 6 |

+

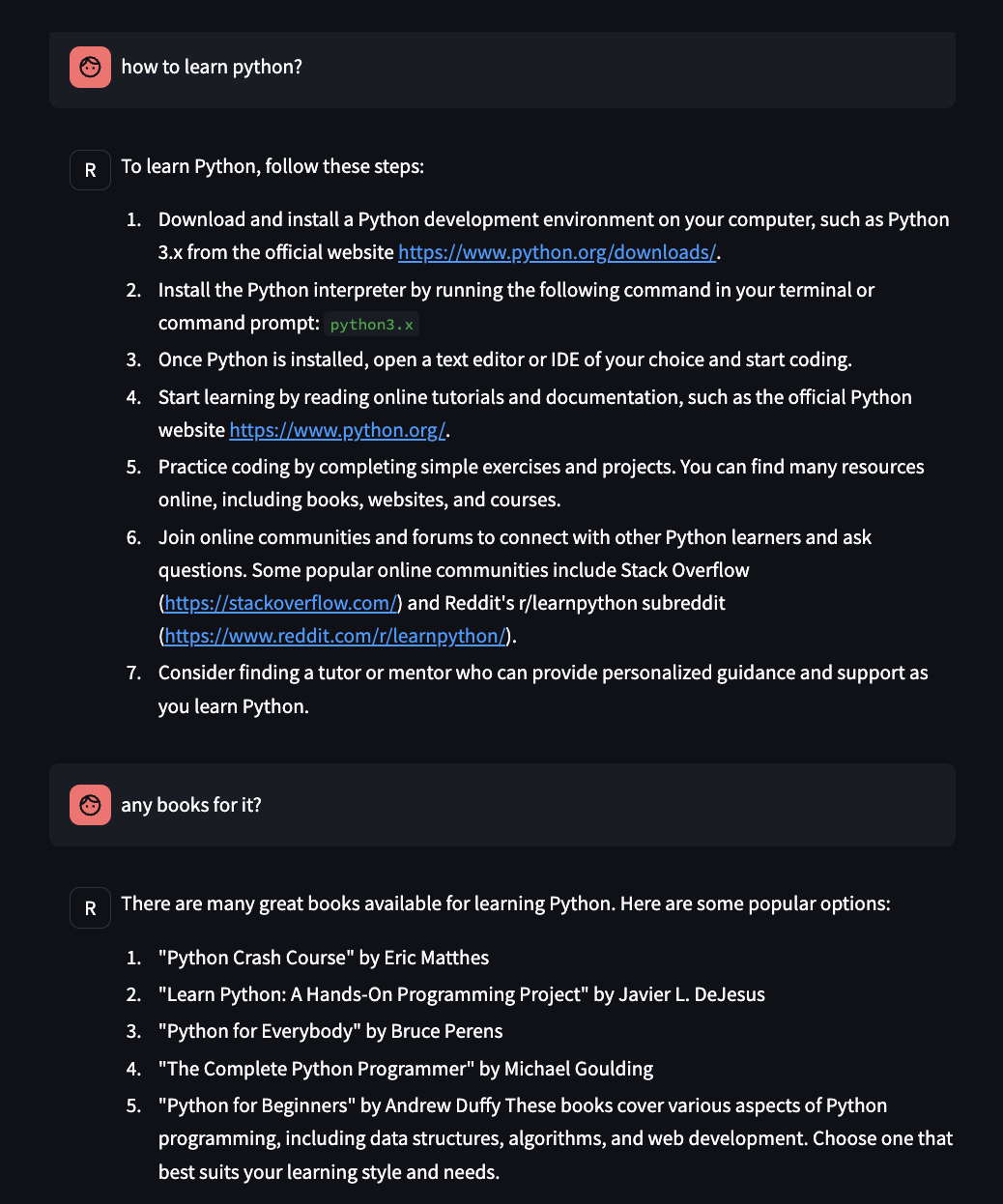

### wukong-chat

|

| 7 |

+

csg-wukong 英文对话版

|

| 8 |

+

|

| 9 |

+

### 下载

|

| 10 |

+

```

|

| 11 |

+

git lfs install

|

| 12 |

+

git lfs clone https://opencsg.com/models/baicai/CSG-Wukong-1B-English-chat.git

|

| 13 |

+

```

|

| 14 |

+

|

| 15 |

+

### 网页推理

|

| 16 |

+

执行以下命令安装依赖包:

|

| 17 |

+

```

|

| 18 |

+

pip install -U streamlit transformers peft

|

| 19 |

+

```

|

| 20 |

+

执行以下命令启动网页推理:

|

| 21 |

+

```

|

| 22 |

+

streamlit ./CSG-Wukong-1B-English-chat/run web_streamlit_for_wukong.py ./CSG-Wukong-1B-English-chat/ --theme.base="dark"

|

| 23 |

+

```

|

| 24 |

+

|

| 25 |

+

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|
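The launch command in the README passes the model directory as the first positional argument after the script path. The script added in this commit reads that value from `sys.argv[1]` and an optional adapter path from `sys.argv[2]`. A minimal sketch of that argument handling, assuming a hypothetical helper name `parse_cli` (the script itself does this inline, without a usage message):

```python
from typing import List, Optional, Tuple


def parse_cli(argv: List[str]) -> Tuple[str, Optional[str]]:
    """Return (model_path, adapter_path) from an argv-style list.

    argv[0] is the script path, argv[1] the model directory and
    argv[2], if present, a PEFT adapter directory.
    """
    if len(argv) < 2:
        # The usage message is an addition of this sketch.
        raise SystemExit('usage: script.py MODEL_DIR [ADAPTER_DIR]')
    model_path = argv[1]
    adapter_path = argv[2] if len(argv) >= 3 else None
    return model_path, adapter_path


model_dir, adapter_dir = parse_cli(
    ['web_streamlit_for_wukong.py', './CSG-Wukong-1B-English-chat/'])
```

With only the model directory given, `adapter_dir` is `None` and no adapter is loaded.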

config.json

CHANGED

@@ -1,14 +1,17 @@
 {
+  "_name_or_path": "/root/lxl/PP_llama3/csg-wukong-1B",
   "architectures": [
     "LlamaForCausalLM"
   ],
+  "attention_bias": false,
+  "attention_dropout": 0.0,
   "bos_token_id": 1,
   "eos_token_id": 2,
   "hidden_act": "silu",
   "hidden_size": 2048,
   "initializer_range": 0.02,
   "intermediate_size": 5632,
-  "max_position_embeddings":
+  "max_position_embeddings": 32768,
   "model_type": "llama",
   "num_attention_heads": 32,
   "num_hidden_layers": 22,
@@ -16,9 +19,10 @@
   "pretraining_tp": 1,
   "rms_norm_eps": 1e-05,
   "rope_scaling": null,
+  "rope_theta": 4000000,
   "tie_word_embeddings": false,
-  "torch_dtype": "
-  "transformers_version": "4.
+  "torch_dtype": "float16",
+  "transformers_version": "4.40.1",
   "use_cache": true,
   "vocab_size": 32000
 }
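The config now sets `rope_theta` to 4000000 alongside `max_position_embeddings` of 32768. As a rough illustration (not code from this commit), the sketch below computes the standard rotary-embedding inverse frequencies, `theta ** (-2i / head_dim)`, for that base. The head dimension of 64 follows from `hidden_size / num_attention_heads` in the config. The helper name `rope_inv_freq` and the comparison base of 10000 are assumptions made for illustration.

```python
import math
from typing import List


def rope_inv_freq(head_dim: int, theta: float) -> List[float]:
    """Standard RoPE inverse frequencies, one per pair of dimensions."""
    return [theta ** (-(2 * i) / head_dim) for i in range(head_dim // 2)]


HIDDEN_SIZE = 2048          # from config.json
NUM_ATTENTION_HEADS = 32    # from config.json
HEAD_DIM = HIDDEN_SIZE // NUM_ATTENTION_HEADS

new_freqs = rope_inv_freq(HEAD_DIM, 4_000_000.0)  # rope_theta in this commit
ref_freqs = rope_inv_freq(HEAD_DIM, 10_000.0)     # assumed comparison base

# Wavelength (in token positions) of the slowest-rotating dimension pair.
longest_new = 2 * math.pi / new_freqs[-1]
longest_ref = 2 * math.pi / ref_freqs[-1]
print(f'head_dim={HEAD_DIM}, longest wavelength: '
      f'{longest_new:.0f} vs {longest_ref:.0f} positions')
```

A larger base stretches the slowest wavelengths, which is the usual motivation for raising `rope_theta` when extending the context window.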

generation_config.json

CHANGED

@@ -1,7 +1,7 @@
 {
   "bos_token_id": 1,
   "eos_token_id": 2,
+  "max_length": 32768,
   "pad_token_id": 0,
-  "
-  "transformers_version": "4.37.2"
+  "transformers_version": "4.40.1"
 }
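This file now carries `max_length: 32768`, while the Streamlit script in the same commit also passes `max_new_tokens`. The script's `generate_interactive` lets `max_new_tokens` take precedence by setting the effective limit to the prompt length plus `max_new_tokens`. A minimal sketch of that rule, with the hypothetical function name `effective_max_length`:

```python
from typing import Optional


def effective_max_length(prompt_len: int,
                         max_length: Optional[int],
                         max_new_tokens: Optional[int]) -> Optional[int]:
    """Resolve the total-length limit used to stop generation.

    If max_new_tokens is set it wins: the limit becomes the prompt length
    plus the allowed number of new tokens. Otherwise max_length is used.
    """
    if max_new_tokens is not None:
        return prompt_len + max_new_tokens
    return max_length


# A 100-token prompt with max_new_tokens=600 and max_length=32768.
limit = effective_max_length(100, 32768, 600)
```

Here `limit` is 700, so the configured `max_length` of 32768 only applies when `max_new_tokens` is left unset.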

image.png

ADDED


special_tokens_map.json

ADDED

@@ -0,0 +1,30 @@
+{
+  "bos_token": {
+    "content": "<s>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "eos_token": {
+    "content": "</s>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "pad_token": {
+    "content": "<unk>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "unk_token": {
+    "content": "<unk>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  }
+}

tokenizer.model

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:9e556afd44213b6bd1be2b850ebbbd98f5481437a8021afaf58ee7fb1818d347
+size 499723

tokenizer_config.json

CHANGED

@@ -1,35 +1,43 @@
 {
   "add_bos_token": true,
   "add_eos_token": false,
-  "
-
-  "
-
-
-
-
+  "add_prefix_space": true,
+  "added_tokens_decoder": {
+    "0": {
+      "content": "<unk>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "1": {
+      "content": "<s>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "2": {
+      "content": "</s>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    }
   },
+  "bos_token": "<s>",
   "clean_up_tokenization_spaces": false,
-  "eos_token":
-    "__type": "AddedToken",
-    "content": "</s>",
-    "lstrip": false,
-    "normalized": false,
-    "rstrip": false,
-    "single_word": false
-  },
+  "eos_token": "</s>",
   "legacy": false,
   "model_max_length": 1000000000000000019884624838656,
-  "pad_token":
+  "pad_token": "<unk>",
   "padding_side": "right",
   "sp_model_kwargs": {},
+  "spaces_between_special_tokens": false,
   "tokenizer_class": "LlamaTokenizer",
-  "unk_token":
-
-    "content": "<unk>",
-    "lstrip": false,
-    "normalized": false,
-    "rstrip": false,
-    "single_word": false
-  }
+  "unk_token": "<unk>",
+  "use_default_system_prompt": false
 }
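The new tokenizer config names its special tokens as plain strings and lists their ids in `added_tokens_decoder`. As a small consistency check, the sketch below resolves each named special token to its id using only the values shown in this diff. The helper name `special_token_ids` is hypothetical, and the JSON is trimmed to the `content` fields.

```python
import json
from typing import Dict

# Trimmed copy of "added_tokens_decoder" from tokenizer_config.json.
ADDED_TOKENS_DECODER = json.loads('''
{
  "0": {"content": "<unk>"},
  "1": {"content": "<s>"},
  "2": {"content": "</s>"}
}
''')

# Special-token names as set in tokenizer_config.json.
SPECIAL_TOKENS = {
    'bos_token': '<s>',
    'eos_token': '</s>',
    'pad_token': '<unk>',
    'unk_token': '<unk>',
}


def special_token_ids(decoder: Dict[str, dict],
                      special: Dict[str, str]) -> Dict[str, int]:
    """Map each special-token name to the id of its token string."""
    content_to_id = {entry['content']: int(idx)
                     for idx, entry in decoder.items()}
    return {name: content_to_id[token] for name, token in special.items()}


ids = special_token_ids(ADDED_TOKENS_DECODER, SPECIAL_TOKENS)
```

The resolved ids agree with `bos_token_id`, `eos_token_id` and `pad_token_id` in `generation_config.json`. Padding reuses the `<unk>` token, so it has id 0.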

web_streamlit_for_wukong.py

ADDED

@@ -0,0 +1,305 @@
+import copy
+import warnings
+from dataclasses import asdict, dataclass
+from typing import Callable, List, Optional
+
+import streamlit as st
+import torch
+from torch import nn
+from transformers.generation.utils import (LogitsProcessorList,
+                                           StoppingCriteriaList)
+from transformers.utils import logging
+
+from transformers import AutoTokenizer, AutoModelForCausalLM, BitsAndBytesConfig
+from peft import PeftModel
+
+logger = logging.get_logger(__name__)
+st.set_page_config(page_title="Wukong-chat")
+
+import argparse
+
+@dataclass
+class GenerationConfig:
+    max_length: int = 8192
+    max_new_tokens: int = 600
+    top_p: float = 0.8
+    temperature: float = 0.8
+    do_sample: bool = True
+    repetition_penalty: float = 1.05
+
+@torch.inference_mode()
+def generate_interactive(
+    model,
+    tokenizer,
+    prompt,
+    generation_config: Optional[GenerationConfig] = None,
+    logits_processor: Optional[LogitsProcessorList] = None,
+    stopping_criteria: Optional[StoppingCriteriaList] = None,
+    prefix_allowed_tokens_fn: Optional[Callable[[int, torch.Tensor],
+                                                List[int]]] = None,
+    additional_eos_token_id: Optional[int] = None,
+    **kwargs,
+):
+    inputs = tokenizer([prompt], return_tensors='pt')
+    input_length = len(inputs['input_ids'][0])
+    for k, v in inputs.items():
+        inputs[k] = v.cuda()
+    input_ids = inputs['input_ids']
+    _, input_ids_seq_length = input_ids.shape[0], input_ids.shape[-1]
+    if generation_config is None:
+        generation_config = model.generation_config
+    generation_config = copy.deepcopy(generation_config)
+    model_kwargs = generation_config.update(**kwargs)
+    bos_token_id, eos_token_id = (  # noqa: F841  # pylint: disable=W0612
+        generation_config.bos_token_id,
+        generation_config.eos_token_id,
+    )
+    if isinstance(eos_token_id, int):
+        eos_token_id = [eos_token_id]
+    if additional_eos_token_id is not None:
+        eos_token_id.append(additional_eos_token_id)
+    has_default_max_length = kwargs.get(
+        'max_length') is None and generation_config.max_length is not None
+    if has_default_max_length and generation_config.max_new_tokens is None:
+        warnings.warn(
+            f"Using 'max_length''s default ({repr(generation_config.max_length)}) \
+                to control the generation length. "
+            'This behaviour is deprecated and will be removed from the \
+                config in v5 of Transformers -- we'
+            ' recommend using `max_new_tokens` to control the maximum \
+                length of the generation.',
+            UserWarning,
+        )
+    elif generation_config.max_new_tokens is not None:
+        generation_config.max_length = generation_config.max_new_tokens + \
+            input_ids_seq_length
+        if not has_default_max_length:
+            warnings.warn(  # a logger call would treat UserWarning as a format argument
+                f"Both 'max_new_tokens' (={generation_config.max_new_tokens}) "
+                f"and 'max_length'(={generation_config.max_length}) seem to "
+                "have been set. 'max_new_tokens' will take precedence. "
+                'Please refer to the documentation for more information. '
+                '(https://huggingface.co/docs/transformers/main/'
+                'en/main_classes/text_generation)',
+                UserWarning,
+            )
+
+    if input_ids_seq_length >= generation_config.max_length:
+        input_ids_string = 'input_ids'
+        logger.warning(
+            f"Input length of {input_ids_string} is {input_ids_seq_length}, "
+            f"but 'max_length' is set to {generation_config.max_length}. "
+            'This can lead to unexpected behavior. You should consider'
+            " increasing 'max_new_tokens'.")
+
+    # 2. Set generation parameters if not already defined
+    logits_processor = logits_processor if logits_processor is not None \
+        else LogitsProcessorList()
+    stopping_criteria = stopping_criteria if stopping_criteria is not None \
+        else StoppingCriteriaList()
+
+    logits_processor = model._get_logits_processor(
+        generation_config=generation_config,
+        input_ids_seq_length=input_ids_seq_length,
+        encoder_input_ids=input_ids,
+        prefix_allowed_tokens_fn=prefix_allowed_tokens_fn,
+        logits_processor=logits_processor,
+    )
+
+    stopping_criteria = model._get_stopping_criteria(
+        generation_config=generation_config,
+        stopping_criteria=stopping_criteria)
+    logits_warper = model._get_logits_warper(generation_config)
+
+    unfinished_sequences = input_ids.new(input_ids.shape[0]).fill_(1)
+    scores = None
+    while True:
+        model_inputs = model.prepare_inputs_for_generation(
+            input_ids, **model_kwargs)
+        # forward pass to get next token
+        outputs = model(
+            **model_inputs,
+            return_dict=True,
+            output_attentions=False,
+            output_hidden_states=False,
+        )
+
+        next_token_logits = outputs.logits[:, -1, :]
+
+        # pre-process distribution
+        next_token_scores = logits_processor(input_ids, next_token_logits)
+        next_token_scores = logits_warper(input_ids, next_token_scores)
+
+        # sample
+        probs = nn.functional.softmax(next_token_scores, dim=-1)
+        if generation_config.do_sample:
+            next_tokens = torch.multinomial(probs, num_samples=1).squeeze(1)
+        else:
+            next_tokens = torch.argmax(probs, dim=-1)
+
+        # update generated ids, model inputs, and length for next step
+        input_ids = torch.cat([input_ids, next_tokens[:, None]], dim=-1)
+        model_kwargs = model._update_model_kwargs_for_generation(
+            outputs, model_kwargs, is_encoder_decoder=False)
+        unfinished_sequences = unfinished_sequences.mul(
+            (min(next_tokens != i for i in eos_token_id)).long())
+
+        output_token_ids = input_ids[0].cpu().tolist()
+        output_token_ids = output_token_ids[input_length:]
+        for each_eos_token_id in eos_token_id:
+            if output_token_ids[-1] == each_eos_token_id:
+                output_token_ids = output_token_ids[:-1]
+        response = tokenizer.decode(output_token_ids, skip_special_tokens=True)
+
+        yield response
+        # stop when each sentence is finished
+        # or if we exceed the maximum length
+        if unfinished_sequences.max() == 0 or stopping_criteria(
+                input_ids, scores):
+            break
+
+
+def on_btn_click():
+    del st.session_state.messages
+
+
+@st.cache_resource
+def load_model(model_name_or_path, adapter_name_or_path=None, load_in_4bit=False):
+    if load_in_4bit:
+        quantization_config = BitsAndBytesConfig(
+            load_in_4bit=True,
+            bnb_4bit_compute_dtype=torch.float16,
+            bnb_4bit_use_double_quant=True,
+            bnb_4bit_quant_type="nf4",
+            llm_int8_threshold=6.0,
+            llm_int8_has_fp16_weight=False,
+        )
+    else:
+        quantization_config = None
+
+    model = AutoModelForCausalLM.from_pretrained(
+        model_name_or_path,
+        # 4-bit loading is requested via quantization_config; also passing load_in_4bit raises ValueError
+        trust_remote_code=True,
+        low_cpu_mem_usage=True,
+        torch_dtype=torch.float16,
+        device_map='auto',
+        quantization_config=quantization_config
+    )
+    if adapter_name_or_path is not None:
+        model = PeftModel.from_pretrained(model, adapter_name_or_path)
+    model.eval()
+    tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True)
+
+    return model, tokenizer
+
+
+def prepare_generation_config():
+    with st.sidebar:
+        st.title('Hyperparameter panel')
+        # Large text input box
+        system_prompt_content = st.text_area('System prompt',
+            '''You are a creative super artificial intelligence assistant, possessing all the knowledge of humankind. Your name is csg-wukong, developed by OpenCSG. You need to understand and infer the true intentions of users based on the topics discussed in the chat history, and respond to user questions correctly as required. You enjoy responding to users with accurate and insightful answers. Please pay attention to the appropriate style and format when replying, try to avoid repetitive words and sentences, and keep your responses as concise and profound as possible. You carefully consider the context of the discussion when replying to users. When the user says "continue," please proceed with the continuation of the previous assistant's response.''',
+            height=200,
+            key='system_prompt_content'
+        )
+        max_new_tokens = st.slider('Max response length', 100, 8192, 660, step=8)
+        top_p = st.slider('Top P', 0.0, 1.0, 0.8, step=0.01)
+        temperature = st.slider('Temperature', 0.0, 1.0, 0.7, step=0.01)
+        repetition_penalty = st.slider("Repetition penalty", 1.0, 2.0, 1.07, step=0.01)
+        st.button('Reset chat', on_click=on_btn_click)
+
+    generation_config = GenerationConfig(max_new_tokens=max_new_tokens,
+                                         top_p=top_p,
+                                         temperature=temperature,
+                                         repetition_penalty=repetition_penalty,
+                                         )
+
+    return generation_config
+
+system_prompt = '<|system|>\n{content}</s>'
+user_prompt = '<|user|>\n{content}</s>\n'
+robot_prompt = '<|assistant|>\n{content}</s>\n'
+cur_query_prompt = '<|user|>\n{content}</s>\n<|assistant|>\n'
+
+
+def combine_history(prompt):
+    messages = st.session_state.messages
+    total_prompt = ''
+    for message in messages:
+        cur_content = message['content']
+        if message['role'] == 'user':
+            cur_prompt = user_prompt.format(content=cur_content)
+        elif message['role'] == 'robot':
+            cur_prompt = robot_prompt.format(content=cur_content)
+        else:
+            raise RuntimeError
+        total_prompt += cur_prompt
+
+    system_prompt_content = st.session_state.system_prompt_content
+    system = system_prompt.format(content=system_prompt_content)
+    total_prompt = system + total_prompt + cur_query_prompt.format(content=prompt)
+
+    return total_prompt
+
+
+def main(model_name_or_path, adapter_name_or_path):
+    # torch.cuda.empty_cache()
+    print('load model...')
+    model, tokenizer = load_model(model_name_or_path, adapter_name_or_path=adapter_name_or_path, load_in_4bit=False)
+    print('load model end.')
+
+    st.title('Wukong-chat')
+
+    generation_config = prepare_generation_config()
+
+    # Initialize chat history
+    if 'messages' not in st.session_state:
+        st.session_state.messages = []
+
+    # Display chat messages from history on app rerun
+    for message in st.session_state.messages:
+        with st.chat_message(message['role']):
+            st.markdown(message['content'])
+
+    # Accept user input
+    if prompt := st.chat_input('hello'):
+        # Display user message in chat message container
+        with st.chat_message('user'):
+            st.markdown(prompt)
+        real_prompt = combine_history(prompt)
+        # Add user message to chat history
+        st.session_state.messages.append({
+            'role': 'user',
+            'content': prompt,
+        })
+
+        with st.chat_message('robot'):
+            message_placeholder = st.empty()
+            for cur_response in generate_interactive(
+                    model=model,
+                    tokenizer=tokenizer,
+                    prompt=real_prompt,
+                    additional_eos_token_id=128009,
+                    **asdict(generation_config),
+            ):
+                # Display robot response in chat message container
+                message_placeholder.markdown(cur_response + '▌')
+            message_placeholder.markdown(cur_response)
+        # Add robot response to chat history
+        st.session_state.messages.append({
+            'role': 'robot',
+            'content': cur_response,  # pylint: disable=undefined-loop-variable
+        })
+        torch.cuda.empty_cache()
+
+
+if __name__ == '__main__':
+
+    import sys
+    model_name_or_path = sys.argv[1]
+    if len(sys.argv) >= 3:
+        adapter_name_or_path = sys.argv[2]
+    else:
+        adapter_name_or_path = None
+    main(model_name_or_path, adapter_name_or_path)
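The chat format used by `combine_history` can be exercised without Streamlit or a GPU. The sketch below rebuilds the same prompt assembly as a pure function, assuming a hypothetical name `build_prompt` and taking the history and system prompt as explicit arguments instead of reading `st.session_state`. The four templates are copied from the script.

```python
from typing import Dict, List

# Templates copied from web_streamlit_for_wukong.py.
SYSTEM_PROMPT = '<|system|>\n{content}</s>'
USER_PROMPT = '<|user|>\n{content}</s>\n'
ROBOT_PROMPT = '<|assistant|>\n{content}</s>\n'
CUR_QUERY_PROMPT = '<|user|>\n{content}</s>\n<|assistant|>\n'


def build_prompt(system: str, history: List[Dict[str, str]], query: str) -> str:
    """Concatenate system prompt, past turns and the current query."""
    parts = [SYSTEM_PROMPT.format(content=system)]
    for message in history:
        if message['role'] == 'user':
            parts.append(USER_PROMPT.format(content=message['content']))
        elif message['role'] == 'robot':
            parts.append(ROBOT_PROMPT.format(content=message['content']))
        else:
            # The script raises a bare RuntimeError here; ValueError with a
            # message is a choice made for this sketch.
            raise ValueError(f"unknown role: {message['role']!r}")
    # The prompt ends with an open assistant turn for the model to complete.
    parts.append(CUR_QUERY_PROMPT.format(content=query))
    return ''.join(parts)


example = build_prompt(
    'Be brief.',
    [{'role': 'user', 'content': 'Hi'},
     {'role': 'robot', 'content': 'Hello!'}],
    'Bye',
)
print(example)
```

Keeping the assembly free of UI state makes the template easy to unit-test, and to compare against the format the model was fine-tuned on.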