Create README.md

README.md

ADDED

@@ -0,0 +1,83 @@

---
license: apache-2.0
tags:
- vision
- image-classification
datasets:
- imagenet-1k
widget:
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/tiger.jpg
  example_title: Tiger
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/teapot.jpg
  example_title: Teapot
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/palace.jpg
  example_title: Palace
---

# FocalNet (tiny-sized large receptive field model)

FocalNet model trained on ImageNet-1k at resolution 224x224. It was introduced in the paper [Focal Modulation Networks](https://arxiv.org/abs/2203.11926) by Yang et al. and first released in [this repository](https://github.com/microsoft/FocalNet).

Disclaimer: The team releasing FocalNet did not write a model card for this model, so this model card has been written by the Hugging Face team.

## Model description

|

| 25 |

+

|

| 26 |

+

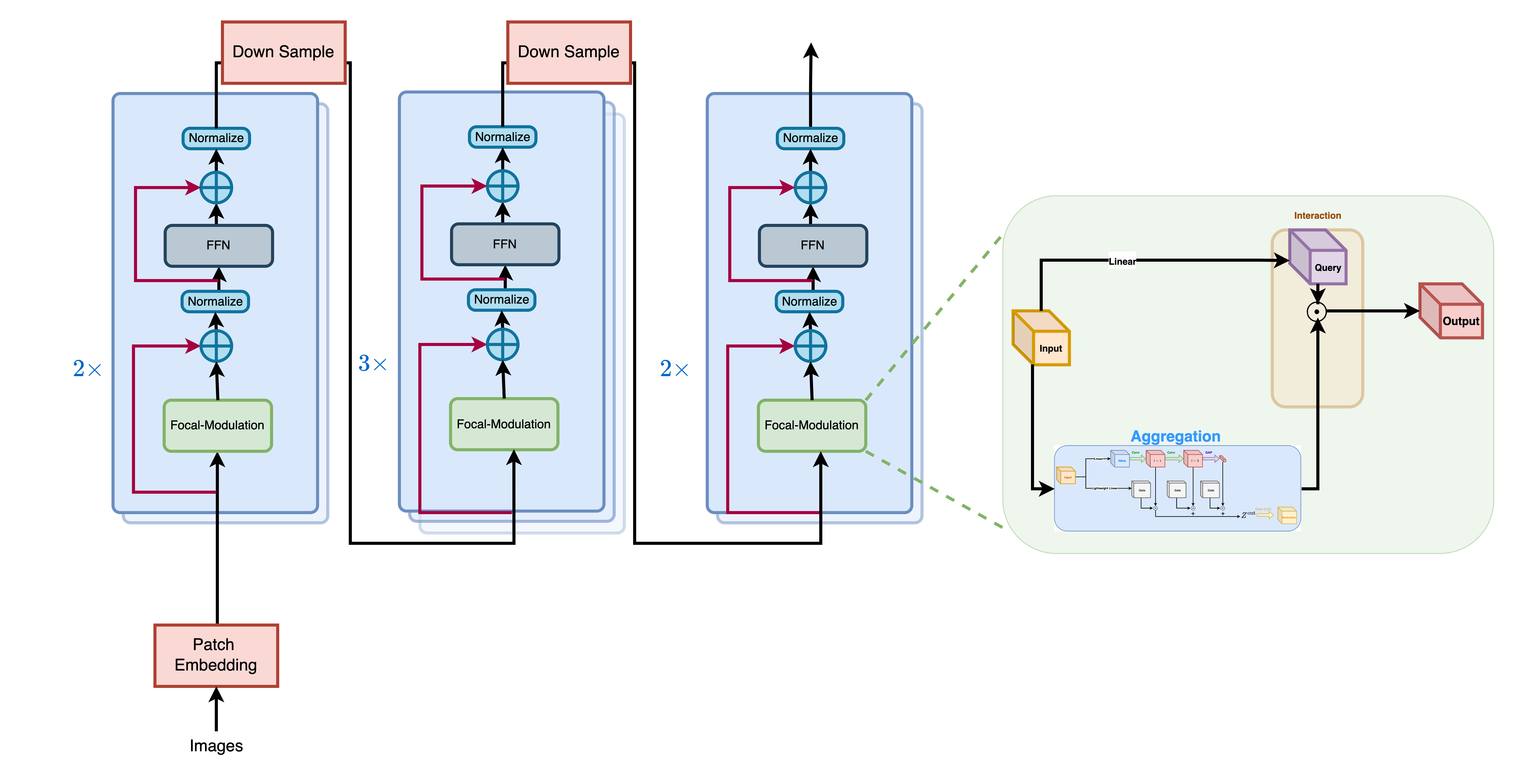

Focul Modulation Networks are an alternative to Vision Transformers, where self-attention (SA) is completely replaced by a focal modulation mechanism for modeling token interactions in vision.

|

| 27 |

+

Focal modulation comprises three components: (i) hierarchical contextualization, implemented using a stack of depth-wise convolutional layers, to encode visual contexts from short to long ranges, (ii) gated aggregation to selectively gather contexts for each query token based on its

|

| 28 |

+

content, and (iii) element-wise modulation or affine transformation to inject the aggregated context into the query. Extensive experiments show FocalNets outperform the state-of-the-art SA counterparts (e.g., Vision Transformers, Swin and Focal Transformers) with similar computational costs on the tasks of image classification, object detection, and segmentation.
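
The three components can be made concrete with a small PyTorch sketch. This is only an illustration of the description above, not the released implementation: the class name `FocalModulationSketch`, the channels-first layout, and the defaults `focal_level=2` and `focal_window=3` are assumptions chosen for the example.

```python
import torch
from torch import nn


class FocalModulationSketch(nn.Module):
    """Illustrative focal modulation block for inputs of shape (B, C, H, W)."""

    def __init__(self, dim: int, focal_level: int = 2, focal_window: int = 3):
        super().__init__()
        self.dim = dim
        self.focal_level = focal_level
        # One 1x1 projection yields the query, the initial context, and one gate
        # per focal level plus one gate for the global context.
        self.in_proj = nn.Conv2d(dim, 2 * dim + focal_level + 1, kernel_size=1)
        # (i) Hierarchical contextualization: depth-wise convs with growing kernels.
        self.context_layers = nn.ModuleList()
        for level in range(focal_level):
            kernel_size = focal_window + 2 * level  # assumed growth rule, stays odd
            self.context_layers.append(
                nn.Sequential(
                    nn.Conv2d(dim, dim, kernel_size, padding=kernel_size // 2,
                              groups=dim, bias=False),
                    nn.GELU(),
                )
            )
        self.modulator_proj = nn.Conv2d(dim, dim, kernel_size=1)
        self.out_proj = nn.Conv2d(dim, dim, kernel_size=1)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        query, context, gates = torch.split(
            self.in_proj(x), [self.dim, self.dim, self.focal_level + 1], dim=1
        )
        aggregated = torch.zeros_like(context)
        for level, layer in enumerate(self.context_layers):
            context = layer(context)
            # (ii) Gated aggregation: each location weighs this level's context.
            aggregated = aggregated + context * gates[:, level:level + 1]
        # Global context: spatial average of the coarsest level, with its own gate.
        global_context = nn.functional.gelu(context.mean(dim=(2, 3), keepdim=True))
        aggregated = aggregated + global_context * gates[:, self.focal_level:]
        # (iii) Element-wise modulation: inject the aggregated context into the query.
        return self.out_proj(query * self.modulator_proj(aggregated))


block = FocalModulationSketch(dim=8)
features = torch.randn(1, 8, 14, 14)
output = block(features)
print(output.shape)
```

The block keeps the spatial size and channel count of its input, so it can stand in wherever a self-attention layer would otherwise mix tokens.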

## Intended uses & limitations

You can use the raw model for image classification. See the [model hub](https://huggingface.co/models?search=focalnet) to look for fine-tuned versions on a task that interests you.

### How to use

Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 1,000 ImageNet classes:

```python
from transformers import AutoImageProcessor, FocalNetForImageClassification
import torch
from datasets import load_dataset

# Load a sample image from the Hub
dataset = load_dataset("huggingface/cats-image")
image = dataset["test"]["image"][0]

# AutoImageProcessor resolves the image processor configured for this checkpoint
preprocessor = AutoImageProcessor.from_pretrained("microsoft/focalnet-tiny-lrf")
model = FocalNetForImageClassification.from_pretrained("microsoft/focalnet-tiny-lrf")

# Preprocess the image and return PyTorch tensors
inputs = preprocessor(image, return_tensors="pt")

# Inference only, so gradient tracking is disabled
with torch.no_grad():
    logits = model(**inputs).logits

# model predicts one of the 1000 ImageNet classes
predicted_label = logits.argmax(-1).item()
print(model.config.id2label[predicted_label])
```
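
If the single most likely class is not enough, the logits can be turned into probabilities and ranked. The sketch below assumes a hypothetical helper, `top_k_predictions`, and uses made-up stand-in logits and labels so that it runs without downloading the checkpoint; with the real model, pass `logits` and `model.config.id2label` from the snippet above instead.

```python
import torch


def top_k_predictions(logits: torch.Tensor, id2label: dict, k: int = 5):
    """Return the k most probable (label, probability) pairs for a single image."""
    # Softmax over the class dimension, then take the first (only) image in the batch.
    probabilities = logits.softmax(dim=-1)[0]
    top = torch.topk(probabilities, k)
    return [
        (id2label[index], probability)
        for probability, index in zip(top.values.tolist(), top.indices.tolist())
    ]


# Stand-in values (illustrative only, not real model output).
example_logits = torch.tensor([[2.0, 0.5, 1.0, -1.0]])
example_id2label = {0: "tabby", 1: "tiger cat", 2: "Egyptian cat", 3: "teapot"}

results = top_k_predictions(example_logits, example_id2label, k=3)
for label, probability in results:
    print(f"{label}: {probability:.3f}")
```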

For more code examples, see the [documentation](https://huggingface.co/docs/transformers/master/en/model_doc/focalnet).

### BibTeX entry and citation info

```bibtex
@article{DBLP:journals/corr/abs-2203-11926,
  author     = {Jianwei Yang and
                Chunyuan Li and
                Jianfeng Gao},
  title      = {Focal Modulation Networks},
  journal    = {CoRR},
  volume     = {abs/2203.11926},
  year       = {2022},
  url        = {https://doi.org/10.48550/arXiv.2203.11926},
  doi        = {10.48550/arXiv.2203.11926},
  eprinttype = {arXiv},
  eprint     = {2203.11926},
  timestamp  = {Tue, 29 Mar 2022 18:07:24 +0200},
  biburl     = {https://dblp.org/rec/journals/corr/abs-2203-11926.bib},
  bibsource  = {dblp computer science bibliography, https://dblp.org}
}
```