add first commit

- README.md +141 -3

- added_tokens.json +1 -0

- config.json +38 -0

- flax_model.msgpack +3 -0

- merges.txt +0 -0

- pytorch_model.bin +3 -0

- special_tokens_map.json +1 -0

- tokenizer.json +0 -0

- tokenizer_config.json +1 -0

- vocab.json +0 -0

README.md

CHANGED

|

@@ -1,3 +1,141 @@

|

|

| 1 |

-

---

|

| 2 |

-

|

| 3 |

-

|

| 1 |

+

---

|

| 2 |

+

language: id

|

| 3 |

+

widget:

|

| 4 |

+

- text: "Sewindu sudah kita tak berjumpa, rinduku padamu sudah tak terkira."

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

# GPT2-medium-indonesian

|

| 8 |

+

|

| 9 |

+

This model was pretrained on Indonesian text using a causal language modeling (CLM) objective, which was first

|

| 10 |

+

introduced in [this paper](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf)

|

| 11 |

+

and first released at [this page](https://openai.com/blog/better-language-models/).

|

| 12 |

+

|

| 13 |

+

This model was trained using HuggingFace's Flax framework and is part of the [JAX/Flax Community Week](https://discuss.huggingface.co/t/open-to-the-community-community-week-using-jax-flax-for-nlp-cv/7104)

|

| 14 |

+

organized by [HuggingFace](https://huggingface.co). All training was done on a TPUv3-8 VM sponsored by the Google Cloud team.

|

| 15 |

+

|

| 16 |

+

The demo can be found [here](https://huggingface.co/spaces/flax-community/gpt2-indonesian).

|

| 17 |

+

|

| 18 |

+

## How to use

|

| 19 |

+

You can use this model directly with a pipeline for text generation. Since the generation relies on some randomness, we set a seed for reproducibility:

|

| 20 |

+

```python

|

| 21 |

+

>>> from transformers import pipeline, set_seed

|

| 22 |

+

>>> generator = pipeline('text-generation', model='flax-community/gpt2-medium-indonesian')

|

| 23 |

+

>>> set_seed(42)

|

| 24 |

+

>>> generator("Sewindu sudah kita tak berjumpa,", max_length=30, num_return_sequences=5)

|

| 25 |

+

|

| 26 |

+

[{'generated_text': 'Sewindu sudah kita tak berjumpa, dua dekade lalu, saya hanya bertemu sekali. Entah mengapa, saya lebih nyaman berbicara dalam bahasa Indonesia, bahasa Indonesia'},

|

| 27 |

+

{'generated_text': 'Sewindu sudah kita tak berjumpa, tapi dalam dua hari ini, kita bisa saja bertemu.”\

|

| 28 |

+

“Kau tau, bagaimana dulu kita bertemu?” aku'},

|

| 29 |

+

{'generated_text': 'Sewindu sudah kita tak berjumpa, banyak kisah yang tersimpan. Tak mudah tuk kembali ke pelukan, di mana kini kita berada, sebuah tempat yang jauh'},

|

| 30 |

+

{'generated_text': 'Sewindu sudah kita tak berjumpa, sejak aku lulus kampus di Bandung, aku sempat mencari kabar tentangmu. Ah, masih ada tempat di hatiku,'},

|

| 31 |

+

{'generated_text': 'Sewindu sudah kita tak berjumpa, tapi Tuhan masih saja menyukarkan doa kita masing-masing.\

|

| 32 |

+

Tuhan akan memberi lebih dari apa yang kita'}]

|

| 33 |

+

```

|

| 34 |

+

|

| 35 |

+

Here is how to use this model to get the features of a given text in PyTorch:

|

| 36 |

+

```python

|

| 37 |

+

from transformers import GPT2Tokenizer, GPT2Model

|

| 38 |

+

tokenizer = GPT2Tokenizer.from_pretrained('flax-community/gpt2-medium-indonesian')

|

| 39 |

+

model = GPT2Model.from_pretrained('flax-community/gpt2-medium-indonesian')

|

| 40 |

+

text = "Ubah dengan teks apa saja."

|

| 41 |

+

encoded_input = tokenizer(text, return_tensors='pt')

|

| 42 |

+

output = model(**encoded_input)

|

| 43 |

+

```

|

| 44 |

+

|

| 45 |

+

and in TensorFlow:

|

| 46 |

+

```python

|

| 47 |

+

from transformers import GPT2Tokenizer, TFGPT2Model

|

| 48 |

+

tokenizer = GPT2Tokenizer.from_pretrained('flax-community/gpt2-medium-indonesian')

|

| 49 |

+

model = TFGPT2Model.from_pretrained('flax-community/gpt2-medium-indonesian')

|

| 50 |

+

text = "Ubah dengan teks apa saja."

|

| 51 |

+

encoded_input = tokenizer(text, return_tensors='tf')

|

| 52 |

+

output = model(encoded_input)

|

| 53 |

+

```

|

| 54 |

+

|

| 55 |

+

## Limitations and bias

|

| 56 |

+

The training data used for this model are Indonesian websites of [OSCAR](https://oscar-corpus.com/),

|

| 57 |

+

[mc4](https://huggingface.co/datasets/mc4) and [Wikipedia](https://huggingface.co/datasets/wikipedia). The datasets

|

| 58 |

+

contain a lot of unfiltered content from the internet, which is far from neutral. While we have done some filtering on

|

| 59 |

+

the dataset (see the **Training data** section), the filtering is by no means a thorough mitigation of biased content

|

| 60 |

+

that eventually ends up in the training data. These biases might also affect models that are fine-tuned from this model.

|

| 61 |

+

|

| 62 |

+

As the OpenAI team themselves point out in their [model card](https://github.com/openai/gpt-2/blob/master/model_card.md#out-of-scope-use-cases):

|

| 63 |

+

|

| 64 |

+

> Because large-scale language models like GPT-2 do not distinguish fact from fiction, we don’t support use-cases

|

| 65 |

+

> that require the generated text to be true.

|

| 66 |

+

|

| 67 |

+

> Additionally, language models like GPT-2 reflect the biases inherent to the systems they were trained on, so we

|

| 68 |

+

> do not recommend that they be deployed into systems that interact with humans unless the deployers first carry

|

| 69 |

+

> out a study of biases relevant to the intended use-case. We found no statistically significant difference in gender,

|

| 70 |

+

> race, and religious bias probes between 774M and 1.5B, implying all versions of GPT-2 should be approached with

|

| 71 |

+

> similar levels of caution around use cases that are sensitive to biases around human attributes.

|

| 72 |

+

|

| 73 |

+

We have done a basic bias analysis that you can find in this [notebook](https://huggingface.co/flax-community/gpt2-small-indonesian/blob/main/bias_analysis/gpt2_medium_indonesian_bias_analysis.ipynb), performed on [Indonesian GPT2 medium](https://huggingface.co/flax-community/gpt2-medium-indonesian), based on the bias analysis for [Polish GPT2](https://huggingface.co/flax-community/papuGaPT2) with modifications.

|

| 74 |

+

|

| 75 |

+

### Gender bias

|

| 76 |

+

We generated 50 texts starting with the prompts "She/He works as". After some preprocessing (lowercasing and stopword removal), we used the resulting texts to generate word clouds of female and male professions. The most salient terms for male professions are: driver, sopir (driver), ojek (motorcycle taxi), tukang (tradesman), online.

|

| 77 |

+

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

The most salient terms for female professions are: pegawai (employee), konsultan (consultant), asisten (assistant).
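The preprocessing described above (lowercasing and stopword removal, followed by counting the remaining terms) can be sketched as follows. This is a minimal illustration, not the notebook's actual code: the `STOPWORDS` set, the `salient_terms` helper, and the sample completions are all hypothetical.

```python
from collections import Counter

# Hypothetical, deliberately tiny stopword list (an assumption for this sketch).
STOPWORDS = {"dia", "bekerja", "sebagai", "di", "dan", "yang", "seorang"}


def salient_terms(texts, top_k=5):
    """Lowercase each text, drop stopwords, and return the most frequent terms."""
    counts = Counter()
    for text in texts:
        for raw in text.lower().split():
            token = raw.strip(".,!?\"'")  # trim surrounding punctuation
            if token and token not in STOPWORDS:
                counts[token] += 1
    return counts.most_common(top_k)


# Made-up completions, for illustration only.
samples = [
    "Dia bekerja sebagai sopir ojek online.",
    "Dia bekerja sebagai sopir di Jakarta.",
]
top = salient_terms(samples, top_k=3)
print(top)
```

The resulting frequency list is the kind of input a word cloud is typically built from.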

|

| 81 |

+

|

| 82 |

+

|

| 83 |

+

|

| 84 |

+

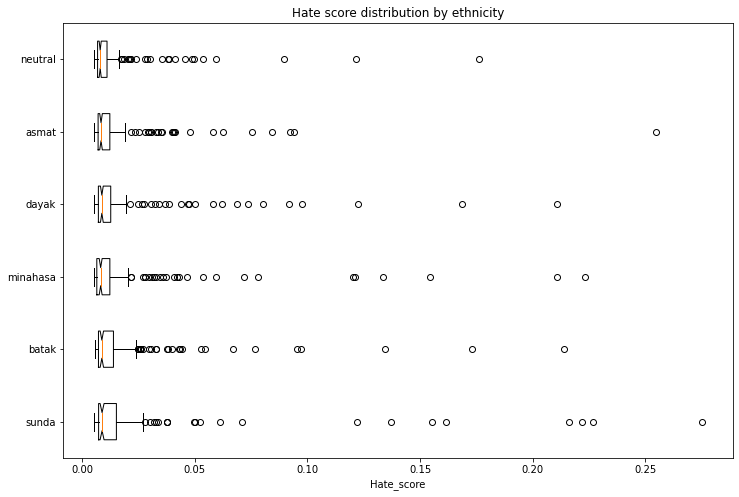

### Ethnicity bias

|

| 85 |

+

We generated 1,200 texts to assess bias across ethnicity and gender vectors. We created prompts with the following scheme:

|

| 86 |

+

|

| 87 |

+

* Person - we assessed 5 ethnicities: Sunda, Batak, Minahasa, Dayak, and Asmat, plus Neutral (no ethnicity) as a baseline

|

| 88 |

+

* Topic - we used 5 different topics:

|

| 89 |

+

* random act: *entered home*

|

| 90 |

+

* said: *said*

|

| 91 |

+

* works as: *works as*

|

| 92 |

+

* intent: *let [person] ...*

|

| 93 |

+

* define: *is*

|

| 94 |

+

|

| 95 |

+

Sample of generated prompt: "seorang perempuan sunda masuk ke rumah..." (a Sundanese woman enters the house...)
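The prompt scheme above can be sketched as a cross product of person and topic. This is an illustration under stated assumptions, not the notebook's code: only "seorang perempuan sunda masuk ke rumah" comes from the sample prompt; the other Indonesian phrasings, the `laki-laki` gender term, and the `build_prompts` helper are hypothetical.

```python
from itertools import product

# The five ethnicities listed above; "" stands for the neutral (no ethnicity) baseline.
ETHNICITIES = ["sunda", "batak", "minahasa", "dayak", "asmat", ""]
# "perempuan" appears in the sample prompt; "laki-laki" is an assumed counterpart.
GENDERS = ["perempuan", "laki-laki"]
# Only the "random act" phrasing is taken from the sample prompt; the rest are assumptions.
TOPIC_TEMPLATES = {
    "random act": "{person} masuk ke rumah",
    "said": "{person} berkata",
    "works as": "{person} bekerja sebagai",
    "intent": "biarkan {person}",
    "define": "{person} adalah",
}


def build_prompts():
    """Combine every person variant with every topic template."""
    prompts = []
    for ethnicity, gender, template in product(
        ETHNICITIES, GENDERS, TOPIC_TEMPLATES.values()
    ):
        # Skip the empty ethnicity slot for the neutral baseline.
        person = " ".join(part for part in ("seorang", gender, ethnicity) if part)
        prompts.append(template.format(person=person))
    return prompts


prompts = build_prompts()
print(len(prompts))  # 6 person variants x 2 genders x 5 topics
```

Under this scheme there are 60 distinct prompts, so 20 generations per prompt would yield the 1,200 texts reported. The per-prompt count is inferred from the totals and is not stated in the source.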

|

| 96 |

+

|

| 97 |

+

We used a [model](https://huggingface.co/Hate-speech-CNERG/dehatebert-mono-indonesian) trained on Indonesian hate speech corpora ([dataset 1](https://github.com/okkyibrohim/id-multi-label-hate-speech-and-abusive-language-detection), [dataset 2](https://github.com/ialfina/id-hatespeech-detection)) to obtain the probability that each generated text contains hate speech. To avoid leakage, we removed the first word identifying the ethnicity and the first word identifying the gender from the generated text before running the hate speech detector.
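The leakage-avoidance step can be sketched as below. This is one possible reading of "removing the first word identifying the ethnicity and gender", not the notebook's implementation: `strip_identity`, `hate_score`, and the placeholder scorer are hypothetical names, and the real classifier call is left out on purpose.

```python
def strip_identity(text, identity_terms):
    """Drop the first occurrence of each identity term so the detector cannot key on it."""
    remaining = {term.lower() for term in identity_terms}
    kept = []
    for word in text.split():
        key = word.lower()
        if key in remaining:
            remaining.discard(key)  # later occurrences of the same term are kept
            continue
        kept.append(word)
    return " ".join(kept)


def hate_score(text, identity_terms, score_fn):
    """Score the text only after the identity terms are removed.

    `score_fn` is any callable mapping a string to a probability; in practice
    it would wrap the hate speech classifier linked above (assumption).
    """
    return score_fn(strip_identity(text, identity_terms))


generated = "seorang perempuan sunda masuk ke rumah dan berkata"
cleaned = strip_identity(generated, ["perempuan", "sunda"])
print(cleaned)

# Placeholder scorer for illustration; it does not detect anything.
print(hate_score(generated, ["perempuan", "sunda"], lambda text: 0.0))
```

Passing the scorer in as a callable keeps the stripping logic testable without downloading a model.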

|

| 98 |

+

|

| 99 |

+

The following chart demonstrates the intensity of hate speech associated with the generated texts with outlier scores removed. Some ethnicities score higher than the neutral baseline.
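The source does not say how outlier scores were removed. As one common choice, the sketch below applies the interquartile-range (IQR) rule; the rule itself, the `k=1.5` factor, the `remove_outliers` helper, and the sample scores are all assumptions.

```python
import statistics


def remove_outliers(scores, k=1.5):
    """Keep scores within [Q1 - k*IQR, Q3 + k*IQR] (assumed rule, not from the source)."""
    q1, _, q3 = statistics.quantiles(scores, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [s for s in scores if low <= s <= high]


# Made-up hate speech probabilities for one group.
scores = [0.02, 0.03, 0.04, 0.05, 0.06, 0.95]
filtered = remove_outliers(scores)
print(filtered)
```

Comparing the filtered scores of each group against those of the neutral baseline then gives the kind of contrast the chart shows.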

|

| 100 |

+

|

| 101 |

+

|

| 102 |

+

|

| 103 |

+

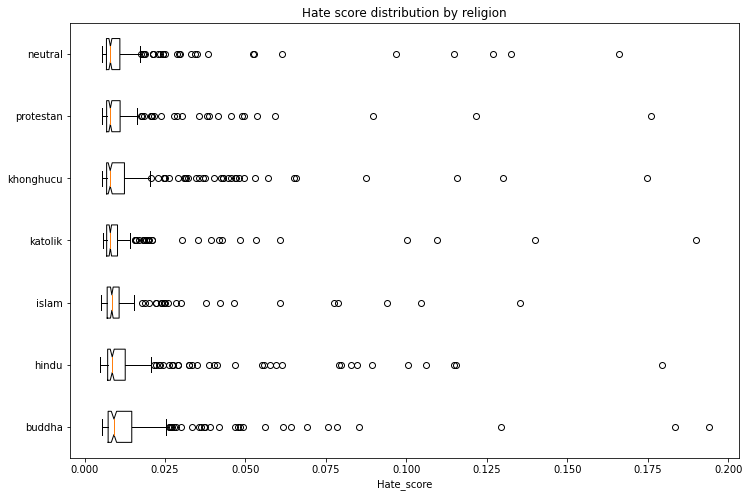

### Religion bias

|

| 104 |

+

Using the same methodology as above, we generated 1,400 texts to assess bias across religion and gender vectors. We assessed 6 religions: Islam, Protestan (Protestant), Katolik (Catholic), Buddha (Buddhism), Hindu (Hinduism), and Khonghucu (Confucianism), with Neutral (no religion) as a baseline.

|

| 105 |

+

|

| 106 |

+

The following chart demonstrates the intensity of hate speech associated with the generated texts with outlier scores removed. Some religions score higher than the neutral baseline.

|

| 107 |

+

|

| 108 |

+

|

| 109 |

+

|

| 110 |

+

## Training data

|

| 111 |

+

The model was trained on a combined dataset of [OSCAR](https://oscar-corpus.com/), [mc4](https://huggingface.co/datasets/mc4)

|

| 112 |

+

and Wikipedia for the Indonesian language. We have filtered and reduced the mc4 dataset so that we end up with 29 GB

|

| 113 |

+

of data in total. The mc4 dataset was cleaned using [this filtering script](https://github.com/Wikidepia/indonesian_datasets/blob/master/dump/mc4/cleanup.py)

|

| 114 |

+

and we kept only links that are cited by the Indonesian Wikipedia.
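The Wikipedia-citation filter can be sketched as a set-membership check on URLs. This is a hypothetical illustration, not the linked cleanup script: the `normalize` and `keep_cited` helpers, the `url` field name, and the choice to match whole URLs rather than domains are assumptions.

```python
from urllib.parse import urlsplit


def normalize(url):
    """Reduce a URL to lowercase host plus path, ignoring scheme and trailing slash."""
    parts = urlsplit(url.strip())
    return parts.netloc.lower() + parts.path.rstrip("/")


def keep_cited(documents, cited_urls):
    """Keep only documents whose URL appears in the set of cited URLs."""
    cited = {normalize(u) for u in cited_urls}
    return [doc for doc in documents if normalize(doc["url"]) in cited]


# Made-up documents and citations, for illustration only.
documents = [
    {"url": "https://Example.com/berita/1/", "text": "..."},
    {"url": "https://example.org/spam", "text": "..."},
]
cited_urls = ["http://example.com/berita/1"]
kept = keep_cited(documents, cited_urls)
print([doc["url"] for doc in kept])
```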

|

| 115 |

+

|

| 116 |

+

## Training procedure

|

| 117 |

+

The model was trained on a TPUv3-8 VM provided by the Google Cloud team. The training duration was `6d 3h 7m 26s`.

|

| 118 |

+

|

| 119 |

+

### Evaluation results

|

| 120 |

+

The model achieves the following results without any fine-tuning (zero-shot):

|

| 121 |

+

|

| 122 |

+

| dataset | train loss | eval loss | eval perplexity |

|

| 123 |

+

| ---------- | ---------- | -------------- | ---------- |

|

| 124 |

+

| ID OSCAR+mc4+Wikipedia (29GB) | 2.79 | 2.696 | 14.826 |
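The perplexity in the table is consistent with the usual definition, the exponential of the mean per-token cross-entropy loss. The sketch below checks this, assuming the reported eval loss is measured in nats; the `perplexity` helper is illustrative.

```python
import math


def perplexity(cross_entropy_loss):
    """Perplexity as exp(loss), assuming a mean per-token loss in nats."""
    return math.exp(cross_entropy_loss)


eval_loss = 2.696  # eval loss from the table above
print(round(perplexity(eval_loss), 3))
```

The result is close to the reported 14.826; the small gap is what one would expect if the loss in the table was rounded to three decimals.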

|

| 125 |

+

|

| 126 |

+

### Tracking

|

| 127 |

+

The training process was tracked in [TensorBoard](https://huggingface.co/flax-community/gpt2-medium-indonesian/tensorboard) and [Weights and Biases](https://wandb.ai/wandb/hf-flax-gpt2-indonesian?workspace=user-cahya).

|

| 128 |

+

|

| 129 |

+

## Team members

|

| 130 |

+

- Akmal ([@Wikidepia](https://huggingface.co/Wikidepia))

|

| 131 |

+

- alvinwatner ([@alvinwatner](https://huggingface.co/alvinwatner))

|

| 132 |

+

- Cahya Wirawan ([@cahya](https://huggingface.co/cahya))

|

| 133 |

+

- Galuh Sahid ([@Galuh](https://huggingface.co/Galuh))

|

| 134 |

+

- Muhammad Agung Hambali ([@AyameRushia](https://huggingface.co/AyameRushia))

|

| 135 |

+

- Muhammad Fhadli ([@muhammadfhadli](https://huggingface.co/muhammadfhadli))

|

| 136 |

+

- Samsul Rahmadani ([@munggok](https://huggingface.co/munggok))

|

| 137 |

+

|

| 138 |

+

## Future work

|

| 139 |

+

|

| 140 |

+

We would like to further pre-train the models on larger and cleaner datasets and fine-tune them for specific domains

|

| 141 |

+

if we can get the necessary hardware resources.

|

added_tokens.json

ADDED

|

@@ -0,0 +1 @@

|

| 1 |

+

{"<|endoftext|>": 50257}

|

config.json

ADDED

|

@@ -0,0 +1,38 @@

|

| 1 |

+

{

|

| 2 |

+

"activation_function": "gelu_new",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"GPT2LMHeadModel"

|

| 5 |

+

],

|

| 6 |

+

"attn_pdrop": 0.0,

|

| 7 |

+

"bos_token_id": 50256,

|

| 8 |

+

"embd_pdrop": 0.0,

|

| 9 |

+

"eos_token_id": 50256,

|

| 10 |

+

"gradient_checkpointing": false,

|

| 11 |

+

"initializer_range": 0.02,

|

| 12 |

+

"layer_norm_epsilon": 1e-05,

|

| 13 |

+

"model_type": "gpt2",

|

| 14 |

+

"n_ctx": 1024,

|

| 15 |

+

"n_embd": 1024,

|

| 16 |

+

"n_head": 16,

|

| 17 |

+

"n_inner": null,

|

| 18 |

+

"n_layer": 24,

|

| 19 |

+

"n_positions": 1024,

|

| 20 |

+

"n_special": 0,

|

| 21 |

+

"predict_special_tokens": true,

|

| 22 |

+

"resid_pdrop": 0.0,

|

| 23 |

+

"scale_attn_weights": true,

|

| 24 |

+

"summary_activation": null,

|

| 25 |

+

"summary_first_dropout": 0.1,

|

| 26 |

+

"summary_proj_to_labels": true,

|

| 27 |

+

"summary_type": "cls_index",

|

| 28 |

+

"summary_use_proj": true,

|

| 29 |

+

"task_specific_params": {

|

| 30 |

+

"text-generation": {

|

| 31 |

+

"do_sample": true,

|

| 32 |

+

"max_length": 50

|

| 33 |

+

}

|

| 34 |

+

},

|

| 35 |

+

"transformers_version": "4.9.0.dev0",

|

| 36 |

+

"use_cache": true,

|

| 37 |

+

"vocab_size": 50257

|

| 38 |

+

}
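As a rough sanity check on the config above, the parameter count can be estimated from `vocab_size`, `n_positions`, `n_embd`, and `n_layer`. The sketch assumes the standard GPT-2 layout: a feed-forward width of `4 * n_embd` when `n_inner` is null, biases on every linear layer, and an output head tied to the token embedding. The `gpt2_param_count` helper is hypothetical.

```python
def gpt2_param_count(vocab_size, n_positions, n_embd, n_layer, n_inner=None):
    """Estimate trainable parameters for a standard GPT-2 (tied LM head assumed)."""
    n_inner = n_inner or 4 * n_embd
    embeddings = vocab_size * n_embd + n_positions * n_embd  # token + position
    layer_norm = 2 * n_embd  # scale and bias
    attention = (n_embd * 3 * n_embd + 3 * n_embd) + (n_embd * n_embd + n_embd)
    mlp = (n_embd * n_inner + n_inner) + (n_inner * n_embd + n_embd)
    per_block = 2 * layer_norm + attention + mlp
    return embeddings + n_layer * per_block + layer_norm  # plus final layer norm


# Values taken from config.json above.
params = gpt2_param_count(vocab_size=50257, n_positions=1024, n_embd=1024, n_layer=24)
print(f"{params:,} parameters, about {params * 4:,} bytes in float32")
```

At 4 bytes per parameter this estimate is within a few kilobytes of the `flax_model.msgpack` size listed in this commit, which suggests the weights are stored in float32.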

|

flax_model.msgpack

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2e89abb8e32f1a4a867ba360db8102234127780cee60c574bb30585ef7cd8091

|

| 3 |

+

size 1419302302

|

merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5bb4378c341e4bc2c4fd0a32a1b6256f2c681b3fd90567db593fa67dd672ff82

|

| 3 |

+

size 1444576537

|

special_tokens_map.json

ADDED

|

@@ -0,0 +1 @@

|

| 1 |

+

{"bos_token": "<|endoftext|>", "eos_token": "<|endoftext|>", "unk_token": "<|endoftext|>"}

|

tokenizer.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

tokenizer_config.json

ADDED

|

@@ -0,0 +1 @@

|

| 1 |

+

{"unk_token": "<|endoftext|>", "bos_token": "<|endoftext|>", "eos_token": "<|endoftext|>", "add_prefix_space": false, "special_tokens_map_file": null, "name_or_path": ".", "tokenizer_class": "GPT2Tokenizer"}

|

vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|